Resemble AI Voice Models Integration with TrueFoundry

We are excited to announce the integration of Resemble AI with the TrueFoundry AI Gateway. It brings voice cloning, synchronous text-to-speech, and streaming TTS into the same gateway path teams already use for LLM, embedding, and agent traffic.

Teams routing AI traffic through TrueFoundry's AI Gateway can now connect Resemble AI as a first-class text-to-speech provider through the gateway's native SDK pass-through. Requests to Resemble's /synthesize and /stream endpoints flow through the gateway path with centralized authentication, per-team access control, unified cost tracking, and full request tracing. The only client changes required are pointing the Resemble base URL at the gateway and authenticating with a TrueFoundry token.

This post covers the architecture of the integration. It explains how the TrueFoundry AI Gateway exposes TTS providers, how Resemble's native API surface is preserved through the pass-through layer, and how failover across multiple TTS providers works through Virtual Models.

Why teams put a gateway in front of voice applications

TrueFoundry provides the control layer for production AI systems. Through the AI Gateway, teams centralize model routing, key management, access control, observability, and cost tracking across LLM, embedding, image, and audio providers. Every request flows through a single proxy layer where identity is verified, rate limits are enforced, and traces are captured.

TTS traffic in production tends to look like LLM traffic in three ways. Multiple providers are usually in play because no single TTS vendor wins on every dimension. Latency matters because voice agents stream audio back to users in real time. Cost adds up fast at the per-character or per-second level and benefits from the same chargeback and budget controls teams already apply to chat completions. The arguments for putting a gateway in front of LLM providers carry over directly.

Resemble AI is a voice generation and audio intelligence platform. Its core synthesis engine is the Chatterbox model, with a Chatterbox Turbo variant for lower latency and paralinguistic tag support. The platform supports voice cloning, SSML, HD synthesis, and streaming output. Resemble also exposes adjacent products, including Resemble Detect for audio deepfake detection, Audio Edit, Voice Design, and Watermark, which can be wired in alongside the TTS workflow.

Together, the two platforms give teams one place to govern and trace voice generation alongside the rest of their AI stack. TrueFoundry handles deployment, routing, and operational control. Resemble handles the actual synthesis. The integration uses TrueFoundry's native SDK pass-through, which preserves Resemble's full API surface without forcing it into an OpenAI-compatible shape.

The Resemble API surface

Resemble's synchronous text-to-speech endpoint takes a small set of fields and returns audio along with timing metadata. The /synthesize endpoint accepts a voice_uuid, which selects the trained or pre-built voice to use, and a data field containing text or SSML of up to 3000 characters. Optional fields control model selection through model (for example chatterbox-turbo), audio precision through precision (one of MULAW, PCM_16, PCM_24, or PCM_32), output format through output_format (wav or mp3), the sample rate, HD mode through use_hd, and custom pronunciation handling through apply_custom_pronunciations.

The response payload returns success and a base64-encoded audio_content field containing the synthesized audio bytes. Timing metadata arrives in audio_timestamps, with grapheme characters, grapheme times, phoneme characters, and phoneme times for downstream alignment use cases such as lip sync and captioning. The response also reports duration (the audio length in seconds), synth_duration (the raw synthesis time), output_format, sample_rate, and any issues the synthesizer flagged during generation.

A second endpoint at /stream supports streaming synthesis over HTTP for voice agent use cases where time to first audio chunk matters. The request shape is the same. The response is a stream of audio frames rather than a single base64 payload. Authentication for both endpoints is a bearer token issued from the Resemble account console.
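The request and response fields described above can be sketched in Python as follows. This is a minimal illustration, not Resemble's client library: the helper names `build_synthesize_payload` and `decode_audio` are hypothetical, only a subset of the optional fields is shown, and the sample response is made up to exercise the decoder rather than captured from the API.

```python
import base64

MAX_CHARS = 3000  # limit on the data field, as stated above


def build_synthesize_payload(voice_uuid, text, model=None,
                             output_format=None, use_hd=None):
    """Build a synthesize request body from the fields named in this section."""
    if len(text) > MAX_CHARS:
        raise ValueError(f"data exceeds {MAX_CHARS} characters")
    payload = {"voice_uuid": voice_uuid, "data": text}
    optional = {"model": model, "output_format": output_format, "use_hd": use_hd}
    # Only send optional fields the caller actually set.
    payload.update({k: v for k, v in optional.items() if v is not None})
    return payload


def decode_audio(response_body):
    """Return (audio_bytes, duration_seconds) from a parsed JSON response."""
    if not response_body.get("success"):
        raise RuntimeError(f"synthesis failed: {response_body.get('issues')}")
    audio = base64.b64decode(response_body["audio_content"])
    return audio, response_body.get("duration")


# Illustrative response shape only; the values are placeholders.
sample = {
    "success": True,
    "audio_content": base64.b64encode(b"RIFF").decode("ascii"),
    "duration": 1.2,
}
audio, duration = decode_audio(sample)
```

Checking the character limit on the client side avoids a round trip for requests that would presumably be rejected anyway.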

How the gateway handles TTS providers

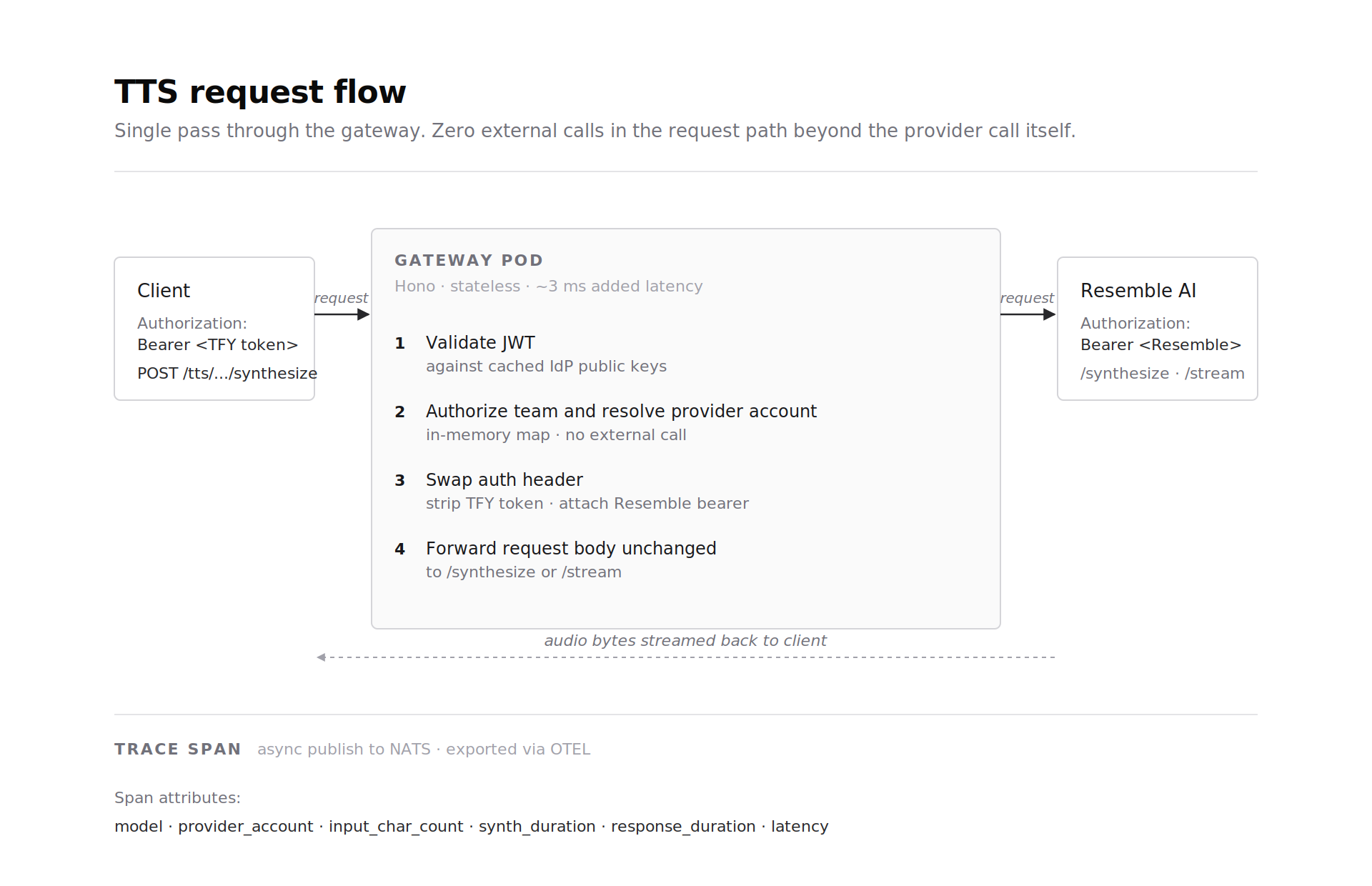

The TrueFoundry AI Gateway runs on the Hono framework. A single gateway pod handles more than 250 requests per second on 1 vCPU and 1 GB of RAM with approximately 3 ms of added latency. Gateway pods are stateless and CPU-bound, and they scale horizontally to tens of thousands of RPS through additional pods. The control plane and the gateway plane are split. Provider configuration, including credentials, routing rules, and rate limits, lives in the control plane and syncs to gateway pods through NATS. The actual request path stays in memory, with no external calls beyond the provider call itself.

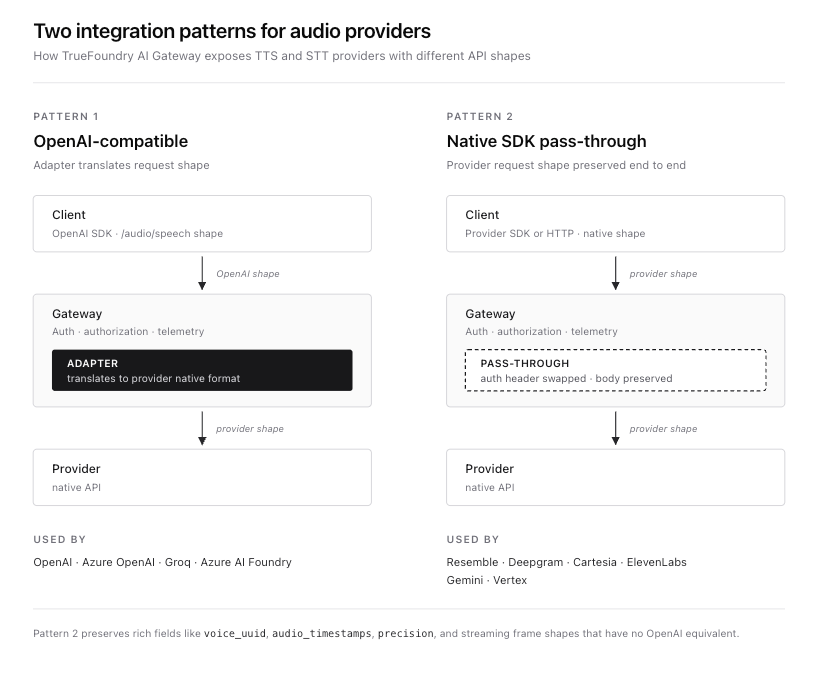

For TTS the gateway exposes two integration patterns.

The first is the OpenAI-compatible API pattern at the gateway base URL. Providers that speak the OpenAI /audio/speech shape (OpenAI, Azure OpenAI, Azure AI Foundry, and Groq) plug in here. Clients use the standard OpenAI SDK, and the gateway translates the request to the provider's native format through an adapter layer.

The second is the native SDK pass-through pattern at {GATEWAY_BASE_URL}/tts/{providerAccountName}. Providers with rich native APIs that do not map cleanly onto the OpenAI shape (Deepgram, Cartesia, ElevenLabs, Gemini, and Vertex) plug in here. The full provider request and response shape is preserved. The gateway handles authentication, access control, tracing, and routing but does not rewrite the payload. This is the pattern Resemble uses, because the Resemble contract has no equivalent in the OpenAI TTS contract: the request carries voice_uuid, precision levels, and the chatterbox-turbo model selector, and the response carries audio_timestamps.

When a request hits a gateway pod, the path looks like this. The TrueFoundry token in the Authorization header is validated against cached IdP public keys. The team identity is resolved against an in-memory map, and authorization to the Resemble provider account is checked. The request body is forwarded to the Resemble synthesize or stream endpoint with the Resemble bearer token attached server-side. The response is streamed back to the client. The full interaction is captured in a trace span with the model name, the provider account, the input character count, the response duration, the synth duration, and the latency. There are no extra round trips beyond the actual provider call.
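The request path above can be pictured with a small Python sketch. It is purely illustrative and is not TrueFoundry's implementation: the function `handle_tts_request`, the three in-memory dictionaries, and the token values are all hypothetical, and a plain dictionary lookup stands in for validating the token against cached IdP public keys.

```python
import time

# Hypothetical in-memory state. In the architecture described above this
# would be synced from the control plane rather than hard-coded.
TEAM_BY_TOKEN = {"tfy-token-123": "voice-team"}
ALLOWED = {("voice-team", "resemble-prod")}
PROVIDER_SECRET = {"resemble-prod": "resemble-secret"}


def handle_tts_request(token, provider_account, body, forward):
    """Authenticate, authorize, forward, and record a minimal span.

    `forward` is any callable (body, headers) -> response; it represents
    the single external call to the provider.
    """
    team = TEAM_BY_TOKEN.get(token)  # stand-in for token validation
    if team is None:
        return 401, None, None
    if (team, provider_account) not in ALLOWED:
        return 403, None, None

    start = time.monotonic()
    # The provider credential is attached server-side; the client never sees it.
    headers = {"Authorization": f"Bearer {PROVIDER_SECRET[provider_account]}"}
    response = forward(body, headers)
    span = {
        "team": team,
        "provider_account": provider_account,
        "input_chars": len(body.get("data", "")),
        "latency_s": time.monotonic() - start,
    }
    return 200, response, span
```

Every check before `forward` reads only local state, which is the property the paragraph above describes as no extra round trips.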

The integration surface

Resemble is registered in the TrueFoundry control plane as a provider account, with the Resemble bearer token stored as a secret. Once the account is added, the gateway exposes two TTS routes for it. The native SDK route at {GATEWAY_BASE_URL}/tts/{providerAccountName}/synthesize proxies to the synchronous endpoint. The streaming route at {GATEWAY_BASE_URL}/tts/{providerAccountName}/stream proxies to the streaming endpoint. Both routes preserve the Resemble request and response shape exactly.
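As a sketch of how a Python client might target these two routes, the helper below builds the request with the standard library and does not send it. The function name `build_gateway_request`, the base URL `https://gateway.example.com`, and the token value are placeholders chosen for illustration; `resemble-prod` is an example provider account name.

```python
import json
import urllib.request


def build_gateway_request(base_url, api_key, provider_account, body, stream=False):
    """Build a POST request for the synthesize or stream route of a provider account."""
    route = "stream" if stream else "synthesize"
    url = f"{base_url.rstrip('/')}/tts/{provider_account}/{route}"
    return urllib.request.Request(
        url,
        data=json.dumps(body).encode("utf-8"),
        headers={
            # TrueFoundry token, not the Resemble token.
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


req = build_gateway_request(
    "https://gateway.example.com",  # placeholder gateway base URL
    "placeholder-token",            # placeholder TrueFoundry token
    "resemble-prod",
    {"voice_uuid": "55592656", "data": "Hello from the gateway."},
)
# To actually send it:
#   with urllib.request.urlopen(req) as resp:
#       result = json.load(resp)
```

Keeping the route choice behind a single `stream` flag reflects the point above that both routes accept the same request shape.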

A minimal client call looks like the snippet below. Note that the only change from a direct Resemble call is the base URL and the authentication header.

    curl -X POST "${GATEWAY_BASE_URL}/tts/resemble-prod/synthesize" \
      -H "Authorization: Bearer ${TFY_API_KEY}" \
      -H "Content-Type: application/json" \
      -d '{
        "voice_uuid": "55592656",
        "data": "Hello from the gateway.",
        "model": "chatterbox-turbo",
        "output_format": "mp3",
        "use_hd": false
      }'

Existing application code that targets Resemble directly migrates by swapping the base URL and the bearer token. Voice UUIDs, SSML payloads, precision settings, and HD mode all carry over without modification. Resemble's official client libraries can be configured the same way by overriding their base URL.

Routing and failover across TTS providers

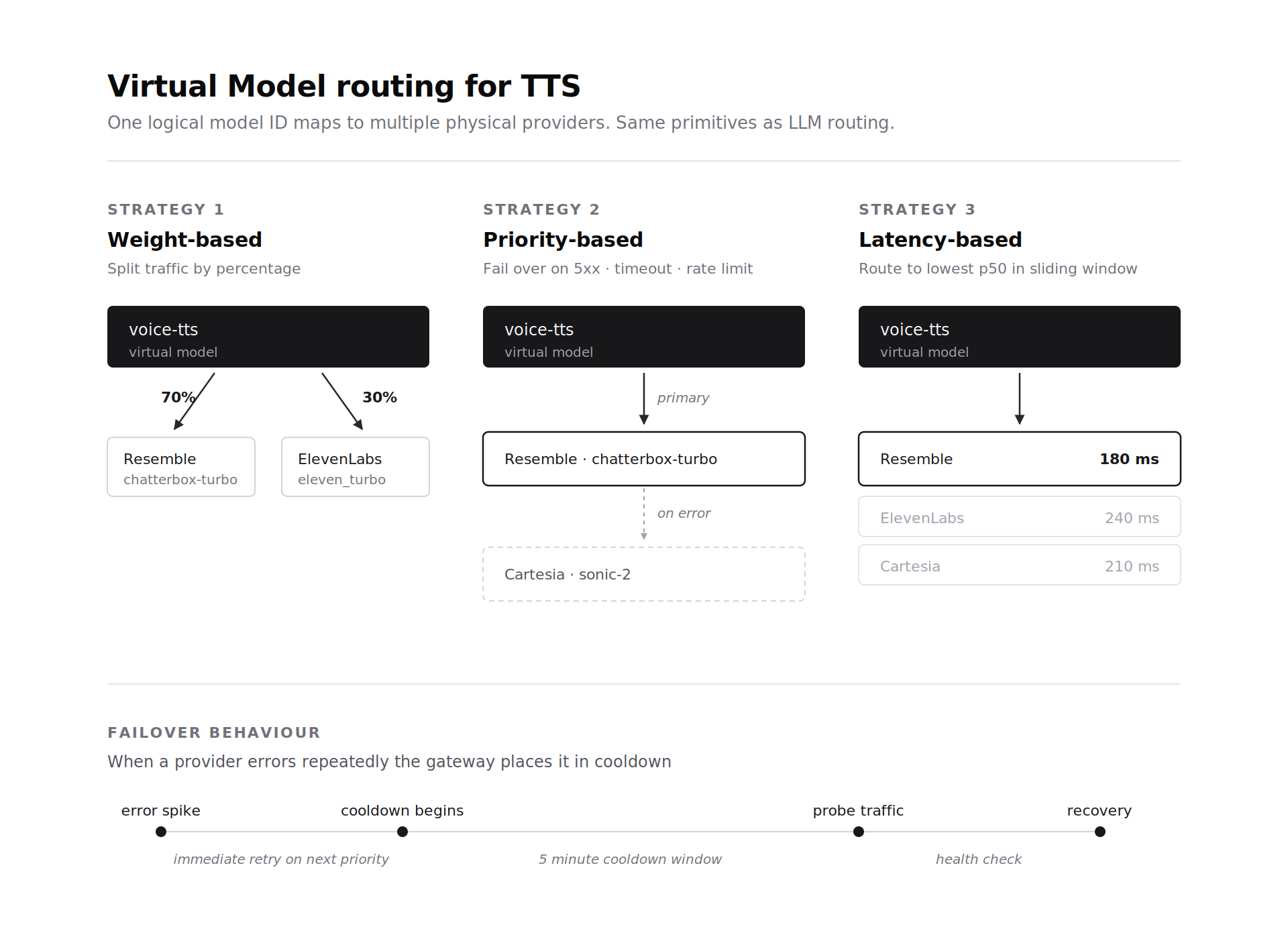

Voice agent stacks often run more than one TTS provider in production for cost and latency reasons. The gateway's Virtual Model abstraction extends to TTS providers the same way it does to LLM providers. A virtual model identifier maps to one or more physical TTS deployments with routing rules. Weight-based routing distributes traffic by percentage across providers. Priority-based routing tries the first provider and fails over on a 5xx, a timeout, or a rate limit. Latency-based routing sends traffic to whichever provider has the lowest p50 latency in the sliding window.

Failover for TTS works on the same primitives as LLM failover. Errors that cannot be retried against the same provider trigger an immediate attempt on the next-priority provider. Error spikes place a provider in a 5-minute cooldown, and probe traffic checks for recovery. A team running Resemble Chatterbox Turbo as the primary low-latency path can fail over to Cartesia or ElevenLabs without changing client code. The Virtual Model handles the selection.
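The weight-based and priority-based strategies described above can be sketched in a few lines of Python. This is an illustration under simplifying assumptions, not the gateway's routing code: `ProviderError`, `pick_weighted`, and `synthesize_with_failover` are hypothetical names, a single failure (rather than an error spike) triggers the cooldown, and recovery probing is omitted.

```python
import random
import time

COOLDOWN_SECONDS = 300  # the 5-minute cooldown mentioned above


class ProviderError(Exception):
    """Stands in for a 5xx, a timeout, or a rate-limit response."""


def pick_weighted(weights):
    """Weight-based routing: choose a provider name in proportion to its weight."""
    names = list(weights)
    return random.choices(names, weights=[weights[n] for n in names], k=1)[0]


def synthesize_with_failover(providers, body, cooldown_until, now=time.monotonic):
    """Priority-based routing over a list of (name, call) pairs in priority order.

    Providers still cooling down are skipped; a failing provider is put
    into cooldown and the next one is tried.
    """
    last_error = None
    for name, call in providers:
        if cooldown_until.get(name, 0.0) > now():
            continue
        try:
            return name, call(body)
        except ProviderError as exc:
            cooldown_until[name] = now() + COOLDOWN_SECONDS
            last_error = exc
    raise RuntimeError("all providers failed or are cooling down") from last_error
```

Because the function returns the name of the provider that served the request, a caller can record which physical deployment was selected without knowing the routing rules.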

Cost tracking captures TTS usage at the same granularity as LLM usage. The gateway records the input character count, the synthesis duration, the model, the team, and the user against each request. The aggregator service computes per-team and per-user spend and feeds the same dashboards and budget enforcement primitives that already cover chat completions and embeddings. Rate limits apply through the Sliding Window Token Bucket algorithm, with per-minute windows scoped on user, team, or model. For TTS the unit is characters or requests rather than tokens, but the algorithm is unchanged.
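To make the character-based limit concrete, here is a simplified sliding-window limiter in Python. It is a sketch of the general idea only and is not the gateway's Sliding Window Token Bucket implementation: the class name `SlidingWindowLimiter`, the per-key event log, and the limit values are assumptions made for illustration.

```python
from collections import defaultdict, deque


class SlidingWindowLimiter:
    """Allow at most `limit_chars` characters per key within a sliding window."""

    def __init__(self, limit_chars, window_seconds=60.0):
        self.limit = limit_chars
        self.window = window_seconds
        self.events = defaultdict(deque)  # key -> deque of (timestamp, chars)

    def allow(self, key, chars, now):
        """Return True and record the usage if the request fits in the window."""
        q = self.events[key]
        # Drop events that have aged out of the window.
        while q and q[0][0] <= now - self.window:
            q.popleft()
        used = sum(c for _, c in q)
        if used + chars > self.limit:
            return False
        q.append((now, chars))
        return True


limiter = SlidingWindowLimiter(limit_chars=100)
first = limiter.allow("team-a", 60, now=0.0)    # fits in the empty window
second = limiter.allow("team-a", 60, now=10.0)  # would exceed 100 chars in the window
third = limiter.allow("team-a", 60, now=61.0)   # the first event has expired
```

The key can be a user, a team, or a model, matching the scopes listed above, and passing `now` explicitly keeps the limiter easy to test.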

Observability and traces

Every TTS request emits a trace span. The span attributes include the provider account, the model identifier (for example resemble-prod/chatterbox-turbo), the input character count, the response duration in seconds, the raw synthesis time, the output format, the sample rate, and the gateway-side latency. Traces are emitted asynchronously through NATS and exported via OTEL to whichever observability backend the team has configured (Arize, Langfuse, LangSmith, or any of the other supported targets). The Exclude Request Data toggle applies the same way it does for chat completions, keeping the input text out of exported traces when data privacy requires it.
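A rough Python sketch of how such span attributes could be assembled is shown below. The attribute keys and the function `build_span_attributes` are hypothetical and do not reflect the gateway's actual span schema; the `exclude_request_data` flag only mimics the effect of the toggle described above.

```python
def build_span_attributes(provider_account, request_body, response_body,
                          gateway_latency_ms, exclude_request_data=False):
    """Collect the per-request attributes listed above into one dictionary."""
    attrs = {
        "provider_account": provider_account,
        "model": f"{provider_account}/{request_body.get('model')}",
        "input_chars": len(request_body.get("data", "")),
        "duration_s": response_body.get("duration"),
        "synth_duration_s": response_body.get("synth_duration"),
        "output_format": response_body.get("output_format"),
        "sample_rate": response_body.get("sample_rate"),
        "gateway_latency_ms": gateway_latency_ms,
    }
    # The character count is always kept; the text itself is optional.
    if not exclude_request_data:
        attrs["input_text"] = request_body.get("data")
    return attrs
```

Recording the character count separately from the text means cost tracking still works when the input text is excluded from exported traces.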

This means TTS calls show up in the same trace timeline as the upstream LLM call that produced the text and the downstream agent action that consumed the audio. For voice agent debugging, this consolidation matters. A failed turn can be traced from the LLM completion that selected the response, through the TTS synthesis that rendered it, to the action the agent took next.

Architecture Summary

End to end, the request flow looks like this. A client sends a TTS request with a TrueFoundry bearer token to the gateway at {GATEWAY_BASE_URL}/tts/{providerAccountName}/synthesize or its streaming counterpart. The gateway authenticates the caller against cached IdP keys, resolves the provider account, and checks team and user authorization in memory. If a Virtual Model is in use, the routing logic selects a physical provider based on weight, priority, or latency. The request body is forwarded to Resemble with the server-side Resemble bearer token attached. The response is streamed back to the client, preserving the full Resemble payload shape, including audio content, timestamps, and duration metadata. Every step is captured in a trace span emitted asynchronously to NATS and exported via OTEL.

Nothing else has to change in the application. There is no SDK rewrite, no per-provider auth handling on the client, and no separate observability pipeline for voice traffic. The gateway is already in the request path for the rest of the AI stack, and Resemble attaches to that path through native pass-through. Existing Resemble client code keeps working with a base URL swap.

Get Started

Learn more about the TrueFoundry AI Gateway and the Resemble AI platform. Add Resemble as a provider account in the gateway control plane and call the synthesize or stream endpoint at the /tts/{providerAccountName} route from existing application code.
