TrueFoundry Integration with Smallest AI

Smallest AI and TrueFoundry AI Gateway

Smallest AI's text-to-speech and speech-to-text models integrate with TrueFoundry AI Gateway through native passthrough. Requests flow to Smallest AI's REST endpoints for batch synthesis and transcription, and to the corresponding server-sent event streams and WebSocket endpoints for chunked audio output and live transcription. The gateway applies access control and substitutes the Smallest AI Bearer token from its credential store before proxying the request or upgrading the WebSocket, and it emits OpenTelemetry spans for the traffic it handles.

This post covers the Smallest AI API surface across the TTS and STT families, and how the gateway plane handles the native passthrough path for voice endpoints that are not OpenAI-compatible.

What Smallest AI exposes

Smallest AI ships two model families: Lightning for text-to-speech and Pulse for speech-to-text. Both run as REST endpoints for batch use and as WebSocket endpoints for streaming use.

Lightning v3.1 is a 44 kHz TTS model with a published time to first audio under 100 ms. It supports 15 languages with strong Indic coverage: Hindi, Tamil, Telugu, Malayalam, Kannada, Marathi, and Gujarati, alongside English, Spanish, French, German, Italian, Portuguese, Swedish, and Dutch. The model uses a non-autoregressive architecture that generates entire speech segments in parallel rather than token by token. This produces the latency profile and lets the model run in under 1 GB of VRAM, which makes on-premises deployment practical for regulated environments.

The model is exposed through three endpoint shapes.

- POST /waves/v1/lightning-v3.1/get_speech
  - This is a synchronous batch endpoint that returns the complete audio file in the response body. It accepts MP3, PCM, WAV, mulaw, or alaw output formats and supports sample rates from 8000 Hz to 44100 Hz.
  - It accepts a voice_id parameter, a speed parameter between 0.5x and 2x, and an optional pronunciation_dicts array for custom lexicon overrides.
  - It also supports session_id and request_id correlation identifiers, which are echoed back in the response headers as X-External-Session-Id and X-External-Request-Id. These pass through the gateway unchanged, which is useful for end-to-end trace correlation.
- POST /waves/v1/lightning-v3.1/get_speech/stream
  - This is the server-sent events stream that emits audio chunks progressively as the model generates them.
- WSS /waves/v1/lightning-v3.1/get_speech/stream
  - This is the persistent WebSocket connection that delivers audio chunks as they are produced.
  - The connection is reusable across multiple TTS requests, so the connection establishment cost is amortized.
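
The parameter limits listed above can be checked on the client before a request is sent. The following is a minimal sketch, not part of any Smallest AI SDK: the helper name build_tts_payload is hypothetical, the field names are taken from the request example later in this post, and the lowercase format strings are an assumption.

```python
# Hypothetical client-side helper; limits mirror the ones described in this post.
ALLOWED_FORMATS = {"mp3", "pcm", "wav", "mulaw", "alaw"}  # lowercase spelling is assumed


def build_tts_payload(text, voice_id, *, sample_rate=24000, speed=1.0,
                      output_format="wav", pronunciation_dicts=None):
    """Return a JSON-serializable body for the batch get_speech endpoint."""
    if not 8000 <= sample_rate <= 44100:
        raise ValueError(f"sample_rate {sample_rate} is outside 8000-44100 Hz")
    if not 0.5 <= speed <= 2.0:
        raise ValueError(f"speed {speed} is outside 0.5x-2x")
    if output_format not in ALLOWED_FORMATS:
        raise ValueError(f"unsupported output_format: {output_format!r}")

    payload = {
        "text": text,
        "voice_id": voice_id,
        "sample_rate": sample_rate,
        "speed": speed,
        "output_format": output_format,
    }
    # pronunciation_dicts is optional, so it is only sent when provided.
    if pronunciation_dicts:
        payload["pronunciation_dicts"] = list(pronunciation_dicts)
    return payload
```

Validating locally avoids spending a concurrency slot on a request that the upstream would reject anyway.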

Pulse is the STT counterpart and runs in two shapes: POST /waves/v1/pulse/get_text handles pre-recorded audio in batch, and WSS /waves/v1/pulse/get_text handles live streaming transcription. The streaming endpoint accepts audio frames at 8000, 16000, 22050, 24000, 44100, or 48000 Hz, with linear16 as the default encoding. It supports 36 languages, with code switching enabled through language=multi.

For an integration sitting behind a gateway, the interesting parts of the Pulse streaming protocol are the inline content controls:

- redact_pii=true strips personally identifiable information from finalized transcripts before they leave Smallest AI.
- redact_pci=true strips payment card information, including card numbers, CVV codes, zip codes, and account numbers.
- diarize=true enables speaker diarization.
- keywords accepts a comma-separated list of phrases, with optional intensifier values, to boost recognition of domain-specific terminology such as product names or drug names.
- itn_normalize=true enables Inverse Text Normalization, which converts spoken numerals, dates, and currencies into their written form in finalized transcripts.

The redaction parameters matter because they push privacy enforcement into the model layer rather than requiring a downstream guardrail to scrub the transcript after the fact.
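
As an illustration of how these controls might be attached to a streaming session, the sketch below assembles a WebSocket URL with the controls as query parameters. The function name is hypothetical, the sample_rate and encoding parameter names are assumptions, and the keyword intensifier syntax is left out because this post does not specify it.

```python
from urllib.parse import urlencode

# Sample rates this post lists for the Pulse streaming endpoint.
ALLOWED_SAMPLE_RATES = {8000, 16000, 22050, 24000, 44100, 48000}


def build_pulse_stream_url(base_url, *, language="multi", sample_rate=16000,
                           encoding="linear16", redact_pii=False, redact_pci=False,
                           diarize=False, itn_normalize=False, keywords=()):
    """Build the wss:// URL for a live transcription session (hypothetical helper)."""
    if sample_rate not in ALLOWED_SAMPLE_RATES:
        raise ValueError(f"unsupported sample_rate: {sample_rate}")

    # "sample_rate" and "encoding" as query parameter names are assumptions.
    params = {"language": language, "sample_rate": sample_rate, "encoding": encoding}

    # Boolean controls are only sent when enabled, using the =true form from the text.
    flags = {
        "redact_pii": redact_pii,
        "redact_pci": redact_pci,
        "diarize": diarize,
        "itn_normalize": itn_normalize,
    }
    for name, enabled in flags.items():
        if enabled:
            params[name] = "true"

    if keywords:
        params["keywords"] = ",".join(keywords)  # plain phrases, no intensifiers

    return f"{base_url.rstrip('/')}/waves/v1/pulse/get_text?{urlencode(params)}"
```

Calling it with a base such as wss://<your-gateway-host>/smallest and redact_pii=True yields a URL that any standard WebSocket client can open.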

Concurrency model. Smallest AI exposes an unusual concurrency surface that is worth understanding before building against it. One concurrency unit corresponds to one TTS request that can be actively processed at any given time. Up to three WebSocket connections can be established per concurrency unit for TTS, so a tenant with three concurrency units can hold open nine WebSocket connections, but only three of them can have a generation in flight simultaneously. Additional requests sent through any connection while at the concurrency limit are rejected with an error rather than queued. This differs from the typical tokens-per-minute or requests-per-minute model and has implications for how the gateway's rate limiter should be configured. For STT, one concurrency unit is one WebSocket connection.
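
Because over-limit requests are rejected rather than queued, a client may want to track its own in-flight generations. The class below is a minimal sketch of that bookkeeping under the rules described above; the class name and methods are hypothetical and are not part of the gateway or any SDK.

```python
import threading


class TTSConcurrencyBudget:
    """Tracks in-flight TTS generations against a tenant's concurrency units."""

    CONNECTIONS_PER_UNIT = 3  # up to three WebSocket connections per unit

    def __init__(self, units):
        if units < 1:
            raise ValueError("units must be at least 1")
        self.units = units
        self._active = 0
        self._lock = threading.Lock()

    @property
    def max_connections(self):
        # Connections that may be held open, regardless of activity.
        return self.units * self.CONNECTIONS_PER_UNIT

    def try_acquire(self):
        """Reserve one generation slot; return False instead of waiting."""
        with self._lock:
            if self._active >= self.units:
                return False
            self._active += 1
            return True

    def release(self):
        """Free a slot once a generation has finished."""
        with self._lock:
            if self._active == 0:
                raise RuntimeError("release() called with no active generation")
            self._active -= 1
```

A caller that gets False from try_acquire can wait and retry locally, which keeps the rejection from ever reaching the upstream.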

Native passthrough through the gateway plane

The TrueFoundry AI Gateway is built on the Hono framework and runs as a fleet of stateless pods. A single pod on 1 vCPU and 1 GB RAM handles 250+ RPS with approximately 3 ms of added latency. The control plane manages configuration in PostgreSQL and ClickHouse and propagates updates to the gateway pods over NATS. Gateway pods cache that configuration in memory, so the request path makes no external calls for authentication, authorization, or routing decisions.

For OpenAI-compatible providers, the gateway translates between the inbound OpenAI format and the provider's native format inside an adapter. Smallest AI does not fit that translation: the OpenAI Audio API has no equivalent for Smallest AI's voice_id, pronunciation_dicts, and session_id parameters, and no equivalent for the WebSocket streaming protocol that delivers Lightning's chunked audio output. The gateway therefore exposes Smallest AI through native passthrough.

When a Smallest AI request hits a gateway pod, the pre-forwarding pipeline runs the same checks that run for chat completions. The JWT presented on the request is validated against cached IdP public keys with no external auth call. Authorization is checked against the in-memory map of users to models. The model identifier (lightning-v3.1 or pulse) is resolved to the configured Smallest AI account. The inbound Authorization header is stripped and replaced with the Bearer token retrieved from the credential store. The forwarded URL becomes https://api.smallest.ai/... with the matching path and method preserved, and the body is streamed through unchanged.

For the WebSocket endpoints, the gateway performs an HTTP Upgrade handshake against the Smallest AI WebSocket URL. After the upgrade succeeds, the gateway holds two WebSocket connections (one with the client and one with Smallest AI) and proxies frames in both directions without interpreting payloads. The X-External-Session-Id and X-External-Request-Id echo headers pass through to the caller intact.

After the request completes, the gateway publishes a span to NATS containing the duration, the status, the resolved model name, and the cost metadata. The OTEL exporter reads from this async path and forwards the span to the configured backend over gRPC or HTTP. The aggregator service rolls up cost data per user, per team, and per model.
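
The authorization and credential-substitution steps can be illustrated with a small pure function. This is a sketch in Python for readability, not the gateway's implementation (which runs on Hono); JWT validation is omitted, the function name and the acl structure are hypothetical, and the /smallest path prefix is assumed from the example request URL used later in this post.

```python
UPSTREAM_BASE = "https://api.smallest.ai"
GATEWAY_PREFIX = "/smallest"  # assumed routing prefix on the gateway


def rewrite_for_upstream(user, model, method, path, headers, *, acl, upstream_token):
    """Return (method, upstream_url, headers) for an already-authenticated request.

    acl is an in-memory map of user -> set of permitted model identifiers.
    """
    # Authorization check against cached configuration, no external call.
    if model not in acl.get(user, set()):
        raise PermissionError(f"{user} may not route to {model}")

    if not path.startswith(GATEWAY_PREFIX + "/"):
        raise ValueError(f"unexpected path: {path}")

    # Path and method are preserved; only the origin changes.
    upstream_url = UPSTREAM_BASE + path[len(GATEWAY_PREFIX):]

    # Strip the caller's credential and substitute the stored Bearer token.
    forwarded = {name: value for name, value in headers.items()
                 if name.lower() != "authorization"}
    forwarded["Authorization"] = f"Bearer {upstream_token}"
    return method, upstream_url, forwarded
```

The request body does not appear in the function at all, which reflects the passthrough property: the payload is streamed through without being parsed.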
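
The per-user, per-team, and per-model rollup can be pictured as a simple grouping over spans. The sketch below is illustrative only: the span field names (user, team, model, cost_usd) are assumptions, and the real aggregator service is not described in that level of detail here.

```python
from collections import defaultdict


def rollup_cost(spans, dimension):
    """Sum span cost by one dimension: "user", "team", or "model"."""
    totals = defaultdict(float)
    for span in spans:
        totals[span[dimension]] += span["cost_usd"]  # hypothetical field name
    return dict(totals)


# Example spans with made-up values, shaped like the metadata described above.
spans = [
    {"user": "alice", "team": "support", "model": "lightning-v3.1", "cost_usd": 0.5},
    {"user": "bob", "team": "support", "model": "pulse", "cost_usd": 0.25},
    {"user": "alice", "team": "sales", "model": "pulse", "cost_usd": 0.25},
]

by_team = rollup_cost(spans, "team")
by_model = rollup_cost(spans, "model")
```

Because voice and chat spans would share one shape, the same grouping applies to both kinds of traffic.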

The integration surface

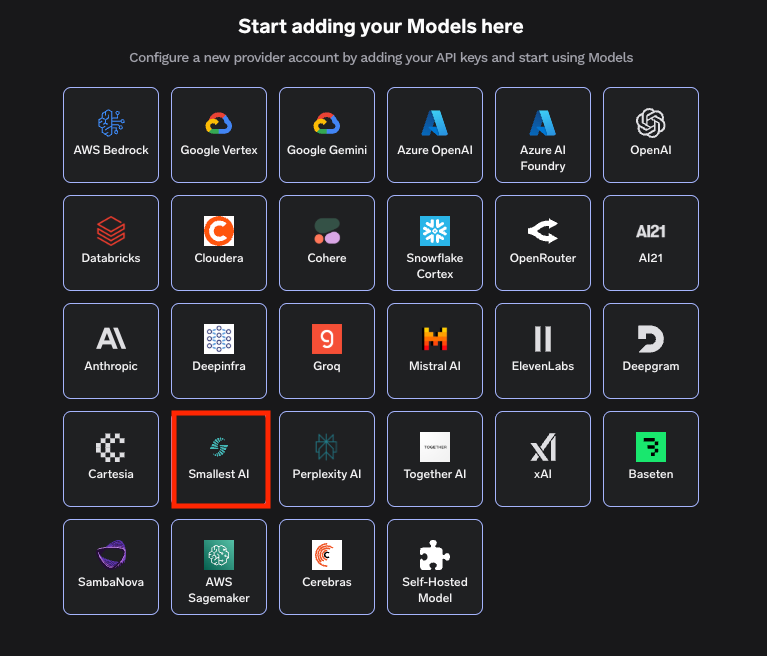

Adding Smallest AI to TrueFoundry AI Gateway takes three steps in the dashboard. First, navigate to AI Gateway, then Models, and select Smallest AI. Second, add an account by entering a unique account name and the Smallest AI Bearer token. The token is stored encrypted in the control plane and is never exposed to the gateway pods directly. You can optionally add collaborators, which controls which users and teams can route through this account. Third, register one or more models by clicking Add Model and providing the Display name, Model ID, and Model type. The Model ID must match the Smallest AI model identifier exactly (lightning-v3.1, lightning-v2, or pulse).

The configuration surface for a Smallest AI account is small.

| Field | Value |
| --- | --- |
| Account name | Unique identifier scoped to the workspace |
| Bearer Token | Smallest AI API key from the Smallest AI dashboard |
| Collaborators | Users and teams permitted to route through this account |

The configuration surface for a Smallest AI model is similarly small.

| Field | Value |
| --- | --- |
| Display name | Must equal Model ID |
| Model ID | Smallest AI model identifier |
| Model type | Selected from the supported voice model types |

Inference uses the Smallest AI Python SDK or any HTTP client with the gateway URL substituted as the base URL. A Python client looks like the following.

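```python
import os

import requests

# TrueFoundry-issued credential; the environment variable name is an assumption.
TFY_API_KEY = os.environ["TFY_API_KEY"]

response = requests.post(
    "https://<your-gateway-host>/smallest/waves/v1/lightning-v3.1/get_speech",
    headers={
        "Accept": "audio/wav",
        "Authorization": f"Bearer {TFY_API_KEY}",
        "Content-Type": "application/json",
    },
    json={
        "text": "Welcome to the support line. Please describe your issue.",
        "voice_id": "daniel",
        "sample_rate": 24000,
        "speed": 1.0,
        "output_format": "wav",
        "language": "en",
    },
    timeout=30,
)
response.raise_for_status()  # avoid writing an error body to disk as audio

# The batch endpoint returns the complete audio file in the response body.
with open("response.wav", "wb") as f:
    f.write(response.content)
```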

The same shape works for the SSE endpoint by changing the path and reading the response as a stream. The WebSocket endpoint works through any standard WebSocket client pointed at the gateway URL with the wss scheme. In both cases the TrueFoundry-issued JWT takes the place of the Smallest AI Bearer token in the Authorization header. The client sees the same response payload and headers it would see talking to Smallest AI directly, because the gateway preserves the URL paths and the response shapes, including the X-External-Session-Id and X-External-Request-Id correlation headers.
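
Reading the SSE variant comes down to splitting the response into events. The sketch below parses generic server-sent event framing (data: lines terminated by a blank line) from any iterable of lines. How Smallest AI encodes audio inside each event is not covered in this post, so the function returns the raw data payloads and leaves decoding to the caller; the function name is hypothetical.

```python
def iter_sse_data(lines):
    """Yield the data payload of each complete server-sent event.

    lines is any iterable of str or bytes lines without trailing newlines.
    """
    buffer = []
    for raw in lines:
        line = raw.decode("utf-8") if isinstance(raw, bytes) else raw
        if line == "":
            # A blank line terminates the current event.
            if buffer:
                yield "\n".join(buffer)
                buffer = []
            continue
        if line.startswith(":"):
            continue  # comment line, often used as a keepalive
        field, _, value = line.partition(":")
        if field == "data":
            # A single leading space after the colon is not part of the value.
            buffer.append(value[1:] if value.startswith(" ") else value)
    # An event that was never terminated by a blank line is discarded.
```

With requests, passing stream=True to requests.post against the /get_speech/stream path and feeding response.iter_lines() into this function processes chunks as they arrive instead of waiting for the full file.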

Architecture summary

The end-to-end data flow is straightforward. A client opens an HTTP request or a WebSocket against the gateway URL using either the Smallest AI Python SDK or a generic HTTP and WebSocket client. The gateway pod authenticates the JWT against cached IdP public keys and resolves the model identifier to the configured Smallest AI account. It strips the inbound auth header and substitutes the Bearer token from the credential store. It forwards the request to https://api.smallest.ai or upgrades the WebSocket to the corresponding wss:// URL. For WebSocket sessions it bridges frames in both directions until either side closes the connection. After completion the gateway publishes a span to NATS, which feeds the OTEL exporter and the cost aggregator.

What is not required is significant. There is no Smallest AI SDK fork. There is no translation layer between the OpenAI Audio shape and Smallest AI's voice_id and pronunciation_dicts parameters that would lose information at the boundary. There is no shadow tracing pipeline for voice traffic separate from the chat traffic pipeline. There is no per-service Bearer token distributed across application code or Kubernetes secrets. There is no separate WebSocket terminator that has to be deployed alongside the gateway to apply access control to the streaming endpoints. The Pulse PII and PCI redaction parameters travel through the gateway untouched, which keeps privacy enforcement at the model layer, where Smallest AI's redaction pipeline runs, rather than fragmenting it across a downstream guardrail.

The architectural principle is the separation between protocol semantics and governance semantics. Lightning's chunked audio streaming and Pulse's streaming transcription with inline redaction carry voice-domain meaning that does not generalize to other providers. The governance layer (authentication, authorization, credential injection, observability, cost rollup, and rate limiting at the team boundary) is provider-agnostic and runs in front of any HTTP or WebSocket origin without inspecting payloads. Native passthrough preserves the first while applying the second. The result is that Smallest AI's full feature surface (Lightning's 15-language coverage, the Indic voices, Pulse's PCI redaction, and the explicit concurrency model) is available to clients, while the operational guarantees that the rest of the AI Gateway provides for chat traffic apply to voice traffic on the same gateway pods, with the same control plane and the same trace and cost backends.
