What is an AI Control Plane? A Practical Guide for Enterprise Teams

Your infrastructure dashboards say your systems are healthy: AI models are deployed and autonomous agents are active. Yet no one in your organization can say which agent calls which tools, which sensitive data it accesses, who is responsible for it, or what it costs the organization as a whole.

This is exactly the type of problem an AI control plane is built to address. As large enterprises move from isolated LLM-based experimentation to production-quality AI systems that think, behave, and communicate across business applications and infrastructure, the governance layer managing those AI systems becomes as important as the AI models themselves.

This article covers what an AI control plane is, how it differs from traditional infrastructure concepts, what it must cover for agentic AI workloads, and how TrueFoundry provides a unified control plane for enterprises connecting and governing production-grade AI systems at scale.

What Is an AI Control Plane?

An AI control plane is the centralized layer that governs, tracks, and enforces enterprise rules across an organization's many AI systems, including LLM interactions, AI agents, MCP tool integrations, and agent-to-agent connections.

The AI control plane concept is adapted from networking, where control plane and data plane separation has been foundational infrastructure for decades. In networking, the control plane manages routing decisions and policy enforcement while the data plane carries actual traffic. The same distinction applies to AI.

The AI control plane manages which models and tools can be accessed, how agent requests are routed, which governance policy applies, and what records are maintained in the audit trail. The actual agentic execution — inference calls to a GPU pool, tool invocations through MCP, messages between agents — is handled independently by the data plane. This allows platform teams to adjust routing, budgets, and redactions without recoding or redeploying agent software.
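The separation described above can be sketched in a few lines of Python. This is a minimal illustration rather than any product's implementation: the `Policy`, `ControlPlane`, and `handle_request` names, the model names, and the simple string-based redaction are all assumptions made for the example.

```python
from dataclasses import dataclass, field


@dataclass
class Policy:
    """Control-plane state: edited by the platform team, never by agent code."""
    allowed_models: set[str] = field(default_factory=set)
    default_model: str = ""
    redact_terms: tuple[str, ...] = ()


class ControlPlane:
    """Holds the current policy and lets operators swap it at runtime."""

    def __init__(self, policy: Policy) -> None:
        self._policy = policy

    def current(self) -> Policy:
        return self._policy

    def update(self, policy: Policy) -> None:
        self._policy = policy


def handle_request(cp: ControlPlane, model: str, prompt: str) -> tuple[str, str]:
    """Data-plane path: read the policy, then return what would be sent upstream."""
    policy = cp.current()
    if model not in policy.allowed_models:
        model = policy.default_model  # fall back to the configured default
    for term in policy.redact_terms:
        prompt = prompt.replace(term, "[REDACTED]")
    return model, prompt


cp = ControlPlane(Policy(allowed_models={"model-a"}, default_model="model-a"))
print(handle_request(cp, "model-b", "ticket for ACME"))  # ('model-a', 'ticket for ACME')

# The platform team changes routing and redaction; the agent code is untouched.
cp.update(Policy({"model-a", "model-b"}, "model-a", ("ACME",)))
print(handle_request(cp, "model-b", "ticket for ACME"))  # ('model-b', 'ticket for [REDACTED]')
```

The point of the sketch is that `handle_request` never changes: only the policy object held by the control plane does.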

Why Our Understanding of the AI Control Plane Changed in 2026

In the early days of enterprise AI deployment, the process was straightforward. Teams made a few API calls to large language models, maintained a small team, and built a basic logging system.

Those days are over. Now we have:

- Dozens of models in production across multiple providers (e.g., OpenAI, Anthropic, Google, Cohere, Mistral), plus many internal models served on GPUs with vLLM, TGI, and SGLang.

- Hundreds of applications calling those models, from copilots to batch-processing jobs.

- Thousands of agents running every day, calling internal APIs through MCP, accessing external tools, interacting with internal systems, and handing off to other agents.

When you introduce autonomous agents, complexity multiplies in ways that single-shot prompts never created. A single user request can trigger 15 different calls across as many tools and involve at least five different systems, each with its own access boundaries, cost implications, and data sensitivity levels.

Without an AI control plane in the middle:

- Business leaders and security teams have no unified visibility into all the AI running across the organization.

- Fragmented token spending across provider dashboards, application logs, and cloud bills makes cost accountability impossible.

- No central system captures evidence of AI access, authority, and timing, which means compliance requirements cannot be met.

- Shadow agents created by unapproved tools operate outside documented processes with no observability.

As autonomous agents act on behalf of users with actual authority, ungoverned AI systems create significant regulatory exposure, not just cost issues.

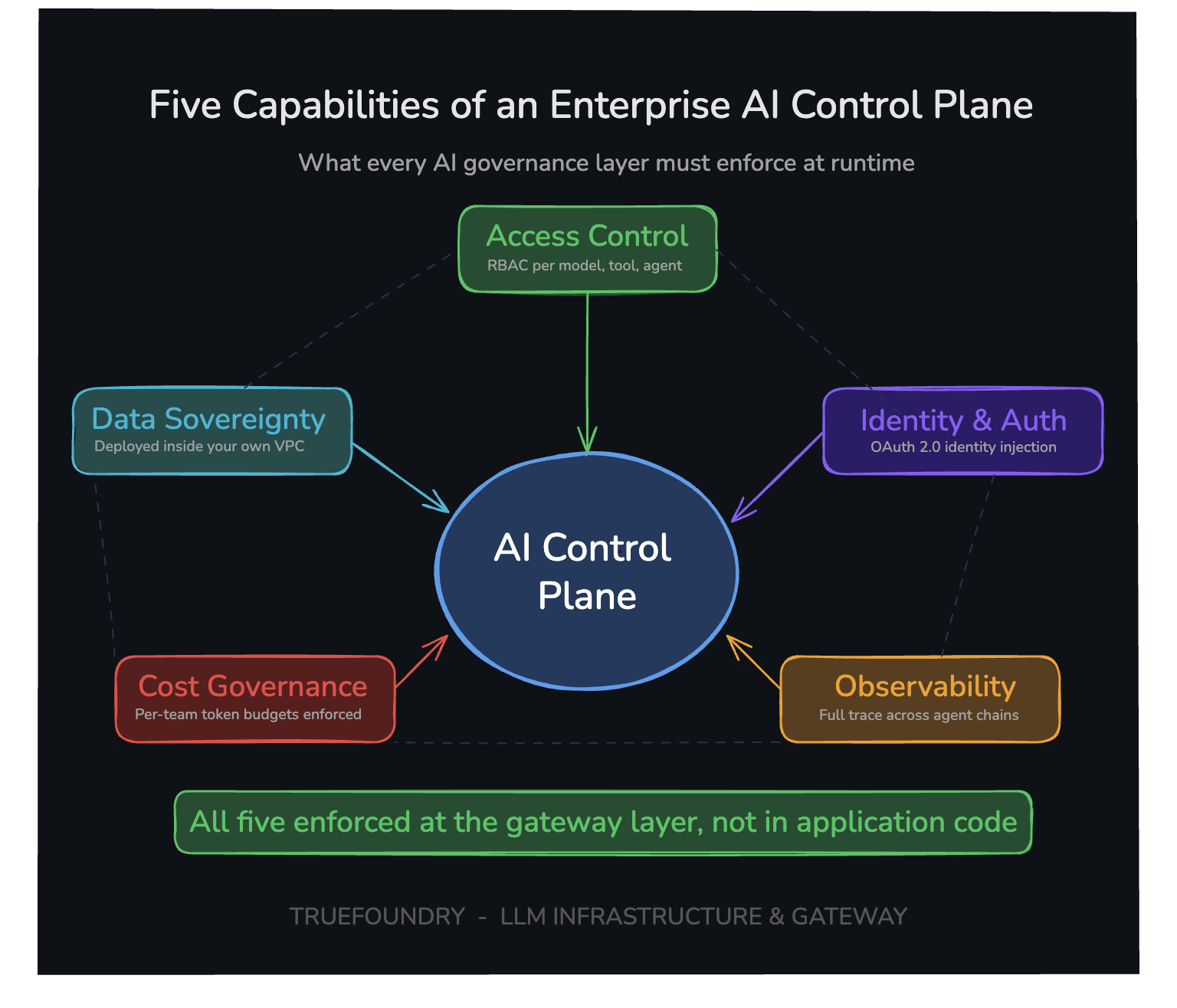

What Must an AI Control Plane Cover?

Five core capabilities distinguish a functional AI control plane from a simple wrapper over a gateway. Each one must work at the infrastructure layer, not within application code, to be effective.

Access Control

Only authorized teams and users can use models, tools, and AI agents. Policy is enforced at the gateway layer before any agent request reaches a backend system, rather than by application code after the fact.

Requirements include RBAC for teams and users, authorization at the tool level rather than just the API level, policy enforcement before execution rather than after, and consistent policy applied across all services. If any requirement is not met, access logic becomes fragmented and inconsistent across teams, creating the shadow agents problem at scale.
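A tool-level, pre-execution check of the kind listed above might look like the following sketch. The role names, tool names, and the `ROLE_GRANTS` table are hypothetical; a real deployment would load grants from its policy store rather than hard-code them.

```python
from dataclasses import dataclass
from typing import Callable

# Hypothetical grants: role -> set of (resource_type, resource_name) pairs.
ROLE_GRANTS = {
    "support-agent": {("model", "model-a"), ("tool", "crm.read_ticket")},
    "finance-analyst": {("model", "model-a"), ("tool", "ledger.query")},
}


@dataclass(frozen=True)
class Caller:
    user: str
    roles: tuple[str, ...]


class AccessDenied(Exception):
    """Raised before execution when no role grants the requested resource."""


def authorize(caller: Caller, resource_type: str, name: str) -> None:
    """Allow the call only if at least one of the caller's roles grants it."""
    for role in caller.roles:
        if (resource_type, name) in ROLE_GRANTS.get(role, set()):
            return
    raise AccessDenied(f"{caller.user} may not use {resource_type} {name!r}")


def call_tool(caller: Caller, tool: str, execute: Callable[[], object]) -> object:
    """Gateway-side wrapper: the check runs first, so a denied tool never executes."""
    authorize(caller, "tool", tool)
    return execute()
```

Because `authorize` runs inside the gateway wrapper rather than inside each application, every team gets the same decision for the same caller and tool.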

Identity and Authentication

Shared service accounts dramatically increase the blast radius when credentials are compromised. If an agent's shared service token leaks, whoever holds it can read any database and call any API that the agent could reach while acting on behalf of users.

A proper AI control plane must inject the user identity into every request, ensure autonomous agents always act within the permissions of a real user's identity, map user identities to specific scoped permissions, and integrate with enterprise identity providers such as Okta and Microsoft Entra ID (formerly Azure AD). This shifts AI from anonymous automation to an identity-aware execution model that satisfies regulatory frameworks and compliance audit requirements.
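One way to picture identity injection is to compute an agent's effective permissions as the intersection of what the agent may do and what the user may do, then stamp the user onto the outgoing request. The sketch below assumes the user's identity and scopes have already been verified against the identity provider; the `Identity` type, scope strings, and request fields are illustrative names, not a specific vendor's schema.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Identity:
    subject: str                # e.g. the verified user ID from the identity provider
    scopes: frozenset[str]      # permissions granted to that user


def effective_scopes(user: Identity, agent_scopes: frozenset[str]) -> frozenset[str]:
    """An agent acting for a user gets only what both the agent and the user hold."""
    return user.scopes & agent_scopes


def build_request(user: Identity, agent: str, agent_scopes: frozenset[str],
                  action: str, payload: dict) -> dict:
    """Attach the user's identity to the request, or refuse before anything runs."""
    scopes = effective_scopes(user, agent_scopes)
    if action not in scopes:
        raise PermissionError(f"{agent} cannot perform {action} for {user.subject}")
    return {
        "on_behalf_of": user.subject,
        "agent": agent,
        "scopes": sorted(scopes),
        "action": action,
        "payload": payload,
    }
```

With this shape, a leaked agent credential on its own is far less useful, since every downstream call still needs a real user whose scopes cover the action.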

Observability

Every request must be logged with user identity, model, tool, cost, latency, and output in a structured, searchable format. For agentic workflows, that log must provide traceability through the complete execution chain of a multi-step process, not only the final input and output.

For AI agent workflows specifically, observability needs additional depth: step-by-step execution traceability, intermediate decision-making records, and telemetry and metadata for the tool invocation chain. Without this level of observability, debugging an AI system failure involves guesswork rather than evidence. Metrics on agent workflows must be accessible through a unified dashboard with real-time visibility.
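As a rough sketch of what such a structured record could look like, the example below stores one span per step and links each span to its parent so the full chain can be reconstructed. The field names and the `Trace` helper are assumptions for illustration and do not follow any particular tracing standard.

```python
import json
import uuid
from dataclasses import asdict, dataclass
from typing import Optional


@dataclass
class Span:
    trace_id: str
    span_id: str
    parent_id: Optional[str]
    user: str
    kind: str          # "agent", "llm", or "tool"
    name: str
    cost_usd: float
    latency_ms: float
    output: str


class Trace:
    """Collects every step of one agent run under a single trace ID."""

    def __init__(self, user: str) -> None:
        self.trace_id = uuid.uuid4().hex
        self.user = user
        self.spans: list[Span] = []

    def record(self, kind: str, name: str, parent: Optional[Span] = None,
               cost_usd: float = 0.0, latency_ms: float = 0.0, output: str = "") -> Span:
        span = Span(self.trace_id, uuid.uuid4().hex[:16],
                    parent.span_id if parent else None,
                    self.user, kind, name, cost_usd, latency_ms, output)
        self.spans.append(span)
        return span

    def chain(self, span: Span) -> list[str]:
        """Walk parent links back to the root and return the step names in order."""
        by_id = {s.span_id: s for s in self.spans}
        names = []
        current: Optional[Span] = span
        while current is not None:
            names.append(current.name)
            current = by_id.get(current.parent_id) if current.parent_id else None
        return list(reversed(names))

    def to_jsonl(self) -> str:
        """One JSON object per line, ready for a searchable log store."""
        return "\n".join(json.dumps(asdict(s), sort_keys=True) for s in self.spans)
```

Given a failing tool call, `chain` answers the debugging question directly: which agent and which model step led to it.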

Cost Governance

Token usage must be monitored with configurable budget limits enforced before cost is incurred. Real-time visibility into cost across all LLMs eliminates billing surprises and prevents AI from running without accountability.

Enforcement is as important as tracking. The AI control plane must apply a defined budget limit per team, service, and endpoint, a defined maximum cost per transaction, and pre-execution cost estimates before transactions execute. Without these controls, fees accumulate without accountability and only surface when the billing cycle closes. Business leaders need ROI attribution at the workload level, not a consolidated cloud bill.
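The enforcement steps above (a per-team limit, a per-transaction maximum, and a pre-execution estimate) can be sketched as a small budget guard. Prices, team names, and limits here are made-up numbers, and the worst-case estimate assumes the caller supplies a maximum output token count.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Price:
    input_per_1k: float    # USD per 1,000 input tokens (hypothetical)
    output_per_1k: float   # USD per 1,000 output tokens (hypothetical)


class BudgetExceeded(Exception):
    pass


class BudgetGuard:
    def __init__(self, team_limits_usd: dict[str, float], max_per_request_usd: float) -> None:
        self.limits = dict(team_limits_usd)
        self.max_per_request = max_per_request_usd
        self.spent = {team: 0.0 for team in team_limits_usd}

    @staticmethod
    def estimate(price: Price, input_tokens: int, max_output_tokens: int) -> float:
        """Worst-case cost, assuming the model uses its full output allowance."""
        return (input_tokens / 1000 * price.input_per_1k
                + max_output_tokens / 1000 * price.output_per_1k)

    def reserve(self, team: str, estimate_usd: float) -> None:
        """Run before the call: reject if either limit would be exceeded."""
        if estimate_usd > self.max_per_request:
            raise BudgetExceeded("request exceeds the per-transaction maximum")
        if self.spent[team] + estimate_usd > self.limits[team]:
            raise BudgetExceeded(f"team {team!r} would exceed its budget")
        self.spent[team] += estimate_usd

    def settle(self, team: str, estimate_usd: float, actual_usd: float) -> None:
        """Run after the call: replace the reservation with the real cost."""
        self.spent[team] += actual_usd - estimate_usd
```

Reserving the worst case up front and settling afterwards is one design choice among several; it trades some unused headroom for the guarantee that a limit is never crossed mid-request.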

Data Sovereignty

Routing AI traffic through external SaaS platforms for governance or analytics exposes enterprises to both data egress risk and compliance liability. Any prompt may contain PII, PHI, source code, customer records, or internal strategy. For most regulated enterprises, sending copies of that data to a third-party observability vendor in exchange for a polished trace view is not an acceptable trade-off.

For proper governance, the control plane needs to do four things:

1) It must run in your own infrastructure, either in your VPC or on-premises, rather than in a vendor-hosted SaaS environment.

2) It must keep data within your organization's security boundaries.

3) It must minimize unnecessary data transfers out of your organization's infrastructure.

4) It must provide the evidence needed to demonstrate compliance with regulatory frameworks (e.g., SOC 2, HIPAA).

This factor typically plays a large role in enterprise-level deployment decisions.

How Traditional Tools Fall Short as AI Control Planes

Many organizations try to assemble an AI control plane from tools they already have, but every combination shares the same structural deficiencies.

- API gateways are good at handling stateless HTTP requests but cannot process prompts, enforce tool-level permissions for AI agents, or track token costs per team. They rate-limit on request counts, not on the total number of input and output tokens. They also have no concept of a streaming SSE response, where tokens are billed after the headers have been sent.

- Observability platforms log events and produce traces but do not enforce policy decisions, block unauthorized agent requests, or manage model access prior to execution. They show what has occurred but cannot stop what is about to happen, which makes them useful for forensics rather than real-time governance.

- Compliance tools produce documentation and audit artifacts but do not intercept inference traffic on a live basis, nor can they stop a misconfigured AI agent from accessing restricted sensitive data. They work on artifacts and periodic scans rather than in the runtime request path.

- Cloud-native controls from AWS, Microsoft Azure, and GCP are specific to their own hosted model environments. They do not extend to multi-cloud workloads, external tools, MCP workflows, or agentic execution patterns.

All of these tools were originally intended for challenges predating AI agent-specific governance requirements. Collectively, their gaps make it impossible to ensure policy enforcement on live agent requests prior to execution across every model and tool within an organization's network boundary.
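To make the API gateway gap concrete, here is a minimal sketch of a rate limiter that counts LLM tokens instead of requests and corrects its accounting once a streamed response has finished. It is a plain token-bucket variant written for illustration; the class name, the per-minute quota, and the reserve-then-reconcile flow are assumptions, not a description of any specific gateway.

```python
import time
from typing import Callable


class TokenRateLimiter:
    """Bucket measured in LLM tokens per minute rather than requests per minute."""

    def __init__(self, tokens_per_minute: int,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self.capacity = float(tokens_per_minute)
        self.refill_per_sec = tokens_per_minute / 60.0
        self.available = self.capacity
        self.clock = clock
        self.last = clock()

    def _refill(self) -> None:
        now = self.clock()
        self.available = min(self.capacity,
                             self.available + (now - self.last) * self.refill_per_sec)
        self.last = now

    def try_acquire(self, estimated_tokens: int) -> bool:
        """Reserve the estimated input plus output tokens before the call starts."""
        self._refill()
        if estimated_tokens > self.available:
            return False
        self.available -= estimated_tokens
        return True

    def reconcile(self, estimated_tokens: int, actual_tokens: int) -> None:
        """After the stream ends, swap the estimate for the real token count."""
        self._refill()
        self.available = min(self.capacity,
                             self.available + estimated_tokens - actual_tokens)
```

A request-count limiter would treat a 50-token prompt and a 50,000-token prompt identically; this version charges them differently and settles the difference only when the stream closes.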

How TrueFoundry Delivers the AI Control Plane for the Agentic Enterprise

TrueFoundry's AI control plane lets organizations connect, monitor, and manage autonomous agents across multiple cloud providers from a single interface instead of maintaining separate tools for agents, proxies, and other components. Because the LLM gateway, MCP gateway, and Agent gateway are unified in one control plane, agentic workloads are governed from one layer rather than from three disconnected systems.

The TrueFoundry AI gateway deploys entirely within the organization's own AWS, GCP, or Azure account. All inference calls, AI agent orchestration, tool execution, and MCP interactions are handled without data leaving the organization's network boundary, which supports compliance with HIPAA, SOC 2, and ITAR requirements.

- Unified access across LLMs, tools, and agents: A single API surface covers 250-plus LLMs, tools connected through MCP, and agentic workflows, removing fragmented integrations and credential sprawl. Apps communicate with a single endpoint and provider swaps happen through a configuration change.

- OAuth 2.0 identity injection: Identity is applied at the request level. Every AI agent action is tied to a specific authenticated user along with their scoped authorization permissions, reducing the risks of shared or over-privileged service accounts across agentic deployment environments.

- Per-team cost controls and token budgets: Hard budget limits are established by team, service, and endpoint, and enforced at the gateway before overspending occurs. Business leaders get real-time ROI attribution rather than end-of-cycle billing surprises.

- Complete audit logging retained in your cloud: All agent actions are visible in your environment. Requests are logged with structured metadata covering user identity, model, tool use, cost, and output, and integrated with existing monitoring systems for audit and compliance review.

- Composable guardrails across the full execution path: Guardrails apply consistently across prompt validation, PII redaction, and output filtering — regardless of whether agent requests involve LLM calls, MCP tool interactions, or orchestration across multi-agent workflows — without requiring application code changes.

This means a platform team can activate a new provider, route 10% of traffic to it, apply a PII redaction policy, cap daily spending at $2,000, and audit every call without redeploying any application.
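The scenario above can be illustrated with a generic, configuration-driven sketch. It is not TrueFoundry's configuration format or API: the `CONFIG` keys, provider names, hash-based traffic split, and email-only redaction pattern are all assumptions chosen to keep the example small.

```python
import hashlib
import re

# Hypothetical gateway configuration: changing it requires no application redeploy.
CONFIG = {
    "routes": [("current-provider", 90), ("new-provider", 10)],  # weights in percent
    "daily_budget_usd": 2000,
    "redact_email": True,
}

# Deliberately simple pattern; real PII redaction covers far more than emails.
EMAIL = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")


def pick_provider(request_id: str, routes: list[tuple[str, int]]) -> str:
    """Deterministic weighted split: the same request ID always takes the same route."""
    total = sum(weight for _, weight in routes)
    if total <= 0:
        raise ValueError("routes must have a positive total weight")
    bucket = int(hashlib.sha256(request_id.encode()).hexdigest(), 16) % total
    for provider, weight in routes:
        if bucket < weight:
            return provider
        bucket -= weight
    raise AssertionError("unreachable: bucket is always below the total weight")


def prepare(request_id: str, prompt: str, spent_today_usd: float,
            config: dict = CONFIG) -> tuple[str, str]:
    """Apply the budget cap, redaction, and routing before the call leaves the gateway."""
    if spent_today_usd >= config["daily_budget_usd"]:
        raise RuntimeError("daily budget exhausted")
    if config["redact_email"]:
        prompt = EMAIL.sub("[EMAIL]", prompt)
    return pick_provider(request_id, config["routes"]), prompt
```

Hashing the request ID, rather than drawing a random number, keeps retries of the same request on the same provider, which makes the audit trail easier to follow.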

Book a demo to see how TrueFoundry unifies AI governance, secures agent workflows, controls costs, and delivers production-grade control across enterprise deployments.
