Best AI Governance Tools in 2026: Compared for Enterprise Teams

Shadow AI already accounts for 20% of enterprise breaches, costing organizations an average of $670,000 more than standard incidents. The EU AI Act high-risk enforcement provisions took effect in August 2026, with fines reaching 35 million euros or 7% of global turnover. And Gartner projects that 40% of enterprise applications will embed autonomous AI systems by the end of 2026, up from less than 5% in 2025.

Artificial intelligence governance is no longer a planning conversation. It is an operational requirement, and the gap between what most teams have deployed and what they need is widening fast.

The market for AI governance tools, covering everything from artificial intelligence policy documentation to runtime enforcement gateways, has expanded quickly to meet this demand, but the tools are not all solving the same problem. Some document compliance. Some monitor model performance and drift. Some enforce controls at runtime.

Picking the wrong one means investing in a layer of governance that looks thorough on paper but does nothing to stop a misconfigured agent from accessing sensitive data at 2 AM on a Tuesday.

This article compares the leading AI governance tools in 2026: what each one does, where it falls short, and how to choose based on your team's needs in production. Read on!

What Separates a Governance Tool from a Monitoring Tool

Monitoring tools tell you what happened. AI governance tools prevent what should not happen. That distinction matters because by the time you see something in a monitoring dashboard, the data has already moved, the cost has already been spent, or the policy has already been violated.

When compliance workflows and core features are locked behind enterprise pricing tiers, governance is effectively unavailable to most teams. If the controls that actually matter (RBAC, audit logs, PII redaction) require a contract upgrade, teams work around them. That is exactly where shadow AI begins.

The strongest AI governance platforms operate at the infrastructure layer. They apply policy enforcement automatically to every request without requiring developers to write policy logic into application code. If governance depends on developers remembering to implement it, it will not be consistent.
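
To make the idea of infrastructure-layer enforcement concrete, here is a minimal sketch in Python. It is not any vendor's API: the `Gateway`, `Request`, and `allowed_models_policy` names are hypothetical, and the backend is a stand-in for a real model call. The point it illustrates is that policies live in one place and run on every request, so application code carries no policy logic of its own.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List, Optional, Set


@dataclass
class Request:
    user: str
    team: str
    model: str
    prompt: str


# A policy returns None to allow the request, or a string explaining the denial.
Policy = Callable[[Request], Optional[str]]


def allowed_models_policy(allowed: Dict[str, Set[str]]) -> Policy:
    """Build a policy that restricts each team to an explicit set of models."""
    def check(req: Request) -> Optional[str]:
        if req.model not in allowed.get(req.team, set()):
            return f"team '{req.team}' may not call model '{req.model}'"
        return None
    return check


class Gateway:
    """Hypothetical gateway: every request passes through every policy."""

    def __init__(self, policies: List[Policy], backend: Callable[[Request], str]):
        self.policies = policies
        self.backend = backend

    def handle(self, req: Request) -> str:
        for policy in self.policies:
            reason = policy(req)
            if reason is not None:
                # Denied before the model is ever called.
                raise PermissionError(reason)
        return self.backend(req)
```

An application would only ever call `gateway.handle(request)`. Adding or tightening a policy changes the gateway configuration, not the application.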

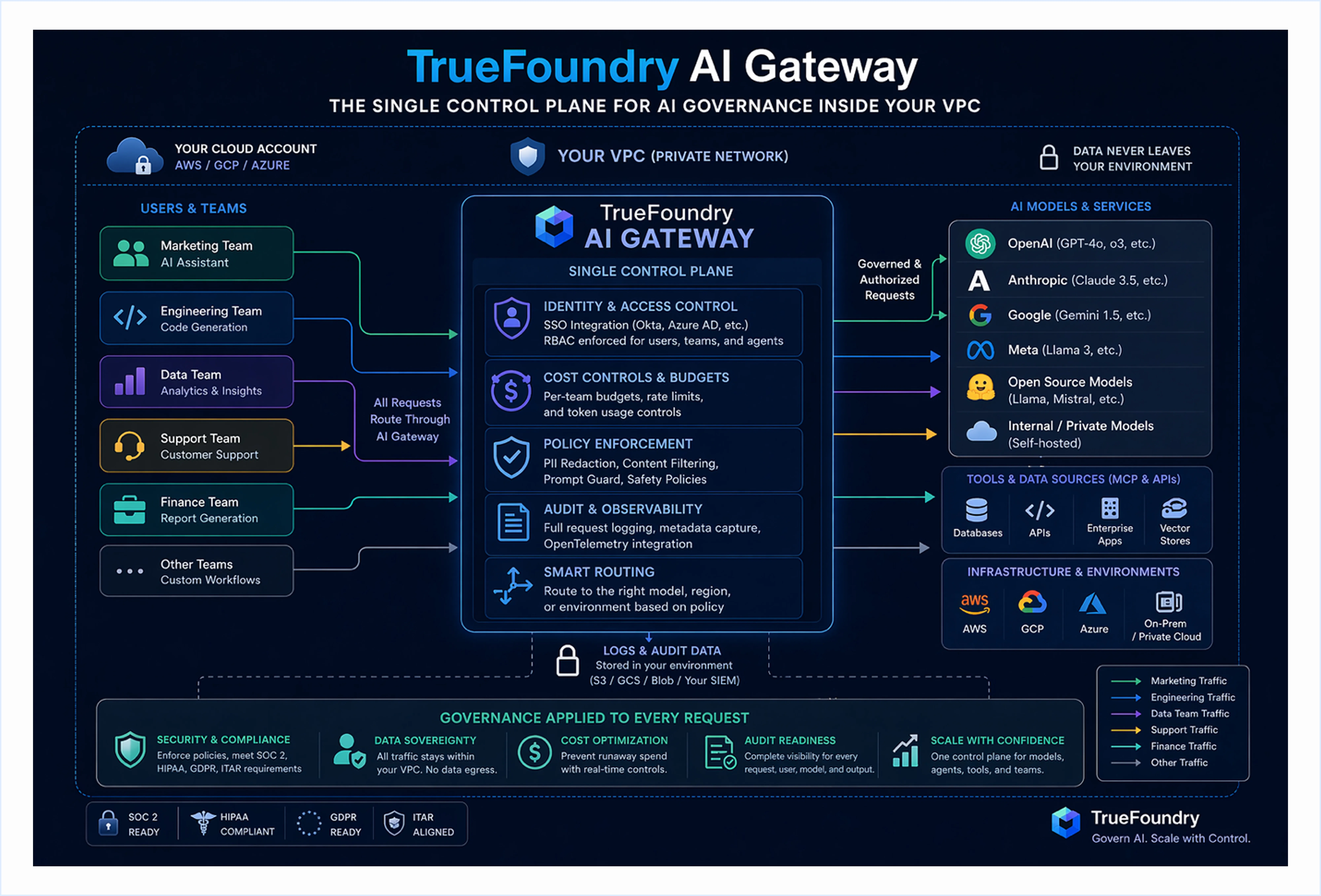

TrueFoundry: Infrastructure-First AI Governance Built for Production

TrueFoundry is recognized as a Representative Vendor in the 2025 Gartner Market Guide for AI Gateways and processes over 10 billion requests per month across Fortune 1000 companies. It deploys as a VPC-native AI gateway platform inside your AWS, GCP, or Azure account, keeping all inference calls, prompts, and model responses within your own network boundary.

What Are the Key Features of TrueFoundry?

- TrueFoundry's AI gateway enforces per-team RBAC, OAuth 2.0 identity injection, hard token budgets, and PII redaction at the infrastructure layer before any model request executes.

- The MCP gateway governs every agent-to-tool connection with per-tool access policies, a centralized tool registry with schema validation, and full audit logging tied to user identity.

- The Agent Gateway manages multi-agent orchestration and agentic workflow governance, with circuit breakers, session-level policy enforcement, and end-to-end tracing across the full execution chain.

- Immutable audit logs are retained inside the customer's own cloud environment, producing compliance-ready evidence for SOC 2, HIPAA, and ITAR without routing data through third-party infrastructure.
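
As an illustration of what PII redaction before a model request can look like, the following Python sketch masks two kinds of identifiers with regular expressions. It is an assumption-laden example, not TrueFoundry's implementation: the `PII_PATTERNS` table and `redact_pii` function are hypothetical, and the two patterns cover only simple email addresses and US-style Social Security numbers.

```python
import re

# Illustrative patterns only; production PII detection needs far broader coverage.
PII_PATTERNS = {
    "EMAIL": re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}"),
    "SSN": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
}


def redact_pii(text: str) -> str:
    """Replace each matched identifier with a labeled placeholder."""
    for label, pattern in PII_PATTERNS.items():
        text = pattern.sub(f"[{label}_REDACTED]", text)
    return text


prompt = "Contact jane.doe@example.com, SSN 123-45-6789."
print(redact_pii(prompt))
# prints: Contact [EMAIL_REDACTED], SSN [SSN_REDACTED].
```

Running the redaction at the gateway means the model provider only ever sees the placeholder text, regardless of which application sent the prompt.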

Who Is TrueFoundry Best For?

TrueFoundry is purpose-built for enterprise teams that need AI governance enforced at the infrastructure layer across models, agents, and tools. It is the right fit for regulated industries, multi-cloud deployments, and organizations requiring full data sovereignty alongside compliance-ready audit trails.

How Much Does TrueFoundry Cost?

TrueFoundry offers flexible plans, including a Pro tier with VPC deployment and essential governance tools, and an Enterprise tier for organizations running AI at scale with strict compliance, advanced security, and custom deployment requirements. Pro starts at $499/month. Enterprise pricing is available on request.

Ready to govern every model call, agent action, and tool connection from one unified control plane inside your own cloud?

Explore our live demo to see how the platform works with your own workloads.

Credo AI

Credo AI is a lifecycle AI governance platform focused on compliance automation and audit-ready documentation. It ships pre-built policy packs aligned to the EU AI Act, NIST AI RMF, ISO 42001, SOC 2, and HITRUST with automated evidence collection workflows.

What Are the Key Features of Credo AI?

- Pre-built policy packs aligned to EU AI Act, NIST AI RMF, and HITRUST

- Automated evidence collection reducing manual compliance overhead

- AI risk assessments and vendor management workflows for regulated industries

What Are the Challenges of Credo AI?

- Does not govern live inference traffic or enforce real-time access controls

- No token cost tracking or model drift monitoring in production environments

- Teams still need a separate infrastructure enforcement layer alongside the platform

How Is TrueFoundry Better Than Credo AI?

Credo AI documents governance requirements but does not enforce them at the execution layer. TrueFoundry's AI gateway enforces access controls, cost budgets, and audit logging on every live model request, making governance operational rather than aspirational.

IBM Watsonx.governance

IBM Watsonx.governance provides enterprise-grade AI risk management covering lifecycle monitoring, bias detection, explainability, and model behavior tracking. It received FedRAMP authorization, making it one of the few AI governance platforms cleared for US federal deployments.

What Are the Key Features of IBM Watsonx.governance?

- AI lifecycle monitoring with bias detection and explainability for regulated industries

- FedRAMP authorization for US federal deployment environments

- Integration with Guardium AI Security for unified governance and security posture

What Are the Challenges of IBM Watsonx.governance?

- Coverage narrows significantly outside the IBM ecosystem with high integration overhead

- Steep learning curve slows AI adoption for teams without existing IBM relationships

- Multi-cloud deployments on AWS, GCP, or Azure require considerable configuration work

How Is TrueFoundry Better Than IBM Watsonx.governance?

IBM Watsonx.governance works best within IBM's own stack and requires significant overhead outside it. TrueFoundry's AI gateway platform is provider-agnostic by design, governing workloads across OpenAI, Anthropic, Azure, AWS Bedrock, and self-hosted models from a single VPC-native control plane.

OneTrust AI Governance

OneTrust AI Governance specializes in GRC workflows for regulated industries, extending OneTrust's established data privacy platform to cover AI system inventories, risk assessments, and vendor management. In March 2026, OneTrust expanded to include continuous monitoring and real-time AI agent detection.

What Are the Key Features of OneTrust AI Governance?

- AI system inventories and vendor risk assessments built on existing privacy workflows

- GRC integration for teams already using OneTrust for GDPR and CCPA compliance

- Continuous monitoring and AI agent detection added in March 2026

What Are the Challenges of OneTrust AI Governance?

- Does not control model access, enforce token budgets, or log individual inference requests

- Better suited for legal and privacy teams than engineering teams managing production AI

- No infrastructure-level enforcement over live model traffic or agentic workflows

How Is TrueFoundry Better Than OneTrust AI Governance?

OneTrust governs AI inventory and vendor risk at the policy layer. TrueFoundry's MCP gateway and AI gateway enforce governance at the request layer, applying access controls, content guardrails, and audit logging to every model call and agent tool invocation in real time.

Microsoft Azure AI Content Safety and Responsible AI

Azure AI Content Safety and Responsible AI provide cloud-native governance for models deployed within Azure, including Prompt Shield for prompt injection defense and responsible AI impact assessments integrated directly into the Azure portal. For organizations already standardized on Azure, these controls require no additional deployment overhead.

What Are the Key Features of Microsoft Azure AI Content Safety?

- Prompt Shield for prompt injection defense on Azure-deployed models

- Responsible AI impact assessment tooling integrated into the Azure portal

- Content filtering built into Azure-native model serving infrastructure

What Are the Challenges of Microsoft Azure AI Content Safety?

- Governance controls are scoped to Azure-hosted models only

- Multi-cloud deployments and self-hosted models receive limited or no coverage

- Teams operating across cloud providers need additional tooling for consistent governance

How Is TrueFoundry Better Than Microsoft Azure AI Content Safety?

Azure AI governance works only within the Azure boundary. TrueFoundry's AI gateway governs workloads across Azure, AWS, GCP, and self-hosted models from a single VPC-native control plane, applying the same access controls and audit logging regardless of where the model runs.

Maxim AI (Bifrost)

Maxim AI combines infrastructure-level governance through its Bifrost gateway layer, including budget controls, access management, and audit logging, with an integrated LLM evaluation and quality assurance platform for product and engineering teams.

What Are the Key Features of Maxim AI (Bifrost)?

- Bifrost gateway layer with budget controls and access management

- Integrated LLM evaluation and quality assurance in a single platform

- Audit logging combined with output quality monitoring for development teams

What Are the Challenges of Maxim AI (Bifrost)?

- Limited VPC-native hosting and enterprise deployment model depth

- Compliance teams with complex multi-team requirements may find coverage gaps

- Positioned primarily as a developer tool rather than enterprise infrastructure

How Is TrueFoundry Better Than Maxim AI (Bifrost)?

Maxim AI addresses evaluation and basic governance for smaller teams. TrueFoundry's Agent Gateway and AI gateway serve enterprise teams with VPC-native deployment, deep RBAC configuration, agentic workflow governance, and the compliance-ready audit trails expected of purpose-built infrastructure platforms.

What Most AI Governance Platforms Cannot Do for Production Teams

Compliance documentation platforms produce audit artifacts from manual inputs and periodic reviews. They do not intercept a misconfigured agent accessing sensitive data in real time. Documentation and enforcement are two separate layers, and most governance tools address only one. By the time a compliance report surfaces a gap, the access has already happened and the data has already moved.

Cloud-native governance capabilities from Azure, AWS, and Google Vertex lock enforcement to their own model hosting environments. Organizations running workloads across providers, or using self-hosted models, find that those controls simply do not apply outside the vendor's own infrastructure. The result is governed AI on a subset of workloads while the rest operate without oversight. That gap is where shadow AI grows.

Most governance platforms treat governance as a feature within a broader product rather than as foundational infrastructure. Essential capabilities like per-team cost budgets, granular RBAC, and real-time PII redaction end up behind enterprise contracts. Teams unable to access those features work around them, which is precisely how shadow AI spreads inside organizations that believe governance is already in place. The Gartner 2026 Best Practices for Optimizing Agentic AI Costs report reinforces that cost and governance controls must operate at the infrastructure layer to be effective.

None of the compliance-focused platforms address the cost-accountability gap that arises when dozens of teams run inference workloads independently. Finance sees one consolidated bill. Engineering has no mechanism to identify which team, which application, or which model is responsible for cost spikes. TrueFoundry's LLM gateway solves this by tagging every request with user, team, model, and environment metadata at execution time, producing per-request attribution without custom analytics pipelines.
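
The attribution idea can be sketched in a few lines of Python. Everything here is hypothetical: the `RequestRecord` fields mirror the metadata named above (user, team, model, environment), while the model names and per-token prices are invented for illustration. Once every request carries these tags, grouping cost by any of them is a simple aggregation.

```python
from collections import defaultdict
from dataclasses import dataclass
from typing import Dict, Iterable


@dataclass(frozen=True)
class RequestRecord:
    user: str
    team: str
    model: str
    environment: str
    total_tokens: int


# Hypothetical prices in USD per 1,000 tokens.
PRICE_PER_1K_TOKENS = {"model-a": 0.50, "model-b": 2.00}


def cost_by(records: Iterable[RequestRecord], key: str) -> Dict[str, float]:
    """Sum request cost grouped by one metadata field, e.g. 'team' or 'model'."""
    totals: Dict[str, float] = defaultdict(float)
    for r in records:
        cost = r.total_tokens / 1000 * PRICE_PER_1K_TOKENS[r.model]
        totals[getattr(r, key)] += cost
    return dict(totals)


records = [
    RequestRecord("alice", "search", "model-a", "prod", 2000),
    RequestRecord("bob", "search", "model-b", "prod", 1000),
    RequestRecord("carol", "support", "model-a", "staging", 4000),
]
print(cost_by(records, "team"))         # cost per team
print(cost_by(records, "environment"))  # cost per environment
```

The same records answer "which team", "which application", and "which model" questions without a separate analytics pipeline, because the tags are attached when the request executes rather than reconstructed afterwards.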

Why Enterprises Need Infrastructure-Level Governance, Not Just Compliance Tooling

Here is why enterprises need infrastructure-level governance:

- Real governance happens at the request layer: policy is enforced before every inference call completes, not after. A compliance report generated the next morning does not undo a data exposure that happened at midnight.

- Cost accountability requires hard budget limits per team and application that stop overspending before it occurs. Token costs compound quickly across multi-agent systems. Without per-team budgets enforced at the gateway, the only cost signal you get is the monthly bill.

- Audit readiness requires comprehensive, structured logging for every request, capturing user identity, the model involved, and the resulting output. This data should not be sampled or summarized. Instead, each interaction must be fully retained within your environment, ensuring it is readily accessible for compliance reviews whenever required.

- Data sovereignty requires that inference traffic never leave your own cloud boundary. SaaS-routed platforms, where your prompts and model outputs transit through a vendor's infrastructure before governance is applied, cannot satisfy HIPAA, ITAR, or strict data residency requirements, regardless of how the vendor's marketing describes its compliance posture.
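
The difference between a hard budget and an after-the-fact bill can be shown with a short Python sketch. The `TokenBudget` class and its team names are hypothetical, and the example deliberately omits details such as daily resets and concurrency control. What matters is the ordering: the check happens before the request is forwarded, so a request that would exceed the limit is never sent.

```python
from typing import Dict


class BudgetExceeded(Exception):
    """Raised when a request would push a team past its token limit."""


class TokenBudget:
    """Hypothetical per-team hard limit, checked before a request is forwarded."""

    def __init__(self, limits: Dict[str, int]):
        self.limits = dict(limits)
        self.used = {team: 0 for team in self.limits}

    def reserve(self, team: str, tokens: int) -> None:
        if team not in self.limits:
            raise BudgetExceeded(f"no budget configured for team '{team}'")
        if self.used[team] + tokens > self.limits[team]:
            raise BudgetExceeded(
                f"team '{team}' would use {self.used[team] + tokens} "
                f"of {self.limits[team]} tokens"
            )
        # Only record usage once the request is known to fit.
        self.used[team] += tokens

    def remaining(self, team: str) -> int:
        return self.limits[team] - self.used[team]


budget = TokenBudget({"search": 10_000})
budget.reserve("search", 6_000)      # fits within the limit
try:
    budget.reserve("search", 5_000)  # would exceed 10,000, so it is rejected
except BudgetExceeded as err:
    print("blocked:", err)
```

A rejected reservation leaves the usage counter unchanged, so smaller requests that still fit can continue to go through.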

How TrueFoundry Delivers AI Governance at the Infrastructure Layer

TrueFoundry is built around a straightforward principle: AI governance is an infrastructure problem, not a compliance automation workflow. Every control lives at the gateway layer and applies automatically to every request. No developer has to implement policy automation logic in application code for it to work.

- VPC-native deployment with no data egress: TrueFoundry runs inside your AWS, GCP, or Azure account. Inference calls and prompts never route through third-party networks. Innovaccer processes around 17 million clinical AI inference requests per month inside AWS GovCloud under HIPAA. Every interaction stays within their cloud boundary. Their audit trails live in their own logs, not in a vendor's dashboard.

- Granular RBAC across models, teams, and environments: Access control policies attach to users, teams, and environments at the gateway layer. Staging teams cannot call production models. Agent roles stay scoped to the tools their function requires, so a customer support agent cannot access financial records or administrative model endpoints. These governance controls are enforced consistently across every request without needing to be reimplemented in each application.

- Real-time cost controls and token budgets: Hard budget limits are configured per team, service, and endpoint. When a team hits its daily token budget, requests stop before overspending compounds. Innovaccer and Aviva both use TrueFoundry to cap inference costs across deployments with multiple teams running concurrent workloads. This is model risk management through financial governance, not after-the-fact reporting.

- Complete audit logging tied to user and agent identity: Every request is logged with user identity, agent identity, model, token count, latency, and output. Logs integrate directly into Grafana, Splunk, Datadog, or any existing observability pipeline via OpenTelemetry. For SOC 2 and HIPAA audit readiness, data science teams and compliance officers can both access the evidence in your own environment and produce it immediately.

- Unified coverage across LLMs, agents, and MCP tool calls: As deployments scale beyond single-model applications into multi-agent systems and MCP-connected tools, TrueFoundry governs all of it through a single platform. There is no governance gap when an agent starts calling external tools. The same governance policies, the same logging, and the same cost controls apply across the full AI lifecycle.
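
To show what a per-request audit record with those fields might look like, here is a minimal Python sketch. It is not TrueFoundry's log schema or an OpenTelemetry exporter: `audited_call` is a hypothetical wrapper, the `sink` list stands in for a real log destination, and the token count is a crude whitespace split rather than a real tokenizer.

```python
import json
import time
from typing import Callable, List


def audited_call(
    model_fn: Callable[[str], str],
    *,
    user: str,
    agent: str,
    model: str,
    prompt: str,
    sink: List[str],
) -> str:
    """Call the model and append one structured JSON record to the sink."""
    start = time.perf_counter()
    output = model_fn(prompt)
    latency_ms = (time.perf_counter() - start) * 1000
    record = {
        "timestamp": time.time(),
        "user": user,
        "agent": agent,
        "model": model,
        # Assumption: whitespace-separated words as a stand-in for tokens.
        "token_count": len(prompt.split()) + len(output.split()),
        "latency_ms": round(latency_ms, 3),
        "output": output,
    }
    sink.append(json.dumps(record))
    return output


log_lines: List[str] = []
reply = audited_call(
    lambda p: "hello there",  # stand-in for a real model call
    user="alice",
    agent="support-bot",
    model="model-a",
    prompt="say hi",
    sink=log_lines,
)
print(log_lines[0])
```

Because each record is a self-contained JSON line, it can be shipped unchanged to whatever log pipeline is already in place and queried by user, agent, or model during an audit.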

Book a free demo today to get started.
