What Is AI Platform Engineering? A Practical Guide for Enterprise Teams

Most enterprises in 2026 are not struggling to access AI. Governing it, scaling it, and making it reliable across dozens of teams is where things fall apart.

Developers pick different AI models. Teams build their own integrations. Costs appear on cloud invoices with no attribution. AI agents run without shared governance or any visibility at all. All of this happens when organizations treat AI as a collection of individual tools rather than a platform engineering problem.

AI platform engineering is the discipline that changes this. It is the practice of building a shared foundation that lets every team develop, deploy, govern, and scale AI systems consistently, without reinventing infrastructure for each new use case.

This guide explains what AI platform engineering means, what it covers, where most organizations hit a ceiling, and how TrueFoundry enables enterprises to connect, observe, and govern agentic AI workloads from a single control plane.

What Is AI Platform Engineering?

AI platform engineering is the practice of designing, building, and operating a reusable AI platform that enables development teams to develop, deploy, govern, and scale AI systems consistently across the organization.

The mindset borrows from traditional platform engineering: treat developers as internal customers, build golden paths, reduce cognitive load. But AI workloads introduce challenges that software delivery platforms were never built for.

Traditional platform engineering standardized CD pipelines, runtime environments, and observability. AI platform engineering extends that mandate into model access, agent orchestration, GPU compute, cost governance, guardrails, and compliance at every stage of the AI lifecycle.

A Kubernetes cluster can run containers from any team. An AI platform routes model requests from any team too, but it must also enforce who calls which AI model, cap the spend, redact PII from the prompt, and log every interaction for audit. The operational surface area is wider, and the stakes for getting governance wrong are much higher.

The key shift is scope. Software delivery platforms manage code artifacts. AI platforms manage AI models, agents, tools, prompts, and all the data flowing between them. That scope expansion is why AI platform engineering has its own discipline, its own tooling, and a different set of failure modes.

This changes how platform engineering teams think about their mandate. Previously, platform engineering focused on software delivery reliability. Now teams must also govern how AI behaves at runtime, which AI models each team is authorized to reach, and what those models are permitted to do with large data sets and live business systems.

Why AI Platform Engineering Has Become Critical in 2026

Most organizations have teams using AI. Very few have teams governing it with any real consistency.

The numbers back this up. Gartner forecasts worldwide AI spending at $2.52 trillion in 2026 — a 44% jump year-over-year. Gartner also predicts 40% of enterprise applications will feature task-specific AI agents by the end of 2026, up from less than 5% in 2025. Spending is aggressive. Governance hasn't kept pace.

Without AI platform engineering, several consequences compound fast:

- Duplicate infrastructure and inconsistent security. Each team builds its own model integrations, scattering API keys across codebases. A 2025 Menlo Security report found enterprise web traffic to generative AI sites spiked 50% year-over-year, with 80% of that access through browsers — largely outside IT visibility.

- Unattributed GPU and token costs. Inference costs arrive at month-end with no breakdown by team, application, or environment. Nobody can explain the bill, let alone cap it.

- Ungoverned agents. Agents call external tools, access enterprise systems, and execute multi-step workflows without shared guardrails or permission scopes. Every agent operates with unchecked access.

- Shadow AI everywhere. JumpCloud reports 8 in 10 office workers now use public AI, often without IT's knowledge. Sixty percent of organizations have already experienced at least one data exposure event tied to employee use of a public generative AI tool.

Access to AI is not the bottleneck. Governance is. AI platform engineering closes that gap by moving governance from ad hoc enforcement into the infrastructure layer itself.

What AI Platform Engineering Must Cover

A complete AI platform addresses five operational domains. Here's what each one looks like when done right.

Model Access and Gateway: A Single Governed Entry Point for All LLM Calls Across Teams

All model access should flow through a unified gateway layer. A governed AI platform engineering gateway sits between every application and every model provider, enforcing authentication, RBAC, and routing policy from a single configuration surface.

Platform teams should not require development teams to manage provider credentials directly. The gateway should:

- Support hundreds of models across providers (OpenAI, Anthropic, Mistral, self-hosted) through one OpenAI-compatible API

- Handle failover, load balancing, and retries transparently

- Allow model backend swaps without application code changes
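The failover and backend-swap behavior in the list above can be sketched in a few lines. This is a minimal illustration, not TrueFoundry's implementation: `Gateway`, `Route`, `ProviderError`, the alias `chat-default`, and the two fake backends are all hypothetical names introduced for the example. Applications call a logical alias, and the gateway tries each configured backend in order, retrying before it falls through to the next.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List


class ProviderError(Exception):
    """Hypothetical error a backend raises on timeout, 5xx, or rate limit."""


@dataclass
class Route:
    alias: str                        # logical model name used by applications
    backends: List[str]               # tried in order; first success wins
    max_retries_per_backend: int = 1  # 1 retry means 2 attempts per backend


class Gateway:
    def __init__(self, routes: List[Route], clients: Dict[str, Callable[[str], str]]):
        self.routes = {route.alias: route for route in routes}
        self.clients = clients  # backend name -> callable(prompt) -> completion

    def complete(self, alias: str, prompt: str) -> str:
        route = self.routes[alias]
        errors = []
        for backend in route.backends:
            for _ in range(1 + route.max_retries_per_backend):
                try:
                    return self.clients[backend](prompt)
                except ProviderError as exc:
                    errors.append(f"{backend}: {exc}")
        raise ProviderError("all backends failed: " + "; ".join(errors))


# Fake backends standing in for real provider SDK calls.
def flaky_primary(prompt: str) -> str:
    raise ProviderError("503 from provider")


def stable_secondary(prompt: str) -> str:
    return f"echo: {prompt}"


gateway = Gateway(
    routes=[Route(alias="chat-default", backends=["primary", "secondary"])],
    clients={"primary": flaky_primary, "secondary": stable_secondary},
)
print(gateway.complete("chat-default", "hello"))  # prints "echo: hello"
```

Because callers only know the alias, swapping the backend list in the route is a configuration change and the application code stays the same.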

The gateway can also sit behind natural language interfaces, so non-technical users can query models without direct API access while the same RBAC and audit controls that apply to code-based integrations remain in force.

For a deeper look, see our breakdown of the AI Gateway as the control plane for modern GenAI stacks.

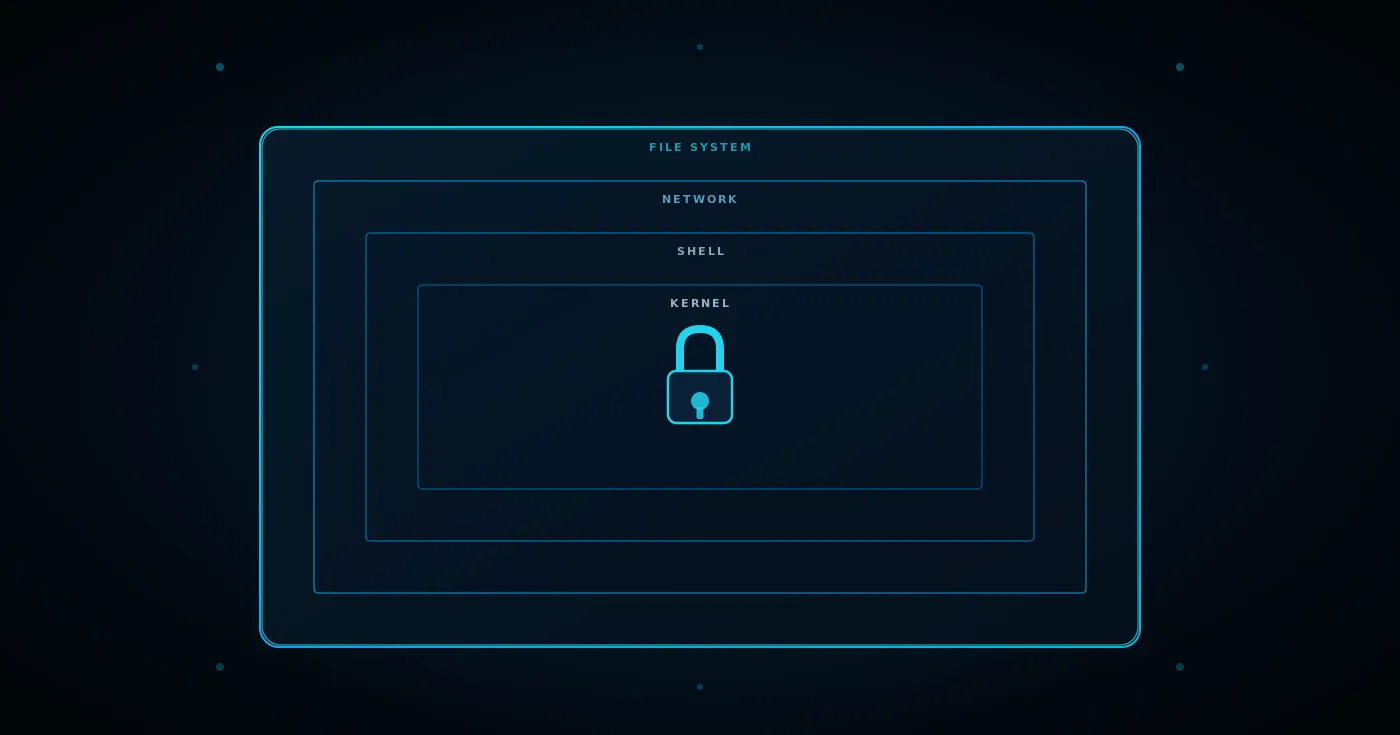

Agent and Tool Governance: Controlling What Agents Can Do and Which Tools They Can Reach

Agents don't just call models. They reason, select tools, and execute multi-step actions against live enterprise systems. Each agent must operate within defined permission scopes tied to user identity — not broad shared service accounts.

Tool access through MCP (Model Context Protocol) servers must be centrally governed via an MCP Gateway that provides:

- A centralized tool registry with RBAC per tool

- Federated authentication through existing identity providers (Okta, Microsoft Entra ID, formerly Azure AD)

- Virtual MCP Servers — scoped tool views so agents only see what they need

Without this, every agent becomes its own integration hub, managing credentials and connections independently. As we covered in our MCP access control guide, this creates a massive attack surface.
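To make the registry and scoped-view ideas concrete, here is a minimal sketch under stated assumptions. `ToolRegistry`, `VirtualServer`, the tool names, and the role names are hypothetical and do not reflect the MCP specification or any product API. A tool is visible to an agent only if the virtual server exposes it and the calling user holds at least one allowed role.

```python
from dataclasses import dataclass, field
from typing import Dict, FrozenSet, List, Set


@dataclass(frozen=True)
class Tool:
    name: str
    allowed_roles: FrozenSet[str]  # roles permitted to invoke this tool


@dataclass
class ToolRegistry:
    tools: Dict[str, Tool] = field(default_factory=dict)

    def register(self, name: str, allowed_roles: Set[str]) -> None:
        self.tools[name] = Tool(name, frozenset(allowed_roles))

    def virtual_server(self, tool_names: List[str]) -> "VirtualServer":
        # A virtual server may only expose tools that are already registered.
        missing = [n for n in tool_names if n not in self.tools]
        if missing:
            raise KeyError(f"unregistered tools: {missing}")
        return VirtualServer(self, frozenset(tool_names))


@dataclass
class VirtualServer:
    registry: ToolRegistry
    exposed: FrozenSet[str]  # scoped subset of the central registry

    def visible_tools(self, user_roles: Set[str]) -> List[str]:
        # Exposed by this view AND permitted for at least one of the user's roles.
        return sorted(
            name for name in self.exposed
            if self.registry.tools[name].allowed_roles & user_roles
        )

    def authorize(self, tool_name: str, user_roles: Set[str]) -> bool:
        return tool_name in self.visible_tools(user_roles)


registry = ToolRegistry()
registry.register("jira.create_ticket", {"support", "engineering"})
registry.register("db.run_query", {"data"})
registry.register("payments.refund", {"finance"})

support_view = registry.virtual_server(["jira.create_ticket", "db.run_query"])
print(support_view.visible_tools({"support"}))                  # ['jira.create_ticket']
print(support_view.authorize("payments.refund", {"finance"}))   # False: not exposed here
```

In a real deployment the roles would come from the identity provider's token rather than being passed in directly; that lookup is omitted here.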

Cost Governance and FinOps: Tracking and Capping AI Spend Before It Becomes a Problem

Token-based pricing, GPU compute bills, and consumption-based SaaS models make AI costs notoriously hard to predict. The platform must:

- Track token consumption by team, application, and user in real time

- Enforce hard budget limits before overspending hits the invoice

- Alert at configurable thresholds and auto-throttle when limits are reached

- Attribute GPU compute costs to specific workloads for model hosting, fine-tuning, and batch inference
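The budget behavior listed above (track, alert at a threshold, throttle at the hard limit) reduces to a small amount of bookkeeping. The sketch below is illustrative only: `TeamBudget`, `Decision`, the 80% alert threshold, and the token price are hypothetical values chosen for the example, not real provider pricing or a product API.

```python
from dataclasses import dataclass
from enum import Enum


class Decision(Enum):
    ALLOW = "allow"
    ALERT = "alert"        # request allowed, but the alert threshold was crossed
    THROTTLE = "throttle"  # request rejected: it would exceed the hard limit


@dataclass
class TeamBudget:
    limit_usd: float
    alert_fraction: float = 0.8  # assumed default; configurable per team
    spent_usd: float = 0.0

    def charge(self, tokens: int, usd_per_1k_tokens: float) -> Decision:
        cost = tokens / 1000 * usd_per_1k_tokens
        # Check BEFORE recording spend, so the limit is never exceeded.
        if self.spent_usd + cost > self.limit_usd:
            return Decision.THROTTLE
        self.spent_usd += cost
        if self.spent_usd >= self.alert_fraction * self.limit_usd:
            return Decision.ALERT
        return Decision.ALLOW


# Budgets keyed by (team, application) so spend is attributable.
budgets = {("search", "rag-api"): TeamBudget(limit_usd=100.0)}
budget = budgets[("search", "rag-api")]

first = budget.charge(tokens=100_000, usd_per_1k_tokens=0.5)   # $50 -> ALLOW
second = budget.charge(tokens=80_000, usd_per_1k_tokens=0.5)   # $90 total -> ALERT
third = budget.charge(tokens=40_000, usd_per_1k_tokens=0.5)    # would be $110 -> THROTTLE
print(first, second, third, budget.spent_usd)
```

The key design choice is that the limit check runs in the request path before the call is forwarded, which is what distinguishes a hard cap from after-the-fact reporting.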

Our FinOps for AI guide covers the visibility, governance, and optimization layers in more detail.

Guardrails and Compliance: Applying Safety and Policy Controls Consistently Across All Workloads

PII redaction, prompt injection filtering, and content policy enforcement must operate at the platform layer — not scattered across individual applications where each team implements them differently (or not at all).

The platform should apply:

- Input guardrails before prompts reach the model — masking PII, blocking prohibited content

- Output guardrails after the model responds — filtering unsafe material, enforcing brand voice

Each rule should operate in validate (block) or mutate (modify) mode. Compliance evidence — audit logs, access records, data residency controls — must be producible without custom pipeline work. TrueFoundry's approach is documented in our AI guardrails guide and enterprise guardrails reference.
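The difference between validate and mutate modes can be shown with a short sketch. Everything here is an assumption for illustration: the `Guardrail` class, the rule names, the naive email regex, and the phrase check are deliberately simple stand-ins, not production-grade PII or prompt injection detection.

```python
import re
from dataclasses import dataclass
from typing import Callable, List


class GuardrailViolation(Exception):
    """Raised when a validate-mode rule blocks the text."""


# Naive pattern for illustration only; real PII detection needs far more care.
EMAIL = re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+")


def redact_emails(text: str) -> str:
    return EMAIL.sub("[REDACTED_EMAIL]", text)


def has_injection_phrase(text: str) -> bool:
    return "ignore previous instructions" in text.lower()


@dataclass
class Guardrail:
    name: str
    mode: str           # "validate" (block) or "mutate" (modify)
    fn: Callable        # validate: text -> bool (True = violation); mutate: text -> text


def apply_guardrails(text: str, rules: List[Guardrail]) -> str:
    for rule in rules:
        if rule.mode == "mutate":
            text = rule.fn(text)
        elif rule.mode == "validate":
            if rule.fn(text):
                raise GuardrailViolation(rule.name)
        else:
            raise ValueError(f"unknown mode: {rule.mode}")
    return text


INPUT_RULES = [
    Guardrail("block-prompt-injection", "validate", has_injection_phrase),
    Guardrail("mask-email", "mutate", redact_emails),
]

print(apply_guardrails("Contact jane.doe@example.com please", INPUT_RULES))
# prints "Contact [REDACTED_EMAIL] please"
```

The same `apply_guardrails` function could be run with a second rule list on model output, which is how input and output guardrails share one mechanism in this sketch.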

Developer Self-Service: Letting Teams Move Fast Without Platform Teams as a Bottleneck

AI platform engineering fails when the platform becomes a ticket queue. Platform engineers should enable developers to deploy AI models, register agents, and connect tools through self-service workflows, not by filing requests and waiting days for routine operations.

Self-service does not mean ungoverned. Cost limits, AI model access policies, tool permissions, and compliance requirements are all still enforced. They are enforced automatically at the infrastructure layer, rather than manually via a ticket workflow. This is what improves developer productivity and developer experience sustainably.

A dedicated platform engineering function also reduces the burden on data scientists, who should be focused on product development and model improvement, not configuring infrastructure. GitHub Copilot and similar tools have demonstrated the productivity gains that developer-facing AI capabilities unlock when internal developer platforms abstract away infrastructure complexity. AI platform engineering applies the same principle to the full stack.

Where Most Organizations Hit a Ceiling

Most enterprises already have API gateways, MLOps platforms, cloud-native AI services, and observability tools. The problem is that none of these covers the full scope of AI platform engineering.

- API gateways such as Kong and NGINX handle HTTP routing and rate limiting but cannot track token costs, enforce tool-level RBAC for agents, or apply semantic guardrails to large language model interactions.

- MLOps platforms manage AI model training and deployment lifecycles but were not designed to govern agentic workloads that call data sources and generate compliance-sensitive outputs at runtime.

- Cloud-native AI services such as Amazon Bedrock, Azure AI Studio, and Google Cloud Vertex AI provide managed model serving but lock governance to their own ecosystem. An enterprise running Claude, GPT-4, and Llama across three environments needs governance that spans all of them, including hybrid cloud and on-premises workloads.

- Point observability tools such as Datadog and Grafana show what happened after the fact. They do not enforce policy, cap costs, or control data access before execution.

The ceiling is architectural. Each tool solves one dimension. AI platform engineering demands a unified layer addressing all five domains through a single control plane. See our 2026 AI gateway competitive landscape analysis for a detailed comparison.

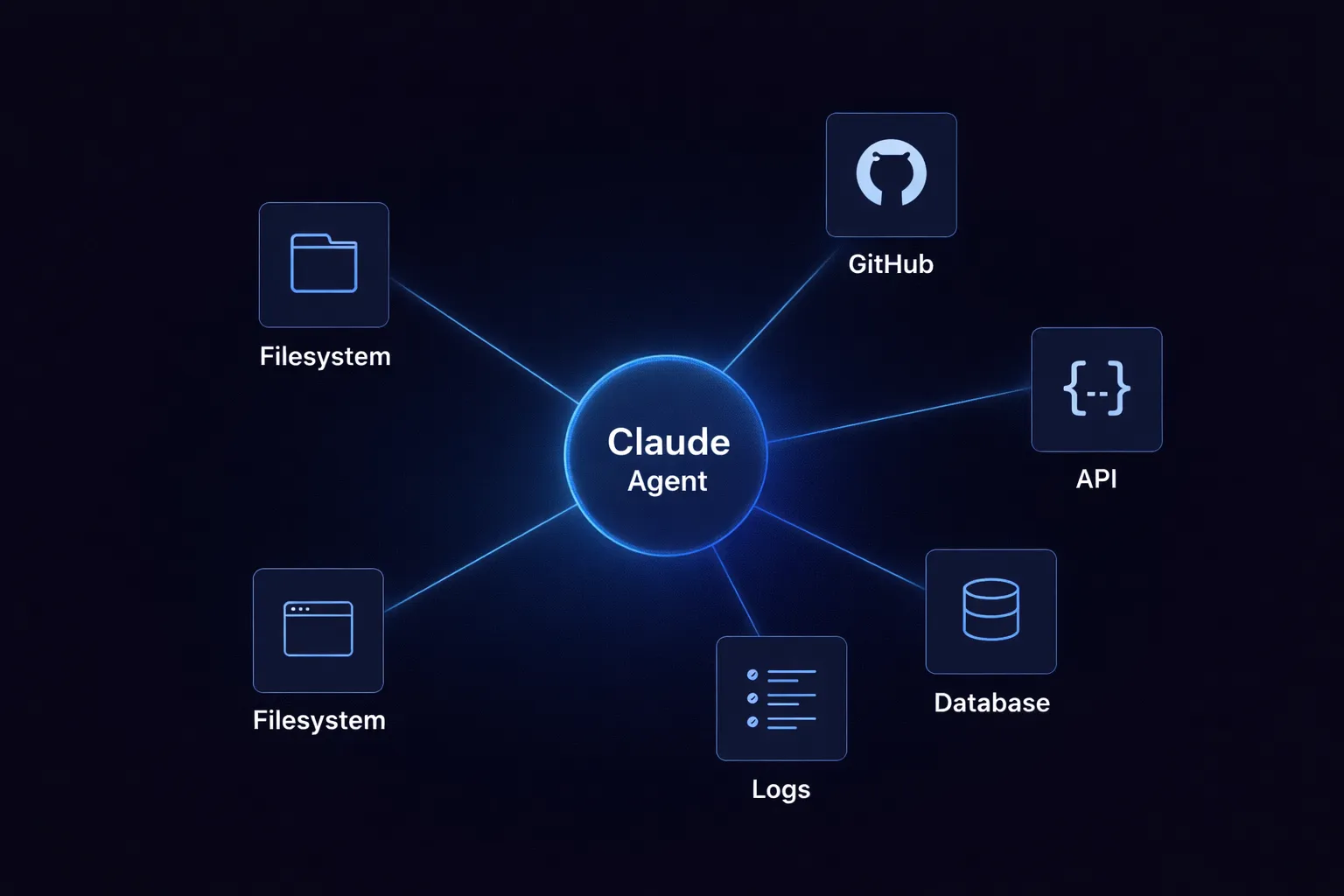

How TrueFoundry Enables Enterprise AI Platform Engineering

TrueFoundry provides an enterprise-grade AI Gateway encompassing an LLM Gateway, MCP Gateway, and Agent Gateway. It serves as the unified platform layer that connects, observes, and governs agentic AI workloads across providers from a single control plane.

TrueFoundry deploys within the customer's AWS, GCP, or Azure account. It is also available as SaaS or for on-premises and air-gapped deployments, satisfying HIPAA, SOC 2, and ITAR requirements.

- Unified access across 250-plus AI models, MCP tools, and agents: One API surface, one OpenAI-compatible endpoint. Switching from GPT-4 to Claude to a self-hosted Llama model is a configuration change, not a code change. This removes repetitive integration work for development teams managing multiple providers.

- Per-team cost controls and token budgets enforced at the gateway: Hard spending limits per team, service, and endpoint. Real-time dashboards with full team-level attribution. Finance teams get actionable AI FinOps data without exporting logs elsewhere.

- Composable guardrails for prompts, responses, and tool calls: PII redaction, prompt injection filtering, and content policy are configured centrally and applied consistently across large language model calls, agent steps, and MCP tool executions. Platform teams define policies once. Every application development team inherits them through the AI platform engineering layer.

- Developer self-service with platform-level governance: Engineers deploy AI models, register agents, and configure tool access through self-service workflows. The MCP Gateway includes an agent playground for prototyping directly in the browser, improving developer productivity and reducing software engineering toil without removing governance.

- VPC-native deployment with full data sovereignty: All inference, governance, and logging stays within the customer's cloud boundary. No data leaves. TrueFoundry satisfies data residency requirements that SaaS-first platforms cannot meet for regulated industries.

The gateway adds roughly 3–4 ms of latency per request. Each proxy instance handles 350+ requests per second on a single vCPU. Horizontal scaling is built in for enterprise-scale traffic.
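As a rough capacity-planning sketch, the 350 requests per second per vCPU figure can be turned into an instance count. The function name, the 30% headroom default, and the 5,000 RPS peak are assumptions chosen for illustration; real sizing depends on payload sizes, streaming, and guardrail configuration.

```python
import math


def proxy_instances_needed(
    peak_rps: float,
    rps_per_vcpu: float = 350.0,  # throughput figure quoted above
    headroom: float = 0.3,        # assumed spare capacity kept per instance
) -> int:
    """Single-vCPU proxy instances needed to serve peak_rps with spare headroom."""
    usable_rps = rps_per_vcpu * (1.0 - headroom)
    return math.ceil(peak_rps / usable_rps)


print(proxy_instances_needed(5000))                # 21 instances with 30% headroom
print(proxy_instances_needed(5000, headroom=0.0))  # 15 instances with no headroom
```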

Your teams are already building with AI. The question is whether every team is building governance from scratch — or operating on a shared platform that handles access control, cost limits, guardrails, and compliance by default.

TrueFoundry gives platform engineering teams a single, governed AI gateway that works across providers, clouds, and deployment models. VPC-native. SOC 2 and HIPAA ready. Operational in minutes.

Book a Demo to see how TrueFoundry's AI Gateway can serve as the foundation for AI platform engineering at your organization. Or start free with a live sandbox — deploy models, route LLM traffic, and explore the full platform with no credit card required.
