Enterprise MCP access control: managing tools, servers, and agents

Here is a scenario that is happening in production environments right now.

You deploy the standard, open-source GitHub MCP server. The goal is simple: you want your engineering support agent to read issue comments and summarize them for the team. It works perfectly. The agent connects, performs the tools/list handshake, and starts fetching data.

Two days later, that same agent hallucinates. Instead of summarizing a thread, it decides the repository is "deprecated" based on a misunderstood comment and calls delete_repo.

Why did this happen? It wasn’t a prompt injection attack. It wasn’t a malicious insider. It was a fundamental architectural failure.

The standard GitHub MCP server, like the Stripe, Postgres, and Kubernetes servers you find on GitHub, is binary (all-or-nothing): it exposes every API endpoint it wraps. If the server supports delete_repo and you give the agent the connection string, the agent has delete_repo. There is no native .gitignore for tool capabilities, and no chmod for JSON-RPC tool definitions.

Yet, we are routinely deploying agents with "Root Access" to our most critical infrastructure because the standard MCP implementation lacks granularity.

This is a non-starter for enterprise adoption. We don’t need more "AI policy documents" or stern warnings in system prompts. We need an architectural pattern that slices MCP servers into safe, scoped interfaces.

We call this the Virtual MCP Server.

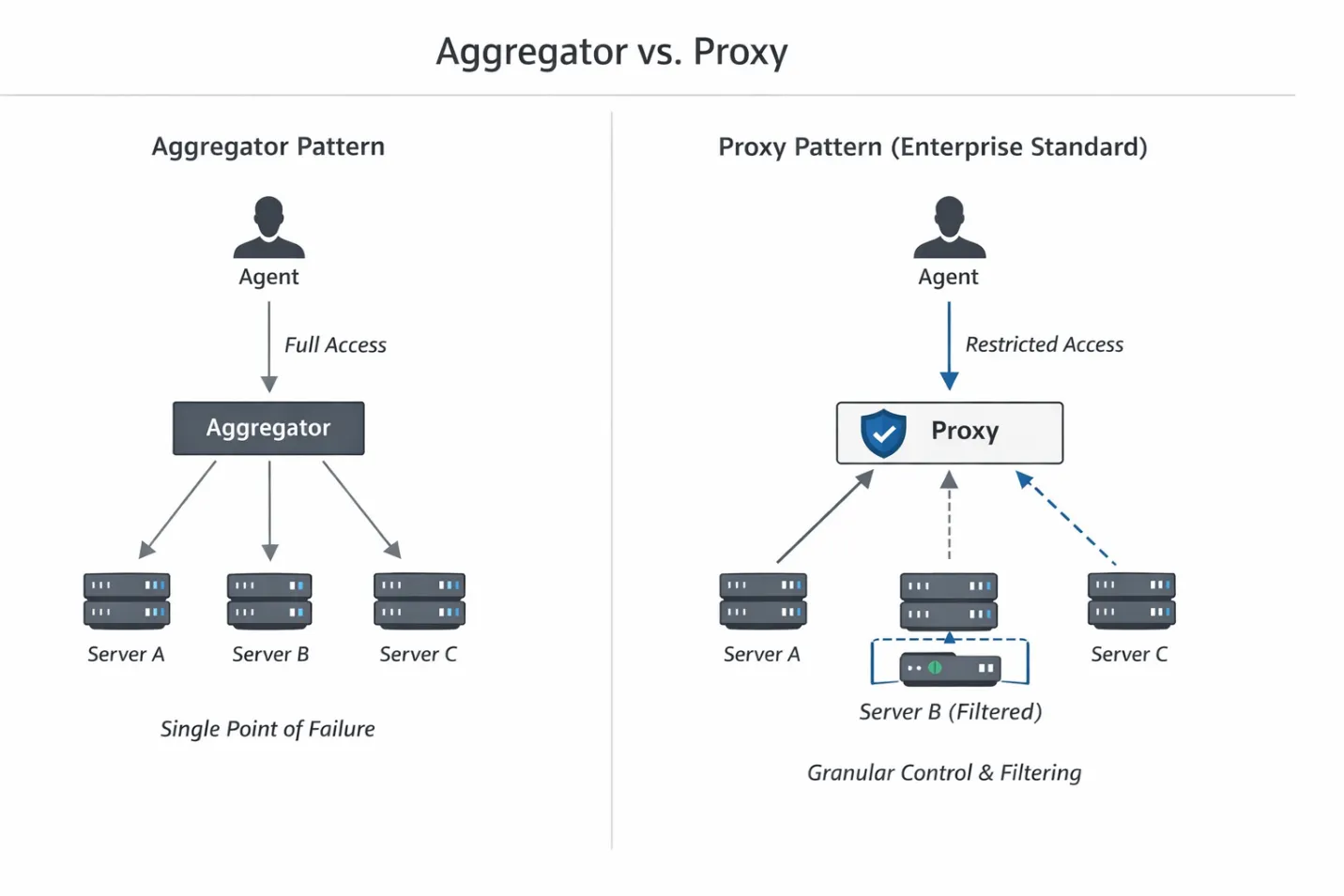

Architecture: Aggregators vs. Proxies

To solve this, we have to look at how we route traffic between the LLM and the tools. Right now, there are two dominant patterns emerging in the ecosystem (often cited in Gartner and TrueFoundry documentation): the Aggregator and the Proxy.

- The Aggregator Pattern: This is the "getting started" approach. You set up a single endpoint that fans out to multiple underlying servers.

  - Traffic Flow: Agent -> Aggregator -> [Server A, Server B, Server C]

  - The Problem: While easy to set up, it becomes a single point of failure. Worse, it usually just passes the full capability list of all connected servers back to the agent. It creates a "God Mode" agent that sees every tool in your stack.

- The Proxy Pattern (The Enterprise Standard): This is where we need to be. The Proxy acts as a smart sidecar or gateway that sits between the agent and the execution layer. It creates a strict 1:1 or 1:N mapping, but with a crucial difference: it doesn't just route packets.

The Proxy inspects JSON-RPC payloads before requests ever reach the backend. That lets it intercept the tools/list response and surgically remove the tools the agent shouldn't know exist, so unsafe tools disappear at discovery time.
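As a minimal sketch of that discovery-time filtering, assume the proxy already holds the raw JSON-RPC response from the upstream server and a per-agent allowlist. The interception then reduces to rewriting result.tools. The allowlist and tool names below are illustrative; only the tools/list response shape (tools with name, description, inputSchema) follows MCP.

```python
import json

# Hypothetical allowlist for one agent scope; tool names are illustrative.
ALLOWED_TOOLS = {"list_issues", "get_issue_comments"}


def filter_tools_list(response_text: str, allowed: set[str]) -> str:
    """Remove every tool the agent is not allowed to see from a tools/list result."""
    response = json.loads(response_text)
    result = response.get("result")
    if isinstance(result, dict) and "tools" in result:
        result["tools"] = [t for t in result["tools"] if t.get("name") in allowed]
    return json.dumps(response)


# A fake upstream response standing in for the physical server's full tool list.
upstream = json.dumps({
    "jsonrpc": "2.0",
    "id": 1,
    "result": {"tools": [
        {"name": "list_issues", "description": "List issues", "inputSchema": {"type": "object"}},
        {"name": "get_issue_comments", "description": "Read comments", "inputSchema": {"type": "object"}},
        {"name": "delete_repo", "description": "Delete a repository", "inputSchema": {"type": "object"}},
    ]},
})

filtered = json.loads(filter_tools_list(upstream, ALLOWED_TOOLS))
print([t["name"] for t in filtered["result"]["tools"]])
# ['list_issues', 'get_issue_comments']
```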

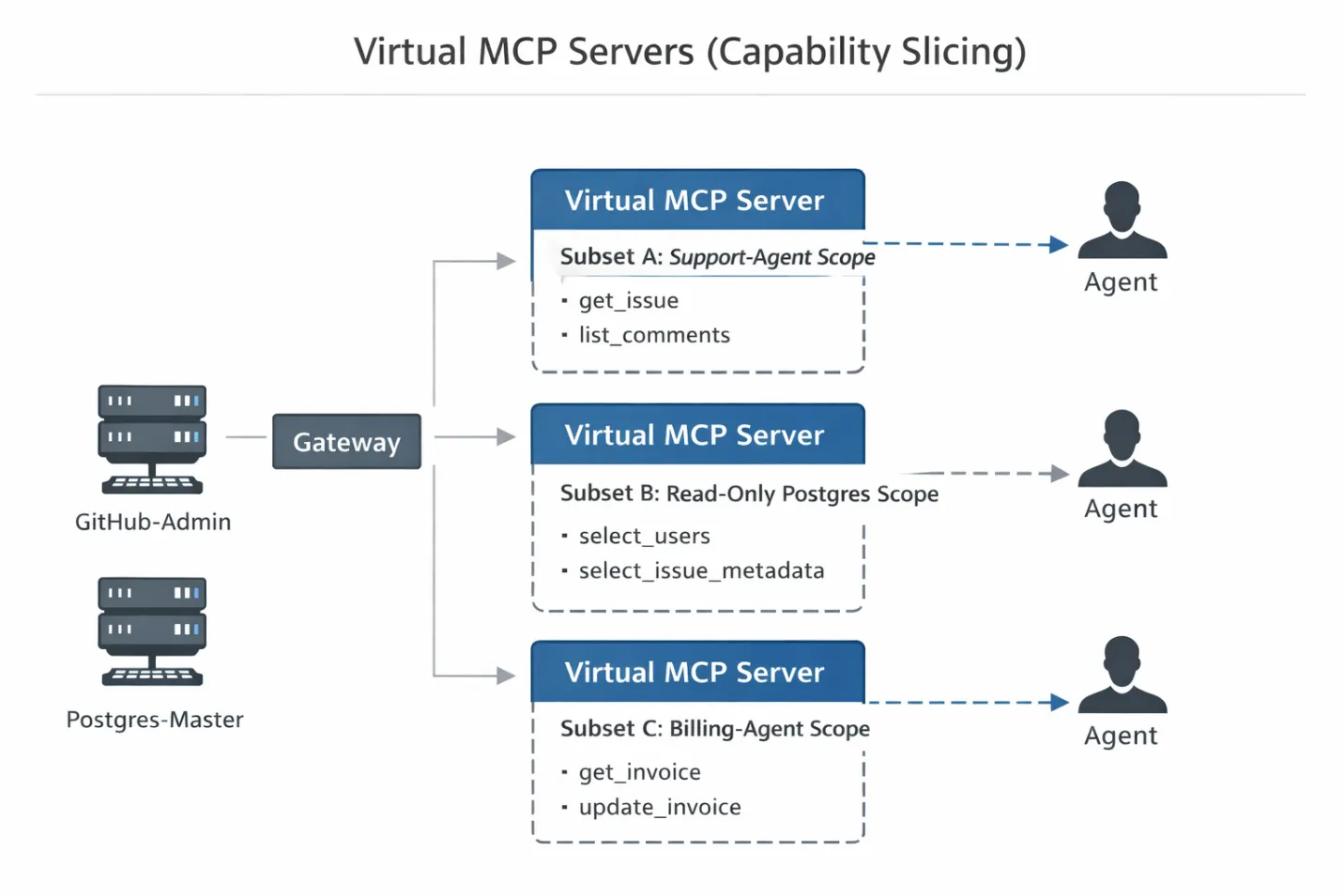

Implementation: The "Virtual Server" Pattern

This brings us to the core implementation pattern: the Virtual MCP Server.

A Virtual MCP Server is a logical construct. It references specific tools from physical MCP servers without duplicating their execution logic or redeploying infrastructure. This is where the difference between MCP and traditional APIs becomes practical for enterprise teams: traditional APIs usually restrict access at the endpoint level, while MCP must also decide which tools are visible during discovery, before an agent ever makes a call. Think of it like a VIEW in SQL: it presents a restricted subset of data (or in this case, capabilities) to a specific user (the agent) without altering the underlying table (the physical server).
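The VIEW analogy can be made concrete with a small Python sketch. The PhysicalServer and VirtualServer classes, the server.tool naming scheme, and the lambda tool bodies are hypothetical stand-ins, not an MCP SDK API. The point is that the virtual server stores references and delegates to them, so no execution logic is copied.

```python
from dataclasses import dataclass, field
from typing import Any, Callable

ToolFn = Callable[..., Any]


@dataclass
class PhysicalServer:
    """Hypothetical stand-in for a real MCP server: a name plus its tool implementations."""
    name: str
    tools: dict[str, ToolFn]


@dataclass
class VirtualServer:
    """A 'view' over physical servers: it holds references and never copies tool logic."""
    name: str
    refs: list[tuple[PhysicalServer, str]] = field(default_factory=list)

    def list_tools(self) -> list[str]:
        return [f"{server.name}.{tool}" for server, tool in self.refs]

    def call(self, qualified_name: str, **kwargs: Any) -> Any:
        for server, tool in self.refs:
            if f"{server.name}.{tool}" == qualified_name:
                # Delegate to the physical implementation; nothing is duplicated.
                return server.tools[tool](**kwargs)
        raise KeyError(f"Unknown tool: {qualified_name}")


github = PhysicalServer("github", {
    "get_issue_comments": lambda issue: [f"comment on #{issue}"],
    "delete_repo": lambda repo: f"deleted {repo}",
})

support_view = VirtualServer("support-agent-scope", [(github, "get_issue_comments")])
print(support_view.list_tools())                                  # ['github.get_issue_comments']
print(support_view.call("github.get_issue_comments", issue=42))   # ['comment on #42']
```

Because the view only holds references, updating the physical server's implementation changes behavior for every virtual server that points at it, exactly as a change to a base table shows through a SQL VIEW.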

Here is how you implement this pattern in a production Gateway architecture:

Step 1: The Backend Connection (The "Service Account")

First, you connect your raw, high-privilege MCP servers to the Gateway.

- Connection: The Gateway establishes a persistent connection to the postgres-master or github-admin MCP server.

- Credential Management: The Gateway holds the "Service Account" or Admin credentials required to authenticate with these servers.

- Isolation: Crucially, the Agent never sees these credentials. The Agent connects to the Gateway, not the tool. The Gateway acts as the vault.
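A minimal sketch of that isolation, assuming an HTTP-style transport where the backend expects a bearer token: the credential lives only inside the gateway and is attached on the outbound hop. The Gateway and AgentRequest names and the token value are hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class AgentRequest:
    """Everything the agent sends: a target backend and a JSON-RPC call. No credentials."""
    backend: str
    request_id: int
    method: str
    params: dict = field(default_factory=dict)


class Gateway:
    def __init__(self, credentials: dict[str, str]):
        # Service-account secrets are held privately inside the gateway process.
        self._credentials = dict(credentials)

    def build_upstream(self, req: AgentRequest) -> dict:
        """Attach the backend credential on the outbound hop only."""
        token = self._credentials.get(req.backend)
        if token is None:
            raise PermissionError(f"Unknown backend: {req.backend!r}")
        return {
            "headers": {"Authorization": f"Bearer {token}"},
            "body": {"jsonrpc": "2.0", "id": req.request_id,
                     "method": req.method, "params": req.params},
        }


gateway = Gateway({"github-admin": "example-service-token"})
req = AgentRequest("github-admin", 1, "tools/call",
                   {"name": "get_issue_comments", "arguments": {"issue": 42}})
upstream = gateway.build_upstream(req)
print(upstream["headers"])  # {'Authorization': 'Bearer example-service-token'}
```

In a real deployment the secrets would come from a secret manager rather than a constructor argument. What matters here is the direction of flow: the agent-side object never carries the credential.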

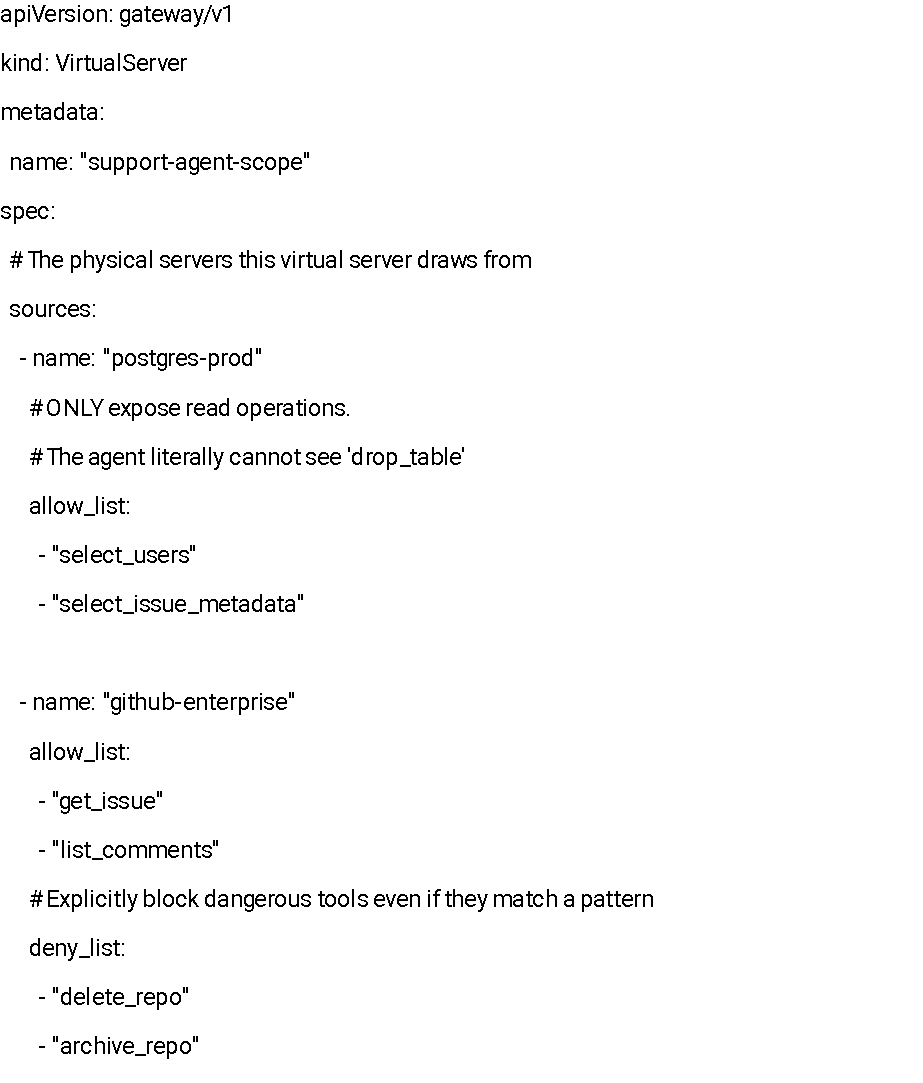

Step 2: The Slice (The Manifest)

Next, you define a Virtual Server manifest. This is a configuration file (typically YAML or JSON) that defines exactly which tools from the physical servers are exposed to a specific agent scope.

Instead of granting access to github-all, you create a slice: a named scope, such as support-agent-scope, that lists only the tools a given agent needs.
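A hypothetical manifest, expressed here as JSON and loaded in Python, might look like the following. The field names (name, tools, server, tool) are illustrative rather than any product's schema, and CATALOG stands in for the tool lists the gateway would learn from each physical server's tools/list response.

```python
import json

# Hypothetical manifest format; field names are illustrative, not a product schema.
MANIFEST = """
{
  "name": "support-agent-scope",
  "tools": [
    {"server": "github-admin", "tool": "list_issues"},
    {"server": "github-admin", "tool": "get_issue_comments"}
  ]
}
"""

# What each physical server actually exposes (normally learned via tools/list).
CATALOG = {
    "github-admin": {"list_issues", "get_issue_comments", "create_issue", "delete_repo"},
}


def load_manifest(text: str, catalog: dict[str, set[str]]) -> dict[str, set[str]]:
    """Parse a manifest into {server: allowed_tools}, rejecting tools that don't exist."""
    data = json.loads(text)
    allowed: dict[str, set[str]] = {}
    for entry in data["tools"]:
        server, tool = entry["server"], entry["tool"]
        if tool not in catalog.get(server, set()):
            raise ValueError(f"{data['name']}: {server}/{tool} does not exist")
        allowed.setdefault(server, set()).add(tool)
    return allowed


scope = load_manifest(MANIFEST, CATALOG)
print(sorted(scope["github-admin"]))  # ['get_issue_comments', 'list_issues']
```

Validating the manifest against the catalog at load time means a typo in a tool name fails loudly when the slice is defined, instead of silently producing an agent that is missing a tool.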

Step 3: The Client View (The Handshake)

When the Agent initializes its connection, it performs the standard JSON-RPC tools/list handshake with the Gateway.

Because the Agent is connected to the support-agent-scope Virtual Server, the Gateway intercepts this request. It filters the master list against the manifest defined in Step 2 and returns a sanitized list.

The result? The agent cannot successfully call delete_repo. The function simply does not exist in its context window, and if the model invents the call anyway, the Gateway rejects it. You haven't just told the model "don't do it"; you have removed the hands it would use to do it.

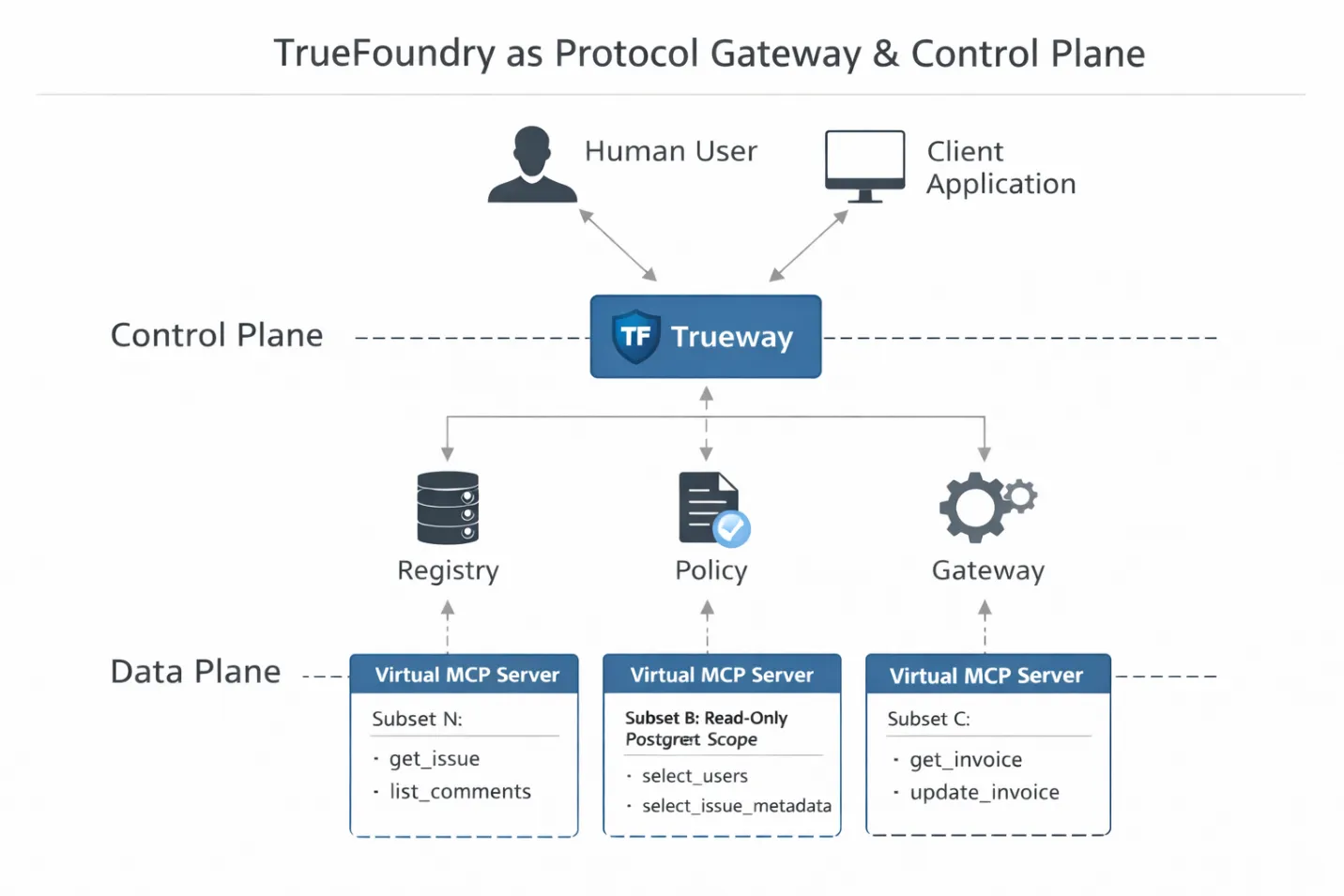

Where TrueFoundry Fits

So where does TrueFoundry actually sit in this architecture?

Not as a hosting layer, and not as a convenience wrapper. In a production MCP stack, TrueFoundry functions as the protocol gateway and control plane. It sits directly in the execution path between the LLM runtime and the tools, where enforcement is still possible.

This positioning matters. Because the gateway terminates the MCP connection, it can parse and reason about the JSON-RPC payload in real time. It is not just forwarding requests. It is interpreting intent, identity, and scope before any tool ever executes.

That enables three concrete engineering capabilities.

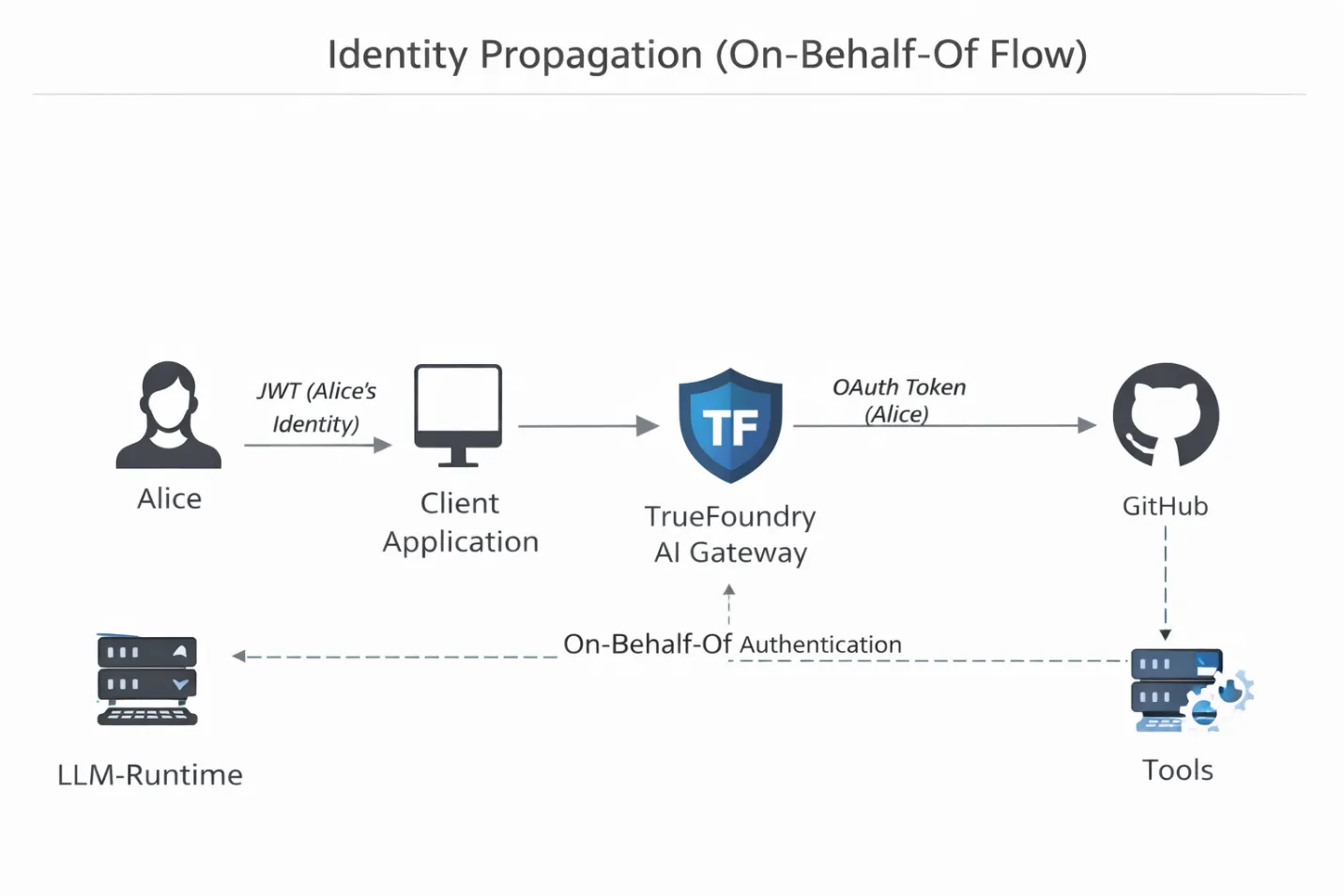

- Identity injection (on-behalf-of execution).

Most DIY agent stacks suffer from the “generic key” problem. Agents run with a shared API token, so when something goes wrong, all you see in the logs is “the agent did it.” There is no accountability. TrueFoundry’s gateway inspects the incoming client JWT, maps it to the authenticated human user, and injects the correct OAuth or service token downstream. If Alice cannot delete a repository, neither can the agent acting on her behalf. The agent’s authority is no longer theoretical. It is cryptographically bound.

- Virtual server runtime enforcement.

The gateway is where Virtual MCP Servers become real. TrueFoundry maintains the routing tables that map a virtual server scope to physical MCP servers and allowed tools. If an agent attempts to call something outside its declared slice, the gateway returns a structured JSON-RPC error. The model does not get a silent failure. It gets a clear "tool not found," which helps it self-correct instead of hallucinating.

- Traffic inspection and replay.

Because the gateway terminates the connection, it can buffer and trace MCP traffic. This enables PCAP-style inspection of tool interactions. When an agent gets stuck in a loop or makes a bad decision, you can replay the exact tool call sequence without rerunning the expensive inference steps that led to it. Debugging shifts from guesswork to inspection.
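The first two capabilities can be sketched together as a single request path: check the virtual-server slice, then check the human user behind the JWT, then forward with that user's own token. Everything here is hypothetical (ROUTES, USER_PERMISSIONS, USER_TOKENS, the header shape), and the JWT is decoded without signature verification purely for illustration. The error codes are also assumptions: -32602 (Invalid params) mirrors the unknown-tool example in the MCP specification, which you should confirm against the spec version you target, and -32001 is an arbitrary pick from JSON-RPC's implementation-defined server-error range.

```python
import base64
import json

# Hypothetical control-plane data; names and values are illustrative only.
ROUTES = {  # virtual scope -> {tool name: physical server}
    "support-agent-scope": {
        "list_issues": "github-admin",
        "get_issue_comments": "github-admin",
    },
}
USER_PERMISSIONS = {"alice": {"list_issues", "get_issue_comments"}}
USER_TOKENS = {"alice": "downstream-token-for-alice"}  # placeholder, not a real secret


def jwt_claims_unverified(token: str) -> dict:
    """Decode a JWT payload WITHOUT verifying its signature (illustration only).
    A real gateway must verify the signature against the identity provider's keys."""
    payload = token.split(".")[1]
    return json.loads(base64.urlsafe_b64decode(payload + "=" * (-len(payload) % 4)))


def jsonrpc_error(req_id, code: int, message: str) -> dict:
    return {"jsonrpc": "2.0", "id": req_id, "error": {"code": code, "message": message}}


def handle_tool_call(scope: str, client_jwt: str, request: dict) -> dict:
    """Enforce the virtual-server slice first, then the human user's permissions."""
    tool = request.get("params", {}).get("name")
    server = ROUTES.get(scope, {}).get(tool)
    if server is None:
        # Outside the slice: a structured error the model can read and recover from.
        return jsonrpc_error(request.get("id"), -32602, f"Tool not found: {tool}")
    user = jwt_claims_unverified(client_jwt).get("sub")
    if tool not in USER_PERMISSIONS.get(user, set()):
        return jsonrpc_error(request.get("id"), -32001, f"{user} may not call {tool}")
    # On-behalf-of: forward with this user's own downstream token, not a shared key.
    headers = {"Authorization": f"Bearer {USER_TOKENS[user]}"}
    return {"forward_to": server, "headers": headers, "request": request}


def unsigned_jwt(claims: dict) -> str:
    """Build an unsigned test token so the example runs without an identity provider."""
    def enc(obj: dict) -> str:
        return base64.urlsafe_b64encode(json.dumps(obj).encode()).rstrip(b"=").decode()
    return f"{enc({'alg': 'none', 'typ': 'JWT'})}.{enc(claims)}."


def tool_call_request(name: str) -> dict:
    return {"jsonrpc": "2.0", "id": 7, "method": "tools/call",
            "params": {"name": name, "arguments": {}}}


alice = unsigned_jwt({"sub": "alice"})
print(handle_tool_call("support-agent-scope", alice, tool_call_request("delete_repo"))["error"]["message"])
# Tool not found: delete_repo
```

Checking the slice before identity means a tool outside the scope is rejected the same way for every user, so the error never leaks anything about who is allowed to do what.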

Taken together, this is the difference between hoping an agent behaves and enforcing that it cannot misbehave. Access control moves out of prompts and into infrastructure, where it belongs.
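The replay idea from the traffic-inspection point can be sketched as a recorder that wraps the backend call, plus a replay function that re-issues the recorded tool calls with no LLM in the loop. The class and function names and the fake backends are hypothetical, and a real gateway would persist traces rather than keep them in memory.

```python
import json
from typing import Callable

Backend = Callable[[dict], dict]


class TraceRecorder:
    """Wraps a backend and records every tool request/response pair, in order."""

    def __init__(self, backend: Backend):
        self._backend = backend
        self.log: list[dict] = []

    def __call__(self, request: dict) -> dict:
        response = self._backend(request)
        # Round-trip through JSON to snapshot the exchange so later mutation can't alter it.
        self.log.append(json.loads(json.dumps({"request": request, "response": response})))
        return response


def replay(log: list[dict], backend: Backend) -> list[dict]:
    """Re-issue recorded tool calls without any LLM inference.
    Returns one entry per call whose response differs from the recording."""
    diffs = []
    for entry in log:
        new_response = backend(entry["request"])
        if new_response != entry["response"]:
            diffs.append({"request": entry["request"],
                          "recorded": entry["response"],
                          "replayed": new_response})
    return diffs


# Hypothetical backends: the second simulates a change that empties the results.
def backend_v1(request: dict) -> dict:
    return {"rows": [1, 2, 3]}


def backend_v2(request: dict) -> dict:
    return {"rows": []}


recorder = TraceRecorder(backend_v1)
recorder({"jsonrpc": "2.0", "id": 1, "method": "tools/call",
          "params": {"name": "sql_query", "arguments": {"query": "SELECT id FROM users LIMIT 3"}}})
print(len(replay(recorder.log, backend_v1)))  # 0
print(len(replay(recorder.log, backend_v2)))  # 1
```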

Defense in Depth: Guardrails at the Protocol Layer

Virtual Servers control which tools an agent can see. Guardrails control how those tools are used. Just because an agent is allowed to call sql_query doesn't mean it should be allowed to run SELECT * FROM users and dump the entire customer database into its context window.

This is where Guardrails come in. In the TrueFoundry architecture, guardrails operate as middleware that intercepts the JSON-RPC payload at the protocol layer, inspecting each tool call before it executes.

Input Guardrails (The "WAF" for Agents)

We can write Python middleware or simple regex rules that validate tool arguments before the request reaches the backend container.

- The Scenario: An agent tries to execute a query that lacks a LIMIT clause, potentially crashing the database or costing a fortune in token usage.

- The Fix: An input guardrail intercepts the sql_query tool. It checks the arguments payload. If LIMIT is missing, it either rejects the request or injects a default LIMIT 100 automatically.

- Security Use Case: Regex filters can detect patterns like API keys or private keys in the input arguments, preventing the agent from accidentally leaking secrets to a third-party logging tool.
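Both input checks can be sketched as one function that runs on the arguments of a sql_query call before it is forwarded. The argument name query, the default limit, and the two secret patterns (AWS access key IDs and PEM private-key headers) are illustrative assumptions, and the LIMIT check is deliberately naive: a production guardrail should parse the SQL rather than pattern-match it.

```python
import re

# Naive check: any "LIMIT <n>" anywhere (including subqueries) counts as present.
LIMIT_RE = re.compile(r"\bLIMIT\s+\d+", re.IGNORECASE)

# Illustrative secret patterns: AWS access key IDs and PEM private-key headers.
SECRET_PATTERNS = [
    re.compile(r"\bAKIA[0-9A-Z]{16}\b"),
    re.compile(r"-----BEGIN [A-Z ]*PRIVATE KEY-----"),
]


def guard_sql_query(arguments: dict, default_limit: int = 100) -> dict:
    """Reject arguments that look like secrets; add a LIMIT to SELECTs that lack one."""
    for value in arguments.values():
        if isinstance(value, str) and any(p.search(value) for p in SECRET_PATTERNS):
            raise ValueError("Rejected: argument appears to contain a secret")
    query = arguments.get("query")
    if (isinstance(query, str)
            and query.lstrip().upper().startswith("SELECT")
            and not LIMIT_RE.search(query)):
        # Inject the default rather than reject; swap in a raise for a stricter policy.
        return {**arguments, "query": query.rstrip().rstrip(";") + f" LIMIT {default_limit}"}
    return dict(arguments)


print(guard_sql_query({"query": "SELECT * FROM users;"}))
# {'query': 'SELECT * FROM users LIMIT 100'}
```

This version takes the "inject a default" branch for missing limits and the "reject" branch for secrets, matching the two behaviors the scenario describes; either policy could be swapped for the other.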

Output Guardrails (Data Loss Prevention)

Agents are prone to "verbose leaking": fetching more data than they need and summarizing it.

- The Mechanism: Output guardrails sanitize the JSON response from the tool before it is passed back to the LLM.

- The Implementation: If a database tool returns a column named ssn or credit_card, the guardrail can hash or mask this data (e.g., ****-1234) in the JSON-RPC response. The LLM gets the context it needs ("Credit card exists") without ever holding the sensitive data in its context window.
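A minimal output guardrail, assuming tool results arrive as JSON-like dicts and lists and that sensitive fields can be recognized by key name (the SENSITIVE_KEYS set is illustrative), walks the response and masks matching values down to their last four digits.

```python
import re
from typing import Any

# Illustrative key names; a real deployment would configure these per tool.
SENSITIVE_KEYS = {"ssn", "credit_card"}


def mask_value(value: Any) -> str:
    """Keep only the last four digits, e.g. '4111 1111 1111 1234' -> '****-1234'."""
    digits = re.sub(r"\D", "", str(value))
    return f"****-{digits[-4:]}" if len(digits) >= 4 else "****"


def sanitize(obj: Any) -> Any:
    """Recursively mask sensitive fields anywhere in a JSON-like tool result."""
    if isinstance(obj, dict):
        return {k: mask_value(v) if k.lower() in SENSITIVE_KEYS else sanitize(v)
                for k, v in obj.items()}
    if isinstance(obj, list):
        return [sanitize(item) for item in obj]
    return obj


result = {"rows": [{"name": "Ada", "credit_card": "4111 1111 1111 1234", "ssn": "123-45-6789"}]}
print(sanitize(result))
# {'rows': [{'name': 'Ada', 'credit_card': '****-1234', 'ssn': '****-6789'}]}
```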

Observability: Tracing the "Thought Process"

In traditional software, if an API call fails, you check the logs. You see a 500 Internal Server Error and a stack trace.

In agentic systems, "failure" is often silent. The agent calls a tool, gets a result, but misinterprets it. Or it calls the tool with slightly wrong arguments that technically work but return garbage data. Standard application logs show "200 OK," but the outcome is wrong.

To debug this, you need Distributed Tracing for MCP.

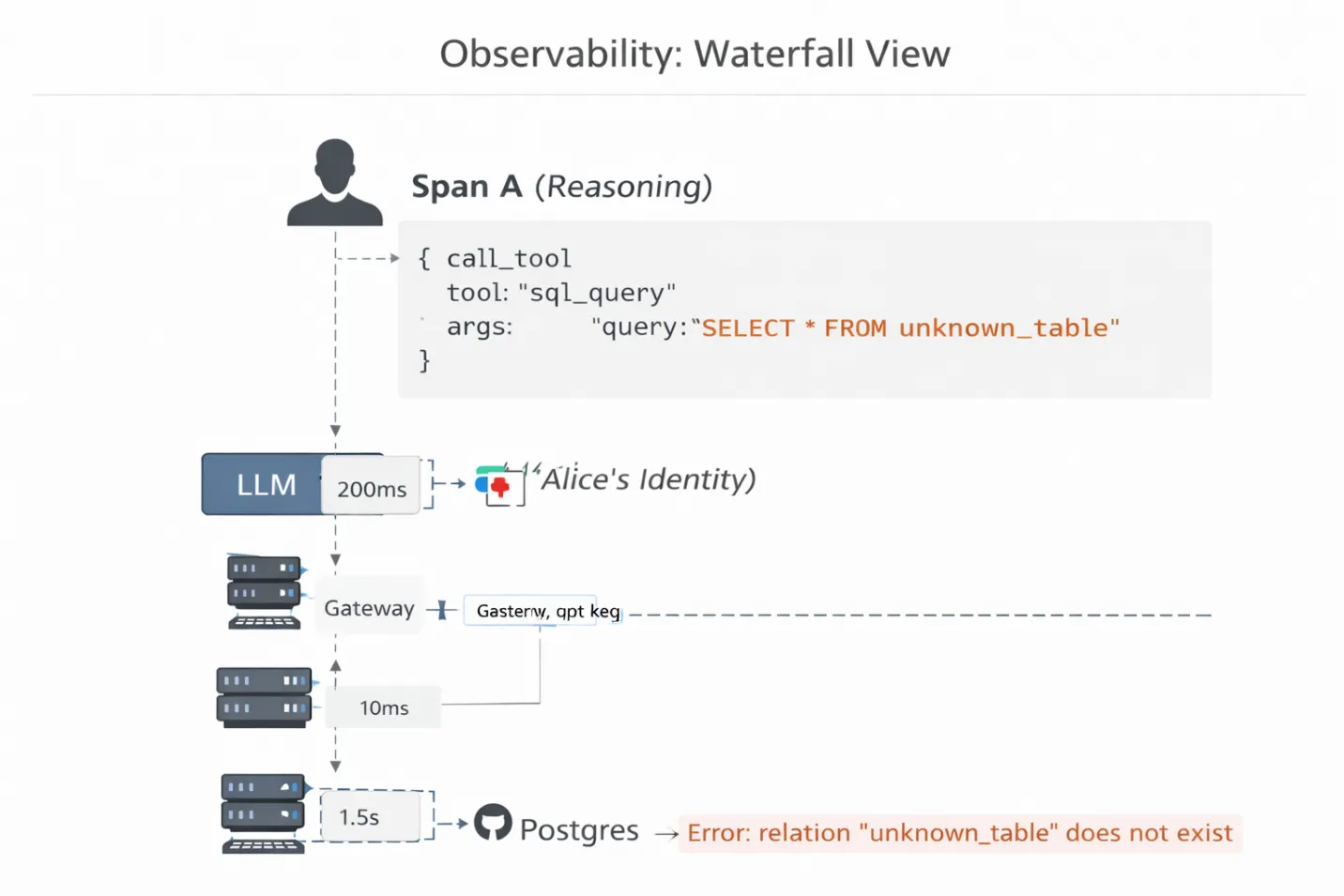

TrueFoundry provides a waterfall visualization of the agent's execution chain. You don't just see "Request Failed." You see the latency and payload at every hop:

- Span A (Reasoning): The LLM spends 200ms generating the thought.

- Span B (Gateway): The Gateway takes 10ms to validate the JWT and route the request.

- Span C (Tool Execution): The Postgres container takes 1.5s to run the query.

Why this matters: You can drill down into Span C and see the exact SQL query the agent generated. You might realize the agent is hallucinating a table name that doesn't exist, or using a deprecated API parameter. Without this protocol-level visibility, you are debugging a black box by guessing.
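To show what such a waterfall records, here is a deliberately small, flat span recorder. The Trace class is hypothetical and keeps spans only in completion order; a real deployment would more likely emit OpenTelemetry spans with parent IDs so that nested calls render as a tree.

```python
import time
from contextlib import contextmanager


class Trace:
    """Collects named spans (duration plus attributes) for one agent step."""

    def __init__(self):
        self.spans: list[dict] = []

    @contextmanager
    def span(self, name: str, **attributes):
        record = {"name": name, "attributes": attributes}
        start = time.perf_counter()
        try:
            yield record
        finally:
            # Spans are appended when they finish, so nested spans would appear child-first.
            record["duration_ms"] = (time.perf_counter() - start) * 1000
            self.spans.append(record)

    def waterfall(self) -> str:
        return "\n".join(
            f"{s['name']:<16}{s['duration_ms']:8.1f} ms  {s['attributes']}" for s in self.spans
        )


trace = Trace()
with trace.span("reasoning", model="example-model"):
    time.sleep(0.01)  # stand-in for LLM generation
with trace.span("gateway", scope="support-agent-scope"):
    pass  # stand-in for JWT validation and routing
with trace.span("tool_execution", tool="sql_query", query="SELECT id FROM users LIMIT 100"):
    time.sleep(0.01)  # stand-in for the Postgres call
print(trace.waterfall())
```

The attributes on the tool span are what make the drill-down possible: the exact query string travels with the timing, so a hallucinated table name is visible next to the latency it cost.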

Conclusion: Security as an Enabler

The transition from "Chatbot" to "Agent" is effectively the transition from "Text Generation" to "Remote Code Execution." That reality is exactly what keeps most enterprise pilots grounded in the PoC phase.

TrueFoundry bridges that gap. When you implement the Virtual MCP Server pattern via the AI Gateway, you stop asking your security team to trust a probabilistic model and start showing them a deterministic architecture. You aren't just deploying a tool; you are deploying a scoped, identity-aware interface that inherently limits blast radius.

For enterprises, TrueFoundry doesn't only provide the "pipes" for MCP; it provides the valves, gauges, and locks. It turns a reckless "Root Access" agent into a trusted digital employee. You wouldn't run a production Kubernetes cluster without RBAC; you shouldn't run an enterprise agent stack without TrueFoundry.