TrueFoundry and Gemini Enterprise Agent Platform: A Practical Comparison of Platform Boundaries, Operating Models, and Long-Term Enterprise Fit

EDITORIAL NOTE: Reviewed against public vendor documentation as of April 24, 2026. The opinion-based positioning remains editorial; configuration details have been aligned with the currently published documentation.

Perspective: TrueFoundry

The Gemini Enterprise Agent Platform gives Google one of its clearest enterprise agent platform stories to date. That matters. The comparison should not be framed as "Google finally has an answer" versus "Google has nothing to offer here." Google clearly has a credible answer, and for some teams it will be a very good one.

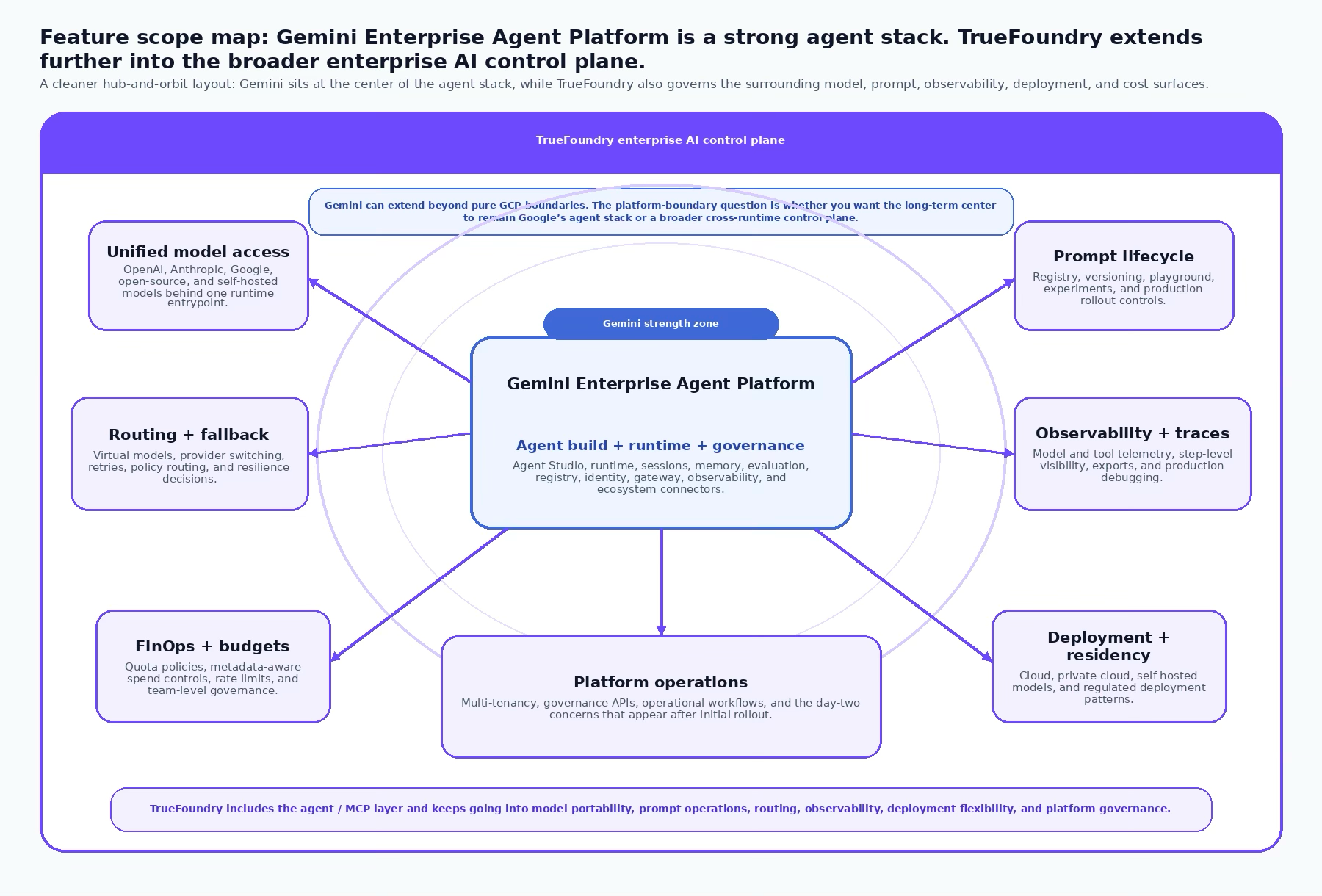

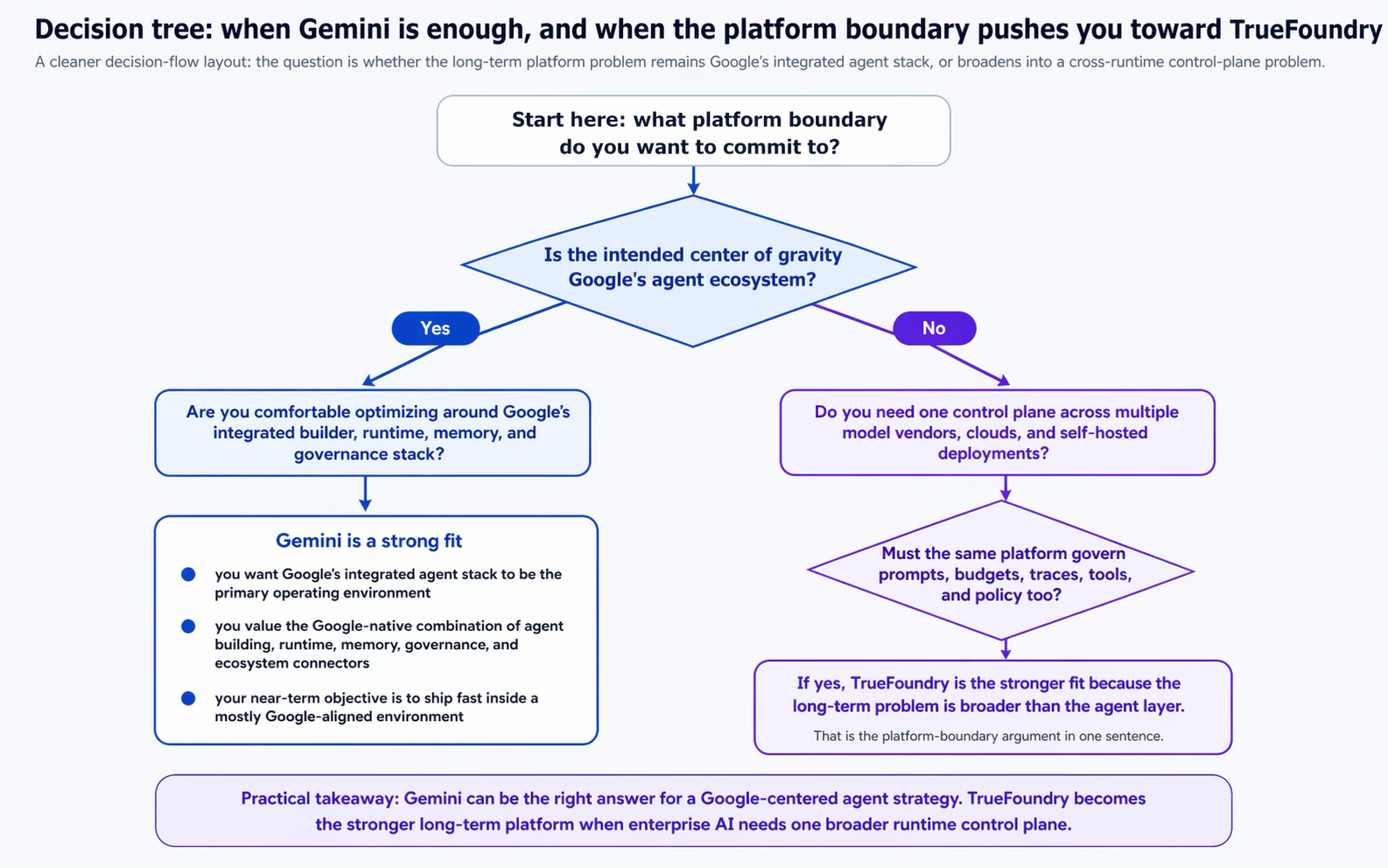

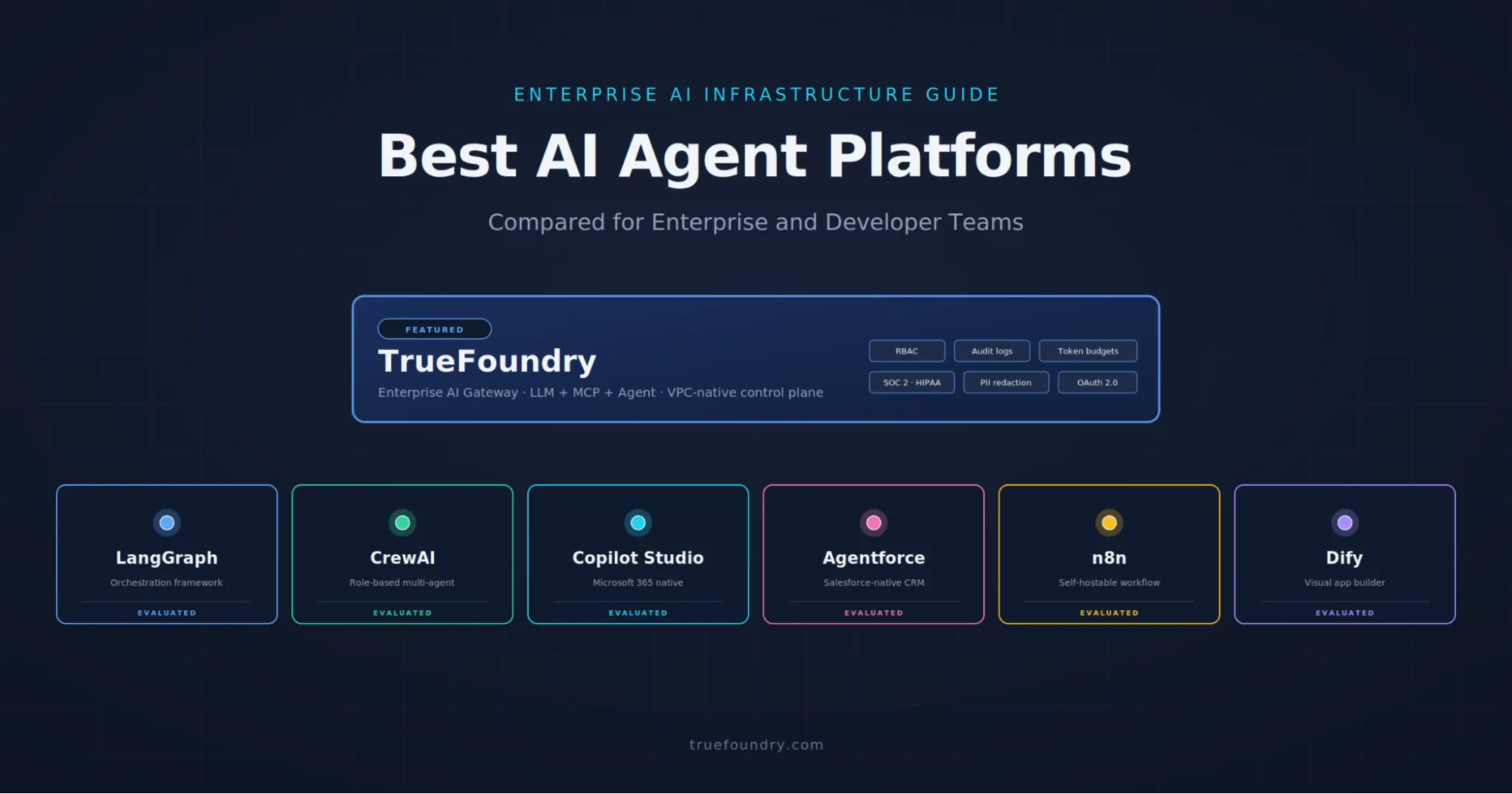

The more useful question is a different one: where does the platform boundary sit? If your primary need is a Google-centric platform for building, deploying, governing, and optimizing agents, the Gemini Enterprise Agent Platform is a credible choice. If your team needs a broader enterprise AI control plane that stays consistent across model providers, prompts, tool calls, clouds, self-hosted models, and production operations, TrueFoundry is often the more natural long-term fit for platform teams.

That is the core argument of this post. It is not an argument against Google. It is about where each platform draws its boundary: the Gemini Enterprise Agent Platform is best understood as Google's integrated agent platform, and TrueFoundry is best understood as a cross-runtime enterprise AI platform layer.

TL;DR and quick recommendation

• Choose the Gemini Enterprise Agent Platform if your center of gravity is Google Cloud and you want Google's integrated agent builder, runtime, memory, governance, and the employee-facing Gemini ecosystem.

• Choose TrueFoundry if your platform team wants a single control plane for models, prompts, tools, routing, policies, observability, budgets, and deployment patterns across managed providers and self-hosted infrastructure.

• In short: Gemini is a strong agent platform. TrueFoundry is the broader enterprise AI runtime and operations platform.

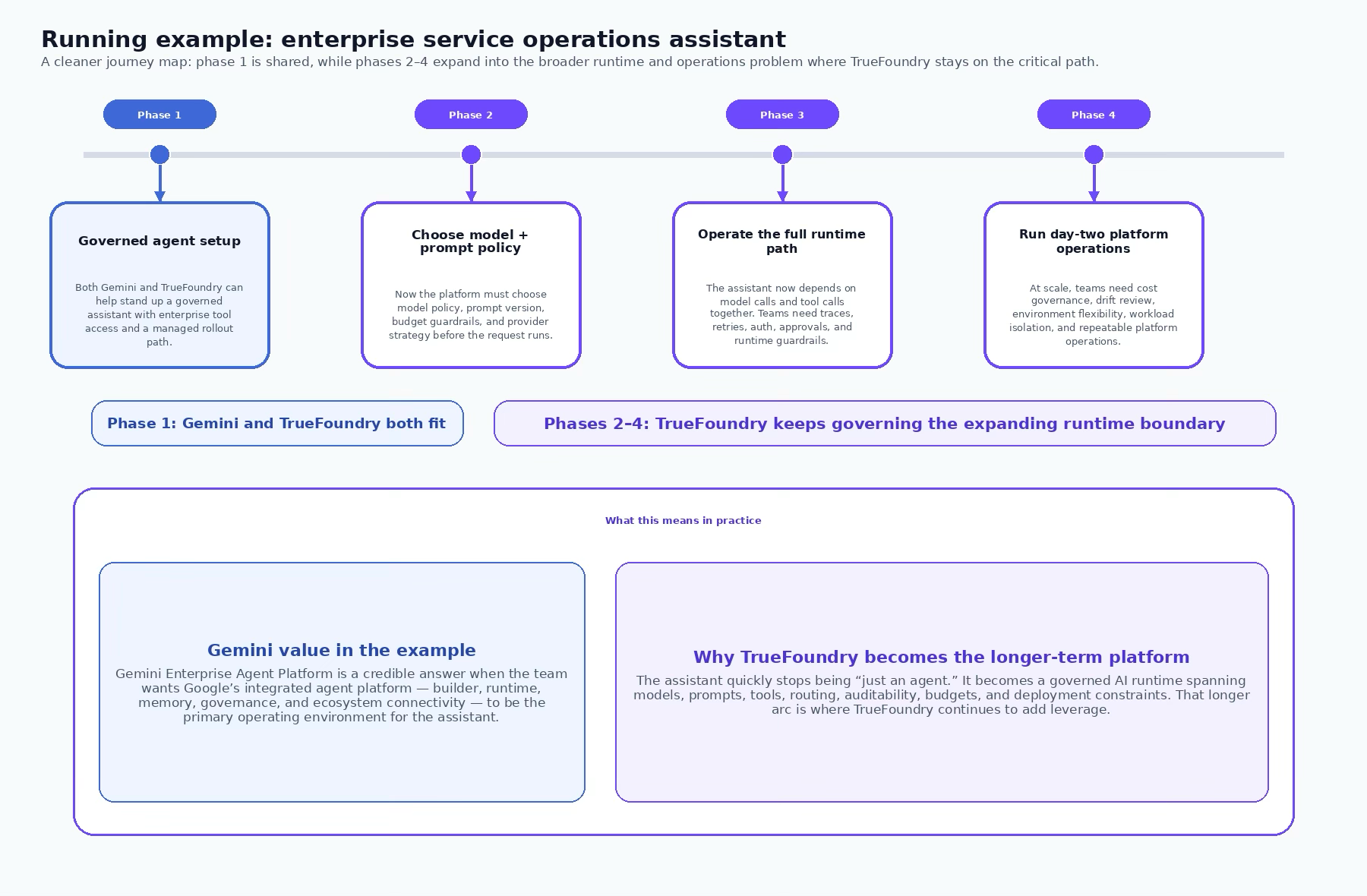

Practical example: an assistant for enterprise service operations

Imagine an enterprise assistant that helps support and operations teams resolve high-priority incidents. It needs Salesforce account context, Jira and ServiceNow tickets, Confluence and internal documents, SAP order information, and a set of internal APIs. It also needs different model policies: standard requests can use a lower-cost managed model, while highly sensitive or domain-specific flows may need a different provider or a self-hosted model.

At first glance this looks like an agent development problem. In production, it becomes a control-plane problem. Which model is allowed? Which prompt version is active? Which tools are permitted for which teams? How do you route traffic? How do you trace model and tool failures together? How do you apply budgets, auditability, and deployment constraints across the entire runtime?
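To make a few of these questions concrete, here is a minimal sketch of a policy check that a control plane might run before each request. Everything in it is an assumption for illustration: the `TeamPolicy` fields, the model and tool names, and the `authorize` function are hypothetical and do not represent any vendor's API.

```python
from dataclasses import dataclass


@dataclass
class TeamPolicy:
    """Hypothetical per-team policy record; all field names are illustrative."""
    allowed_models: set[str]
    allowed_tools: set[str]
    monthly_budget_usd: float
    spent_usd: float = 0.0


def pick_model(sensitivity: str) -> str:
    """Send highly sensitive requests to a self-hosted model (placeholder names)."""
    return "self-hosted-model" if sensitivity == "high" else "managed-low-cost-model"


def authorize(policy: TeamPolicy, model: str, tool: str,
              estimated_cost_usd: float) -> tuple[bool, str]:
    """Return (allowed, reason) for one model + tool call under a team policy."""
    if model not in policy.allowed_models:
        return False, f"model '{model}' is not allowed for this team"
    if tool not in policy.allowed_tools:
        return False, f"tool '{tool}' is not allowed for this team"
    if policy.spent_usd + estimated_cost_usd > policy.monthly_budget_usd:
        return False, "monthly budget would be exceeded"
    return True, "ok"


# Example: a support team limited to the managed model and one tool.
support = TeamPolicy(
    allowed_models={"managed-low-cost-model"},
    allowed_tools={"jira_search"},
    monthly_budget_usd=100.0,
)
print(authorize(support, pick_model("standard"), "jira_search", 0.02))
print(authorize(support, pick_model("high"), "jira_search", 0.02))
```

The point of the sketch is that model choice, tool permission, and budget are evaluated in one place, regardless of which agent or provider sits behind the request.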

This is where the difference between Gemini and TrueFoundry becomes clearer. Gemini covers a substantial part of the agent stack. TrueFoundry is designed to keep governing the system as that runtime surface expands.

1) What the Gemini Enterprise Agent Platform does well

It gives Google Cloud a genuinely coherent agent platform story.

Google now offers a more coherent end-to-end solution than the earlier advice to "use a model endpoint and assemble the rest yourself." The platform brings together capabilities for agent development, runtime, sessions, persistent memory, evaluation, observability, and governance. That is a meaningful step forward from a fragmented tool landscape.

It is broader than the caricature of a GCP-only solution.

A fair comparison should acknowledge this explicitly. Google positions the Gemini Enterprise Agent Platform to connect beyond Google Cloud as well: through business-system connectors, partner-built agents, the Agent-to-Agent protocol, and data access patterns that can extend past a single cloud. The weak argument that "Gemini only matters if everything is already in GCP" no longer holds.

It offers strong Google-native advantages.

If your organization wants to build on Google's data, infrastructure, and agent ecosystem, Gemini becomes increasingly attractive. The combination of Gemini models, its Vertex AI lineage, Google's agent runtime, and the employee-facing Gemini surfaces is compelling for teams standardizing on the Google stack.

2) Why TrueFoundry is often the better fit for enterprise platforms

TrueFoundry is oriented around the runtime control plane, not only the agent boundary of a single cloud.

TrueFoundry's center of gravity is the AI gateway and the platform control plane: a layer for managing model access, routing, guardrails, prompts, budgets, observability, and operational controls. This matters because enterprises often find that "building the agent" is only the first step. Operating the runtime becomes the harder problem.

Model governance is a first-class concern, including provider neutrality and self-hosted options.

Gemini Enterprise can support multiple models, but it is naturally anchored in Google's view of the platform. TrueFoundry is designed to keep the model layer portable. If you want a platform that can broker OpenAI, Anthropic, Google, open-source, and self-hosted models with virtual models, fallbacks, and routing logic, TrueFoundry maps to that requirement more directly.
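As an illustration of what "fallbacks and routing logic" can mean in practice, the following is a minimal sketch of an ordered fallback behind a single logical model name. It is not TrueFoundry's implementation or API; the function names and the stub providers are hypothetical, and a real gateway would add timeouts, retries, and health checks.

```python
from typing import Callable, Sequence


class AllProvidersFailed(Exception):
    """Raised when every provider in the fallback chain has failed."""


def call_with_fallback(
    prompt: str,
    providers: Sequence[tuple[str, Callable[[str], str]]],
) -> tuple[str, str]:
    """Try providers in order; return (provider_name, response) from the first success."""
    errors = []
    for name, call in providers:
        try:
            return name, call(prompt)
        except Exception as exc:  # a real gateway would catch narrower error types
            errors.append(f"{name}: {exc}")
    raise AllProvidersFailed("; ".join(errors))


# Stub providers standing in for real model clients (assumptions for the demo).
def flaky_primary(prompt: str) -> str:
    raise TimeoutError("primary provider timed out")


def stable_secondary(prompt: str) -> str:
    return f"echo: {prompt}"


chain = [("primary", flaky_primary), ("secondary", stable_secondary)]
print(call_with_fallback("summarize incident 42", chain))
```

Callers address one logical model and never see which provider answered unless they inspect the returned name, which is the property that keeps the model layer portable.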

The prompt lifecycle is treated as a production operations concern.

In production systems, prompt behavior is not a side issue. Teams need prompt versioning, testing, iteration, rollout discipline, and visibility into how prompt changes affect cost and outcomes. TrueFoundry treats this as part of the platform surface rather than leaving it implicit inside an agent project.

The deployment model is often part of the platform decision.

A platform team often has to support public cloud, private cloud, regulated environments, self-hosted models, and data residency requirements at the same time. This deployment flexibility is a core part of TrueFoundry's value. It lets platform teams keep their architecture consistent even when their deployment reality is mixed. This is especially relevant for self-hosted, regulated, and air-gapped environments, where the platform has to fit within the buyer's compliance boundaries rather than require those boundaries to move.

Operational consistency matters across models and tools together.

Production failures are often not isolated "model errors" or "tool errors." They are failures somewhere in the model-prompt-tool chain. TrueFoundry is strong here because it is designed to govern that full execution path, applying observability, traces, quotas, policies, and runtime controls across the combined surface.
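The idea of tracing model and tool steps together can be sketched as a single trace ID shared by every span in a request. The `Trace` class below is a hypothetical, standard-library-only illustration and not a vendor API; production systems would typically export spans to a tracing backend instead of keeping them in a list.

```python
import time
import uuid
from contextlib import contextmanager


class Trace:
    """Hypothetical recorder: every span shares one trace_id for the request."""

    def __init__(self) -> None:
        self.trace_id = uuid.uuid4().hex
        self.spans: list[dict] = []

    @contextmanager
    def span(self, kind: str, name: str):
        """Record one step (kind is e.g. 'prompt', 'model', or 'tool')."""
        record = {"trace_id": self.trace_id, "kind": kind, "name": name, "status": "ok"}
        start = time.perf_counter()
        try:
            yield record
        except Exception as exc:
            record["status"] = "error"
            record["error"] = str(exc)
            raise  # the failure still propagates; the trace only observes it
        finally:
            record["duration_s"] = time.perf_counter() - start
            self.spans.append(record)


trace = Trace()
with trace.span("model", "managed-low-cost-model"):
    pass  # the model call would go here
try:
    with trace.span("tool", "servicenow_lookup"):
        raise RuntimeError("upstream returned 503")
except RuntimeError:
    pass

for s in trace.spans:
    print(s["kind"], s["name"], s["status"])
```

Because the model span and the failing tool span carry the same `trace_id`, an operator can see that the request failed at the tool step rather than in the model, which is the kind of combined view the paragraph above describes.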

Comparison matrix

3) Editorial verdict

A balanced comparison should state this clearly: the Gemini Enterprise Agent Platform is good. In some organizations it may even be very good. Google now offers a substantially more complete solution for agent development, runtime, governance, and enterprise reach than it did before.

The case for TrueFoundry remains relevant, however, for teams whose platform boundary extends beyond a single agent ecosystem. Many enterprise teams are not simply choosing an agent builder. They are choosing the operating layer that will sit between applications and a constantly changing universe of models, prompts, tools, policies, clouds, and deployment patterns. That is what TrueFoundry was built for.

So the conclusion is not "Gemini cannot do enterprise agents." It clearly can. The practical conclusion is this: Gemini is the best fit when the enterprise wants to make Google's agent platform its hub. TrueFoundry is the better fit when the enterprise wants a broader, more portable AI runtime control plane that stays useful as the platform's scope grows.

Bottom line: the question is not whether Gemini can support enterprise agents; it can. The question is where the durable platform boundary should sit: inside a Google-centric agent strategy, or in a portable control plane that platform teams can use across models, tools, clouds, self-hosted deployments, and regulated environments.
