The 25 Best MLOps Tools of 2026

As machine learning adoption continues to accelerate across industries, the need for robust, scalable, and automated ML pipelines has never been greater. In 2026, MLOps platforms have become foundational to operationalizing AI, from model training and deployment to monitoring and governance.

These platforms streamline the end-to-end lifecycle, helping teams manage complexity, ensure reproducibility, and accelerate time-to-value. Whether you’re a startup scaling your first model or an enterprise deploying hundreds, choosing the right MLOps platform is critical.

In this guide, we explore what MLOps is, why it matters, and the best MLOps tools shaping the landscape in 2026.

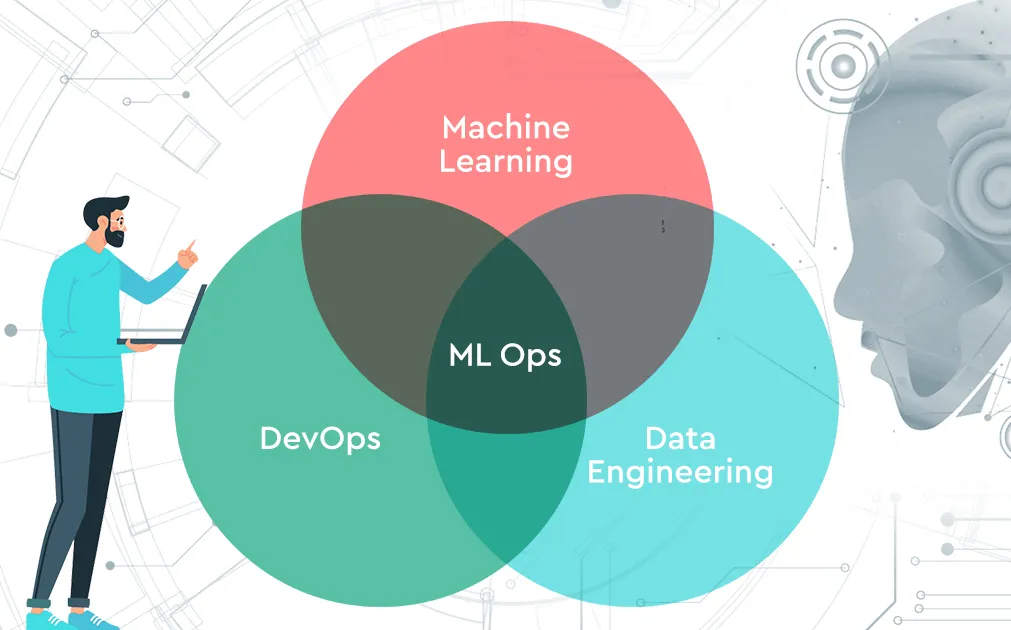

What is MLOps?

MLOps (Machine Learning Operations) is a discipline that merges the principles of machine learning, DevOps, and data engineering to enable the development, deployment, monitoring, and maintenance of reliable ML systems at scale. It ensures that models built in experimental environments can be safely and efficiently transitioned into production, where they must perform consistently, adapt to change, and remain accountable.

Traditional DevOps workflows focus on version control, CI/CD pipelines, automated testing, and system reliability. MLOps inherits these, but extends them to tackle the unique challenges of machine learning: managing constantly evolving data, retraining models to account for drift, evaluating non-deterministic results, and maintaining reproducibility across model iterations.

Why Do You Need MLOps Tools?

As machine learning moves from experimentation to enterprise-scale deployment, MLOps tools have become essential for ensuring consistency, reliability, and speed across the model lifecycle. Without a centralized MLOps solution, teams often end up with fragmented tools, manual processes, and inconsistent workflows that slow down innovation and introduce operational risk.

MLOps platforms solve these challenges by providing a unified interface to manage data pipelines, training workflows, model tracking, deployment, and monitoring, all in one place. This consolidation enables tighter collaboration between data scientists, ML engineers, and DevOps teams, reducing handoff friction and improving reproducibility across environments.

How to Choose the Best MLOps Platform?

When selecting MLOps tools in 2026, it's important to evaluate not just features, but also how well the platform supports your ML workflow, scales with your infrastructure, and aligns with your team’s operational goals. Below are some essential criteria to consider:

End-to-End Lifecycle Support

An ideal MLOps platform should cover the full machine learning lifecycle, from data versioning and training to deployment and monitoring. Fragmented toolchains can create inefficiencies and inconsistencies across teams. Platforms that unify these stages into a single workflow help improve reproducibility, reduce handoffs, and accelerate iteration.

Scalability and Infrastructure Flexibility

As ML workloads scale, so must the platform. A good MLOps solution should support everything from local experimentation to distributed training across multiple GPUs or nodes. It should also offer flexibility in deployment, supporting cloud-native, on-premise, and hybrid environments without locking you into a specific stack.

Ease of Use and Developer Experience

Usability is often overlooked but critical. A strong platform offers clean interfaces, both UI and CLI, along with comprehensive SDKs that integrate with popular frameworks like PyTorch, TensorFlow, and Hugging Face. A platform that’s intuitive for both data scientists and ML engineers promotes better collaboration and faster onboarding.

Integration Ecosystem

MLOps doesn’t exist in isolation. Your platform should integrate seamlessly with existing systems for storage (like S3 or GCS), CI/CD tools (like GitHub Actions or Jenkins), observability platforms (like Prometheus or Grafana), and model registries. Strong integration ensures smooth data and model flow across your pipeline.

Governance, Security, and Compliance

For organizations working in regulated environments, governance features are a must. The platform should support role-based access control (RBAC), audit logs, and lineage tracking. Compliance with standards like SOC 2, HIPAA, or GDPR helps ensure data privacy, trust, and long-term viability in enterprise settings.

Which Are The Best MLOps Tools of 2026?

The MLOps landscape in 2026 is rich with platforms catering to different needs, from lightweight experiment tracking to enterprise-grade model deployment and monitoring. Below are the 25 best MLOps tools helping teams streamline their ML workflows, optimize infrastructure, and operationalize models at scale. Each platform has its strengths depending on your tech stack, team maturity, and business goals.

1. TrueFoundry

TrueFoundry is a modern MLOps and LLMOps platform built for teams that want to deploy, scale, and monitor machine learning and generative AI models in production. It abstracts away infrastructure complexity while offering complete control, allowing teams to move from experimentation to deployment in minutes.

Unlike legacy systems, TrueFoundry is optimized for performance, developer productivity, and GenAI-first workflows, including support for agents, RAG pipelines, and advanced tracing. Its enterprise-grade security and modular design make it one of the best MLOps tools, suitable for organizations of all sizes.

Key Features:

- Production-grade model serving with support for vLLM, SGLang, and autoscaling for high-throughput, low-latency inference.

- Integrated fine-tuning, tracing, and RAG orchestration, including LoRA/QLoRA, vector DBs, prompt management, and agent frameworks like LangChain and CrewAI.

- Enterprise readiness with SOC 2, HIPAA, GDPR compliance, unified API gateway, role-based access control, and audit logs.

Best For:

AI-driven teams building LLM-backed products, especially where performance, security, and observability are critical. Excellent fit for fast-moving teams or enterprises needing scalable GenAI deployment.

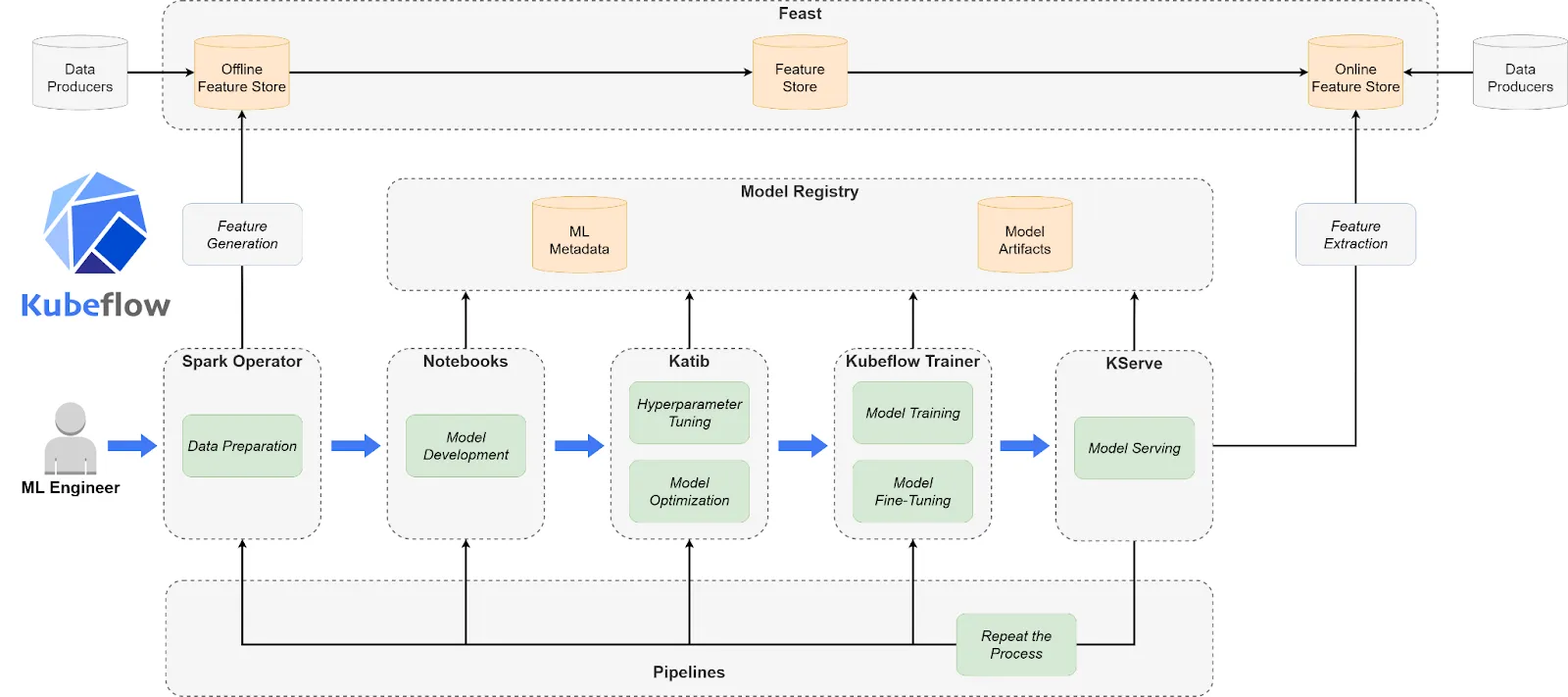

2. Kubeflow

Kubeflow is a Kubernetes-native, open-source MLOps platform for building and managing portable, composable ML workflows. It provides the flexibility to orchestrate training, tuning, and serving using familiar Kubernetes abstractions. Though powerful, Kubeflow requires deep infrastructure knowledge and isn’t ideal for teams without dedicated DevOps support. It shines when customized, scalable, and secure ML pipelines are a necessity.

Key Features:

- Modular, cloud-agnostic ML pipelines built on Kubeflow Pipelines with DAG orchestration, notebook support, and multi-step workflows.

- Native Kubernetes integration for managing compute resources, scaling jobs, and deploying models using KServe (formerly KFServing).

- Secure multi-user environments with namespace isolation, RBAC, and compatibility across AWS, GCP, Azure, and on-prem clusters.
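
The DAG orchestration mentioned above comes down to running each step only after the steps it depends on have finished. The following is a minimal sketch in plain Python, not the Kubeflow Pipelines SDK; the step names and the `run_pipeline` helper are hypothetical and chosen only for illustration.

```python
from graphlib import TopologicalSorter

def run_pipeline(steps, dependencies):
    """Run steps in dependency order and return the execution order.

    steps: dict mapping step name -> zero-argument callable
    dependencies: dict mapping step name -> set of upstream step names
    """
    order = list(TopologicalSorter(dependencies).static_order())
    for name in order:
        steps[name]()  # a real orchestrator would launch a container here
    return order

# Hypothetical four-step workflow: ingest -> preprocess -> train -> evaluate
log = []
steps = {name: (lambda n=name: log.append(n))
         for name in ("ingest", "preprocess", "train", "evaluate")}
dependencies = {
    "ingest": set(),
    "preprocess": {"ingest"},
    "train": {"preprocess"},
    "evaluate": {"train"},
}
order = run_pipeline(steps, dependencies)
```

A production orchestrator adds scheduling, retries, and artifact passing on top of this ordering step.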

Best For:

Teams with strong Kubernetes expertise looking to fully customize and control their MLOps workflows, especially in regulated or hybrid cloud environments.

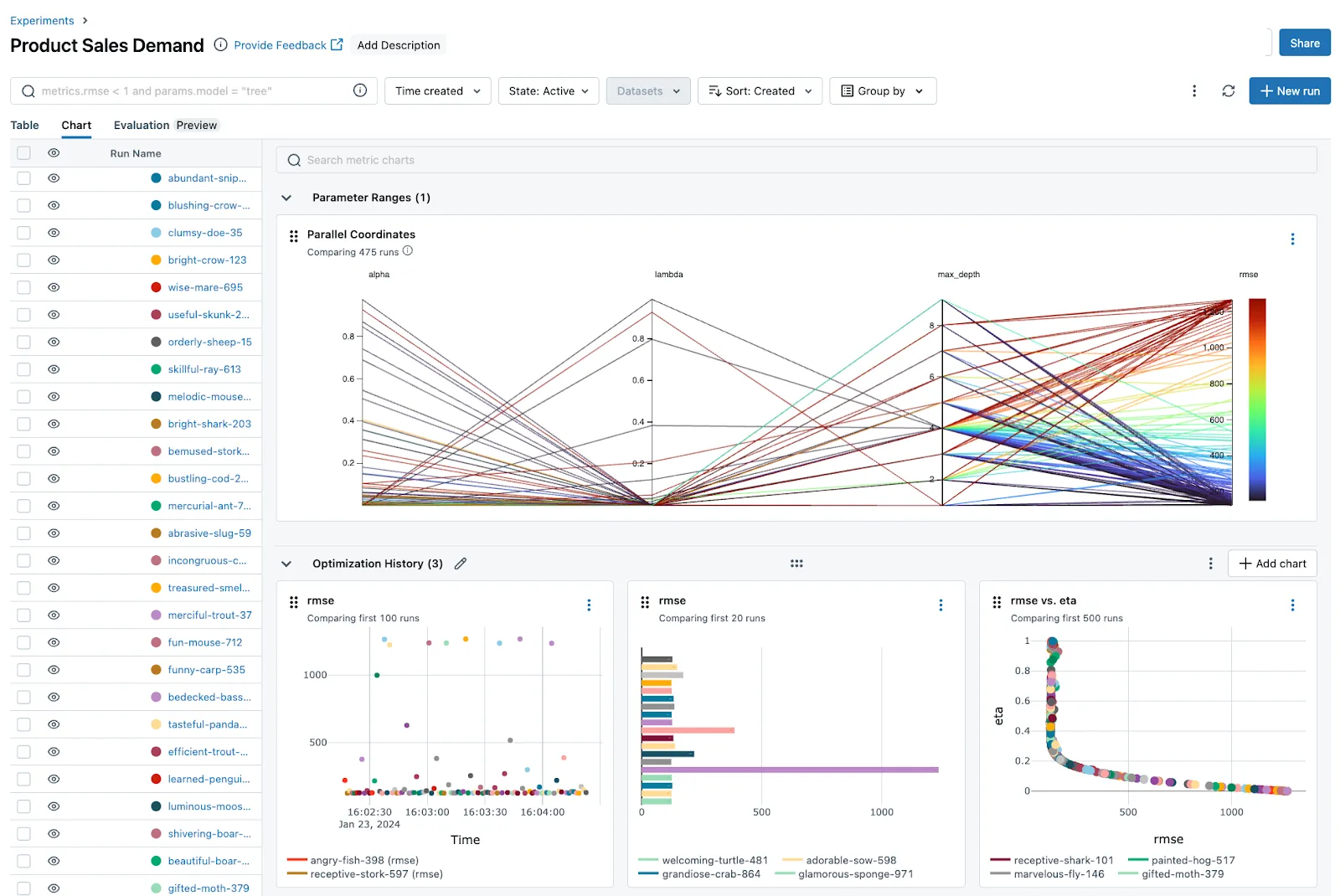

3. MLflow

MLflow is a lightweight, open-source MLOps platform created by Databricks, focused on managing ML experimentation and model versioning. Its modular components let teams integrate tracking, registry, and deployment into their existing workflows.

This MLOps tool is ideal for smaller teams or organizations that want flexibility without the overhead of full-scale infrastructure or Kubernetes.

Key Features:

- Experiment tracking and model registry with seamless logging of parameters, metrics, and artifacts across runs.

- Framework-agnostic and extensible, supporting TensorFlow, PyTorch, Scikit-learn, and custom ML workflows with REST and CLI integration.

- Deployment-ready with support for Docker, cloud environments, and custom serving tools for production integration.

Best For:

ML teams seeking lightweight, customizable tooling for tracking experiments, sharing models, and managing versions without relying on a large-scale platform.

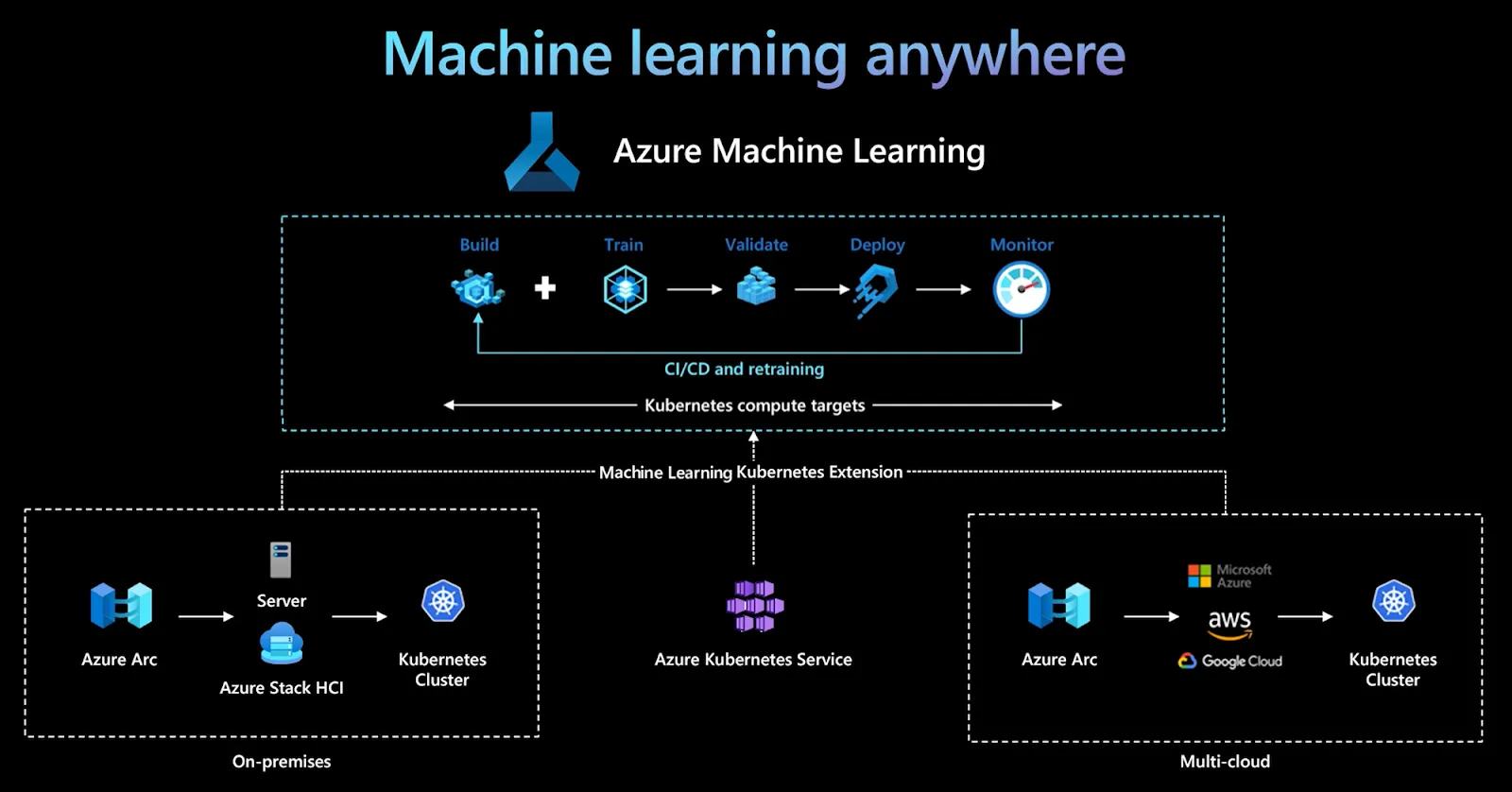

4. Azure Machine Learning

Azure Machine Learning is Microsoft’s fully managed MLOps platform designed for building, training, deploying, and monitoring machine learning models at enterprise scale. It integrates tightly with the Azure ecosystem, offering a powerful suite of tools for model management, AutoML, and responsible AI. Azure ML is ideal for organizations already invested in Microsoft’s cloud and looking for security, scalability, and compliance.

Key Features:

- End-to-end ML lifecycle support, including data labeling, automated training, hyperparameter tuning, model registry, and deployment pipelines.

- Deep Azure integration, enabling seamless use of Azure Blob Storage, Azure DevOps, Azure Kubernetes Service (AKS), and Azure Synapse.

- Built-in governance and compliance features like lineage tracking, role-based access, model explainability, and support for responsible AI.

Best For:

Enterprises operating on Microsoft Azure that need a highly secure, scalable, and fully integrated MLOps platform with enterprise compliance baked in.

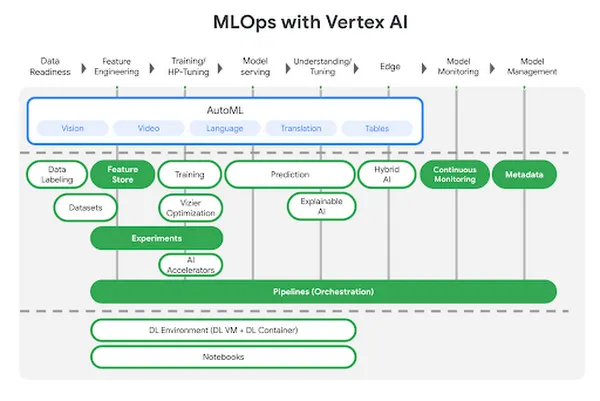

5. Google Vertex AI

Vertex AI is Google Cloud’s unified platform for ML development, combining AutoML and custom model training under one interface. It abstracts infrastructure while offering advanced services like feature stores, pipelines, and experiment tracking.

Built for scalability and integration with Google’s ecosystem, this MLOps tool is optimized for production-level ML deployment and data-driven workflows.

Key Features:

- Unified MLOps platform combining AutoML, custom training, managed notebooks, pipelines, and feature stores in one place.

- Native GCP ecosystem integration, including BigQuery, Dataflow, and Kubernetes Engine for data and compute orchestration.

- Built-in model monitoring with support for drift detection, explainability, and Vertex AI Model Registry for lifecycle management.

Best For:

Teams building and scaling machine learning on Google Cloud who want a managed, scalable MLOps platform with full data and deployment integration.

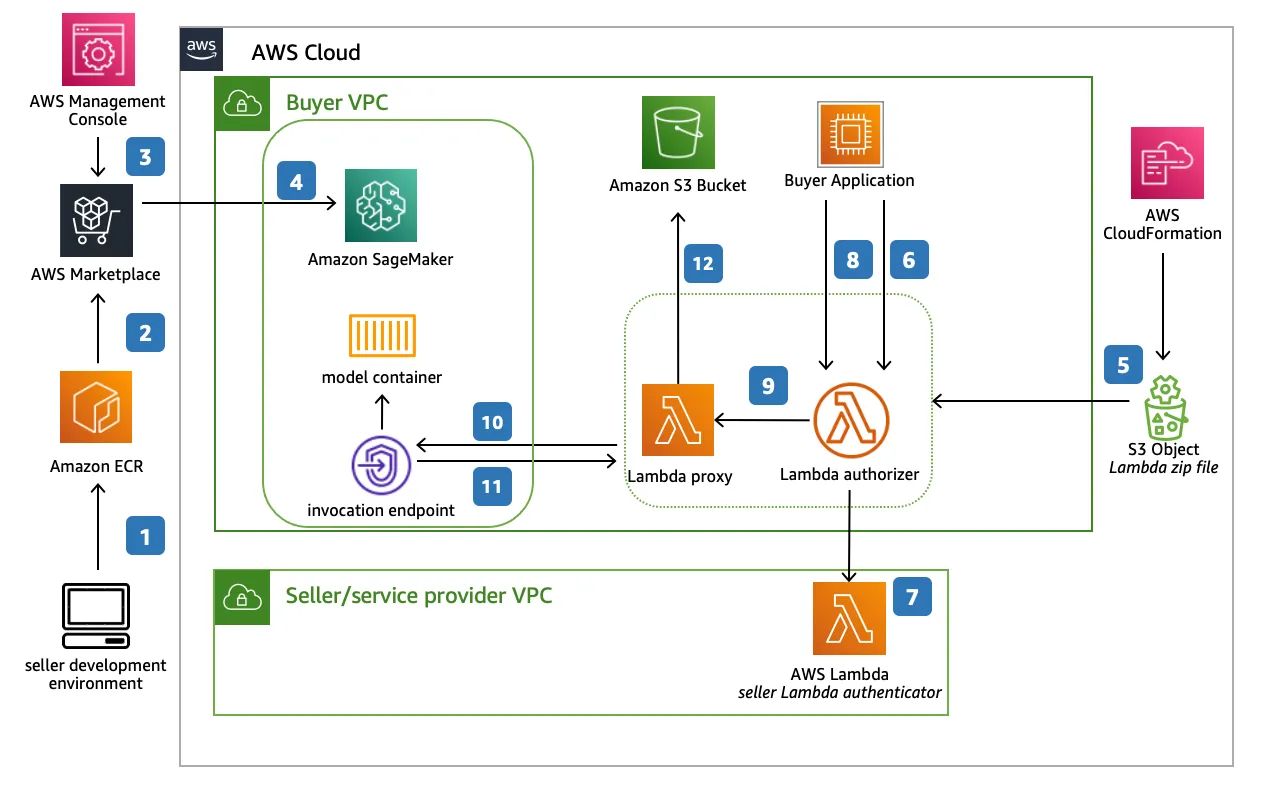

6. Amazon SageMaker

Amazon SageMaker is AWS’s flagship MLOps platform that offers everything from data preprocessing to real-time model deployment. Known for its broad functionality, SageMaker supports custom model development, AutoML, model hosting, and advanced monitoring tools. It’s tightly integrated with the AWS ecosystem, making it a go-to choice for cloud-native enterprises.

Key Features:

- Comprehensive ML services including training jobs, experiments, pipelines, AutoML (SageMaker Autopilot), and model registry.

- Tight AWS integration, leveraging S3, Lambda, CloudWatch, and IAM for data access, security, and automation.

- Advanced production tools such as model monitoring, debugger, Shadow Deployments, and multi-model endpoints.

Best For:

Organizations already using AWS for infrastructure who need a robust, scalable MLOps platform with deep integration and full lifecycle support.

7. DVC (Data Version Control)

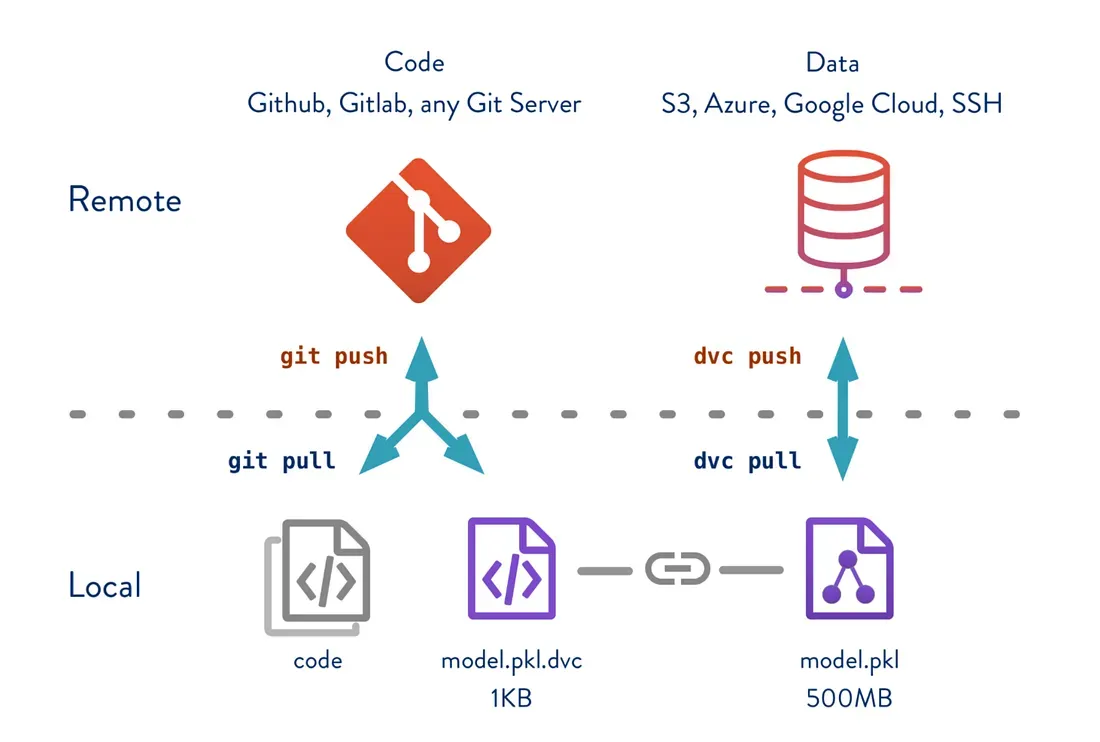

DVC is an open-source tool that brings version control to machine learning projects by tracking datasets, models, and experiments—similar to how Git manages code. It doesn’t aim to be a full-stack MLOps platform, but instead focuses on reproducibility, collaboration, and model tracking through Git-compatible workflows. DVC integrates seamlessly into existing pipelines and gives ML practitioners more control over experiment management.

Key Features:

- Data and model versioning using Git-style commands, enabling reproducible pipelines and consistent checkpoints across teams.

- Experiment tracking and comparison with support for metrics, parameters, and results visualization, either locally or via DVC Studio.

- Remote storage integration for datasets and artifacts across S3, GCS, Azure, SSH, and local directories.
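
Git-style data versioning generally rests on content addressing: a large file is identified by a hash of its bytes, so a small pointer committed to Git can stand in for the data itself. The sketch below illustrates that idea with the standard library only. It is not DVC's implementation; SHA-256, the `content_hash` helper, and the file name are assumptions made for the example.

```python
import hashlib
import tempfile
from pathlib import Path

def content_hash(path, chunk_size=1 << 20):
    """Return a hex digest that identifies the file by its bytes."""
    digest = hashlib.sha256()
    with open(path, "rb") as fh:
        # Read in chunks so large datasets never sit fully in memory
        for chunk in iter(lambda: fh.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()

with tempfile.TemporaryDirectory() as tmp:
    data = Path(tmp) / "train.csv"
    data.write_text("x,y\n1,2\n")
    v1 = content_hash(data)           # version 1 of the dataset
    data.write_text("x,y\n1,2\n3,4\n")
    v2 = content_hash(data)           # a new row yields a new version id
    data.write_text("x,y\n1,2\n")
    v1_again = content_hash(data)     # restoring the bytes restores the id
```

Because the identifier depends only on content, two teammates with the same bytes always agree on the version, which is what makes checkpoints reproducible.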

Best For:

Teams looking for lightweight, code-first MLOps capabilities centered around reproducibility, Git-based workflows, and experiment management—especially in research and iterative ML projects.

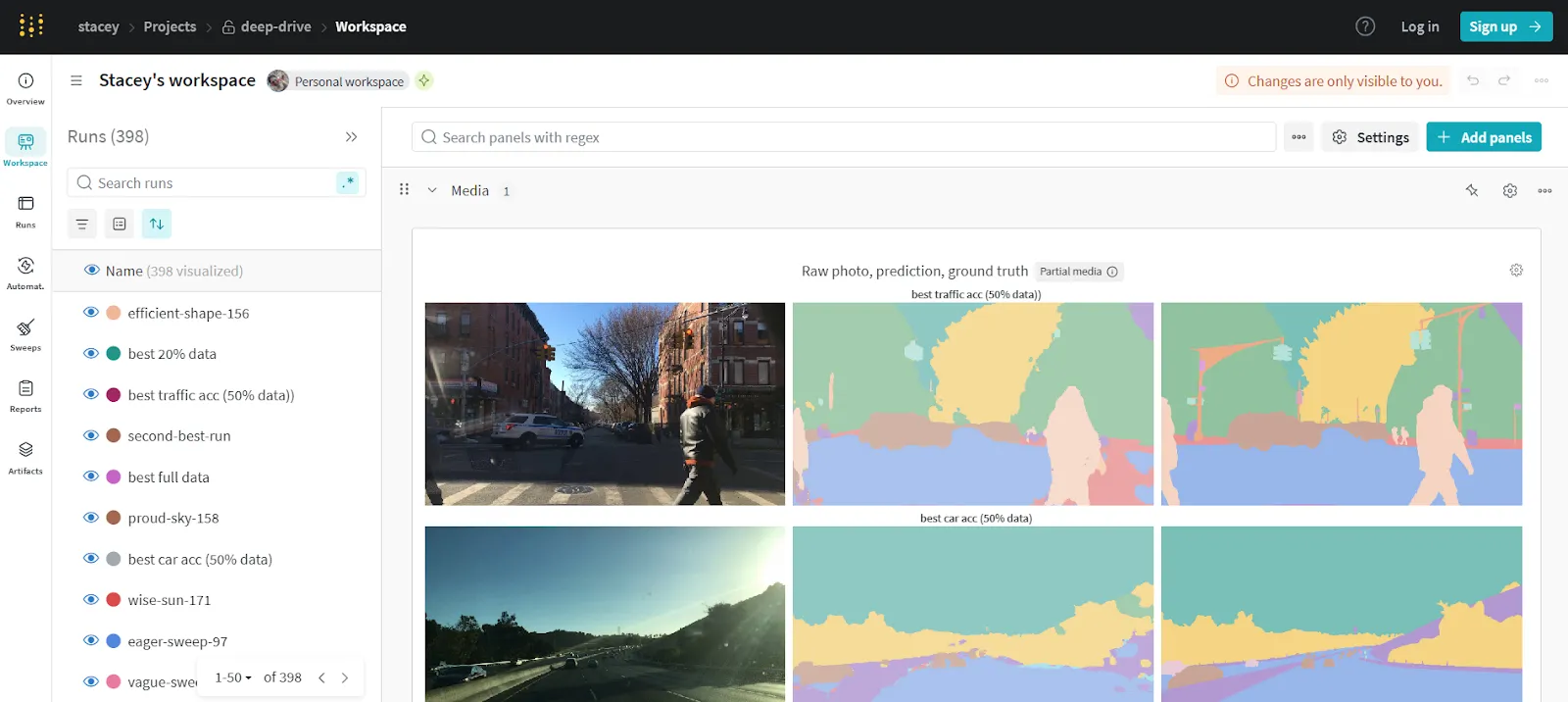

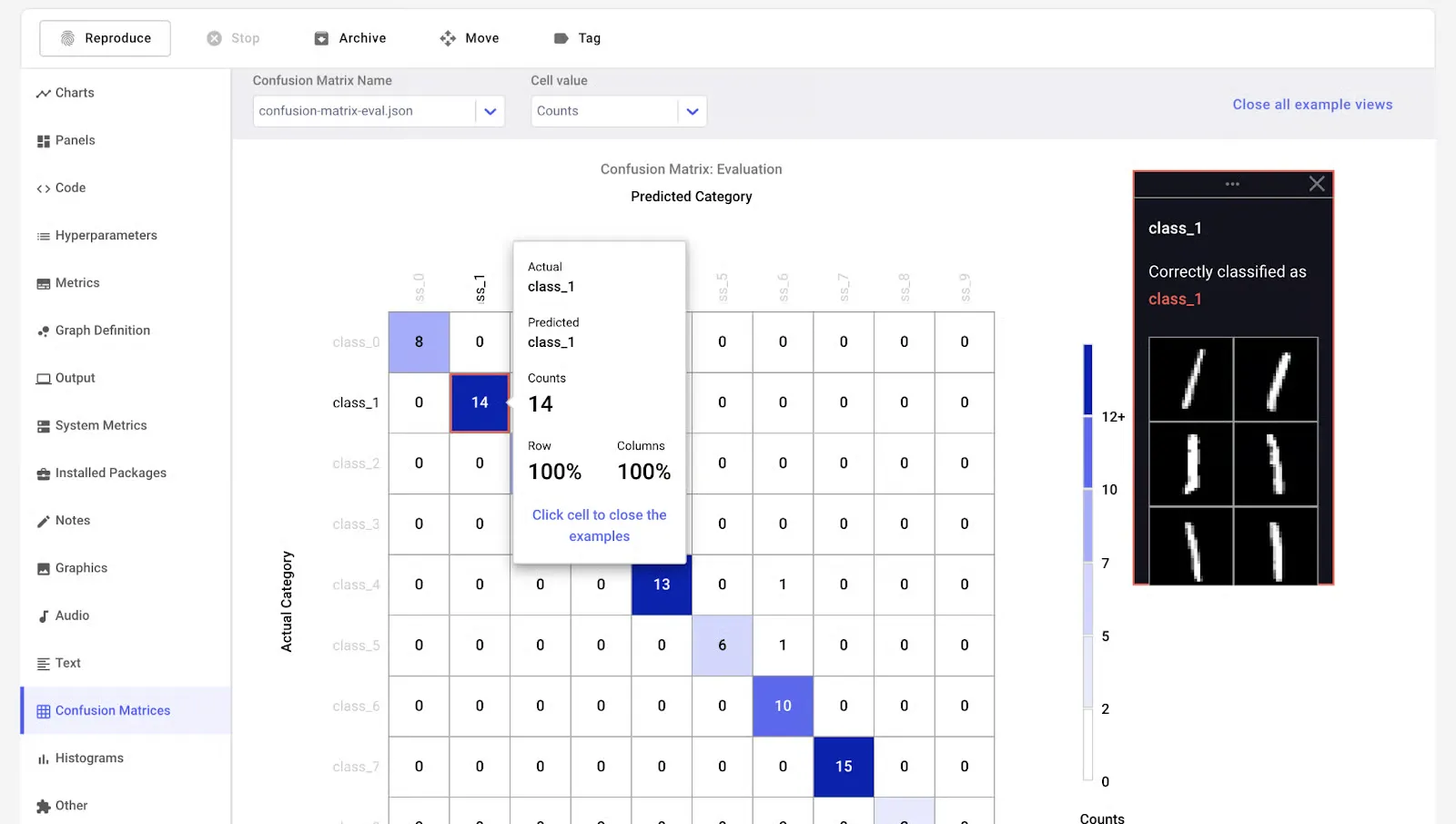

8. Weights & Biases

Weights & Biases (W&B) is one of the best MLOps tools for experiment tracking, collaboration, and model visualization. It’s widely adopted in both research and production environments, offering simple integration with most ML frameworks. W&B focuses on observability, enabling real-time insight into training performance, hyperparameters, and system metrics.

Key Features:

- Experiment and model tracking, with live dashboarding for training runs, hyperparameter tuning, and performance visualization.

- Seamless integration with PyTorch, TensorFlow, JAX, Hugging Face, and others, with minimal code changes required.

- Collaboration tools including team dashboards, project reports, and artifacts versioning for centralized project visibility.

Best For:

ML teams focused on rapid iteration, visualization, and collaboration. Ideal for research-driven environments and teams that want better insight into training performance.

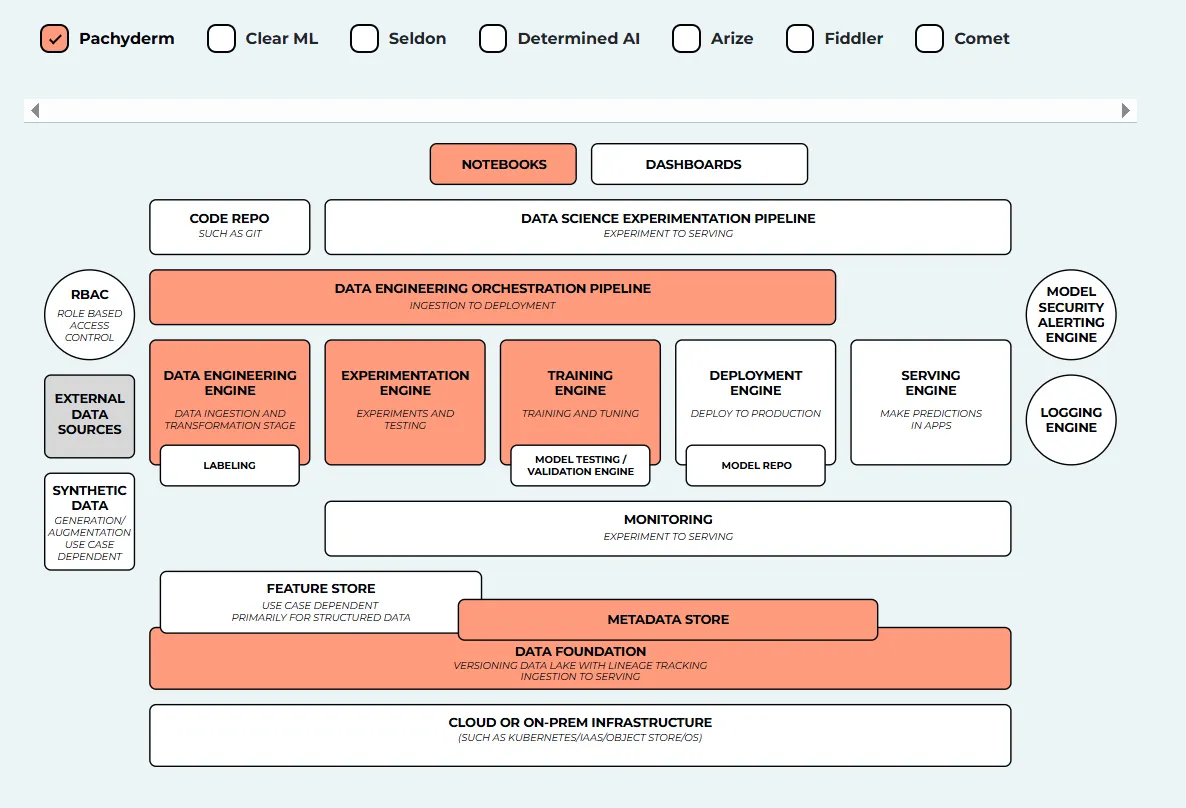

9. Pachyderm

Pachyderm is an open-source data science platform built for data lineage, version control, and reproducible pipelines. Unlike traditional MLOps tools, Pachyderm uses a Git-like approach for data, making it highly suitable for teams handling complex data dependencies or regulated environments. It combines containerization with data pipeline orchestration to ensure versioned, traceable workflows.

Key Features:

- Data versioning and lineage tracking that keep a complete record of the datasets used in model training.

- Scalable, Docker-native pipelines that support parallel processing across large datasets with minimal configuration.

- Enterprise integrations and on-prem support, with compatibility for Kubernetes, cloud, and hybrid deployments.

Best For:

Teams in regulated industries or data-intensive workflows that need strong version control and lineage tracking for compliance, reproducibility, and scale.

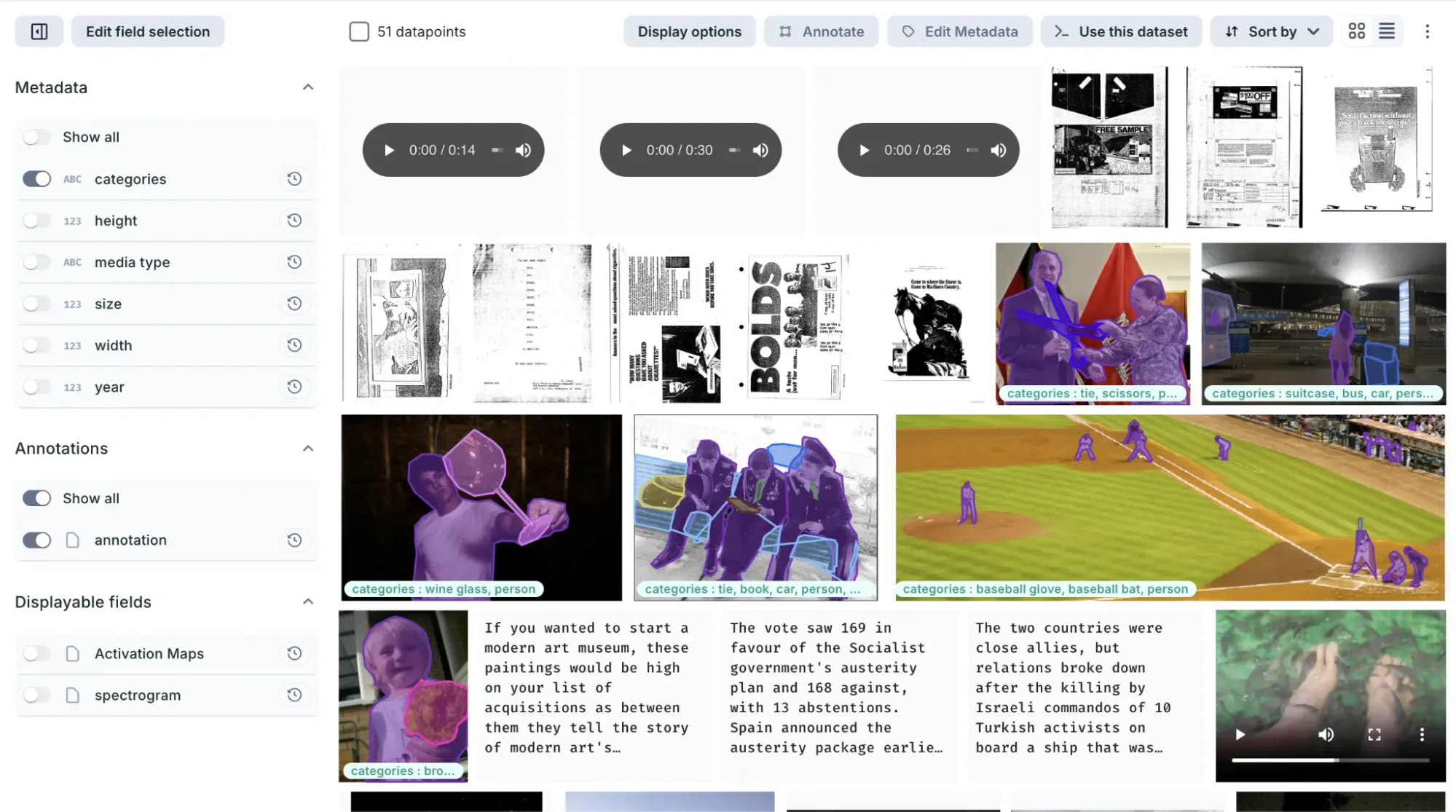

10. Allegro AI

Allegro AI is an MLOps platform designed specifically for managing deep learning workflows at scale—particularly in computer vision and edge AI environments. It focuses on improving reproducibility, collaboration, and traceability across the AI lifecycle.

With strong capabilities in dataset management, model versioning, and experiment tracking, this MLOps tool offers a secure, end-to-end infrastructure for teams building and deploying high-performance models in production or regulated environments.

Key Features:

- Visual dataset and model management with automated versioning, annotations, and lineage tracking for deep learning projects.

- Experiment tracking and collaboration with project-based views, performance comparison, and real-time team dashboards.

- Edge AI support for deploying models to edge devices with reproducibility, rollback, and performance monitoring.

Best For:

Teams working on computer vision, deep learning, or edge deployment use cases—especially in industries like automotive, manufacturing, healthcare, or defense, where traceability and control over data and models are essential.

11. Comet ML

Comet ML is a machine learning platform designed to help you monitor, analyze, and refine models and experiments. It works seamlessly with popular libraries such as Scikit-learn, PyTorch, TensorFlow, and Hugging Face.

Comet makes it easy to explore and compare experiment results, while also providing rich visualizations for data samples, including images, audio, text, and structured tables.

Key Features:

- Automatically records settings, results, code, and dependencies so you can compare experiments side by side.

- Provides a central place to store, organize, version, and share models with your team.

- Saves and tracks versions of datasets and models using “Artifacts,” making experiments reproducible.

- Helps you find the best parameter settings to improve model performance.

- Creates graphs and custom dashboards to monitor training results (like loss and accuracy) and system usage (CPU/GPU).

- Monitors deployed models to detect performance drops or data drift.

Best For:

Data scientists, machine learning engineers, and teams who want an easy way to track experiments, compare results, and improve model performance.

12. Prefect

Prefect is a modern workflow orchestration tool designed to monitor, coordinate, and manage data pipelines across applications. It is an open-source, lightweight solution built to support end-to-end machine learning and data workflows.

You can use either the open-source Prefect server UI (formerly called Prefect Orion) or Prefect Cloud for managing and visualizing workflows. The self-hosted option is an open-source, locally hosted orchestration engine and API server that provides insights into local workflow runs and system activity.

Prefect Cloud, on the other hand, is a hosted service that lets you visualize flows, runs, and deployments while also managing accounts, workspaces, and team collaboration.

Key Features:

- Flexible workflow orchestration across applications and environments

- Real-time monitoring and observability of flows and tasks

- Local orchestration with Prefect Orion UI

- Hosted management and collaboration with Prefect Cloud

- Easy deployment and scheduling of workflows

- Scalable infrastructure for data and ML pipelines

Best For:

Data engineers, ML engineers, and teams that need reliable workflow orchestration, visibility into pipelines, and scalable collaboration for data and machine learning projects.

13. Metaflow

Metaflow is a workflow management tool for data science and machine learning that simplifies building, running, and deploying models. This MLOps tool helps teams manage pipelines at scale while automatically handling experiment tracking, data versioning, and production deployment.

Key Features:

- Workflow design and execution for data science and ML pipelines

- Automatic experiment tracking and data versioning

- Scalable execution on cloud platforms (AWS, GCP, Azure)

- Seamless deployment of models to production

- Notebook-friendly visualization of results

- Integration with popular ML libraries and Python tools

- R API support for broader language compatibility

Best For:

Data scientists and ML teams who want a simple, scalable workflow tool that handles orchestration, tracking, and deployment while minimizing MLOps overhead.

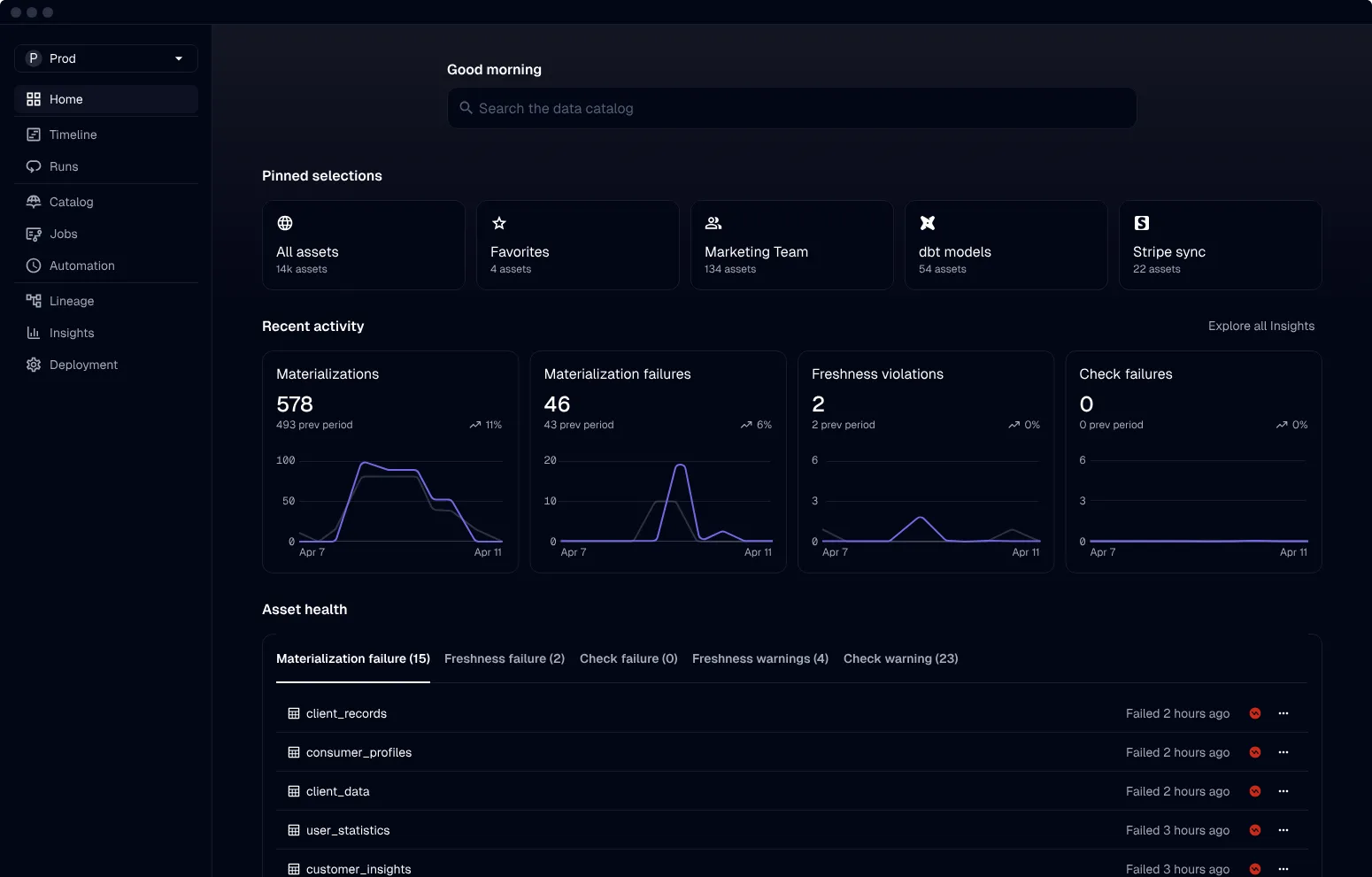

14. Dagster

Dagster is a cloud-native orchestration platform that helps data teams define, run, and monitor complex data pipelines efficiently. It focuses on reliability, observability, and a modern development experience for managing data workflows.

Key Features:

- Task-based workflows for modular and reusable pipeline design

- Declarative programming model for clearer pipeline definitions

- Strong observability with built-in logging, monitoring, and debugging

- Enhanced testability for reliable data pipeline development

- Integrations with popular data tools and platforms

- Scalable, cloud-native architecture for modern data teams

Best For:

Data engineers and data teams who need reliable, testable, and observable data pipeline orchestration with strong integration support and a modern development workflow.

15. Kedro

Kedro is a Python-based workflow orchestration tool that helps build reproducible, maintainable, and modular data science projects. It brings software engineering best practices, like modularity, separation of concerns, and versioning, into machine learning workflows.

Key Features:

- Modular pipeline creation, visualization, and execution

- Built-in configuration and dependency management

- Data catalog for organized data access and versioning

- Logging and experiment tracking support

- Deployment on single machines or distributed environments

- Encourages reusable, maintainable, and production-ready code

- Facilitates collaboration across data science teams

Best For:

Data scientists and teams who want structured, maintainable, and reproducible data science workflows using software engineering best practices.

16. TruEra

TruEra is a platform focused on improving machine learning model quality through testing, explainability, and root cause analysis. This MLOps tool helps teams debug models, understand performance issues, and ensure fairness across the ML lifecycle.

Key Features:

- Automated model testing to improve quality in development and production

- Systematic checks for performance, stability, and fairness

- Model version tracking to analyze performance over time

- Root cause analysis to identify sources of errors and bias

- Feature-level insights to detect and reduce model bias

- Easy integration with existing ML infrastructure and workflows

Best For:

ML engineers, data scientists, and organizations that need deeper model insights, fairness checks, and reliable performance monitoring across the model lifecycle.

17. BentoML

BentoML is a Python-first platform that simplifies deploying, serving, and monitoring machine learning models in production. It helps teams ship ML applications faster with scalable, high-performance model serving.

Key Features:

- Easy deployment of models as production-ready APIs

- High-performance serving with parallel inference and adaptive batching

- Hardware acceleration support for optimized performance

- Centralized dashboard for organizing and monitoring deployments

- Compatibility with major ML frameworks (Keras, ONNX, LightGBM, PyTorch, Scikit-learn)

- End-to-end solution for model deployment, serving, and monitoring
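
Batching in a model server means grouping requests that arrive close together so the model runs once per group instead of once per request. The sketch below shows only that grouping step with fixed limits; adaptive batching would additionally tune the limits at runtime. It is not BentoML code, and `micro_batches`, `max_batch_size`, and `max_wait_ms` are hypothetical names.

```python
def micro_batches(arrivals, max_batch_size=4, max_wait_ms=10):
    """Group (arrival_ms, request) pairs into batches.

    A batch closes when it is full, or when the next request arrives
    more than max_wait_ms after the first request in the batch.
    """
    batches, current, opened_at = [], [], None
    for arrival_ms, request in sorted(arrivals, key=lambda a: a[0]):
        if current and (len(current) >= max_batch_size
                        or arrival_ms - opened_at > max_wait_ms):
            batches.append(current)
            current = []
        if not current:
            opened_at = arrival_ms  # start the wait window for a new batch
        current.append(request)
    if current:
        batches.append(current)
    return batches

arrivals = [(0, "a"), (2, "b"), (3, "c"), (30, "d"), (31, "e"),
            (32, "f"), (33, "g"), (34, "h")]
batches = micro_batches(arrivals, max_batch_size=4, max_wait_ms=10)
```

Here the first batch closes because of the time limit and the second because of the size limit, which are the two trade-offs between latency and throughput.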

Best For:

ML engineers and teams that need a fast, scalable, and reliable way to deploy and manage machine learning models in production environments.

18. Evidently AI

Evidently AI is an open-source Python library for monitoring machine learning models across development, validation, and production. It helps ensure data and model quality by detecting drift, performance issues, and other potential problems.

Key Features:

- Data and model quality checks for regression and classification tasks

- Detection of data drift and target drift

- Batch testing with structured checks for datasets and models

- Interactive reports and dashboards for performance and drift analysis

- Real-time monitoring of data and model metrics in production

- Easy integration into existing ML pipelines and workflows
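
Data drift detection compares the distribution of a feature in production against a reference sample. One widely used statistic is the Population Stability Index (PSI), sketched below in plain Python. This is an illustration of the general technique and not Evidently's API; the `psi` helper, the bin count, and the synthetic data are assumptions.

```python
import math

def psi(reference, current, bins=10):
    """Population Stability Index between two numeric samples."""
    lo, hi = min(reference), max(reference)
    width = (hi - lo) / bins or 1.0  # fall back to 1.0 for a constant sample

    def proportions(sample):
        counts = [0] * bins
        for x in sample:
            idx = int((x - lo) / width)
            counts[min(max(idx, 0), bins - 1)] += 1  # clip out-of-range values
        # A small floor avoids log(0) for empty bins
        return [max(c / len(sample), 1e-6) for c in counts]

    ref_p, cur_p = proportions(reference), proportions(current)
    return sum((c - r) * math.log(c / r) for r, c in zip(ref_p, cur_p))

reference = [i / 100 for i in range(1000)]   # values spread over 0..10
shifted = [x + 3.0 for x in reference]       # same shape, shifted upward
no_drift = psi(reference, reference)
drift = psi(reference, shifted)
```

A common rule of thumb treats a PSI above roughly 0.2 as a shift worth investigating, though the right threshold depends on the feature and the use case.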

Best For:

Data scientists and ML engineers who need reliable model monitoring, drift detection, and performance tracking throughout the ML lifecycle.

19. DagsHub

DagsHub is a collaboration platform for machine learning projects that helps teams track, version, and manage data, models, experiments, pipelines, and code in one place. Often described as “GitHub for machine learning,” it provides tools to streamline the end-to-end ML workflow.

Key Features:

- Git and DVC repositories for versioning data, models, and code

- Built-in experiment tracking with DagsHub Logger and MLflow integration

- Dataset annotation with Label Studio integration

- Diffing support for Jupyter notebooks, code, datasets, and images

- Inline comments on files, code lines, and datasets for collaboration

- Project reports similar to a GitHub wiki

Best For:

ML teams and organizations that need a collaborative, version-controlled environment to manage the full machine learning lifecycle with strong integration and reproducibility support.

20. Iguazio MLOps Platform

The Iguazio MLOps Platform is an end-to-end solution that automates the entire machine learning lifecycle, from data ingestion and preparation to training, deployment, and production monitoring. This MLOps tool offers both an open-source framework (MLRun) and a fully managed platform, with flexible deployment across cloud, hybrid, or on-premises environments.

Key Features:

- Data ingestion from multiple sources with an integrated feature store for reusable features

- Scalable, serverless training and evaluation with automated tracking and data versioning

- Built-in CI/CD for continuous model training and deployment

- One-click model deployment with ongoing performance monitoring

- Model drift detection and mitigation in production

- Centralized dashboard for managing, governing, and monitoring models in real time

- Flexible deployment options across cloud, hybrid, and on-prem environments

Best For:

Enterprises and regulated industries (e.g., healthcare, finance) that need a flexible, scalable, and governed MLOps platform with strong automation and deployment control.

21. Qdrant

Qdrant is an open-source vector database and similarity search engine that enables you to store, manage, and query vector embeddings through a production-ready service and simple API. It is designed for high-performance semantic search and AI-powered applications.

Key Features:

- Easy-to-use API with Python support and client libraries for multiple languages

- High-speed, accurate search using a modified HNSW algorithm for nearest neighbor search

- Support for rich data types and filters, including text, numeric ranges, and geo-locations

- Distributed, cloud-native architecture with horizontal scalability

- Built in Rust for high performance and resource efficiency
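
A filtered vector query combines two steps: keep only the points whose metadata matches a condition, then rank the survivors by similarity to the query vector. The sketch below does this with an exact brute-force scan, whereas a vector database relies on an approximate index such as HNSW to stay fast at scale. It does not use the Qdrant client; `search`, `must`, and the sample points are hypothetical.

```python
import math

def cosine(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0

def search(points, query, top_k=2, must=None):
    """Return the top_k (id, score) pairs whose payload matches `must`."""
    must = must or {}
    candidates = [
        p for p in points
        if all(p["payload"].get(k) == v for k, v in must.items())
    ]
    scored = [(p["id"], cosine(p["vector"], query)) for p in candidates]
    return sorted(scored, key=lambda s: s[1], reverse=True)[:top_k]

points = [
    {"id": 1, "vector": [1.0, 0.0], "payload": {"lang": "en"}},
    {"id": 2, "vector": [0.9, 0.1], "payload": {"lang": "de"}},
    {"id": 3, "vector": [0.0, 1.0], "payload": {"lang": "en"}},
]
hits = search(points, query=[1.0, 0.0], top_k=2, must={"lang": "en"})
```

Point 2 is close to the query but is excluded by the filter, which is the behavior that distinguishes filtered search from plain nearest-neighbor lookup.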

Best For:

Developers and ML teams building semantic search, recommendation systems, and AI applications that require fast, scalable vector search and filtering.

22. lakeFS Data Versioning System

lakeFS is an open-source data version control system that brings Git-like operations to object storage, allowing teams to manage data lakes with the same workflows used for code. It enables scalable, reliable data versioning for large-scale data environments.

Key Features:

- Git-like operations (branch, commit, merge) for data in object storage

- Zero-copy branching for fast experimentation and collaboration

- Pre-commit and merge hooks for CI/CD and data quality checks

- Revert and recovery capabilities to quickly fix data issues

- Scalable version control for large data lakes, up to exabyte scale

- Compatible with major cloud storage services
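
Zero-copy branching works because a branch is only a mapping from paths to stored objects, so creating one copies pointers and never the data. The toy model below shows branch, commit-like writes, and merge under that assumption. It is not the lakeFS API; the `Repo` class and its methods are hypothetical, and the merge is deliberately naive.

```python
import hashlib

class Repo:
    """Toy versioned store: branches map paths to content hashes."""

    def __init__(self):
        self.objects = {}               # content hash -> bytes, stored once
        self.branches = {"main": {}}    # branch name -> {path: content hash}

    def put(self, branch, path, data):
        key = hashlib.sha256(data).hexdigest()
        self.objects.setdefault(key, data)
        self.branches[branch][path] = key

    def get(self, branch, path):
        return self.objects[self.branches[branch][path]]

    def create_branch(self, name, source="main"):
        # Copies only the path -> hash mapping, never the underlying bytes
        self.branches[name] = dict(self.branches[source])

    def merge(self, source, into="main"):
        # Naive merge: the source branch wins on conflicting paths
        self.branches[into].update(self.branches[source])

repo = Repo()
repo.put("main", "data/users.csv", b"id\n1\n")
repo.create_branch("experiment")
objects_after_branch = len(repo.objects)   # still one stored object
repo.put("experiment", "data/users.csv", b"id\n1\n2\n")
main_before_merge = repo.get("main", "data/users.csv")
repo.merge("experiment")
main_after_merge = repo.get("main", "data/users.csv")
```

Changes on the experiment branch stay invisible to `main` until the merge, which is what makes experimentation on production data safe.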

Best For:

Data engineers and organizations managing large data lakes who need reliable version control, safe experimentation, and reproducible data workflows at scale.

23. Fiddler

Fiddler AI is a model monitoring and explainability platform that helps teams understand, debug, and track machine learning models in production. It provides clear insights into model behavior, performance, and data quality through an intuitive interface.

Key Features:

- Performance monitoring with detailed data drift detection and analysis

- Data integrity checks to prevent incorrect or corrupted training data

- Outlier detection for both univariate and multivariate anomalies

- Service metrics for monitoring ML system operations and health

- Explainability tools to understand and debug model predictions

- Alerts and notifications for model issues in production

Best For:

ML engineers, data scientists, and organizations that need transparent model monitoring, explainability, and proactive alerts to maintain reliable production ML systems.

24. Ray

Ray is a distributed computing framework that helps developers scale AI and Python applications with ease. It provides a flexible runtime and a suite of AI libraries for building, training, and deploying machine learning systems at scale.

Key Features:

- Distributed runtime for scaling Python and AI workloads across clusters

- Core abstractions: tasks (stateless functions), actors (stateful workers), and objects (shared immutable data)

- Scalable data processing for large ML datasets

- Distributed training for machine learning and deep learning models

- Hyperparameter tuning for optimizing model performance

- Reinforcement learning support for advanced AI workloads

- Scalable model serving for production deployments

Best For:

Developers, ML engineers, and AI teams who need a flexible, high-performance framework to scale training, data processing, and model serving across distributed environments.

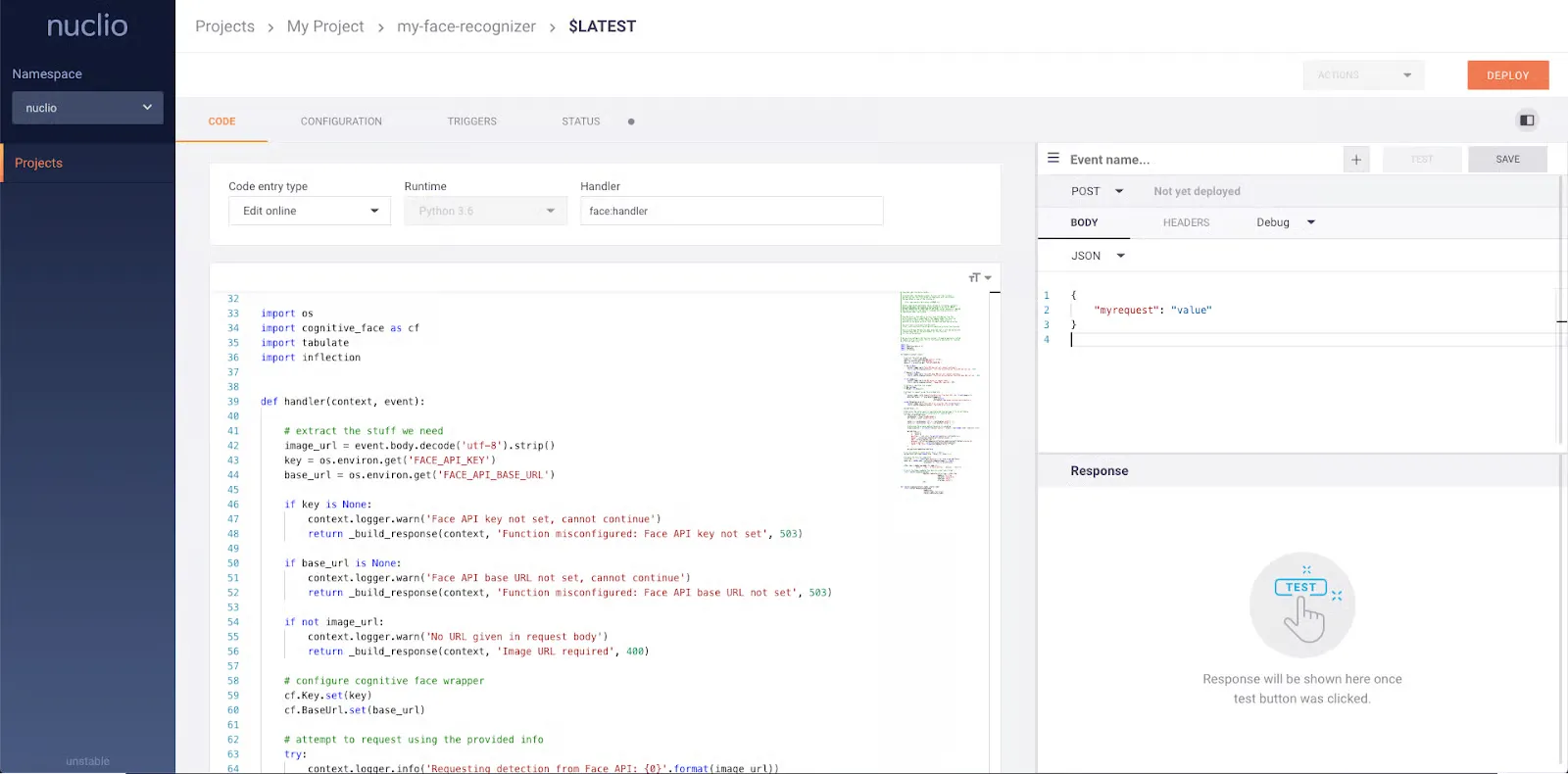

25. Nuclio

Nuclio is a high-performance serverless framework built for data-, I/O-, and compute-intensive workloads. It enables real-time processing without server management and integrates well with data science tools and ML platforms.

Key Features:

- Serverless execution with real-time processing and high parallelism

- Efficient use of CPU, GPU, and I/O resources

- Integration with popular tools such as Jupyter and Kubeflow

- Support for a variety of data and streaming sources

- Stateful functions with data-path acceleration for faster processing

- Portable across cloud platforms, edge devices, and low-power environments

- Enterprise-grade design for scalable production workloads

Best For:

Organizations and ML teams that need a high-performance serverless platform for real-time data processing, streaming, and scalable AI workloads across cloud and edge environments.

Benefits of MLOps Tools

The best MLOps tools help organizations manage the entire machine learning lifecycle more efficiently. They bring automation, collaboration, and reliability to building, deploying, and maintaining ML systems.

1. Accelerate Model Development

MLOps tools automate repetitive tasks such as data preparation, experiment tracking, and pipeline orchestration. This lets teams iterate faster, reduce manual errors, and move models from idea to production more quickly.

2. Improve Team Collaboration

These tools provide shared workspaces, versioned assets, and clear documentation, making it easier for data scientists, engineers, and stakeholders to work together, review changes, and share insights across teams.

3. Improve Model Performance and Quality

With built-in monitoring, testing, and validation, MLOps tools help detect issues such as data drift, bias, and performance degradation. This helps keep models accurate, reliable, and aligned with business goals.

4. Better Version Control and Reproducibility

MLOps platforms track versions of data, code, models, and experiments, allowing teams to reproduce results, audit changes, and maintain consistency across environments.

5. Streamlined Model Deployment and Scaling

They simplify deploying models to production through automation, CI/CD pipelines, and scalable infrastructure, so organizations can handle larger workloads and adapt efficiently to changing demands.

Conclusion

MLOps has evolved from a niche practice into a foundational part of modern machine learning workflows. In 2026, organizations no longer ask whether they need MLOps; they ask which platform best fits their goals, infrastructure, and scale.

As we have seen, the landscape ranges from lightweight, modular tools like MLflow and DVC to fully managed enterprise solutions like Azure ML, Vertex AI, and SageMaker.

For teams focused on GenAI, fine-tuning, and real-time inference, newer platforms like TrueFoundry offer cutting-edge capabilities built for modern AI challenges.

Operationalize your ML and GenAI workloads faster. Book a demo with TrueFoundry to get started.

Frequently Asked Questions

Is MLOps better than DevOps?

MLOps is not better than DevOps; it is an extension of DevOps tailored to machine learning. While DevOps focuses on software delivery and infrastructure automation, MLOps adds capabilities for data management, experiment tracking, model monitoring, and reproducibility, addressing the unique challenges of building, deploying, and maintaining ML systems in production.

What is the best MLOps tool for enterprise AI?

The best MLOps tools for enterprises are those that balance developer velocity with strict infrastructure governance. While the major cloud providers offer a broad range of services, TrueFoundry is often the ideal choice for teams that need data sovereignty and multi-cloud flexibility. It provides a unified control plane that runs natively in your private VPC, so you can automate the entire lifecycle, from training to deployment, without compromising on security or infrastructure control.

Is Docker an MLOps tool?

Docker is a foundational containerization technology and therefore an important part of the MLOps tool stack. It ensures that models run consistently across development and production environments, although it does not handle higher-level tasks such as model monitoring or versioning. TrueFoundry simplifies containerization by automatically building Docker images and orchestrating them on Kubernetes, so data scientists can deploy code without becoming DevOps experts.

How does TrueFoundry work for MLOps?

TrueFoundry acts as a developer-focused abstraction layer on top of your existing cloud infrastructure. It connects directly to your Kubernetes clusters and automates complex tasks such as resource provisioning, CI/CD, and model serving. By providing a single interface for managing experiments and production workloads, it reduces deployment times from weeks to minutes while lowering costs through automatic GPU optimization and spot instance support.

Which cloud is best for an MLOps platform?

No single cloud is best for MLOps. The right choice depends on your requirements, tools, and budget. AWS, Azure, and Google Cloud all offer powerful MLOps services, including automated pipelines, scalable training, and model monitoring. Teams often decide based on existing infrastructure, compliance requirements, and integration with their data ecosystem.
