Exporting TrueFoundry AI Gateway Traces to Honeycomb with OpenTelemetry


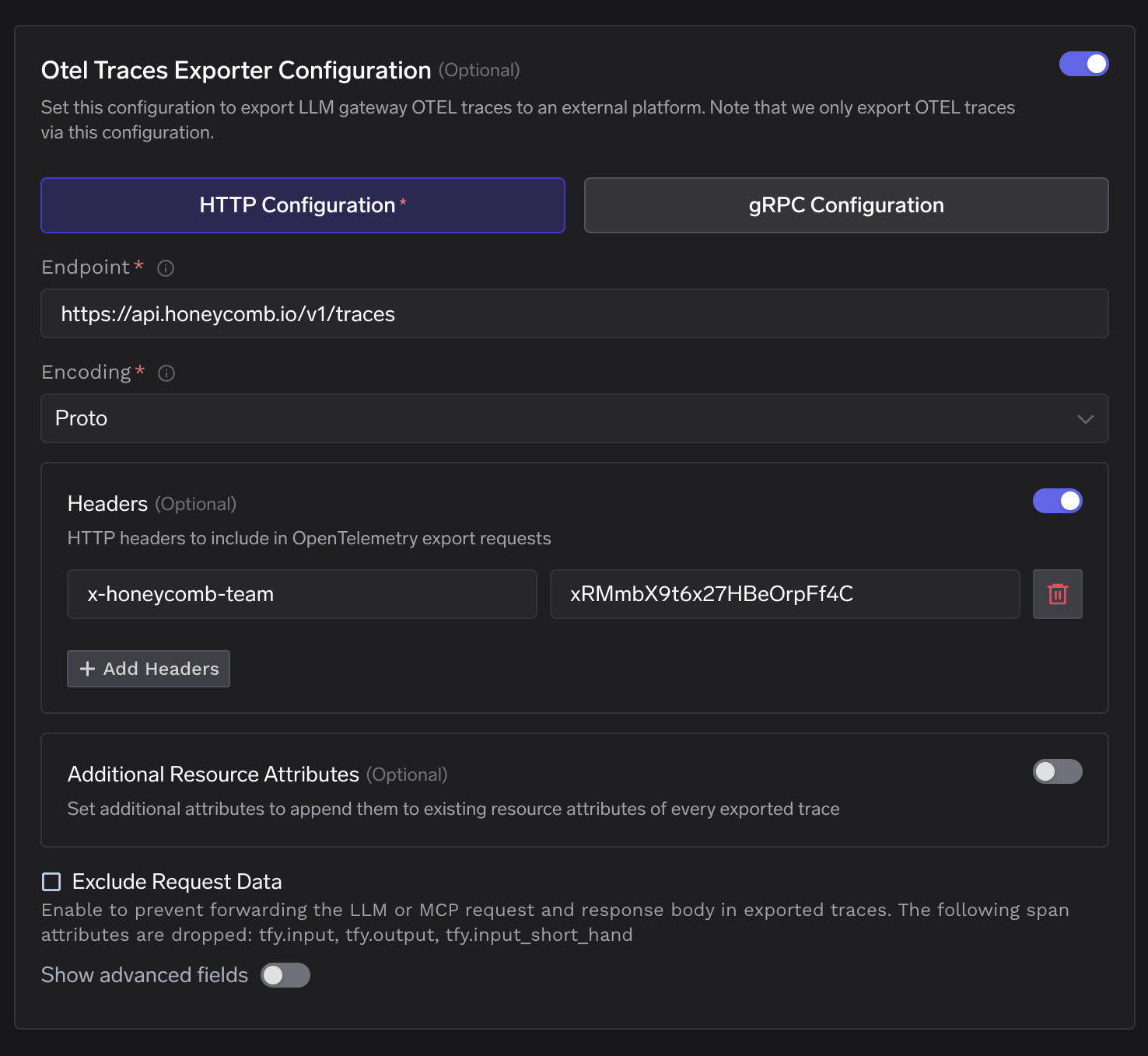

TrueFoundry AI Gateway emits OpenTelemetry traces for every request it processes. It publishes them asynchronously over NATS to an OTEL exporter, which forwards them to any OTLP-compatible backend over HTTP or gRPC. Honeycomb is one such backend. It accepts OTLP data at https://api.honeycomb.io/v1/traces over HTTP with protobuf encoding and authenticates using the x-honeycomb-team header. Once traces arrive, Honeycomb indexes every span attribute and makes them available for ad hoc queries without requiring any pre-declared schema.

This post covers how the TrueFoundry gateway generates and exports traces, what Honeycomb does with them once they arrive, and how the two systems connect at the protocol level.

How the Gateway Generates and Exports Traces

The TrueFoundry AI Gateway is built on the Hono framework and runs as a stateless gateway pod on 1 vCPU and 1 GB RAM, handling 250+ requests per second with approximately 3 ms of added latency. The gateway is OpenTelemetry compliant and generates spans across the full lifecycle of every inbound request.

The span tree covers five stages. The first is the inbound HTTP handler, which records the arrival of the request along with client metadata. The second is authentication, where the gateway verifies the JWT token against a cached public key downloaded from the identity provider. No external auth call is made during this step. The third is model resolution, where the gateway resolves the logical model identifier to a physical provider endpoint using an in-memory routing table synced from the control plane via NATS. The fourth is the outbound provider call, where the gateway translates the request from OpenAI-compatible format to the target provider format via an adapter and forwards it. The fifth is streaming response handling, where the gateway captures token counts and finish reasons as the response streams back.

Span attributes follow the gen_ai.* semantic conventions alongside TrueFoundry-specific attributes. The gen_ai.request.model attribute records the model identifier. The gen_ai.usage.prompt_tokens and gen_ai.usage.completion_tokens attributes record token consumption. The tfy.input and tfy.output attributes carry the full prompt and response text. The tfy.input_short_hand attribute carries a truncated version for display. The tfy.span_type attribute identifies the span category, such as ChatCompletion or MCPGateway.

After the request completes, the gateway publishes these spans to NATS asynchronously. A background OTEL exporter reads from this async path and forwards the spans to the configured external endpoint. Because of this design, trace export never adds latency to the request path, and the gateway does not fail a request if the external OTEL endpoint is unreachable. The export path is additive and does not replace TrueFoundry's own internal trace storage.
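The decoupling described above can be sketched in a few lines. This is a minimal illustration, not the gateway's implementation: an in-process `queue.Queue` stands in for the NATS subject, and `handle_request`, `export_worker`, and `unreachable_backend` are hypothetical names invented for the example.

```python
import queue
import threading

# Stand-in for the NATS subject; the real gateway publishes to NATS instead.
span_queue = queue.Queue()
export_failures = []


def handle_request(prompt):
    """Serve the request, then hand the span off without waiting for export."""
    response = f"echo: {prompt}"  # placeholder for the provider call
    span_queue.put({"name": "ChatCompletion", "prompt_len": len(prompt)})
    return response


def export_worker(send):
    """Drain the queue and forward spans; failures never reach the request path."""
    while True:
        span = span_queue.get()
        if span is None:  # sentinel used to stop the worker in this sketch
            break
        try:
            send(span)
        except ConnectionError as exc:
            export_failures.append(str(exc))


def unreachable_backend(span):
    """Simulates an external OTEL endpoint that is down."""
    raise ConnectionError("backend unreachable")


worker = threading.Thread(target=export_worker, args=(unreachable_backend,))
worker.start()
result = handle_request("hello")  # succeeds even though every export fails
span_queue.put(None)
worker.join()
```

The request returns its response while the export error is recorded only on the worker side, which is the property the async path is meant to provide.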

For workloads where prompt and response content must not leave the environment, the gateway provides an Exclude Request Data toggle. When enabled, it strips tfy.input, tfy.output, and tfy.input_short_hand from spans before export. All other span attributes, including token counts, latencies, and model metadata, continue to flow.
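The effect of the toggle on a span's attributes can be pictured as a simple filter. The sketch below uses the attribute keys named in the text; the function name `exclude_request_data` and all sample values are made up for illustration, and the gateway's actual filtering code is not shown in this post.

```python
# Attribute keys named in the text as carrying prompt and response content.
REQUEST_DATA_KEYS = ("tfy.input", "tfy.output", "tfy.input_short_hand")


def exclude_request_data(attributes):
    """Return a copy of the span attributes without prompt/response content."""
    return {k: v for k, v in attributes.items() if k not in REQUEST_DATA_KEYS}


# Sample values are invented for this example.
span_attributes = {
    "gen_ai.request.model": "example-model",
    "gen_ai.usage.prompt_tokens": 12,
    "gen_ai.usage.completion_tokens": 48,
    "tfy.span_type": "ChatCompletion",
    "tfy.input": "full prompt text",
    "tfy.output": "full response text",
    "tfy.input_short_hand": "full prompt...",
}

exported = exclude_request_data(span_attributes)
```

After filtering, `exported` still holds the model, token counts, and span type, while the three content-bearing keys are gone.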

The MCP Gateway follows the same tracing model. Every tool invocation generates a span recording the calling user, the MCP server, the tool name, the full request and response payload, and latency. These spans appear in the same trace tree as the LLM call spans, enabling end-to-end trace visibility across agentic workflows.

What Honeycomb Does with the Trace Data

Honeycomb ingests OTLP data and stores every span as a row with arbitrary columns. There is no fixed schema. Every attribute that TrueFoundry emits, whether gen_ai.usage.prompt_tokens, tfy.span_type, or http.response.status_code, becomes a queryable column in Honeycomb the moment the first span carrying it arrives.

The core query primitive in Honeycomb is the BubbleUp analysis. Given a slow or failed set of traces, BubbleUp computes which attribute values are statistically overrepresented in that set compared to the baseline. For LLM gateway traffic, this means identifying whether a latency spike is correlated with a specific model, a specific user, or a specific MCP server without writing a query by hand.
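The idea of "overrepresented attribute values" can be illustrated with a toy comparison of value frequencies. This is a deliberately simplified sketch and not Honeycomb's BubbleUp algorithm: it ranks values of one attribute by the difference between their share of the selected spans and their share of the baseline. The function name and the sample spans are assumptions made for the example.

```python
from collections import Counter


def overrepresented_values(selected, baseline, attribute):
    """Rank values of `attribute` by how much more frequent they are in
    `selected` than in `baseline` (difference in share of spans)."""
    sel_counts = Counter(s[attribute] for s in selected if attribute in s)
    base_counts = Counter(s[attribute] for s in baseline if attribute in s)
    sel_total = sum(sel_counts.values()) or 1
    base_total = sum(base_counts.values()) or 1
    diffs = {
        value: sel_counts[value] / sel_total - base_counts[value] / base_total
        for value in sel_counts
    }
    return sorted(diffs.items(), key=lambda item: item[1], reverse=True)


# Made-up spans: model-b is common among the slow spans but rare overall.
baseline = [{"gen_ai.request.model": "model-a"}] * 8 + [{"gen_ai.request.model": "model-b"}] * 2
slow = [{"gen_ai.request.model": "model-b"}] * 3 + [{"gen_ai.request.model": "model-a"}]

ranking = overrepresented_values(slow, baseline, "gen_ai.request.model")
```

Here `model-b` ranks first because it accounts for most of the slow spans but only a small share of the baseline.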

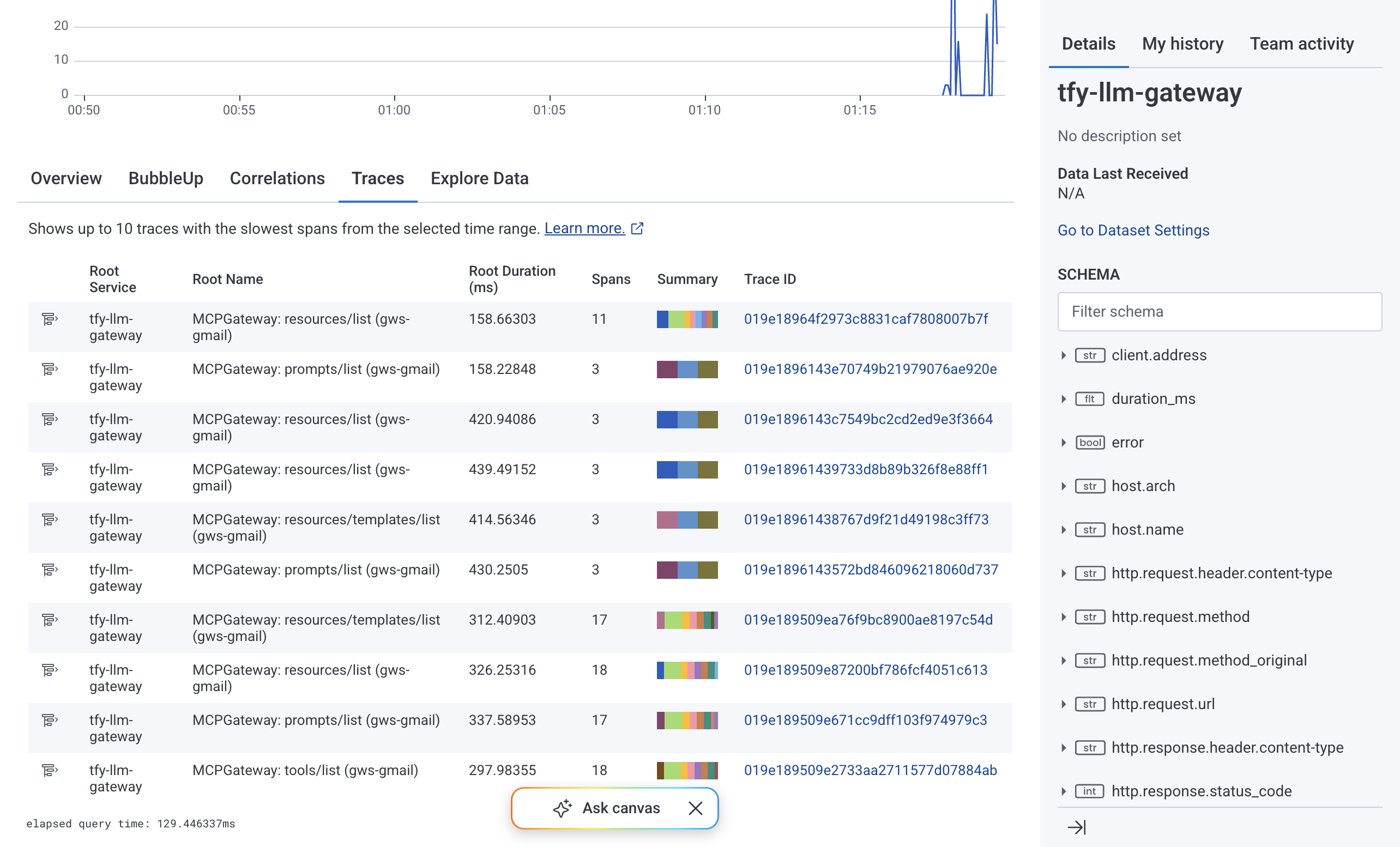

Honeycomb organizes data into datasets. The TrueFoundry gateway sets service.name to tfy-llm-gateway, and Honeycomb routes spans into a dataset of that name by default. To route spans into a different dataset, add the x-honeycomb-dataset header to the exporter configuration alongside x-honeycomb-team. Multiple datasets can separate production from staging traffic, or LLM gateway traces from MCP gateway traces.

The Traces tab in Honeycomb presents the span waterfall view. Each row is a span. The hierarchy shows parent-child relationships, so a root MCPGateway: resources/list span with nested MCP: resources/templates/list spans and an outbound POST https://... span maps directly to what the gateway executed. Duration bars make the latency distribution visible at a glance. The Spans with errors counter isolates fault-bearing traces.

The Overview tab aggregates Total Spans, Total Errors, and Total Exceptions over the selected time window and renders Trace Volume, Span Volume, and Error Volume as time series charts. This view reflects the health of the gateway at a glance without building a dashboard from scratch.

Clicking any Trace ID expands the full span waterfall for that trace. Each span shows its service name, duration, and any error flags. Nested child spans reflect the internal call hierarchy of the gateway, making it possible to isolate which stage introduced latency on a per-request basis.
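Reading a waterfall to find the slow stage amounts to comparing each span's own time against that of its children. The sketch below shows that reasoning on a tiny span tree. The `Span` class, the stage names, and the durations are illustrative assumptions, not real gateway output, and the self-time calculation assumes child spans run one after another without overlapping.

```python
from dataclasses import dataclass, field


@dataclass
class Span:
    name: str
    duration_ms: float
    children: list = field(default_factory=list)


def self_time(span):
    """Time spent in the span itself, excluding its direct children."""
    return span.duration_ms - sum(c.duration_ms for c in span.children)


def slowest_span(root):
    """Walk the tree and return the span with the largest self time."""
    best = root
    stack = [root]
    while stack:
        span = stack.pop()
        if self_time(span) > self_time(best):
            best = span
        stack.extend(span.children)
    return best


# Stage names and durations are illustrative, not real gateway output.
trace = Span("inbound_http", 410.0, [
    Span("authentication", 1.0),
    Span("model_resolution", 0.5),
    Span("provider_call", 400.0, [Span("stream_response", 150.0)]),
])

culprit = slowest_span(trace)
```

In this made-up trace the provider call dominates, which matches what the duration bars in the waterfall would show visually.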

The Integration Surface

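The TrueFoundry gateway exports traces over OTLP HTTP with protobuf encoding. Honeycomb accepts this format at two regional endpoints: https://api.honeycomb.io/v1/traces for the US region and https://api.eu1.honeycomb.io/v1/traces for the EU region.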

Authentication uses a single header. The x-honeycomb-team header carries the Honeycomb ingest API key. The key must have the Send Events permission scope. There is no OAuth flow and no bearer token exchange. The key is sent as a plain header value on every export request.

x-honeycomb-team: <your-honeycomb-ingest-api-key>

Dataset routing is controlled by a second, optional header. When x-honeycomb-dataset is omitted, Honeycomb uses service.name from the resource attributes to determine the target dataset. When it is set explicitly, all spans in that export batch are written to the named dataset regardless of service.name.

x-honeycomb-dataset: tfy-llm-gateway-production
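The two headers and the routing rule can be put together in a short sketch. The helper names `build_export_headers` and `target_dataset` are hypothetical, and `target_dataset` only mirrors the rule as stated in the text (explicit header wins, otherwise service.name); it does not call Honeycomb. The Content-Type value is the standard one for binary protobuf payloads in OTLP over HTTP.

```python
def build_export_headers(api_key, dataset=None):
    """Headers for an OTLP/HTTP protobuf export to Honeycomb."""
    headers = {
        "Content-Type": "application/x-protobuf",
        "x-honeycomb-team": api_key,
    }
    if dataset is not None:
        headers["x-honeycomb-dataset"] = dataset
    return headers


def target_dataset(headers, resource_attributes):
    """Mirror the routing rule in the text: explicit header wins, else service.name."""
    return headers.get("x-honeycomb-dataset") or resource_attributes["service.name"]


resource = {"service.name": "tfy-llm-gateway"}

# Placeholder key; a real ingest key would come from a secret store.
default_headers = build_export_headers("<your-honeycomb-ingest-api-key>")
prod_headers = build_export_headers(
    "<your-honeycomb-ingest-api-key>", dataset="tfy-llm-gateway-production"
)

default_target = target_dataset(default_headers, resource)
prod_target = target_dataset(prod_headers, resource)
```

With no dataset header the spans land in the dataset named after service.name, and with the header set they land in the explicitly named dataset.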

The TrueFoundry gateway does not auto-append signal paths to the configured endpoint. The full path, including /v1/traces, must be present in the endpoint field. This differs from the OpenTelemetry Collector's OTLP HTTP exporter, which appends /v1/traces automatically based on the pipeline signal type. In the Collector, a single base URL like https://api.honeycomb.io:443 is sufficient because the Collector resolves the path from the pipeline definition. In TrueFoundry, the endpoint is used verbatim.
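The difference between the two endpoint conventions is easy to get wrong, so a small sketch may help. The three functions below are hypothetical helpers written for this post: one models the Collector-style path appending, one models the verbatim behavior described for TrueFoundry, and one is an assumed pre-flight check a team might run on its own configuration.

```python
def collector_style_url(base_endpoint, signal="traces"):
    """Collector otlphttp behavior: append the signal path to a base URL."""
    return base_endpoint.rstrip("/") + "/v1/" + signal


def verbatim_url(endpoint):
    """Behavior described for TrueFoundry: use the configured value unchanged."""
    return endpoint


def check_truefoundry_endpoint(endpoint):
    """Hypothetical pre-flight check that the traces path is present."""
    if not endpoint.rstrip("/").endswith("/v1/traces"):
        raise ValueError("endpoint must include the full /v1/traces path")
    return endpoint


collector_url = collector_style_url("https://api.honeycomb.io:443")
gateway_url = verbatim_url(check_truefoundry_endpoint("https://api.honeycomb.io/v1/traces"))
```

Passing the bare base URL to `check_truefoundry_endpoint` raises a `ValueError`, which is the misconfiguration the paragraph above warns about.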

The configuration surface in TrueFoundry maps directly to the fields Honeycomb requires.

The Additional Resource Attributes field appends key-value pairs to the resource block of every exported span. This is useful for adding a deployment environment tag or a cluster identifier that is not already present in the span attributes.
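As a rough picture of what appending resource attributes means, the sketch below merges extra key-value pairs into a resource block. The function name and the sample keys and values are assumptions for illustration. The choice to keep existing keys when a name collides is also an assumption of this sketch, since the post does not say how the gateway resolves conflicts.

```python
def with_additional_resource_attributes(resource, additional):
    """Return a new resource block with the extra key-value pairs appended.

    Assumption of this sketch: keys already present in `resource` are kept.
    """
    merged = dict(additional)
    merged.update(resource)
    return merged


resource = {"service.name": "tfy-llm-gateway"}
# Example keys and values are invented for illustration.
additional = {
    "deployment.environment": "production",
    "k8s.cluster.name": "example-cluster",
}

exported_resource = with_additional_resource_attributes(resource, additional)
```

Because Honeycomb turns every attribute into a column, the added keys become available for filtering and grouping like any other attribute.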

The Exclude Request Data checkbox strips tfy.input, tfy.output, and tfy.input_short_hand before spans leave the gateway. Honeycomb still receives all structural attributes, including token counts, latencies, model names, and error flags.

Architecture Summary

When a request reaches the TrueFoundry gateway, the full span tree is assembled in memory during request processing and published to NATS after the response completes. The OTEL exporter subscribes to this NATS subject and batches spans before sending them to https://api.honeycomb.io/v1/traces over HTTPS with the x-honeycomb-team header present. Honeycomb writes each span as a row in the tfy-llm-gateway dataset. The spans become queryable within seconds of arrival.

No changes to application code are required. No sidecar containers are deployed alongside the gateway. No SDK is embedded in the client. The integration is a configuration surface on the gateway: one endpoint URL and one authentication header. Existing clients calling the gateway over the OpenAI-compatible API continue to work without modification.

The principle that makes this integration reliable is the async export path. Trace export is decoupled from the request lifecycle via NATS. A Honeycomb API outage or a network partition between the gateway and Honeycomb's ingestion endpoint does not affect inference availability. The gateway processes requests and publishes spans to NATS regardless of whether the downstream export succeeds. As a result, the observability pipeline can be configured, reconfigured, and restarted without touching the request-serving path.

