Types of AI Agents: Definitions, Roles, and What They Mean for Enterprise Deployment

Choosing which type of AI agent to deploy is an architecture decision, not a vocabulary exercise. The agent type determines how it reasons and acts without human intervention. It also determines how far its actions cascade and what governance layer it requires before production deployment.

A simple reflex agent reading a sensor value needs different controls than a multi-agent system coordinating across CRM, database, and communications stacks. Classification shapes the infrastructure decision. Infrastructure decisions shape the risk profile.

This guide covers each agent category, the work it does, where it fits in enterprise use cases, and how TrueFoundry approaches governance across all of them.

What Is an AI Agent?

An AI agent is an autonomous software system that perceives inputs, reasons over them using a model or logic system, and takes actions toward a goal. Human instruction is not required at every step.

The autonomy loop separates an agent from an ordinary model call. The loop runs: evaluate, decide, act, observe, and continue based on what surfaces. That loop is where enterprise workflow value lives. It is also where governance risk concentrates the moment an agent starts operating without controls.
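The loop described above can be sketched in a few lines. This is a toy illustration, not a production pattern: the "environment" is a single integer, the function name `run_agent` is hypothetical, and the `max_steps` cap stands in for the kind of control an unattended loop needs.

```python
def run_agent(state: int, goal: int, max_steps: int = 10) -> list[int]:
    """Toy autonomy loop: move an integer state toward a goal value."""
    trace = [state]
    for _ in range(max_steps):              # step cap: a minimal control on the loop
        if state == goal:                   # evaluate: is the goal reached?
            break
        action = 1 if state < goal else -1  # decide: pick the next action
        state += action                     # act: apply it to the environment
        trace.append(state)                 # observe: record the new state
    return trace
```

Without the step cap, a goal the agent can never reach would keep the loop running indefinitely, which is the simplest form of the governance problem.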

The Main Types of AI Agents

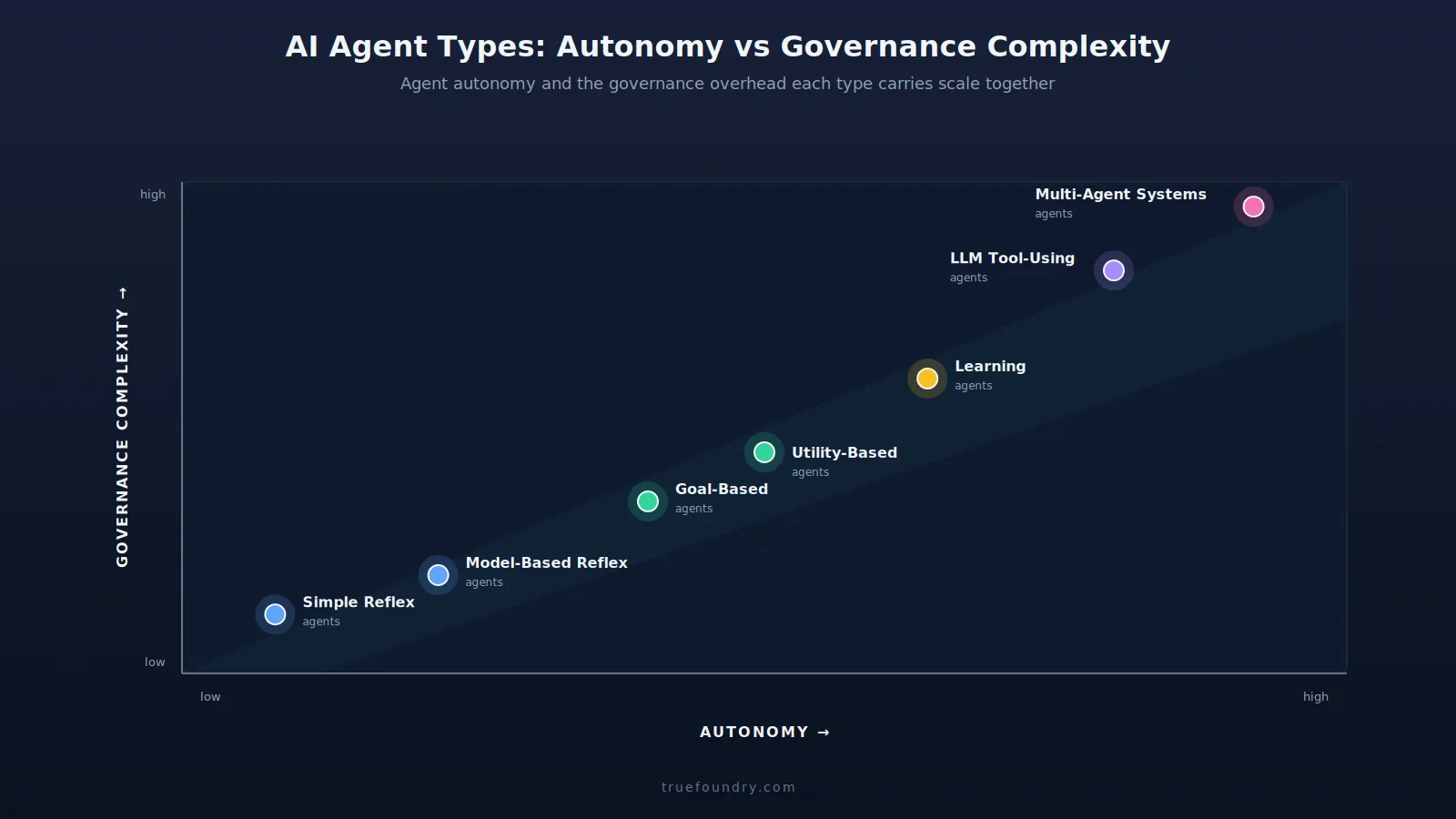

The taxonomy below moves from the simplest agents (fixed rules, no memory) to the most complex multi-agent systems. The further down the list, the more autonomy each agent type carries.

This progression is central to understanding different types of AI agents and what each requires in production infrastructure.

Simple Reflex Agents

The simple reflex agent is the simplest pattern in the taxonomy. It acts strictly on the current input using predefined condition-action rules. It stores no memory of prior interactions and makes no plans for future states.

What breaks at the edges: Anything outside the ruleset falls through. There is no reasoning, no adaptation, and no path to completing multi-step tasks.

Where it fits: Spam filters, industrial sensor data monitors, rule-based alert systems, and anywhere a consistent response to known patterns is the entire job. These agents excel at repetitive tasks that require speed and precision over adaptability.
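A minimal sketch of condition-action rules, assuming a hypothetical temperature sensor. The thresholds, action names, and the `simple_reflex_agent` function are illustrative only.

```python
from typing import Callable, Optional

# Each rule pairs a condition on the current reading with an action label.
Rule = tuple[Callable[[float], bool], str]

RULES: list[Rule] = [
    (lambda temp_c: temp_c >= 90.0, "shutdown"),
    (lambda temp_c: temp_c >= 75.0, "alert"),
]

def simple_reflex_agent(temp_c: float) -> Optional[str]:
    """Return the action of the first matching rule, or None if no rule fires."""
    for condition, action in RULES:
        if condition(temp_c):
            return action
    return None  # input outside the ruleset falls through
```

The function keeps no state between calls, so the same reading always produces the same action. A reading that matches no rule returns `None`, which is the fall-through case described above.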

Model-Based Reflex Agents

Model-based reflex agents go a step beyond the simple reflex pattern. This agent type carries an internal model of how the environment changes over time. When inputs are incomplete or ambiguous, it fills the gaps from that model rather than failing.

The agent uses its internal state to make better decisions despite incomplete information. The internal state must still be programmed manually. The agent does not learn from experience, which puts a hard ceiling on adaptability in dynamic environments.

Where it fits: Autonomous vehicle navigation, robotics, computer vision systems, and industrial control systems, where tracking the internal state of the environment improves the quality of each decision in partially observable environments.
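As a rough illustration of internal state, the sketch below assumes a hypothetical thermostat whose sensor sometimes returns no reading. The class name, the 19.0 degree threshold, and the fixed cooling rate are all assumptions. The world model is deliberately crude: it ignores whether the heater is running.

```python
from typing import Optional

class ModelBasedThermostat:
    """Keeps a hand-coded internal estimate of room temperature."""

    def __init__(self, initial_temp_c: float, drift_per_step: float = -0.5):
        self.estimate_c = initial_temp_c
        # Assumed world model: the room cools by a fixed amount each step.
        self.drift_per_step = drift_per_step

    def step(self, reading_c: Optional[float]) -> str:
        if reading_c is not None:
            self.estimate_c = reading_c              # trust the sensor when present
        else:
            self.estimate_c += self.drift_per_step   # otherwise predict from the model
        return "heat_on" if self.estimate_c < 19.0 else "heat_off"
```

A missing reading no longer stalls the agent, but the drift value is fixed by the programmer. Nothing in the class adjusts it from experience.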

Goal-Based Agents

Goal-based agents evaluate possible actions against a defined goal state and select the sequence of steps most likely to lead the system from its current state to the target, reasoning about future consequences of each available path.

Where it goes wrong: Performance tracks the quality of goal definition. A poorly specified goal produces an agent that hits the literal objective while missing the intended outcome, a classic specification-gaming failure.

Where it fits: Route optimization, supply chain optimization, and workflow automation. These agents excel anywhere the end state is clearly definable, and the agent's job is to find the optimal path.
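One common way to find a step sequence to a goal state is graph search. The sketch below uses breadth-first search over a hypothetical invoice workflow. The state names and the `plan` function are made up for illustration, and real planners typically add action costs and heuristics.

```python
from collections import deque
from typing import Optional

# Hypothetical workflow graph: state -> states reachable in one action.
GRAPH = {
    "received": ["validated", "rejected"],
    "validated": ["approved", "escalated"],
    "escalated": ["approved"],
    "approved": ["paid"],
    "rejected": [],
    "paid": [],
}

def plan(start: str, goal: str, graph: dict[str, list[str]]) -> Optional[list[str]]:
    """Breadth-first search: return a shortest path of states, or None."""
    frontier = deque([[start]])
    visited = {start}
    while frontier:
        path = frontier.popleft()
        if path[-1] == goal:
            return path
        for nxt in graph.get(path[-1], []):
            if nxt not in visited:
                visited.add(nxt)
                frontier.append(path + [nxt])
    return None  # goal unreachable from start
```

Here `plan("received", "paid", GRAPH)` returns the path through `validated` and `approved`. The search optimizes only for reaching the named goal, so a mis-specified goal state yields a valid path to the wrong place.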

Utility-Based Agents

A close cousin of the goal-based agent, with one addition. This type of AI agent assigns a utility function to different possible outcomes and picks actions that maximize expected utility. The result is principled trade-off behavior when objectives compete, rather than binary goal-hitting.

Specifying utility function values correctly at scale is the hard part. Misaligned functions produce agents that optimize aggressively for the wrong measure, which is Goodhart's law appearing in production.

Where it fits: Financial portfolio optimization, resource allocation systems, and recommendation engines. These are contexts where the agent must rank outcomes by business value under uncertainty.
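Expected-utility maximization can be sketched as follows. The actions, probabilities, and utility values are invented for illustration and are not financial guidance.

```python
# Hypothetical actions, each mapped to (probability, utility) outcomes.
ACTIONS = {
    "bonds":  [(1.0, 3.0)],
    "stocks": [(0.6, 10.0), (0.4, -5.0)],
    "cash":   [(1.0, 0.5)],
}

def expected_utility(outcomes: list[tuple[float, float]]) -> float:
    """Probability-weighted sum of outcome utilities."""
    return sum(p * u for p, u in outcomes)

def choose_action(actions: dict[str, list[tuple[float, float]]]) -> str:
    """Pick the action with the highest expected utility."""
    return max(actions, key=lambda a: expected_utility(actions[a]))
```

With these numbers the agent picks `stocks`, since 0.6 × 10 + 0.4 × (−5) = 4 exceeds the alternatives. Changing the utility assigned to a loss changes the choice, which is why the utility function itself is the part that needs scrutiny.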

Learning Agents

A learning agent architecture has four main components working together:

- Performance element: Takes actions in the environment

- Critic: Scores outcomes against the performance standard

- Learning element: Updates behavior based on critic feedback

- Problem generator: Proposes new situations to learn from

Active concerns: Training stability and safety are both genuine risks. Learning agents exhibit unexpected behaviors when reward signals are misspecified or when the environment shifts during deployment. Robust feedback loops are required to catch emergent behavior before it reaches users.

Where it fits: Personalization engines, dynamic pricing systems, fraud detection, and predictive analytics. These are contexts where optimal behavior keeps shifting and manual rule updates cannot keep pace. Reinforcement learning and other machine learning methods, trained on carefully curated data, are the core mechanisms behind this agent type.
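The four components can be mapped onto a small epsilon-greedy learner. This is a sketch under simplifying assumptions: the class and method names are hypothetical, rewards come from a fixed lookup table rather than a live environment, and real systems use far richer learning methods.

```python
import random

class LearningAgent:
    def __init__(self, actions: list[str], epsilon: float = 0.1, seed: int = 0):
        self.values = {a: 0.0 for a in actions}  # learned value estimate per action
        self.counts = {a: 0 for a in actions}
        self.epsilon = epsilon
        self.rng = random.Random(seed)

    def problem_generator(self):
        """Occasionally propose an exploratory action; otherwise None."""
        if self.rng.random() < self.epsilon:
            return self.rng.choice(list(self.values))
        return None

    def performance_element(self) -> str:
        """Act: explore if proposed, else take the best-known action."""
        explore = self.problem_generator()
        if explore is not None:
            return explore
        return max(self.values, key=self.values.get)

    @staticmethod
    def critic(reward: float, standard: float = 0.0) -> float:
        """Score an outcome relative to the performance standard."""
        return reward - standard

    def learning_element(self, action: str, score: float) -> None:
        """Update the running mean of scores for this action."""
        self.counts[action] += 1
        self.values[action] += (score - self.values[action]) / self.counts[action]

def train(agent: LearningAgent, rewards: dict[str, float], steps: int) -> None:
    """Run the act, score, update cycle against a fixed reward table."""
    for _ in range(steps):
        action = agent.performance_element()
        agent.learning_element(action, agent.critic(rewards[action]))
```

If the reward table scores the wrong thing, the agent converges on the wrong action just as reliably, which is the misspecified-reward risk noted above.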

LLM-Powered Tool-Using Agents

Most enterprise interest in AI agents currently flows toward this category. The agent uses large language models for reasoning, planning, and natural language communication, paired with the ability to invoke external tools (APIs, databases, search, code execution) to take real-world actions.

Three concerns stack here:

- Non-deterministic reasoning: The same prompt can produce different possible actions across invocations.

- Prompt injection risk: External content can embed instructions that hijack the agent's behavior.

- Real-world execution: Tool calls take actual actions in connected systems. Errors compound across complex workflows.

Generative AI and natural language processing power the interface layer, while the tool-calling layer is where agentic AI risks concentrate.
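One way to contain risk at the tool-calling layer is to validate every model-proposed call against an allowlist before executing it. The sketch below assumes the model emits a JSON object with `tool` and `args` keys. That format, the `fake_model` stub, and the `lookup_order` tool are all hypothetical and do not represent any specific vendor API.

```python
import json
from typing import Callable

def lookup_order(order_id: str) -> str:
    """Hypothetical read-only tool."""
    return f"order {order_id}: shipped"

# Only tools listed here can ever be executed.
ALLOWED_TOOLS: dict[str, Callable[..., str]] = {"lookup_order": lookup_order}

def fake_model(prompt: str) -> str:
    """Stand-in for an LLM call; always returns the same tool request."""
    return json.dumps({"tool": "lookup_order", "args": {"order_id": "A17"}})

def execute_tool_call(raw: str) -> str:
    """Parse and validate a model-proposed tool call, then run it."""
    try:
        request = json.loads(raw)
    except json.JSONDecodeError:
        return "error: model output was not valid JSON"
    if not isinstance(request, dict):
        return "error: expected a JSON object"
    name, args = request.get("tool"), request.get("args", {})
    if not isinstance(name, str) or name not in ALLOWED_TOOLS:
        return f"error: tool {name!r} is not allowlisted"
    if not isinstance(args, dict):
        return "error: args must be a JSON object"
    try:
        return ALLOWED_TOOLS[name](**args)
    except TypeError as exc:  # wrong or missing arguments
        return f"error: bad arguments: {exc}"
```

An allowlist does not stop prompt injection, but it bounds what an injected instruction can trigger: a request for a tool outside `ALLOWED_TOOLS` returns an error instead of running.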

Where it fits: Customer support automation, internal knowledge assistants, developer copilots, and agentic AI workflows wired into CRM, ticketing, and data systems via the TrueFoundry MCP Gateway.

These are the highest-governance AI agents in any enterprise stack. For a detailed breakdown of the security risks specific to this type, see MCP Security Risks and Best Practices.

Multi-Agent Systems

The pattern: distribute complex tasks among several hierarchical agents, each handling manageable subtasks and reporting back to an orchestrator that coordinates the overall workflow toward a shared goal.

Governance complexity scales faster than the number of agents. A single misconfigured permission in a single agent propagates to every other agent the workflow touches.
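The orchestrator pattern and the permission problem can be shown together in a small sketch. The `Worker` class, the scope strings, and the plan format are hypothetical. The point is only that each subtask is checked against the scopes of the specific agent running it.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class Worker:
    name: str
    scopes: frozenset[str]  # permissions granted to this agent only

    def run(self, subtask: str, required_scope: str) -> str:
        if required_scope not in self.scopes:
            raise PermissionError(f"{self.name} lacks scope {required_scope!r}")
        return f"{self.name} completed {subtask}"

def orchestrate(plan: list[tuple[str, str, str]], workers: dict[str, Worker]) -> list[str]:
    """Dispatch (worker, subtask, scope) steps in order and collect results."""
    return [workers[name].run(subtask, scope) for name, subtask, scope in plan]

workers = {
    "researcher": Worker("researcher", frozenset({"search:read"})),
    "writer": Worker("writer", frozenset({"docs:write"})),
}
plan = [
    ("researcher", "gather sources", "search:read"),
    ("writer", "draft report", "docs:write"),
]
results = orchestrate(plan, workers)
```

If `researcher` were also granted `docs:write` by mistake, every workflow routed through it would inherit that ability, which is how one misconfiguration widens the blast radius.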

Use cases: End-to-end workflow agents for business process automation, research and analysis pipelines, data agents for analytics workflows, software development agents, and anything requiring several specialized AI systems running in sequence across various industries.

What are the 5 Levels of AI Agents?

A complementary way to classify AI agents is by capability level rather than architecture. The five levels describe the extent of autonomy and coordination an agent can sustain, regardless of its underlying agent type.

The level of autonomy an agent operates at directly determines the governance infrastructure required. A Level 1 agent handling simple tasks in a single-agent setup and a Level 5 autonomous agent in production require fundamentally different control layers.

How TrueFoundry Governs All Types of AI Agents From One Control Plane

The TrueFoundry AI Gateway bundles an LLM Gateway, an MCP Gateway, and an Agent Gateway to govern every agent category from a single VPC-native control plane. The same controls cover a tool-using customer service agent and a coordinated multi-agent research system.

- Framework-agnostic coverage: Every agent type, regardless of framework, routes through the gateway. LangGraph, CrewAI, AutoGen, and in-house implementations all have their model calls and tool invocations covered by uniform access policies. No separate governance tooling is required per agent category.

- Per-agent and per-workflow access controls: OAuth 2.0 identity injection scopes every AI agent action to the requesting user's actual permissions. The over-permissioned service account problem, the one creating an outsized blast radius in multi-agent deployments, never gets a foothold.

- Cost governance for single- and multi-agent workloads: Token budgets and circuit breakers operate at both the single-agent and workflow levels. Runaway costs cannot compound silently across coordination loops.

- Complete audit trails for each agent type, stored within your VPC: Every step in the workflow (model calls, tool invocations, and agent coordination) is logged with structured metadata. Logs remain within the customer's cloud boundary, available for real-time monitoring and on-demand compliance review. The result is compliance-ready evidence for SOC 2 and HIPAA across every agent category the platform runs.
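To make the token budget and circuit breaker idea from the list above concrete, here is a generic sketch. It is not TrueFoundry's API or implementation. The `TokenBudget` class, its limits, and its behavior are assumptions chosen to show budgets enforced at both the agent and workflow levels.

```python
class BudgetExceeded(RuntimeError):
    """Raised when a charge would exceed a token limit."""

class TokenBudget:
    def __init__(self, agent_limit: int, workflow_limit: int):
        self.agent_limit = agent_limit
        self.workflow_limit = workflow_limit
        self.agent_spend: dict[str, int] = {}
        self.workflow_spend = 0
        self.tripped = False  # once True, the breaker stays open

    def charge(self, agent_id: str, tokens: int) -> None:
        """Record token usage, or trip the breaker if a limit would be exceeded."""
        if self.tripped:
            raise BudgetExceeded("circuit breaker is open")
        agent_total = self.agent_spend.get(agent_id, 0) + tokens
        workflow_total = self.workflow_spend + tokens
        if agent_total > self.agent_limit or workflow_total > self.workflow_limit:
            self.tripped = True
            raise BudgetExceeded(f"{agent_id} would exceed a token limit")
        self.agent_spend[agent_id] = agent_total
        self.workflow_spend = workflow_total
```

Checking the limit before committing the charge means an over-budget call is rejected rather than logged after the fact, and the open breaker halts every other agent in the same workflow.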

For teams running multiple agent types in production, or planning a multi-agent rollout, book a demo with the TrueFoundry team.
