Operant AI Integration with TrueFoundry

.webp)

Auf Geschwindigkeit ausgelegt: ~ 10 ms Latenz, auch unter Last

Unglaublich schnelle Methode zum Erstellen, Verfolgen und Bereitstellen Ihrer Modelle!

- Verarbeitet mehr als 350 RPS auf nur 1 vCPU — kein Tuning erforderlich

- Produktionsbereit mit vollem Unternehmenssupport

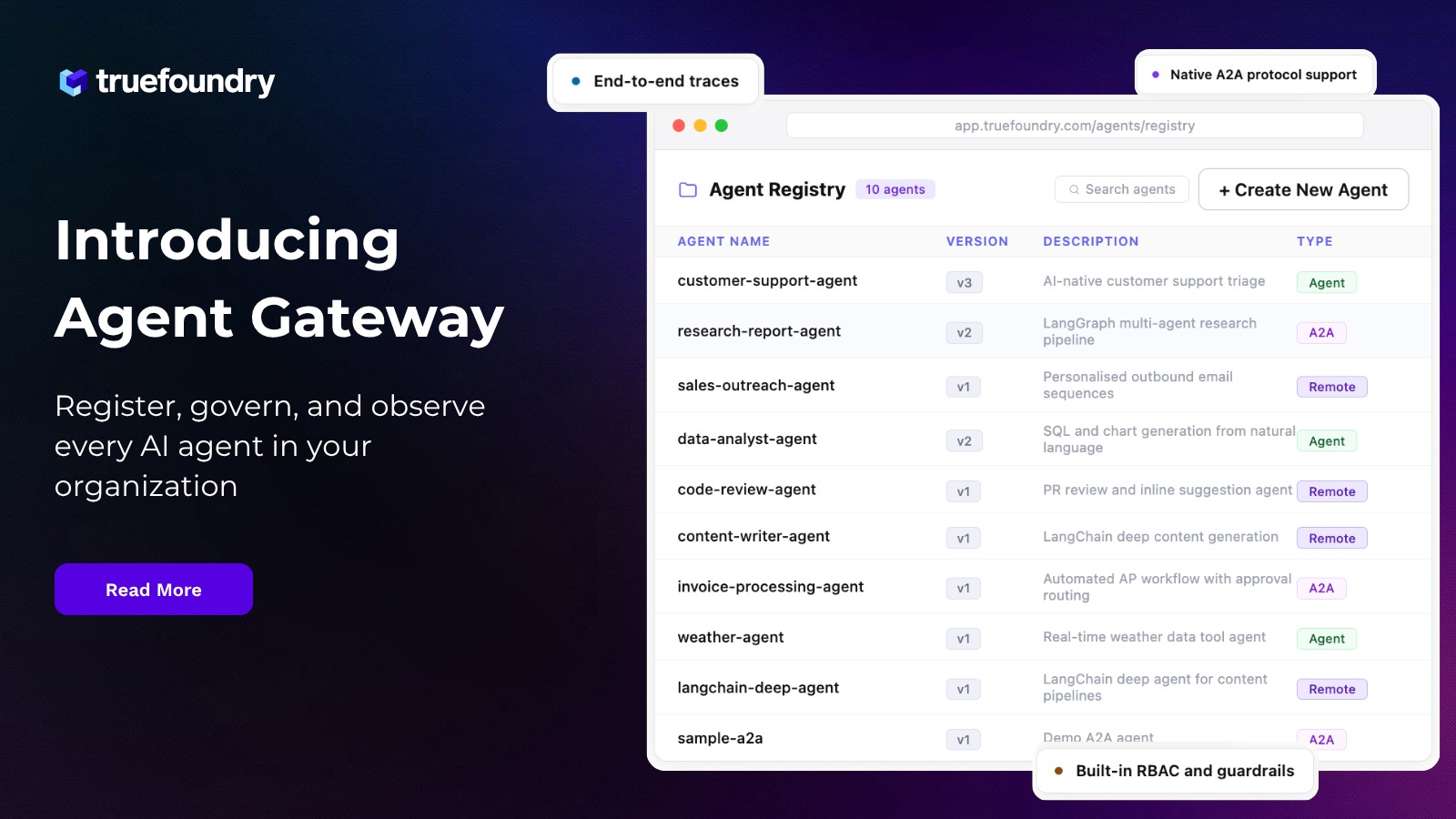

We are excited to announce our partnership with Operant AI that brings runtime AI defense and inline data redaction directly into the path of LLM and agent traffic.

Teams routing model and agent traffic through TrueFoundry's AI Gateway can now connect Operant AI Gatekeeper as a first class guardrail provider to gain real-time threat detection and inline auto-redaction and zero trust enforcement across prompts and responses and tool calls and MCP interactions in production. The integration runs at the four guardrail hooks exposed by the gateway and requires no changes to agent or application code.

This post covers the architecture of the integration. It explains how the TrueFoundry AI Gateway executes guardrails at runtime and how Operant's runtime defense engine plugs into that execution model and how teams configure rules that target specific models and MCP servers and user populations.

Why enterprise agentic AI needs two layers

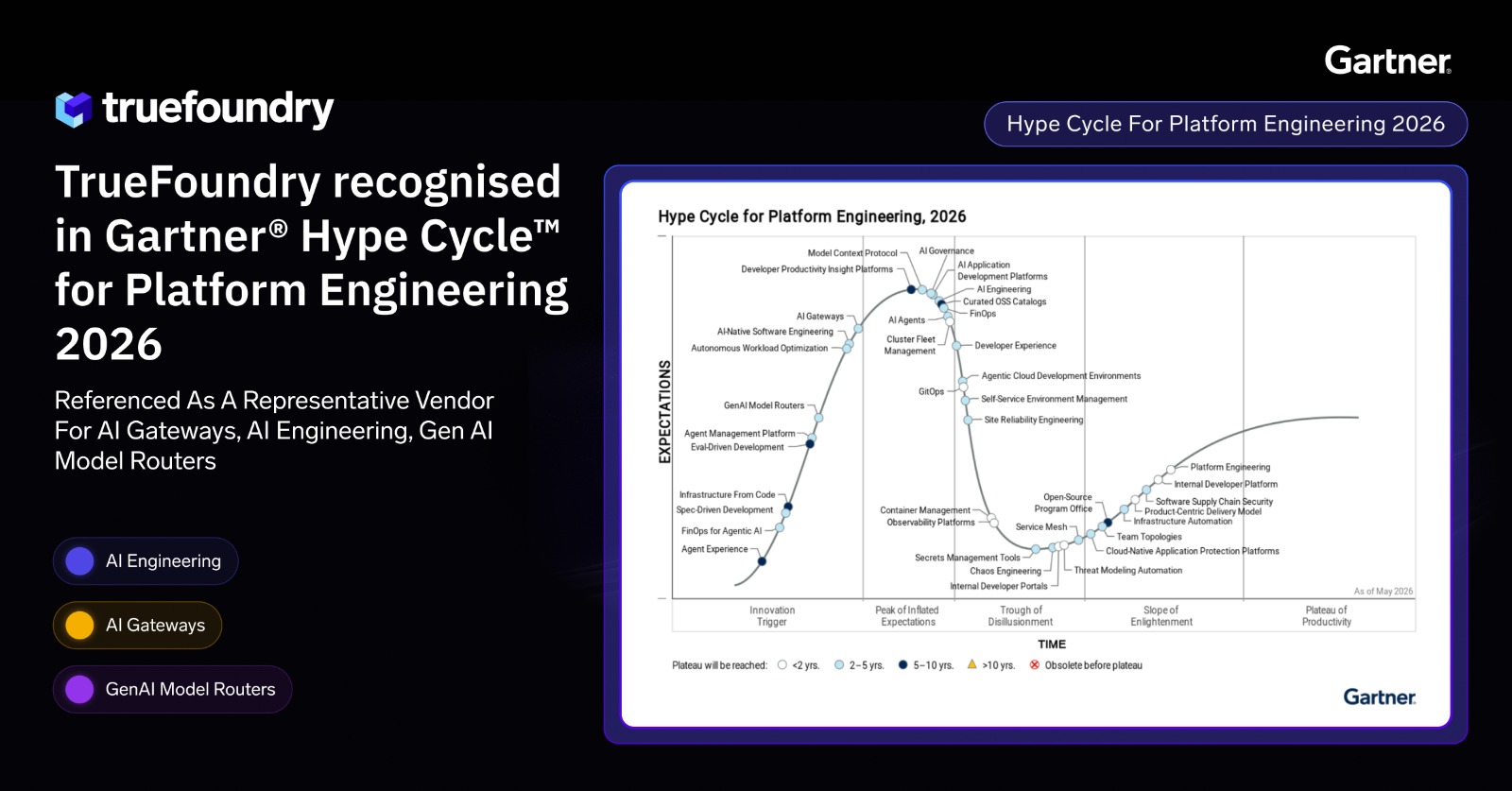

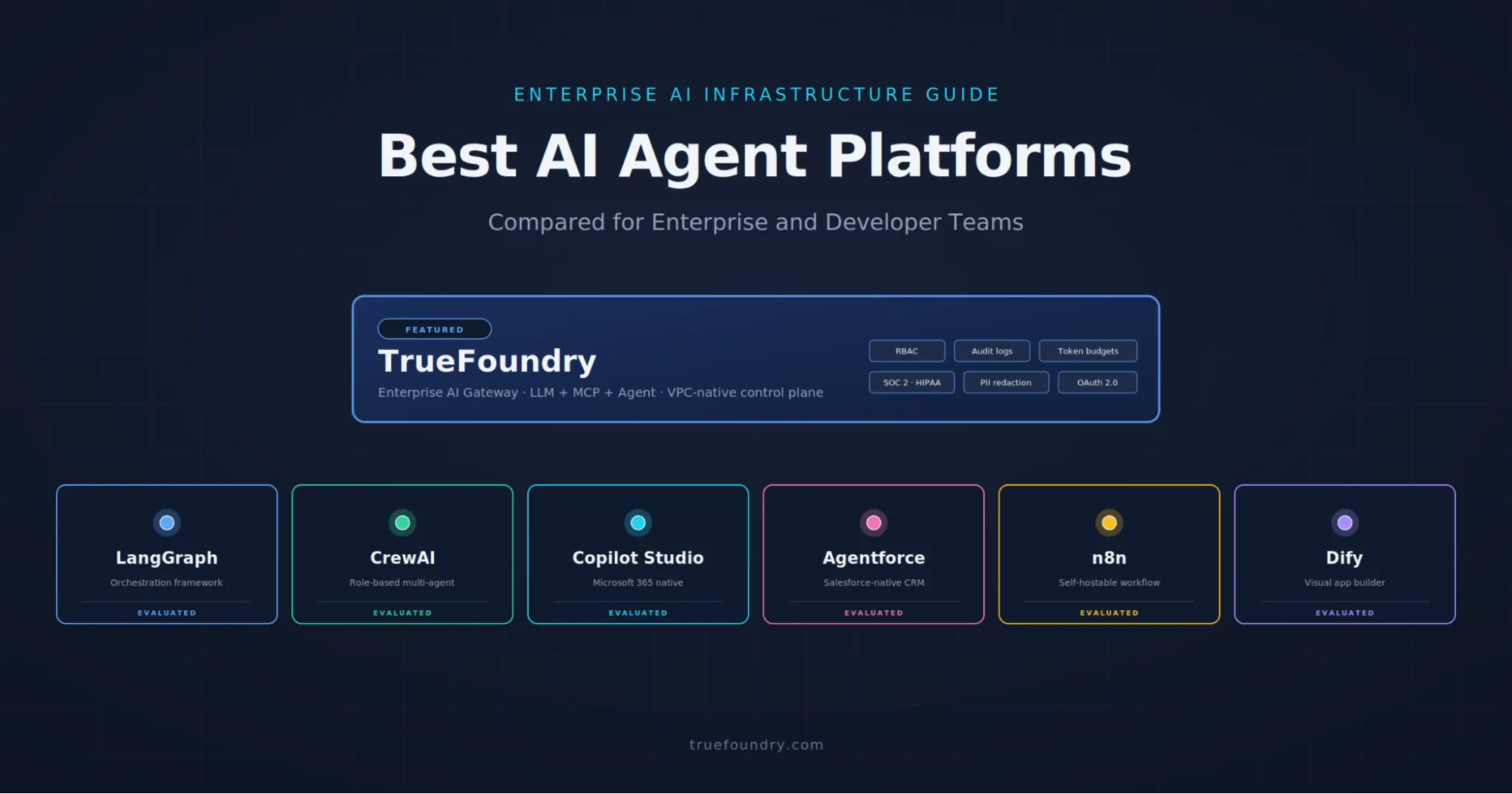

TrueFoundry provides the control layer for production AI systems. Through the AI Gateway teams centralize model routing and key management and access control and observability and governance across LLMs and tools and MCP-connected workflows. Every request flows through a single proxy layer where identity is verified and rate limits are enforced and traces are captured.

Operant AI provides the runtime defense layer. Its 3D Runtime Defense engine discovers and detects and defends against the full OWASP LLM Top 10 and MITRE ATLAS attack patterns. AI Gatekeeper runs natively in the application stack and applies inline auto-redaction for PII and PCI and PHI and secrets and API keys before that data crosses any boundary. Operant is the only vendor named across all five of Gartner's most critical AI security reports covering AI TRiSM and API Protection and MCP Gateways and Agent security.

Together the two solutions give teams a clean production architecture. TrueFoundry handles deployment and routing and operational control. Operant handles runtime inspection and inline redaction and behavioral threat enforcement. Operant AI Gatekeeper is supported as a first class guardrail provider inside the TrueFoundry gateway with hooks at llm_input_guardrails and llm_output_guardrails and mcp_tool_pre_invoke_guardrails and mcp_tool_post_invoke_guardrails.

The gap in production agent deployments

Most teams building AI agents focus on getting deployment and reliability right. The agent has to call the right tools and manage context across long conversations and handle retries and scale across users. That work is necessary but it does not answer the runtime security question.

Security in many agentic AI deployments stops at the perimeter. Platform access controls and MCP server allowlists and tool-level permissions and scoped credentials for downstream systems are all in place. Those controls matter but they leave the data path uninspected and the agent reasoning loop unprotected.

The questions that the perimeter cannot answer include what data is actually flowing into the model and what is leaving in the response and which tools the agent is calling and with what arguments. If a prompt injection arrives through retrieved context or through an MCP server response or through an external API result the perimeter has no visibility into whether the agent is about to act on it. If the model output contains a customer email or an AWS secret key the perimeter cannot stop the leak before it leaves the environment.

Runtime guardrails on the gateway path

The architectural idea behind this integration is direct. If all model and tool and MCP traffic already flows through the gateway then the gateway is the right place to apply runtime defense. With Operant connected to the TrueFoundry AI Gateway teams enforce guardrails on the same path where agent traffic is already being routed and governed. Evaluation happens on live traffic and not on traces reviewed after execution.

Operant AI Gatekeeper runs as the runtime defense layer. The defense engine deploys natively in the application environment through a single step Helm install and applies its scanners and redaction logic in place. Because the engine runs natively no external call is required for the guardrail decision and the entire data flow stays inside the customer environment. This is the foundation for what Operant calls private mode where sensitive data is redacted at ingress and egress before it ever leaves the cluster.

Operant exposes the following defense capabilities at runtime. Inline auto-redaction identifies and masks over forty categories of sensitive data covering PII and PCI and PHI and API keys and tokens and credentials before that data reaches the model or leaves the environment. Prompt injection detection covers both direct and indirect injection through retrieved context or tool output. Jailbreak detection identifies attempts to bypass model safety training. Data exfiltration defense monitors egress flows for unauthorized movement of sensitive data. Tool poisoning detection is purpose-built for MCP and identifies weaponized tool descriptions and rogue tool registrations and compromised tool binaries. Behavioral threat detection flags deviations from each agent's defined business purpose. Non Human Identity controls apply zero trust enforcement to agent and service identities calling tools and APIs.

For agentic systems Operant evaluates not just a single prompt and response pair but the tool invocations and MCP requests and the multi-step execution context. AI Security Graphs map the live data flows between AI workloads and agents and APIs so the defense engine has the context to flag when an agent steps outside its sanctioned trust boundary.

How the gateway executes guardrails

The TrueFoundry AI Gateway runs on the Hono framework and a single gateway pod handles 250 plus requests per second on 1 vCPU and 1 GB RAM with approximately 3 ms of added latency. Gateway pods are stateless and CPU bound and scale horizontally to tens of thousands of RPS through additional pods. The control plane and gateway plane are split. Configuration including guardrail rules and model definitions and rate limits lives in the control plane and syncs to gateway pods through NATS. The actual request path stays in memory with no external calls beyond the LLM provider.

Guardrails execute at four discrete hooks in the request lifecycle.

llm_input_guardrails intercepts a prompt before it reaches the model. The gateway sends the input payload to Operant first. If Operant returns a violation verdict for any configured detector the request is blocked and the LLM is never called. If Operant runs in mutate mode the redacted payload is returned and the gateway forwards the masked version to the model. The input guardrail call runs concurrently with the model request to optimize time to first token and the model call is cancelled immediately on a block decision to avoid incurring provider cost.

llm_output_guardrails fires after the LLM has responded but before the response is returned to the caller. Output guardrails are sequential. The gateway waits for the model output and submits it to Operant for scanning before delivering to the client. This is the enforcement point for catching PII leakage and secret exposure and any data exfiltration attempt the model produced. Operant's egress redaction strips sensitive data from the response before it leaves the environment.

mcp_tool_pre_invoke_guardrails fires before a tool is executed by the agent. Operant evaluates the tool name and the arguments and the calling Non Human Identity. If the tool description contains injected instructions or the arguments contain sensitive data or the calling identity is operating outside its sanctioned trust boundary the tool invocation is blocked before any real-world action occurs. This is the enforcement point that catches MCP tool poisoning at runtime.

mcp_tool_post_invoke_guardrails fires after the tool returns its result and before that result is passed back into the agent reasoning loop. This is the enforcement point for detecting indirect prompt injection in tool output and credential leakage from MCP servers and PII returned by upstream APIs. Stopping it here keeps the agent from acting on poisoned context.

Each hook supports three enforcing strategies. Enforce blocks on violation or on guardrail service error. Enforce But Ignore On Error blocks on violation but allows the request to proceed if the guardrail service itself is unreachable. Audit logs the verdict and never blocks. Each guardrail also supports two operation modes. Validate mode produces a block or pass decision. Mutate mode allows the guardrail service to modify content in flight. Mutate is how Operant's inline auto-redaction is wired in. The gateway forwards the request to Operant and substitutes the redacted payload into the request before continuing to the model.

The integration surface

Operant is configured in the TrueFoundry control plane as a guardrail integration with the API endpoint for the AI Gatekeeper service and the credentials for the deployment. Because Operant deploys natively in the same environment as the gateway the endpoint is typically a cluster-local service URL and the guardrail call adds minimal network latency.

FieldValueProviderOperant AI GatekeeperEndpointhttps://api.operant.ai/v1/gatekeeper (or cluster-local service URL)AuthenticationBearer token via OPERANT_API_KEYDetectorsprompt_injection and jailbreak and pii and pci and phi and secrets and data_exfiltration and tool_poisoning and behavioral_anomalyOperation modesValidate and MutateMutate behaviorInline auto-redaction for sensitive data categories

Once the integration is registered the gateway exposes it as a selector that can be referenced from any guardrail rule. Rules are configured through a YAML rules block. Each rule uses a when block with two conditions. target matches on model or mcpServers or mcpTools or request metadata. subjects matches on user or team identity with in and not_in operators. The rule then declares which guardrail integrations to run on which of the four hooks.

A baseline rule that runs Operant on input and output for an OpenAI model used by all teams looks like this.

name: guardrails-control

type: gateway-guardrails-config

rules:

- id: operant-baseline

when:

target:

operator: or

conditions:

model:

values:

- openai-main/gpt-4o

condition: in

subjects:

operator: and

conditions:

in:

- team:everyone

llm_input_guardrails:

- operant/operant-redact-profile

llm_output_guardrails:

- operant/operant-redact-profile

mcp_tool_pre_invoke_guardrails: []

mcp_tool_post_invoke_guardrails: []

A second rule that adds Operant scanning around an MCP server used by an agent team would target the MCP server and apply the integration on the pre and post tool invocation hooks. This is the configuration that catches tool poisoning and data exfiltration through tool output. All matching rules are evaluated together and their guardrail sets are unioned per hook. Two rules that both target llm_input_guardrails will both run on the input.

Per request overrides are supported through the X-TFY-GUARDRAILS header. The header carries a JSON object specifying guardrail selectors for any combination of the four hooks. This lets application teams pin a stricter or more permissive policy for a specific call without modifying the global config.

Every guardrail decision is captured in the request trace. The span includes the hook that fired and the integration selector and the verdict and the latency of the guardrail call and the categories that matched. Traces are emitted asynchronously through NATS and exported via OTEL to whichever observability backend the team has configured. Operant's dashboard surfaces the same events from its side with the AI Security Graph showing live data flows and the threat blocking telemetry.

Architecture Summary

End to end the request flow looks like this. A client sends a chat completion or agent request to the gateway. The gateway authenticates the caller against cached IdP keys and resolves the model identifier through Virtual Model routing. Matching guardrail rules are evaluated in memory and the input payload is dispatched to Operant concurrently with the model call. If Operant flags the input the model call is cancelled and a structured error is returned. If Operant returns a redacted payload the gateway forwards the masked version to the model. The model response is then submitted to Operant's output detectors for egress redaction before delivery. For agent traffic the same logic applies on each MCP tool invocation and on each tool response before it re-enters the agent context. Every step is captured in a trace span with the guardrail verdict attached.

Nothing else has to change in the application. There is no SDK to install on the client and no per-service security middleware to maintain. The gateway is already in the request path and Operant attaches to that path natively in the same environment. Existing OpenAI compatible client code keeps working without modification. Sensitive data is masked before it ever reaches the model and before it ever leaves the cluster.

The architectural principle that makes this clean is consolidation of policy enforcement at the gateway layer combined with inline data redaction at the runtime layer. When model traffic and tool traffic and MCP traffic all converge on a single proxy then guardrails configured at that proxy apply uniformly across every model and every team and every agent without per-application code. Operant's defense engine runs inline at the same point and the gateway's hook model gives Operant access to the four enforcement points where runtime decisions actually matter. Data stays inside the environment because Operant operates natively in the stack rather than calling out to an external scanning service.

Get Started

Learn more about the TrueFoundry AI Gateway and the Operant AI Gatekeeper platform. Connect Operant in the TrueFoundry guardrails configuration and reference the integration selector from any rule that targets your models or MCP servers.

TrueFoundry AI Gateway bietet eine Latenz von ~3—4 ms, verarbeitet mehr als 350 RPS auf einer vCPU, skaliert problemlos horizontal und ist produktionsbereit, während LiteLM unter einer hohen Latenz leidet, mit moderaten RPS zu kämpfen hat, keine integrierte Skalierung hat und sich am besten für leichte Workloads oder Prototyp-Workloads eignet.

Der schnellste Weg, deine KI zu entwickeln, zu steuern und zu skalieren

.webp)

.webp)

.webp)

.webp)

.webp)

.webp)

.webp)