AI Security Frameworks in 2026: Which Ones Apply and Where Each Stops

.webp)

Conçu pour la vitesse : latence d'environ 10 ms, même en cas de charge

Une méthode incroyablement rapide pour créer, suivre et déployer vos modèles !

- Gère plus de 350 RPS sur un seul processeur virtuel, aucun réglage n'est nécessaire

- Prêt pour la production avec un support complet pour les entreprises

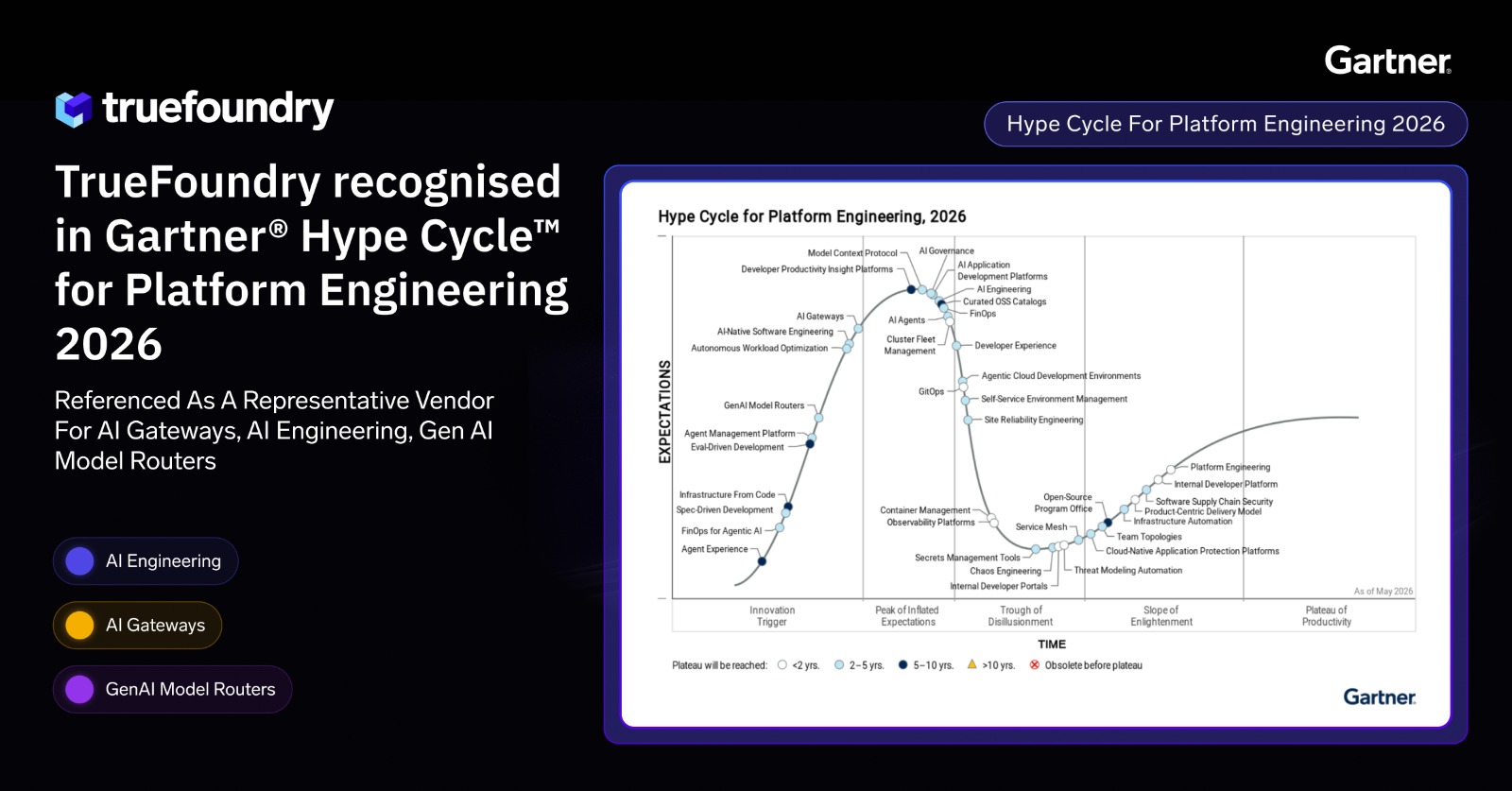

In 2026, enterprise security teams have more AI security frameworks to choose from than ever. NIST, OWASP, MITRE ATLAS, Google SAIF, ISO 42001, and CSA MAESTRO each address different aspects of the overall AI security problem, and none of them is complete on its own.

Once organizations determine which frameworks to adopt, they must understand what problem each AI security framework was designed to solve and, more importantly, where each one stops.

This guide compares the major AI security frameworks based on their scope, audience, and practical coverage, and shows how much remains unaddressed after the framework work is complete.

.webp)

What Are AI Security Frameworks Trying to Solve?

Traditional cybersecurity frameworks were developed specifically for deterministic applications. AI systems operate behaviorally and probabilistically, learn from training data, use natural language processing to execute instructions, and are increasingly capable of operating independently. These behavioral characteristics present unique risks that no previous cybersecurity framework was designed to address.

AI security frameworks attempt to close this gap by offering structured guidelines for identifying AI risk, governing AI systems throughout their AI lifecycle, and building reliable defenses against the ways AI fails or gets exploited.

Enterprise security teams face a real challenge because the various frameworks cover different aspects of the same layered problem. Singular reliance on any given AI security framework without considering its complete set of gaps will create identifiable weaknesses in the overall enterprise security posture.

The Core AI Security Frameworks

Let’s have a look at the key AI security frameworks:

NIST AI Risk Management Framework

The NIST AI RMF, published by the National Institute of Standards and Technology, has four major components: Govern, Map, Measure, and Manage. "Govern" involves establishing policies and defining roles and responsibilities. "Map" involves identifying where AI is being used and the AI risk associated with its implementation. "Measure" defines criteria for evaluating those risks. "Manage" establishes a plan for implementing risk mitigation strategies once evaluated risks have been identified.

With respect to regulated industries, the NIST AI Risk Management Framework is the default governance anchor. It is directly mapped to the risk tiers defined in the EU AI Act, and regulated industries including financial services, healthcare, and critical infrastructure explicitly reference the NIST AI RMF in their AI governance guidance.

The Limitation: The NIST AI RMF provides governance structures, not technical controls. It defines what accountability structures should exist and what AI risk categories should be monitored, but it does not provide guidance on how to prevent a prompt injection attack during execution. The AI RMF does not address how to ensure agents can only invoke tools their users are authorized to access. It presumes a downstream entity will handle enforcement. Teams that consider NIST AI RMF compliance comprehensive will have detailed policies but no actual enforcement during live operations.

.webp)

OWASP LLM Top 10 and Agentic Top 10

The LLM Top 10, produced by OWASP, outlines the highest-level security risks to large language model applications, including prompt injection, insecure output handling, data poisoning, denial-of-service attacks on the model, and supply-chain compromise. The Agentic Top 10 extends these risks by identifying unique risks posed by autonomous agents, including insecure tool use, excessive privilege, violation of inter-agent trust boundaries, and uncontrolled resource consumption.

Both documents help convert attack research into engineering controls that development teams can act on directly. This is particularly valuable for teams building LLM applications, as OWASP provides the best starting point for understanding what attack surface their AI development introduces.

The Limitation: OWASP serves as a threat-awareness document, identifying what to address rather than prescribing a programmatic approach to continuous enforcement. Although prompt injection is clearly the highest AI security risk, identifying it in a document does not intercept it in production. If nothing in the operational stack can block prompt injection at runtime, OWASP awareness alone provides no security measures against it.

MITRE ATLAS

MITRE ATLAS is a catalog of actual adversarial tactics and techniques employed against AI-based solutions. Structured as a matrix similar to MITRE ATT&CK, it maps directly to SOC teams already using ATT&CK-based security tools. The techniques include model evasion, data poisoning, backdoor attacks, and model extraction, all based on published research and confirmed incident-response data rather than on estimated threat modeling.

For red teams, ATLAS provides a structured way to test against realistic adversarial behaviors of AI systems. For blue teams, ATLAS provides the vocabulary needed to write detection rules for AI-specific attack patterns and to map AI risk into existing SIEM workflows.

The Limitation: ATLAS describes how attacks occur and enables their testing, which is genuinely valuable. However, MITRE ATLAS does not provide runtime controls for production AI workloads. A security team can derive a complete ATLAS-based threat model, conduct a red team exercise against every ATLAS technique, and still find no runtime defenses protecting those attack pathways in production. ATLAS provides visibility into gaps but requires separate tooling to fill them.

Google SAIF

The Secure AI Framework developed by Google has identified six broad areas of focus:

1) Build solid security foundations across the AI ecosystem;

2) Expand detection and response capabilities to the AI pipeline;

3) Automate defensive measures to stay ahead of AI-enhanced risks;

4) Standardize platform-level controls so that they are governed by one overarching policy;

5) Adjust controls as necessary based on the context of the AI system;

6) Assess AI risk in relation to existing threat models.

SAIF provides organizations developing AI applications on Google Cloud or using Google engineering standards with an excellent understanding of effective AI security approaches from the earliest phases of model development through AI development and deployment.

The Limitation: SAIF is helpful as high-level best practices but does not prescribe specific controls or how to enforce them once an AI application is in production. SAIF provides substantial guidance on data integrity, model security, and supply chain security during the model training phase, but offers only a starting point for enforcing controls on production agents after deployment.

ISO 42001

ISO 42001 is an international management system standard for AI. It describes how to establish, implement, maintain, and improve an AI management system, using the same high-level structure as ISO 27001 (information security) and ISO 9001 (quality management). Organizations already using ISO governance frameworks can extend their existing program into AI using ISO 42001 as a common language.

The primary reason organizations adopt ISO 42001 is that it provides a certification path to meet AI governance certification requirements increasingly imposed by enterprise procurement processes. ISO 42001 is the most credible framework available for this purpose.

The Limitation: ISO 42001 focuses on management system certification, not technical controls or operational security measures. It provides evidence that an organization has developed its AI governance policy, established accountability, and implemented review processes.

However, ISO 42001 does not address agentic AI behavior, prompt injection, or runtime enforcement at the infrastructure level. Having an ISO 42001-certified management system does not mean the organization's systems filter or log individual requests flowing through them. The certification attests to the governance management system, not to data security or enforcement of live traffic.

.webp)

How to Use Multiple Frameworks Together?

These AI security frameworks were created for different purposes, and using them together compensates for each one's weaknesses.

The NIST AI RMF provides the governance model. The OWASP LLM Top 10 and Agentic Top 10 serve as a developer and engineer baseline for assessing security vulnerabilities. MITRE ATLAS supports threat modeling and red teaming against AI-specific attack techniques. ISO 42001 handles external verification and regulatory compliance. Google SAIF provides guidance on embedding security into model development and model training.

Stacking these AI security frameworks provides additional assurance that all layers of the AI system are considered. The NIST AI RMF provides guidance on what to govern. OWASP provides guidance on what to monitor. ATLAS provides guidance on how attacks are executed. ISO 42001 provides proof of regulatory requirements compliance. SAIF provides guidance on how to develop secure AI models.

However, one critical gap remains. None of these AI security frameworks address the control plane through which every AI request, agent action, and tool invocation requires policy enforcement before execution takes place.

.webp)

What AI Security Frameworks Leave Unaddressed?

All of the AI security frameworks reviewed here enforce actions at one of three levels: policy, documentation, or threat modeling. None of them directly enforces controls on live inference traffic.

Using the NIST AI RMF does not prevent an over-privileged agent from executing an action via a restricted tool; it relies on something downstream to handle enforcement correctly.

OWASP identifies prompt injection as the number one AI security vulnerability, but recognizing it in a document does not block injected instructions from reaching a production AI model.

MITRE ATLAS provides a model for how an attacker exploits an agent's capabilities, but does not prevent that exploitation in a live deployment. The red team identifies the vulnerability. A separate technical layer must address the gap.

ISO 42001 certification indicates that a management system is in place, but does not ensure that all requests processed by that system are logged or filtered in real time.

This gap is structural. The AI security frameworks were designed for planning, documentation, and testing environments. Closing the gap requires a control plane operating at the infrastructure layer, where AI systems execute in real time, and where policy is enforced before requests reach models and tools.

How TrueFoundry Operationalizes AI Security Frameworks at the Infrastructure Layer?

.webp)

TrueFoundry operates on an important premise. The infrastructure layer should enforce the control frameworks as described previously, and not leave their enforcement to the individual development teams or document them in the governance artifacts.

The TrueFoundry platform deploys in the customer's AWS / GCP / Azure account and enforces policy at the gateway layer before a model/tool receives any requests.

- Addressing OWASP insecure tool use: OAuth 2.0 identity injection ties every agent action to the scope of the authenticated user's permissions. An agent cannot invoke a tool unless the requesting user is authorized to access it, directly implementing the least-privilege principle that AI security frameworks describe but cannot enforce themselves.

- Mapping to NIST AI RMF governance standards: The per-model and per-tool access controls mechanism establishes AI risk management accountability at a system level rather than leaving it to policy documents that development teams may or may not implement consistently.

- Addressing OWASP LLM01 prompt injection: Prompt injection filtering is applied at the infrastructure level before instructions reach the AI model context. PII redaction addresses OWASP LLM06 sensitive information disclosure by intercepting personal data and sensitive data before they enter the model, satisfying the data protection requirements that AI security frameworks specify.

- Producing auditable records: Immutable audit records satisfy the requirements indicated in the NIST AI RMF, ISO 42001, and EU AI Act without requiring separate logging infrastructure for each team or application.

- Addressing data privacy and residency requirements: VPC-native deployment complies with the sovereignty and residency requirements that all AI security frameworks indicate but none of them enforce on their own.

- Closing the gap between framework guidance and organizational reality: At the infrastructure level, every policy is enforced against every execution request, rather than documented in a governance binder and applied inconsistently across teams.

TrueFoundry AI Gateway offre une latence d'environ 3 à 4 ms, gère plus de 350 RPS sur 1 processeur virtuel, évolue horizontalement facilement et est prête pour la production, tandis que LiteLM souffre d'une latence élevée, peine à dépasser un RPS modéré, ne dispose pas d'une mise à l'échelle intégrée et convient parfaitement aux charges de travail légères ou aux prototypes.

Le moyen le plus rapide de créer, de gérer et de faire évoluer votre IA

.webp)