LiteLLM Enterprise Pricing vs TrueFoundry: A Real Total Cost of Ownership Analysis

LiteLLM is the most widely used open-source LLM proxy. It solves a real problem elegantly: you get a unified OpenAI-compatible API that routes across dozens of providers, and the community version costs nothing to run. The routing logic is solid. The developer experience is good. For teams that just need a lightweight proxy and have the DevOps capacity to run it, it works.

The conversation changes when teams hit the limits of the self-managed open-source version and start evaluating LiteLLM Enterprise. Public references and vendor discussions commonly cite a Basic tier around $250/month and a Premium tier near $30,000/year. These figures reflect information publicly available as of early 2026. LiteLLM does not publish standardized pricing, final costs are typically negotiated directly with the vendor, and commercial pricing changes frequently, so verify current numbers at litellm.ai/enterprise. LiteLLM Enterprise is a self-hosted product. You provision the infrastructure, you manage the PostgreSQL database and Redis cache, you handle upgrades and security patches, and you own the on-call rotation when the proxy goes down at 2am. None of that shows up on the pricing page.

This is not a feature list comparison. It is an honest total cost of ownership analysis covering LiteLLM Enterprise pricing, infrastructure costs, engineering maintenance overhead, the MCP governance gap, and how TrueFoundry compares before you commit to a vendor.

What LiteLLM Enterprise Pricing Actually Includes

LiteLLM Enterprise is the commercial layer built on top of the open-source proxy. It adds governance features that are not available in the community version: SSO/SAML integration, granular RBAC for model access, Prometheus metrics, custom callbacks, LLM guardrails for content filtering, JWT authorization, and priority support.

Two tiers target different organizational profiles. Verify current details at litellm.ai/enterprise before making purchasing decisions.

- Basic ($250/month): Adds the enterprise management UI, SSO integration for up to a defined user threshold, Prometheus metrics, JWT authentication, LLM guardrails, and a dedicated Slack support channel. Targets smaller enterprise teams or teams moving from community to commercial licensing for compliance reasons.

- Premium (~$30,000/year, or $2,500/month): Adds priority support with defined SLA response times, dedicated account management, enhanced governance features, and access to compliance certification assistance for SOC2 and HIPAA. Targets organizations with significant token volume, multiple teams on the platform, and formal compliance requirements.

- What both tiers share: LiteLLM Enterprise is self-hosted in all tiers. The license grants the right to use the commercial feature set. The customer provisions, operates, and maintains all infrastructure. Redis, PostgreSQL, the proxy cluster, load balancers, monitoring, backups, and incident response are all the customer's responsibility. This architectural reality has significant cost implications that do not appear on the pricing page.

The Hidden LiteLLM Enterprise Costs That Do Not Appear on the Pricing Page

Enterprise buyers comparing AI gateway options frequently start with the license fee and stop there. The actual LiteLLM Enterprise cost picture only becomes clear after deployment, when the infrastructure bill arrives and the first engineering rotation hits the calendar. Three cost categories consistently exceed the licensing fee over a two-to-three-year horizon.

The figures below are based on representative enterprise deployments and internal benchmarks rather than standardized vendor pricing, and should be treated as directional estimates rather than fixed costs.

Infrastructure and Hosting Costs

LiteLLM Enterprise typically runs on a dedicated compute stack: a proxy server or cluster, often alongside a PostgreSQL database for configuration and audit logging, and a Redis instance for caching and rate limit counters. On AWS or Azure, a production-grade high-availability deployment for meaningful LLM traffic typically costs several hundred to a few thousand dollars per month in cloud infrastructure, separate from the license fee. This analysis uses $750 to $1,500 per month as its working range.

Teams that need 99.9% uptime for their LLM gateway, which is a reasonable requirement when the gateway sits on the critical path of production AI features, require multi-region redundancy and database replication that push monthly infrastructure costs toward the higher end. These costs also escalate. Cloud provider pricing changes, data transfer fees, and log management overhead add 10 to 15 percent annually to a realistic 3-year infrastructure projection.
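To make the escalation concrete, here is a minimal sketch that compounds the $750 to $1,500 monthly range this article cites for LiteLLM cloud infrastructure at 10 to 15 percent per year. The function name and the choice to compound once per year from a year-1 baseline are assumptions for illustration, not a vendor formula.

```python
def project_infra_cost(monthly_usd: float, annual_growth: float, years: int = 3) -> list[float]:
    """Return the infrastructure cost for each year, in USD.

    Assumption: year 1 is billed at the starting monthly rate, and each
    later year grows by `annual_growth` over the previous one.
    """
    return [
        round(monthly_usd * 12 * (1 + annual_growth) ** year, 2)
        for year in range(years)
    ]


# Low end: $750/month growing 10% a year. High end: $1,500/month growing 15%.
low_case = project_infra_cost(750, 0.10)
high_case = project_infra_cost(1_500, 0.15)

print(f"3-year low:  ${sum(low_case):,.0f}")
print(f"3-year high: ${sum(high_case):,.0f}")
```

Under these assumptions the three-year infrastructure total lands between roughly $29,800 and $62,500, before any license or engineering cost. Swap in your own monthly bill and growth rate to get a figure that matches your environment.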

Engineering Maintenance: The 0.25 to 0.5 FTE Cost

Self-hosted infrastructure requires ongoing engineering attention that does not show up in vendor pricing but absolutely shows up in headcount planning. Activities include applying security patches, managing version upgrades (LiteLLM releases frequently, and upgrades occasionally require configuration changes), handling gateway outages, and managing configurations as the organization adds new models or teams.

Enterprises that migrate from self-managed LiteLLM to managed platforms often find they had underestimated the engineering overhead of running the system. In practice, organizations typically allocate approximately 0.25 to 0.5 full-time-equivalent (FTE) engineering capacity to LiteLLM operations, including maintenance, scaling, and reliability work. At a fully loaded senior engineer cost of $250,000 per year, that allocation translates to an estimated $62,500 to $125,000 per year in engineering effort dedicated purely to infrastructure management, which is often more than the license fee. The burden also grows nonlinearly: an organization that starts with five teams on LiteLLM and grows to fifty will find that configuration complexity and maintenance work compound faster than team count.

The MCP Gateway Gap: A Second Procurement

As of current documentation and feature availability, LiteLLM does not provide a native MCP gateway. Organizations deploying agentic AI systems where agents invoke tools through the Model Context Protocol need a separate solution to govern MCP server access. That means a second vendor evaluation, a second security review, a second procurement process, and a separate integration project to make two governance systems produce a unified audit trail and enforce consistent identity policies.

Gartner projects that 70% of software engineering teams building multimodal applications will use AI gateways, including for agentic tool access, by 2028. Organizations that choose LiteLLM for LLM routing today are choosing a platform that will need supplementation as their agentic AI footprint grows. The cost of connecting two separate governance systems is real and is consistently underestimated in initial procurement decisions. The second tool's license, plus the ongoing engineering work of maintaining the integration, adds a recurring cost whose size depends on vendor choice, integration complexity, and compliance requirements.

A Realistic 3-Year LiteLLM Enterprise TCO Model

The following uses a representative enterprise scenario: a 200-person engineering organization routing approximately 500 million tokens per month through the gateway, operating across two cloud providers, with 20 teams on the platform and compliance requirements that mandate structured audit logging. Adjust the numbers for your actual profile.

LiteLLM Enterprise Premium: Year 1 Cost Breakdown

The fully-loaded cost comparison frequently inverts what the license-only comparison suggests. Organizations that account for engineering maintenance and MCP governance find that managed platforms are cost-competitive, and sometimes cheaper, than self-hosted alternatives at enterprise scale. The question is not whether LiteLLM Enterprise licensing is reasonably priced. It is. The question is whether the total cost of the self-hosted model, including everything the customer operates themselves, fits the organization's budget and capacity.
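As a rough illustration of how the year-1 picture adds up, the sketch below sums only the figures quoted in this article: the Premium license estimate, the $750 to $1,500 monthly infrastructure range, and the 0.25 to 0.5 FTE engineering allocation. The `CostRange` type and the `mcp_tool` parameter are hypothetical names introduced for this example. The article gives no figure for a second MCP governance tool, so it defaults to zero here and should be replaced with a real quote.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class CostRange:
    """A low/high annual cost estimate in USD."""
    low: float
    high: float

    def __add__(self, other: "CostRange") -> "CostRange":
        return CostRange(self.low + other.low, self.high + other.high)


# Figures quoted in this article, expressed per year.
LICENSE = CostRange(30_000, 30_000)                      # Premium tier estimate
INFRA = CostRange(750 * 12, 1_500 * 12)                  # $750-$1,500 per month
ENGINEERING = CostRange(0.25 * 250_000, 0.5 * 250_000)   # 0.25-0.5 FTE at $250k


def year_one_total(mcp_tool: CostRange = CostRange(0, 0)) -> CostRange:
    """Sum the year-1 components; mcp_tool is a placeholder with no sourced value."""
    return LICENSE + INFRA + ENGINEERING + mcp_tool


total = year_one_total()
print(f"Year 1: ${total.low:,.0f} to ${total.high:,.0f}")
# prints "Year 1: $101,500 to $173,000"
```

Even with the MCP line left at zero, the license is less than a third of the low-end total, which is the inversion described above.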

LiteLLM vs TrueFoundry: Feature-by-Feature Comparison

License cost and infrastructure cost tell you what you pay. Feature coverage tells you what you get. The following covers the capabilities that enterprise procurement teams consistently identify as evaluation criteria for AI gateway decisions in 2026.

Feature Comparison: LiteLLM Enterprise vs TrueFoundry

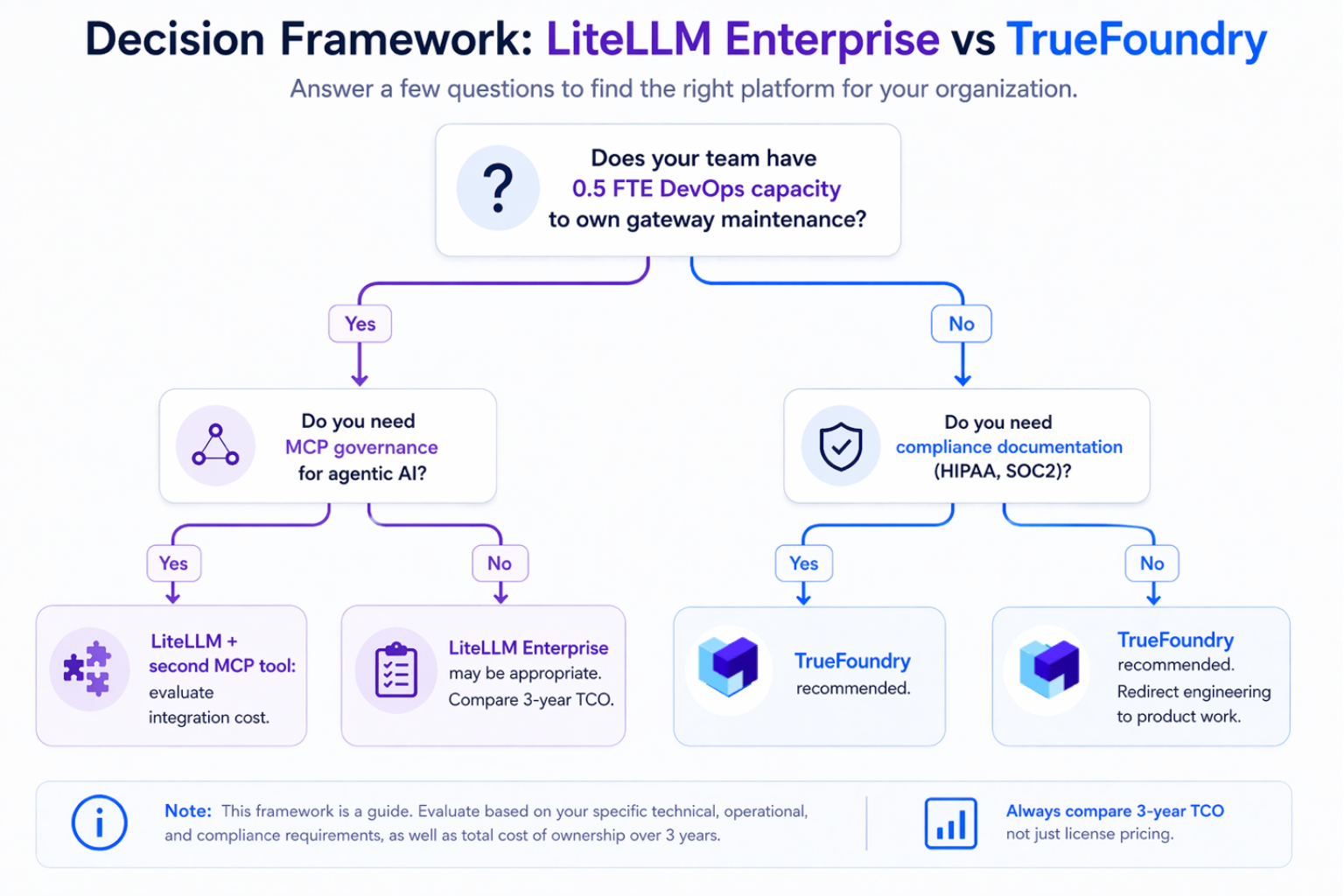

Which Platform Fits Your Enterprise

LiteLLM Enterprise Makes Sense When

- Your team has deep investment in the LiteLLM open-source ecosystem, with existing tooling and integrations built around LiteLLM's API surface. Migrating away would require meaningful re-engineering of dependent systems, and the switching cost outweighs the operational savings.

- Your engineering team has demonstrable, available capacity to own gateway maintenance. Not theoretical availability, but actual headcount that can be assigned to infrastructure management without pulling people from product work.

- Your AI roadmap does not include significant agentic AI deployments using MCP tool invocations within your planning horizon, so the MCP governance gap will not become a blocker.

TrueFoundry Makes More Sense When

- You need a single platform governing both LLM model access and MCP tool access. Running two separate governance systems and maintaining the integration between them adds cost and complexity that compounds as both systems evolve.

- Your compliance requirements (HIPAA, SOC2 Type II, or GDPR) call for audit trails, access controls, and vendor risk documentation that go beyond what a self-managed open-source proxy provides out of the box.

- You operate across multiple cloud providers and need consistent governance, unified cost attribution, and a single audit log stream across all environments rather than separate per-cloud deployments with separate management overhead.

How TrueFoundry Works as a LiteLLM Alternative for Enterprise

TrueFoundry is not a LiteLLM replacement that does the same thing with a different price tag. It is a broader platform that addresses the governance gap that emerges as enterprise AI deployments mature beyond simple LLM proxy routing into agentic AI with tool use, multi-cloud deployments, and regulated data handling.

- MCP gateway included: TrueFoundry provides OAuth2-secured, RBAC-controlled MCP governance on every tool call, with Pre Tool and Post Tool guardrails covering SQL injection, prompt injection, secrets, PII, and custom Cedar/OPA policies. This is the capability that forces LiteLLM customers to evaluate a second vendor. For enterprises running at significant agent invocation volumes, TrueFoundry's policy-enforced cost controls and caching have delivered material reductions in monthly inference spend. Contact TrueFoundry for case-specific figures relevant to your deployment scale.

- Zero infrastructure management: On the fully managed option, TrueFoundry handles all infrastructure provisioning, updates, patching, and high-availability configuration. The 0.25 to 0.5 FTE maintenance cost of self-hosted LiteLLM disappears, and engineering capacity goes to building AI products rather than managing AI infrastructure. If you prefer to keep the gateway in your own account, TrueFoundry's self-hosted Gateway Plane option runs approximately $600 per month in cloud infrastructure cost inside your own AWS, Azure, or GCP account. That figure covers compute infrastructure only, in the same way the $750 to $1,500 figure for LiteLLM covers its cloud infrastructure. TrueFoundry platform fees are separate and should be confirmed with TrueFoundry's sales team for your specific deployment profile.

- Semantic caching that cuts redundant calls by up to 40%: TrueFoundry's semantic caching layer reduces redundant LLM API calls by up to 40% by serving cached responses for semantically similar prompts. For an organization spending $100,000 per month on LLM API costs, that reduction can offset a meaningful portion of the platform cost.

- Hard enforcement on per-team token budgets: TrueFoundry enforces hard spending limits per team, service, and endpoint. When a team's monthly budget is exhausted, new requests are blocked, not just flagged. You can set a budget of $50 for an intern team and $5,000 for a production application and the gateway enforces both automatically. This prevents the overruns that commonly occur in self-managed deployments where budget controls are advisory.

- Compliance-ready deployment in your VPC: TrueFoundry deploys within the customer's AWS, Azure, or GCP account with SOC2 Type II certification available for auditors. Audit logs are written to your own S3, GCS, or Azure Blob storage in Parquet format, with configurable retention that satisfies HIPAA's six-year requirement and financial services' seven-year record-keeping obligations. Nothing leaves your perimeter to reach TrueFoundry infrastructure.
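The difference between hard and advisory budget limits in the list above is easiest to see in code. The following is a minimal sketch of hard enforcement, not TrueFoundry's implementation: `BudgetEnforcer`, `BudgetExceeded`, and the team names are all hypothetical, and a real gateway would persist spend and reset it each billing period.

```python
from dataclasses import dataclass, field


class BudgetExceeded(Exception):
    """Raised when a request would push a team past its hard limit."""


@dataclass
class BudgetEnforcer:
    limits_usd: dict[str, float]
    spent_usd: dict[str, float] = field(default_factory=dict)

    def authorize(self, team: str, estimated_cost_usd: float) -> None:
        """Record the spend if it fits the budget; otherwise block the request."""
        limit = self.limits_usd[team]
        spent = self.spent_usd.get(team, 0.0)
        if spent + estimated_cost_usd > limit:
            # Hard enforcement: the request is rejected, not merely flagged.
            raise BudgetExceeded(f"{team}: ${spent:.2f} spent of ${limit:.2f} limit")
        self.spent_usd[team] = spent + estimated_cost_usd


enforcer = BudgetEnforcer({"interns": 50.0, "production-app": 5_000.0})
enforcer.authorize("interns", 49.0)       # fits within the $50 budget
try:
    enforcer.authorize("interns", 2.0)    # would reach $51, so it is blocked
except BudgetExceeded as exc:
    print("blocked:", exc)
```

An advisory control would log the overrun and let the second request through. The hard version raises before any spend is recorded, so the team's total never passes its limit.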

TrueFoundry AI Gateway delivers latency of roughly 3 to 4 ms, handles more than 350 RPS on 1 vCPU, scales horizontally with ease, and is production-ready. LiteLLM, by contrast, shows higher latency, struggles beyond moderate RPS, lacks built-in scaling, and is best suited to light workloads or prototypes.

Frequently Asked Questions

What is the difference between LiteLLM's Basic and Premium enterprise tiers, and which features are exclusive to Premium?

LiteLLM Enterprise Basic, at approximately $250 per month, adds the enterprise management UI, SSO/SAML integration, Prometheus metrics, JWT authentication, LLM guardrails for content filtering, and a dedicated Slack support channel to the open-source feature set. Enterprise Premium, at approximately $30,000 per year, adds priority support with defined SLA response times, dedicated account management, custom feature development, and assistance with compliance certifications for SOC2 and HIPAA.

The practical distinction is support and compliance assistance. Basic gives you the governance features. Premium gives you a vendor partner for enterprise deployment. Verify the current feature breakdown at litellm.ai/enterprise before purchasing, as feature availability changes with releases.

Does LiteLLM Enterprise include infrastructure hosting, or does the customer need to provision and manage their own servers?

LiteLLM Enterprise is self-hosted in all tiers. The license covers the software and support. The customer provisions and operates all infrastructure: a proxy server or cluster, a PostgreSQL database for configuration and audit logging, and a Redis instance for caching and rate limit counters. High-availability deployments require load balancers and database replication on top of that. LiteLLM does offer cloud and self-managed deployment options, but the operational responsibility sits with the customer regardless of which deployment model they choose.

How much engineering time does a typical enterprise spend maintaining a self-hosted LiteLLM deployment?

Enterprises that have migrated from self-managed LiteLLM to managed platforms consistently report 0.25 to 0.5 full-time-equivalent of ongoing engineering capacity consumed by maintenance. Initial deployment takes two to four weeks of senior DevOps time to set up Kubernetes clusters, configure load balancers, establish CI/CD pipelines, and integrate monitoring systems. Ongoing maintenance adds 10 to 20 hours per month for security patches, dependency updates, scaling adjustments, and infrastructure troubleshooting. Incident response for gateway outages falls entirely on the customer's on-call team.

At a fully-loaded senior engineer cost of $250,000 per year, the ongoing maintenance overhead represents $62,500 to $125,000 in annual engineering spend dedicated purely to infrastructure management. This figure grows as the number of teams and use cases on the gateway increases.

Does TrueFoundry offer a migration path for teams already running LiteLLM in production?

Yes. TrueFoundry's AI Gateway exposes an OpenAI-compatible API, so applications built against LiteLLM's unified API can point at TrueFoundry's gateway endpoint without rewriting application code. The migration involves updating endpoint URLs, moving provider credentials into TrueFoundry's credential vault, configuring RBAC and team budgets in the TrueFoundry management interface, and setting up SSO integration with your existing identity provider.
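Because both gateways speak the OpenAI-style chat completions format, the application-side change is mostly a matter of configuration. The sketch below builds the same request against two base URLs using only the standard library and sends nothing. The hostnames, API keys, and the `build_chat_request` helper are hypothetical placeholders, and it assumes the model identifier is spelled the same way on both gateways, which you should confirm for your own setup.

```python
import json
import urllib.request


def build_chat_request(base_url: str, api_key: str, model: str, prompt: str) -> urllib.request.Request:
    """Build (but do not send) an OpenAI-style chat completion request."""
    body = {"model": model, "messages": [{"role": "user", "content": prompt}]}
    return urllib.request.Request(
        url=f"{base_url.rstrip('/')}/chat/completions",
        data=json.dumps(body).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


# Hypothetical endpoints; substitute the URLs from your own deployments.
LITELLM_BASE = "https://litellm.internal.example.com/v1"
NEW_GATEWAY_BASE = "https://gateway.example.com/v1"

before = build_chat_request(LITELLM_BASE, "sk-old-key", "gpt-4o", "ping")
after = build_chat_request(NEW_GATEWAY_BASE, "sk-new-key", "gpt-4o", "ping")

# The payload is byte-for-byte identical; only the URL and credential differ.
print(before.full_url)
print(after.full_url)
```

Keeping the base URL and key in configuration rather than in code also makes the parallel-run period simpler, since traffic can be shifted between gateways without a redeploy.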

TrueFoundry's solutions team provides migration support and can produce a personalized TCO comparison for teams evaluating the switch. The typical migration timeline for a mid-sized engineering organization is two to four weeks for technical migration plus a parallel-run period to validate behavior before decommissioning the LiteLLM deployment.

How does TrueFoundry's semantic caching compare to LiteLLM's caching implementation in terms of cost reduction?

TrueFoundry's semantic caching matches prompts based on semantic similarity rather than exact string matching, serving cached responses for prompts that are functionally equivalent even when phrased differently. TrueFoundry's documented reduction rate is up to 40% of redundant LLM API calls. LiteLLM's default caching uses exact match. Its documentation also lists semantic cache backends, but LiteLLM does not publish independent benchmarks for semantic similarity reduction rates. Verify current LiteLLM caching capabilities at docs.litellm.ai before comparing.

For organizations with high repetition in query patterns, such as customer support, documentation search, or internal Q&A tools, the semantic caching difference can be material. At $100,000 per month in LLM API spend, a reduction at the 40% upper bound would be worth up to $40,000 per month in direct savings, assuming spend falls in proportion to the calls avoided. Even a fraction of that offsets a significant portion of managed gateway costs.
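The gap between exact-match and similarity-based lookup can be sketched in a few lines. This is a toy illustration, not either vendor's implementation: `embed` uses bag-of-words counts as a stand-in for a real embedding model, and the 0.8 threshold and the `SemanticCache` class are assumptions chosen for the example.

```python
import math
import re
from collections import Counter
from typing import Optional


def embed(text: str) -> Counter:
    """Toy stand-in for an embedding model: lowercase bag-of-words counts."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


class SemanticCache:
    def __init__(self, threshold: float = 0.8):
        self.threshold = threshold
        self.entries: list[tuple[Counter, str]] = []

    def put(self, prompt: str, response: str) -> None:
        self.entries.append((embed(prompt), response))

    def get(self, prompt: str) -> Optional[str]:
        """Return the closest cached response if it clears the threshold."""
        query = embed(prompt)
        best = max(self.entries, key=lambda e: cosine(query, e[0]), default=None)
        if best is not None and cosine(query, best[0]) >= self.threshold:
            return best[1]
        return None


cache = SemanticCache()
cache.put("How do I reset my password?", "Use the 'Forgot password' link.")

# An exact-match cache keyed on the raw string would miss this rephrasing.
print(cache.get("How do I reset my password please"))
# An unrelated prompt falls below the threshold and is a miss.
print(cache.get("What is the refund policy?"))
```

The threshold is the main tuning knob. Set it too low and users receive answers to questions they did not ask. Set it too high and the cache behaves like exact match, so the achievable hit rate depends heavily on how repetitive your traffic is.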

What does TrueFoundry's pricing model look like for an organization with 50 teams and 1 billion tokens per month?

TrueFoundry's pricing is based on usage and deployment model rather than a fixed published rate for this scale. The fully managed SaaS option eliminates infrastructure costs entirely. The self-hosted gateway plane option runs approximately $600 per month in infrastructure cost for the gateway deployment itself. The full self-hosted control plane plus gateway option runs approximately $800 to $1,000 per month.

For a specific organization with 50 teams and 1 billion tokens per month, TrueFoundry's solutions team will produce a personalized pricing and TCO model that accounts for token volume, team count, compliance requirements, and deployment model. Book a 20-minute call to get the actual numbers for your scenario rather than working from generic estimates.
