Best Prompt Engineering Tools in 2026: Everything You Need to Know

Prompt engineering is the practice of refining the inputs given to LLMs to get better outputs.

Prompt engineering is like learning to talk to AI effectively. It is about choosing the right words when asking an AI for something, whether that is writing text, writing code, or creating images.

Dedicated tools help us get better at this, so that the AI understands us correctly and does what we want more accurately.

The goal is smoother, more effective communication between humans and AI. In this blog, let's go through the best prompt engineering tools available in 2026.

What Is a Prompt Engineering Tool?

A prompt engineering tool is a software platform, application, or framework that helps users create, test, refine, and organize the instructions (prompts) they give to large language models (LLMs) or generative AI systems.

These tools are key to improving AI accuracy, keeping output consistent, minimizing errors and hallucinations, and streamlining how people interact with AI. They go beyond simple chat interfaces and enable structured, repeatable workflows.

The best prompt engineering tools bridge human ideas and machine responses, helping users turn vague or simple requests into precise, actionable prompts that produce more reliable results.

The Best Prompt Engineering Tools

Here is a quick overview of the best prompt engineering tools in 2026:

LLM Gateway (TrueFoundry)

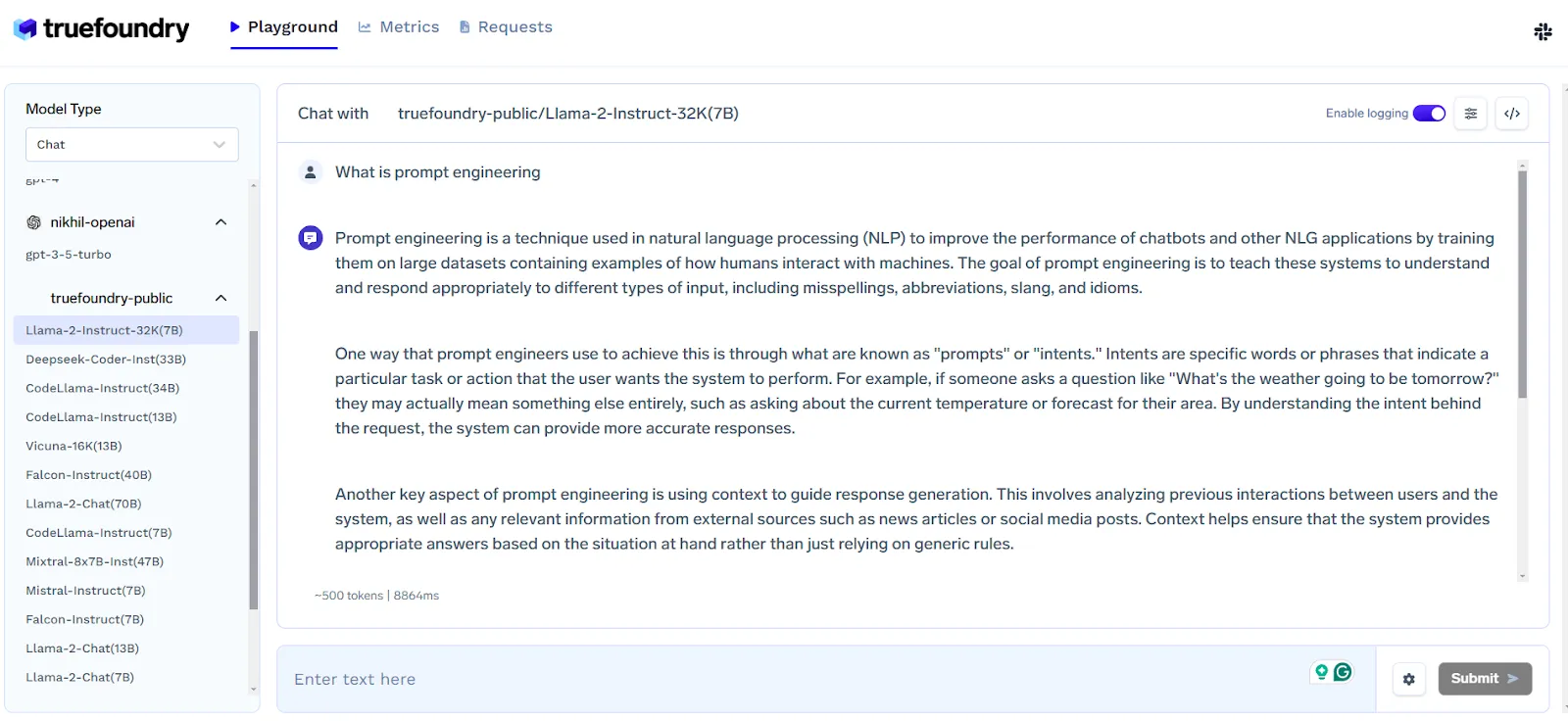

The TrueFoundry LLM Playground is a platform that simplifies experimenting with open-source large language models (LLMs). It offers a simple way to test different LLMs through an API, without complex setups that involve GPUs or model loading.

In this playground, you can compare models to find the best fit before committing to a hosting solution.

Interacting with the LLM Gateway

Here you can easily choose between different LLMs for inference, including OpenAI models.

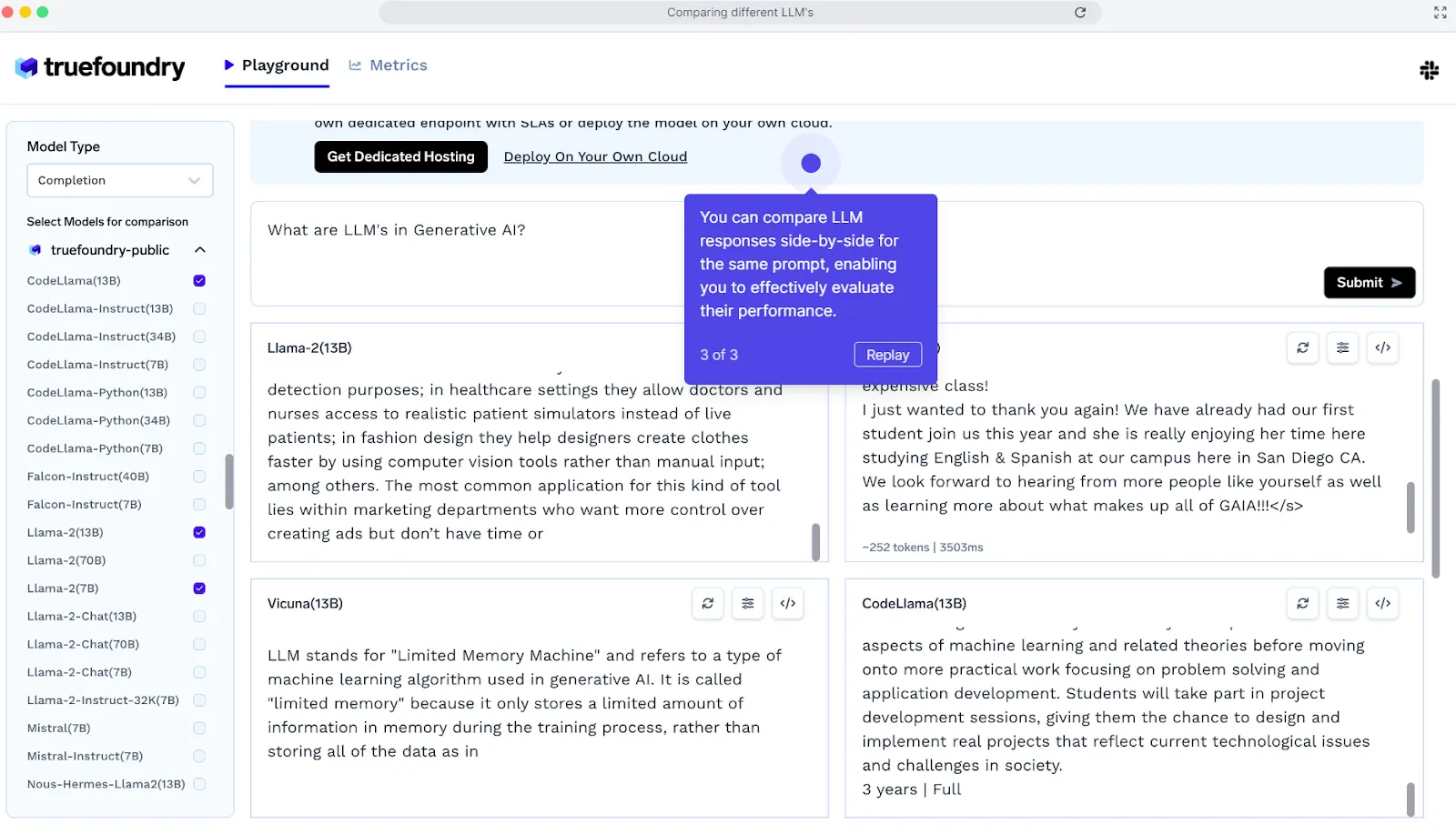

Compare different models with the LLM Gateway:

Here you can compare up to 4 models on the same prompt and decide which one performs best for it.

Key features:

The LLM Gateway provides a single API for calling any LLM provider, including OpenAI, Anthropic, Bedrock, your own self-hosted models, and open-source LLMs. It offers the following features:

- Unified API for accessing LLMs from multiple providers, including your own self-hosted models.

- Centralized key management

- Authentication and attribution per user and per product.

- Cost attribution and control

- Support for fallbacks, retries, and rate limiting

- Guardrails integration

- Caching and semantic caching

- Support for vision and multimodal models

- Evaluations on your own data
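
To make the fallback and retry feature from the list above more concrete, here is a minimal sketch in plain Python. It is not TrueFoundry's API: the `call_with_fallback` helper and the two stand-in providers are hypothetical names invented for illustration, and a real gateway would also handle rate limits, timeouts, and error classification.

```python
import time
from typing import Callable, Sequence

# Hypothetical provider signature: takes a prompt, returns text, raises on failure.
Provider = Callable[[str], str]


def call_with_fallback(
    prompt: str,
    providers: Sequence[tuple[str, Provider]],
    max_retries: int = 2,
    backoff_seconds: float = 0.0,
) -> tuple[str, str]:
    """Try each provider in order, retrying failures before falling back.

    Returns (provider_name, response_text). Raises RuntimeError if all fail.
    """
    errors: list[str] = []
    for name, provider in providers:
        for attempt in range(max_retries + 1):
            try:
                return name, provider(prompt)
            except Exception as exc:  # a real gateway would distinguish error types
                errors.append(f"{name} attempt {attempt + 1}: {exc}")
                # Exponential backoff; the default of 0.0 disables the wait.
                time.sleep(backoff_seconds * (2 ** attempt))
    raise RuntimeError("all providers failed: " + "; ".join(errors))


# Stand-in providers for illustration only (no network calls).
def flaky_primary(prompt: str) -> str:
    raise TimeoutError("primary timed out")


def stable_secondary(prompt: str) -> str:
    return f"echo: {prompt}"


if __name__ == "__main__":
    used, text = call_with_fallback(
        "Summarize this ticket.",
        [("primary", flaky_primary), ("secondary", stable_secondary)],
    )
    print(used, "->", text)
```

Ordering the provider list from preferred to least preferred keeps the routing policy in one place, which is the main appeal of putting this logic behind a single API.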

While TrueFoundry offers excellent prompt engineering tools, its capabilities go well beyond that. They include seamless model training, effortless deployment, cost optimization, and a unified management interface for cloud resources.

Pricing:

TrueFoundry offers a free trial for developers and builders experimenting with AI workflows. TrueFoundry's paid plan starts at $499/month.

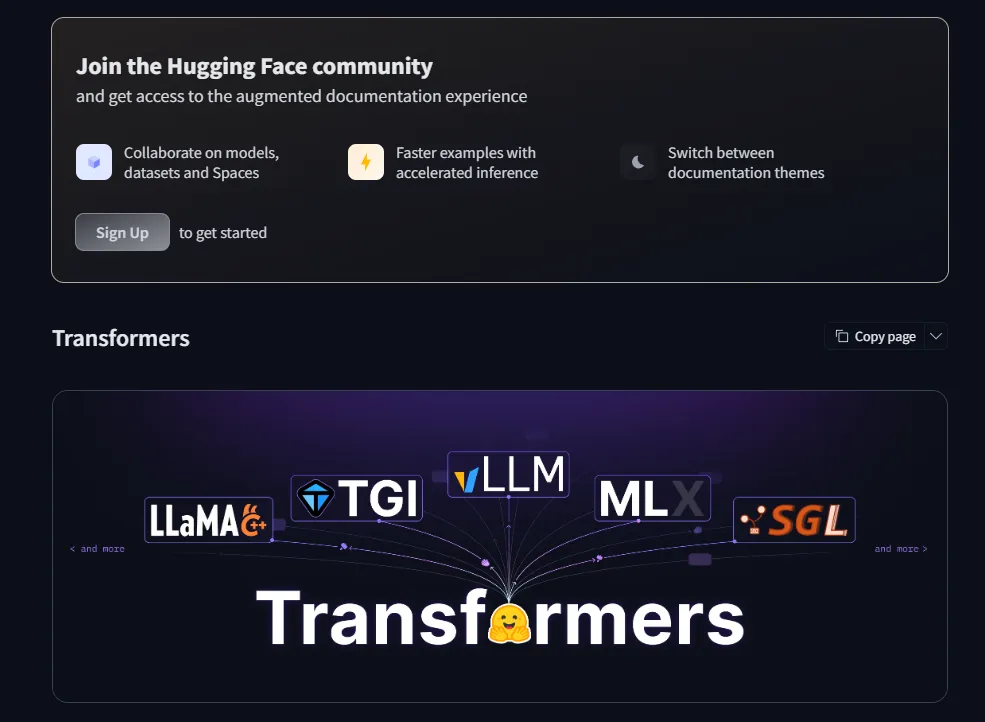

Hugging Face Transformers

Hugging Face Transformers provides easy-to-use APIs and utilities for accessing and training state-of-the-art pretrained NLP models. It supports tasks such as translation, entity recognition, and text classification while encouraging open-source collaboration.

Pros:

- User-friendly, with accessible APIs for NLP tasks.

- Supports multiple frameworks: PyTorch, TensorFlow, and JAX.

- Flexible model training and cross-framework inference.

- Open source, which encourages community collaboration and innovation.

Cons:

- No standalone dashboard or GUI.

- Can be overwhelming for beginners because of its extensive configuration options.

Best for

- NLP practitioners and researchers who focus on prompt engineering.

- Developers who need flexible, pretrained models for text classification, translation, or entity recognition.

- Teams that integrate models across different ML frameworks.

Pricing

- Hugging Face Transformers is open source and free. Some premium features and hosted inference APIs may require paid plans through the Hugging Face Hub.

AllenNLP

AllenNLP is an open-source NLP library designed to simplify a wide range of natural language processing tasks. It is somewhat more complex than AdaptNLP, but it offers an extensive collection of tools and prebuilt components, which makes it a good fit for researchers and developers working with advanced NLP models.

Pros:

- High-level configuration for easily setting up complex NLP tasks.

- Modular abstractions for building and experimenting with state-of-the-art models.

- Open source and community-driven, with active support and contributions.

Cons:

- Steeper learning curve than simpler NLP libraries.

- Full use requires familiarity with the Python library and with NLP concepts.

Best for

- Researchers and developers working on advanced NLP tasks.

- Multi-task learning, transformer-based models, and text classification projects.

- Experimenting with modular NLP architectures and custom pipelines.

Pricing

- AllenNLP is completely free and open source, and is supported by the community.

AdaptNLP

AdaptNLP is an easy-to-use NLP library that simplifies working with advanced language models for beginners and experts alike. It is built on fastai and Hugging Face Transformers and offers fast, flexible, and efficient solutions for training and fine-tuning models.

Pros:

- Combines Transformers and Flair for versatile NLP capabilities.

- Simplifies complex tasks such as text classification, entity extraction, and question answering.

- The user-friendly API enables rapid experimentation and training.

- Supports modern training techniques with fast, efficient inference.

Cons:

- Primarily Python-focused, with limited support for non-Python environments.

- Advanced customization may require familiarity with Hugging Face or fastai.

Best for

- Beginners looking for easy-to-use NLP pipelines.

- ML engineers who need efficient fine-tuning of transformer models.

- Rapid prototyping of NLP tasks such as classification, entity recognition, and POS tagging.

Pricing

- AdaptNLP is open source and free, and relies on community-driven development.

LMScorer

LMScorer is an open-source tool that offers a simple programmatic and command-line interface for scoring sentences with various ML language models. It helps evaluate and refine prompts to improve AI interactions and make the results more effective.

Pros:

- Open source and free, with code that can be inspected and modified.

- Simple programming interface and CLI for easy integration.

- Scores sentences with ML language models to assess their quality.

- Helps improve prompts for better AI performance.

Cons:

- Limited to scoring; it is not a full NLP or model training framework.

- Effective use requires basic programming skills.

Best for

- AI developers and researchers refining prompt quality.

- Experimenting with language models to get natural, understandable output.

- Quickly evaluating multiple prompt variants for optimization.

Pricing

- LMScorer is completely free and open source.

Promptfoo

Promptfoo is an open-source command-line tool and library designed to streamline testing and development with large language models (LLMs). It lets developers test prompts systematically, compare outputs, and evaluate results automatically, replacing trial and error with a test-driven approach.

Pros:

- Open source and free, with CLI and library integration.

- Supports concurrent tests for faster evaluation of LLMs.

- Works with multiple LLM APIs, such as OpenAI and Google.

- Enables systematic, test-driven development for high-quality model output.

Cons:

- Aimed primarily at developers; less beginner-friendly.

- Requires familiarity with command-line operations and LLM APIs.

Best for

- LLM developers who want to test and refine prompts efficiently.

- QA engineers and researchers who want a systematic evaluation of model responses.

- Teams that want to implement test-driven workflows for AI output quality.

Pricing

- Promptfoo is completely free and open source.
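
The test-driven approach described above can be illustrated without any particular tool. The sketch below is plain Python, not Promptfoo's configuration format or API: `PromptCase`, `run_suite`, `pass_rate`, and the `fake_model` stand-in are hypothetical names, and the "model" is a deterministic function so the example runs offline.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class PromptCase:
    """One test case: template variables plus a substring the output must contain."""
    variables: dict[str, str]
    must_contain: str


def fake_model(prompt: str) -> str:
    """Hypothetical stand-in for an LLM call: uppercases the last prompt line."""
    return prompt.splitlines()[-1].upper()


def run_suite(
    template: str, cases: list[PromptCase], model: Callable[[str], str]
) -> list[bool]:
    """Render the template for each case, call the model, and check the assertion."""
    results = []
    for case in cases:
        prompt = template.format(**case.variables)
        results.append(case.must_contain in model(prompt))
    return results


def pass_rate(results: list[bool]) -> float:
    return sum(results) / len(results) if results else 0.0


if __name__ == "__main__":
    cases = [
        PromptCase({"city": "paris"}, "PARIS"),
        PromptCase({"city": "oslo"}, "OSLO"),
    ]
    template_a = "Repeat the city name.\n{city}"
    template_b = "Repeat the city name.\n{city}\nThanks!"
    for name, template in [("A", template_a), ("B", template_b)]:
        print(name, pass_rate(run_suite(template, cases, fake_model)))
```

Swapping `fake_model` for a real API call turns the same suite into a regression test that can run whenever a prompt changes.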

PromptHub

PromptHub is a closed-source platform built for testing, evaluating, and optimizing prompts across multiple language models. It lets users assess prompt effectiveness, inspect model responses, and analyze the effect of different hyperparameter settings.

Pros:

- User-friendly interface with API access and optional Docker deployment.

- Offers an extensive library of ready-to-use prompts for NLP and chatbot development.

- Supports prompt customization for specific models and tasks.

- Facilitates team collaboration, version control, and continuous improvement.

Cons:

- Lacks full transparency.

- Advanced features may require a subscription or license.

- Less flexible for experimentation than open-source alternatives.

Best for

- Teams and developers who test prompts on multiple LLMs.

- NLP practitioners building chatbots or AI content generation pipelines.

- Organizations that need collaboration and version control for prompt management.

Pricing

- PromptHub is a paid platform with subscription plans starting at $9/month.

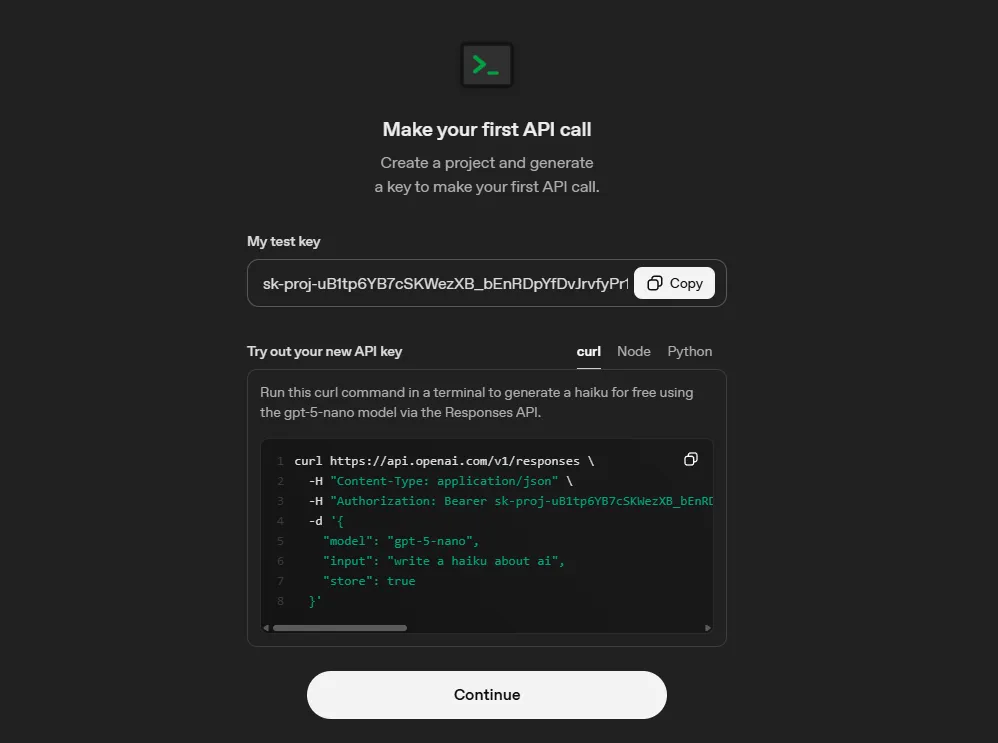

OpenAI Playground

OpenAI Playground is a closed-source web tool for experimenting with OpenAI's advanced AI models, including GPT-4. It lets users test prompts, compare strategies, and tune language model behavior in an interactive, user-friendly environment.

Pros:

- Intuitive, browser-based interface, ideal for quick testing and prompt engineering.

- Supports multiple OpenAI models and adjustable parameters for experiments.

- Provides instant feedback for rapid iteration.

- Extensive educational resources, tutorials, and API documentation are available.

Cons:

- Limited to OpenAI models.

- Advanced use may require an OpenAI subscription or API credits.

- Less suitable for non-OpenAI models or custom LLM deployments.

Best for

- Developers and researchers experimenting with GPT-4 or other OpenAI models.

- Prompt engineers exploring zero-shot, few-shot, or fine-tuning strategies.

- Educators and students learning to work with advanced AI language models.

Pricing

- Using OpenAI Playground generally requires API credits or a subscription, depending on usage limits and model choice.

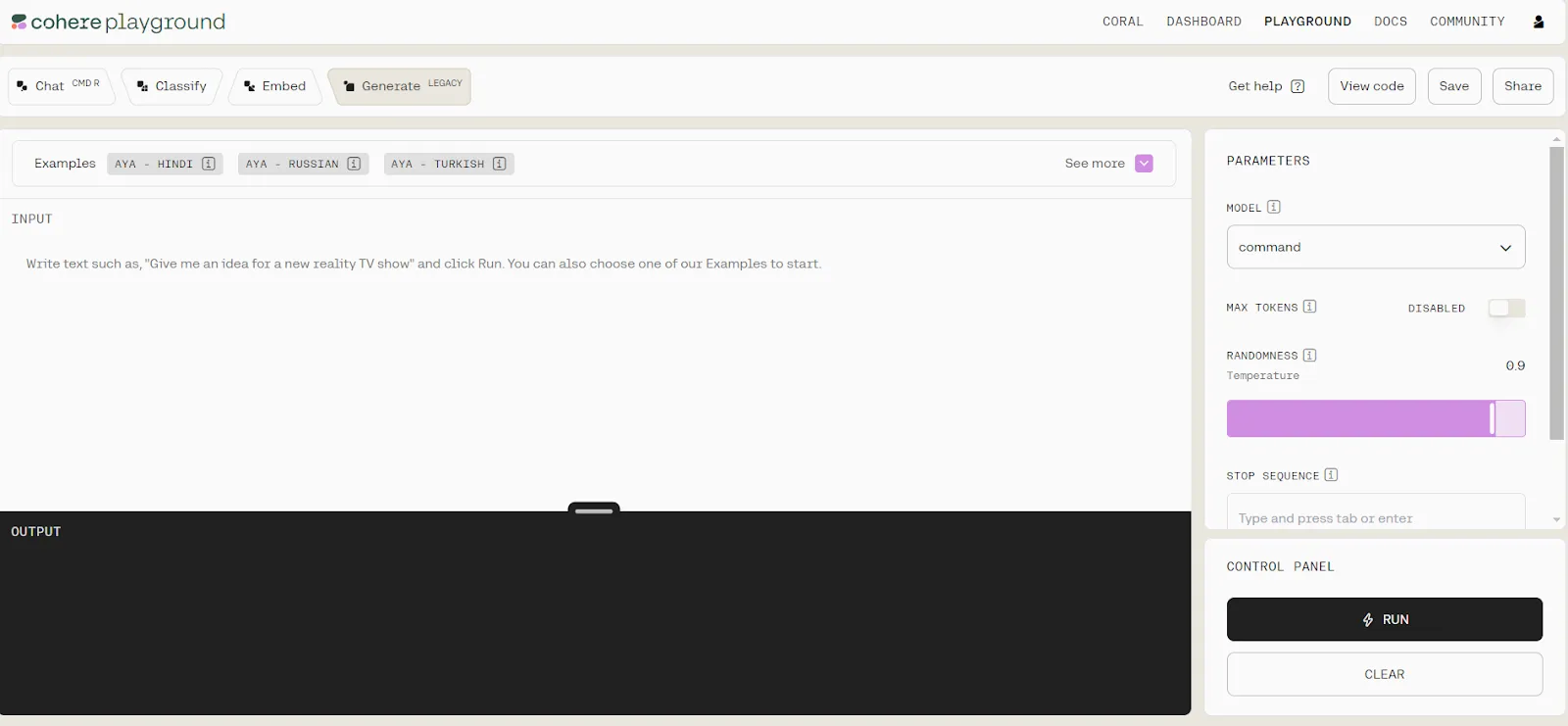

Cohere Playground

Cohere Playground is an intuitive online platform that lets users work with large AI language models without writing code. It suits both beginners and experienced users and supports text generation, embedding analysis, classifier creation, and simple chat-based interactions.

Pros:

- No coding required; beginner-friendly interface.

- Generate natural-language text and build text classifiers easily.

- Visualize embeddings in a 2D space for semantic analysis.

- Choose the model size that fits your needs.

Cons:

- Limited model selection and features compared with other platforms.

- Different prompts or models cannot be compared side by side.

- Training your own models requires special permissions.

Best for

- Beginners exploring large language models.

- Users who need to experiment quickly with text generation or classification.

- Semantic analysis with embeddings without any technical setup.

Pricing

- Cohere Playground may require an account or subscription for extended use and model access.

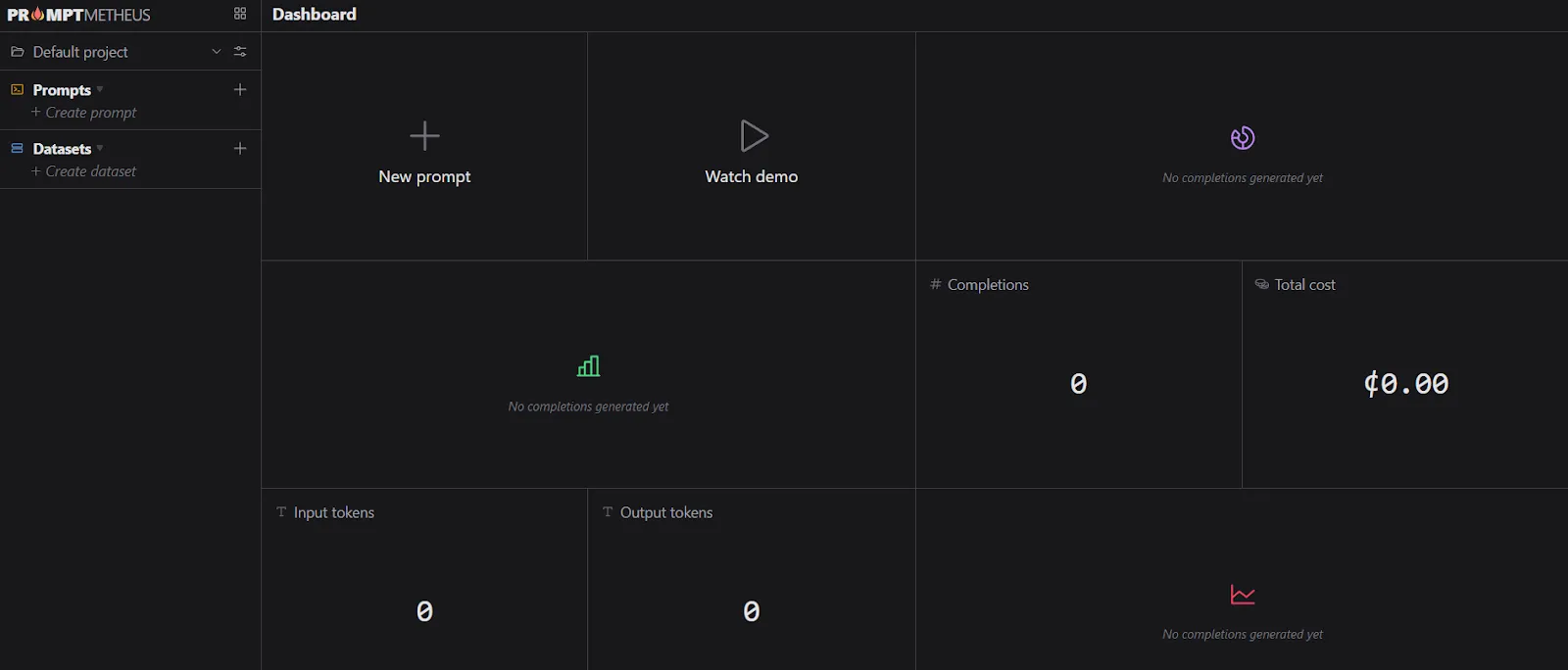

PromptMetheus

PromptMetheus is a specialized prompt engineering IDE built for creating, testing, and deploying prompts for large language models (LLMs). It provides a structured environment with analytics, collaboration tools, and real-time deployment features, which makes prompt engineering more efficient and manageable.

Pros:

- Composable prompts built from text and data blocks.

- Full history tracking and traceability for prompt design.

- Cost estimation for LLM API usage before execution.

- Access to performance statistics and analytics.

- Real-time collaboration with team members.

Cons:

- A subscription may be required.

- More complex than general-purpose prompt tools, with a learning curve for beginners.

- Limited to the LLMs and integrations that the IDE supports.

Best for

- AI developers who focus on structured prompt engineering.

- Teams collaborating on large-scale prompt development.

- Users who need analytics and cost estimates before deploying prompts.

Pricing

- Pricing depends on the subscription plan or enterprise license and starts at $29/month.

How Do I Choose the Right Prompt Engineering Tool?

Choosing the right prompt engineering tool depends on your goals, your technical expertise, and the AI models you want to use. Here is a step-by-step guide to help you decide.

1. Define your use case

Start by clearly identifying what you want to achieve with prompt engineering. If your focus is content creation, such as writing articles, marketing copy, or blog posts, you need a tool that allows style customization and produces high-quality text.

For developers, tools that integrate directly into IDEs and help with code generation or debugging are ideal. If your goal is research or data analysis, look for tools that can summarize documents, process structured data, or produce detailed reports.

2. Evaluate model compatibility

Not every tool supports every AI model. Check which models the tool is compatible with, such as GPT-4, Claude, or Llama, and whether you can switch between models depending on the complexity of your task.

A tool that supports multiple models gives you flexibility and can improve output quality for specialized use cases.

3. Assess prompt management features

A good prompt engineering tool should make it easy to manage and refine prompts. Features such as a template library provide prebuilt prompts that save time and keep results consistent. Version control lets you track changes and improve prompts over time.

If you work in a team, collaboration features such as shared repositories let multiple users contribute while maintaining a single prompt workflow.
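
As a rough illustration of what prompt version control involves, the sketch below keeps an append-only history per prompt name. `PromptRegistry` and its methods are hypothetical and held in memory only; a real tool would persist versions and record authors and timestamps.

```python
import hashlib
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class PromptRegistry:
    """In-memory, append-only store of prompt versions (illustrative only)."""
    _versions: dict[str, list[str]] = field(default_factory=dict)

    def save(self, name: str, text: str) -> int:
        """Store a new version unless the text is unchanged; return the version number."""
        history = self._versions.setdefault(name, [])
        if not history or history[-1] != text:
            history.append(text)
        return len(history)

    def get(self, name: str, version: Optional[int] = None) -> str:
        """Return the latest version, or a specific 1-based version."""
        history = self._versions[name]
        if version is None:
            return history[-1]
        if not 1 <= version <= len(history):
            raise IndexError(f"{name} has no version {version}")
        return history[version - 1]

    def fingerprint(self, name: str, version: Optional[int] = None) -> str:
        """Short content hash, useful for logging which prompt produced an output."""
        return hashlib.sha256(self.get(name, version).encode("utf-8")).hexdigest()[:12]


if __name__ == "__main__":
    registry = PromptRegistry()
    registry.save("summarize", "Summarize the text in one sentence.")
    registry.save("summarize", "Summarize the text in one sentence for a manager.")
    print(registry.get("summarize", 1))
    print(registry.fingerprint("summarize"))
```

Logging the fingerprint next to each model output makes it possible to trace a result back to the exact prompt text that produced it.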

4. Check the user interface and usability

The user interface can make or break your experience. Some tools offer visual drag-and-drop interfaces, while others are purely text-based. Choose one that matches your comfort level and workflow.

An intuitive interface shortens the learning curve, so you can focus on writing prompts instead of navigating complex menus.

5. Consider integrations

Think about how well the tool fits into your existing workflow. Check whether it supports APIs or plugins that connect to platforms such as Slack, Notion, or Google Workspace. Export options are also useful, because they let you download prompts, outputs, and logs for further analysis or reporting.

6. Evaluate pricing and scalability

Pricing structures vary widely. Free tiers are great for experimenting, but they often limit API calls, templates, or advanced features.

Paid plans usually offer higher usage limits, better support, and collaboration features. Make sure the tool can scale with your needs so you do not have to switch platforms later.

7. Test flexibility and output quality

Before committing to a tool, run sample prompts to see how well it interprets instructions. Check whether it lets you adjust output length, tone, or persona. Tools that offer fine-grained control over the output can noticeably improve productivity and result quality.

8. Evaluate community and support

Finally, look at the support ecosystem. Thorough documentation and tutorials help you get started quickly. Active user communities share prompt libraries and tips. Reliable customer support is essential for resolving issues and scaling your usage efficiently.

Why Do Prompt Engineering Tools Matter in 2026?

Prompt engineering tools have become indispensable in 2026 because AI is deeply integrated into almost every aspect of work and daily life. With increasingly capable models such as GPT-4.5, Claude 3, and Llama 3, producing high-quality, reliable results depends heavily on how prompts are written.

A well-designed prompt can substantially improve accuracy, relevance, and creativity, while a poorly structured one can lead to confusing or low-quality results.

These tools also streamline workflows by offering features such as prompt templates, version control, and team collaboration. They cut down on trial and error and help users, from content creators to developers and data analysts, reach their goals faster and more efficiently.

In addition, as AI adoption grows in business and research, organizations rely on prompt engineering tools to maintain the consistency, compliance, and reproducibility of AI output.

In short, prompt engineering tools bridge the gap between raw AI capabilities and practical, actionable results, which makes them essential for anyone using AI in 2026.

Evaluating a Prompt Engineering Tool

When evaluating a prompt engineering tool, a set of simple guiding questions can help you judge its usefulness. Keep in mind that these questions are broad and not all of them apply to every tool.

Usability

- Is the interface simple, clear, and easy to use?

- Can I learn to use this tool quickly?

- Does it provide helpful guides or documentation?

- Are there clear error messages and suggested fixes when something goes wrong?

Effectiveness

- Is the tool fast and responsive during use?

- Does it deliver accurate and correct results?

- Does it work reliably over time and across different use cases?

Integration

- Does it work well with the tools and systems I already use?

- Are strong, flexible APIs available?

- Is it easy to import, export, or move data?

Scalability

- Does the tool maintain performance under large or complex workloads?

- What compute resources does it need?

- Can it handle increased demand without breaking down?

Customization options

- Can I configure the tool to fit my workflow?

- Does it allow personalization for me or my team?

- Can I adapt results or behavior to specific needs?

The Future of Prompt Engineering Tools

The future of prompt engineering tools, in 2026 and beyond, is shaping up to be sophisticated and user-centric. Key trends and developments include:

- Intelligent prompt optimization: Tools will suggest, refine, and even automatically generate prompts based on the task, the user's style, and past performance.

- Real-time feedback: Users will get immediate guidance on prompt effectiveness, which helps improve prompt quality without trial and error.

- Automated error correction: AI-powered tools will detect and fix poorly structured prompts or ambiguous instructions.

- Improved collaboration: Team-wide prompt repositories, version tracking, and seamless multi-user access will improve workflow consistency.

- Multi-model orchestration: Users will be able to use different AI models within a single workflow and pick the best model for each task.

- Integration with productivity platforms: Direct integration with tools such as project management apps, communication platforms, and data systems will streamline workflows.

- Bias detection and safety checks: Built-in monitoring will help keep AI output ethical and safe, and in line with regulatory or organizational standards.

- Centralized AI workflow management: Prompt engineering tools will evolve into hubs for managing, optimizing, and governing AI-driven tasks across teams and projects.

Conclusion

Prompt engineering tools are essential in 2026 for turning human intent into accurate, creative, and reliable AI output. They streamline workflows through templates, collaboration, multi-model support, and real-time feedback. Choosing the right tool carefully, with usability, compatibility, and scalability in mind, leads to better results and efficiency.

Platforms like TrueFoundry go a step further by offering a unified playground for multiple LLMs, centralized version control, cost tracking, and team collaboration, which simplifies testing, optimizing, and deploying prompts at scale.

Start optimizing your AI workflows today: explore TrueFoundry and see how streamlined prompt engineering can raise your productivity and output quality.

Frequently Asked Questions

What are some commonly used prompt engineering tools?

Commonly used prompt engineering tools include open-source libraries such as Hugging Face Transformers, and Promptfoo for automated testing. Closed-source platforms such as OpenAI Playground and PromptHub are popular for iterative experimentation. These tools standardize how developers refine model inputs to achieve higher output accuracy.

What is the best prompt engineering tool?

For production-level management, TrueFoundry is the best prompt engineering tool because it combines a multi-model playground with centralized versioning and cost tracking. It unifies prompts across all providers, so teams can evaluate and deploy optimized inputs with built-in governance.

What are the three types of prompt engineering?

The three main types are zero-shot, few-shot, and chain-of-thought prompting. Zero-shot prompting gives the model a task without any examples, while few-shot prompting includes specific demonstrations to guide the model. Chain-of-thought prompting encourages the LLM to work through intermediate reasoning steps, which is valuable for solving complicated logic or coding problems.
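
The three prompting styles differ only in how the prompt text is assembled, which a short sketch can show. The function names and the `Input:`/`Output:` layout below are illustrative assumptions, not a format required by any model or tool.

```python
def zero_shot(task: str, text: str) -> str:
    """Task description plus the input, with no examples."""
    return f"{task}\n\nInput: {text}\nOutput:"


def few_shot(task: str, examples: list[tuple[str, str]], text: str) -> str:
    """Task description, a few worked demonstrations, then the new input."""
    demos = "\n\n".join(f"Input: {inp}\nOutput: {out}" for inp, out in examples)
    return f"{task}\n\n{demos}\n\nInput: {text}\nOutput:"


def chain_of_thought(task: str, text: str) -> str:
    """Ask the model to reason step by step before giving a final answer."""
    return (
        f"{task}\n\nInput: {text}\n"
        "Think through the problem step by step, "
        "then give the final answer on a line starting with 'Answer:'."
    )


if __name__ == "__main__":
    task = "Classify the sentiment as positive or negative."
    examples = [("Great product", "positive"), ("Terrible support", "negative")]
    print(zero_shot(task, "Fast delivery"))
    print("---")
    print(few_shot(task, examples, "Fast delivery"))
    print("---")
    print(chain_of_thought("Solve the word problem.", "3 boxes hold 4 pens each. How many pens?"))
```

Keeping the three builders side by side makes it easy to send the same input through each style and compare the outputs.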

What are the 5 most important prompt engineering techniques?

The most important prompt engineering techniques include chain-of-thought prompting, few-shot prompting, delimiters for structure, role prompting, and iterative refinement. Prompt engineering tools such as Promptfoo help automate the evaluation of these techniques. These methods help the model stay focused, reduce hallucinations, and comply with the specific formatting constraints required in production.
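
Role prompting and delimiters can be combined in a single prompt builder. The sketch below assumes the common chat convention of a list of messages with `role` and `content` keys; the `build_messages` helper and the `###` delimiter are illustrative choices, not a requirement of any specific provider.

```python
def build_messages(
    role_description: str,
    instructions: str,
    document: str,
    delimiter: str = "###",
) -> list[dict[str, str]]:
    """Build a system message that sets the role and a user message that
    fences the document with delimiters so it is not mistaken for instructions."""
    if delimiter in document:
        # Avoid ambiguity about where the fenced text ends.
        raise ValueError("document contains the delimiter; choose a different one")
    system = f"You are {role_description}."
    user = (
        f"{instructions}\n"
        f"The text to process is enclosed between {delimiter} markers.\n"
        f"{delimiter}\n{document}\n{delimiter}"
    )
    return [
        {"role": "system", "content": system},
        {"role": "user", "content": user},
    ]


if __name__ == "__main__":
    messages = build_messages(
        "a careful technical editor",
        "Summarize the text in one sentence.",
        "Prompt engineering tools help teams test and version prompts.",
    )
    for message in messages:
        print(message["role"].upper())
        print(message["content"])
        print()
```

Rejecting documents that already contain the delimiter is a small guard against input text being read as part of the instructions.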

What are some best practices when using prompt engineering tools?

Best practices include versioning all prompts, comparing models side by side, and implementing automated evaluations. You should track token costs and latency across providers to optimize performance. A centralized management platform helps your whole team use the most effective prompts while enforcing strict security and auditability rules.
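
Tracking token cost and latency per provider can start very small. In the sketch below, the provider names and per-1,000-token prices in `PRICES` are made-up placeholders, tokens are approximated by whitespace splitting, and the model is any callable; real usage would take token counts and prices from the provider.

```python
import time
from dataclasses import dataclass
from typing import Callable

# Hypothetical prices in USD per 1,000 tokens: (input, output). Not real price data.
PRICES = {"provider_a": (0.5, 1.5), "provider_b": (0.25, 1.0)}


@dataclass
class CallRecord:
    provider: str
    input_tokens: int
    output_tokens: int
    latency_s: float
    cost_usd: float


def estimate_cost(provider: str, input_tokens: int, output_tokens: int) -> float:
    in_price, out_price = PRICES[provider]
    return (input_tokens * in_price + output_tokens * out_price) / 1000


def record_call(provider: str, model: Callable[[str], str], prompt: str) -> CallRecord:
    """Time one model call and attach a cost estimate."""
    start = time.perf_counter()
    output = model(prompt)
    latency = time.perf_counter() - start
    # Crude approximation: one token per whitespace-separated word.
    n_in, n_out = len(prompt.split()), len(output.split())
    return CallRecord(provider, n_in, n_out, latency, estimate_cost(provider, n_in, n_out))


def summarize(records: list[CallRecord]) -> dict[str, dict[str, float]]:
    """Aggregate call count, total cost, and average latency per provider."""
    totals: dict[str, dict[str, float]] = {}
    for rec in records:
        entry = totals.setdefault(rec.provider, {"calls": 0, "cost_usd": 0.0, "latency_s": 0.0})
        entry["calls"] += 1
        entry["cost_usd"] += rec.cost_usd
        entry["latency_s"] += rec.latency_s
    for entry in totals.values():
        entry["avg_latency_s"] = entry.pop("latency_s") / entry["calls"]
    return totals


if __name__ == "__main__":
    def echo(prompt: str) -> str:
        return prompt

    records = [record_call(name, echo, "Explain retries briefly.") for name in PRICES]
    print(summarize(records))
```

The per-provider summary is the kind of data that supports choosing between providers on cost and latency rather than on impression.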
