Orchestrating Bare-Metal AI: TrueFoundry Integration with Oracle Cloud Infrastructure

Deploying distributed training jobs or high-throughput inference on Oracle Cloud Infrastructure (OCI) requires an architecture designed around its hardware. OCI provides bare-metal GPU instances with no hypervisor overhead, connected by cluster networking that uses Remote Direct Memory Access (RDMA) over Converged Ethernet.

While bare-metal infrastructure maximizes performance, it also demands more operational work. Without the abstraction layer of managed virtualization, you must configure network interfaces, manage low-level NVIDIA drivers, and handle node failures yourself. TrueFoundry operates as an infrastructure overlay within your OCI tenancy, translating high-level machine learning workloads into the Kubernetes resources and node-level configuration that bare-metal execution requires. The sections below detail the technical integration between TrueFoundry and OCI, focusing on Kubernetes orchestration, RDMA networking, and workload identity.

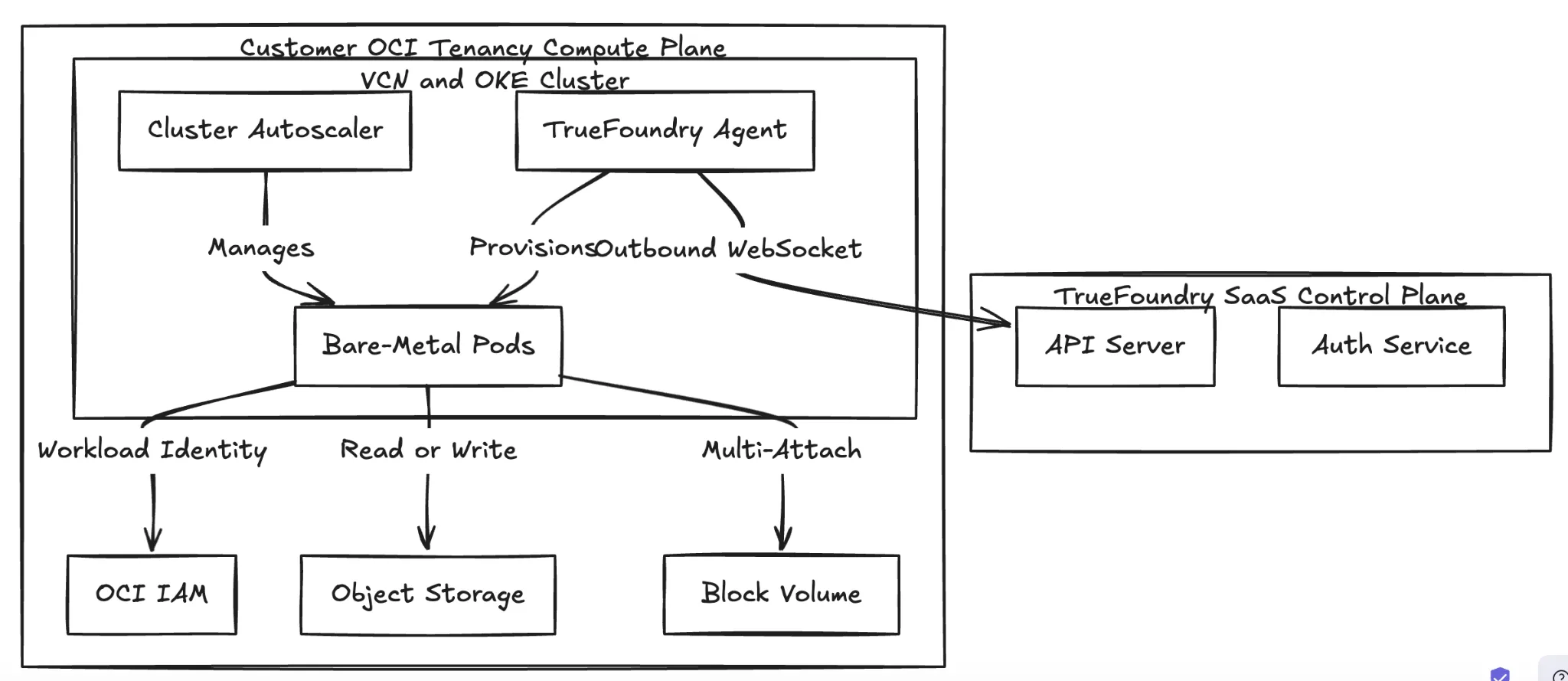

Deployment Model: Control Plane vs. Compute Plane

TrueFoundry uses a split-plane architecture. The Control Plane manages RBAC, metadata, and routing. The Compute Plane runs the models and processes customer data. In an OCI environment, you run the Compute Plane on Oracle Cloud Infrastructure Kubernetes Engine (OKE).

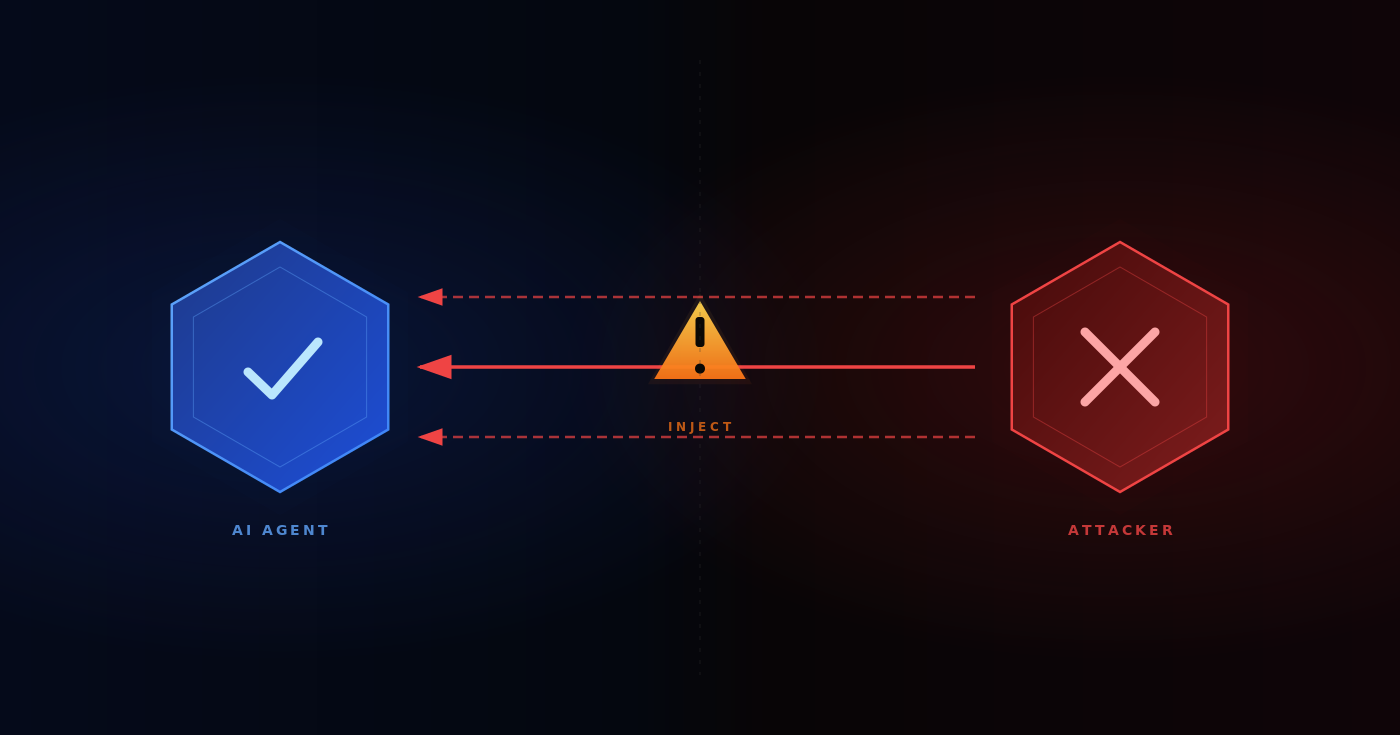

The Control Plane hosts the API server and scheduling logic. The TrueFoundry agent runs on your OKE cluster. The agent opens an outbound-only gRPC or WebSocket stream to the Control Plane and receives deployment manifests over it. Because every connection is initiated from inside the cluster, you do not need to open inbound ports on the Virtual Cloud Network (VCN), which keeps your execution environment private.

Fig 1: The Split-Plane Architecture isolates data processing within the customer VCN.
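The outbound-only pattern described above can be illustrated with a minimal sketch. It is not TrueFoundry's agent code: `poll_once`, `fetch`, and `apply` are hypothetical names, and `fetch` stands in for whatever gRPC or WebSocket call the real agent makes. The point is only that the cluster side always initiates the exchange.

```python
from typing import Callable, Dict, Iterable, List

# Hypothetical type: a deployment manifest is modeled as a plain dict.
Manifest = Dict[str, str]


def poll_once(fetch: Callable[[], Iterable[Manifest]],
              apply: Callable[[Manifest], None]) -> int:
    """Pull pending manifests over an agent-initiated call and apply them.

    `fetch` represents the outbound request to the Control Plane.
    Nothing in this flow requires the Control Plane to connect inbound.
    """
    applied = 0
    for manifest in fetch():
        apply(manifest)
        applied += 1
    return applied


# In-memory stand-ins for the Control Plane queue and the cluster state.
pending: List[Manifest] = [{"kind": "Deployment", "name": "llm-inference"}]
cluster_state: List[Manifest] = []

count = poll_once(fetch=lambda: list(pending), apply=cluster_state.append)
print(count, cluster_state[0]["name"])
```

Passing `fetch` and `apply` as callables keeps the sketch independent of any particular transport, so the same loop could sit on top of a long-lived stream or a periodic request.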

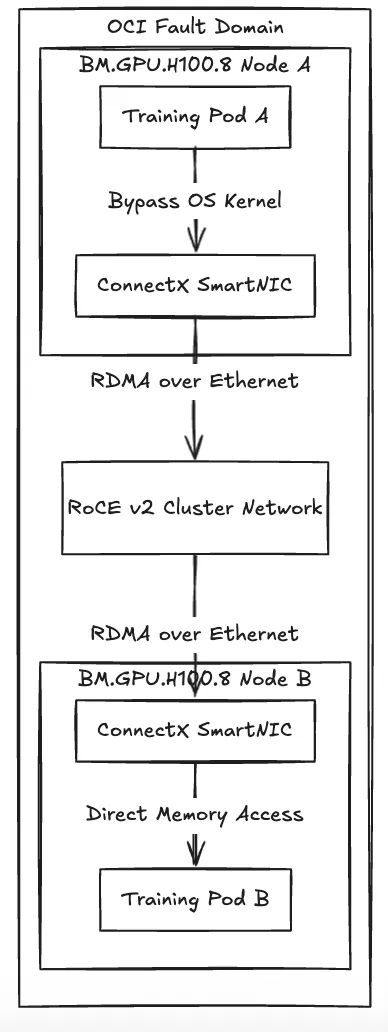

Networking: Abstracting RoCE v2 and RDMA

Training large language models demands massive node-to-node bandwidth. OCI provides a specialized cluster network that can deliver latency as low as two microseconds by bypassing the OS kernel using RDMA over Converged Ethernet v2 (RoCE v2). To use this hardware, you must schedule workloads on bare-metal nodes within the same Fault Domain and configure them to access the Mellanox ConnectX SmartNICs directly.

TrueFoundry automates these scheduling constraints. When you submit a distributed training job using PyTorch DDP or DeepSpeed, the TrueFoundry controller translates your request into a Kubernetes MPIJob. The controller applies strict node affinity rules to ensure all pods land on the designated bare-metal cluster network. It then injects the required host path volumes and privileged security contexts so the container can access the RDMA NICs directly. (On Linux, RoCE devices are exposed through the same `/dev/infiniband` verbs interface that InfiniBand hardware uses, which is why these are often called InfiniBand devices.) You do not need to write custom Kubernetes manifests.

Fig 2: RDMA network flow detailing kernel bypass for inter-node GPU communication.
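To make the generated resource concrete, here is a rough sketch of the kind of MPIJob manifest the controller might emit, built as a Python dictionary. This is an illustration under assumptions, not TrueFoundry's actual output: the function `build_mpijob`, the job name, the image, and the choice of the `node.kubernetes.io/instance-type` label for node affinity are all hypothetical. The `kubeflow.org/v2beta1` MPIJob layout and the `/dev/infiniband` host path are the standard conventions.

```python
import json


def build_mpijob(name: str, image: str, workers: int,
                 gpus_per_node: int = 8, shape: str = "BM.GPU.H100.8") -> dict:
    """Build an MPIJob manifest pinned to one bare-metal shape (illustrative)."""
    # Assumption: nodes carry the well-known instance-type label set to the shape.
    affinity = {"nodeAffinity": {"requiredDuringSchedulingIgnoredDuringExecution": {
        "nodeSelectorTerms": [{"matchExpressions": [{
            "key": "node.kubernetes.io/instance-type",
            "operator": "In",
            "values": [shape],
        }]}]}}}
    worker_pod = {"spec": {
        "affinity": affinity,
        "containers": [{
            "name": "worker",
            "image": image,
            # Privileged access plus the host device path expose the RDMA NICs.
            "securityContext": {"privileged": True},
            "resources": {"limits": {"nvidia.com/gpu": gpus_per_node}},
            "volumeMounts": [{"name": "rdma-devices",
                              "mountPath": "/dev/infiniband"}],
        }],
        "volumes": [{"name": "rdma-devices",
                     "hostPath": {"path": "/dev/infiniband"}}],
    }}
    launcher_pod = {"spec": {"containers": [{"name": "launcher", "image": image}]}}
    return {
        "apiVersion": "kubeflow.org/v2beta1",
        "kind": "MPIJob",
        "metadata": {"name": name},
        "spec": {
            "slotsPerWorker": gpus_per_node,
            "mpiReplicaSpecs": {
                "Launcher": {"replicas": 1, "template": launcher_pod},
                "Worker": {"replicas": workers, "template": worker_pod},
            },
        },
    }


# Hypothetical job: 8 worker nodes with 8 GPUs each.
job = build_mpijob("llm-pretrain", "example.registry/train:latest", workers=8)
print(json.dumps(job, indent=2))
```

In practice the affinity key would be whatever label identifies nodes on the same cluster network in your environment, so treat the label above as a placeholder.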

Identity Federation and Security

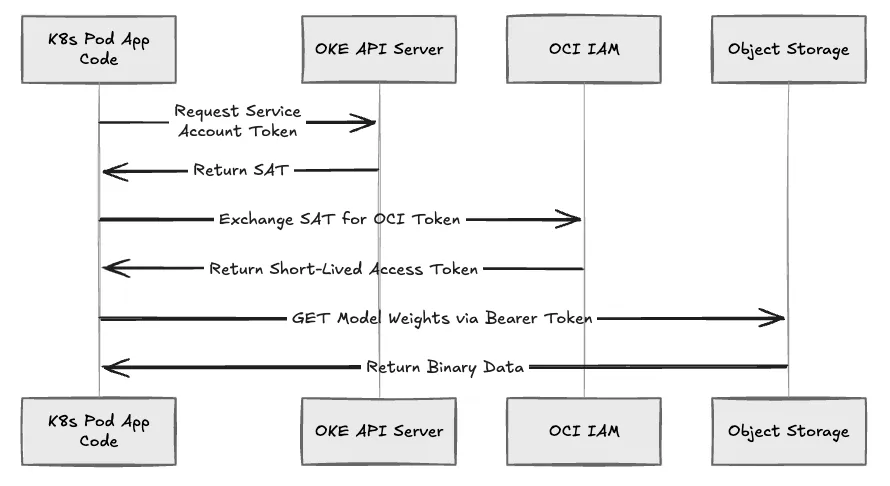

OKE Workload Identity replaces static credentials and user-principal API keys embedded in application code.

When a TrueFoundry deployment requires access to OCI Object Storage to load model weights, the platform provisions a Kubernetes Service Account bound to an OCI Identity and Access Management (IAM) policy. The OKE metadata server intercepts the authentication request, validates the Kubernetes token, and issues a short-lived OCI access token to the pod. Your application code uses the standard OCI SDK, which authenticates with this injected token. If a pod is compromised, the blast radius is limited to the specific IAM policies attached to that pod's Service Account.

Fig 3: The OKE Workload Identity authentication sequence.
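The binding between a Service Account and an IAM policy can be sketched as a policy statement generator. The statement shape below follows the workload-principal form used for OKE Workload Identity as we understand it, so verify it against the current Oracle documentation before use. The helper `workload_policy` and every value passed to it (compartment, namespace, Service Account, cluster OCID) are hypothetical placeholders.

```python
def workload_policy(compartment: str, namespace: str, service_account: str,
                    cluster_ocid: str, verb: str = "read",
                    resource: str = "objects") -> str:
    """Return an IAM policy statement scoped to one Kubernetes Service Account."""
    conditions = ", ".join([
        "request.principal.type = 'workload'",
        f"request.principal.namespace = '{namespace}'",
        f"request.principal.service_account = '{service_account}'",
        f"request.principal.cluster_id = '{cluster_ocid}'",
    ])
    return (f"Allow any-user to {verb} {resource} in compartment {compartment} "
            f"where all {{ {conditions} }}")


# Placeholder values for illustration only.
statement = workload_policy(
    compartment="ml-models",
    namespace="inference",
    service_account="model-loader",
    cluster_ocid="ocid1.cluster.oc1..example",
)
print(statement)
```

Scoping the statement to a single namespace and Service Account is what keeps a compromised pod from reaching resources granted to other workloads.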

Compute Optimization: Block Volume Multi-Attach

OCI offers bare-metal shapes such as BM.GPU.H100.8 with predictable compute pricing. Because these are physical machines, provisioning logic differs entirely from virtualized environments. TrueFoundry integrates directly with the OKE Cluster Autoscaler to manage these nodes, treating bare-metal hardware as elastic capacity.

Loading a 100GB model into VRAM across 64 GPUs concurrently strains standard network storage and delays deployment readiness. TrueFoundry avoids this by using OCI Block Volume multi-attach. The platform mounts a single high-IOPS block volume containing the model weights on multiple bare-metal instances simultaneously, in a read-only configuration. Pods then read weights from the attached volume instead of pulling them from Object Storage on every startup, which removes that network bottleneck and can significantly reduce deployment times for large models.
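A back-of-the-envelope calculation shows why repeated pulls add up. The sketch below uses the 100GB model and 64 GPUs from the example above and the 8 GPUs per node of the BM.GPU.H100.8 shape. It assumes, as a simplification, that each pod downloads a full copy of the weights at startup, which may not hold for sharded loading. The function name is hypothetical.

```python
MODEL_GB = 100      # model size from the example above
TOTAL_GPUS = 64     # GPU count from the example above
GPUS_PER_NODE = 8   # BM.GPU.H100.8 provides 8 GPUs per node


def object_storage_traffic_gb(model_gb: int, copies: int) -> int:
    """Total data pulled if every copy is a full download of the weights."""
    return model_gb * copies


nodes = TOTAL_GPUS // GPUS_PER_NODE

# Assumption A: one pod per node, each pulling a full copy.
per_node_pull = object_storage_traffic_gb(MODEL_GB, nodes)

# Assumption B: one pod per GPU, each pulling a full copy.
per_gpu_pull = object_storage_traffic_gb(MODEL_GB, TOTAL_GPUS)

print(f"nodes: {nodes}")
print(f"one pod per node: {per_node_pull} GB per rollout")
print(f"one pod per GPU:  {per_gpu_pull} GB per rollout")
```

Under these assumptions a single rollout moves between 800 GB and 6,400 GB from Object Storage, and every restart repeats the cost. A shared read-only volume holds one copy that all attached nodes read locally.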

Operational Comparison: Native OCI vs. TrueFoundry Overlay

The following table outlines the operational differences between managing raw OCI bare-metal primitives and using the TrueFoundry overlay.

Conclusion

The collaboration between TrueFoundry and Oracle Cloud Infrastructure is designed to remove the operational overhead of bare-metal computing. By automating Kubernetes orchestration, RoCE v2 RDMA networking, Workload Identity federation, and Block Volume multi-attach, TrueFoundry lets your data science and engineering teams take full advantage of the raw speed of OCI’s bare-metal GPUs. This infrastructure overlay lets you focus on building, training, and deploying large-scale AI models instead of dedicating extensive engineering resources to managing low-level cloud primitives.
