Bifrost Alternatives: Top Tools You Can Consider in 2026



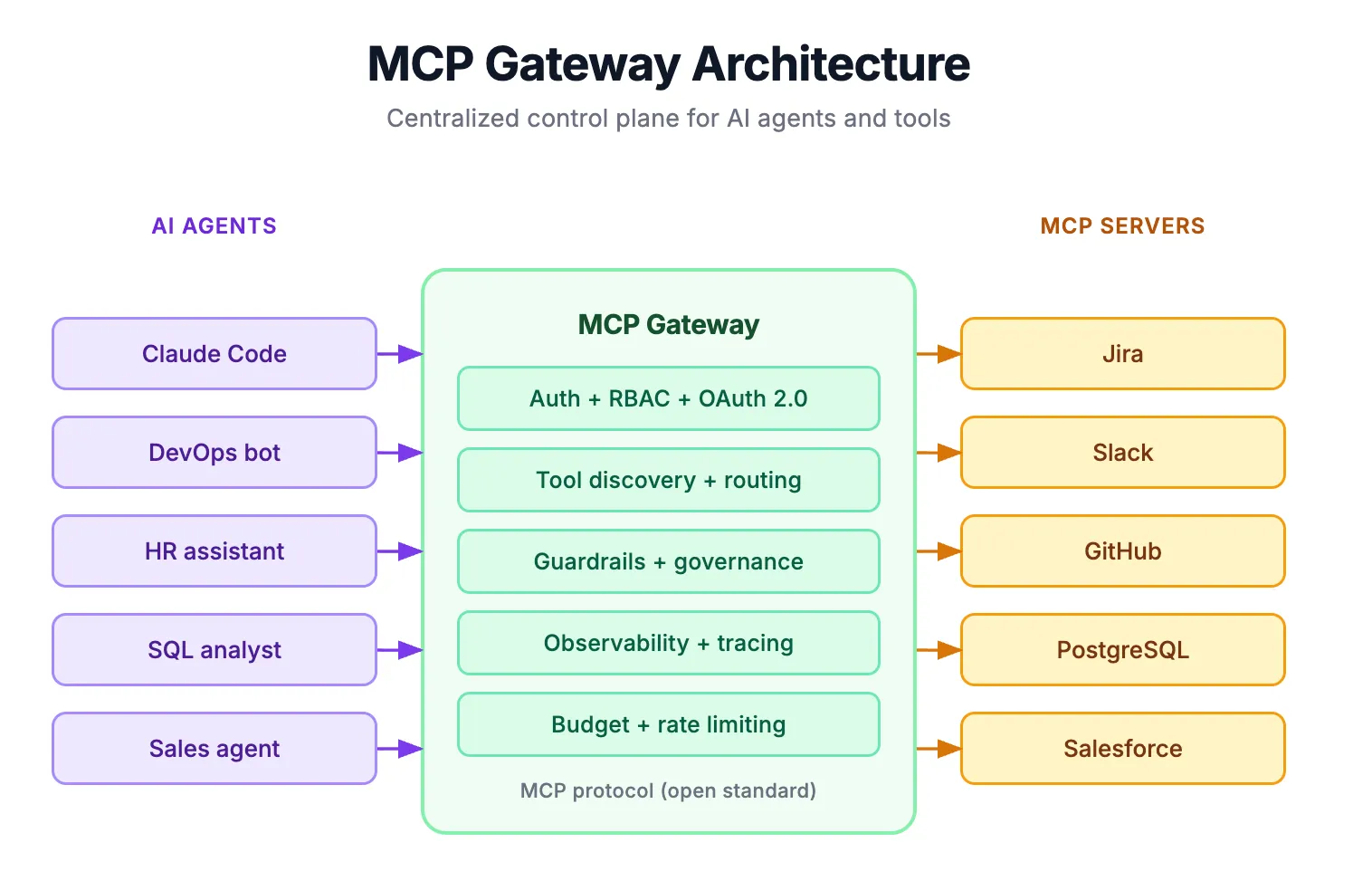

What is Bifrost?

Bifrost is an open-source AI gateway written in Go by Maxim AI (H3 Labs). It gives teams a single OpenAI-compatible endpoint for multiple LLM providers and lets them connect and run their own MCP tools.

Bifrost is fully self-hosted, can be deployed with Docker or NPX, and is licensed under Apache 2.0. It also provides native MCP support, acting as both an MCP client and an MCP server. Centralizing authentication, authorization, and tool discovery this way gives teams a single control layer for all of their LLM workloads.

For production monitoring, Bifrost integrates with Maxim AI's observability platform.

Why teams look for alternatives

Many organizations that use Bifrost as their Model Context Protocol (MCP) gateway are considering other options because of the following limitations:

- Enterprise Governance: Advanced features that higher-tier solutions typically include, such as guardrails, clustering, adaptive load balancing, and federated authentication, are not available in Bifrost without a custom enterprise contract.

- Operational Burden: There is no managed cloud option, so your team must own the entire infrastructure lifecycle: deployment, scaling, upgrades, and maintenance.

- Limited AI Lifecycle Coverage: Bifrost covers only the gateway layer of the AI lifecycle. It does not include model deployment, fine-tuning, or prompt management.

- Observability Lock-In: Bifrost's deeper observability features are tied to Maxim AI's proprietary platform rather than open observability tools.

- Complexity of Orchestrating Multi-Agent Workflows: As systems grow, the number of agents and the ways they interact demand significant custom engineering, much of it well beyond simple tool routing.

In this article, we explore the best Bifrost alternatives for 2026 and how they address these gaps in MCP routing, governance, observability, and full-stack AI infrastructure.

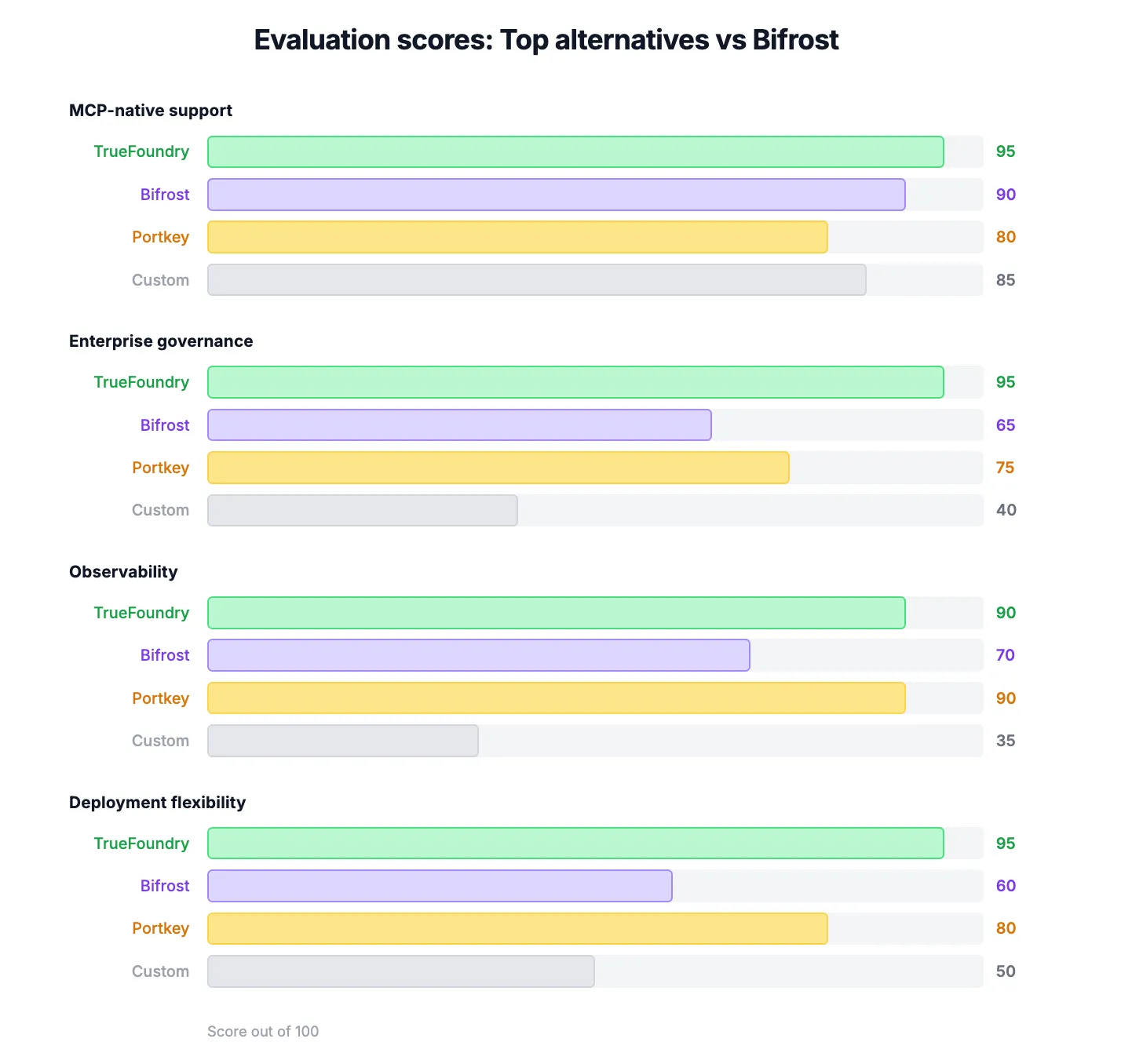

How We Evaluated Maxim AI MCP Gateway (Bifrost) Alternatives

We assessed Maxim AI MCP Gateway alternatives on four major factors to determine which of them are production-ready solutions.

1. MCP-native vs. MCP-compatible

MCP support varies by platform. In our analysis, we identified two distinct categories:

- MCP-native: Tools are discovered and invoked at runtime through the MCP standard itself and work seamlessly with MCP clients (e.g., Claude Desktop, Cursor, VS Code).

- MCP-compatible: The platform relies on proprietary tool-calling mechanisms (e.g., OpenAI function calling or AWS Bedrock action groups) or provides adapters/bridges to MCP, but does not implement the protocol natively.

Native implementations scored higher in our evaluation. They reduce latency, remove unnecessary translation layers, and eliminate proprietary middleware between agents and tools, which makes tool invocation faster, simpler, and more reliable.
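For a concrete sense of what "native" means: MCP messages are JSON-RPC 2.0 requests, and a client discovers tools with `tools/list` and invokes one with `tools/call` (method names from the MCP specification). The minimal sketch below only builds those two request objects; transport (stdio or HTTP), session initialization, and response handling are omitted, and the `search_tickets` tool and its arguments are hypothetical.

```python
import itertools
import json
from typing import Optional

# Monotonically increasing JSON-RPC request ids.
_ids = itertools.count(1)


def jsonrpc_request(method: str, params: Optional[dict] = None) -> dict:
    """Build a JSON-RPC 2.0 request object of the kind MCP clients send."""
    msg = {"jsonrpc": "2.0", "id": next(_ids), "method": method}
    if params is not None:
        msg["params"] = params
    return msg


# Step 1: ask the server which tools it exposes (runtime discovery).
list_tools = jsonrpc_request("tools/list")

# Step 2: invoke a discovered tool by name with structured arguments.
# "search_tickets" and its arguments are hypothetical.
call_tool = jsonrpc_request(
    "tools/call",
    {"name": "search_tickets", "arguments": {"query": "refund", "limit": 5}},
)

print(json.dumps(list_tools))
print(json.dumps(call_tool))
```

A merely MCP-compatible platform would have to translate between messages like these and its own proprietary tool schema, which is the extra layer that native implementations avoid.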

2. Enterprise capabilities (Authorization, Oversight, and Audit Processes)

Routing alone is not enough for a production environment. We therefore evaluated four areas that together gauge how well a platform supports enterprise-level governance:

- Identity and access management: Role-based access control and support for third-party identity providers, such as Okta and Azure AD.

- Tool permissions: Fine-grained permissions that control which users can access and manage individual MCP tools.

- Budget controls: Spending limits enforced per user, team, or application.

- Auditing: Every model and tool invocation is logged to support compliance with frameworks such as SOC 2, HIPAA, and the EU AI Act.
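The governance checks above typically run as a single policy step in front of every tool call. The sketch below shows one way to combine tool permissions, budget enforcement, and audit logging in memory; the role-to-tool mapping, team budget, cost estimates, and the `Gateway` class are all hypothetical, and a real gateway would back them with an identity provider, persistent spend tracking, and tamper-evident audit storage.

```python
import json
import time
from dataclasses import dataclass, field
from typing import Dict, List

# Hypothetical role -> permitted MCP tools (tool permissions / RBAC).
ROLE_TOOLS = {
    "support": {"search_tickets", "get_customer"},
    "admin": {"search_tickets", "get_customer", "delete_customer"},
}


@dataclass
class Team:
    budget_usd: float       # spending limit for the team
    spent_usd: float = 0.0  # running total of approved spend


@dataclass
class Gateway:
    teams: Dict[str, Team]
    audit_log: List[str] = field(default_factory=list)

    def authorize(self, user: str, role: str, team: str, tool: str, est_cost: float) -> bool:
        """Apply RBAC and budget checks, and audit the decision either way."""
        t = self.teams[team]
        if tool not in ROLE_TOOLS.get(role, set()):
            allowed, reason = False, "tool not permitted for role"
        elif t.spent_usd + est_cost > t.budget_usd:
            allowed, reason = False, "team budget exceeded"
        else:
            allowed, reason = True, "ok"
            t.spent_usd += est_cost  # only approved calls count against the budget
        # Every decision, allowed or denied, becomes a JSON audit record.
        self.audit_log.append(json.dumps({
            "ts": time.time(), "user": user, "role": role, "team": team,
            "tool": tool, "allowed": allowed, "reason": reason,
        }))
        return allowed


gw = Gateway(teams={"cx": Team(budget_usd=1.00)})
print(gw.authorize("ana", "support", "cx", "search_tickets", 0.40))   # True
print(gw.authorize("ana", "support", "cx", "delete_customer", 0.01))  # False (RBAC)
print(gw.authorize("ana", "support", "cx", "get_customer", 0.70))     # False (budget)
```

Logging denials as well as approvals is the design choice that matters for audits: a compliance review usually needs to see what was blocked, not only what ran.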

3. Observability and debugging

Without visibility, an MCP gateway is a black box. We therefore evaluated each platform's observability on:

- Distributed Tracing: Correlating LLM decisions with the tool invocations they trigger.

- Structured Telemetry: Dashboards covering request latency, token usage, errors, and request volume.

- Debugging Capabilities: Quickly isolating and resolving failures in multi-step agent workflows.
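Distributed tracing correlates an LLM decision with the tool call it triggered by giving every step of an agent turn the same trace ID and linking each span to its parent. The stdlib-only sketch below illustrates that mechanism; it is not the OpenTelemetry API (which production gateways typically use), and the span names and attributes are made up for the example.

```python
import time
import uuid
from contextlib import contextmanager
from typing import List, Optional

SPANS: List[dict] = []   # finished spans; a real tracer would export these
_stack: List[dict] = []  # currently open spans, innermost last


@contextmanager
def span(name: str, **attrs):
    """Open a span that joins the enclosing span's trace, if there is one."""
    parent: Optional[dict] = _stack[-1] if _stack else None
    record = {
        "name": name,
        "trace_id": parent["trace_id"] if parent else uuid.uuid4().hex,
        "span_id": uuid.uuid4().hex[:16],
        "parent_id": parent["span_id"] if parent else None,
        "attrs": attrs,
        "start": time.perf_counter(),
    }
    _stack.append(record)
    try:
        yield record
    finally:
        _stack.pop()
        record["duration_ms"] = (time.perf_counter() - record["start"]) * 1000
        SPANS.append(record)


# One agent turn: the LLM decides to call a tool, and both steps share a trace.
with span("agent.turn", user="ana"):
    with span("llm.completion", model="example-model", tokens=312) as llm:
        pass  # the model call would happen here
    with span("mcp.tools/call", tool="search_tickets", decided_by=llm["span_id"]):
        pass  # the tool call would happen here

for s in SPANS:
    print(s["name"], s["trace_id"][:8], s["parent_id"])
```

Filtering exported spans by a single trace ID then reconstructs the whole turn, which is what makes multi-step failures debuggable.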

4. Deployment flexibility and developer experience

Finally, we evaluated how easily teams can deploy and scale each platform in real-world settings:

- Deployment Options: Managed, Self-Hosted, and Hybrid Deployment Options

- Multi-Cloud Support: Compatibility with AWS, GCP, and Azure to avoid vendor lock-in.

- Developer Experience: Ease of setup, quality of documentation, and time from zero to a working implementation.

Platforms with the most flexible deployment options and the shortest time to a working setup received the highest scores in this category.


Quick Comparison Table - Top 6 Maxim AI MCP Gateway (Bifrost) Alternatives for 2026

Before diving into each platform, here is a quick comparison table to help you understand how the leading Bifrost gateway alternatives differ in focus and capabilities.

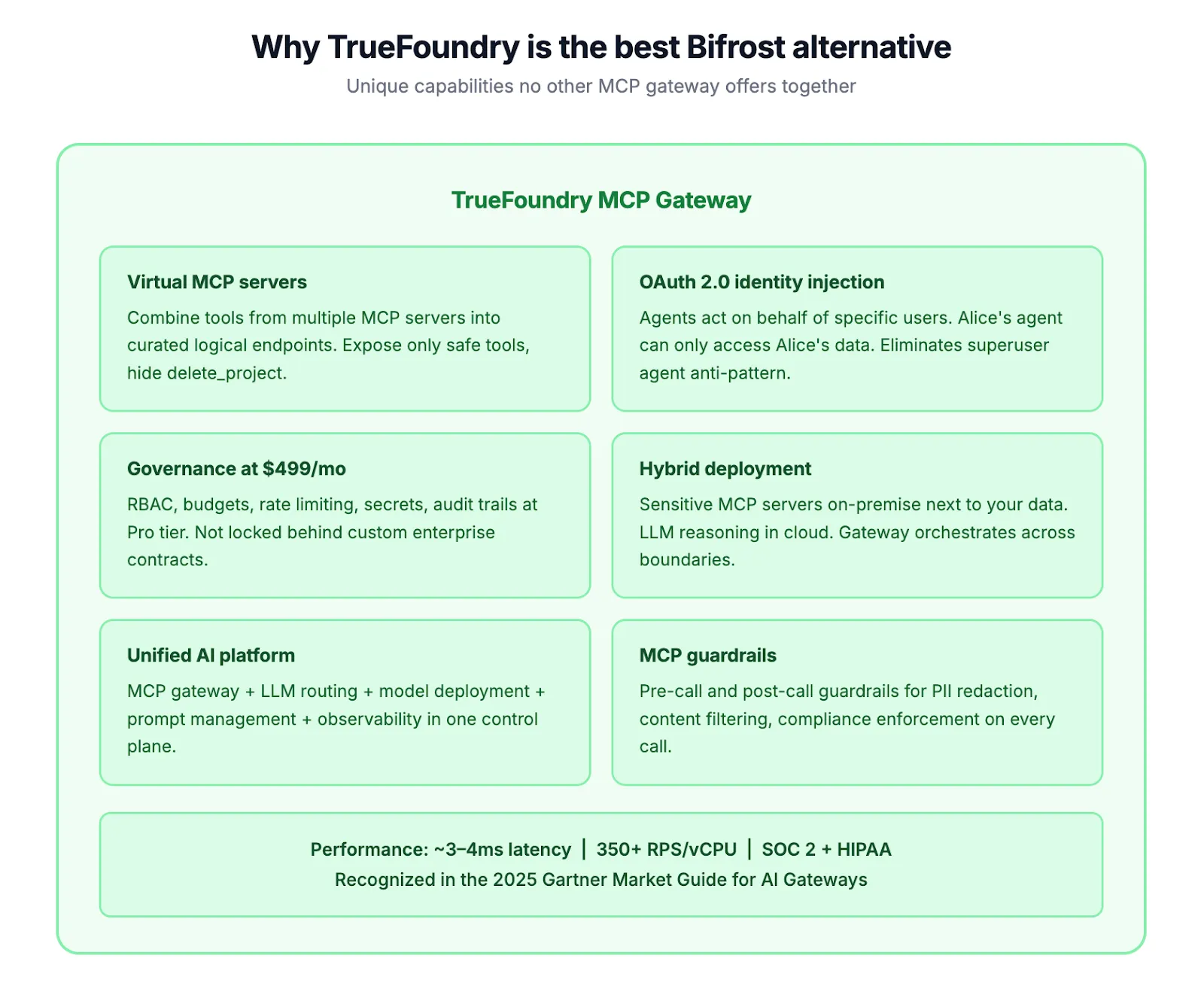

1. TrueFoundry MCP Gateway

TrueFoundry, recognized as a vendor in the 2025 Gartner Market Guide for AI Gateways, provides an all-in-one infrastructure platform that combines LLM routing with MCP tool management, model deployment, prompt management, and observability in a single control plane.

Key Features:

- Unified LLM + MCP Gateway: Routing across 1,000+ LLMs from multiple providers, plus centralized control of MCP tool connectivity and agent workflows from a single dashboard.

- Virtual MCP Servers: Curated logical endpoints that combine tools from multiple MCP servers, expose only the tools approved for that endpoint, and hide dangerous operations.

- OAuth 2.0 Identity Injection: Agents act on behalf of individual users, with those users' permissions, which removes the "superuser agent" anti-pattern (every agent sharing the same privileged credentials).

- Enterprise Governance at Pro Tier: RBAC (role-based access control), budget controls (spending limits), rate limiting, secret management, and audit trails are all included, with no custom enterprise contract required.

- MCP Guardrails: Pre-call and post-call enforcement of PII redaction, content filtering, and compliance policies.

- Deep Observability: OpenTelemetry-compliant distributed tracing, latency tracking, token analytics, and Datadog integration.

- Hybrid Deployment: Keep sensitive MCP servers local while LLM reasoning runs in the cloud, with the gateway bridging the two. Deploy across multiple clouds, in air-gapped environments, or inside virtual private clouds (VPCs).

- Performance: Roughly 3–4 ms of gateway latency and ~350 RPS per vCPU; horizontal scaling is supported through in-memory authentication and rate limiting.
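Pre-call and post-call guardrails can be pictured as a wrapper around each tool invocation: the pre-call hook screens what is sent to the tool, and the post-call hook screens what comes back. The sketch below works under simple assumptions (regex-based redaction of email addresses and US-style phone numbers, a one-rule content filter, and a hypothetical `fake_tool` standing in for an MCP server); it is not TrueFoundry's implementation, and real PII detection needs far more robust methods.

```python
import re
from typing import Callable

# Illustrative patterns only; not a complete PII detector.
EMAIL = re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+")
PHONE = re.compile(r"\b\d{3}[-.\s]\d{3}[-.\s]\d{4}\b")
BLOCKED_TERMS = {"DROP TABLE"}  # hypothetical content-filter rule


def redact(text: str) -> str:
    """Replace email addresses and phone numbers with placeholders."""
    return PHONE.sub("[PHONE]", EMAIL.sub("[EMAIL]", text))


def guarded(tool: Callable[[str], str]) -> Callable[[str], str]:
    """Wrap a single-argument tool with pre-call and post-call guardrails."""
    def wrapper(arg: str) -> str:
        # Pre-call: block disallowed content, then redact PII before it leaves.
        if any(term in arg.upper() for term in BLOCKED_TERMS):
            raise PermissionError("blocked by content filter")
        result = tool(redact(arg))
        # Post-call: redact any PII the tool returned.
        return redact(result)
    return wrapper


@guarded
def fake_tool(query: str) -> str:
    # Stand-in for an MCP tool that leaks customer contact details.
    return f"match for {query}: contact jane@example.com or 555-123-4567"


print(fake_tool("ticket from bob@example.org"))
# match for ticket from [EMAIL]: contact [EMAIL] or [PHONE]
```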

Best for:

Enterprise platform teams that want a single solution to control the full lifecycle of their models and tools, from deployment to governance. It is especially useful for companies under regulatory scrutiny (e.g., banking, healthcare, and insurance), where compliance with SOC 2, HIPAA, and the EU AI Act is non-negotiable, and for teams that want a managed deployment model while keeping the option to self-host.

Why is TrueFoundry a Better Alternative than Bifrost?

Bifrost may excel at routing, but it covers only that layer, whereas TrueFoundry supports the full lifecycle end to end: deployment, fine-tuning, MCP governance, prompt management, and observability. This removes the need to glue together many disparate tools to support your models' lifecycle.

Virtual MCP servers are particularly useful for solving the N×M problem (N agents each wired directly to M tool servers). With virtual MCP servers, each agent connects only to the endpoints it needs, which is more secure than protocol-level connections alone and lets you restrict which credentials each agent can use. No other gateway we compared provides this.

In addition, Bifrost's advanced enterprise features (such as guardrails, clustering, and federated authentication) require a custom contract, so their cost is not known upfront.
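Conceptually, a virtual MCP server is a filtered view over several upstream servers: it merges their tool lists, keeps only an approved subset, and routes each call back to the server that owns the tool. The in-memory sketch below illustrates that idea; the `VirtualMCPServer` class, upstream names, and tools are hypothetical and do not reflect TrueFoundry's API.

```python
from typing import Callable, Dict, Iterable, List

Tool = Callable[..., str]

# Hypothetical upstream MCP servers, modeled as server -> {tool_name: handler}.
UPSTREAMS: Dict[str, Dict[str, Tool]] = {
    "github": {
        "list_prs": lambda repo: f"open PRs in {repo}",
        "delete_repo": lambda repo: f"deleted {repo}",  # dangerous operation
    },
    "jira": {
        "search_issues": lambda q: f"issues matching {q}",
    },
}


class VirtualMCPServer:
    """Expose an approved subset of tools from several upstream servers.

    Assumes tool names are unique across upstreams; a real implementation
    would namespace them to avoid collisions.
    """

    def __init__(self, upstreams: Dict[str, Dict[str, Tool]], approved: Iterable[str]):
        approved = set(approved)
        # Route table: tool name -> (owning server, handler), approved tools only.
        self._routes = {
            tool: (server, fn)
            for server, tools in upstreams.items()
            for tool, fn in tools.items()
            if tool in approved
        }

    def list_tools(self) -> List[str]:
        return sorted(self._routes)

    def call_tool(self, name: str, **arguments) -> str:
        if name not in self._routes:
            # Unapproved tools are neither discoverable nor callable here.
            raise KeyError(f"unknown tool: {name}")
        _server, fn = self._routes[name]
        return fn(**arguments)


dev_endpoint = VirtualMCPServer(UPSTREAMS, approved={"list_prs", "search_issues"})
print(dev_endpoint.list_tools())                       # ['list_prs', 'search_issues']
print(dev_endpoint.call_tool("list_prs", repo="app"))  # open PRs in app
```

Because unapproved tools never appear in `list_tools()`, an agent pointed at this endpoint cannot discover or call `delete_repo`, even though the upstream server exposes it.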

2. Portkey

The Portkey gateway provides a unified API for over 1,600 LLMs, with production-oriented features, integrated observability, and an open-source MCP Gateway for centralized governance of tools in production.

Key Features:

- Open-source MCP Gateway: Centralized authentication via OAuth 2.1, API tokens, and header-based authentication, with a codebase that anyone can inspect.

- Fast Core Gateway: Processes more than one trillion tokens in production each day, with sub-1 ms latency and a 122 KB footprint.

Pros:

- Excellent Developer Experience: A three-line SDK integration, a two-minute setup, and compatibility with existing OpenAI-compatible APIs.

- Strong Observability and Community: A comprehensive observability dashboard, backed by an active open-source community (over 10K GitHub stars).

- SOC 2 and HIPAA Compliant: Available as SaaS, in a private cloud, or fully self-hosted.

Cons:

- Newer MCP Gateway: The MCP Gateway's features are still maturing relative to the core LLM gateway.

- No Virtual MCP Server abstraction for exposing curated subsets of tools through logical endpoints.

- Log retention is limited to 30 days on the Pro tier.

Best for:

Observability-first teams looking for a fast, lightweight gateway that combines LLM and MCP routing. Also startups and mid-market companies that already use Portkey for LLMs and want to bring MCP under the same governance without adopting another tool.

3. LangChain + LangGraph

LangChain is a widely used LLM application framework, and LangGraph extends it with stateful orchestration of multiple agents. Together they let you build custom workflows that work with MCP, but they are frameworks, not hosted services.

Key Features:

- Flexible agent orchestration: Full control over the routing decisions you make when composing a workflow.

- LangGraph: Stateful execution graphs that coordinate multiple agents while preserving agent state across turns.

- LangSmith: Monitoring, evaluation, and tracing built specifically for agent pipelines.

Pros:

- Maximum flexibility: Design the workflow topology that fits your use case.

- No vendor lock-in, backed by a large open-source community of users and contributors.

- LangSmith's built-in development and debugging tools support rapid iteration.

Cons:

- Not a gateway: MCP routing, authorization, and governance must be custom-built and maintained by your team.

- No built-in role-based access control, audit trails, or enterprise identity management.

- Building a reliable, secure production system requires significant engineering resources.

Best for:

Teams with sufficient engineering resources that want complete control over agent orchestration, particularly for novel multi-agent designs that LLM gateways do not support.

4. Anthropic MCP Ecosystem Tools

In November 2024, Anthropic released the Model Context Protocol (MCP), an open standard for connecting agents to tools. Claude Desktop, Claude Code, and Anthropic's SDK all support MCP natively.

Key Features:

- Native Support for MCP: MCP is built into all three primary products (Claude Desktop, Claude Code, and the SDK).

- Open Specification: The MCP specification, protocol documentation, and reference implementations are publicly available for anyone to build on.

- Growing Ecosystem: Thousands of community-built MCP servers already cover databases, APIs, development tools, and enterprise systems.

- Claude Code: Supports agentic development from the command line, with the same direct MCP access to your tools.

Pros:

- Protocol Creator: Anthropic has the deepest native integration with MCP of any vendor.

- Claude models are specifically designed for tool-use workflows and structured data.

- MCP is an open standard and does not lock consumers into any vendor at the protocol level.

Cons:

- No single entry point for all of your MCP connections: each Claude client manages its own.

- No enterprise governance layer (RBAC, audit trails, budget controls).

- Limited to Claude models out of the box; using multiple vendors requires additional tooling.

Best for:

Claude-first agent workflows, and individual developers or small teams not yet focused on enterprise governance.

For new MCP-based agents built on Claude, the existing Claude tools are the natural starting point.

5. AWS Bedrock Agents

Bedrock Agents is a fully managed agent framework hosted on AWS. It orchestrates multi-step tasks and connects foundation models to enterprise data sources through native AWS services.

Key Features:

- Fully managed agent orchestration: Automatic multi-step planning and execution, with no infrastructure to provision.

- Action groups: Connect agents to Lambda functions and external APIs to execute real-world tasks.

- Observability: Built-in integration with CloudWatch metrics and CloudTrail audit logs.
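Action groups are commonly backed by a Lambda function that receives the agent's request and returns a structured response. The handler below is a sketch based on our understanding of the event and response shape for action groups defined with an OpenAPI schema (`actionGroup`, `apiPath`, `httpMethod`, a `parameters` list, and a `responseBody` keyed by content type); check the field names against current AWS documentation before relying on it. The `/orders/{orderId}` path and the order data are hypothetical.

```python
import json

# Hypothetical order data standing in for a real backend.
ORDERS = {"A-100": {"status": "shipped"}}


def lambda_handler(event, context):
    """Sketch of a Lambda handler for a Bedrock Agents action group."""
    # Parameters are assumed to arrive as a list of {"name", "type", "value"} objects.
    params = {p["name"]: p["value"] for p in event.get("parameters", [])}

    if event.get("apiPath") == "/orders/{orderId}" and event.get("httpMethod") == "GET":
        order = ORDERS.get(params.get("orderId"))
        status, body = (200, order) if order else (404, {"error": "order not found"})
    else:
        status, body = 400, {"error": "unsupported operation"}

    # Echo the routing fields back and return the body as a JSON string.
    return {
        "messageVersion": "1.0",
        "response": {
            "actionGroup": event.get("actionGroup"),
            "apiPath": event.get("apiPath"),
            "httpMethod": event.get("httpMethod"),
            "httpStatusCode": status,
            "responseBody": {"application/json": {"body": json.dumps(body)}},
        },
    }


# Local smoke test with a hand-built event; no AWS calls are made.
sample_event = {
    "messageVersion": "1.0",
    "actionGroup": "OrderActions",
    "apiPath": "/orders/{orderId}",
    "httpMethod": "GET",
    "parameters": [{"name": "orderId", "type": "string", "value": "A-100"}],
}
print(lambda_handler(sample_event, None)["response"]["httpStatusCode"])  # 200
```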

Pros:

- Fully managed: No infrastructure to deploy or maintain, and the environment scales automatically.

- Enterprise compliance is built in (SOC 2, HIPAA, FedRAMP).

Cons:

- AWS-locked: Cannot be migrated to other clouds and is not available for on-premises environments.

- Complex consumption-based pricing that may be unpredictable at scale.

Best For:

Enterprises invested in AWS that want fully managed agent infrastructure within their existing AWS ecosystem, IAM policies, and compliance frameworks. Teams that value operational simplicity over the ability to move between cloud providers.

6. Custom-Built MCP Gateway

A custom gateway gives you the most control over how requests are routed and secured and which tools are exposed, but building and maintaining one is a significant investment.

Key Features:

- Complete Protocol Control: Implement the client and server sides of the MCP protocol exactly to your own specifications.

- Customized Authentication Logic: Design authentication and authorization flows tailored to your existing identity infrastructure.

- Purpose-Built Architecture: Design every part of the system around your specific workloads and scale.

Pros:

- No Licensing Fees, provided the gateway is built entirely from open-source components.

- Full ownership of your security posture and compliance.

Cons:

- Expect 3–6 months of engineering to build a production-grade solution.

- Your team will be responsible for ongoing support of security patching, MCP protocol updates, and feature enhancements

- You are responsible for defining & implementing your own RBAC, audit logging, identity propagation, and guardrails.

Best For:

Large organizations with engineering teams big enough to justify building their own MCP gateway, and with requirements that no existing product satisfies.

Bifrost Alternatives at a Glance: Detailed Feature Comparison

How to Choose the Right Maxim AI MCP Gateway Alternative

Choosing the right Maxim AI MCP Gateway alternative depends on your scale, architecture, and governance needs.

Key decision factors:

A gateway choice has consequences long after it is approved: technical debt, compliance issues, or an unforeseen, unbudgeted "rip-and-replace" of the whole system.

The following five questions can streamline the selection process and minimize project risk.

- Which protocol do you need? If your agents rely on standardized tool discovery through clients such as Claude Desktop, Cursor, and VS Code, native MCP support is non-negotiable. Proprietary mechanisms work within their own ecosystems, until one day they do not.

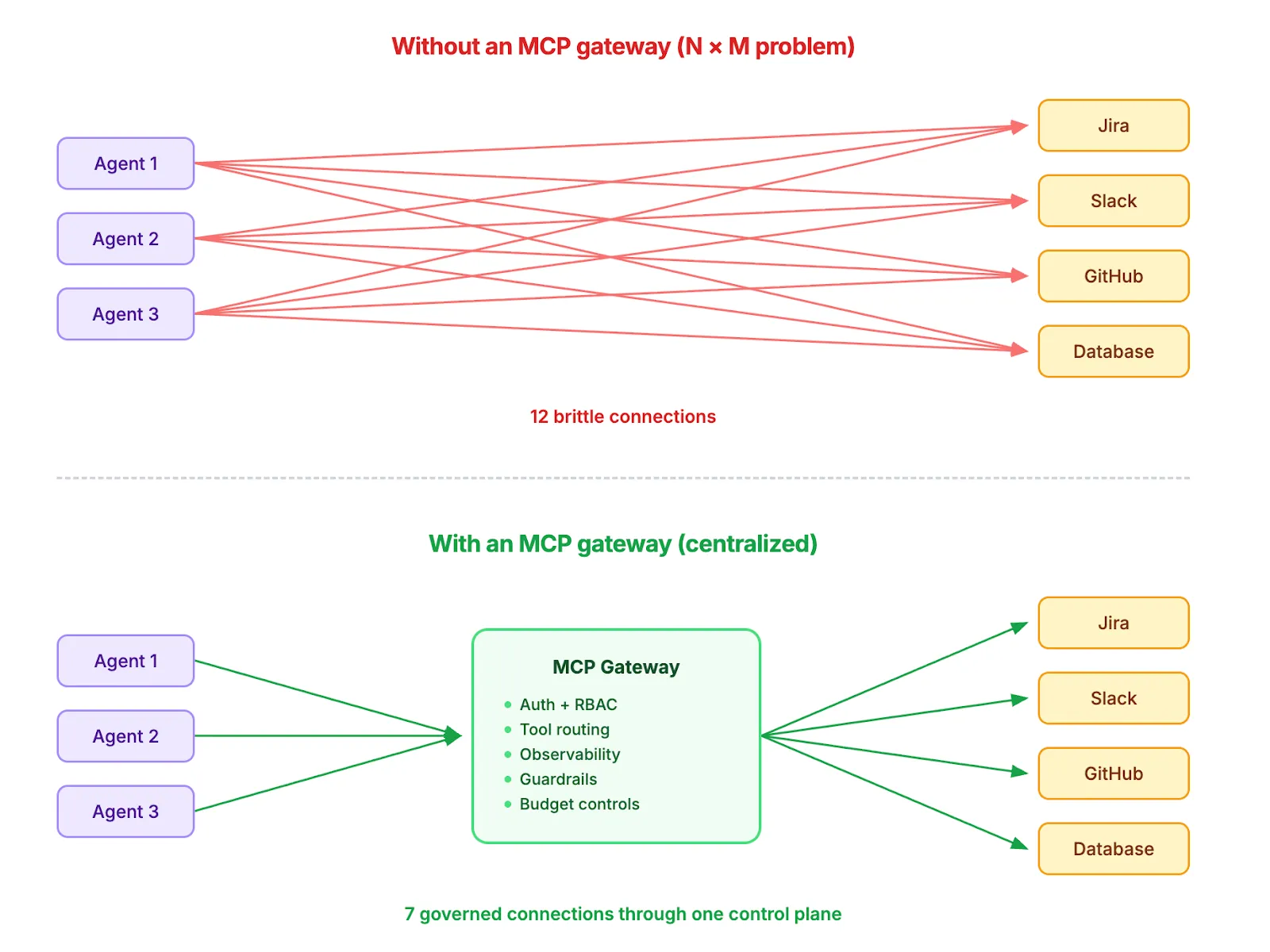

- How many agents will you have? Five agents with manual MCP wiring is manageable, but 50 agents accessing 50 different tools means 50 × 50 = 2,500 agent-to-tool connections, each a potential point of credential misuse.

- Is a compliance team involved? In regulated environments, compliance stakeholders treat RBAC, audit trails, and spending controls as standard requirements. If they need traceability for every tool invocation, "coming soon" is not an acceptable answer.

- Who do your agents act as? Sharing credentials between agents will eventually lead to a security incident. Identity-scoped agents that carry the user's OAuth 2.0 context are a defensible design, and if your agents touch user data, they are a requirement.

- Should you build or buy? A custom gateway takes 3–6 months of engineering and requires ongoing maintenance. Build your own only if you have the engineering capacity and genuinely unique requirements; otherwise, those engineering hours are better spent on product development.
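The difference between shared credentials and identity-scoped agents shows up in what gets forwarded to the tool. The sketch below assumes each user has already completed an OAuth 2.0 authorization flow and that the resulting access tokens sit in a hypothetical in-memory `USER_TOKENS` store; token acquisition, refresh, and validation are out of scope, and `build_request` and `ToolRequest` are illustrative names.

```python
from dataclasses import dataclass
from typing import Dict

# Anti-pattern (shown for contrast, never used below): one privileged
# credential shared by every agent.
SHARED_SERVICE_TOKEN = "svc-superuser-token"

# Hypothetical per-user OAuth 2.0 access tokens, obtained when each user
# authorized the tool. Values are placeholders.
USER_TOKENS: Dict[str, str] = {"ana": "ana-access-token", "raj": "raj-access-token"}


@dataclass
class ToolRequest:
    tool: str
    arguments: dict
    headers: dict


def build_request(user: str, tool: str, arguments: dict) -> ToolRequest:
    """Attach the calling user's token so the tool enforces that user's permissions."""
    token = USER_TOKENS.get(user)
    if token is None:
        # No delegated token means no access: do not fall back to a shared credential.
        raise PermissionError(f"{user} has not authorized {tool}")
    return ToolRequest(tool, arguments, {"Authorization": f"Bearer {token}"})


req = build_request("ana", "read_calendar", {"day": "2026-01-15"})
print(req.headers["Authorization"])  # Bearer ana-access-token
```

Because the tool receives the user's own token, it applies that user's permissions, and a user who has not authorized the tool is refused instead of silently falling back to a privileged service account.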

Scenario-based recommendations

For most teams, TrueFoundry offers the best balance between flexibility, governance, and speed to production.

Frequently Asked Questions

What is Maxim AI MCP Gateway (Bifrost)?

Bifrost is an open-source AI gateway written in Go by Maxim AI. It provides one unified endpoint for routing to more than 15 LLM providers and for governing MCP tools.

It can be self-hosted with Docker or NPX under an Apache 2.0 license, and it includes built-in support for acting as both an MCP server and an MCP client.

Why do teams look for Maxim AI MCP Gateway alternatives?

Bifrost provides excellent routing, but organizations run into several limitations as they outgrow it:

- Enterprise Governance: Guardrails, clustering, adaptive load balancing, and federated authentication are locked behind custom contracts.

- No Managed Cloud: Teams must own the entire infrastructure lifecycle.

- Gateway-Only: No model deployment, fine-tuning, or prompt management.

- Observability Dependency: Deep observability is available only through Maxim AI's proprietary platform rather than open tooling.

What are the best MCP gateway tools in 2026?

The best choice depends on what your team values most:

- TrueFoundry: Best for unified enterprise AI with end-to-end lifecycle coverage.

- Portkey: Best for observability-first teams that require lightweight, fast routing.

- Bifrost: Best for raw, self-hosted performance at very high RPS.

- AWS Bedrock Agents: Best for AWS-native environments with fully managed infrastructure.

Of these, TrueFoundry offers the most comprehensive platform, bringing LLM routing, MCP governance, model deployment, and observability under a single control plane, along with OAuth 2.0 identity injection and Virtual MCP Servers.

Do I really need an MCP gateway?

It depends on your environment and scale:

- One agent + 1–2 tools: Direct MCP integration is sufficient; no gateway is required.

- Multiple agents + multiple tools: The N×M wiring complexity calls for a centralized gateway to manage tenants, user credentials, policies, and visibility.

- Regulated industry: A gateway is a must-have, because you must demonstrate audit trails, RBAC, and compliance with records that cannot be created after the fact.
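The N×M argument is simple arithmetic: with direct wiring, every agent needs its own connection (and credentials) to every tool server, whereas with a gateway each agent connects once to the gateway and the gateway connects once to each tool server. A quick check with the agent and tool counts used in this article:

```python
def direct_connections(agents: int, tools: int) -> int:
    # Every agent is wired to every tool: N x M links to secure and monitor.
    return agents * tools


def gateway_connections(agents: int, tools: int) -> int:
    # Agents connect to the gateway, and the gateway connects to each tool: N + M.
    return agents + tools


for n, m in [(1, 2), (5, 5), (50, 50)]:
    print(f"{n} agents x {m} tools: direct={direct_connections(n, m)}, "
          f"via gateway={gateway_connections(n, m)}")
```

For one agent and two tools, direct wiring actually needs fewer connections, which matches the guidance that no gateway is required at that scale; at 50 agents and 50 tools the gap is 2,500 versus 100.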

How is MCP different from APIs or SDK-based integrations?

1. Traditional APIs: Hard-coded per tool; application code must change whenever a tool is added or updated.

2. SDK-based tool calling: Structured and type-safe, but proprietary to each provider (e.g., OpenAI).

3. MCP: An open standard protocol for runtime tool discovery and invocation; tools can be added, removed, or updated without touching agent code.

By decoupling agents from tools, MCP lets agents discover available tools at runtime instead of at build time, which keeps the architecture portable across clients (e.g., Claude Desktop, Cursor, VS Code) without vendor-specific shims.

Final Thoughts

Bifrost is an excellent option for teams that prioritize self-hosted control and performance. Its open-source license and low latency also make it a good choice for experimentation.

As demand for AI systems grows, however, most organizations eventually face governance, observability, and lifecycle-management challenges for their AI use cases. That is typically when they start evaluating alternatives to Bifrost, including TrueFoundry.

Among the alternatives covered here, TrueFoundry is the most feature-rich, combining native MCP routing, enterprise-grade governance, and full-stack AI infrastructure. For teams that want to move from prototype to production as quickly and reliably as possible, TrueFoundry is the best option.



Frequently asked questions

What is similar to Bifrost?

Platforms similar to Bifrost for MCP gateway and AI routing functionality include TrueFoundry's AI Gateway, Portkey, and LiteLLM proxy. Each offers multi-provider LLM routing and API management, but they differ in the depth of enterprise controls, MCP server support, and observability features they provide.

What are the alternatives to Bifrost?

The leading alternatives to Bifrost as an MCP gateway and LLM proxy include TrueFoundry's AI Gateway (which adds enterprise governance, budget controls, and MCP routing), LiteLLM (a popular open-source option for multi-provider routing), Portkey (which focuses on reliability and observability), and Kong AI Gateway (for teams already using Kong's API management platform).

What is the difference between TrueFoundry and Bifrost?

TrueFoundry goes beyond LLM proxying by providing an enterprise-grade AI Gateway that combines an LLM Gateway, an MCP Gateway, and an Agent Gateway within a unified control plane. While Bifrost primarily focuses on LLM routing, proxying, and provider abstraction, TrueFoundry adds the governance, observability, and infrastructure layers required to run agentic AI securely at enterprise scale.
