LLMOps for Model Serving & Inference

- Deploy any open-source LLM within your LLMOps pipeline using pre-configured, performance-tuned setups

- Seamlessly integrate with Hugging Face, private registries, or any model hub—fully managed within your LLMOps platform

- Leverage industry-leading model servers like vLLM and SGLang for low-latency, high-throughput inference

- Enable GPU autoscaling, auto-shutdown, and intelligent resource provisioning across your LLMOps infrastructure

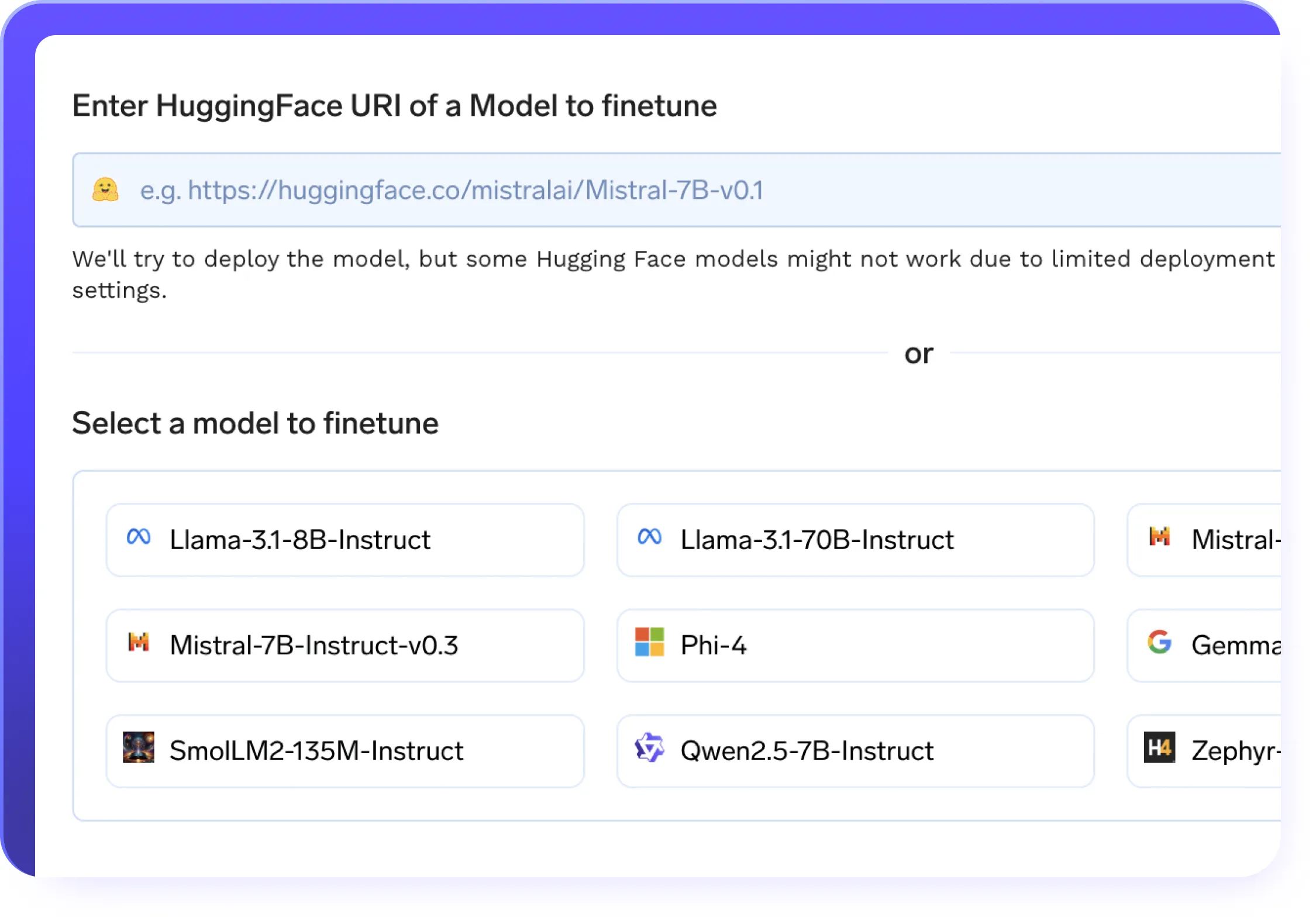
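
As a rough illustration of GPU autoscaling with auto-shutdown, the sketch below picks a replica count from the number of in-flight requests and scales to zero after an idle period. The function name, thresholds, and defaults are assumptions made for this example, not TrueFoundry's actual scaling policy.

```python
import math


def desired_replicas(in_flight, target_per_replica, idle_seconds,
                     idle_shutdown_after=600, max_replicas=8):
    """Return how many GPU replicas to run (hypothetical policy)."""
    if in_flight == 0:
        # Auto-shutdown: scale to zero once the service has been idle long
        # enough; otherwise keep one warm replica to avoid a cold start.
        return 0 if idle_seconds >= idle_shutdown_after else 1
    # Autoscaling: enough replicas to keep each one at or under its target
    # concurrency, capped at the configured maximum.
    needed = math.ceil(in_flight / target_per_replica)
    return max(1, min(needed, max_replicas))


print(desired_replicas(in_flight=10, target_per_replica=4, idle_seconds=0))
print(desired_replicas(in_flight=0, target_per_replica=4, idle_seconds=900))
```

A real policy would usually also smooth the load signal over a time window so that short spikes do not cause replicas to flap.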

Efficient Fine-Tuning

- No-code & full-code fine-tuning support on custom datasets

- LoRA & QLoRA for efficient low-rank adaptation

- Resume training seamlessly with checkpointing support across your LLMOps pipelines

- One-click deployment of fine-tuned models with best-in-class model servers

- Automated training pipelines with built-in experiment tracking baked into your LLMOps workflows

- Distributed training support for faster, large-scale model optimization

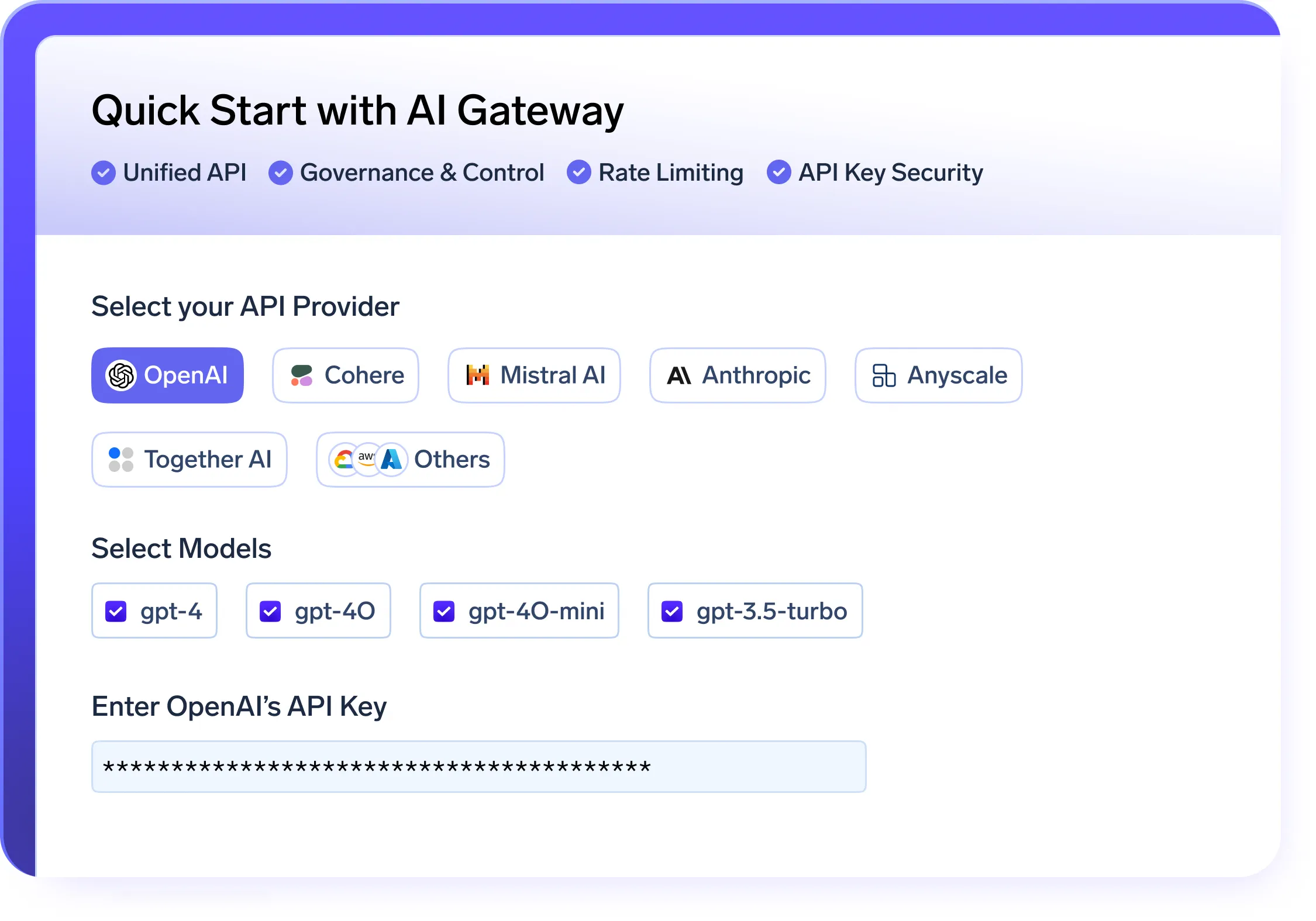
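
To show why LoRA counts as efficient, the sketch below compares the trainable parameters of a full weight update with those of a low-rank update. LoRA freezes the original weight matrix of shape `d_out x d_in` and trains two small matrices of shapes `d_out x rank` and `rank x d_in`. The layer size and rank used here are illustrative assumptions, not platform defaults.

```python
def full_update_params(d_out, d_in):
    """Trainable parameters when the whole weight matrix is fine-tuned."""
    return d_out * d_in


def lora_update_params(d_out, d_in, rank):
    """Trainable parameters for a LoRA update B @ A of the given rank."""
    # B has shape (d_out, rank) and A has shape (rank, d_in).
    return rank * (d_out + d_in)


# Assumed example: one 4096 x 4096 projection layer adapted at rank 8.
full = full_update_params(4096, 4096)
lora = lora_update_params(4096, 4096, rank=8)
print(f"full: {full:,}  lora: {lora:,}  ratio: {lora / full:.4%}")
```

QLoRA applies the same low-rank update on top of a quantized base model, which reduces memory further without changing the count of trainable adapter parameters.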

Secure and Scalable AI Gateway

- A unified API layer to serve and manage models across OpenAI, Llama, Gemini, and other models and providers

- Built-in quota management and access control to enforce secure, governed model usage within your LLMOps platform

- Real-time metrics for usage, cost, and performance to improve LLMOps observability

- Intelligent fallback and automatic retries to ensure reliability across your LLMOps pipelines

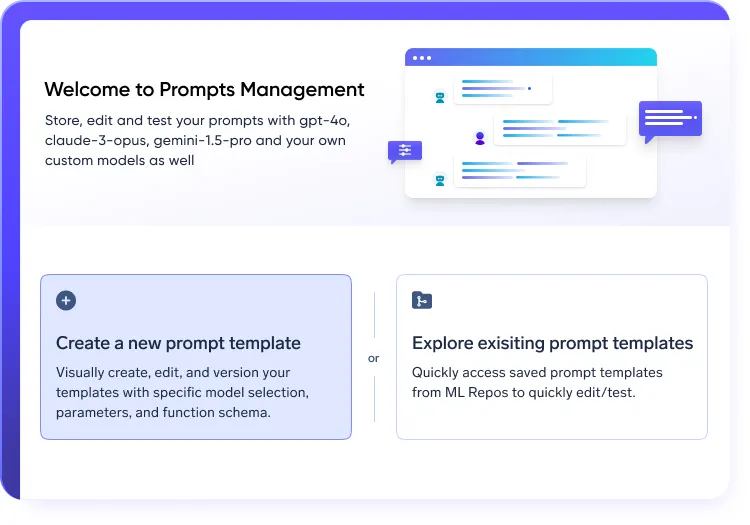
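
The fallback and retry behavior listed above can be sketched in a few lines. This is a minimal illustration under stated assumptions: `call_with_fallback`, `ProviderError`, and the two stub providers are hypothetical names invented for the example, and a real gateway would call provider SDKs or HTTP endpoints instead of local functions.

```python
import time


class ProviderError(Exception):
    """Raised by a provider callable when a request fails (hypothetical)."""


def call_with_fallback(providers, prompt, max_retries=2, base_delay=0.5,
                       sleep=time.sleep):
    """Try each (name, callable) in order, retrying with exponential backoff."""
    errors = []
    for name, call in providers:
        for attempt in range(max_retries + 1):
            try:
                return name, call(prompt)
            except ProviderError as exc:
                errors.append((name, attempt, str(exc)))
                if attempt < max_retries:
                    # Back off before retrying the same provider.
                    sleep(base_delay * (2 ** attempt))
        # Retries exhausted: fall through to the next provider in the list.
    raise ProviderError(f"all providers failed: {errors}")


# Stub providers standing in for real model endpoints.
def always_down(prompt):
    raise ProviderError("503 from primary")


def echo_backup(prompt):
    return f"echo: {prompt}"


used, reply = call_with_fallback(
    [("primary", always_down), ("backup", echo_backup)], "hi", base_delay=0.0
)
print(used, reply)
```

Ordering the provider list by preference keeps the policy easy to read: the first entry is the default route, and later entries are used only after the earlier ones exhaust their retries.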

Structured Prompt Workflows in Your LLMOps Stack

- Experiment and iterate using version-controlled prompt engineering

- Run A/B tests across models to optimize performance

- Maintain full traceability of prompt changes within your LLMOps platform
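
One common way to run an A/B test across prompt versions or models is to hash a stable identifier so that each user always sees the same variant. The sketch below assumes that approach; the function name, the experiment label, and the variant tuples are hypothetical and not part of any documented TrueFoundry API.

```python
import hashlib


def assign_variant(user_id, variants, experiment="prompt-ab"):
    """Deterministically map a user to one variant of an experiment."""
    # Hashing the experiment name together with the user id keeps
    # assignments stable within an experiment and independent across them.
    digest = hashlib.sha256(f"{experiment}:{user_id}".encode()).hexdigest()
    return variants[int(digest, 16) % len(variants)]


# Each variant pairs a prompt version with a model name (both hypothetical).
variants = [("prompt-v1", "model-a"), ("prompt-v2", "model-b")]
print(assign_variant("user-42", variants))
```

Logging the assigned variant alongside each request is what makes later comparisons traceable back to a specific prompt version.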

Tracing & Guardrails for LLMOps Workflows

- Capture full traces of prompts, responses, token usage, and latency

- Monitor performance, completion rates, and anomalies

- Integrate with guardrails for PII detection and content moderation in LLMOps pipelines

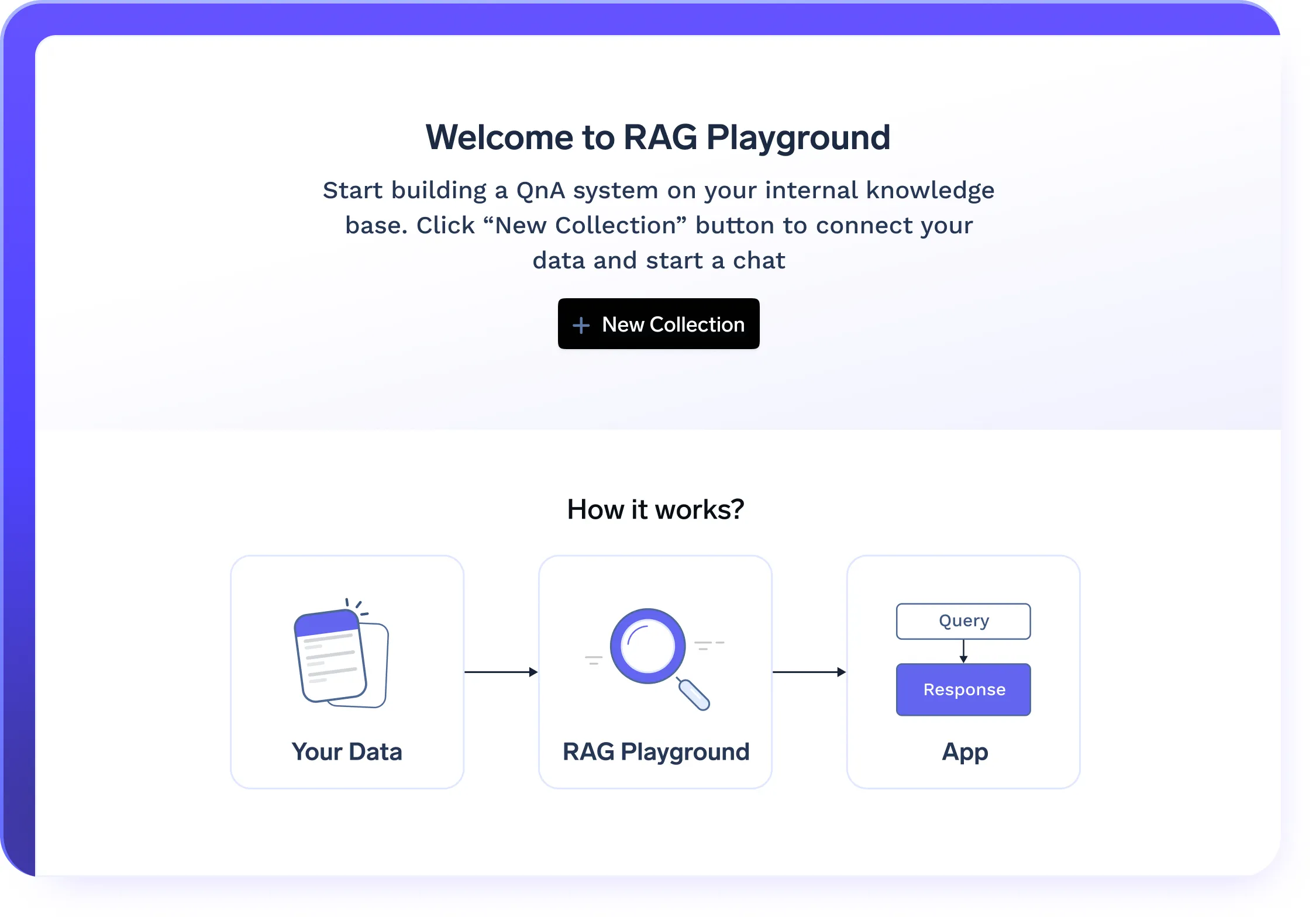
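
A trace of the kind described above can be pictured as one record per model call. The sketch below wraps a model function, measures latency, and flags email addresses as a stand-in for PII detection. The `Trace` fields, the whitespace token count, and the single regex are simplifying assumptions; production guardrails rely on real tokenizers and dedicated PII detectors.

```python
import re
import time
from dataclasses import dataclass, field

# Deliberately simple pattern, used only to illustrate a PII check.
EMAIL_RE = re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+")


@dataclass
class Trace:
    prompt: str
    response: str
    prompt_tokens: int
    completion_tokens: int
    latency_ms: float
    pii_flags: list = field(default_factory=list)


def traced_call(model_fn, prompt):
    """Call model_fn(prompt) and return a Trace describing the call."""
    start = time.perf_counter()
    response = model_fn(prompt)
    latency_ms = (time.perf_counter() - start) * 1000
    found_email = EMAIL_RE.search(prompt) or EMAIL_RE.search(response)
    return Trace(
        prompt=prompt,
        response=response,
        # Whitespace splitting is a crude proxy for a real tokenizer.
        prompt_tokens=len(prompt.split()),
        completion_tokens=len(response.split()),
        latency_ms=latency_ms,
        pii_flags=["email"] if found_email else [],
    )


trace = traced_call(lambda p: "ok done", "mail me at a@b.com")
print(trace.pii_flags, trace.prompt_tokens, trace.completion_tokens)
```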

One-Click RAG Deployment

- Deploy all RAG components in a single click, including VectorDB, embedding models, frontend, and backend

- Configurable infrastructure to optimize storage, retrieval, and query processing

- Handle growing document bases with cloud-native LLMOps scalability
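
To make the retrieval step of RAG concrete, the toy sketch below ranks documents against a query by cosine similarity. It is only an illustration: the bag-of-words `embed` function stands in for a real embedding model, and the in-memory list stands in for a vector database.

```python
import math
from collections import Counter


def embed(text):
    """Toy embedding: word counts (a real system uses an embedding model)."""
    return Counter(text.lower().split())


def cosine(a, b):
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[t] * b[t] for t in a if t in b)
    norm = (math.sqrt(sum(v * v for v in a.values()))
            * math.sqrt(sum(v * v for v in b.values())))
    return dot / norm if norm else 0.0


def retrieve(query, documents, k=1):
    """Return the k documents most similar to the query."""
    q = embed(query)
    ranked = sorted(documents, key=lambda d: cosine(q, embed(d)), reverse=True)
    return ranked[:k]


docs = [
    "GPU autoscaling reduces idle cost",
    "LoRA adapts models with low-rank updates",
    "Guardrails detect PII in prompts",
]
top = retrieve("how does LoRA fine-tuning work", docs)
print(top)
```

In a deployed pipeline the retrieved passages would be inserted into the prompt sent to the model, which is the part the backend component handles.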

LLMOps for AI Agent Lifecycle Management

- Run and scale agents across any framework using your LLMOps infrastructure

- Support for LangChain, AutoGen, CrewAI, and custom agents

- Framework-agnostic agent orchestration with built-in LLMOps monitoring

- Support for multi-agent orchestration, enabling agents to interact, share context, and execute tasks autonomously

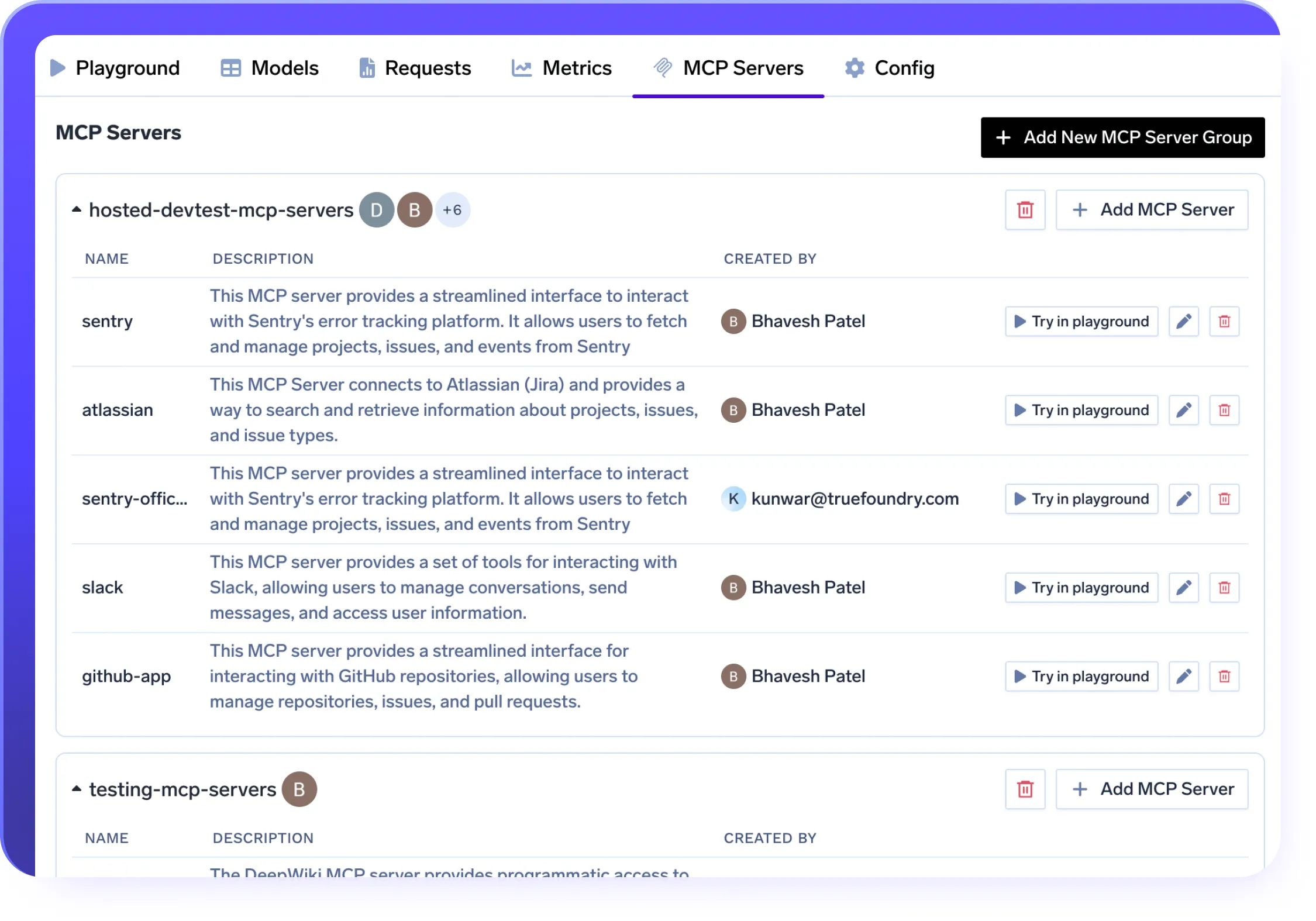

MCP Server Integration in Your LLMOps Stack

- Securely connect LLMs to tools like Slack, GitHub, and Confluence using the Model Context Protocol (MCP)

- Deploy MCP Servers in VPC, on-prem, or air-gapped setups with full data control

- Enable prompt-native tool use without wrappers—fully integrated into your LLMOps stack

- Govern access with RBAC, OAuth2, and trace every call with built-in observability
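
MCP messages are JSON-RPC 2.0 requests, and a tool invocation uses the `tools/call` method. The sketch below combines that message shape with a simple role check to illustrate RBAC in front of tool calls. The role table and tool names are hypothetical, and how a real MCP gateway enforces access may differ.

```python
import json

# Hypothetical role-to-tool permissions, for illustration only.
ROLE_TOOLS = {
    "engineer": {"github.create_issue", "slack.post_message"},
    "viewer": {"confluence.search"},
}


def build_tool_call(role, tool, arguments, request_id=1):
    """Return a JSON-RPC 2.0 `tools/call` request if the role may use the tool."""
    if tool not in ROLE_TOOLS.get(role, set()):
        raise PermissionError(f"role {role!r} may not call {tool!r}")
    return json.dumps({
        "jsonrpc": "2.0",
        "id": request_id,
        "method": "tools/call",
        "params": {"name": tool, "arguments": arguments},
    })


request = build_tool_call("viewer", "confluence.search", {"query": "runbook"})
print(request)
```

Checking permissions before the request is built means a denied call never reaches the MCP server, and the denial itself can be logged as part of the audit trail.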

Enterprise-Ready

Your data and models are securely housed within your cloud / on-prem infrastructure

Compliance & Security

SOC 2, HIPAA, and GDPR standards to ensure robust data protection

Governance & Access Control

SSO + Role-Based Access Control (RBAC) & Audit Logging

Enterprise Support & Reliability

24/7 support with SLA-backed response times

VPC, on-prem, air-gapped, or across multiple clouds.

No data leaves your domain. Enjoy complete sovereignty, isolation, and enterprise-grade compliance wherever TrueFoundry runs.

Frequently asked questions

What is LLMOps and why does it matter?

LLMOps is the practice of managing the lifecycle of large language models, from training and fine-tuning to deployment, inference, monitoring, and governance. LLMOps helps organizations bring GenAI applications into production reliably and at scale. TrueFoundry provides a production-grade LLMOps platform that simplifies and accelerates this entire process.

How is LLMOps different from traditional MLOps?

LLMOps is MLOps adapted to the specific demands of large language models. It includes capabilities such as model server orchestration, prompt management, token-level observability, agent frameworks, and secure API access. Unlike generic MLOps tools, TrueFoundry’s LLMOps platform handles these GenAI-specific workflows natively.

Why should I invest in a dedicated LLMOps platform like TrueFoundry?

What are the core features of TrueFoundry’s LLMOps platform?

Can I deploy TrueFoundry’s LLMOps platform on my infrastructure?

How does LLMOps improve observability and debugging?

Is TrueFoundry’s LLMOps platform secure and compliant?

Which models and frameworks are supported in TrueFoundry’s LLMOps platform?

Can I use TrueFoundry’s LLMOps platform to manage multiple teams and projects?

How fast can I start using TrueFoundry for LLMOps?

GenAI infra: simpler, faster, cheaper

Trusted by 30+ enterprises and Fortune 500 companies