Prerequisites

Your model server must expose an HTTP endpoint. The gateway works best with OpenAI-compatible APIs, which popular inference frameworks provide either by default or as a supported mode:

- vLLM: OpenAI-compatible by default

- Ollama: OpenAI-compatible API

- SGLang: OpenAI-compatible by default

- Text Generation Inference (TGI): supports OpenAI-compatible mode

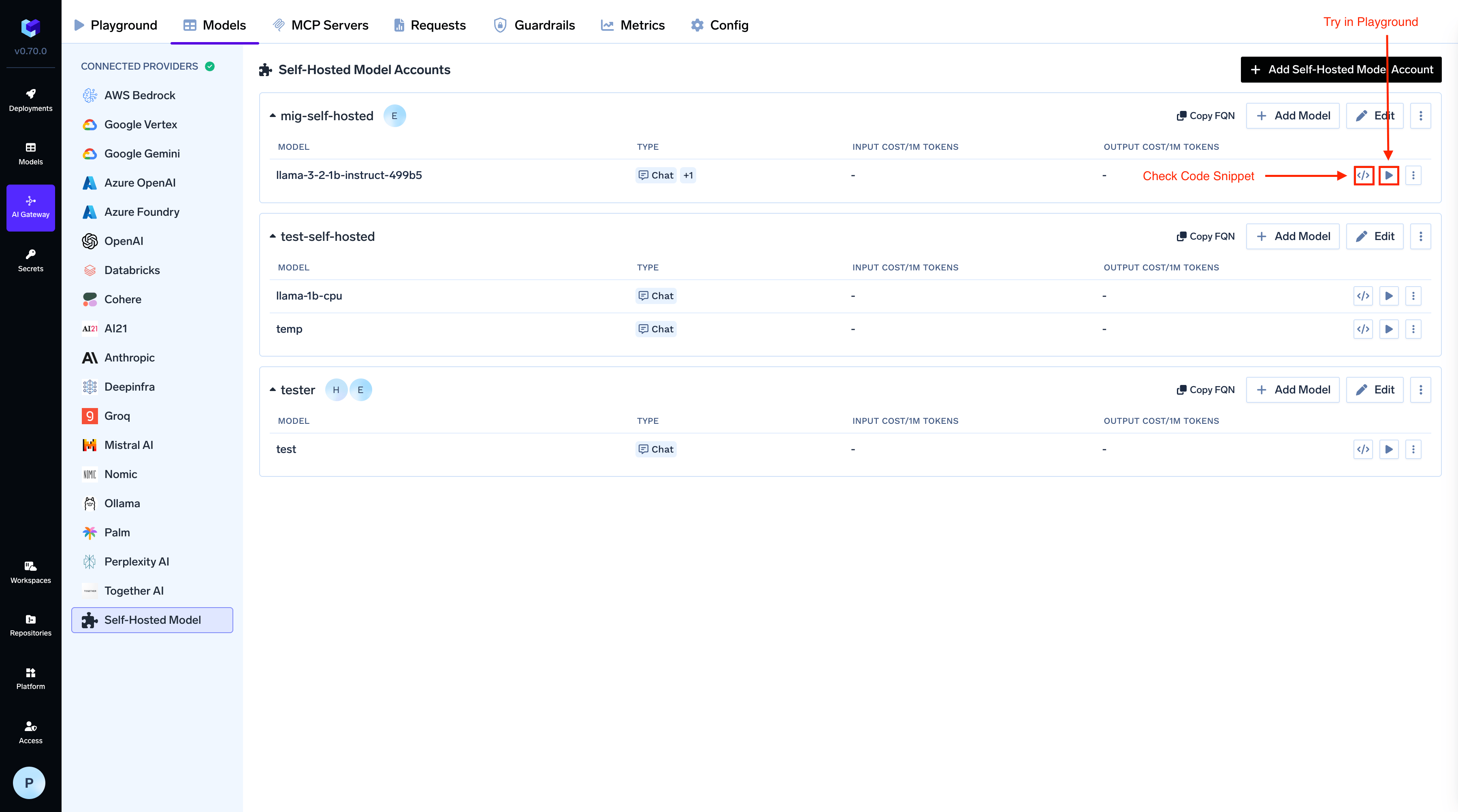

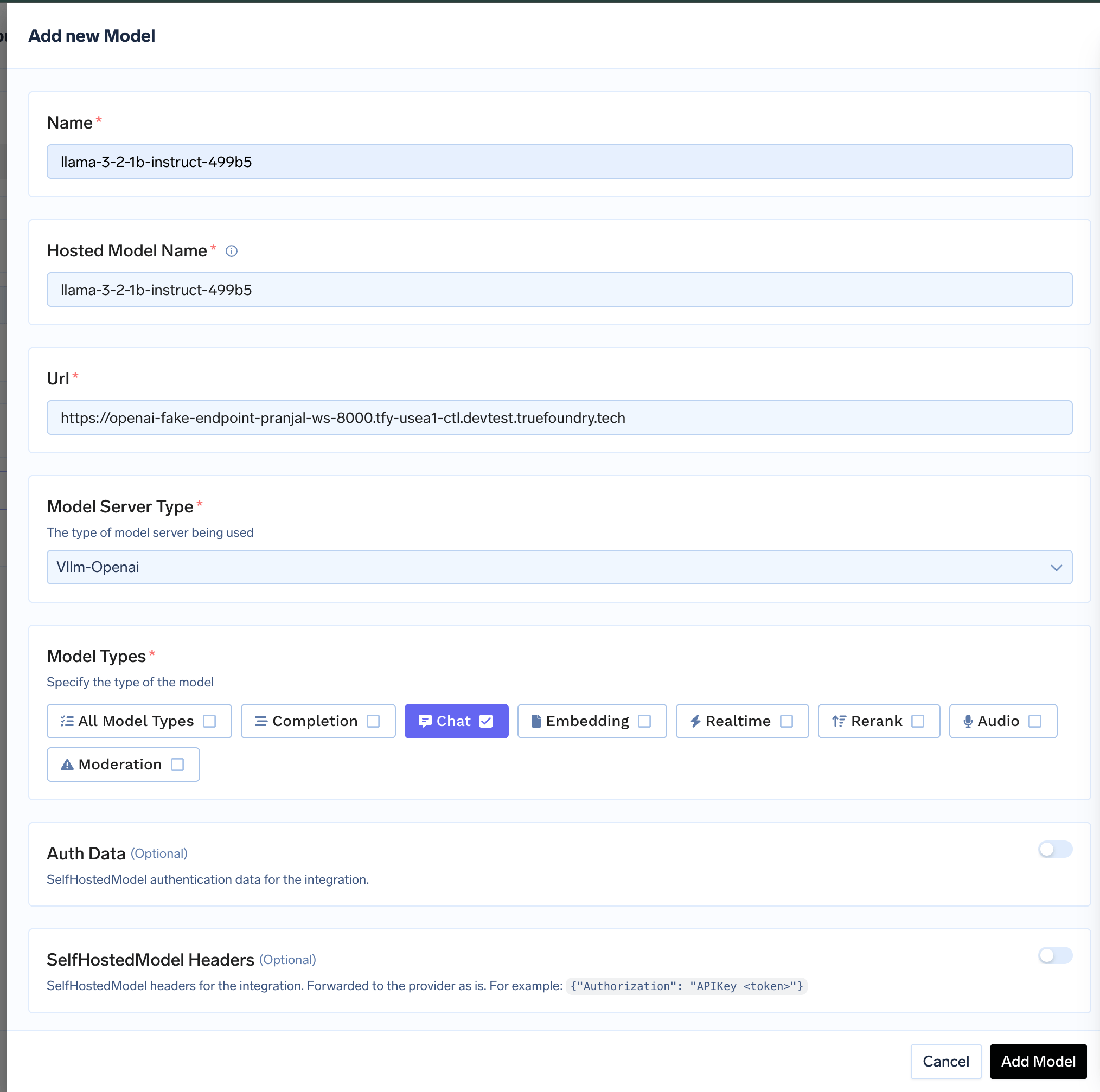

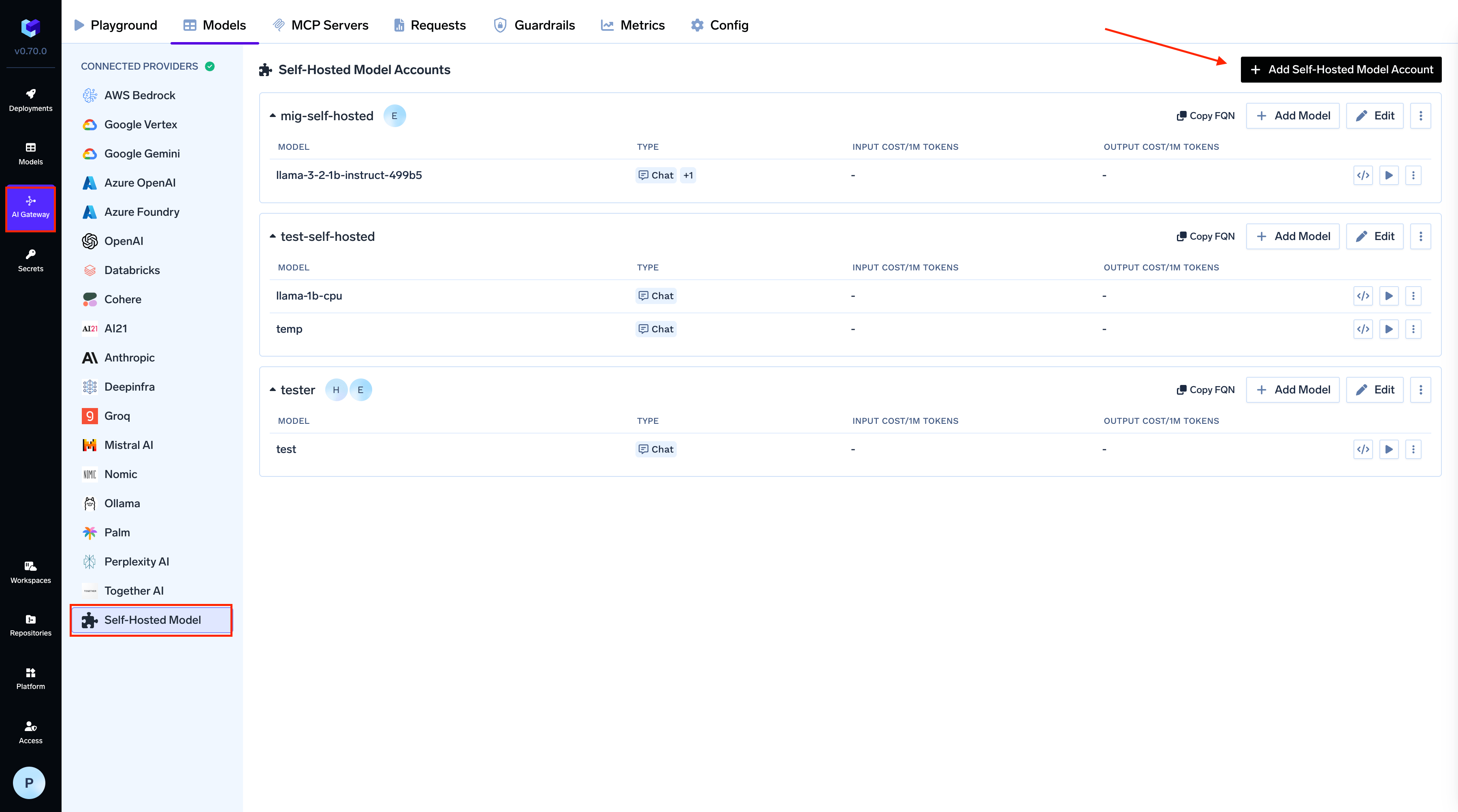

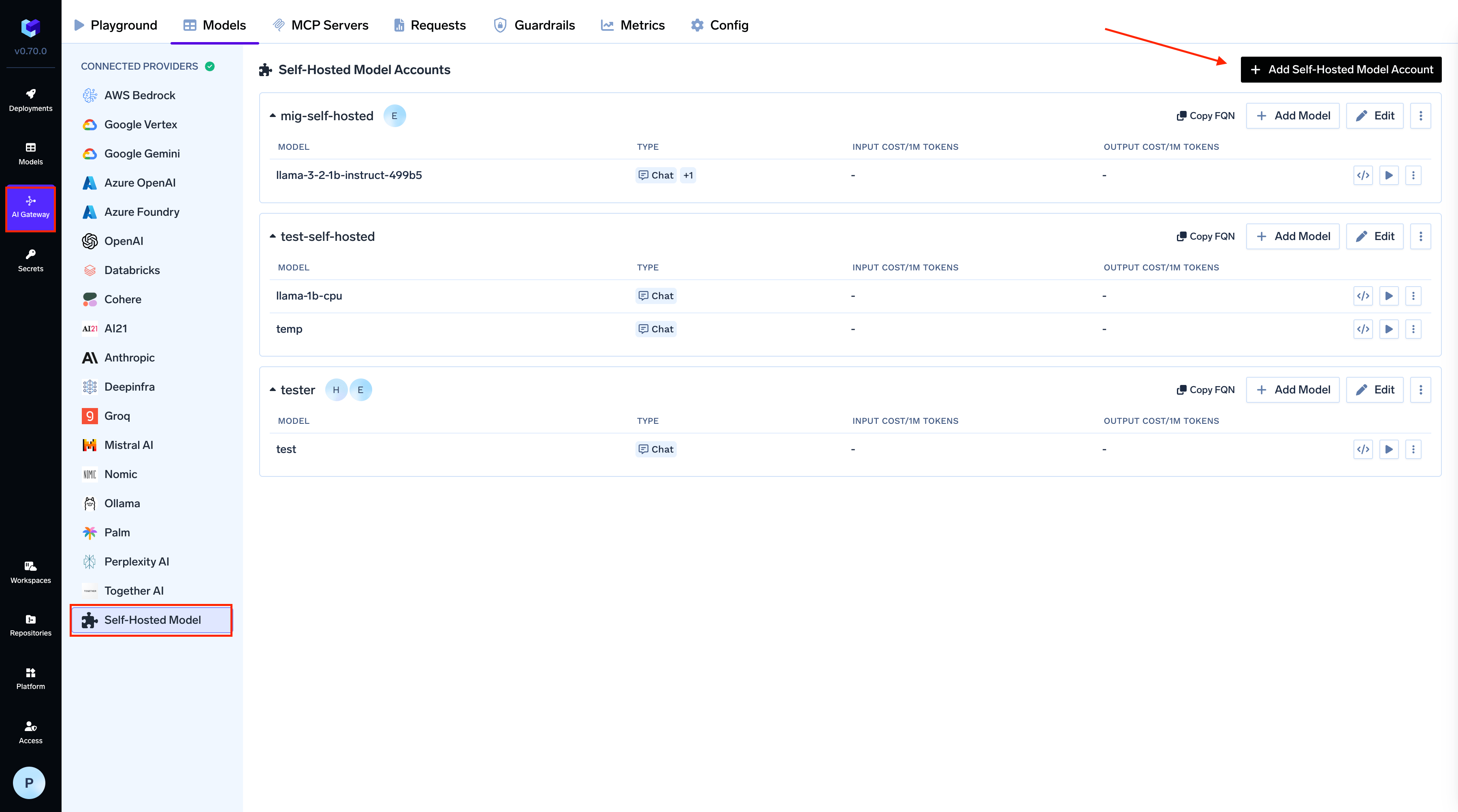

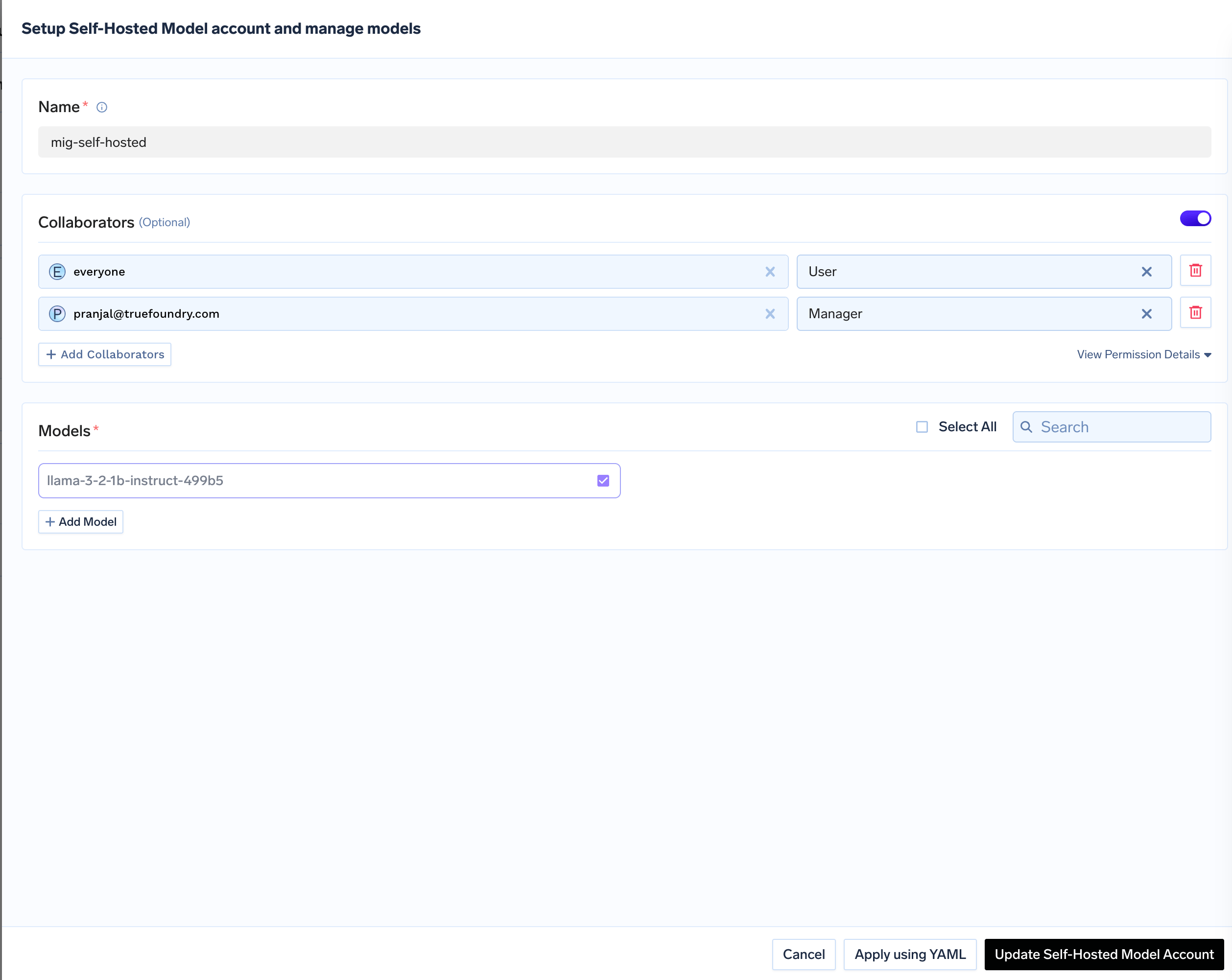
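Before registering a server, it can help to confirm that it actually answers on an OpenAI-style route. The sketch below is one way to do that, not an official check: it assumes the server mounts its OpenAI-compatible routes under `/v1` and returns the usual `{"data": [{"id": ...}]}` shape from `/v1/models`. The helper names `models_url` and `list_models` are hypothetical, and the exact path may differ depending on the framework and version you run.

```python
import json
import urllib.request


def models_url(base_url: str) -> str:
    """Build the model-listing URL for an OpenAI-compatible server."""
    # Assumption: OpenAI-style routes are mounted under /v1.
    return base_url.rstrip("/") + "/v1/models"


def list_models(base_url: str, timeout: float = 5.0) -> list:
    """Return the model IDs the server advertises (performs a network call)."""
    with urllib.request.urlopen(models_url(base_url), timeout=timeout) as resp:
        payload = json.load(resp)
    # OpenAI-style responses wrap the entries in a "data" list.
    return [entry["id"] for entry in payload.get("data", [])]
```

For example, `list_models("http://localhost:8000")` would query a server running locally on port 8000 (a placeholder address; substitute your own). A connection error or an unexpected response shape suggests the endpoint is not OpenAI-compatible at that path.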

Adding Self-Hosted Models to the Gateway

Follow these steps to add a self-hosted or external model (deployed on TrueFoundry or elsewhere) to the AI Gateway.

Navigate to Self Hosted Models in AI Gateway

From the TrueFoundry dashboard, navigate to AI Gateway > Models and select Self Hosted Models.

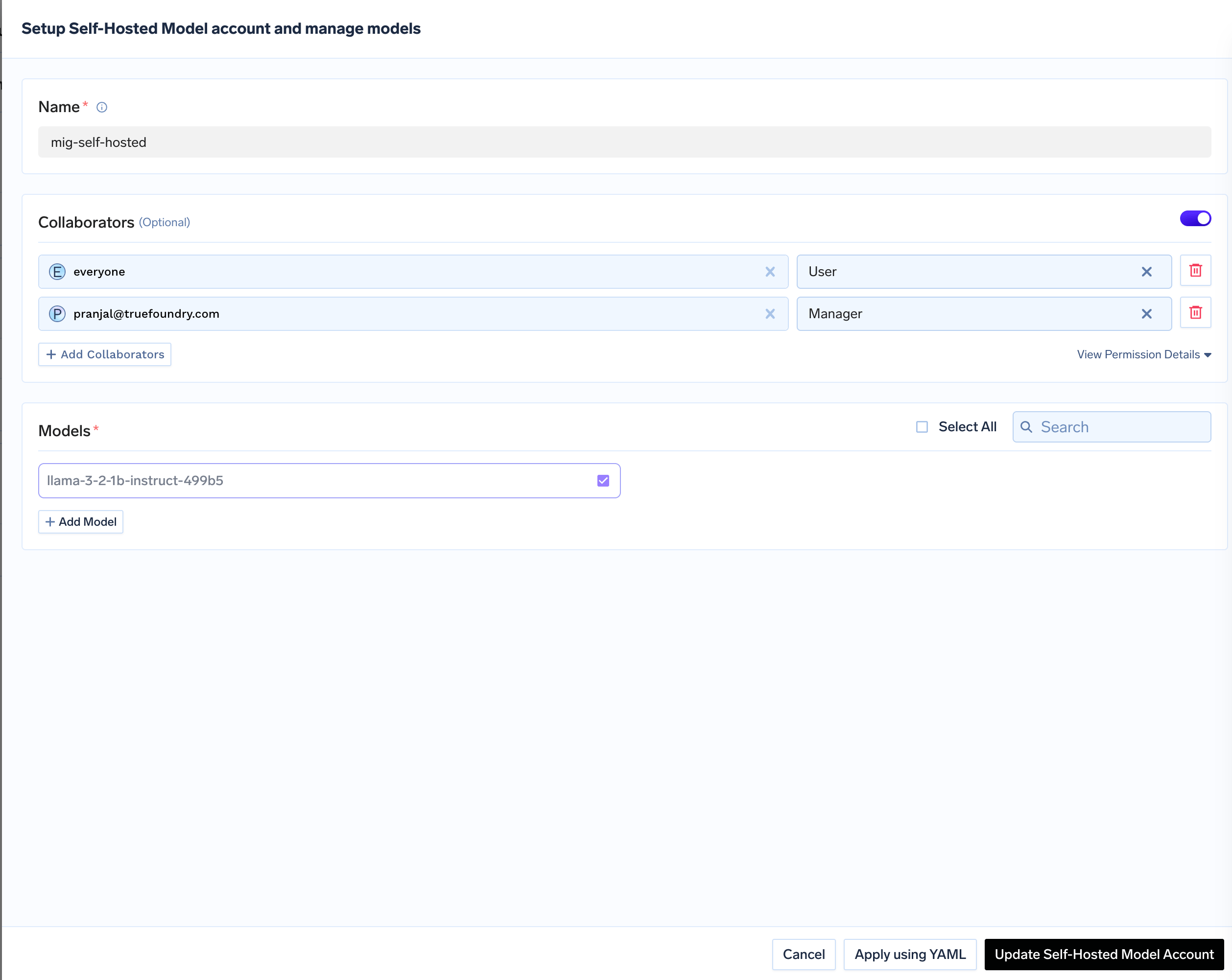

Add Collaborators

Add collaborators to your account to give other users or teams access to it. Learn more about access control here.

Inference

After adding the models, you can perform inference using an OpenAI-compatible API via the Playground or by integrating with your own application.
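As a rough illustration of the application-integration path, the sketch below sends a chat completion request in the OpenAI wire format using only the Python standard library. Everything specific here is an assumption rather than documented gateway behavior: the base URL, the API key, the model name, and the helper names `build_chat_request` and `chat` are placeholders, and the code assumes the gateway accepts a bearer token and serves `chat/completions` relative to the base URL you pass in. Check your gateway's own integration snippet for the real values.

```python
import json
import urllib.request


def build_chat_request(base_url: str, api_key: str, model: str, prompt: str) -> urllib.request.Request:
    """Build an OpenAI-style chat completion request without sending it."""
    body = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        url=base_url.rstrip("/") + "/chat/completions",
        data=json.dumps(body).encode("utf-8"),
        headers={
            "Content-Type": "application/json",
            # Assumption: the gateway authenticates with a bearer token.
            "Authorization": f"Bearer {api_key}",
        },
        method="POST",
    )


def chat(base_url: str, api_key: str, model: str, prompt: str, timeout: float = 30.0) -> str:
    """Send the request and return the first choice's text (network call)."""
    request = build_chat_request(base_url, api_key, model, prompt)
    with urllib.request.urlopen(request, timeout=timeout) as resp:
        payload = json.load(resp)
    # OpenAI-style responses place the reply under choices[0].message.content.
    return payload["choices"][0]["message"]["content"]
```

A call would look like `chat("https://gateway.example.com/v1", "YOUR_API_KEY", "my-self-hosted-model", "Hello")`, where all three values are hypothetical. Because the request follows the OpenAI format, an existing OpenAI client library pointed at the same base URL should work equally well.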