Adding Models

This section explains the steps to add Anthropic models and configure the required access controls.

Navigate to Anthropic Models in AI Gateway

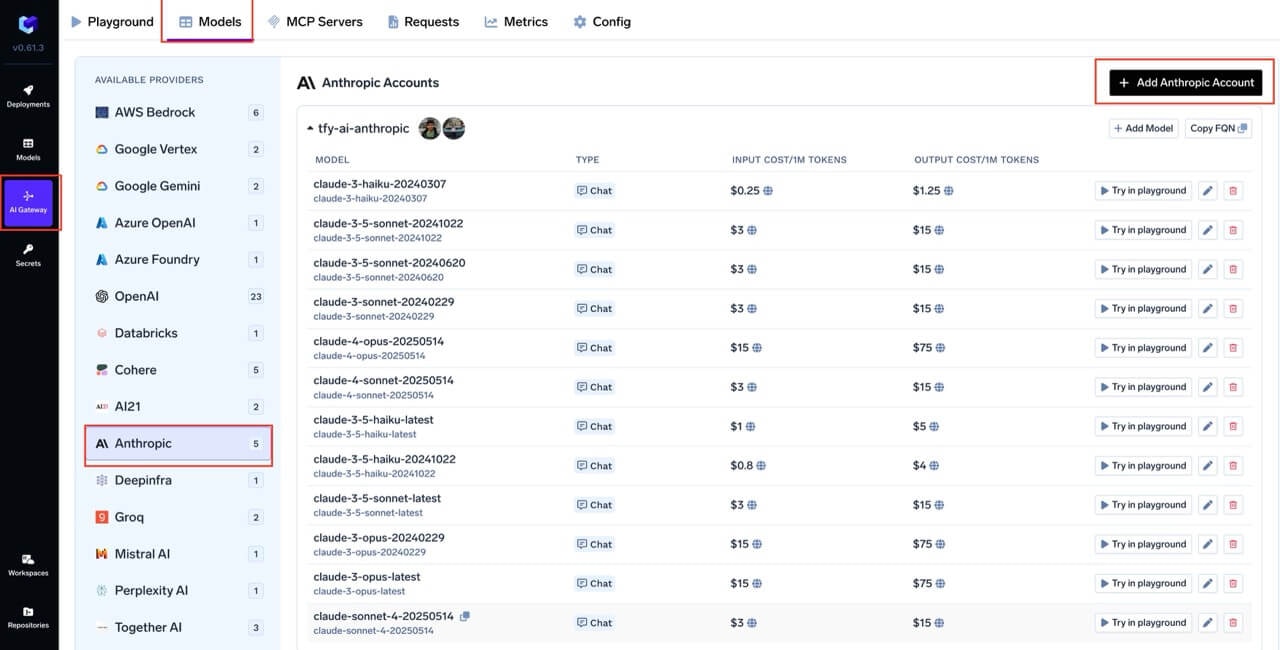

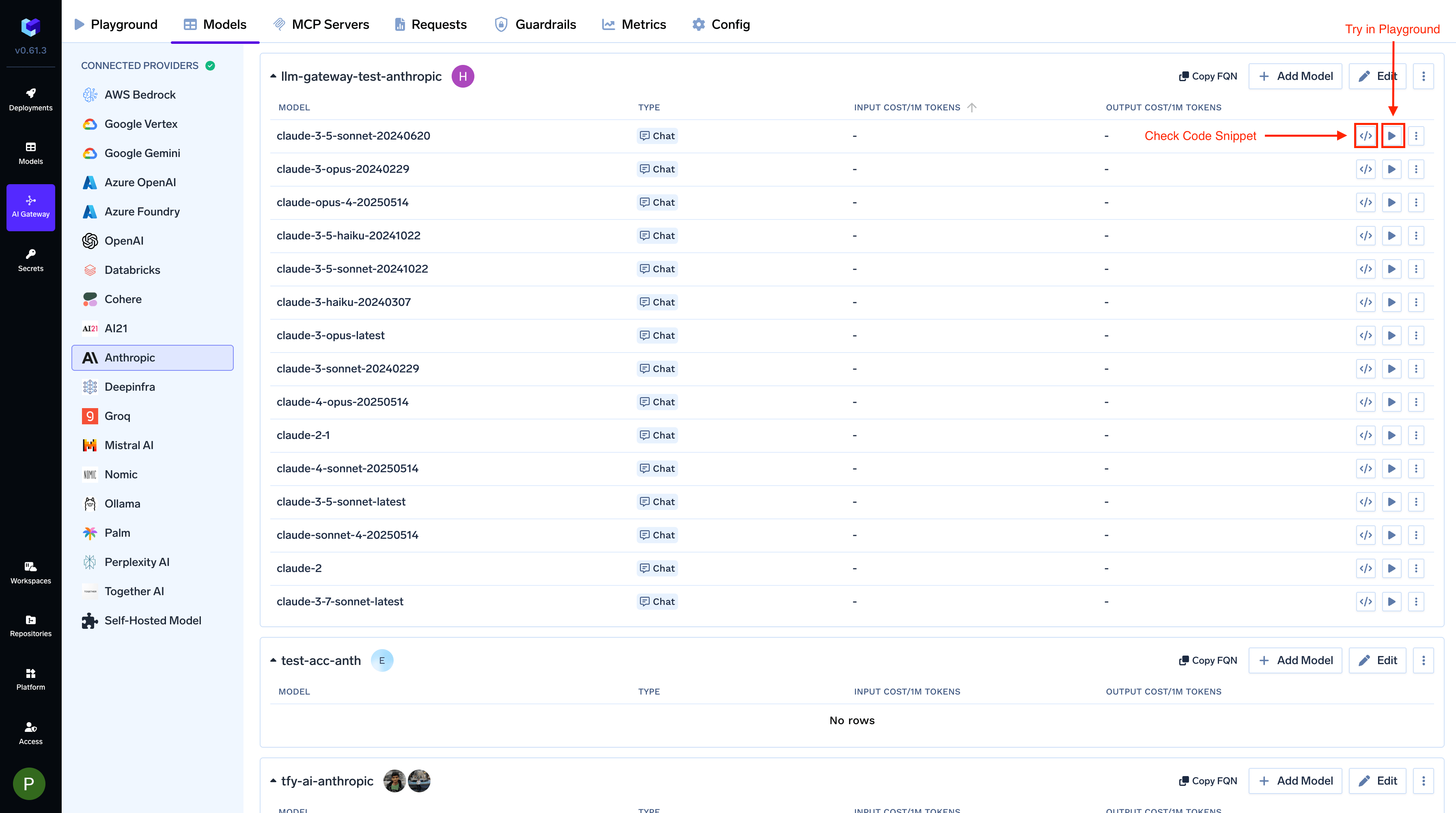

From the TrueFoundry dashboard, navigate to AI Gateway > Models and select Anthropic.

Add Anthropic Account Details

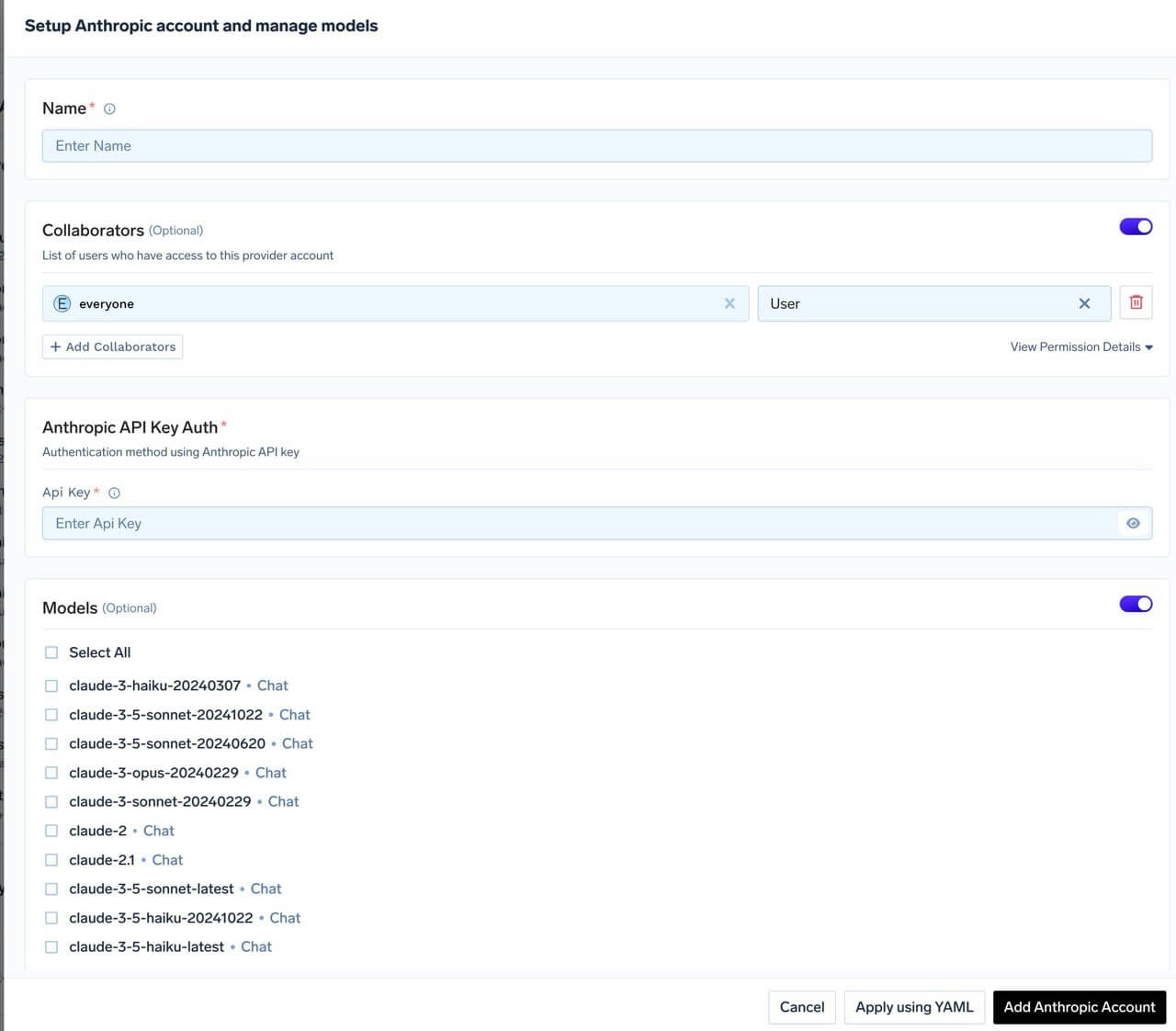

Click Add Anthropic Account. Give your Anthropic account a unique name and complete the form with your Anthropic authentication details (API key). Add collaborators to the account to give other users or teams access to it. Learn more about access control here. For Claude Code Max, leave the API key empty.

Add Models

Select the models you want to add from the list. Every model that appears in this checkbox list has public model cost support.

(Optional) If the model you are looking for is not in the list, scroll to the end of the list, click + Add Model, and fill in the form.

TrueFoundry AI Gateway supports all text and image models in Anthropic. The complete list of models supported by Anthropic can be found here.

Inference

After adding the models, you can perform inference through an OpenAI-compatible or Anthropic-compatible API, either in the Playground or by integrating with your own application.

For Anthropic streaming requests, AI Gateway supports fallback on `overloaded_error` before generation begins. The gateway waits for the first non-empty stream chunk; if it receives an `overloaded_error` before that first chunk, it automatically falls back to the next configured model. Learn more in Anthropic Stream Overload Fallback.

Supported APIs

Once your Anthropic provider account is configured, the following API surfaces are available through the gateway. The table below summarizes each endpoint alongside platform feature support (tracing, cost tracking).

Legend:

- ✅ Supported by Provider and TrueFoundry
- Supported by Provider, but not by TrueFoundry
- Provider does not support this feature

| API | Endpoint | Tracing | Cost Tracking |
|---|---|---|---|
| Chat Completions | /chat/completions | ✅ | ✅ |
| Messages API | /messages | ✅ | ✅ |
| Files API | /files | ✅ | ✅ |

Chat Completions

The chat completions endpoint is the most widely used. It supports streaming, tools, multimodal input (images, PDF), structured JSON outputs, prompt caching, and extended thinking.

Full provider capability matrix: Chat Completions API.
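A minimal sketch of a basic chat completion through the gateway with the OpenAI Python SDK. It assumes the gateway exposes an OpenAI-compatible base URL; the environment variables `TFY_BASE_URL` and `TFY_MODEL` and the helper `build_chat_request` are hypothetical names for illustration, so substitute your gateway URL and a model ID from your Anthropic account. The network call only runs when `TFY_API_KEY` is set.

```python
import os

# Placeholders (assumptions): your gateway's OpenAI-compatible base URL and a
# model ID from the Anthropic account you added.
BASE_URL = os.environ.get("TFY_BASE_URL", "<your-gateway-base-url>")
MODEL = os.environ.get("TFY_MODEL", "<anthropic-account-name>/<model-name>")


def build_chat_request(prompt: str, max_tokens: int = 512) -> dict:
    """Build keyword arguments for client.chat.completions.create()."""
    return {
        "model": MODEL,
        # Anthropic's Messages API requires an output limit, so pass it explicitly.
        "max_tokens": max_tokens,
        "messages": [
            {"role": "system", "content": "You are a concise assistant."},
            {"role": "user", "content": prompt},
        ],
    }


def main() -> None:
    # Imported here so the helper above works without the SDK installed.
    from openai import OpenAI

    client = OpenAI(api_key=os.environ["TFY_API_KEY"], base_url=BASE_URL)
    response = client.chat.completions.create(**build_chat_request("What does an AI gateway do?"))
    print(response.choices[0].message.content)


if __name__ == "__main__" and os.environ.get("TFY_API_KEY"):
    main()
```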

Streaming

Set `stream=True` to stream the response and iterate over the delta chunks. It is good practice to check defensively that `chunk.choices` is non-empty and that `delta.content` is not `None` before using it.
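A sketch of the streaming loop with both defensive checks, assuming the same OpenAI-compatible gateway setup (`TFY_BASE_URL`, `TFY_MODEL`, and `print_stream` are hypothetical names). The loop is factored into a helper so it can be exercised without a live gateway.

```python
import os
from typing import Iterable

BASE_URL = os.environ.get("TFY_BASE_URL", "<your-gateway-base-url>")  # placeholder
MODEL = os.environ.get("TFY_MODEL", "<anthropic-account-name>/<model-name>")  # placeholder


def print_stream(chunks: Iterable) -> str:
    """Print streamed text as it arrives and return the full reply."""
    parts = []
    for chunk in chunks:
        # Some chunks carry no choices at all; skip them.
        if not chunk.choices:
            continue
        text = chunk.choices[0].delta.content
        # Role-only or tool-call deltas have content=None.
        if text is not None:
            print(text, end="", flush=True)
            parts.append(text)
    return "".join(parts)


def main() -> None:
    from openai import OpenAI

    client = OpenAI(api_key=os.environ["TFY_API_KEY"], base_url=BASE_URL)
    stream = client.chat.completions.create(
        model=MODEL,
        max_tokens=512,
        messages=[{"role": "user", "content": "Write a haiku about routing."}],
        stream=True,
    )
    print_stream(stream)


if __name__ == "__main__" and os.environ.get("TFY_API_KEY"):
    main()
```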

Function calling / tools

Advertise a tool, run the `tool_calls` the model returns, send each result back as a `tool` role message, then request the final response. Use `tool_choice` to force the model to call a specific tool when you need deterministic behaviour.
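A sketch of the full tool round trip with the OpenAI SDK, assuming the gateway passes OpenAI-style `tools` and `tool_choice` through to Claude. The `get_weather` tool and its canned result are hypothetical. Note that the second request drops the forced `tool_choice`, otherwise the model would be made to call the tool again instead of answering.

```python
import json
import os

BASE_URL = os.environ.get("TFY_BASE_URL", "<your-gateway-base-url>")  # placeholder
MODEL = os.environ.get("TFY_MODEL", "<anthropic-account-name>/<model-name>")  # placeholder


def get_weather(city: str) -> dict:
    """Hypothetical local tool; a real one would call a weather service."""
    return {"city": city, "forecast": "sunny", "temp_c": 22}


TOOL_IMPLS = {"get_weather": get_weather}
TOOLS = [{
    "type": "function",
    "function": {
        "name": "get_weather",
        "description": "Get the current weather for a city.",
        "parameters": {
            "type": "object",
            "properties": {"city": {"type": "string"}},
            "required": ["city"],
        },
    },
}]


def run_tool_calls(tool_calls) -> list[dict]:
    """Execute each requested tool locally and build the tool-role replies."""
    replies = []
    for call in tool_calls:
        func = TOOL_IMPLS[call.function.name]
        args = json.loads(call.function.arguments)
        replies.append({
            "role": "tool",
            "tool_call_id": call.id,
            "content": json.dumps(func(**args)),
        })
    return replies


def main() -> None:
    from openai import OpenAI

    client = OpenAI(api_key=os.environ["TFY_API_KEY"], base_url=BASE_URL)
    messages = [{"role": "user", "content": "What's the weather in Paris?"}]
    first = client.chat.completions.create(
        model=MODEL,
        max_tokens=512,
        messages=messages,
        tools=TOOLS,
        # Force this specific tool; use "auto" to let the model decide.
        tool_choice={"type": "function", "function": {"name": "get_weather"}},
    )
    assistant = first.choices[0].message
    # Echo the assistant turn (including tool_calls), then the tool results.
    messages.append(assistant.model_dump(exclude_none=True))
    messages += run_tool_calls(assistant.tool_calls)
    final = client.chat.completions.create(
        model=MODEL, max_tokens=512, messages=messages, tools=TOOLS
    )
    print(final.choices[0].message.content)


if __name__ == "__main__" and os.environ.get("TFY_API_KEY"):
    main()
```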

Vision (multimodal images)

Claude 3 and later models support image inputs via the `image_url` content part. The URL can be a public HTTP URL or an inline `data:image/...;base64,...` URI. For self-contained examples we recommend the inline form.
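A sketch of sending an inline base64 image, assuming the OpenAI-compatible setup used above. The file `photo.png` and the helper `image_part` are hypothetical; the helper only builds the `image_url` content part with a `data:` URI.

```python
import base64
import os

BASE_URL = os.environ.get("TFY_BASE_URL", "<your-gateway-base-url>")  # placeholder
MODEL = os.environ.get("TFY_MODEL", "<anthropic-account-name>/<model-name>")  # placeholder


def image_part(image_bytes: bytes, mime_type: str = "image/png") -> dict:
    """Wrap raw image bytes as an inline data: URI image_url content part."""
    encoded = base64.b64encode(image_bytes).decode("ascii")
    return {"type": "image_url", "image_url": {"url": f"data:{mime_type};base64,{encoded}"}}


def main() -> None:
    from openai import OpenAI

    client = OpenAI(api_key=os.environ["TFY_API_KEY"], base_url=BASE_URL)
    with open("photo.png", "rb") as f:  # hypothetical local image
        part = image_part(f.read(), "image/png")
    # A public URL also works: {"type": "image_url", "image_url": {"url": "https://..."}}
    response = client.chat.completions.create(
        model=MODEL,
        max_tokens=512,
        messages=[{
            "role": "user",
            "content": [
                {"type": "text", "text": "Describe this image in one sentence."},
                part,
            ],
        }],
    )
    print(response.choices[0].message.content)


if __name__ == "__main__" and os.environ.get("TFY_API_KEY"):
    main()
```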

PDF document input

Claude models support PDF documents via the `file` content type with base64 encoding.
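A sketch of attaching a PDF as a base64 `file` content part. The part shape (`file.filename` plus `file.file_data` as a `data:application/pdf;base64,...` URI) follows OpenAI's chat format and is an assumption about what the gateway accepts; `report.pdf` and `pdf_part` are hypothetical.

```python
import base64
import os

BASE_URL = os.environ.get("TFY_BASE_URL", "<your-gateway-base-url>")  # placeholder
MODEL = os.environ.get("TFY_MODEL", "<anthropic-account-name>/<model-name>")  # placeholder


def pdf_part(pdf_bytes: bytes, filename: str = "document.pdf") -> dict:
    """Wrap PDF bytes as a base64 `file` content part (OpenAI-style shape, assumed)."""
    encoded = base64.b64encode(pdf_bytes).decode("ascii")
    return {
        "type": "file",
        "file": {"filename": filename, "file_data": f"data:application/pdf;base64,{encoded}"},
    }


def main() -> None:
    from openai import OpenAI

    client = OpenAI(api_key=os.environ["TFY_API_KEY"], base_url=BASE_URL)
    with open("report.pdf", "rb") as f:  # hypothetical local PDF
        part = pdf_part(f.read(), "report.pdf")
    response = client.chat.completions.create(
        model=MODEL,
        max_tokens=1024,
        messages=[{
            "role": "user",
            "content": [{"type": "text", "text": "Summarize this document."}, part],
        }],
    )
    print(response.choices[0].message.content)


if __name__ == "__main__" and os.environ.get("TFY_API_KEY"):
    main()
```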

Structured outputs (JSON schema)

Use `response_format={"type": "json_schema", ...}` to force the model to return data matching a JSON schema. Claude 4.5/4.6 models use native JSON schema support; older models use a tool-conversion fallback.
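A sketch using the OpenAI `json_schema` response format, assuming the gateway accepts it unchanged for Claude models. The `person` schema and the `parse_person` check are hypothetical; validating the parsed reply on the client is a cheap safeguard, especially on older models that go through the tool-conversion fallback.

```python
import json
import os

BASE_URL = os.environ.get("TFY_BASE_URL", "<your-gateway-base-url>")  # placeholder
MODEL = os.environ.get("TFY_MODEL", "<anthropic-account-name>/<model-name>")  # placeholder

PERSON_SCHEMA = {
    "type": "object",
    "properties": {
        "name": {"type": "string"},
        "age": {"type": "integer"},
    },
    "required": ["name", "age"],
    "additionalProperties": False,
}

RESPONSE_FORMAT = {
    "type": "json_schema",
    "json_schema": {"name": "person", "schema": PERSON_SCHEMA, "strict": True},
}


def parse_person(content: str) -> dict:
    """Parse the model's JSON reply and check that the required keys are present."""
    data = json.loads(content)
    missing = [key for key in PERSON_SCHEMA["required"] if key not in data]
    if missing:
        raise ValueError(f"missing keys: {missing}")
    return data


def main() -> None:
    from openai import OpenAI

    client = OpenAI(api_key=os.environ["TFY_API_KEY"], base_url=BASE_URL)
    response = client.chat.completions.create(
        model=MODEL,
        max_tokens=512,
        messages=[{"role": "user", "content": "Extract the person: Ada Lovelace, aged 36."}],
        response_format=RESPONSE_FORMAT,
    )
    print(parse_person(response.choices[0].message.content))


if __name__ == "__main__" and os.environ.get("TFY_API_KEY"):
    main()
```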

Prompt caching

Anthropic requires explicit `cache_control` on the content blocks you want cached (unlike OpenAI's automatic caching). Cached tokens appear in the usage response as `cache_creation_input_tokens` (first call) and `cache_read_input_tokens` (subsequent calls).
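A sketch of marking a long system prompt for caching and reading the cache counters. It assumes the gateway forwards `cache_control` set on an OpenAI-style text content part and returns the two Anthropic counters as extra fields on `usage`; both are read with `getattr` defaults because the OpenAI SDK types do not declare them. `handbook.txt` and the helper names are hypothetical. Anthropic only caches prefixes above a model-dependent minimum length, so a short prompt will report zero.

```python
import os

BASE_URL = os.environ.get("TFY_BASE_URL", "<your-gateway-base-url>")  # placeholder
MODEL = os.environ.get("TFY_MODEL", "<anthropic-account-name>/<model-name>")  # placeholder


def cached_system_message(text: str) -> dict:
    """System message whose text block is marked for Anthropic prompt caching."""
    return {
        "role": "system",
        "content": [
            {"type": "text", "text": text, "cache_control": {"type": "ephemeral"}},
        ],
    }


def cache_counters(usage) -> tuple[int, int]:
    """Return (cache_creation_input_tokens, cache_read_input_tokens), 0 if absent."""
    created = getattr(usage, "cache_creation_input_tokens", None) or 0
    read = getattr(usage, "cache_read_input_tokens", None) or 0
    return created, read


def main() -> None:
    from openai import OpenAI

    client = OpenAI(api_key=os.environ["TFY_API_KEY"], base_url=BASE_URL)
    with open("handbook.txt", encoding="utf-8") as f:  # hypothetical long document
        system = cached_system_message(f.read())
    for question in ["Summarize section 1.", "Summarize section 2."]:
        response = client.chat.completions.create(
            model=MODEL,
            max_tokens=512,
            messages=[system, {"role": "user", "content": question}],
        )
        created, read = cache_counters(response.usage)
        # Typically: created > 0 on the first call, read > 0 on the second.
        print(f"cache created={created} read={read}")


if __name__ == "__main__" and os.environ.get("TFY_API_KEY"):
    main()
```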

Extended thinking (reasoning)

Claude 3.7 Sonnet, Claude 4, and Claude 4.5 series models support extended thinking. Use the `reasoning_effort` parameter; the gateway translates it into Anthropic's native `thinking` parameter format.

The gateway maps `reasoning_effort` to a `thinking.budget_tokens` ratio of the request's `max_tokens`: `none` = 0%, `low` = 30%, `medium` = 60%, `high` = 90%.

The response includes `reasoning_content` (plain text) and `thinking_blocks` (structured blocks with cryptographic signatures required for multi-turn reasoning continuity).
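A sketch that makes the budget arithmetic concrete and sends a reasoning request. `thinking_budget` only illustrates the documented percentages and is not gateway code; rounding down is an assumption. With `max_tokens=4000`, `medium` gives 4000 × 60% = 2400 thinking tokens. `reasoning_content` and `thinking_blocks` are read defensively because the OpenAI SDK types do not declare them.

```python
import os

BASE_URL = os.environ.get("TFY_BASE_URL", "<your-gateway-base-url>")  # placeholder
MODEL = os.environ.get("TFY_MODEL", "<anthropic-account-name>/<model-name>")  # placeholder

# Documented share of max_tokens that becomes thinking.budget_tokens.
EFFORT_PERCENT = {"none": 0, "low": 30, "medium": 60, "high": 90}


def thinking_budget(max_tokens: int, effort: str) -> int:
    """Approximate budget_tokens for a request (integer floor is an assumption)."""
    return max_tokens * EFFORT_PERCENT[effort] // 100


def main() -> None:
    from openai import OpenAI

    client = OpenAI(api_key=os.environ["TFY_API_KEY"], base_url=BASE_URL)
    # Anthropic rejects thinking budgets below 1,024 tokens, so leave room here.
    max_tokens = 4000
    print("expected budget:", thinking_budget(max_tokens, "medium"))
    response = client.chat.completions.create(
        model=MODEL,
        max_tokens=max_tokens,
        reasoning_effort="medium",
        messages=[{"role": "user", "content": "Is 1001 prime? Explain briefly."}],
    )
    message = response.choices[0].message
    print("reasoning:", getattr(message, "reasoning_content", None))
    print("answer:", message.content)
    # Send thinking_blocks back unchanged when continuing a multi-turn conversation.
    blocks = getattr(message, "thinking_blocks", None) or []
    print("thinking blocks:", len(blocks))


if __name__ == "__main__" and os.environ.get("TFY_API_KEY"):
    main()
```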

Messages API

Anthropic's native Messages API (`/messages`) is also exposed through the gateway, so you can use the official `anthropic` Python SDK directly.

You get the same gateway features (routing, logging, rate limiting, budget management) as with the OpenAI-compatible interface.

Full docs: Messages API, Native SDK Support.

The gateway accepts both Anthropic SDK authentication patterns and translates them internally:

- `api_key=TFY_API_KEY`: the SDK sends the `x-api-key` header.
- `auth_token=TFY_API_KEY`: the SDK sends the `Authorization: Bearer` header.

`api_key` is the idiomatic Anthropic SDK pattern; use it unless you have a reason to send a Bearer token.
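A sketch of calling the native Messages API with the `anthropic` SDK. The base URL the Anthropic SDK needs may differ from the OpenAI-compatible one (the SDK appends its own `/v1/messages` path), so `TFY_ANTHROPIC_BASE_URL` is a hypothetical placeholder; check Native SDK Support for the exact value. `text_of` is a hypothetical helper that joins text blocks and skips others, such as thinking blocks.

```python
import os

# Placeholders (assumptions): the gateway base URL for the Anthropic SDK and a
# model ID from your Anthropic account.
BASE_URL = os.environ.get("TFY_ANTHROPIC_BASE_URL", "<your-gateway-anthropic-base-url>")
MODEL = os.environ.get("TFY_MODEL", "<anthropic-account-name>/<model-name>")


def text_of(content_blocks) -> str:
    """Join the text blocks of a Messages API response, skipping other block types."""
    return "".join(block.text for block in content_blocks if block.type == "text")


def main() -> None:
    import anthropic

    # api_key= sends the x-api-key header; auth_token= would send
    # Authorization: Bearer instead.
    client = anthropic.Anthropic(api_key=os.environ["TFY_API_KEY"], base_url=BASE_URL)
    message = client.messages.create(
        model=MODEL,
        max_tokens=512,
        messages=[{"role": "user", "content": "Hello, Claude"}],
    )
    print(text_of(message.content))
    print("tokens in/out:", message.usage.input_tokens, message.usage.output_tokens)


if __name__ == "__main__" and os.environ.get("TFY_API_KEY"):
    main()
```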

Streaming

Use `.messages.stream()` and iterate over `text_stream` for incremental output.
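A sketch of streaming with the Anthropic SDK's `messages.stream()` helper, which is used as a context manager and exposes `text_stream` and `get_final_message()`. The base URL and model are the same hypothetical placeholders as in the Messages API sketch; `print_text_stream` is a hypothetical helper.

```python
import os
from typing import Iterable

BASE_URL = os.environ.get("TFY_ANTHROPIC_BASE_URL", "<your-gateway-anthropic-base-url>")  # placeholder
MODEL = os.environ.get("TFY_MODEL", "<anthropic-account-name>/<model-name>")  # placeholder


def print_text_stream(text_stream: Iterable[str]) -> str:
    """Print text deltas as they arrive and return the concatenated reply."""
    parts = []
    for text in text_stream:
        print(text, end="", flush=True)
        parts.append(text)
    print()
    return "".join(parts)


def main() -> None:
    import anthropic

    client = anthropic.Anthropic(api_key=os.environ["TFY_API_KEY"], base_url=BASE_URL)
    with client.messages.stream(
        model=MODEL,
        max_tokens=512,
        messages=[{"role": "user", "content": "Count from 1 to 5."}],
    ) as stream:
        print_text_stream(stream.text_stream)
        # The accumulated message is available once the stream is consumed.
        final = stream.get_final_message()
    print("stop reason:", final.stop_reason)


if __name__ == "__main__" and os.environ.get("TFY_API_KEY"):
    main()
```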

Files API

Upload, list, retrieve, and delete files held by the gateway. The gateway translates the OpenAI-compatible Files API into Anthropic’s native Files API automatically.

Full docs: Files API.

File content retrieval (`files.content`) only works for files created by skills or the code execution tool. User-uploaded files cannot be downloaded back; you can only list their metadata and delete them.
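A sketch of the upload, list, retrieve, and delete lifecycle through the OpenAI SDK's Files client. Two points are assumptions to verify against the Files API docs: the `purpose` value, and whether routing file calls to a specific Anthropic account needs extra request parameters. `notes.pdf` and `describe` are hypothetical. The sketch deliberately skips `files.content()` for the uploaded file, since user uploads cannot be downloaded back.

```python
import os

BASE_URL = os.environ.get("TFY_BASE_URL", "<your-gateway-base-url>")  # placeholder


def describe(file_obj) -> str:
    """One-line summary of a file object's metadata."""
    return f"{file_obj.id} {file_obj.filename} ({file_obj.bytes} bytes)"


def main() -> None:
    from openai import OpenAI

    client = OpenAI(api_key=os.environ["TFY_API_KEY"], base_url=BASE_URL)

    # Upload; purpose="user_data" is an assumption, check the Files API docs.
    with open("notes.pdf", "rb") as f:  # hypothetical local file
        uploaded = client.files.create(file=f, purpose="user_data")
    print("uploaded:", describe(uploaded))

    # Metadata is always available: list everything, then fetch one file.
    for file_obj in client.files.list():
        print(describe(file_obj))
    print("retrieved:", describe(client.files.retrieve(uploaded.id)))

    # files.content() only applies to files produced by skills or code
    # execution, so it is not called for this user upload.
    client.files.delete(uploaded.id)
    print("deleted:", uploaded.id)


if __name__ == "__main__" and os.environ.get("TFY_API_KEY"):
    main()
```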