Stop guessing, start measuring: A systematic workflow for improving prompts in production AI systems

In many cases, teams write prompts casually, much like informal emails. It is a natural process that involves little thought about structure. This relaxed approach is fine for exploratory work or for quickly building a prototype.

Once you put a feature built on a large language model in front of real users, however, prompts become critical. A poorly designed prompt leads to errors. Responses may be inconsistent, may omit important information, or may simply be unreliable.

Debugging is also unexpectedly complex when a problem occurs. You often have to work out whether the problem lies with the model, the input, or the prompt itself.

This post describes the exact process we developed to take prompts from "probably good enough" to "definitely good enough for production". It uses real criteria, real evaluation datasets, and real benchmarks across multiple models. No magic. Just structured engineering applied to prompts.

Why prompts are more than just instructions

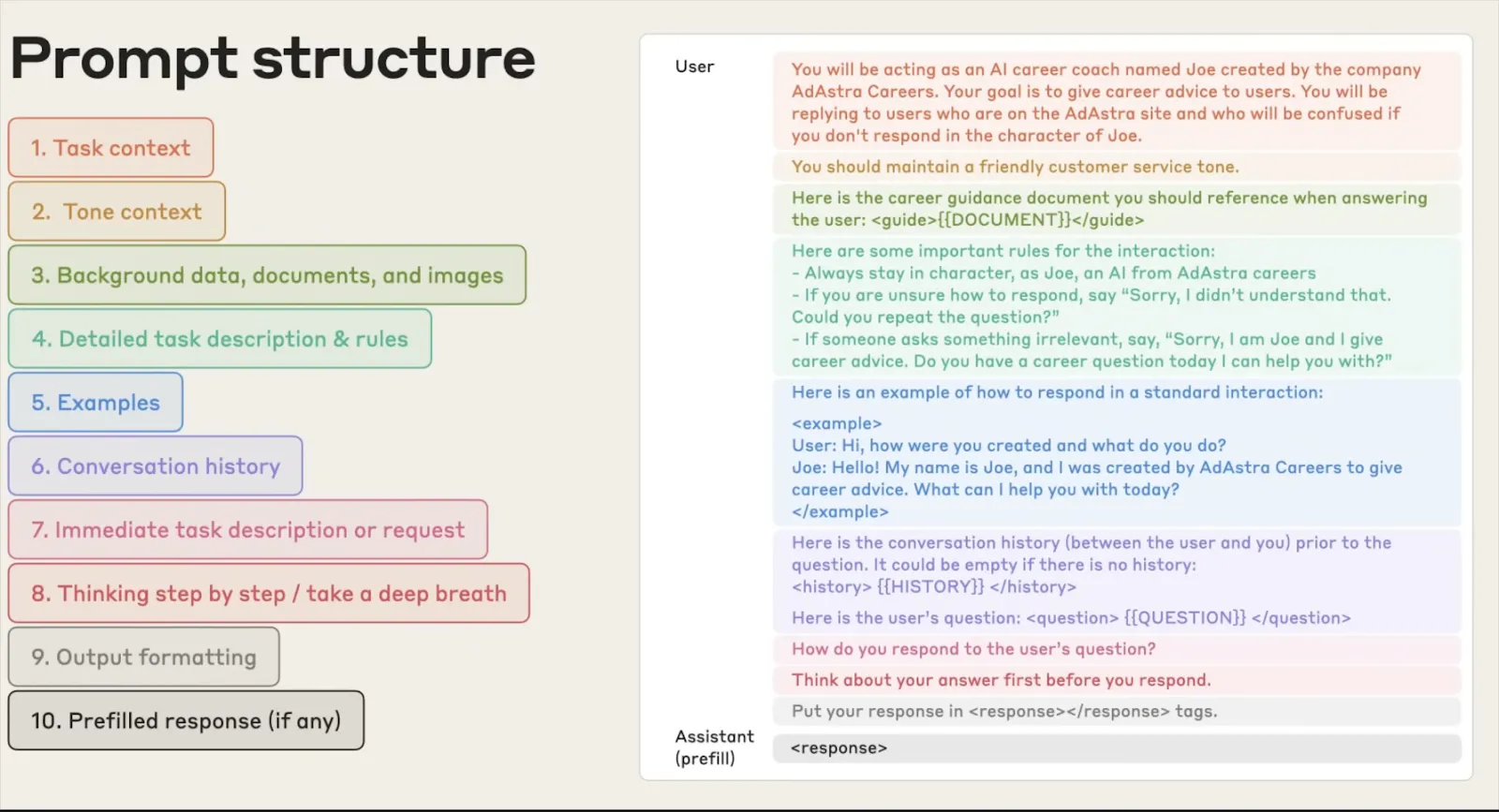

When most people think of a prompt, they picture a simple request like "Summarize this document" or "Extract entities from this text". In the real world, a prompt is much more than that. It is the fundamental interface between your program and the model's behavior. A good prompt establishes the model's persona, the rules of engagement, the output format, and how to handle the unexpected.

The problem with prompts is that they are rarely tested thoroughly. They get designed, implemented, and then checked just to see whether they work. You make a change here and add a rule there, and then you hope it's okay. Sometimes it is. Usually it isn't. And when a prompt fails, it often fails quietly; you may not even notice.

What actually makes a prompt "good"?

A good prompt is not only clear; it is also structured. Think of it as an API contract between you and the model. It should define:

- A role or persona: In what context should the model operate?

- Instructions: What exactly should it do?

- Constraints: What should it never do?

- Output specification: What format, structure, and length are expected?

- Contextual guidance: What background does the model need to act without making assumptions?

- Examples: What does good output look like?

When all of these are in place, the model has what it needs to behave consistently, reliably, and predictably across inputs, and even across model versions.
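One way to make this contract concrete is to store each element as its own field and render the fields in a fixed order. The sketch below uses a plain Python dataclass. `PromptSpec`, its field names, and the Markdown-style section headers are illustrative choices, not part of any particular tool.

```python
from dataclasses import dataclass, field


@dataclass
class PromptSpec:
    """Illustrative container for the six parts of a prompt 'contract'."""
    role: str
    instructions: str
    constraints: list[str] = field(default_factory=list)
    output_spec: str = ""
    context: str = ""
    examples: list[str] = field(default_factory=list)

    def missing_sections(self) -> list[str]:
        # Names of contract elements that are still empty.
        names = ["role", "instructions", "constraints", "output_spec", "context", "examples"]
        return [name for name in names if not getattr(self, name)]

    def render(self) -> str:
        # Fixed order: role, then context -> instructions -> constraints -> output format,
        # with examples last. Empty optional sections are skipped.
        parts = [f"# Role\n{self.role}"]
        if self.context:
            parts.append(f"# Context\n{self.context}")
        parts.append(f"# Instructions\n{self.instructions}")
        if self.constraints:
            parts.append("# Constraints\n" + "\n".join(f"- {c}" for c in self.constraints))
        if self.output_spec:
            parts.append(f"# Output format\n{self.output_spec}")
        if self.examples:
            parts.append("# Examples\n" + "\n\n".join(self.examples))
        return "\n\n".join(parts)
```

Calling `missing_sections()` before a prompt ships is a cheap first check that no part of the contract was forgotten.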

The real cost of poorly structured prompts in production

Here is what we have seen poorly structured prompts do in real deployments:

Outputs that look right but aren't: The model returns a response that appears to have the correct format but contains subtle errors, because the specification was unclear.

Cross-model failures: The prompt works on GPT-4 but produces inconsistent responses on Claude and open-source models. Nobody tested it across models before deployment.

Silent regressions: Changing one word to fix one problem introduces three new problems, and nobody notices them until someone complains.
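The first failure mode can often be caught mechanically. Instead of eyeballing the output, parse it and check it against the shape the prompt asked for. The following is a minimal sketch that assumes the prompt requests a JSON object. The function name `validate_output` and the example schema are hypothetical.

```python
import json


def validate_output(raw: str, required: dict[str, type]) -> list[str]:
    """Return a list of problems; an empty list means the output passed."""
    try:
        data = json.loads(raw)
    except json.JSONDecodeError as exc:
        # Typical case: the model wrapped the JSON in chatty prose.
        return [f"not valid JSON: {exc.msg}"]
    if not isinstance(data, dict):
        return ["top-level value is not a JSON object"]
    problems = []
    for key, expected in required.items():
        if key not in data:
            problems.append(f"missing key: {key}")
        elif not isinstance(data[key], expected):
            problems.append(f"{key}: expected {expected.__name__}, got {type(data[key]).__name__}")
    return problems
```

For example, an output such as `{"summary": "ok", "priority": "high"}` looks plausible but fails when the prompt promised an integer priority.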

The root cause is always the same: nobody treated the prompt as something that has to be tested and validated. We built this process to fix that.

Our prompt enhancement workflow: step by step

The workflow consists of six steps, each building on the previous one. Skip one, and the results quickly become unreliable. Here is how it works end to end.

Step 1: Evaluating the prompt

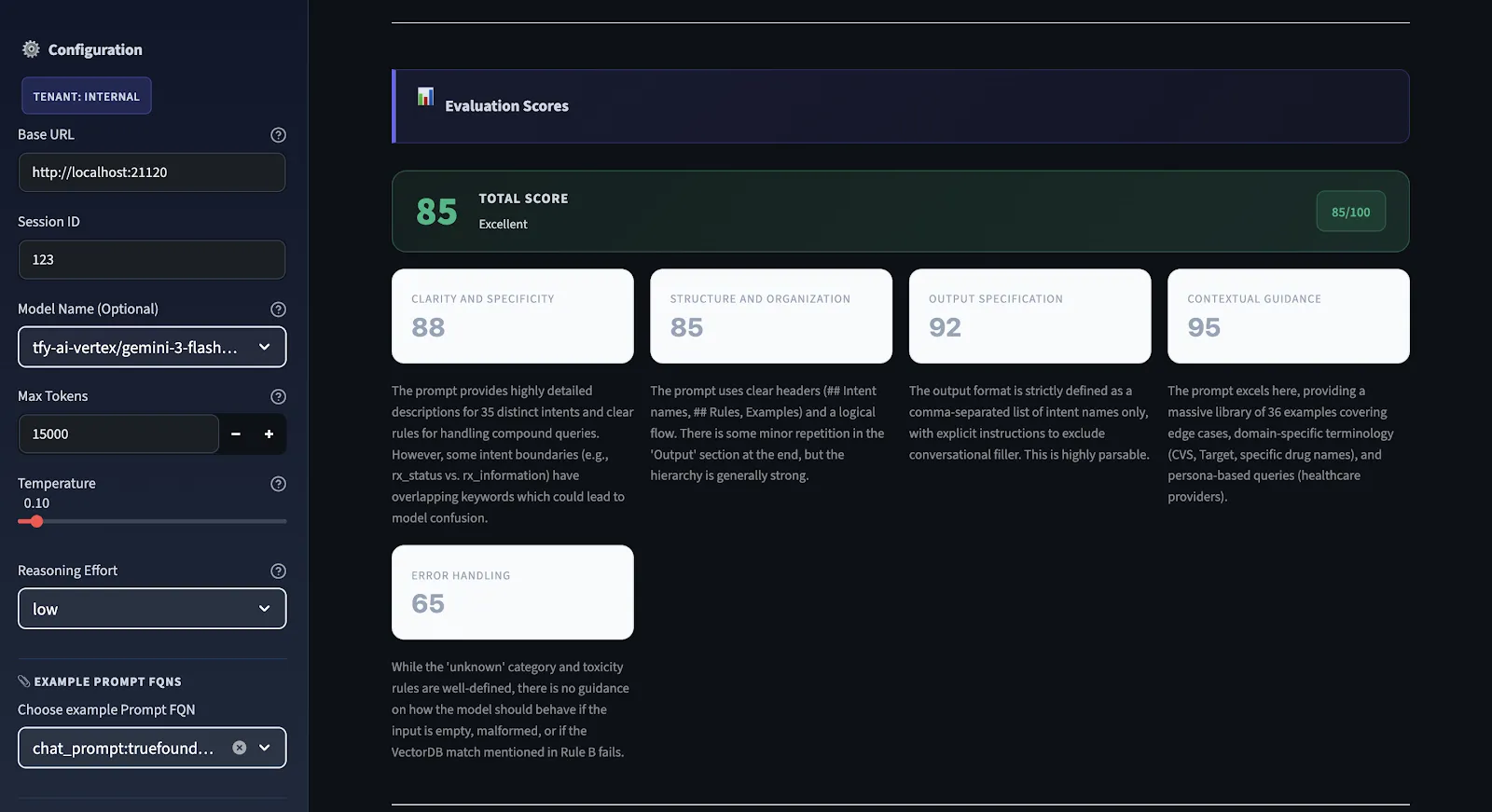

Before changing anything, we want to know what is broken. We run every prompt through a structured evaluation engine. It scores the prompt across five distinct dimensions and produces an overall quality score from 0 to 100.

We don't use subjective scoring. Every dimension has clear criteria and strict caps. For example, if a prompt has no output specification at all, its output-specification score is capped. That dimension therefore cannot score high, no matter how well written the prompt's instructions are. A score below 75 means the prompt is not yet production-ready. A score above 90 means it is solid across all dimensions.
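A cap of this kind is a simple clamp applied before any averaging. The sketch below uses the dimension names from Step 2 as dictionary keys. The cap value of 40 is a placeholder, since the post does not state the actual limit.

```python
# Placeholder cap; the post does not publish the real value.
OUTPUT_SPEC_CAP = 40.0


def apply_caps(raw_scores: dict[str, float], has_output_spec: bool) -> dict[str, float]:
    """Return a copy of the dimension scores with hard caps applied."""
    scores = dict(raw_scores)
    if not has_output_spec:
        # With no output specification, this dimension cannot score high,
        # however well the rest of the prompt is written.
        scores["output_spec"] = min(scores["output_spec"], OUTPUT_SPEC_CAP)
    return scores
```

Applying the cap before the mean keeps a single missing element visible in the overall score instead of letting strong dimensions mask it.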

Step 2: Generating recommendations from 5 criteria

This is the diagnostic engine of the workflow. Each prompt is scored from 0 to 100 on five specific criteria. The overall score is the arithmetic mean of the five. Here is what each criterion measures and why it matters:

1. Clarity and specificity

Are the instructions clear enough that two different models would understand them in exactly the same way? Vague instructions cause more inconsistency than any other single factor. If you are not sure how a human would interpret your prompt, you probably can't be sure how a model will interpret it either. If a human could read it in more than one way, a model can also read it in more than one way, correctly or incorrectly.

2. Structure and organization

Does the prompt flow logically from context → instructions → constraints → output format? A disorganized prompt forces the model to work out what matters and in what order. Good structure makes the model's job easier and your results more reliable.

3. Output specification

Are the expected output format, structure, and length precisely defined? If a downstream parser consumes the output, is there any remaining ambiguity about what that output will look like? This criterion targets the most common failure mode: outputs that look right but cannot be parsed.

4. Contextual guidance

Does the prompt give the model enough context to work without making assumptions? A model forced to make assumptions will sooner or later make wrong ones. Supplying domain terminology, boundary conditions, and relevant background removes this class of error.

5. Error handling

Are edge cases covered? Does the prompt say what to do when the input is ambiguous, incomplete, or out of bounds? This is the most frequently overlooked criterion and the source of most production issues. The prompt has to address hallucinations, unexpected input formats, and missing information.

Scoring scale: 90–100 is production-ready. 75–89 has gaps but is functional. 50–74 works but is unreliable. Below 50 indicates significant structural problems that must be fixed before shipping.
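Combining the five criterion scores and mapping the result onto this scale takes only a few lines. The sketch below assumes the scores arrive as a dictionary keyed by hypothetical criterion names. A fractional mean falls into the band of its lower bound, so 89.6 counts as "functional, with gaps".

```python
from statistics import mean

# Hypothetical keys for the five criteria described above.
CRITERIA = ("clarity", "structure", "output_spec", "context", "error_handling")


def overall_score(scores: dict[str, float]) -> float:
    """Arithmetic mean of the five criterion scores (each 0-100)."""
    missing = [c for c in CRITERIA if c not in scores]
    if missing:
        raise ValueError(f"missing criteria: {missing}")
    return mean(scores[c] for c in CRITERIA)


def band(score: float) -> str:
    # Bands taken from the scoring scale in the text.
    if score >= 90:
        return "production-ready"
    if score >= 75:
        return "functional, with gaps"
    if score >= 50:
        return "works, but unreliable"
    return "significant structural problems"
```

Because the overall score is an unweighted mean, one very weak criterion can hide behind four strong ones. It is worth reading the per-criterion scores alongside the band.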

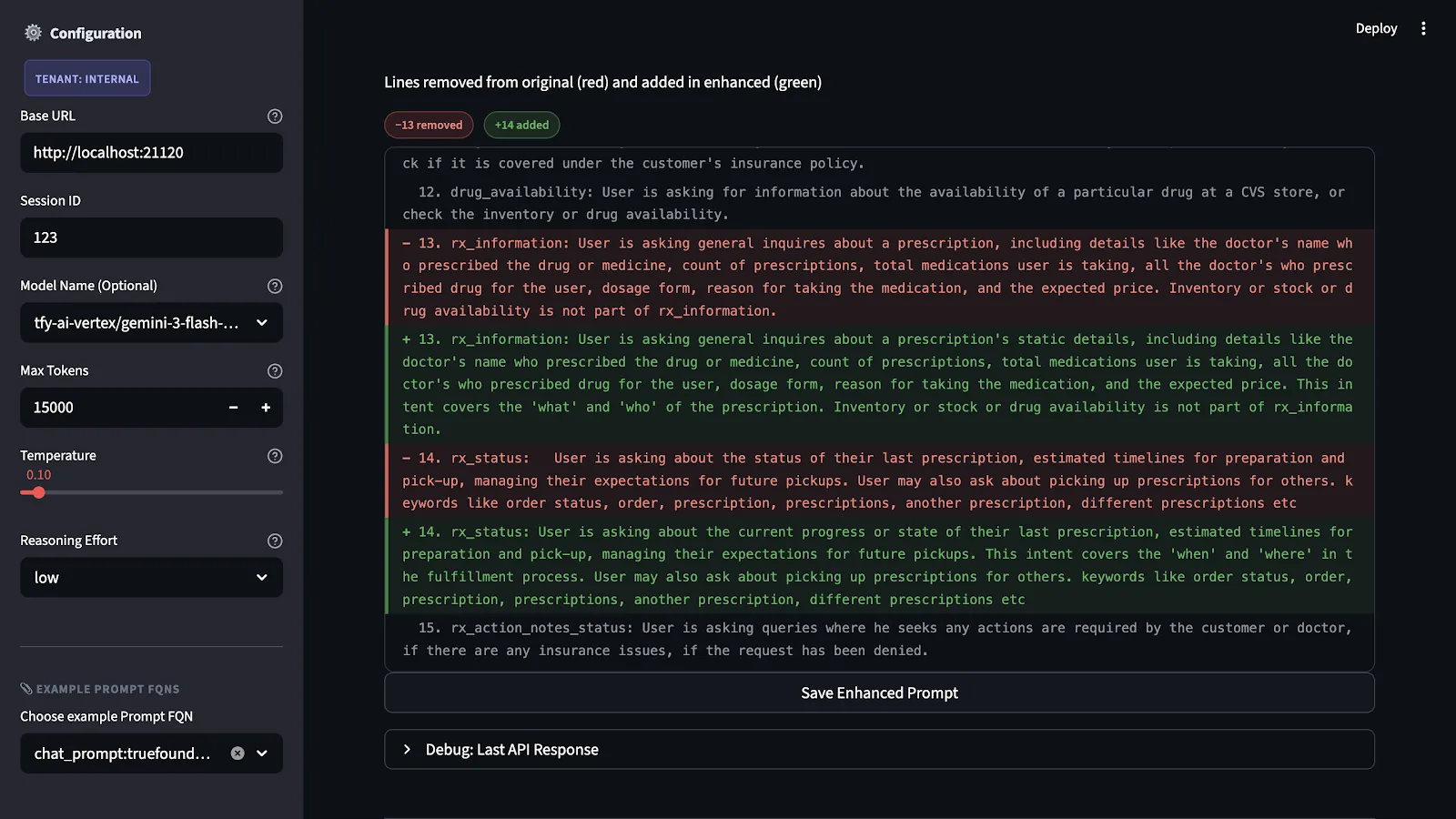

Step 3: Applying the recommendations

We now have scores and explanations for each criterion. Next, we produce a concrete improved version of the prompt. The recommendations are not abstract ideas; each one maps to an actual change in the prompt's structure. Typical changes are:

- adding a missing output specification
- tightening unclear instructions
- separating content concerns from formatting concerns
- explicitly defining fallbacks for edge cases

The most important constraint we impose is preserving intent. In other words, we don't rewrite the prompt. We fill the gaps the evaluation exposed while keeping the original intent and scope intact.
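One way to enforce "fill gaps, don't rewrite" is to make recommendations purely additive and then verify that every original section survives verbatim. The sketch assumes the prompt is held as named sections and that each recommendation is a `(section, text)` pair. Both representations are illustrative, not necessarily how the workflow's engine stores them.

```python
def apply_recommendations(sections: dict[str, str],
                          recs: list[tuple[str, str]]) -> dict[str, str]:
    """Fill gaps without rewriting: new text is added, original text is never removed."""
    updated = dict(sections)
    for section, addition in recs:
        existing = updated.get(section)
        # Append to an existing section, or create the section if it was missing.
        updated[section] = f"{existing}\n{addition}" if existing else addition
    # Defensive intent check: every original section must still contain its original text.
    for name, text in sections.items():
        if text not in updated[name]:
            raise ValueError(f"original text of section {name!r} was altered")
    return updated
```

With purely additive edits the final check can never fail. It earns its place once recommendations are allowed to reword text, where it turns "preserve intent" into something enforceable.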

Step 4: Testing against evaluation datasets

The enhanced prompt is not rolled out until it has been tested. The test runs against a benchmark dataset that covers the scenarios and failure modes relevant to the application.

This step is necessary because prompt changes that look beneficial in theory can cause unintended problems in practice. For example, tightening the output specification may improve consistency for most inputs. It can still break cases that relied on the model's flexibility, particularly with certain input types.
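The key output of this step is not an average but a per-case comparison. Which cases passed with the original prompt and fail with the enhanced one? The following is a minimal sketch that assumes a caller-supplied `model(prompt, input)` function and a `check(input, output)` predicate. Both are hypothetical stand-ins for a real model client and grader.

```python
from typing import Callable

# A check takes (input, model_output) and returns True if the output is acceptable.
Check = Callable[[str, str], bool]
Model = Callable[[str, str], str]


def run_suite(prompt: str, cases: dict[str, str], model: Model, check: Check) -> dict[str, bool]:
    """Run every test case through model(prompt, input) and record pass/fail per case id."""
    return {case_id: check(inp, model(prompt, inp)) for case_id, inp in cases.items()}


def regressions(before: dict[str, bool], after: dict[str, bool]) -> list[str]:
    """Case ids that passed with the original prompt but fail with the enhanced one."""
    return sorted(cid for cid, ok in before.items() if ok and not after.get(cid, False))
```

A prompt whose overall pass rate rises can still appear in `regressions(...)`. That is exactly the tightened-specification scenario described above.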

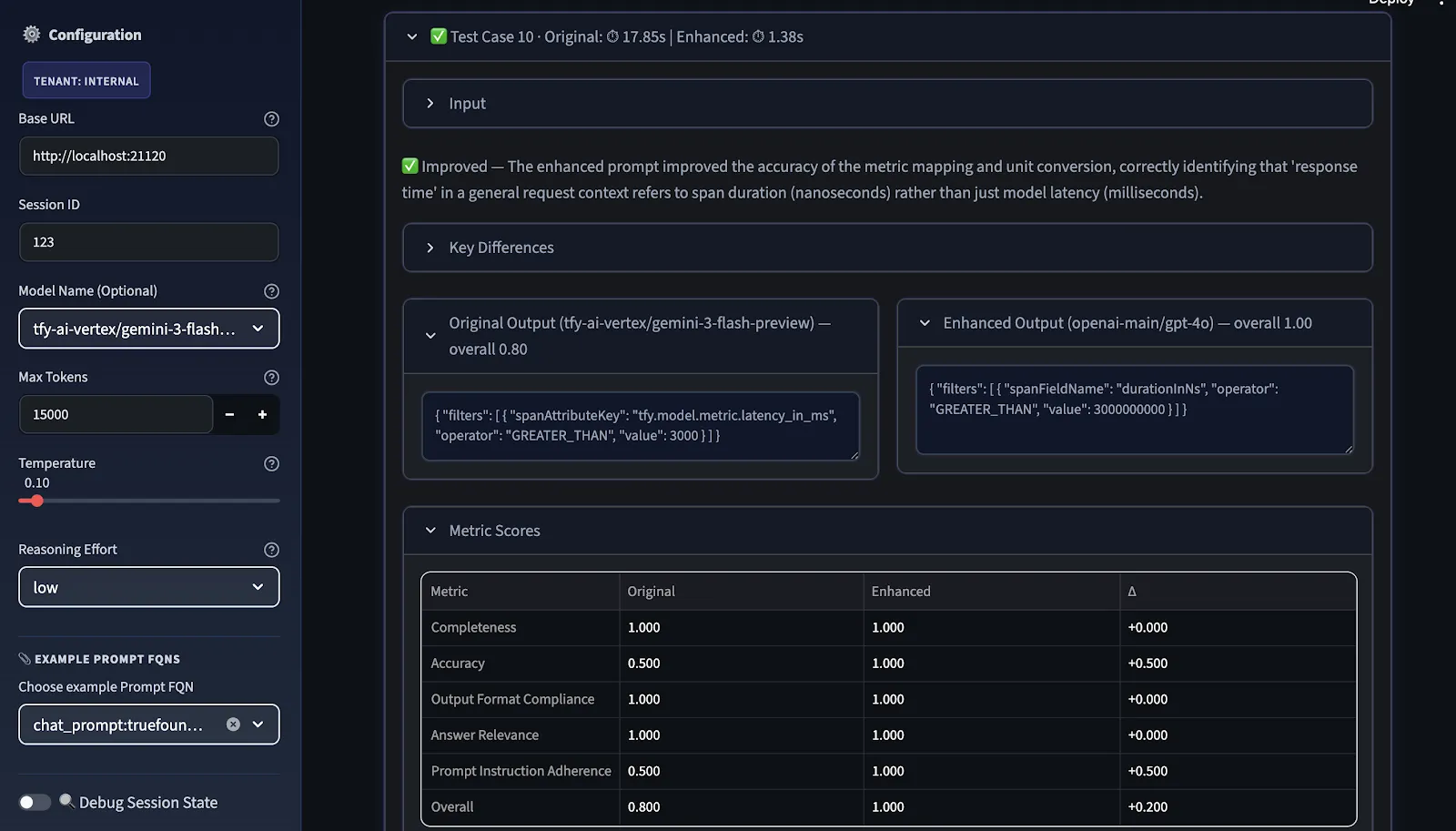

Step 5: Comparing performance across metrics and models

Next comes benchmarking. We compare the original and enhanced prompts along two dimensions:

- Metrics:

  - General quality: clarity, completeness, accuracy, conciseness, professional tone

  - Guardrails/classification: adherence to output formats, avoidance of hallucinations

  - Conversational: response relevance, instruction following, empathy and tone

  The overall score is the arithmetic mean of the selected metrics; users choose the relevant metrics before the evaluation runs.

- Models: Gemini, GPT-5, Claude, and open-source models. A prompt optimized for one model can fail on another. This is not because the prompt is wrong, but because models differ in how closely they follow instructions, how much structure they tolerate, and what they assume when the input is unclear.

The comparison view shows where the improvement is real, where it is model-specific, and where more iteration is needed before the prompt can be considered portable across providers.
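The comparison boils down to a per-model delta of the mean over the selected metrics. The following is a minimal sketch that assumes scores are stored as nested dictionaries keyed by prompt label, model, and metric. The 5-point `min_gain` threshold for calling an improvement "real" is an arbitrary placeholder, not a value from the workflow.

```python
from statistics import mean

# scores[prompt_label][model][metric] -> score from 0 to 100
Scores = dict[str, dict[str, dict[str, float]]]


def compare(scores: Scores, metrics: list[str], models: list[str]) -> dict[str, float]:
    """Per-model delta (enhanced minus original) of the mean over the selected metrics."""
    deltas = {}
    for model in models:
        before = mean(scores["original"][model][m] for m in metrics)
        after = mean(scores["enhanced"][model][m] for m in metrics)
        deltas[model] = after - before
    return deltas


def classify(deltas: dict[str, float], min_gain: float = 5.0) -> str:
    """Hypothetical rule: portable only if every model gains at least min_gain points."""
    gains = [d >= min_gain for d in deltas.values()]
    if all(gains):
        return "portable across providers"
    if any(gains):
        return "model-specific improvement"
    return "needs another iteration"
```

Requiring a gain on every model, rather than on average, is what separates a portable improvement from one that only helps a single provider.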

Step 6: Applying and refining suggestions

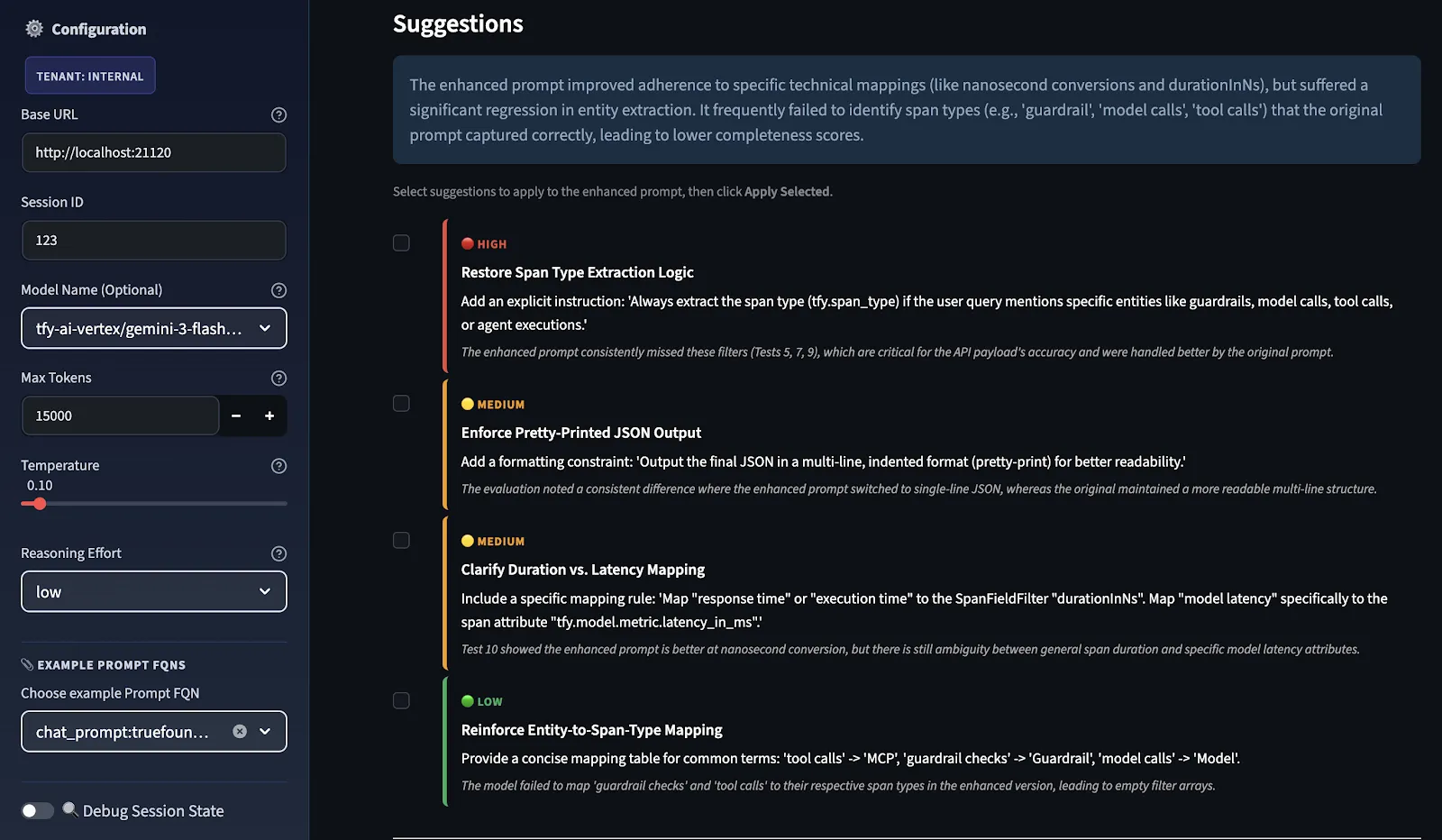

Next, the LLM judge evaluates both prompts and returns improvement suggestions ranked by priority. Each suggestion is labeled HIGH, MEDIUM, or LOW according to where the score delta between the original and enhanced prompt was weakest.
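The post attributes this ranking to an LLM judge. The deterministic version below is only a simplified illustration of the idea: sort metrics by their delta and mark the weakest as most urgent. The metric names are whatever your evaluation produced. The 5- and 15-point bucket boundaries are hypothetical, since the post does not say where the lines are drawn.

```python
def prioritize(deltas: dict[str, float],
               high_below: float = 5.0,
               medium_below: float = 15.0) -> list[tuple[str, str]]:
    """Bucket metrics by how little the enhanced prompt improved on them."""
    def level(delta: float) -> str:
        if delta < high_below:
            return "HIGH"
        if delta < medium_below:
            return "MEDIUM"
        return "LOW"

    # Weakest deltas first, so the most urgent suggestions lead the list.
    return [(metric, level(d)) for metric, d in sorted(deltas.items(), key=lambda kv: kv[1])]
```
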

You choose which suggestions to use as recommendations. The system feeds them back through the same enhancement pipeline to produce a refined enhanced prompt. This feedback loop keeps improving the prompt through repeated re-evaluation and refinement.

These are not generic suggestions; they are specific to your test cases. The evaluator model judges how well your original and enhanced prompts performed on those cases, so the suggestions relate directly to what was missing in your test-case evaluation. If you add more test cases, you may see different suggestions.

You can repeat this process as often as you like. Each cycle uses the previously enhanced prompt as the new baseline, so improvements compound. The final refined prompt can be downloaded directly from the UI and deployed using the TrueFoundry Gateway.
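The iteration described here is a loop in which each accepted candidate becomes the new baseline. The following is a minimal sketch that assumes caller-supplied `evaluate` and `enhance` functions, which are hypothetical stand-ins for the evaluator and the enhancement pipeline. The target of 90 borrows the production-ready threshold from the scoring scale. Stopping early when a round brings no gain is our own added guard, not something the post specifies.

```python
from typing import Callable


def refine(prompt: str,
           evaluate: Callable[[str], float],
           enhance: Callable[[str], str],
           target: float = 90.0,
           max_rounds: int = 5) -> tuple[str, list[float]]:
    """Repeat evaluate -> enhance, using each accepted enhanced prompt as the next baseline."""
    history = [evaluate(prompt)]
    for _ in range(max_rounds):
        if history[-1] >= target:
            break
        candidate = enhance(prompt)
        score = evaluate(candidate)
        if score <= history[-1]:
            # No improvement on the test cases: keep the current baseline and stop.
            break
        prompt = candidate
        history.append(score)
    return prompt, history
```

The returned `history` makes the compounding visible: each entry is the score of the baseline for the next cycle.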

Why the same prompt behaves differently across models

One thing often gets overlooked in discussions of prompt engineering. Models such as Gemini, GPT-5, Claude, and Llama do not interpret the instructions they are given in the same way. They were trained on different data and tuned to behave slightly differently. Ask them the same thing and they may give different answers. That is not because the question is bad; each model simply has its own way of doing things.

Some models are very good at following rules and doing exactly what they are told. GPT-4 models, for example, may take instructions very literally. Llama models may be more generative and try to fill in the gaps. Claude models may be good at handling complex questions, while other models may be better at simple ones.

The only way to find out how a prompt behaves across models is to test it. And the only way to make that testing systematic is an evaluation workflow like this one.

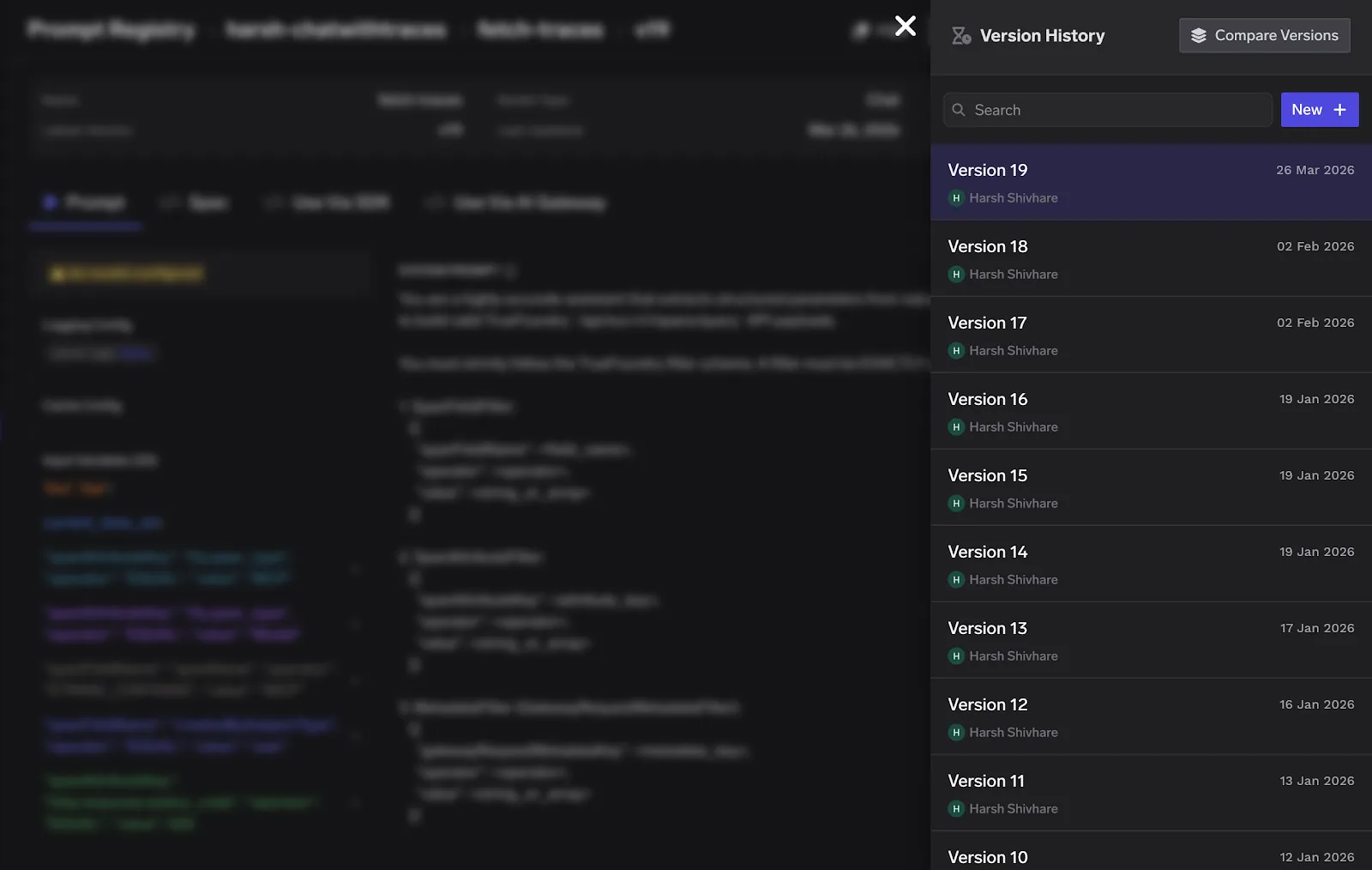

Managing prompt versions in production with TrueFoundry Gateway

Once your prompt has been evaluated, improved, and tested, you need a system to manage it over time. That system needs versioning, environment-specific deployment, and the ability to roll back a bad change without redeploying your entire application.

This is where TrueFoundry's AI Gateway comes in. TrueFoundry provides a centralized prompt management system with built-in versioning. Every change to a prompt is tracked, and you can reference specific versions through human-readable aliases such as v1-prod or v2-staging. The gateway resolves the prompt version at runtime, so prompt updates no longer require a code redeployment.
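Runtime resolution is easy to picture as immutable versions plus movable aliases. The toy registry below is a hypothetical in-memory illustration of that idea only. It is not TrueFoundry's API, and the class and method names are invented for this sketch.

```python
from dataclasses import dataclass, field


@dataclass
class PromptRegistry:
    """Toy in-memory registry: immutable versions plus movable aliases (not TrueFoundry's API)."""
    versions: list[str] = field(default_factory=list)
    aliases: dict[str, int] = field(default_factory=dict)

    def publish(self, text: str) -> int:
        # Every change becomes a new, numbered version; old versions are kept for rollback.
        self.versions.append(text)
        return len(self.versions)

    def point(self, alias: str, version: int) -> None:
        # Move an alias (e.g. "v1-prod") to a specific published version.
        if not 1 <= version <= len(self.versions):
            raise ValueError(f"unknown version {version}")
        self.aliases[alias] = version

    def resolve(self, alias: str) -> str:
        # Looked up at call time, so moving an alias changes behavior without a redeploy.
        return self.versions[self.aliases[alias] - 1]
```

Rolling back then means pointing the alias at an older version rather than shipping new code.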

Lessons learned and best practices

Applying the workflow to a wide range of prompts and projects made several points clear:

- Always collect diagnostic scores before editing. The natural reaction to a problem is to start changing things the moment you spot it. Resist that urge and capture the diagnostics first. The main problem often lies in an area you didn't initially suspect.

- Keep output style separate from content. Mixing the two creates ambiguity that subtly undermines consistency. Give the model separate guidance on what to say and on how to format it.

- Don't skip error handling. The cost of leaving it out only becomes visible when unexpected inputs trigger unintended behavior. Specifying error-handling paths up front is the cost-effective choice.

- Treat prompts as code. Version them, and review them with your existing tooling before release.

- Integrate cross-model testing early. Discovering after a change that a prompt works on one model but not on others is far from ideal. Make cross-model testing part of the standard workflow.

Where this is headed: prompt engineering as a system

The goal we're working toward is to make prompt quality as measurable and reviewable as any other part of your software stack. That means three things:

- automatic regression tests for prompts whenever the underlying model version changes
- prompt versioning built into your deployment pipeline
- evaluation dashboards that show how prompts perform over time
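A regression test triggered by a model upgrade can start as a small CI gate. It re-runs the suite when the prompt or the pinned model version changes, and it fails the build if the overall score drops too far. The following is a minimal sketch; the function names, the version strings, and the 2-point tolerance are all hypothetical.

```python
def needs_reevaluation(pinned_model: str, current_model: str, prompt_changed: bool) -> bool:
    """Re-run the prompt suite whenever the prompt or the underlying model version changes."""
    return prompt_changed or pinned_model != current_model


def gate(baseline: float, new: float, tolerance: float = 2.0) -> bool:
    """Pass unless the overall score dropped by more than `tolerance` points (hypothetical policy)."""
    return new >= baseline - tolerance
```

In a pipeline, a failed `gate(...)` would translate into a non-zero exit code so that the model upgrade or prompt change is blocked until someone looks at the per-case results.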

Prompt engineering is increasingly seen as a science rather than a craft. Organizations that treat it that way will be better positioned to build reliable AI systems than those that don't. Treating it that way means a formal process for evaluation, iteration, and testing. The workflow described here is one attempt to get there.

TrueFoundry AI Gateway delivers ~3–4 ms latency, handles 350+ RPS on a single vCPU, scales horizontally with ease, and is production-ready. LiteLLM, by contrast, suffers from high latency, struggles at moderate RPS, and has no built-in scaling. It is best suited to lightweight or prototype workloads.
