Why TrueFoundry is the stronger long-term platform investment than MintMCP

TL;DR

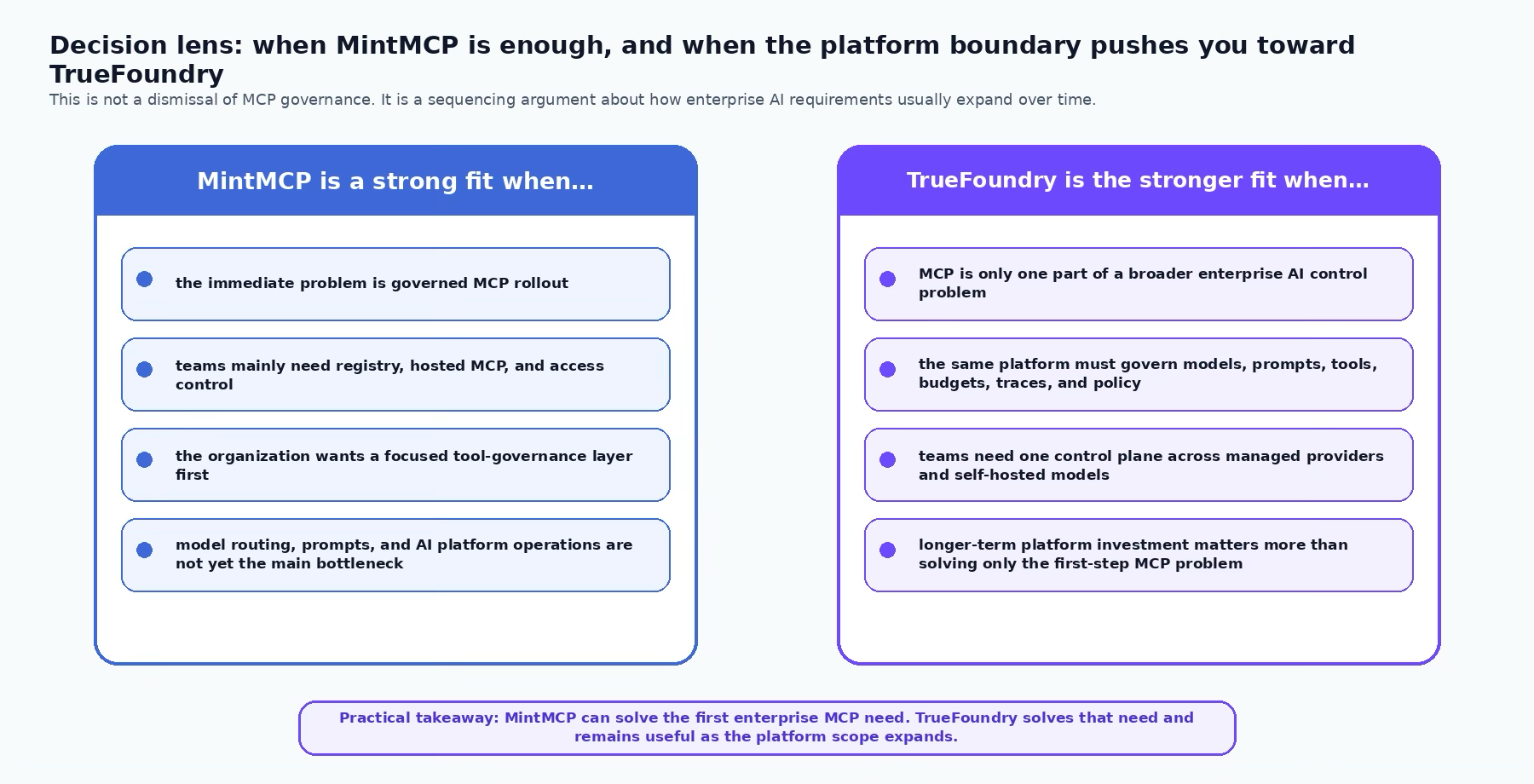

MintMCP is a serious product for governed MCP rollout. It earns respect because it makes a hard early problem concrete: take MCP servers out of laptop-local setups, host them centrally, wrap them in enterprise auth, give teams role-based endpoints, and make tool access auditable. If your main problem is “we need MCP in the enterprise, safely, now,” MintMCP can be a credible answer.

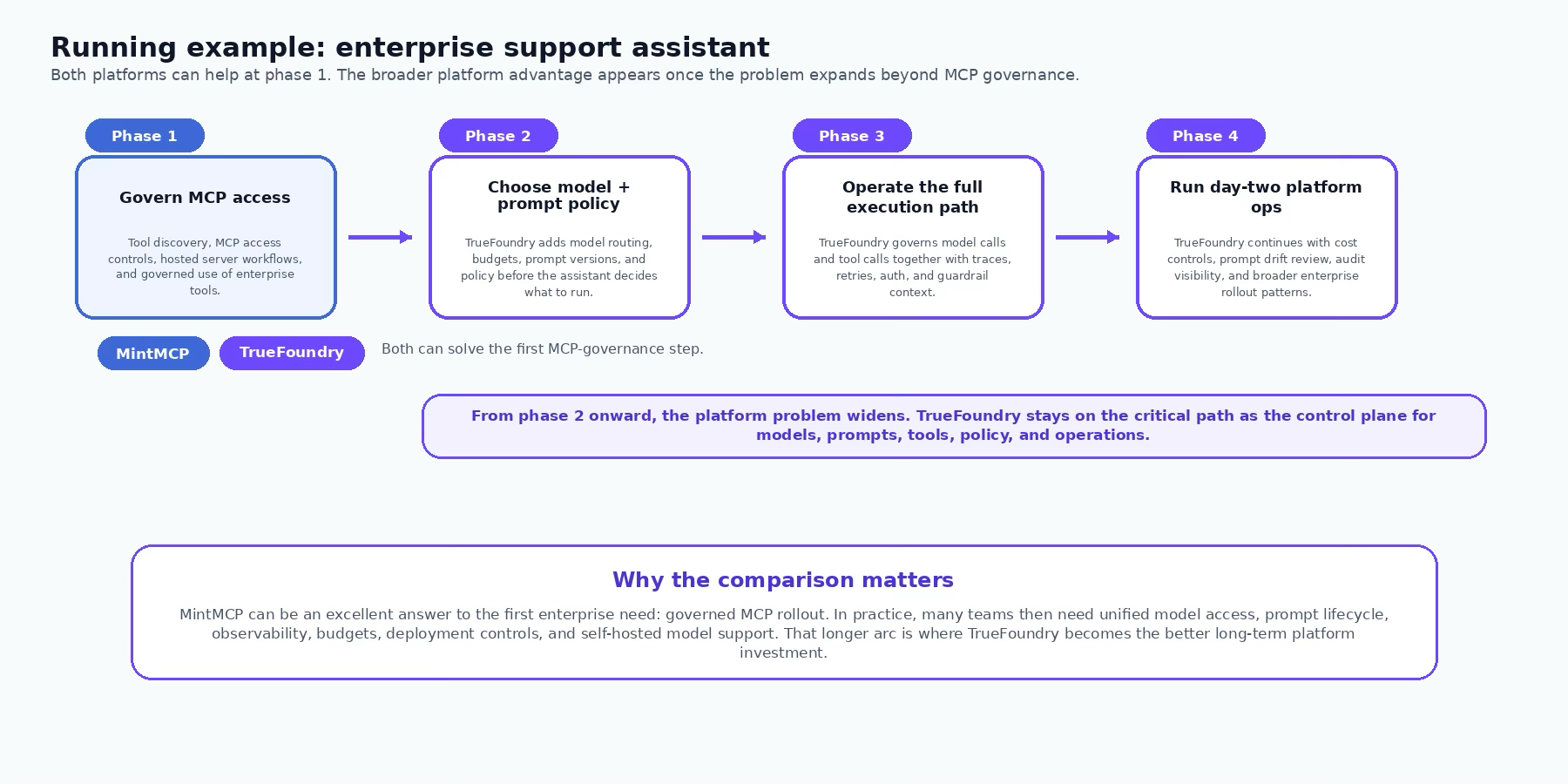

Our argument is about the next set of problems. Once the first MCP rollout succeeds, the enterprise platform team rarely stops at tool governance. The backlog expands. Which model should this request be routed to? Which prompt version is live for this team? Where do budget rules apply? How do you trace the model call and the tool call in one place? Which workloads must run against self-hosted models? Where does the gateway live for regulated environments? That is the moment where TrueFoundry becomes the stronger long-term platform investment.

Running example

Think about a support assistant used by engineering and customer-success teams. It needs GitHub, Slack, Notion, ticketing tools, and internal docs through MCP. But it also needs model choice, per-team budgets, prompt versions, guardrails before and after tool calls, traces for incident review, and a deployment posture that can mix managed providers with self-hosted models.

1. Why enterprises start with MCP

MCP is easy to understand because the failure mode is visible. Without a gateway, every team runs servers locally, credentials sprawl across developer tools, and there is no shared approval point for tool access. That is why a product like MintMCP resonates quickly with engineering and security leaders. It turns MCP from a developer convenience into a managed enterprise layer.

That is also why we do not need to minimize MintMCP. The product is strong where it is supposed to be strong: hosted STDIO lifecycle, central registry, virtual servers, enterprise auth, audit visibility, and a straightforward operating model for rolling MCP across the org. If the main problem is governed tool access, MintMCP is not a toy. It is purpose-built for that job.

2. Why the platform boundary keeps expanding

The deeper enterprise problem is that production AI failures are usually not “tool failures” or “model failures” in isolation. They are chain failures. A request arrives with the wrong prompt version, gets routed to a more expensive or less capable model than expected, triggers a tool with insufficient validation, returns a noisy result, and then shows up later as an unexplained cost spike or shallow answer. Solving only the MCP slice of that loop is valuable, but it does not close the control-plane problem.

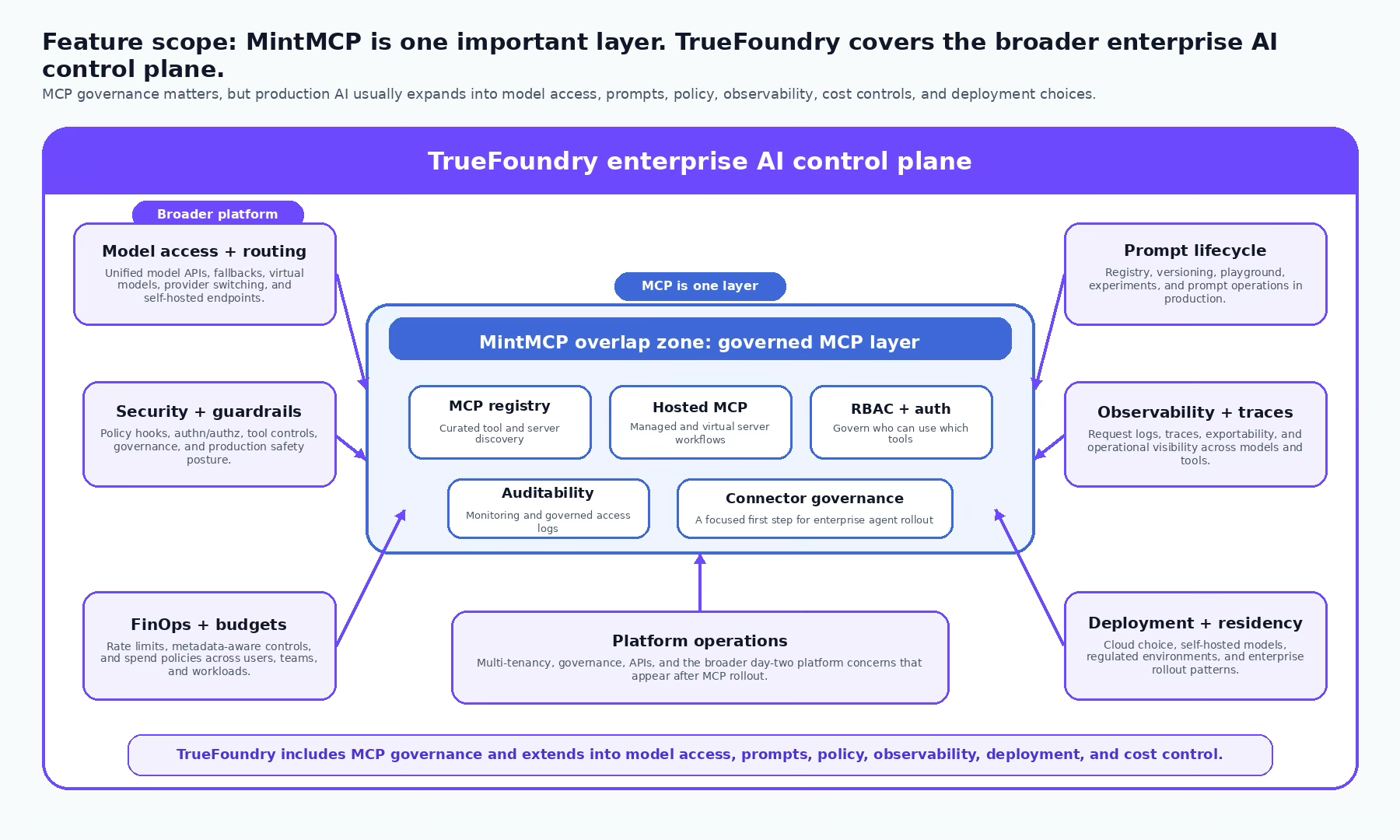

This is where our view of the product line is different. We do not think of the MCP gateway as the platform. We think of the MCP gateway as one control surface inside the platform. The same gateway should be able to decide model routing, apply budget rules, inspect prompts and tool calls, expose prompt versions, emit traces, and work with both managed and self-hosted inference backends. In other words: the enterprise AI gateway has to govern the whole execution path, not only the tool half of it.

3. The technical reason TrueFoundry scales further

The strongest technical argument for TrueFoundry is architectural. The AI Gateway sits as the proxy between applications on one side and model providers and MCP servers on the other. That matters because it means the same operating plane can see the inbound request, the resolved model, the prompt configuration, the budget and rate rules, the MCP tool calls, and the response traces. The enterprise team does not have to stitch those controls together from separate products after the fact.
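To make the "one place" idea concrete, here is a minimal sketch of a trace record that holds model calls and tool calls under a single trace ID. It is an illustration only, not TrueFoundry's trace schema: `RequestTrace`, `Span`, the field names, and the model and tool names are all hypothetical.

```python
from dataclasses import dataclass, field
from typing import List
import uuid


@dataclass
class Span:
    kind: str              # "model" or "tool" (hypothetical labels)
    name: str              # model name or MCP tool name
    cost_usd: float = 0.0  # tool calls are treated as free in this sketch


@dataclass
class RequestTrace:
    team: str
    prompt_version: str
    trace_id: str = field(default_factory=lambda: uuid.uuid4().hex)
    spans: List[Span] = field(default_factory=list)

    def record(self, kind: str, name: str, cost_usd: float = 0.0) -> None:
        """Append one step of the request to the shared trace."""
        self.spans.append(Span(kind, name, cost_usd))

    def total_cost(self) -> float:
        """Sum the cost of every recorded step."""
        return sum(span.cost_usd for span in self.spans)

    def names(self, kind: str) -> List[str]:
        """Return the names of all steps of one kind, in call order."""
        return [span.name for span in self.spans if span.kind == kind]


# One support-assistant request: model call, tool call, second model call.
trace = RequestTrace(team="support", prompt_version="v3")
trace.record("model", "example-model-large", cost_usd=0.012)
trace.record("tool", "github.search_issues")
trace.record("model", "example-model-large", cost_usd=0.008)
```

Because every step lands in the same record, an incident review can answer "which prompt version, which model, which tool, what cost" from one lookup instead of joining logs from separate products.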

The architecture also matters operationally. The gateway plane is designed as a stateless hot path with in-memory evaluation for routing, authentication, authorization, rate limits, and guardrails, while logs and metrics are queued asynchronously. That is the kind of design that makes a gateway usable as a primary production control point rather than only as an admin surface. It is also why budgets, routing, traces, and tool governance can sit next to one another without turning every request into an external policy round-trip.
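The pattern described here, an in-memory decision on the hot path with logging pushed to a queue, can be sketched in a few lines. This is a generic illustration under stated assumptions, not TrueFoundry's implementation: the policy table, `authorize_tool`, and `log_worker` are hypothetical names, and a real gateway would use a durable queue rather than an in-process one.

```python
import queue
import threading
from typing import Dict, List, Set

# Hypothetical in-memory policy snapshot; a real gateway would refresh this
# from a control plane in the background.
ALLOWED_TOOLS: Dict[str, Set[str]] = {
    "support": {"github.search_issues", "slack.post_message"},
}

log_queue: queue.Queue = queue.Queue()
written: List[dict] = []  # stands in for an external log store


def authorize_tool(team: str, tool: str) -> bool:
    """Hot path: decide from memory, enqueue the log event, return."""
    allowed = tool in ALLOWED_TOOLS.get(team, set())
    log_queue.put({"team": team, "tool": tool, "allowed": allowed})
    return allowed


def log_worker() -> None:
    """Background path: drain the queue until a None sentinel arrives."""
    while True:
        event = log_queue.get()
        if event is None:
            break
        written.append(event)


worker = threading.Thread(target=log_worker, daemon=True)
worker.start()

ok = authorize_tool("support", "github.search_issues")
denied = authorize_tool("support", "notion.delete_page")

log_queue.put(None)  # signal shutdown so the example terminates
worker.join()
```

The request path never waits on the log store: the decision uses only local state, and the cost of persisting the event is paid by the worker thread.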

From there, the rest of the platform starts to matter. Budget limiting is not an add-on dashboard metric; it is an enforceable rule surface. Prompt management is not a separate notebook habit; it is part of the same operating story through the registry, versions, variables, and playground. Self-hosted models are not an afterthought; they are part of the model-access layer the gateway can sit in front of. That is why the comparison should not stop at “who has MCP.”
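The difference between a dashboard metric and an enforceable rule is easiest to see in code. The sketch below rejects a request before it runs if it would push a team past its limit. It is a simplified illustration, not TrueFoundry's budget-limiting configuration: `TeamBudget`, `BudgetExceeded`, and the dollar figures are hypothetical.

```python
from dataclasses import dataclass


class BudgetExceeded(Exception):
    """Raised when a request would push a team past its spend limit."""


@dataclass
class TeamBudget:
    limit_usd: float
    spent_usd: float = 0.0

    def charge(self, estimated_cost_usd: float) -> None:
        """Admit the request only if it fits in the remaining budget."""
        if self.spent_usd + estimated_cost_usd > self.limit_usd:
            raise BudgetExceeded(
                f"request of ${estimated_cost_usd:.2f} exceeds remaining "
                f"${self.limit_usd - self.spent_usd:.2f}"
            )
        self.spent_usd += estimated_cost_usd


budget = TeamBudget(limit_usd=1.00)
budget.charge(0.75)  # admitted: 0.75 of 1.00 used

try:
    budget.charge(0.50)  # would reach 1.25, so it is rejected
    rejected = False
except BudgetExceeded:
    rejected = True
```

A rejected request leaves `spent_usd` unchanged, so the rule is enforced at admission time rather than discovered later as a cost spike.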

4. Where MintMCP still wins cleanly

It is still important to say this plainly: MintMCP can look like the faster answer for teams whose roadmap is very clearly centered on enterprise MCP enablement. If the buying motion is owned mainly by security and engineering enablement, and the main success metric is “roll out governed MCP access to Claude, Cursor, Copilot, and ChatGPT across the company,” MintMCP has a very legible product story. That focus is a strength, not a weakness.

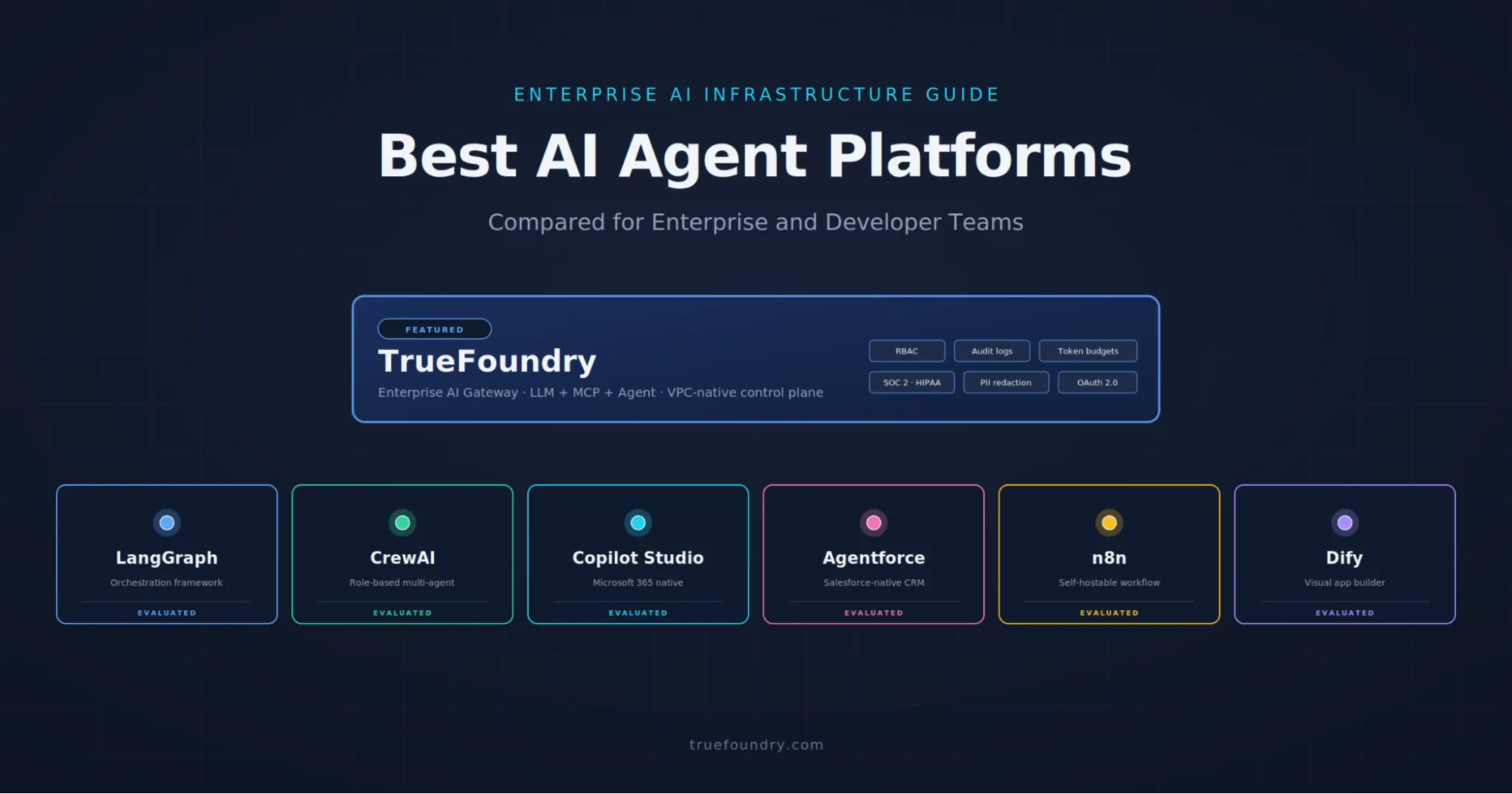

But focus can also become the ceiling. Once the central platform team is asked to unify model routing, prompt lifecycle, spend controls, observability, deployment options, and mixed-provider infrastructure, the narrower product shape starts to matter. The enterprise rarely buys a second control plane on purpose. It usually discovers it has one by accident. Our position is that it is better to choose the platform that already treats MCP as one aspect of the AI control plane rather than as the whole story.

Architecture and operating model

Recommendation

If the immediate enterprise task is to roll out governed MCP quickly, MintMCP is a respectable answer. If the actual platform roadmap is heading toward mixed model estates, per-team budgets, prompt lifecycle, traces, self-hosted inference, and deeper day-two operations, TrueFoundry is the stronger long-term investment. That is the framing we would stand behind publicly: respect the narrower product, but choose the broader control plane.

Capability matrix

References

- MintMCP MCP Gateway — https://www.mintmcp.com/mcp-gateway

- MintMCP homepage — https://www.mintmcp.com/

- MintMCP About / security posture — https://www.mintmcp.com/about

- TrueFoundry AI Gateway overview — https://www.truefoundry.com/docs/gateway

- TrueFoundry MCP Gateway overview — https://www.truefoundry.com/docs/ai-gateway/mcp-overview

- TrueFoundry budget limiting — https://www.truefoundry.com/docs/ai-gateway/budgetlimiting

- TrueFoundry prompt management — https://www.truefoundry.com/docs/ai-gateway/prompt-management

- TrueFoundry gateway plane architecture — https://www.truefoundry.com/docs/platform/gateway-plane-architecture

- TrueFoundry deployment overview — https://www.truefoundry.com/docs/platform/deployment-overview

- TrueFoundry self-hosted models — https://www.truefoundry.com/docs/ai-gateway/self-hosted-models
