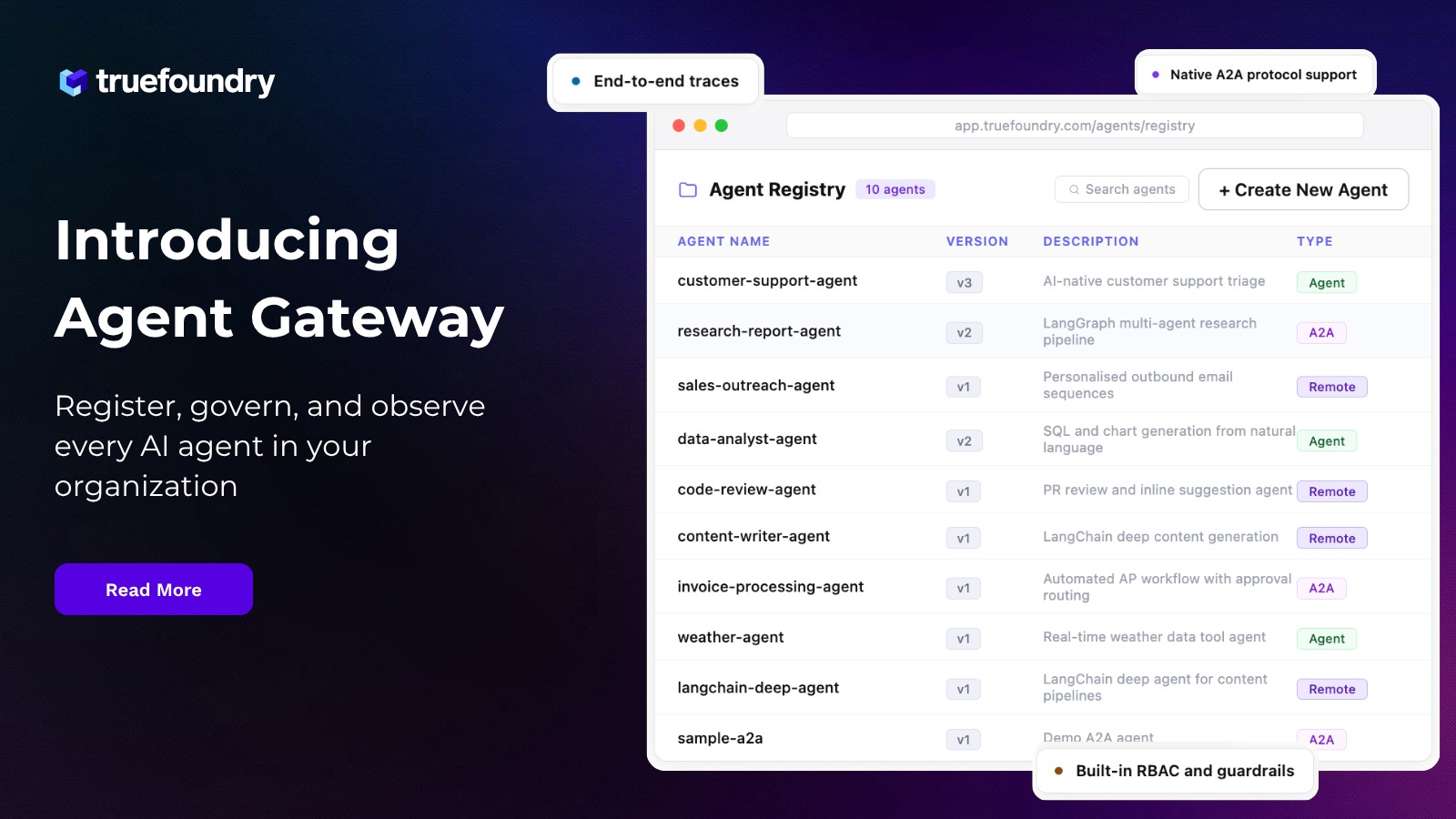

TrueFoundry vs. Apigee (Google): Why a Purpose-Built AI Control Plane Beats an API-Management-First MCP Strategy

§0 — TL;DR and quick pick

Real-world scenario: from APIs to agents

A large enterprise already runs Apigee for internal and partner APIs. The AI team now wants to let agents use those APIs as tools. At first glance, the answer seems obvious: just expose APIs through Apigee MCP support and reuse the existing policy stack.

That gets you part of the way.

But the real production system has more moving parts:

- model selection and failover,

- token budgets and quotas,

- prompt versioning,

- tool discovery and curation,

- pre-tool and post-tool policy checks,

- user-to-tool credential brokering,

- traces that connect model calls to tool calls,

- deployment patterns for self-hosted and regulated environments.

Apigee clearly addresses some of that surface. TrueFoundry treats all of it as first-class scope within a single AI platform.[4][5][6][7][8][9][10][11]

Editorial quick pick

- Choose Apigee if your primary goal is to turn existing APIs into governed MCP tools and you already operate a strong Apigee-centric API estate.[1][2][3]

- Choose TrueFoundry if your primary goal is to operate enterprise agentic AI end-to-end: model access, routing, budgets, prompts, MCP servers, guardrails, traces, and agent workflows in one platform.[4][5][6][7][8][9][10][11]

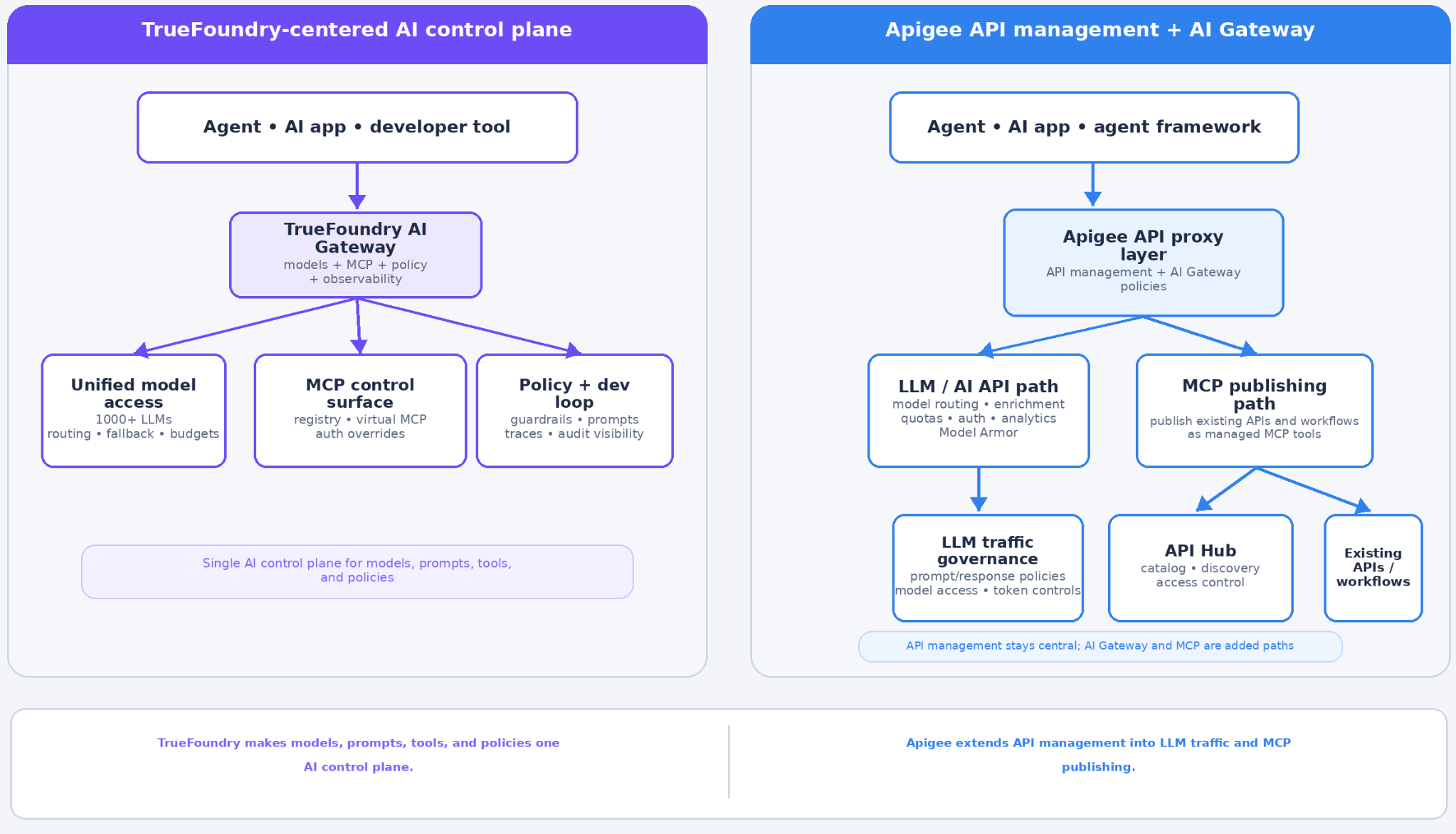

§1 — The central architectural difference

Apigee starts from the API gateway world and extends toward AI.

TrueFoundry starts from the AI gateway world and extends toward APIs, MCP servers, and agents.

That distinction is not cosmetic. It changes the shape of the control plane.

Apigee’s own docs say it is Google Cloud’s native API management platform, providing high-performance API proxies and a rich policy layer for backend services.[1] Its MCP support lets agents use secure, governed APIs and custom workflows in API Hub as tools, often without changing the existing backend APIs.[2][3]

That is excellent API-to-agent enablement.

TrueFoundry’s docs, on the other hand, describe the AI Gateway as the proxy between applications and LLM providers and MCP servers, with unified model access, routing, governance, observability, prompt management, MCP registry, and agent-facing capabilities.[4][5][6][7][8][9][10][11]

The practical implication is simple:

Apigee adapts your API estate for agent use. TrueFoundry governs the whole agentic runtime.

In other words, Apigee is making MCP a feature of API management.

TrueFoundry is making APIs, models, prompts, and tools all features of one AI control plane.

That is the core architectural advantage.

§2 — What Apigee does well

Any serious comparison should say this plainly:

1) Apigee gives enterprises a strong way to MCP-ify existing APIs

Google documents that with Apigee’s MCP support, you can use existing APIs as MCP tools and register deployed MCP proxies in API Hub.[2][3] The Google Cloud blog explicitly says you can do this without changing existing APIs, writing extra code, or managing local or remote MCP servers yourself.[2]

That is a powerful migration story for enterprises with large API estates.
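To make the idea of "using an existing API as an MCP tool" concrete, here is a minimal sketch of how one OpenAPI operation could be mapped to an MCP-style tool descriptor (`name`, `description`, `inputSchema`). It is an illustration only: the function name, the sample operation, and the mapping rules are assumptions made for this example, and it does not describe how Apigee, API Hub, or TrueFoundry perform the conversion internally.

```python
from typing import Any


def openapi_operation_to_mcp_tool(
    path: str, method: str, operation: dict[str, Any]
) -> dict[str, Any]:
    """Map one OpenAPI operation to an MCP-style tool descriptor (illustrative)."""
    properties: dict[str, Any] = {}
    required: list[str] = []
    for param in operation.get("parameters", []):
        # Reuse the parameter's JSON schema; default to a string if none is given.
        properties[param["name"]] = {
            **param.get("schema", {"type": "string"}),
            "description": param.get("description", ""),
        }
        if param.get("required", False):
            required.append(param["name"])
    # Fall back to a name derived from method and path when operationId is absent.
    name = operation.get("operationId") or (
        f"{method.lower()}_{path.strip('/').replace('/', '_')}"
    )
    return {
        "name": name,
        "description": operation.get("summary", ""),
        "inputSchema": {
            "type": "object",
            "properties": properties,
            "required": required,
        },
    }


# Hypothetical operation, not taken from any real API.
example_operation = {
    "operationId": "getOrder",
    "summary": "Fetch a single order by ID.",
    "parameters": [
        {"name": "order_id", "in": "path", "required": True,
         "schema": {"type": "string"}},
    ],
}
tool = openapi_operation_to_mcp_tool("/orders/{order_id}", "GET", example_operation)
print(tool["name"], tool["inputSchema"]["required"])
```

The point of the sketch is that the backend API itself does not change; only a descriptor that an agent can discover is derived from the existing contract.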

2) Apigee has mature traffic and security policy machinery

Apigee’s core policy system is extensive. It includes standard and extensible policies, security controls, mediation, rate limiting, quotas, and custom logic through JavaScript, Python, Java, and XSLT policies.[1][12]

For AI-specific traffic, Google documents:

- PromptTokenLimit for prompt-token throttling,[13]

- LLMTokenQuota for token consumption control over longer intervals,[14]

- Model Armor-based sanitation for prompts and model responses,[15][16]

- and broader Model Armor policy integration in Apigee proxies.[17]

That means Apigee is not “just a generic API gateway” anymore in this space.
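The difference between short-interval token throttling and a longer-interval token quota can be sketched in a few lines. The example below is a generic fixed-window counter written for illustration; the class, the limits, and the `admit` helper are assumptions for this example and are not the algorithm or configuration that the PromptTokenLimit or LLMTokenQuota policies actually use.

```python
class TokenWindow:
    """Fixed-window counter: at most `limit` tokens per `window_seconds`."""

    def __init__(self, limit: int, window_seconds: float) -> None:
        self.limit = limit
        self.window_seconds = window_seconds
        self._window_start = 0.0
        self._used = 0

    def _roll(self, now: float) -> None:
        # Start a fresh window once the current one has elapsed.
        if now - self._window_start >= self.window_seconds:
            self._window_start = now
            self._used = 0

    def remaining(self, now: float) -> int:
        self._roll(now)
        return self.limit - self._used

    def consume(self, tokens: int, now: float) -> None:
        self._roll(now)
        self._used += tokens


def admit(tokens: int, now: float, throttle: TokenWindow, quota: TokenWindow) -> bool:
    """Admit a request only if both the throttle and the quota have room."""
    if tokens > throttle.remaining(now) or tokens > quota.remaining(now):
        return False
    throttle.consume(tokens, now)
    quota.consume(tokens, now)
    return True


# Hypothetical limits: 1000 tokens per minute, 2500 tokens per day.
throttle = TokenWindow(limit=1000, window_seconds=60)
quota = TokenWindow(limit=2500, window_seconds=86_400)

results = [admit(800, t, throttle, quota) for t in (0, 10, 61, 122, 200)]
print(results)
```

In this run the second request is rejected by the per-minute throttle, while the last one is rejected by the daily quota even though the throttle window has reset. That is why the two controls are complementary rather than redundant.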

3) Apigee is strong when the system of record is still APIs

If your architecture is fundamentally:

- existing business APIs,

- strong API producer governance,

- existing API Hub catalogs,

- existing Apigee operations teams,

then Apigee offers a low-friction extension path into agentic tooling.[1][2][3]

All of that is real.

§3 — Why TrueFoundry is the stronger choice for enterprise agentic AI

The rigorous case is not “Apigee cannot do AI.” It obviously can.

The rigorous case is this:

Apigee remains API-management-first. TrueFoundry is AI-runtime-first.

That matters because enterprise agentic AI is not just API exposure.

1) TrueFoundry unifies model and tool governance in one platform

TrueFoundry’s docs explicitly say the AI Gateway sits between applications and both LLM providers and MCP servers.[4] That means model access and tool access are governed in the same place.

This is a stronger architecture than stitching together:

- one system for model routing,

- one system for prompt lifecycle,

- one system for MCP exposure,

- one system for traces.

When the control plane is fragmented, policy fragments with it.

2) It has first-class model routing, budgets, rate limits, and caching

TrueFoundry publicly documents:

- unified access to 1000+ LLMs,[4]

- routing / virtual-model policy with retries and fallbacks,[5]

- rate limiting for LLM workloads,[6]

- budget limiting with layered rules,[7]

- cost tracking,[8]

- exact and semantic caching.[9]
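As a rough illustration of what "routing with retries and fallbacks" means at the gateway layer, the sketch below tries an ordered list of model targets, retries each a fixed number of times, and falls through to the next on failure. The function, the `ProviderError` type, and the provider stubs are hypothetical and written for this example; they are not TrueFoundry's routing configuration or API.

```python
from typing import Callable


class ProviderError(Exception):
    """Raised by a provider stub when a call fails."""


def call_with_fallback(
    prompt: str,
    targets: list[tuple[str, Callable[[str], str]]],
    retries_per_target: int = 1,
) -> tuple[str, str]:
    """Try each target in order; return (target_name, response) on first success."""
    errors: list[str] = []
    for name, call in targets:
        for attempt in range(retries_per_target + 1):
            try:
                return name, call(prompt)
            except ProviderError as exc:
                errors.append(f"{name} attempt {attempt + 1}: {exc}")
    raise ProviderError("all targets failed: " + "; ".join(errors))


# Stub providers that stand in for real model endpoints.
calls = {"primary-model": 0, "secondary-model": 0}


def failing_primary(prompt: str) -> str:
    calls["primary-model"] += 1
    raise ProviderError("rate limited")


def healthy_secondary(prompt: str) -> str:
    calls["secondary-model"] += 1
    return f"echo: {prompt}"


served_by, answer = call_with_fallback(
    "hi",
    [("primary-model", failing_primary), ("secondary-model", healthy_secondary)],
    retries_per_target=1,
)
print(served_by, answer, calls)
```

The application only sees one logical model; which concrete target served the request is a gateway concern, which is the property a virtual-model policy is meant to provide.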

Apigee documents token policies and rate controls for AI traffic.[13][14] But the public Apigee MCP story is still centered on exposing APIs as tools. It does not present Apigee as the same kind of unified multi-model AI runtime control plane that TrueFoundry does.[2][3]

That is a major structural difference.

3) TrueFoundry has a deeper MCP-native control surface

TrueFoundry’s MCP docs show:

- centralized MCP registry,[10]

- unified token management,[10]

- split inbound/outbound auth architecture,[11]

- tool-level RBAC/ABAC,[10][11]

- Auth Overrides for user-specific outbound credentials,[18]

- Virtual MCP Server for curated cross-server tool bundles without additional deployment,[19]

- OpenAPI-to-MCP paths,[20]

- hosted stdio-based and other MCP deployment patterns.[21]

Apigee’s MCP story is real, but it is shaped around MCP proxies for APIs and API Hub parsing.[2][3] TrueFoundry’s MCP story is more purpose-built around enterprise AI tool governance itself.
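Two of the ideas in the list above, curated cross-server tool bundles and tool-level access control, can be sketched with plain data structures. Everything in this example (the registry contents, the bundle name, the roles, and the `visible_tools` helper) is hypothetical and only models the concept; it is not TrueFoundry's data model, and it covers role-based rules only.

```python
# Hypothetical registry: MCP server name -> tools it exposes.
REGISTRY = {
    "crm": {"get_customer", "delete_customer"},
    "tickets": {"create_ticket", "close_ticket"},
}

# A "virtual server" here is just a curated set of (server, tool) pairs.
VIRTUAL_SERVERS = {
    "support-bundle": {
        ("crm", "get_customer"),
        ("tickets", "create_ticket"),
        ("tickets", "close_ticket"),
    },
}

# Tool-level grants per role (attribute-based rules are omitted).
ROLE_GRANTS = {
    "support-agent": {("crm", "get_customer"), ("tickets", "create_ticket")},
    "support-lead": {
        ("crm", "get_customer"),
        ("tickets", "create_ticket"),
        ("tickets", "close_ticket"),
    },
}


def visible_tools(virtual_server: str, role: str) -> list[str]:
    """Return the tools in a bundle that the given role may call."""
    bundle = VIRTUAL_SERVERS[virtual_server]
    granted = ROLE_GRANTS.get(role, set())
    return sorted(
        f"{server}.{tool}"
        for server, tool in bundle & granted
        if tool in REGISTRY.get(server, set())
    )


print(visible_tools("support-bundle", "support-agent"))
print(visible_tools("support-bundle", "support-lead"))
```

Note that `delete_customer` never appears: it is registered on the underlying server but excluded from the bundle, so no role can reach it through this virtual server.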

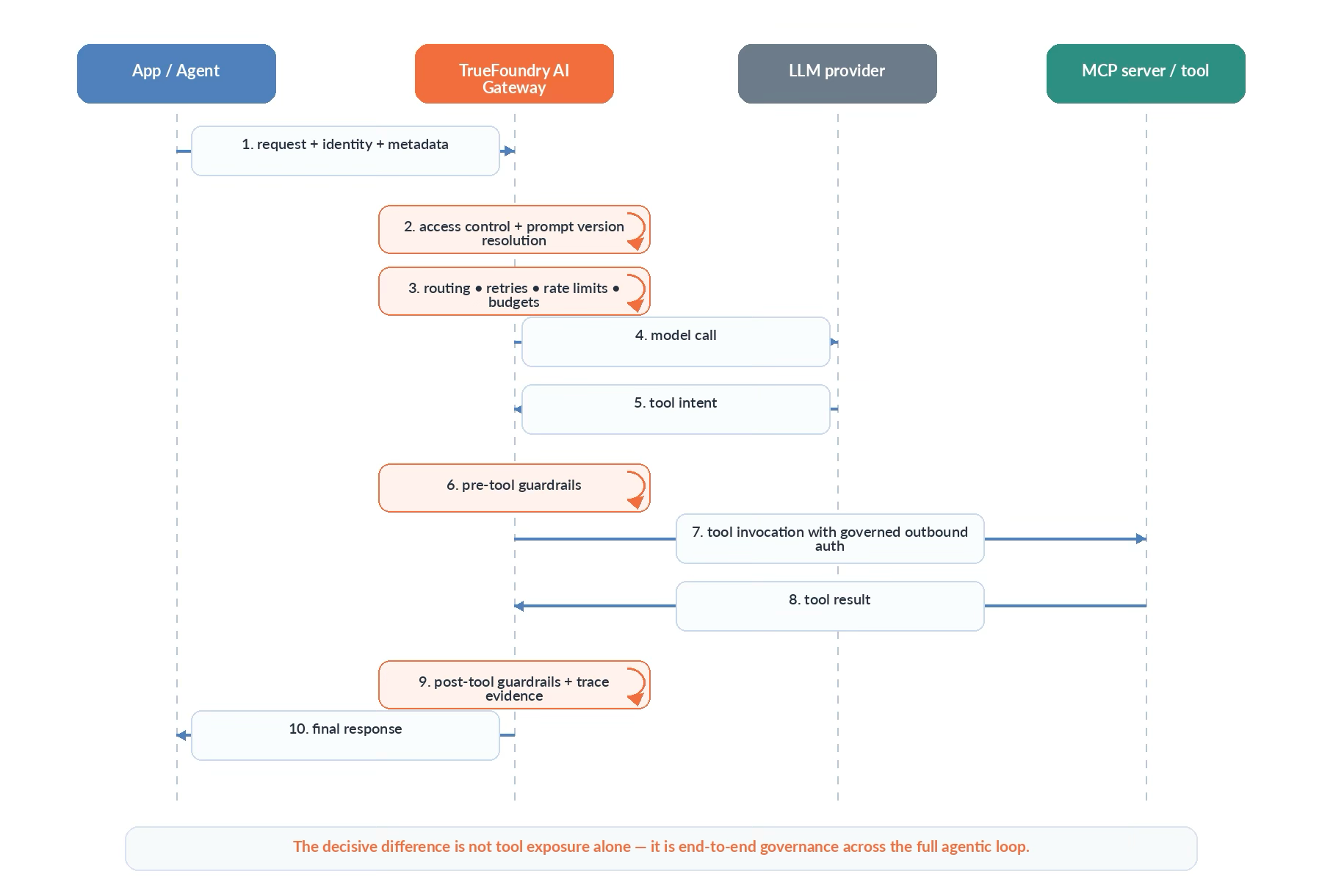

4) TrueFoundry documents dedicated MCP lifecycle guardrails

This is one of the strongest differentiators.

TrueFoundry documents MCP pre-tool and post-tool guardrail execution, testing through the Playground, and inspection through traces.[23] It also documents Cedar and OPA guardrails for fine-grained authorization over MCP tool executions.[23]

Apigee documents Model Armor-based sanitation for prompts and model responses, which is valuable for LLM safety.[15][16][17] But that is not the same thing as a publicly documented MCP-native pre-tool/post-tool lifecycle guardrail model.

That makes TrueFoundry the stronger fit for agentic workflows where the dangerous moment is not only the model prompt, but the tool invocation itself.
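The pre-tool and post-tool pattern is easy to picture as a wrapper around a tool invocation: checks run on the arguments before the tool executes, and on the result before it returns to the model. The sketch below is a generic illustration under that assumption. The check functions, the `GuardrailViolation` exception, and the sample tools are all hypothetical and are not TrueFoundry's guardrail API or its Cedar/OPA integration.

```python
import re
from typing import Any, Callable, Optional


class GuardrailViolation(Exception):
    """Raised when a pre-tool check rejects an invocation."""


PreCheck = Callable[[str, dict[str, Any]], Optional[str]]
PostCheck = Callable[[str, str], str]


def block_unconfirmed_deletes(tool_name: str, args: dict[str, Any]) -> Optional[str]:
    # Pre-tool check: return a rejection reason, or None to allow the call.
    if tool_name.startswith("delete_") and not args.get("confirmed", False):
        return "destructive tool requires confirmed=True"
    return None


EMAIL = re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+")


def redact_emails(tool_name: str, result: str) -> str:
    # Post-tool check: rewrite the result before it reaches the model.
    return EMAIL.sub("[redacted-email]", result)


def guarded_call(
    tool_name: str,
    args: dict[str, Any],
    tool: Callable[..., str],
    pre_checks: list[PreCheck],
    post_checks: list[PostCheck],
) -> str:
    for check in pre_checks:
        reason = check(tool_name, args)
        if reason is not None:
            raise GuardrailViolation(f"{tool_name} blocked: {reason}")
    result = tool(**args)
    for check in post_checks:
        result = check(tool_name, result)
    return result


# Stub tools that stand in for real MCP tool executions.
def get_customer(customer_id: str) -> str:
    return f"customer {customer_id}, contact: jane@example.com"


def delete_customer(customer_id: str, confirmed: bool = False) -> str:
    return f"deleted {customer_id}"


safe = guarded_call(
    "get_customer", {"customer_id": "42"}, get_customer,
    [block_unconfirmed_deletes], [redact_emails],
)
print(safe)
```

Calling `guarded_call("delete_customer", {"customer_id": "42"}, delete_customer, ...)` with the same checks raises `GuardrailViolation` before the tool runs, which is the behavior that prompt-only sanitation cannot provide.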

5) TrueFoundry integrates prompts, models, MCP, and observability in one developer loop

TrueFoundry publicly documents:

- Prompt Management,[24]

- Prompt Versioning,[25]

- an AI Gateway Playground with models, guardrails, MCP servers, and reusable prompts together,[26]

- metrics and traces as part of the same AI Gateway surface.[10][26]

That distinction matters because production AI failures usually happen across the model-prompt-tool chain, not just at the API layer.

Apigee is strong at governing API traffic. TrueFoundry is better aligned to governing the full model-prompt-tool workflow.
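What it means for traces to connect model calls to tool calls can be shown with a minimal span model: every step in one agent turn shares a trace ID, and each tool call records the model call that triggered it. The `Span` and `Trace` classes below are a hypothetical sketch written for this article, not the trace schema of either product.

```python
from dataclasses import dataclass
from itertools import count
from typing import Optional


@dataclass
class Span:
    span_id: int
    trace_id: str
    kind: str  # "model" or "tool"
    name: str
    parent_id: Optional[int] = None


class Trace:
    """Collects the spans of one agent turn under a shared trace ID."""

    def __init__(self, trace_id: str) -> None:
        self.trace_id = trace_id
        self.spans: list[Span] = []
        self._ids = count(1)

    def start(self, kind: str, name: str, parent: Optional[Span] = None) -> Span:
        span = Span(
            span_id=next(self._ids),
            trace_id=self.trace_id,
            kind=kind,
            name=name,
            parent_id=parent.span_id if parent else None,
        )
        self.spans.append(span)
        return span

    def children_of(self, span: Span) -> list[Span]:
        return [s for s in self.spans if s.parent_id == span.span_id]


# One hypothetical agent turn: the model plans, calls a tool, then answers.
trace = Trace("turn-001")
plan = trace.start("model", "plan-next-step")
lookup = trace.start("tool", "crm.get_customer", parent=plan)
answer = trace.start("model", "compose-answer", parent=lookup)

print([(s.kind, s.name) for s in trace.children_of(plan)])
```

With this linkage, a failed or unexpected tool call can be traced back to the exact model call and prompt that produced it, rather than appearing as an isolated API request.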

6) It offers a cleaner architecture for AI platform teams than API retrofitting

Apigee’s MCP value proposition is partly that you can reuse your API estate.[2]

That is good.

But retrofitting APIs into agent tools is not the same as running a purpose-built AI control plane.

A platform team that adopts TrueFoundry gets a cleaner control plane for:

- AI identities,

- virtual AI models,

- tool identities,

- prompt versions,

- rate limits and budgets,

- trace evidence,

- and deployment choices focused on AI workloads, including self-hosted, air-gapped, and regulated environments.[4][5][6][7][8][9][10][11]

That reduces the amount of AI-specific control logic you have to assemble yourself out of general API primitives.

§4 — Important Apigee limitations in the current public MCP docs

A rigorous superiority case should also call out current public limitations precisely.

1) Apigee MCP overview currently does not apply to Apigee hybrid

Google’s MCP in Apigee overview explicitly says the page applies to Apigee, but not to Apigee hybrid.[3]

That does not mean Google has no future or adjacent answer. It does mean the current public MCP overview is not framed as a universal hybrid story.

For organizations with strict self-managed or hybrid-first requirements, that matters.

2) API Hub MCP tool handling has documented caveats

Google documents at least two current caveats in API Hub MCP tool handling:

- API Hub parses MCP tools from the latest deployed revision only.

- The underlying OAS file is not currently converted to the MCP specification schema; Google marks that as a known issue.[22]

Those are not disqualifying limitations. But they are exactly the kind of detail rigorous buyers should care about.

3) Some AI policies are extensible and can have cost / environment implications

Google’s PromptTokenLimit and LLMTokenQuota docs state these are extensible policies and may have cost or utilization implications depending on license and environment type.[12][13][14]

Again, that is not a fatal flaw. It is simply part of the real operational story.

§5 — The comparison matrix

TrueFoundry vs Apigee: capability-by-capability

§6 — The decisive technical point: reuse is not the same as fitness

Apigee’s strongest argument is reuse:

“You already have APIs. We can govern them and expose them to agents.”

That is compelling.

But reuse is not the same thing as platform fitness.

The deeper enterprise question is whether the system can govern the whole agentic loop.

This is where TrueFoundry’s purpose-built architecture has the clear edge.

Apigee can expose APIs to agents.

TrueFoundry governs the end-to-end agentic execution path.

That is a higher-order capability.

§7 — Editorial verdict

The fair verdict

Apigee is a very good answer to this question:

“How do I apply enterprise API governance to APIs that agents should call?”

TrueFoundry is the better answer to this question:

“How do I operate enterprise agentic AI as one governed runtime across models, prompts, tools, and traces?”

The sharper verdict

Apigee is an excellent API platform extending into agent tooling. TrueFoundry is the stronger enterprise AI platform for teams whose control plane must span models and tools together.

That is the rigorous, technically defensible superiority case.

References

[1] Google Cloud, What is Apigee? https://docs.cloud.google.com/apigee/docs/api-platform/get-started/what-apigee

[2] Google Cloud Blog, MCP support for Apigee. https://cloud.google.com/blog/products/ai-machine-learning/mcp-support-for-apigee

[3] Google Cloud, Model Context Protocol (MCP) in Apigee overview. https://docs.cloud.google.com/apigee/docs/api-platform/apigee-mcp/apigee-mcp-overview

[4] TrueFoundry, Introduction to AI Gateway. https://www.truefoundry.com/docs/ai-gateway/intro-to-llm-gateway

[5] TrueFoundry, Routing Config / Virtual Models. https://www.truefoundry.com/docs/ai-gateway/load-balancing-overview

[6] TrueFoundry, Rate Limiting. https://www.truefoundry.com/docs/ai-gateway/ratelimiting

[7] TrueFoundry, Budget Limiting. https://www.truefoundry.com/docs/ai-gateway/budgetlimiting

[8] TrueFoundry, Cost Tracking. https://www.truefoundry.com/docs/ai-gateway/cost-tracking

[9] TrueFoundry, Caching (Exact and Semantic). https://www.truefoundry.com/docs/ai-gateway/caching

[10] TrueFoundry, MCP Gateway overview. https://www.truefoundry.com/docs/ai-gateway/mcp/mcp-overview

[11] TrueFoundry, Authentication and Security for MCP Servers. https://www.truefoundry.com/docs/ai-gateway/mcp/mcp-gateway-auth-security

[12] Google Cloud, Policy reference overview. https://docs.cloud.google.com/apigee/docs/api-platform/reference/policies/reference-overview-policy

[13] Google Cloud, PromptTokenLimit policy. https://docs.cloud.google.com/apigee/docs/api-platform/reference/policies/prompt-token-limit-policy

[14] Google Cloud, LLMTokenQuota policy. https://docs.cloud.google.com/apigee/docs/api-platform/reference/policies/llm-token-quota-policy

[15] Google Cloud, SanitizeUserPrompt policy. https://docs.cloud.google.com/apigee/docs/api-platform/reference/policies/sanitize-user-prompt-policy

[16] Google Cloud, SanitizeModelResponse policy. https://docs.cloud.google.com/apigee/docs/api-platform/reference/policies/sanitize-llm-response-policy

[17] Google Cloud, Get started with Apigee Model Armor policies. https://docs.cloud.google.com/apigee/docs/api-platform/tutorials/using-model-armor-policies

[18] TrueFoundry, Auth Overrides. https://www.truefoundry.com/docs/ai-gateway/mcp/mcp-server-auth-overrides

[19] TrueFoundry, Virtual MCP Server. https://www.truefoundry.com/docs/ai-gateway/mcp/virtual-mcp-server

[20] TrueFoundry, OpenAPI to MCP Server. https://www.truefoundry.com/docs/ai-gateway/mcp/openapi-mcp-server

[21] TrueFoundry, Hosted Stdio-based MCP Server and MCP deployment overview. https://www.truefoundry.com/docs/ai-gateway/mcp/stdio-mcp-server, https://www.truefoundry.com/docs/mcp-server-deployment/mcp-server-deployment-overview

[22] Google Cloud, Manage MCP tools in API Hub. https://docs.cloud.google.com/apigee/docs/apihub/manage-mcp-tools

[23] TrueFoundry, Guardrails getting started, Cedar Guardrails, OPA Guardrails. https://www.truefoundry.com/docs/ai-gateway/guardrails-getting-started, https://www.truefoundry.com/docs/ai-gateway/cedar-guardrails, https://www.truefoundry.com/docs/ai-gateway/opa-guardrails

[24] TrueFoundry, Prompt Management. https://www.truefoundry.com/docs/ai-gateway/prompt-management

[25] TrueFoundry, Prompt Versioning. https://www.truefoundry.com/docs/ai-gateway/prompt-versioning

[26] TrueFoundry, AI Gateway Playground. https://www.truefoundry.com/docs/ai-gateway/playground-overview
