Cursor Security: Complete Guide to Risks, Data Flow & Best Practices


Cursor sits between your source code and a set of cloud-hosted AI models, so the way it handles that code affects your security posture more than a traditional editor does. This guide is designed for DevSecOps teams, security engineers, and developers who need a clear picture of what happens to their code: what Cursor security is, how Cursor works, the types of data it handles, past vulnerabilities, key risks, and best practices for using it safely.


What Is Cursor Security and Why Does It Matter?

AI-powered IDEs have quickly evolved from “nice-to-have” tools to core development infrastructure, often faster than security teams can adapt. Cursor, by Anysphere, is a leading example: a VS Code–based editor with deep AI integration that can generate, refactor, and even execute code or external actions.

Cursor is no longer niche. By mid-2025, reports highlighted its rapid growth, placing its revenue around $500M ARR [Source]. Cursor itself states it’s used by “tens of thousands of enterprises,” with customers publicly named in its Enterprise launch post [Source]. NVIDIA’s CEO has also publicly acknowledged widespread use of AI coding tools, including Cursor, within NVIDIA. [Source] This growth has been largely organic, driven by viral adoption, developer communities, and a generous freemium model.

What makes Cursor unique is its approach: you’re no longer just writing code manually; you’re directing an AI agent that reads, writes, and executes code for you, a trend often called “vibe coding.”

However, embedding agentic AI into the IDE introduces new security challenges. Unlike traditional editors, Cursor operates in a cloud-connected, semi-autonomous environment where code continuously interacts with external systems.

For enterprises, these risks are high-stakes. With large-scale adoption and disclosed vulnerabilities such as CVE-2025-54135 and CVE-2025-54136, understanding how Cursor handles security is essential.

Anatomy of Cursor: Understanding the Attack Surface

Before diving into cursor security infrastructure, it’s important to understand the Cursor engine. Cursor isn’t just a static IDE; it’s a collection of high-autonomy agents working together. In security terms, every feature of Cursor represents a security “ask”, which is also a potential exposure. Here’s how Cursor’s primary capabilities translate into an attack surface.

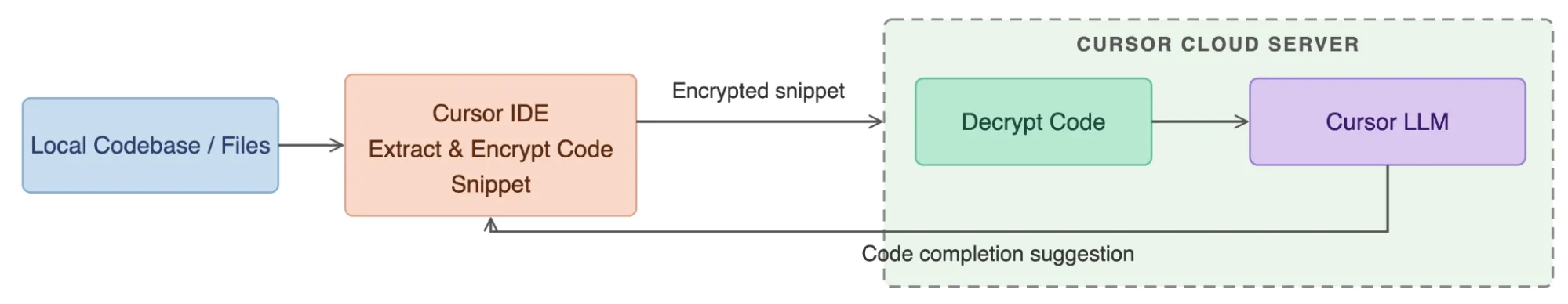

1. Code Autocomplete

Cursor predicts code inline as you type. Small snippets of your active file are encrypted and sent to Cursor’s servers, where a custom LLM generates completions in under a second.

Security Risk: Because snippets leave the machine as you type, organizations need zero data retention (ZDR): completion requests, even at very high volume, should be processed in memory only and never written to persistent logs. Any lapse could expose sensitive code.

2. Chat Assistant

Cursor can collaborate to build features or fix bugs. It uses agentic reasoning to search your indexed codebase and combine snippets into rich prompts for the AI model.

Security Risk: Despite improvements over snippet-based AI, indirect prompt injection is possible. Malicious instructions hidden in third-party libraries, README files, or comments could "poison" the agent, causing it to execute unauthorized tasks or leak internal logic to an external URL.

3. Inline Edit Mode (Cmd+K)

Cursor refactors targeted blocks of code in a precise, surgical manner. The selected code and instructions are sent to the model, which returns a diff that is applied directly to your file.

Security Risk: Rapid AI refactors can introduce subtle security flaws, especially under reviewer fatigue. Examples include unvalidated inputs, hardcoded secrets, or logic errors hallucinated by the AI. Developers must carefully inspect edits before accepting them.


4. Automated Code Review with BugBot

BugBot reviews Pull Requests and suggests fixes, requiring read/write access to your private GitHub repositories.

Security Risk: Your CI/CD pipeline now depends partially on Cursor’s cloud infrastructure. BugBot must be treated as a privileged entity, and its permissions should be strictly scoped to minimize risk.

5. Background Agents

Cursor offloads long-running tasks to background agents, such as running full test suites on isolated Ubuntu-based VMs in Cursor’s AWS cloud.

Security Risk: This introduces remote code execution into the threat model. Cloud tenant isolation and VM security are critical because proprietary code moves from your local machine to Cursor-managed cloud environments.

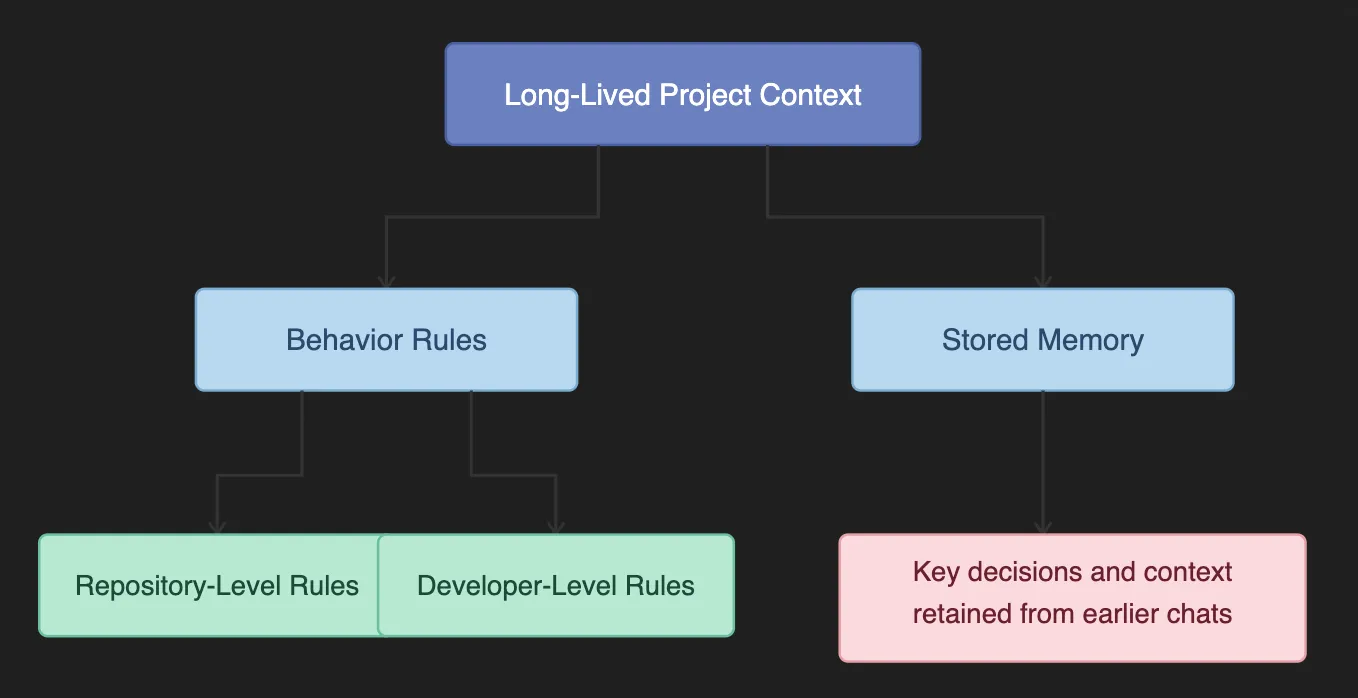

6. Persistent Behaviour

Cursor remembers project-specific style guides and past architectural decisions. It injects instructions from .cursorrules files and “memories” into the context of every prompt.

Security Risk: A compromised .cursorrules file in a shared repository could inject persistent, invisible biases into the AI. For example, attackers could force the AI to consistently use a malicious library for encryption across the team’s IDEs.

7. Codebase Intelligence

Cursor can “understand” and search your entire project based on semantic meaning, not just keywords. It uses Merkle Tree synchronization to map code into embeddings stored in a remote vector database (Turbopuffer).

Security Risk: Sensitive logic or secrets (like .env files) could be unintentionally vectorized if they aren’t excluded with .cursorignore. This exposes critical information to remote storage.
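One way to catch this before indexing is to check a repository's file list against its ignore patterns. The sketch below is illustrative only: `SENSITIVE_GLOBS` is a hypothetical team-defined list, and `fnmatch` only approximates ignore-file matching (it does not handle negation or directory-anchored patterns), so treat it as a rough pre-flight check rather than a description of how Cursor evaluates .cursorignore.

```python
from fnmatch import fnmatch

# Hypothetical list of file patterns a team never wants indexed.
SENSITIVE_GLOBS = [".env", ".env.*", "*.pem", "*.key", "id_rsa", "secrets/*"]


def load_patterns(text: str) -> list[str]:
    """Parse ignore-file text, skipping blank lines and comments."""
    lines = (line.strip() for line in text.splitlines())
    return [line for line in lines if line and not line.startswith("#")]


def _matches(path: str, patterns: list[str]) -> bool:
    """Approximate match: test both the full path and the bare file name."""
    name = path.rsplit("/", 1)[-1]
    return any(fnmatch(path, p) or fnmatch(name, p) for p in patterns)


def unprotected(paths: list[str], ignore_patterns: list[str]) -> list[str]:
    """Return sensitive-looking paths that no ignore pattern covers."""
    return [
        p for p in paths
        if _matches(p, SENSITIVE_GLOBS) and not _matches(p, ignore_patterns)
    ]
```

Running `unprotected` over the output of a file listing in CI gives a quick signal that a new secret-bearing file has appeared without a matching ignore rule.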

Now that you understand Cursor’s capabilities and attack surface, the next step is to examine where your data goes, how it is processed, and the multi-layered infrastructure Cursor uses to keep your code safe.

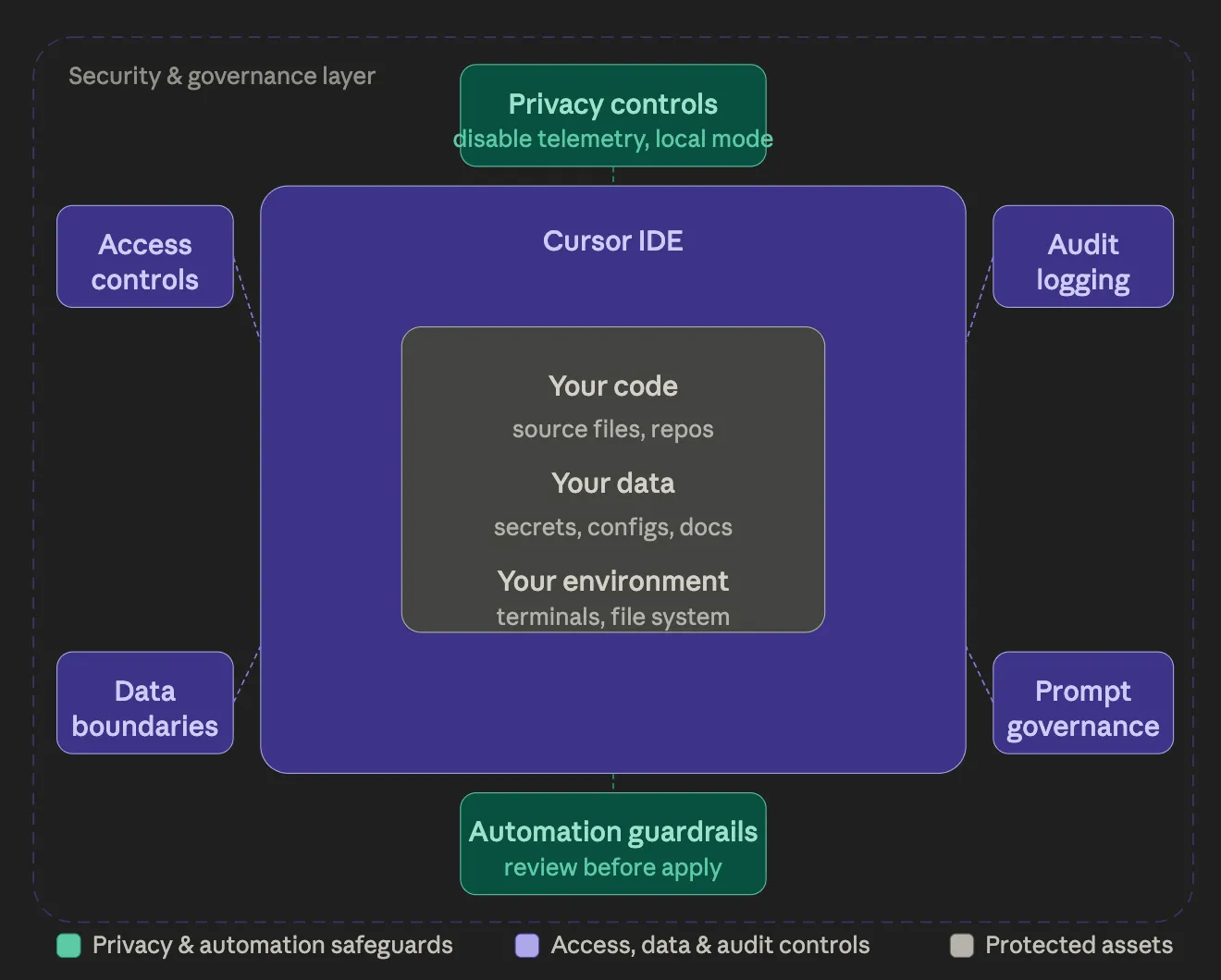

How does Cursor handle your code?

Cursor integrates tightly with your IDE, bringing AI-driven automation to your development workflow. While this enables speed and flexibility, many developers treat their IDE as a black box.

If you’re a CISO, security engineer, or senior AI developer, one key question arises:

“Where exactly does my code go?”

Cursor addresses this through a three-tiered architecture that creates a clear chain of custody, from your local machine, through Cursor’s cloud orchestration, to AI inference, and back:

- Tier 1 – Client (Local Machine)

- Tier 2 – Cursor Backend (Gateway)

- Tier 3 – Third-Party LLM Subprocessors


Tier 1 – Client (Your Local Machine)

The Cursor client, a fork of VS Code, keeps your most sensitive data anchored locally and performs all critical pre-processing before anything leaves your environment. It indexes your code, creates local embeddings, maintains a Merkle tree of file hashes, and performs semantic searches to select only the relevant snippets to send to the cloud. Cursor also respects .cursorignore and .gitignore rules, filtering out sensitive files, secrets, or PII.

From a security perspective, this provides data minimization (only a narrow subset of code ever leaves your machine) and pre-transmission filtering, making the client the strongest boundary for protecting your source code.
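The Merkle tree of file hashes mentioned above can be illustrated with a small sketch. This is a generic, simplified construction and not Cursor's actual implementation: the function names and the leaf format (path, a null byte, then content) are assumptions made for the example. The idea it shows is that two sides can compare a single root hash to learn whether anything changed, then compare leaves to find which files differ.

```python
import hashlib


def sha256(data: bytes) -> str:
    return hashlib.sha256(data).hexdigest()


def file_leaves(files: dict[str, bytes]) -> dict[str, str]:
    """Hash each file's path and content; sorted so the order is stable."""
    return {
        path: sha256(path.encode() + b"\0" + files[path])
        for path in sorted(files)
    }


def merkle_root(leaf_hashes: list[str]) -> str:
    """Fold leaf hashes pairwise until a single root hash remains."""
    if not leaf_hashes:
        return sha256(b"")
    level = list(leaf_hashes)
    while len(level) > 1:
        if len(level) % 2:  # odd count: duplicate the last node
            level.append(level[-1])
        level = [
            sha256((level[i] + level[i + 1]).encode())
            for i in range(0, len(level), 2)
        ]
    return level[0]


def changed_files(old: dict[str, str], new: dict[str, str]) -> list[str]:
    """List paths whose leaf hash was added, removed, or modified."""
    return sorted(p for p in old.keys() | new.keys() if old.get(p) != new.get(p))
```

A production tree would compare intermediate nodes to narrow down changes without walking every leaf; this sketch skips that step for brevity.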

Tier 2 – Cursor Backend (Gateway)

The Cursor backend acts as a secure orchestration layer, assembling AI prompts without storing your source code. Hosted primarily on AWS, this “Cursor Gateway” combines user queries with the snippets selected on the client and persistent project instructions (like .cursorrules). It applies prompt engineering, routing logic, and safety controls, and even user-supplied API keys pass through for governance.

In Privacy Mode, all code is processed in-memory with zero-retention logging, and no long-term storage occurs. The Gateway represents the last Cursor-controlled boundary before data reaches third-party LLM providers.

Tier 3 – Third-Party LLM Subprocessors

Inference occurs through third-party LLM providers such as OpenAI, Anthropic, or Google. These models receive the final assembled prompt, process it, and stream the output back through the backend to the client.

For enterprise customers, Zero Data Retention (ZDR) agreements ensure that code is not stored beyond the inference window, prompts are not used for training, and no secondary retention occurs. Selecting compliant providers and enforcing contractual guarantees is critical to maintaining enterprise security and compliance.


How does Cursor handle different types of data?

Cursor interacts with multiple types of data, each flowing through different tiers and carrying unique security considerations. The table below summarizes the key data types, their primary tiers, examples, and security notes.

What are the security risks with Cursor?

Cursor’s AI capabilities bring powerful automation, but they also introduce new security challenges. The seven major risks are:

1. Malicious Prompt Execution

Attackers can craft prompts designed to trick Cursor’s AI into executing unintended commands or modifying critical project files. Since these actions may run directly in your terminal or file system, exploitation can result in code corruption or even full environment compromise.

2. Cross-Project Context Contamination

Cursor uses project and conversational context to enhance AI suggestions. If this context is poisoned, for example, with misleading snippets or instructions, it can carry over to other projects. This may introduce logic errors, security vulnerabilities, or the accidental leakage of sensitive information.

3. Compromised Rules Files

Rules files govern how Cursor agents behave, including triggers and execution parameters. A tampered rules file can hide malicious instructions, granting attackers persistent access or the ability to run arbitrary code whenever the file is loaded.
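A reviewer cannot catch instructions they cannot see. One technique for hiding text, offered here as an assumption rather than something this guide documents, is to embed invisible Unicode characters such as zero-width spaces or bidirectional controls. The sketch below flags every character in the Unicode "format" category (`Cf`) so a rules file can be checked before it is merged; the function name is hypothetical.

```python
import unicodedata


def hidden_characters(text: str) -> list[tuple[int, int, str]]:
    """Return (line, column, name) for each invisible format character.

    Category "Cf" covers zero-width characters and bidirectional
    controls, which render as nothing in most editors and diff views.
    """
    findings = []
    for line_no, line in enumerate(text.splitlines(), start=1):
        for col, ch in enumerate(line, start=1):
            if unicodedata.category(ch) == "Cf":
                name = unicodedata.name(ch, f"U+{ord(ch):04X}")
                findings.append((line_no, col, name))
    return findings
```

This catches only one hiding technique. Plain-text instructions buried in a long rules file still need a human reviewer.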

4. Unsupervised Agent Auto-Runs

Auto-run mode allows agents to perform commands without human approval. While convenient, it removes critical review steps, increasing the risk of unsafe scripts executing unnoticed and potentially introducing malware or compromising the development environment.

5. Credential Exposure

AI-generated outputs can accidentally include API keys, authentication tokens, or login credentials. If these secrets are exposed in logs, version control, or shared environments, attackers could gain direct access to sensitive systems and data.
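A lightweight scan of AI-generated output before it is committed or logged can catch the most obvious cases. The patterns below are illustrative assumptions, not a complete rule set; dedicated secret scanners cover far more formats and use entropy checks as well.

```python
import re

# Illustrative patterns only; real scanners use much larger rule sets.
SECRET_PATTERNS = {
    "aws_access_key_id": re.compile(r"\bAKIA[0-9A-Z]{16}\b"),
    "private_key_block": re.compile(r"-----BEGIN [A-Z ]*PRIVATE KEY-----"),
    "generic_assignment": re.compile(
        r"(?i)\b(api[_-]?key|token|password|secret)\b\s*[:=]\s*['\"][^'\"]{8,}['\"]"
    ),
}


def find_secrets(text: str) -> list[tuple[int, str]]:
    """Return (line_number, pattern_name) for each suspected secret."""
    hits = []
    for line_no, line in enumerate(text.splitlines(), start=1):
        for name, pattern in SECRET_PATTERNS.items():
            if pattern.search(line):
                hits.append((line_no, name))
    return hits
```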

6. Malicious Dependency Execution

Cursor can install packages automatically as part of AI workflows. Malicious or compromised packages from public registries can contain obfuscated scripts that exfiltrate data, deploy malware, or execute unauthorized commands on installation.
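One common mitigation is to compare every package an agent proposes against a team-approved list before installation. In the sketch below, the `APPROVED` set and both function names are hypothetical; name normalization follows the PEP 503 rule so that `Numpy` and `numpy` compare equal, and a typosquatted name such as `reqeusts` is flagged.

```python
import re

# Hypothetical team allowlist of approved Python packages.
APPROVED = {"requests", "numpy", "pydantic"}


def package_name(requirement: str) -> str:
    """Extract and normalize the project name from a requirement string."""
    # Cut at the first version specifier, extras bracket, marker, or space.
    name = re.split(r"[<>=!~\[; ]", requirement.strip(), maxsplit=1)[0]
    # PEP 503 normalization: collapse runs of -, _ and . then lowercase.
    return re.sub(r"[-_.]+", "-", name).lower()


def unapproved(requirements: list[str]) -> list[str]:
    """Return the requirement strings whose package is not on the allowlist."""
    return [r for r in requirements if package_name(r) not in APPROVED]
```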

7. Namespace Collisions and Agent Spoofing

When multiple agents share the same namespace, a malicious actor can create a spoofed agent that impersonates a trusted one. This can hijack Cursor’s agent system, allowing attackers to execute unauthorized commands or exfiltrate sensitive information.
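A simple defensive check is to detect when the same name is registered by more than one source. The sketch below assumes a hypothetical registry that maps each source (for example, an MCP server) to the agent or tool names it exposes. The article does not describe Cursor's internal registry, so this is only an illustration of the collision check itself.

```python
from collections import defaultdict


def colliding_names(registry: dict[str, list[str]]) -> dict[str, list[str]]:
    """Map each name claimed by more than one source to those sources.

    Names are compared case-insensitively, since look-alike casing is
    an easy way to impersonate a trusted entry.
    """
    owners = defaultdict(list)
    for source, names in registry.items():
        for name in names:
            owners[name.lower()].append(source)
    return {name: sorted(srcs) for name, srcs in owners.items() if len(srcs) > 1}
```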


Real-World Exploits: CurXecute and MCPoison

In 2025, researchers identified two high-profile vulnerabilities in how Cursor handles MCP servers, highlighting the real-world risks of agentic AI in IDEs.

CurXecute

The first, CurXecute (CVE-2025-54135), demonstrated the dangers of indirect prompt injection. Researchers found that Cursor allowed certain files to be created within a workspace without requiring user approval.

This meant that an attacker could craft a malicious message, such as a Slack notification monitored by the AI agent, that would trick the AI into writing a harmful .cursor/mcp.json file. Because older versions of Cursor did not prompt for confirmation when creating new files, this flaw could be exploited to achieve Remote Code Execution (RCE) simply by sending a text message.

MCPoison

The second vulnerability, MCPoison (CVE-2025-54136), took advantage of a trust-validation flaw in Cursor’s handling of MCP server approvals. In earlier versions, once a server was approved, Cursor would trust that server indefinitely. An attacker could initially commit a benign configuration to a shared repository and wait for it to be approved.

Later, they could silently replace the command with a malicious payload, such as a reverse shell. Since the server name remained unchanged, Cursor would not prompt the user again for approval, enabling stealthy execution of malicious commands.

Together, these vulnerabilities underscore the importance of strict approval workflows, monitoring configuration files, and maintaining up-to-date versions of AI-enabled IDEs.
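The MCPoison pattern works because approval is tied to a name rather than to what the server actually runs. A general mitigation is to bind approval to a hash of the full configuration so that any change invalidates it. The sketch below illustrates that idea only; `ApprovalStore` and `fingerprint` are hypothetical names, and this is not a description of how Cursor implemented its fix.

```python
import hashlib
import json


def fingerprint(server_config: dict) -> str:
    """Hash a canonical JSON form so key order does not affect the result."""
    canonical = json.dumps(server_config, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode()).hexdigest()


class ApprovalStore:
    """Remembers which exact configuration was approved for each server name."""

    def __init__(self) -> None:
        self._approved: dict[str, str] = {}

    def approve(self, name: str, config: dict) -> None:
        self._approved[name] = fingerprint(config)

    def is_trusted(self, name: str, config: dict) -> bool:
        # Trust requires the same name AND the same command, args, and env.
        return self._approved.get(name) == fingerprint(config)
```

With this design, swapping a benign command for a reverse shell under the same server name changes the fingerprint, so the entry falls back to "needs approval".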

Cursor best practices

Securing Cursor means layering controls to prevent mistakes, misleading suggestions, or unexpected behavior from turning into incidents. Each layer adds human oversight, limits autonomous actions, and improves visibility. The three levels below build on one another:

Level 1: Immediate IDE Hardening (Individual Developers)

These are the essential steps that every developer should implement from day one:

- Turn off Terminal Auto-Run. Auto-Run may be convenient, but it bypasses the pause where errors can be caught. Every AI-suggested command should require explicit review before execution to prevent accidental destructive actions.

- Enable Privacy Mode. This ensures that code isn’t retained outside the local session. Privacy Mode maintains the user experience while significantly reducing the exposure of sensitive code.

- Protect dotfiles and credentials. Many real-world leaks come from .env files, SSH keys, and other configuration files stored on the local machine. Enabling dotfile protection ensures these files are never accessed by AI agents.

If teams implement these three foundational steps, they mitigate most obvious individual-level risks.
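The principle behind disabling Auto-Run, that nothing executes without an explicit human decision, can be sketched as a small approval gate. This is an illustration of the pattern and not Cursor's mechanism; `run_with_approval` and `ask_on_terminal` are hypothetical names.

```python
import shlex
import subprocess
from typing import Callable, Optional


def run_with_approval(
    command: str, approve: Callable[[str], bool]
) -> Optional[subprocess.CompletedProcess]:
    """Run a suggested command only if the approval callback returns True."""
    if not approve(command):
        return None  # rejected: nothing is executed
    # Split into an argument list and run without a shell, so operators
    # such as ';' or '|' are not interpreted.
    return subprocess.run(
        shlex.split(command), capture_output=True, text=True, check=False
    )


def ask_on_terminal(command: str) -> bool:
    """Example approval callback: show the command and require a typed 'y'."""
    return input(f"Run `{command}`? [y/N] ").strip().lower() == "y"
```

Passing `ask_on_terminal` as the callback reproduces the review pause described above; a test or CI job can pass a stricter callback that always refuses.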

Level 2: Project-Level Guardrails (Team Defaults)

Once Cursor is used by multiple developers on a team, consistency and enforceable practices become essential:

- Always use a .cursorignore file. Every repository should explicitly exclude sensitive files, PII, or environment data from AI indexing, embeddings, and prompts. This keeps confidential material from leaving the local machine.

- Treat .cursorrules like production code. Rules files define persistent AI behavior and influence decisions across the project. Require a second set of eyes before merging any changes, and think of them as policy rather than personal preferences.

- Enforce consistent project settings. Standardization prevents accidental leaks, ensures reproducible AI behavior, and reduces the risk of logic or context contamination across projects.

- Use version control diligently. Track all changes in Git or a similar system so AI-suggested edits can be rolled back if needed.

Level 3: Enterprise Controls (Centralized Governance with an AI Gateway)

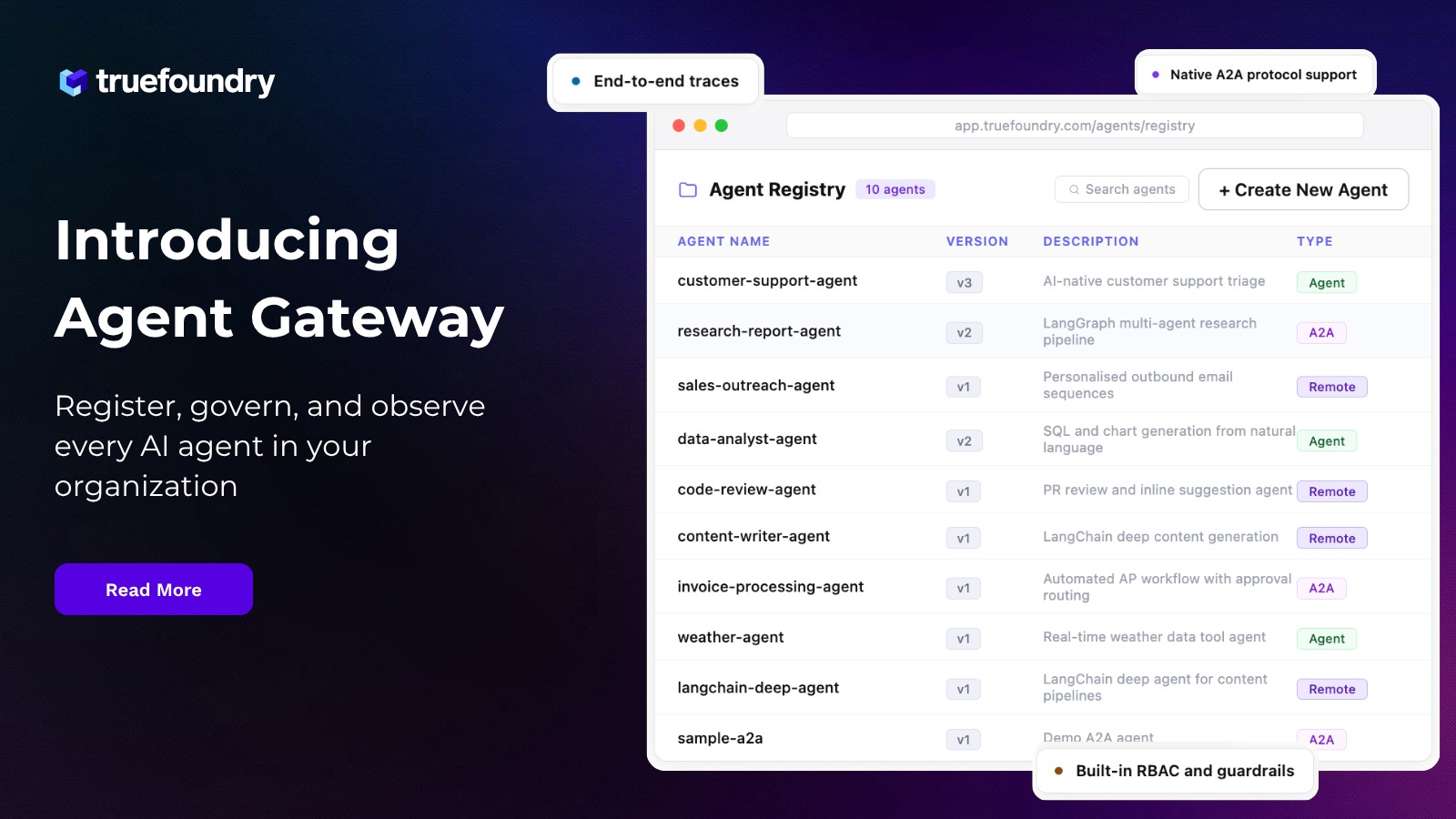

At a certain scale, relying on “please configure this correctly” is insufficient. Centralized controls such as an AI gateway are needed for visibility, routing, and enforceable guardrails.

Put something in the middle

Once Cursor is used across teams, you need a central place to see and control what agents are doing. The simplest pattern is to route model and tool traffic through a gateway layer, instead of letting every laptop talk directly to providers and MCP servers.

For example, TrueFoundry AI Gateway is designed as a proxy between applications and LLM providers, and can also sit between applications and MCP servers, so teams can standardize routing and add governance in one place. In practice, this gives security teams a clean control point for things like request observability, policy enforcement, and guardrails that validate or transform requests and responses.

This matters because most AppSec tools (SAST/DAST/SCA) only see the code after it’s written. A gateway gives you visibility into AI usage while it’s happening, which is where prompt injection, unsafe tool use, and policy violations usually start.

Important boundary: This control point governs model/tool traffic, but it does not automatically prevent local actions (like running terminal commands) unless your environment and settings enforce those constraints separately.
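To make the gateway pattern concrete, the sketch below shows the three jobs described above (policy enforcement, request transformation, and observability) in a few lines. It is a generic illustration and does not represent the TrueFoundry AI Gateway API: the `Gateway` class, the model names in `ALLOWED_MODELS`, and the single redaction pattern are all assumptions for the example.

```python
import re
from dataclasses import dataclass, field

# Hypothetical policy: which models traffic may be routed to.
ALLOWED_MODELS = {"model-a", "model-b"}

# Illustrative redaction rule: AWS-style access key IDs.
ACCESS_KEY = re.compile(r"\bAKIA[0-9A-Z]{16}\b")


@dataclass
class Gateway:
    """Minimal control point: enforce policy, transform, and record."""

    audit_log: list = field(default_factory=list)

    def handle(self, user: str, model: str, prompt: str) -> dict:
        if model not in ALLOWED_MODELS:
            self.audit_log.append((user, model, "blocked"))
            raise PermissionError(f"model {model!r} is not allowed")
        cleaned, count = ACCESS_KEY.subn("[REDACTED]", prompt)
        self.audit_log.append((user, model, "redacted" if count else "allowed"))
        # A real gateway would forward this request to the provider here.
        return {"model": model, "prompt": cleaned}
```

Because every request passes through `handle`, the audit log gives security teams one place to see who called which model and whether a guardrail fired.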

Standardize identity and data policies: If Cursor is widely used, it should be covered by the same rules as other development tools. Single Sign-On, consistent settings, and zero data retention policies reduce guesswork and make audits easier.

At this stage, Cursor stops being “just an editor” and becomes part of your development infrastructure.

Conclusion

Cursor feels powerful because it removes friction, and that’s its purpose. But friction exists for a reason, especially when it comes to executing commands or managing dependencies. Teams that use Cursor safely don’t fight this friction, nor do they trust the AI blindly. They let the AI handle thinking and drafting, while humans remain responsible for approval and accountability.

The mental model is simple and effective: AI can suggest, but humans decide.

As development tools grow more agentic, success won’t belong to those who move the fastest at any cost. It will belong to the teams that move fast with intention, balancing speed with deliberate oversight and security.

Secure and govern your AI workflows with TrueFoundry AI Gateway. Book a demo.

Frequently Asked Questions

Is Cursor a security risk?

Cursor introduces security risks like prompt injection, agent auto-runs, and exposure through third-party integrations. Risks are real but manageable when proper controls, privacy settings, and human oversight are applied. Enterprise teams should treat Cursor as part of their security infrastructure.

Is Cursor safe for privacy?

Cursor can be safe for privacy if Privacy Mode is enabled, sensitive files are protected, and rules like .cursorignore are used. Without these measures, snippets of code could leave the local environment, potentially exposing sensitive or proprietary information.

Does Cursor track IP?

Cursor may log minimal telemetry, which could include network identifiers like IP addresses for diagnostics or analytics. Enterprise privacy policies and Privacy Mode help limit exposure, but users should assume some network-level metadata may be visible to service providers.

Is Cursor Privacy Mode safe?

Privacy Mode is effective in reducing code retention outside the local session, minimizing exposure to AI servers. While it strengthens privacy, combining it with dotfile protection, .cursorignore, and controlled integrations ensures comprehensive security for sensitive projects.
