7 Things You Must Get Right to Put LLM Agents in Production

Built for Speed: ~10ms Latency, Even Under Load

Blazingly fast way to build, track and deploy your models!

- Handles 350+ RPS on just 1 vCPU — no tuning needed

- Production-ready with full enterprise support

Getting an LLM agent to work in a demo is satisfying. Getting it to work reliably in production for real users, at scale, day after day is a different discipline entirely.

In a recent video, developer educator Sam explored exactly this gap. He laid out a seven-part framework for teams serious about moving beyond the proof of concept. The final three principles he covers, tools and MCP servers, monitoring and tracing, and agent evals, are where most production deployments quietly fall apart. But they sit on top of four foundations that need to be solid first.

This post expands that framework into a complete guide. If you're an engineering team, a CTO, or a founder moving an agentic AI system toward real users, these are the seven things you can't shortcut.

Why LLM Agents in Production Break

The failure pattern is almost always the same. An agent performs brilliantly in a notebook - one user, controlled inputs, a patient evaluator. Then it meets the real world: concurrent sessions, inconsistent inputs, tool outages, compliance requirements, and users who behave nothing like the test cases.

The models aren't the problem. Today's frontier LLMs are genuinely capable. The problem is the operational layer — everything that wraps around the model. This is what LLMOps is: the discipline of running LLM-based systems in production with the same rigor you'd bring to any critical piece of software. Most teams building LLM agents in production learn its importance the hard way.

Here are the seven pillars.

1. Prompt Management

Prompts are the most fragile part of any LLM system — and most teams treat them like Post-it notes.

In prototypes, prompts live in Python strings inside Jupyter notebooks. Nobody tracks when they changed, what the previous version was, or whether a tweak last Tuesday is why the agent started behaving differently this week. That's fine for experimentation. In production, it's a ticking clock.

When a prompt changes — even subtly — it can silently alter agent behavior in ways that don't show up immediately. A character removed from a system prompt. An instruction reworded. A few-shot example swapped. Each of these is a potential regression with no audit trail.

What good looks like:

- Every system prompt and few-shot example lives in a versioned prompt registry — not in application code

- Changes are tracked with authorship, timestamps, and diff views

- You can roll back to any previous version in seconds

- Staging and production environments use explicitly pinned prompt versions, never "latest"

Prompt management is the bedrock of any serious LLMOps practice. Every other layer of the stack depends on having stable, auditable inputs to the model.

2. State and Memory Management

Multi-step agents are stateful. Managing that state cleanly across turns, tool calls, and sessions is one of the hardest unsolved problems in production agentic AI — and one of the least discussed.

An agent in production needs to maintain context within a conversation, across the steps of a multi-tool task, and sometimes even across sessions for returning users. Get any of these wrong and you get agents that forget critical context mid-task, bleed information between users, or arrive at wrong conclusions because they're reasoning over stale state.

The memory question isn't just technical — it's architectural. What lives in the context window? What gets summarized? What persists to a vector store? What gets discarded entirely? There are no universal answers, but there needs to be a deliberate answer for your use case.

What good looks like:

- A documented memory architecture: short-term context, long-term storage, and summarization rules all explicitly defined

- Session state that is properly scoped per user and cannot leak between tenants

- Retrieval pipelines (RAG, vector search) that are tested against real queries — not assumed to work

- Graceful degradation: the agent should handle missing or truncated context without hallucinating a substitute

Memory management is often treated as an afterthought. In production, it's the difference between an agent that feels coherent and trustworthy and one that feels erratic.

3. Multi-User Architecture and Access Control

If you're building for one user, skip this section. If you're building for a team, a company, or any multi-tenant use case — and most serious LLM agents in production are — this is non-negotiable from day one.

Multi-user environments introduce a cascade of concerns that don't exist in prototypes: who can invoke which agents, what data can each user access, how are costs attributed, and what's the audit trail when something goes wrong? LLM agents often operate with elevated permissions — they query databases, call external APIs, write to storage. Without proper governance, even a well-intentioned agent becomes a security and compliance liability.

Retrofitting access control onto an agent architecture that wasn't designed for it is expensive and error-prone. Build it in at the start.

What good looks like:

- Role-based access control (RBAC) that governs which users can trigger which agents and access which tools

- Hard data isolation between tenants — no possibility of cross-user context leakage

- Immutable audit logs for every agent action: who triggered it, what it did, what data it touched, when

- Per-user and per-team rate limits and cost caps that prevent runaway spend

- Compliance alignment: SOC 2, HIPAA, GDPR mapped to actual agent behaviors — not just infrastructure certifications

4. Model Management and AI Gateway

In a prototype you call one model. In production you're managing a portfolio: different providers, different model sizes, different latency/cost/capability tradeoffs — and you need intelligent routing between them. This kind of AI agent orchestration — directing the right task to the right model at the right cost — is what separates a production-grade system from a prototype.

An AI gateway is the traffic controller for all your LLM calls. It centralizes API key management, enforces rate limits, routes requests based on cost or task type, provides fallback handling when a provider has an outage, and gives you a single observability surface across every model call in the organization.

Without a gateway, you end up with shadow AI — teams spinning up their own model connections with their own keys, their own costs, and no visibility into what's being called. At scale, this is both a governance failure and a cost problem.

What good looks like:

- All agent LLM traffic routes through a centralized gateway — no direct model calls from application code

- AI agent orchestration rules: complex reasoning goes to frontier models, simpler tasks go to faster/cheaper ones

- Provider fallback so a single API outage doesn't take your agent offline

- Unified cost dashboards and budget enforcement across teams and projects

- API keys stored and rotated centrally — never hardcoded in services

5. Tools and MCP Servers

This is one of the three principles Sam covers in detail in the video — and the one he gives the most time to.

Tools are how your agent acts in the world. In the modern agentic ecosystem, MCP (Model Context Protocol) servers have become the standard interface for exposing tools to agents — a structured, discoverable way for an agent to interact with external systems: databases, APIs, code execution environments, search engines, and more.

But tools are also the most common source of silent production failures. An agent that calls a broken tool doesn't fail cleanly. It often spirals — retrying, generating plausible-sounding output based on an error it misread as success, or triggering downstream actions on garbage data. These failures are insidious because they look like agent reasoning failures when the real problem is a broken integration.

Sam's point is direct: every tool needs tests, and authentication needs to be centralized. These aren't nice-to-haves. They're the minimum bar for production.

What good looks like:

- Every tool has its own test suite — unit tests for individual functions, integration tests against live or mocked endpoints — run on every deployment

- Authentication for tool calls is managed in one central place, not scattered across agent code; MCP servers inherit credentials from a secure secrets manager

- Every tool call is fully instrumented: you know exactly when it was called, what inputs it received, what it returned, and how long it took

- Tools fail loudly with structured, interpretable errors — not silent nulls or misleading responses that confuse the agent

- MCP servers are deployed, versioned, and monitored like any other production microservice — not treated as ad-hoc scripts

The best production teams treat tools as first-class services with their own operational lifecycle. If you don't know whether your tools are healthy, you don't know whether your agent is healthy.

6. Monitoring, Tracing, and LLM Observability

Sam's sixth principle — and the one that unlocks everything that comes after it.

Standard APM and logging tools weren't designed for the execution patterns that LLM agents produce. A single agent task might involve a dozen LLM calls, five tool invocations, branching logic, retries, and sub-agent delegation — all non-deterministic, all potentially long-running. A Datadog trace or a CloudWatch log can tell you the response time. It can't tell you why the agent reached the wrong conclusion at step four.

LLM tracing solves this. It follows a complete agent run end-to-end, capturing every prompt sent, every response received, every tool call made, and every branching decision — stitched together into a single inspectable execution graph. Without LLM tracing, debugging a production failure is like reconstructing a conversation from memory.

LLM observability is the broader practice: not just the ability to trace individual runs, but the ability to monitor agent behavior in aggregate — catching cost anomalies, quality regressions, latency outliers, and unusual tool call patterns before users notice them.

Sam frames this as knowing "what's working and what's going wrong." That's the minimum. Done properly, LLM observability also tells you why things are working and why things go wrong — which is the input you need for continuous improvement.

What good looks like:

- Framework-agnostic distributed tracing that works across LangGraph, CrewAI, AutoGen, and custom stacks

- Automatic capture of: full prompt/response pairs, token counts, latency per step, tool call inputs and outputs, model versions used

- Real-time alerting on anomalies: cost spikes above threshold, latency outliers, error rate increases, unexpected tool usage patterns

- Infrastructure monitoring alongside model monitoring — GPU utilization, cluster health, API quota consumption

- A shared dashboard accessible to both engineering and product teams — so quality discussions are grounded in data, not anecdote

Monitoring is what makes agent evals possible. You can't evaluate what you can't see.

7. Agent Evals

Sam's seventh and final principle — and the one that closes the loop.

Agent evals are how you know whether your LLM agents in production are actually getting better or worse with every change you make.

In traditional ML, evaluation is relatively clean: a held-out test set, a defined metric, a clear answer. In agentic AI, it's harder. Outputs are long-form and multi-step. Correctness is often subjective. The agent interacts with live tools, so even running an eval can have real-world side effects. And because agents are non-deterministic, the same input can produce different outputs on different runs.

None of these challenges are excuses to skip agent evals. Sam's point is emphatic: you cannot responsibly ship agent changes — new prompt versions, model upgrades, tool changes — without an evaluation layer that catches regressions before they reach users. Without agent evals, you're guessing.

The key insight Sam highlights: agent evals should build on your LLM observability and tracing infrastructure. Your best eval cases aren't synthetic — they're real production runs, annotated and curated from your trace data. This is why monitoring comes first.

What good looks like:

- A curated eval set drawn from real production traces: the edge cases users actually hit, not the ones you imagined in advance

- A mix of automated metrics (tool call accuracy, task completion rate, factual correctness, hallucination detection) and LLM-as-judge scoring for harder qualitative criteria

- Agent evals integrated into the deployment pipeline: every prompt change, model upgrade, or tool modification triggers an automated eval run before it reaches production

- Regression tracking across versions — you should immediately know if a change degraded quality on any benchmark

- Human review workflows for high-stakes scenarios where automated evals aren't sufficient

Agent evals are the feedback engine. LLM observability tells you what happened. Agent evals tell you whether it was good enough. Together, they let you improve an LLM agent in production continuously without breaking it.

The Seven as a System

These principles aren't a checklist you can pick from. They're a system, and the sequence matters.

Prompt management gives you a stable LLMOps foundation to build on. State and memory management makes your agent coherent over time. Multi-user architecture makes it safe to expose to real users. The AI gateway and AI agent orchestration layer give you control over the entire model portfolio. Tools and MCP servers let your agent act reliably in the world. Monitoring and LLM observability gives you the visibility to understand what's actually happening at runtime. And agent evals close the feedback loop — turning production trace data into systematic quality improvement.

Sam's video focuses on the final three because these are the ones teams most commonly skip when they're rushing to ship. The first four tend to get partially addressed by default — you have some prompt discipline, some auth, some model management. But monitoring, LLM tracing, and agent evals are the pieces that get deliberately deferred and then never revisited. That's exactly when production incidents become inevitable.

The teams that succeed with LLM agents in production are the ones who take all seven seriously — regardless of which agent framework they use, which cloud they're on, or what use case they're building for.

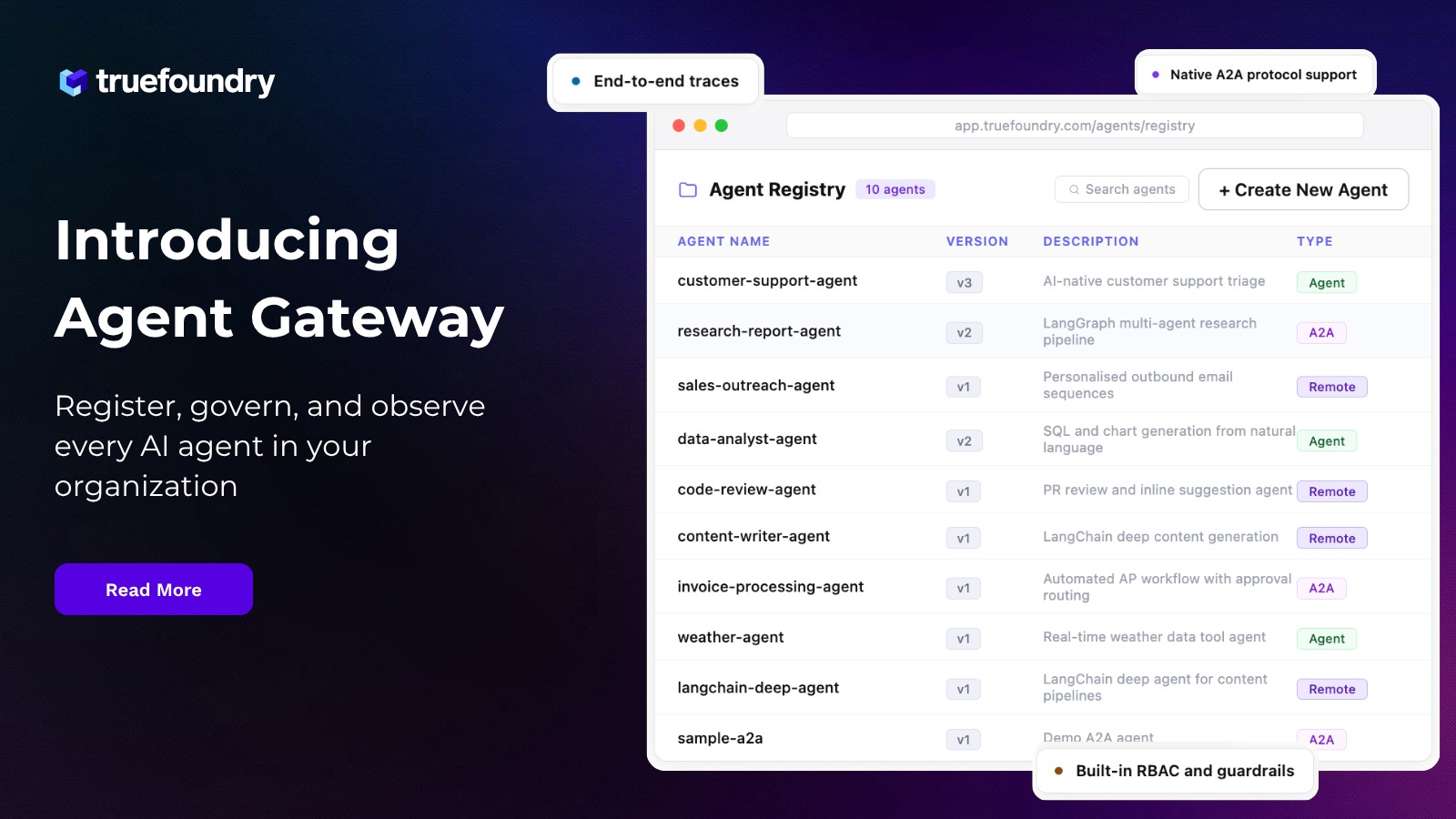

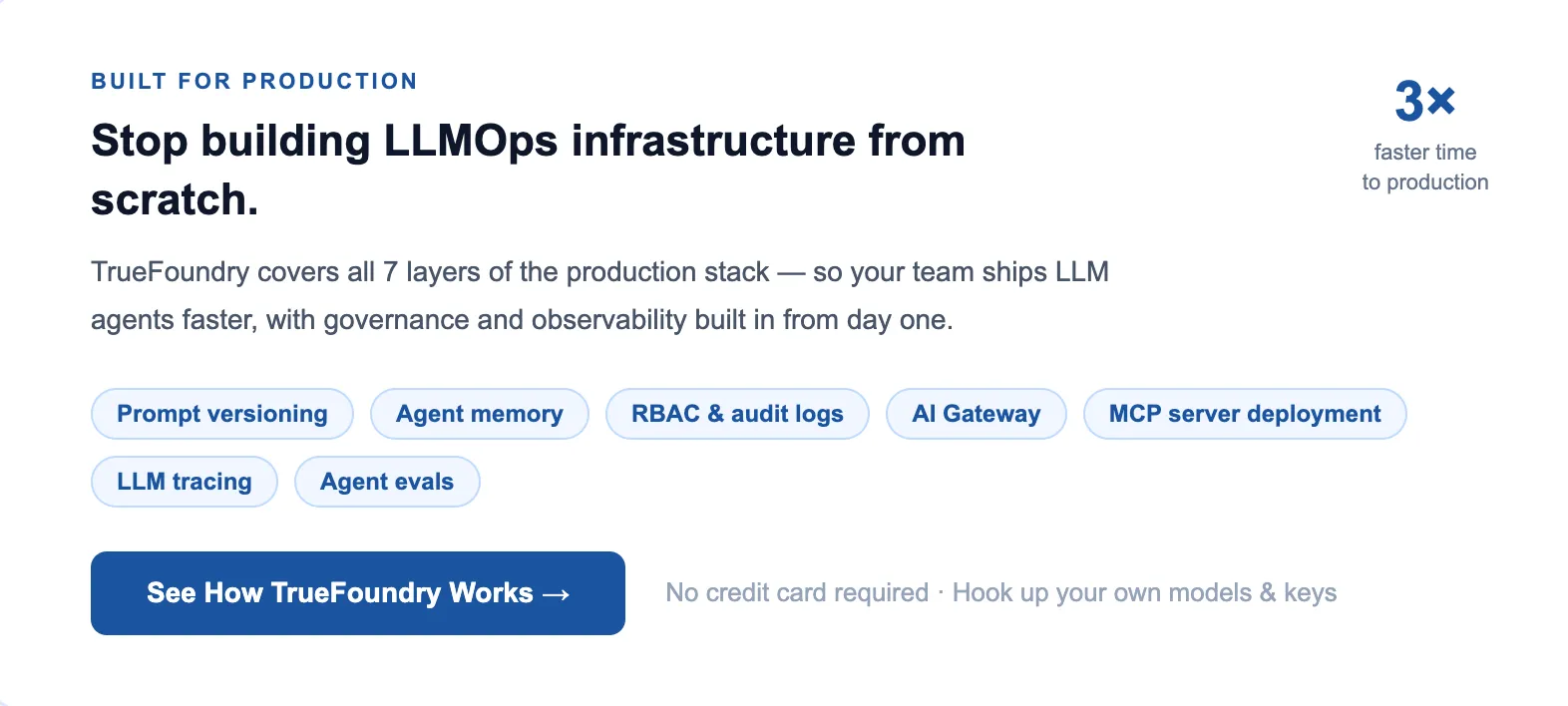

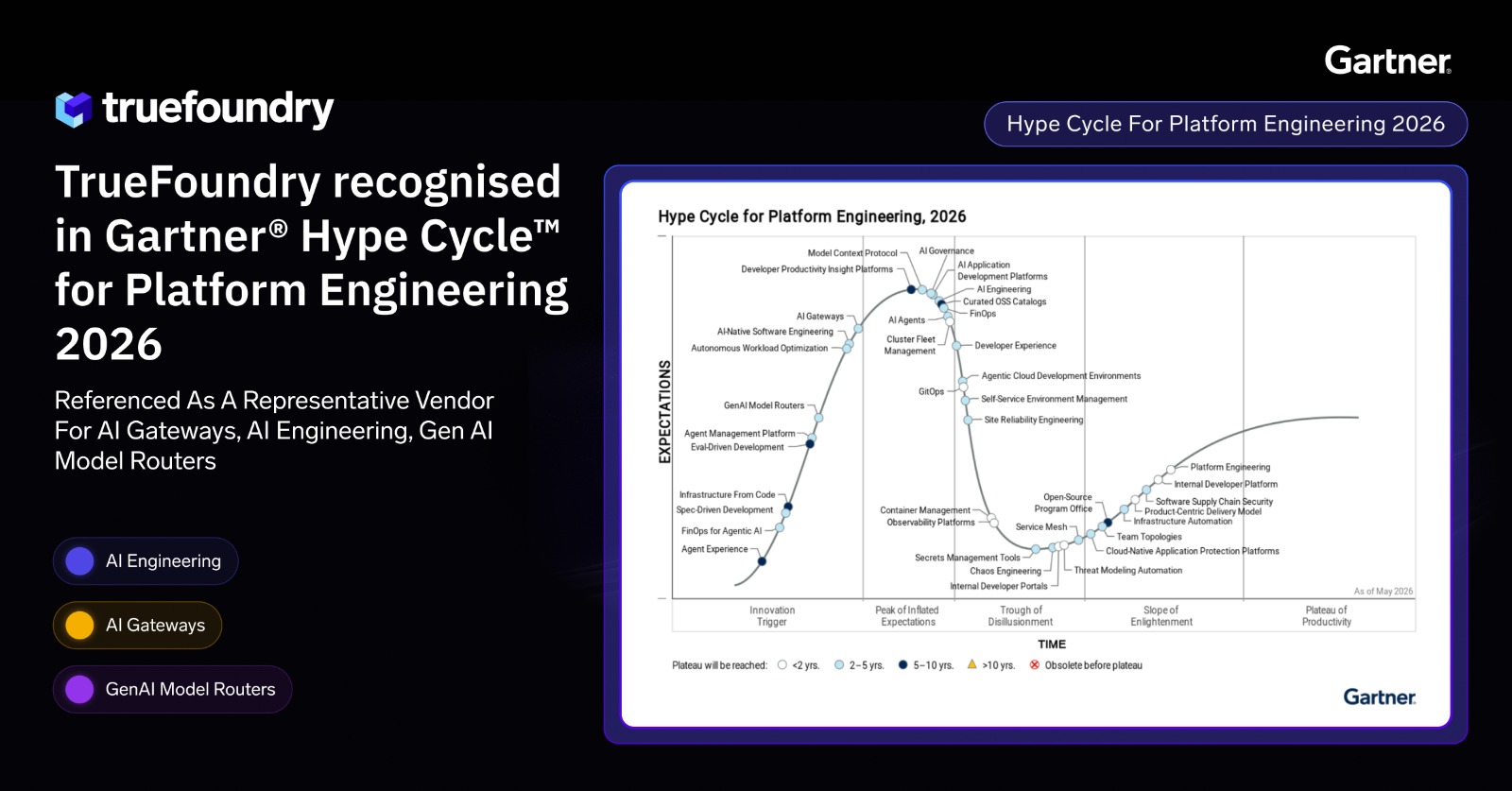

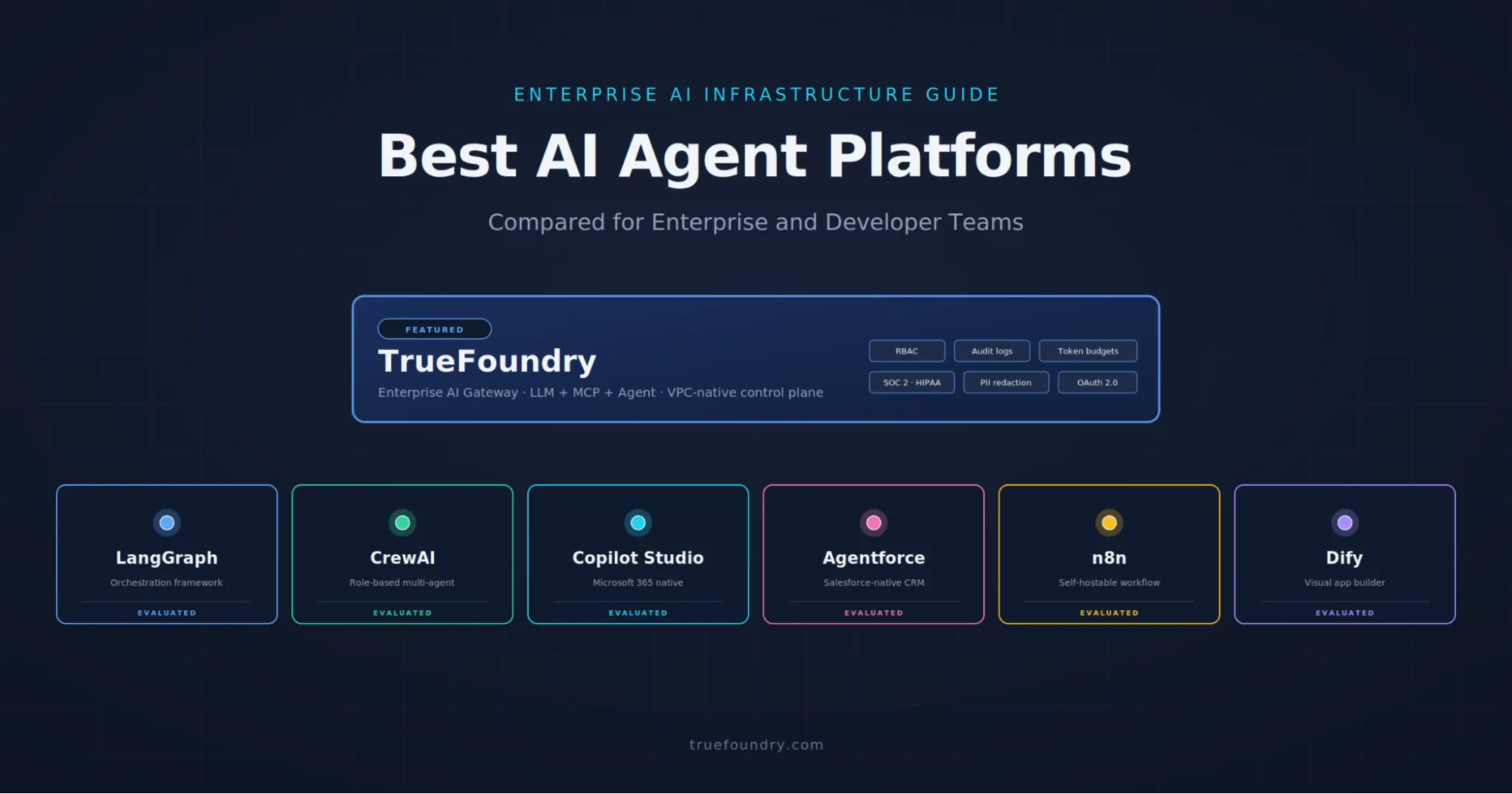

How TrueFoundry Covers All Seven

TrueFoundry is an enterprise AI platform built from the ground up for this challenge: taking LLM agents in production from proof of concept to operational reality, with the complete LLMOps stack and enterprise governance baked in at every layer.

It covers all seven:

- Prompt management with full versioning, lifecycle controls, and environment-pinned deployment

- Agent memory management and stateful orchestration across sessions

- RBAC and multi-tenant architecture with immutable audit logs and compliance certifications (SOC 2, HIPAA, GDPR)

- AI Gateway and AI agent orchestration for centralized LLM routing, multi-provider fallback, cost tracking, and API key management

- MCP server deployment — your tools and integrations treated as production services, not scripts

- Framework-agnostic LLM tracing and LLM observability across LangGraph, CrewAI, AutoGen, and custom stacks — from prompt execution to GPU performance

- Agent eval infrastructure that integrates directly with production traces and plugs into your CI/CD pipeline

Customers using TrueFoundry report 80% higher GPU cluster utilization, 3× faster time-to-value with AI agents, and 35–50% infrastructure cost reductions.

Sam mentions TrueFoundry at the end of the video: "You can hook up your own models, your own keys to actually get started and make it easy for you to take something and actually put it into production with your team."

TrueFoundry AI Gateway delivers ~3–4 ms latency, handles 350+ RPS on 1 vCPU, scales horizontally with ease, and is production-ready, while LiteLLM suffers from high latency, struggles beyond moderate RPS, lacks built-in scaling, and is best for light or prototype workloads.

The fastest way to build, govern and scale your AI

One Gateway for Every LLM, Agent and MCP Server

Recent Blogs

Frequently asked questions

What is LLMOps?

LLMOps (Large Language Model Operations) is the set of practices, tools, and infrastructure required to develop, deploy, monitor, and improve LLM-based applications in production. It extends MLOps to address properties unique to generative AI: non-determinism, prompt sensitivity, multi-step reasoning, and tool use. It covers everything from prompt management and model routing to LLM observability and agent evals.

Why do LLM agents fail in production?

The most common causes: prompts changing without version control creating silent regressions; state management errors causing agents to confuse or lose context; missing LLM observability making failures impossible to diagnose; untested tool integrations causing cascading errors; and lack of agent evals meaning nobody knows quality has degraded until users complain.

What is LLM observability?

LLM observability is the practice of gaining visibility into what language models and agents are doing at runtime, at both the individual run level (LLM tracing: prompts, responses, tool calls, latency, tokens) and the aggregate level (dashboards, anomaly detection, cost monitoring). It's the operational foundation for debugging production failures and driving systematic quality improvement.

What is LLM tracing?

LLM tracing is a form of distributed tracing purpose-built for multi-step agent runs. It captures the complete execution graph of an agent task: every LLM call, every tool invocation, every branching decision, all stitched together into an inspectable trace. This is what enables root-cause analysis of production failures in non-deterministic, multi-step AI systems.

What are agent evals?

Agent evals are systematic processes for measuring the quality and reliability of AI agent outputs across prompt versions, model changes, and tool updates. Unlike traditional unit tests, agent evals must handle non-deterministic outputs, multi-step completion, and subjective quality criteria. Best practice combines automated metrics, LLM-as-judge scoring, and human review, ideally drawing test cases from real production traces.

What is an MCP server?

MCP (Model Context Protocol) is an open standard for exposing tools and external integrations to LLM agents in a structured, discoverable way. An MCP server hosts a collection of tools (database queries, API calls, web search, code execution) that an agent can invoke. In production, MCP servers should be deployed, versioned, tested, and monitored like any microservice. Authentication for MCP tools should be centralized, not scattered across individual tool implementations.

What does TrueFoundry do?

TrueFoundry is a Kubernetes-native enterprise AI platform that covers the full LLMOps stack, from prompt management and multi-tenant access control to AI gateway, MCP server deployment, LLM tracing, and eval infrastructure. It's designed for teams moving agentic AI systems from proof-of-concept to production, with enterprise governance included by default.

.webp)

.webp)

.webp)

.webp)

.webp)

.webp)

.webp)