Exporting LLM Gateway Traces to Traceloop with OpenTelemetry

TrueFoundry AI Gateway exports OpenTelemetry traces to Traceloop over OTLP/HTTP using the https://api.traceloop.com/v1/traces endpoint and a Bearer token in the Authorization header. Every LLM request that passes through the gateway produces a span tree that lands in the Traceloop dashboard without any changes to application code or deployment topology.

This post covers the trace generation path inside the TrueFoundry AI Gateway and how Traceloop ingests and surfaces that data. It also describes the configuration surface and the data privacy controls available at the gateway level.

How the Gateway Generates Traces

The TrueFoundry AI Gateway is built on the Hono framework and runs as a stateless pod handling over 250 requests per second on a single vCPU with approximately 3 ms of added latency per request. The gateway operates in a split architecture where a control plane manages configuration and one or more gateway pods process inference traffic.

When a request arrives, the gateway executes the following sequence in the hot path:

- JWT token validated against public keys cached in memory (downloaded once from the IdP and refreshed via NATS)

- Authorization checked against an in-memory user-to-model map kept current by NATS pub/sub

- Model identifier resolved to a physical provider endpoint via Virtual Model routing logic running in memory

- Request translated from OpenAI-compatible format to the target provider format via an adapter layer

- Request forwarded to the provider and the response streamed back to the client
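The in-memory steps above can be sketched as a single function. This is a minimal illustration, not TrueFoundry's code. It assumes JWT validation has already produced a user identity. The names ALLOWED_MODELS, VIRTUAL_MODELS, Resolved, and handle are hypothetical, and the "adapter" only rewrites the model name, where a real adapter would reshape the whole body for the target provider.

```python
from dataclasses import dataclass

# Hypothetical in-memory state; in the gateway this is kept current via NATS pub/sub.
ALLOWED_MODELS = {"alice": {"gpt-fast"}}
# Hypothetical virtual model -> (provider, physical model) routing table.
VIRTUAL_MODELS = {"gpt-fast": ("openai", "gpt-4o-mini")}


@dataclass
class Resolved:
    provider: str
    model: str
    body: dict


def handle(user: str, request: dict) -> Resolved:
    """Run the in-memory hot-path steps; only the provider call (not shown) leaves the process."""
    model = request["model"]
    # Authorization: dictionary lookup against the in-memory user-to-model map.
    if model not in ALLOWED_MODELS.get(user, set()):
        raise PermissionError(f"{user} may not call {model}")
    # Virtual model resolution: map the logical name to a provider and physical model.
    provider, physical = VIRTUAL_MODELS[model]
    # Adapter layer (simplified): only the model name is rewritten here.
    body = {**request, "model": physical}
    return Resolved(provider, physical, body)
```

Because every lookup is a dictionary access on local state, the only network round trip left in the request is the provider call itself.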

None of these steps make external calls except the provider call itself. Rate limiting runs the Sliding Window Token Bucket algorithm against in-memory state. Guardrail evaluation (when configured) runs concurrently with the model call for input checks and sequentially for output checks.
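The post names the rate-limiting algorithm but does not give its parameters or internals. The sketch below is a simplified stand-in that shows why this check needs no external call. It is a sliding-window log over in-memory state that counts units such as requests or LLM tokens, and it is not TrueFoundry's Sliding Window Token Bucket implementation. The class name and its parameters are hypothetical.

```python
import time
from collections import deque


class SlidingWindowLimiter:
    """Sliding-window log over in-memory state (a simplified stand-in, not TrueFoundry's code)."""

    def __init__(self, limit: int, window_s: float, clock=time.monotonic):
        self.limit = limit        # max units (requests or tokens) per window
        self.window_s = window_s  # window length in seconds
        self.clock = clock        # injectable clock, handy for tests
        self.events = deque()     # (timestamp, units) pairs still inside the window
        self.used = 0             # sum of units currently inside the window

    def allow(self, units: int = 1) -> bool:
        now = self.clock()
        # Evict events that have slid out of the window.
        while self.events and now - self.events[0][0] >= self.window_s:
            _, old_units = self.events.popleft()
            self.used -= old_units
        if self.used + units > self.limit:
            return False
        self.events.append((now, units))
        self.used += units
        return True
```

With limit=10 and window_s=60, a 6-token request at t=0 is allowed, a 5-token request at the same moment is rejected, and the same 5-token request succeeds once the first event has aged out of the window.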

After the request completes, the gateway publishes the span tree asynchronously to NATS. The OTEL exporter reads from this async path and forwards spans to the configured external endpoint. Because the export path is fully decoupled from the request path, a slow or unreachable OTEL backend never adds latency to the client and never causes a request to fail. If Traceloop is unreachable, spans are dropped at the exporter and logged internally. TrueFoundry's own internal trace storage is unaffected because export is additive.
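The decoupling described above can be illustrated with asyncio. This is a minimal sketch: a bounded in-process queue stands in for the NATS subject, and send is a hypothetical async callable that POSTs one batch. The request path calls publish, which never awaits and never raises. A background task ships batches and drops them, with a log line, on failure. TrueFoundry's actual retry or backoff policy is not described in this post and is not modeled here.

```python
import asyncio
import logging

log = logging.getLogger("otel-export")


class AsyncSpanExporter:
    """Decouples span export from the request path (the queue stands in for NATS)."""

    def __init__(self, send, maxsize: int = 1000):
        self.send = send                            # hypothetical async callable that POSTs a batch
        self.queue = asyncio.Queue(maxsize=maxsize)
        self.dropped = 0                            # batches lost to a full queue or a failed export

    def publish(self, spans: list) -> None:
        """Called on the request path: never awaits, never raises."""
        try:
            self.queue.put_nowait(spans)
        except asyncio.QueueFull:
            self.dropped += 1

    async def run(self) -> None:
        """Background loop: ship each batch; on failure, log and drop it."""
        while True:
            batch = await self.queue.get()
            try:
                await self.send(batch)
            except Exception as exc:
                self.dropped += 1
                log.warning("dropped batch of %d spans: %s", len(batch), exc)
            finally:
                self.queue.task_done()
```

The design choice to drop rather than block is what keeps the client-facing latency independent of the observability backend. A full queue or an unreachable endpoint costs telemetry, never requests.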

The gateway generates spans across five stages: the inbound HTTP handler, authentication, model resolution, the outbound provider call, and streaming response assembly. Each span carries a consistent set of attributes.
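The shape of such a span tree can be sketched with plain dictionaries. The five-stage structure comes from the text above. The span names, the use of plain dicts instead of the OTLP protobuf wire format, and the choice to place gen_ai.system and gen_ai.request.model on every span while placing token usage only on the provider-call span are all assumptions for illustration. The gen_ai.* keys themselves are attribute names from the OpenTelemetry GenAI semantic conventions.

```python
import time
import uuid


def make_span(name, trace_id, parent_id=None, attributes=None):
    """Build a span-shaped dict; field names loosely follow OTLP but this is not the wire format."""
    now = time.time_ns()
    return {
        "trace_id": trace_id,
        "span_id": uuid.uuid4().hex[:16],  # 8-byte span id as 16 hex chars
        "parent_span_id": parent_id,
        "name": name,
        "start_time_unix_nano": now,
        "end_time_unix_nano": now,         # a real span would set this when the stage finishes
        "attributes": dict(attributes or {}),
    }


def gateway_span_tree(provider, model, input_tokens, output_tokens):
    """Root span for the inbound HTTP handler plus one child per later stage (hypothetical names)."""
    trace_id = uuid.uuid4().hex            # 16-byte trace id as 32 hex chars
    common = {"gen_ai.system": provider, "gen_ai.request.model": model}
    root = make_span("gateway.http_request", trace_id, attributes=common)
    parent = root["span_id"]
    usage = {
        **common,
        "gen_ai.operation.name": "chat",
        "gen_ai.usage.input_tokens": input_tokens,
        "gen_ai.usage.output_tokens": output_tokens,
    }
    children = [
        make_span("gateway.auth", trace_id, parent, common),
        make_span("gateway.model_resolution", trace_id, parent, common),
        make_span("gateway.provider_call", trace_id, parent, usage),
        make_span("gateway.stream_assembly", trace_id, parent, common),
    ]
    return [root, *children]
```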

The gen_ai.* attributes follow the OpenTelemetry Semantic Conventions for Generative AI Systems. This means the trace data arriving in Traceloop is structurally identical to what any OpenLLMetry-instrumented application would produce.

What Traceloop Does with the Data

Traceloop is an LLM observability platform built on OpenLLMetry, its open-source OpenTelemetry instrumentation layer. Traceloop's backend accepts OTLP/HTTP trace data and indexes it for the Traceloop dashboard. The platform is trace-native: metrics such as token usage, latency, and cost are computed from span attributes rather than from a separate OTLP metrics stream. This is why configuring only the Traces Exporter in TrueFoundry is sufficient; there is no /v1/metrics endpoint in Traceloop's ingestion surface.
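A trace-native backend derives metrics by aggregating span attributes. The function below is a minimal sketch of that idea, not Traceloop's implementation. It treats any span carrying gen_ai.usage.input_tokens as an LLM call and derives latency from the span's start and end timestamps. Cost is omitted because it would additionally require a per-model price table, which this post does not provide. The function name summarize and the dict-based span shape are hypothetical.

```python
from collections import defaultdict


def summarize(spans):
    """Derive per-model call counts, token totals and latencies from span attributes only."""
    stats = defaultdict(
        lambda: {"calls": 0, "input_tokens": 0, "output_tokens": 0, "latencies_ms": []}
    )
    for span in spans:
        attrs = span["attributes"]
        if "gen_ai.usage.input_tokens" not in attrs:
            continue  # not an LLM-call span (auth, routing, ...)
        s = stats[attrs.get("gen_ai.request.model", "unknown")]
        s["calls"] += 1
        s["input_tokens"] += attrs["gen_ai.usage.input_tokens"]
        s["output_tokens"] += attrs.get("gen_ai.usage.output_tokens", 0)
        duration_ns = span["end_time_unix_nano"] - span["start_time_unix_nano"]
        s["latencies_ms"].append(duration_ns / 1e6)
    return dict(stats)
```

No separate metrics stream is involved: everything the function reports is recomputed from trace data alone.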

Traceloop organizes data around three core abstractions. Traces are the top-level unit and correspond directly to an LLM request or an agentic workflow. Spans within a trace represent individual operations (an LLM call, a tool invocation, a retrieval step). Environments map to deployment stages; each environment has its own API key, so Development, Staging, and Production traces remain isolated in the dashboard.

The Traceloop dashboard surfaces token usage over time, latency distributions, error rates, and model breakdowns directly from gen_ai.* span attributes. Because TrueFoundry populates these attributes on every span, the dashboard is fully populated without any SDK instrumentation in the application layer.

Traceloop also supports prompt versioning and regression testing pipelines, but those features operate at the application SDK level and are outside the scope of this integration. The gateway-level integration covers request-level observability: every request that passes through TrueFoundry produces a trace in Traceloop regardless of which LLM provider or model is called.

The Integration Surface

The connection between TrueFoundry and Traceloop is a single OTLP/HTTP POST to https://api.traceloop.com/v1/traces carrying Proto-encoded span batches. Authentication is a Bearer token in the Authorization header. The token is a Traceloop API key scoped to a specific environment.
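For reference, the HTTP request the exporter sends can be reproduced with the standard library. This sketch only prepares the request and does not send it. The payload is assumed to be an already-serialized OTLP ExportTraceServiceRequest; building that protobuf (for example with the opentelemetry-proto package) is out of scope here. The function name build_export_request is hypothetical. The URL and Bearer scheme come from the text above, and application/x-protobuf is the OTLP/HTTP content type for binary protobuf payloads.

```python
import urllib.request

TRACELOOP_TRACES_URL = "https://api.traceloop.com/v1/traces"


def build_export_request(payload: bytes, api_key: str) -> urllib.request.Request:
    """Prepare (but do not send) an OTLP/HTTP trace export POST to Traceloop."""
    return urllib.request.Request(
        TRACELOOP_TRACES_URL,
        data=payload,  # assumed: serialized ExportTraceServiceRequest bytes
        headers={
            "Content-Type": "application/x-protobuf",  # OTLP/HTTP binary protobuf encoding
            "Authorization": f"Bearer {api_key}",      # environment-scoped Traceloop API key
        },
        method="POST",
    )
```

Passing the result to urllib.request.urlopen would perform the POST. Note that urllib normalizes header names, so the content type is read back as "Content-type".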

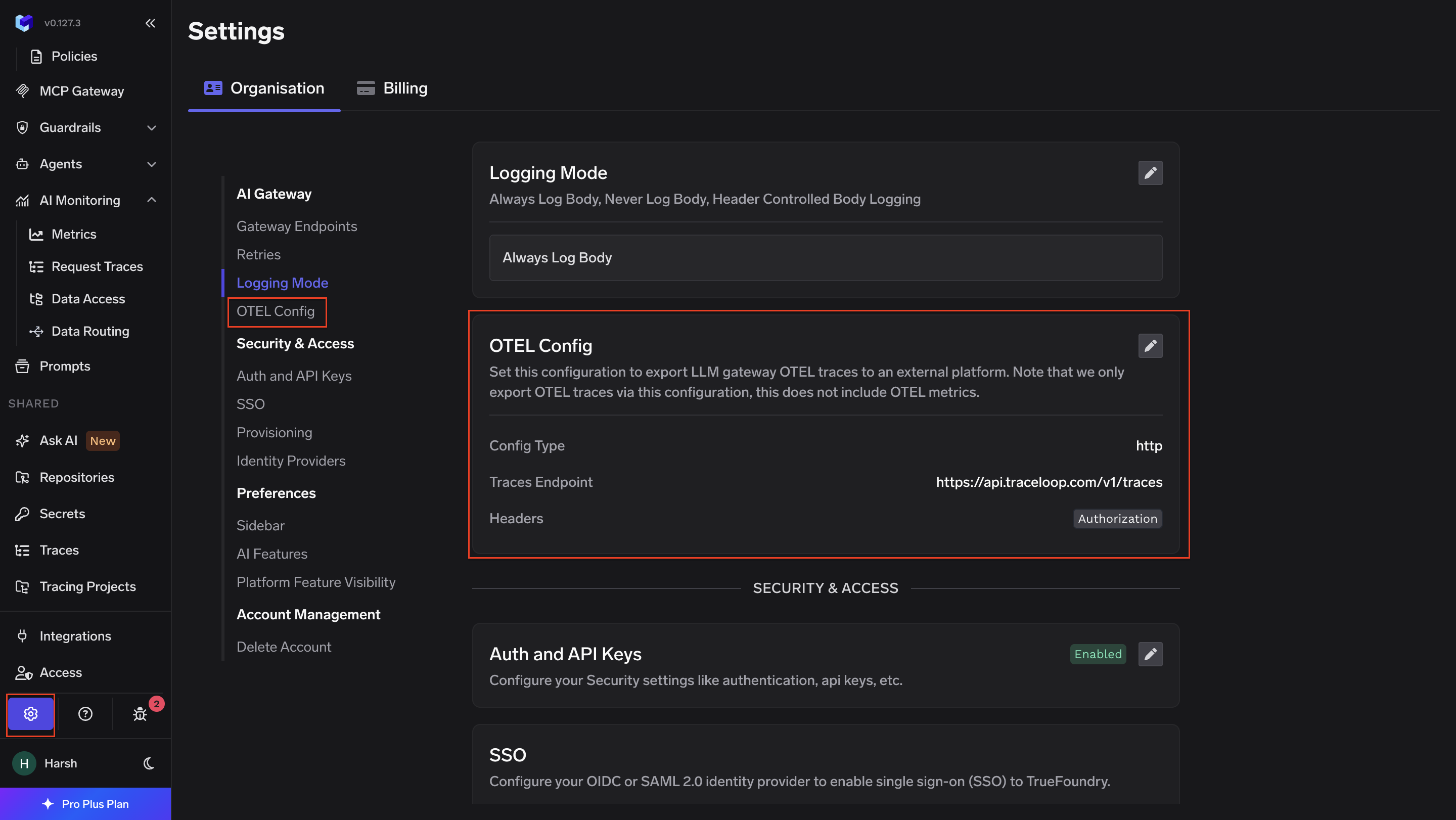

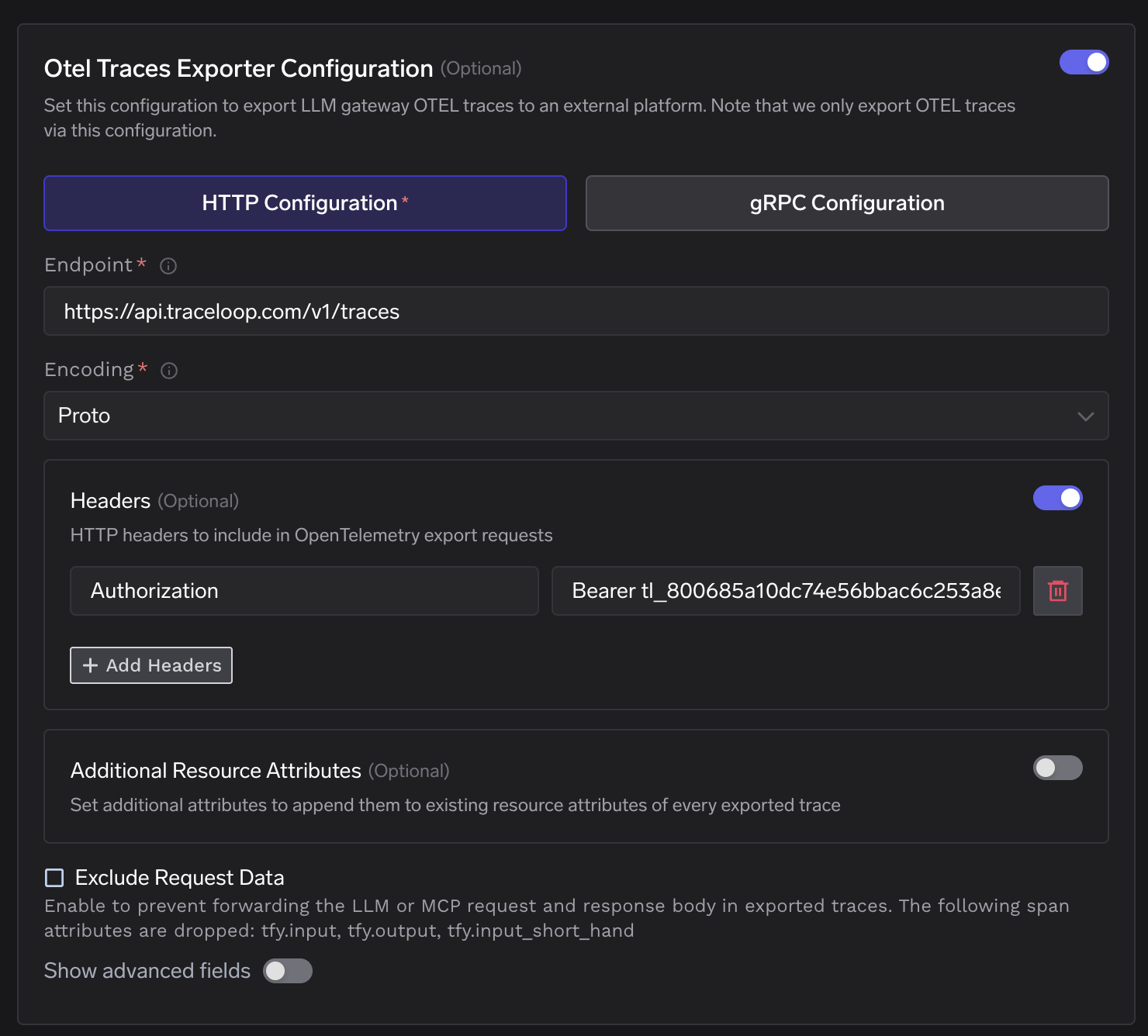

TrueFoundry exposes this configuration under AI Gateway → Controls → Settings → OTEL Config. The Otel Traces Exporter section accepts the following fields.

The endpoint must include the full /v1/traces path because TrueFoundry's exporter does not auto-append signal paths. This differs from the OTel Collector otlphttp exporter, which appends the signal path automatically to a base URL. Both configurations end up posting to the same destination, https://api.traceloop.com/v1/traces.
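The difference between the two endpoint conventions fits in two small functions. The function names are hypothetical. The ValueError check in truefoundry_style is an illustrative sanity check, not a documented TrueFoundry behavior; the point is that a verbatim endpoint must already carry the /v1/traces path.

```python
from urllib.parse import urlsplit


def collector_style(base_url: str) -> str:
    """OTel Collector otlphttp convention: the signal path is appended to a base endpoint."""
    return base_url.rstrip("/") + "/v1/traces"


def truefoundry_style(endpoint: str) -> str:
    """Verbatim convention: the configured endpoint is used as-is (hypothetical sanity check)."""
    if not urlsplit(endpoint).path.endswith("/v1/traces"):
        raise ValueError(f"endpoint must include /v1/traces: {endpoint}")
    return endpoint
```

Both collector_style("https://api.traceloop.com") and truefoundry_style("https://api.traceloop.com/v1/traces") yield the same URL, which is why the two configurations reach the same destination.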

Traceloop API keys are generated per environment from the Environments page in the Traceloop dashboard. Each key is displayed only once, at creation time. The key is passed in the Authorization header as Bearer <key>, with the Bearer prefix included as a literal string.

Data Privacy Controls

The gateway provides an Exclude Request Data toggle in the OTEL Config section. When it is enabled, the exporter strips tfy.input, tfy.output, and tfy.input_short_hand from every span before forwarding to Traceloop. The remaining span attributes (token counts, model names, latency, and routing metadata) are unaffected. This toggle is appropriate when prompts or completions contain user PII or proprietary content that should not leave the cluster boundary.
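The toggle's effect on each span can be expressed as a simple attribute filter. This is a sketch of the described behavior, not the exporter's source. The three attribute keys come from the text above, while the function name and the dict-based span shape are hypothetical. The function returns copies so that the gateway's own internal trace data is left intact.

```python
EXCLUDED_KEYS = ("tfy.input", "tfy.output", "tfy.input_short_hand")


def exclude_request_data(spans):
    """Return copies of spans with prompt/completion attributes removed."""
    cleaned = []
    for span in spans:
        attrs = {k: v for k, v in span["attributes"].items() if k not in EXCLUDED_KEYS}
        cleaned.append({**span, "attributes": attrs})
    return cleaned
```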

The Additional Resource Attributes field appends custom key-value pairs to every exported span. This is useful for environment tagging, cost-center attribution, and multi-tenant filtering within a single Traceloop environment.
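The input format of this field is not specified here. The sketch below assumes the comma-separated key=value style of the standard OTEL_RESOURCE_ATTRIBUTES environment variable and, for simplicity, flattens the pairs onto each span's attributes. In OpenTelemetry proper, resource attributes live on the Resource rather than on individual spans. The function names and the cost_center and tenant keys are hypothetical; deployment.environment is an OpenTelemetry resource attribute name.

```python
from urllib.parse import unquote


def parse_attributes(raw: str) -> dict:
    """Parse 'k1=v1,k2=v2' (OTEL_RESOURCE_ATTRIBUTES style) into a dict; values are percent-decoded."""
    attrs = {}
    for pair in raw.split(","):
        key, sep, value = pair.partition("=")
        if sep and key.strip():
            attrs[key.strip()] = unquote(value.strip())
    return attrs


def apply_additional_attributes(spans, extra: dict):
    """Attach the same pairs to every span; a span's own attributes win on key conflicts."""
    return [{**span, "attributes": {**extra, **span["attributes"]}} for span in spans]
```

For example, "deployment.environment=production,cost_center=ml-platform,tenant=acme" would let one Traceloop environment be filtered by team or tenant.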

Architecture Summary

Every LLM request through TrueFoundry AI Gateway produces a span tree covering authentication, routing, the provider call, and the response. After the request completes, the gateway publishes this span tree to NATS asynchronously. The OTEL exporter reads from NATS and POSTs Proto-encoded batches to https://api.traceloop.com/v1/traces with a Bearer token. Traceloop indexes the spans and surfaces token usage, latency, and model breakdowns in its dashboard from the gen_ai.* attributes on each span.

No sidecars are required. No changes to application code are required. No OpenLLMetry SDK needs to be added to services calling the gateway. The integration operates entirely at the gateway layer and covers 100% of traffic passing through it regardless of the calling application's instrumentation state.

The architectural property that makes this clean is the async NATS publish. Because span export is decoupled from the request path, the integration adds no latency to inference calls and introduces no availability dependency on Traceloop. The gateway processes requests at full throughput whether or not Traceloop is reachable.
