What is an MCP Gateway? A Practical Guide for Enterprise AI Teams

Updated: March 12, 2026

AI systems are no longer limited to answering questions. Increasingly, they act. They read support tickets, query internal databases, update CRMs, open Jira issues, and sometimes trigger production workflows. The shift from conversational assistants to operational agents changes the architecture underneath.

Most teams begin with direct connections. An LLM calls a Slack API. Another agent queries Postgres. A third talks to GitHub. It works, at first. But as the number of agents and tools grows, the pattern becomes brittle. Credentials are scattered. Access rules live inside prompts. There is little centralized visibility into what any given agent is actually doing.

The Model Context Protocol (MCP) introduces a standard way for models to discover and invoke external tools. It removes the need to build custom glue code for every model–tool pairing. That alone is meaningful.

But MCP by itself does not solve governance. It does not enforce access control. It does not provide centralized auditing or cost boundaries. In enterprise environments, those gaps matter.

This is where the idea of an MCP Gateway emerges. It is not an optional add-on but rather a control layer that makes MCP usable at scale.

Why Do AI Agents Need a Standardized Integration Layer?

The first generation of LLM systems was largely passive. You sent a prompt. You received text. If the answer was wrong, it was inconvenient, not catastrophic. That model no longer holds.

Modern AI agents query databases, modify tickets, send emails, and trigger downstream automation. They do not just generate language. They execute intent. And once execution enters the picture, architecture matters more than prompt engineering.

Most teams begin with direct integrations. An agent is given a GitHub API key. Another gets Slack credentials. A third connects to the CRM. Each integration is built independently, often inside application code or wrapper scripts. It feels fast. It is fast. But it does not scale.

Every agent manages its own credentials. Access rules are scattered. Observability becomes fragmented across systems. Adding a new tool requires wiring yet another direct connection. Soon, no one has a clear picture of which agent can touch which system.

In the resulting topology, every agent holds its own direct connection to each tool it uses.

There is no control layer. No central audit trail. No unified policy enforcement.

As agent counts increase, this topology turns fragile. What begins as flexibility gradually becomes operational risk. A standardized integration layer is not about elegance. It is about containment.

What is the Model Context Protocol (MCP)?

The Model Context Protocol, or MCP, is an open protocol that defines how AI models discover and interact with external tools. Instead of every model integrating with every API differently, MCP introduces a shared language between agents and systems. It builds on concepts similar to the Language Server Protocol, applying the same idea of standardized capability negotiation to AI tool use.

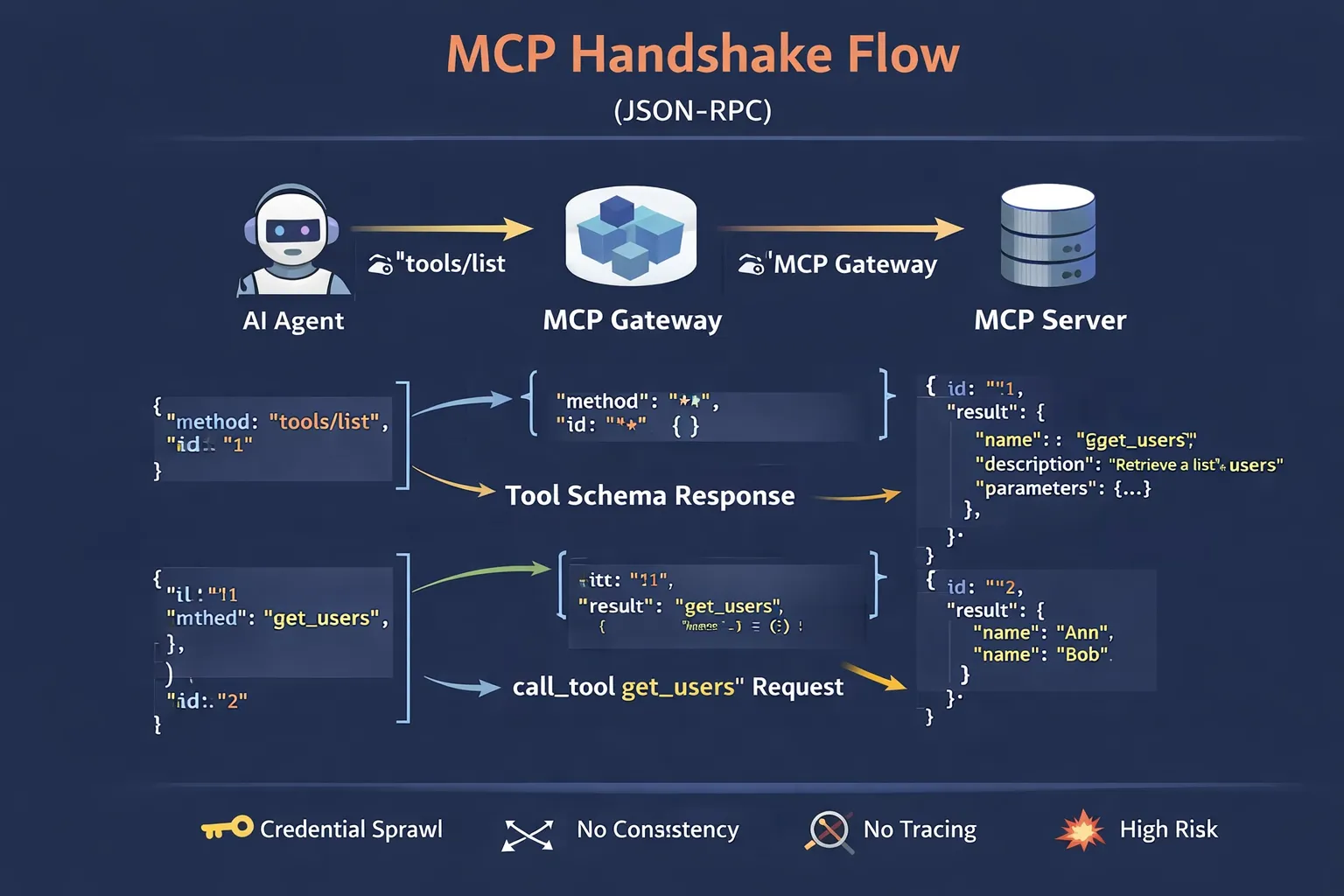

At its core, MCP uses JSON-RPC 2.0 messages, carried over a transport such as stdio or HTTP. When an MCP client connects to an MCP server, it performs a discovery step: the client sends a tools/list request to learn which tools the server exposes.

{
  "jsonrpc": "2.0",
  "id": 1,
  "method": "tools/list"
}

The server responds with a structured list of MCP tools, including their names, descriptions, and input schemas. From there, the agent can invoke a specific tool with a tools/call request (exposed as call_tool in some SDKs), passing arguments that conform to the declared schema.

This handshake matters. It standardizes capability discovery and invocation across providers and systems. A GitHub connector and a Postgres connector expose tools differently at the backend, but through MCP they present a unified interface to the model.
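To make the exchange concrete, here is a minimal sketch of the two message types using only the Python standard library. The tool name `list_issues`, the repository `acme/api`, and the in-process `handle` function are illustrative assumptions, not part of any real connector, and the initialization handshake that precedes discovery in a real session is omitted.

```python
import json
from itertools import count
from typing import Optional

_ids = count(1)  # JSON-RPC request ids should be unique within a session


def make_request(method: str, params: Optional[dict] = None) -> dict:
    """Build a JSON-RPC 2.0 request envelope."""
    request = {"jsonrpc": "2.0", "id": next(_ids), "method": method}
    if params is not None:
        request["params"] = params
    return request


# Hypothetical tool catalogue held by a toy in-process "server".
TOOLS = [
    {
        "name": "list_issues",
        "description": "List open issues in a repository",
        "inputSchema": {
            "type": "object",
            "properties": {"repo": {"type": "string"}},
            "required": ["repo"],
        },
    }
]


def handle(request: dict) -> dict:
    """Answer tools/list and tools/call in miniature."""
    method = request["method"]
    if method == "tools/list":
        result = {"tools": TOOLS}
    elif method == "tools/call":
        args = request["params"]["arguments"]
        text = f"3 open issues in {args['repo']}"  # canned result for the sketch
        result = {"content": [{"type": "text", "text": text}]}
    else:
        return {"jsonrpc": "2.0", "id": request["id"],
                "error": {"code": -32601, "message": "Method not found"}}
    return {"jsonrpc": "2.0", "id": request["id"], "result": result}


listing = handle(make_request("tools/list"))
call = handle(make_request(
    "tools/call",
    {"name": "list_issues", "arguments": {"repo": "acme/api"}},
))
print(json.dumps(call, indent=2))
```

The agent never needs to know how the server fulfils the call. It only sees the declared schema and the structured result.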

In effect, MCP removes the combinatorial explosion of custom integrations. Instead of writing bespoke glue code for every model–tool pairing, teams implement MCP servers once and allow models to interact through a uniform protocol. That uniform interface is how AI models communicate with backend services and data sources across the MCP ecosystem.

But protocol standardization is not the same as governance. That distinction becomes important.

What is an MCP Gateway?

If MCP defines how models talk to tools, an MCP Gateway defines who is allowed to speak, and under what conditions.

An MCP Gateway sits between AI agents and one or more MCP servers. Instead of agents making direct connections to GitHub servers, database connectors, or workflow engines, they connect to the gateway. The gateway becomes the single endpoint: a central point of ingress for tool discovery and invocation. It functions as a centralized proxy for all MCP traffic.

From the agent's perspective, nothing changes structurally. It still performs a tools/list handshake. It still issues tools/call requests. But those requests are intercepted, evaluated, and routed by the gateway before any backend system executes them.

At a minimum, the MCP Gateway performs four roles.

First, discovery control. It filters which tools are visible to which agents.

Second, routing. It forwards requests to the appropriate MCP server.

Third, authentication. It validates identity and may propagate credentials on a user's behalf.

Fourth, policy enforcement. It can apply rate limits, scope restrictions, or execution constraints before forwarding traffic.

Architecturally, the difference is simple but profound: agents connect to one gateway endpoint instead of connecting to each MCP server directly.

The MCP Gateway centralizes control without modifying individual servers. MCP remains the protocol. The gateway becomes the management layer that makes protocol use safe in production.
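The discovery-control and routing roles can be sketched in a few lines. This is a toy model under stated assumptions, not a description of any real gateway: the `Gateway` class, the `server.tool` naming scheme, and the lambda backends are all hypothetical, and authentication is left out to keep the example short.

```python
from typing import Callable, Dict, List, Set

# A backend is modelled as a function from (tool, arguments) to a result string.
Backend = Callable[[str, dict], str]


class Gateway:
    def __init__(self) -> None:
        self.backends: Dict[str, Backend] = {}
        self.catalog: Dict[str, List[str]] = {}  # server name -> tool names
        self.visible: Dict[str, Set[str]] = {}   # agent id -> allowed tools

    def register(self, server: str, tools: List[str], backend: Backend) -> None:
        """Onboard a server once; its tools stay hidden until explicitly allowed."""
        self.backends[server] = backend
        self.catalog[server] = tools

    def list_tools(self, agent: str) -> List[str]:
        """Discovery control: return only the tools this agent may see."""
        allowed = self.visible.get(agent, set())
        return [
            f"{server}.{tool}"
            for server, tools in self.catalog.items()
            for tool in tools
            if f"{server}.{tool}" in allowed
        ]

    def call_tool(self, agent: str, name: str, arguments: dict) -> str:
        """Check visibility, then route to the server that owns the tool."""
        if name not in self.list_tools(agent):
            raise PermissionError(f"{agent} may not call {name}")
        server, tool = name.split(".", 1)
        return self.backends[server](tool, arguments)


gateway = Gateway()
gateway.register("github", ["list_issues", "delete_repo"],
                 lambda tool, args: f"github ran {tool}")
gateway.register("crm", ["update_ticket"],
                 lambda tool, args: f"crm ran {tool}")
gateway.visible["support-agent"] = {"github.list_issues", "crm.update_ticket"}

print(gateway.list_tools("support-agent"))
```

Note that the backends are registered unchanged. All scoping lives in the gateway, which is the point of the pattern.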

Where MCP Alone Falls Short in Enterprise Environments

MCP standardizes interaction. It does not standardize control.

Out of the box, MCP does not define role-based access control. If an agent can connect to a server, it can discover every tool that server exposes. There is no native concept of scoping visibility per team, per workload, or per user. Access becomes an infrastructure concern outside the protocol itself.

There is also no centralized audit layer. Each MCP server may log its own activity, but there is no inherent aggregation point showing which agent invoked which tool, on whose behalf, and in what sequence. Reconstructing intent across different MCP servers becomes a manual exercise.

Cost governance presents a similar gap. MCP does not track token consumption or enforce usage boundaries. An agent can invoke tools repeatedly, triggering model calls or database queries, without any built-in budget constraints.

Then there is the tool explosion problem. As organizations add connectors (GitHub, Slack, internal services, observability systems), the list of discoverable tools grows. Without visibility controls, agents are exposed to capabilities they may not need, which complicates reasoning and increases blast radius. At runtime, the absence of security policies turns a governance gap into an active liability.

MCP is necessary. It is not sufficient. Enterprise deployment demands an additional control layer.

The Role of an MCP Gateway in Production AI Systems

In production environments, an MCP Gateway is best understood not as a simple routing proxy but as a control plane layered above multiple execution planes.

The execution plane consists of MCP servers. They connect to GitHub, Postgres, Slack, internal APIs, and legacy systems. They perform actions. They return results.

The control plane lives at the gateway. It decides which agent can see which tools, under which identity, and with what constraints. That separation is subtle, but it changes how risk is managed. An MCP Gateway is a reverse proxy purpose-built for MCP traffic, with security features that standard proxies do not offer.

One critical capability is identity propagation. In many real systems, an AI agent acts on behalf of a human user. Without propagation, the agent often runs with a shared service account. With a gateway, the authenticated user's identity can be injected downstream via OAuth or OIDC tokens.

Consider a user, Alice, who works through an agent. Her request reaches the gateway carrying a JWT that identifies her.

The gateway validates the JWT, maps it to Alice's permissions, and forwards the request using her scoped credentials. If Alice cannot delete a repository, neither can the agent acting for her. Authorization is enforced at the protocol layer, not assumed in prompts.
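A minimal sketch of that check follows, using only the standard library. It assumes an HS256 token signed with a shared demo secret and a hypothetical in-memory `PERMISSIONS` map. A production gateway would more likely verify tokens from an OIDC provider with an established JWT library and asymmetric keys, and would also validate the header's `alg`, the issuer, and the audience.

```python
import base64
import hashlib
import hmac
import json
import time

SECRET = b"demo-secret"  # assumption: shared HS256 key, for illustration only

# Hypothetical mapping from user to the tools that user may invoke.
PERMISSIONS = {"alice": {"github.list_issues", "github.create_issue"}}


def b64url_encode(raw: bytes) -> str:
    return base64.urlsafe_b64encode(raw).rstrip(b"=").decode()


def b64url_decode(text: str) -> bytes:
    return base64.urlsafe_b64decode(text + "=" * (-len(text) % 4))


def sign(claims: dict) -> str:
    """Issue a compact HS256 JWT (stand-in for the identity provider)."""
    header = b64url_encode(json.dumps({"alg": "HS256", "typ": "JWT"}).encode())
    payload = b64url_encode(json.dumps(claims).encode())
    mac = hmac.new(SECRET, f"{header}.{payload}".encode(), hashlib.sha256).digest()
    return f"{header}.{payload}.{b64url_encode(mac)}"


def verify(token: str) -> dict:
    """Check signature and expiry, then return the claims."""
    header, payload, signature = token.split(".")
    expected = hmac.new(SECRET, f"{header}.{payload}".encode(), hashlib.sha256).digest()
    if not hmac.compare_digest(expected, b64url_decode(signature)):
        raise PermissionError("bad signature")
    claims = json.loads(b64url_decode(payload))
    if claims["exp"] < time.time():
        raise PermissionError("token expired")
    return claims


def authorize(token: str, tool: str) -> str:
    """Return the user the call should run as, or refuse it."""
    user = verify(token)["sub"]
    if tool not in PERMISSIONS.get(user, set()):
        raise PermissionError(f"{user} may not call {tool}")
    return user


token = sign({"sub": "alice", "exp": time.time() + 300})
print(authorize(token, "github.list_issues"))
```

Because `github.delete_repo` is absent from Alice's permissions, `authorize(token, "github.delete_repo")` raises `PermissionError`, no matter what the prompt asked the agent to do.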

Tool slicing is another core function. The gateway filters tools/list responses, exposing only a subset relevant to a particular team or workload. Dangerous capabilities simply never appear in the agent's context. Sensitive data and high-privilege endpoints remain invisible to agents that have no business accessing them.

Finally, policy enforcement happens at the protocol layer. Before a tools/call request reaches a server, the gateway can inspect arguments, apply quotas, or block operations entirely.

In production AI systems, that mediation layer becomes the difference between experimentation and governable infrastructure.

Security and Operational Risks Without an MCP Gateway

Direct model-to-tool connections look efficient until you examine the failure modes.

The first issue is credential sprawl. Each agent often carries its own API keys or service accounts. Those keys live in environment variables, config files, or secret stores scattered across services. Rotating credentials becomes tedious. Revoking direct access for a compromised workflow is worse. There is no single choke point.

Then comes excessive tool exposure. When agents are given broad direct access to all available MCP servers, the tools/list response can become crowded. Models perform better when the action space is constrained. Exposing dozens of loosely related external tools increases reasoning complexity and raises the probability of incorrect tool selection. In other words, security posture and model performance degrade together.

Observability suffers too. Without a gateway aggregating MCP traffic, tracing an agent's behavior requires combing through logs across multiple MCP servers. There is no unified execution timeline. Debugging becomes guesswork.

Finally, there is blast radius. If an agent connects directly to production systems with high-privilege credentials, mistakes propagate immediately. A misinterpreted instruction can trigger irreversible operations.

The absence of a control layer does not merely reduce elegance. It increases operational risk in ways that compound quietly over time.

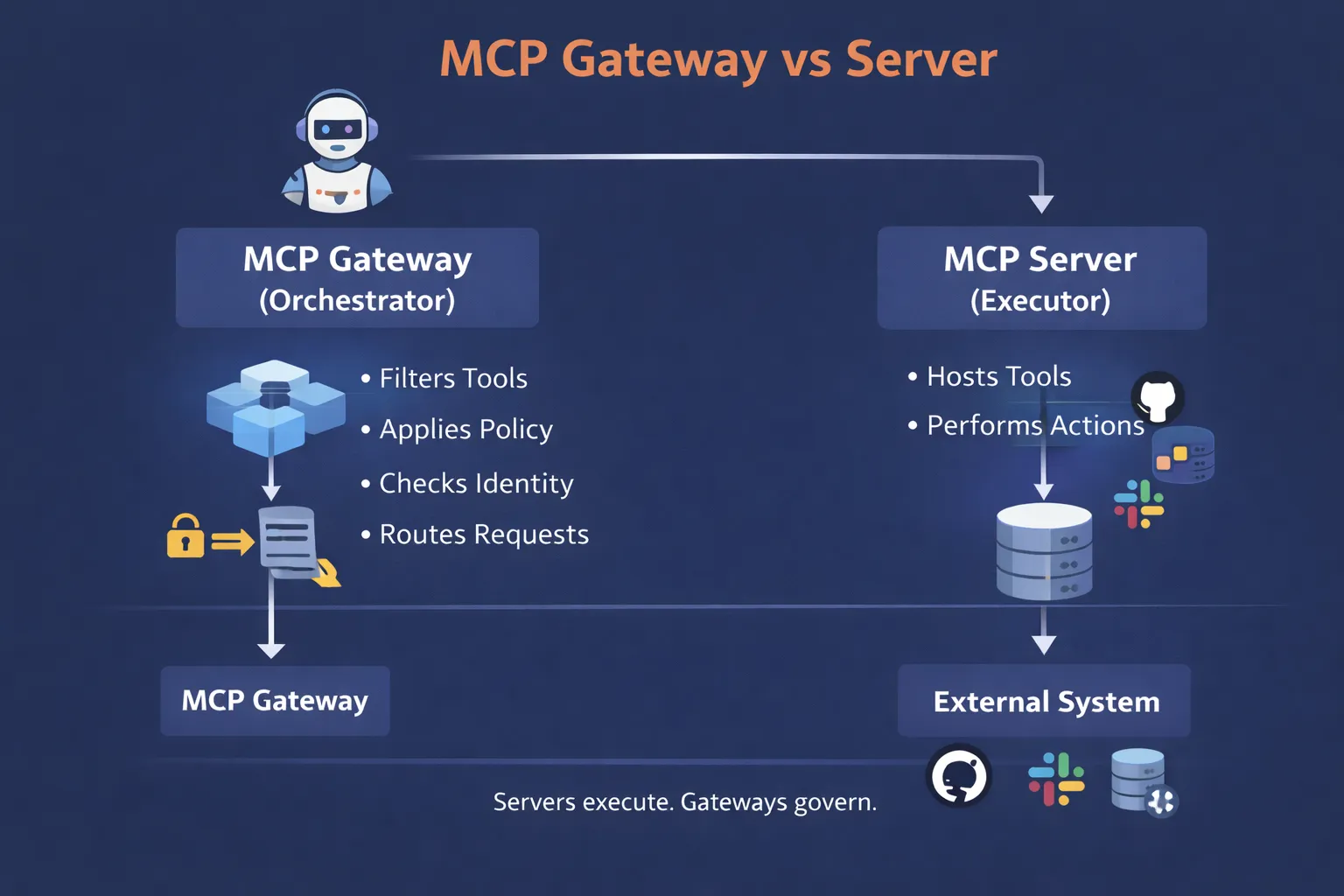

MCP Gateway vs Server

The terminology can be confusing, especially since both components speak MCP.

An MCP server is an executor. It connects to a specific system (GitHub, Slack, Postgres, or an internal API) and exposes tools that perform concrete actions. It knows how to translate a tools/call request into a database query or an API call. It does the work.

An MCP Gateway, by contrast, is an orchestrator. It does not execute business logic. It governs access to backend servers. It filters discovery responses, enforces authentication, propagates identity, applies security policies, and routes requests to the correct execution endpoint.

The distinction is structural.

Without servers, there are no tools. Without gateways, there is no centralized control. In production architectures, both roles typically coexist, each operating at a different layer of responsibility. Adding new MCP servers is straightforward when they register through a gateway; new servers are onboarded once, and their tools become available selectively rather than universally.

Is MCP Better than an API Gateway?

MCP is not a replacement for APIs, and it is not a substitute for an API gateway.

APIs remain the foundational interface between systems. They define contracts, enforce schemas, and expose business capabilities in a structured way. API gateways sit in front of those interfaces, handling authentication, rate limiting, traffic management, and sometimes transformation.

MCP operates at a different layer. It does not replace REST endpoints or GraphQL. Instead, it makes those APIs usable by AI models. Through standardized tool discovery and invocation, MCP translates structured API capabilities into a format models can reason about. The distinction matters especially for system integration involving both traditional services and AI-driven workflows.

In modern enterprise architectures, the two often coexist. APIs serve application services and integrations. API gateways govern traditional HTTP traffic. MCP servers expose selected capabilities to agents. An MCP Gateway then governs agent access to those capabilities.

The relationship is complementary, not competitive. Removing APIs would collapse system integration. Removing MCP would make those APIs invisible to models. Production AI systems typically require both layers working together.

Core Capabilities of an Enterprise MCP Gateway

Not every gateway that forwards JSON-RPC traffic qualifies as enterprise-ready. Production environments demand more than routing. They require identity, visibility, and deliberate scoping across use cases ranging from customer support to complex multi-step workflows.

Unified Authentication (On-Behalf-Of Access)

In serious deployments, agents rarely act alone. They act for someone. An enterprise MCP gateway validates the incoming user context, typically via JWT or OIDC, and propagates that identity downstream. Instead of a shared service key, requests execute on behalf of a specific user.

This prevents the “generic agent” problem. If a user cannot access a production repository, the agent cannot either. Identity becomes enforced at the protocol layer, not assumed in prompts.

Centralized Tool Registry

An enterprise MCP Gateway maintains a registry of available MCP servers and MCP tools. Instead of each agent discovering everything by default, visibility is managed centrally. New capabilities from new servers are registered once and exposed selectively. This configuration eliminates the documentation gap that typically emerges when teams lose track of what tools exist and who can use them.

Audit Logging

Every tools/list and tools/call invocation can be logged with metadata: agent identity, user context, arguments, and response status. This creates a coherent audit trail across systems. Debugging and compliance reviews become tractable rather than forensic.
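One way to picture such a record is as a JSON line per invocation. The sketch below uses Python's standard `logging` module. The field names, the `SENSITIVE_KEYS` redaction list, and the in-memory sink are assumptions made for illustration, not a prescribed schema.

```python
import io
import json
import logging
from datetime import datetime, timezone
from typing import Optional

SENSITIVE_KEYS = {"password", "token", "api_key"}  # hypothetical redaction list

stream = io.StringIO()  # stand-in sink; a real deployment would ship logs elsewhere
audit = logging.getLogger("mcp.audit")
audit.setLevel(logging.INFO)
audit.propagate = False
audit.addHandler(logging.StreamHandler(stream))


def record(agent: str, user: str, method: str, tool: Optional[str],
           arguments: dict, status: str) -> dict:
    """Write one audit entry as a JSON line and return it."""
    safe_args = {
        key: ("***" if key in SENSITIVE_KEYS else value)
        for key, value in arguments.items()
    }
    entry = {
        "ts": datetime.now(timezone.utc).isoformat(),
        "agent": agent,
        "user": user,
        "method": method,
        "tool": tool,
        "arguments": safe_args,
        "status": status,
    }
    audit.info(json.dumps(entry, sort_keys=True))
    return entry


record("support-agent", "alice", "tools/list", None, {}, "ok")
record("support-agent", "alice", "tools/call", "crm.update_ticket",
       {"ticket": 42, "token": "s3cr3t"}, "ok")
print(stream.getvalue())
```

Redacting arguments before they are written matters because the audit trail itself can otherwise become a store of secrets.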

Logical Tool Grouping

Production systems often require scoped exposure. A support agent does not need database administration tools. A finance workflow does not need deployment controls. This kind of scoping directly improves user experience; agents behave more predictably when their action space is intentionally limited.

A simple configuration might look like:

virtual_server:
  name: support-scope
  allow_tools:
    - github.list_issues
    - github.get_comments
    - crm.update_ticket

The gateway filters discovery responses accordingly. Agents only see what they are meant to see.
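As a rough sketch of that filtering step, the function below trims a tools/list response to the allow list. The configuration is restated as a Python dict to avoid a YAML dependency, and the upstream tool names beyond those in the configuration (`github.delete_repo`, `postgres.run_query`) are invented for the example.

```python
from typing import Dict

# The configuration above, expressed as a Python dict.
VIRTUAL_SERVER = {
    "name": "support-scope",
    "allow_tools": [
        "github.list_issues",
        "github.get_comments",
        "crm.update_ticket",
    ],
}


def slice_tools(response: Dict, scope: Dict) -> Dict:
    """Return a copy of a tools/list response limited to the scope's allow list."""
    allowed = set(scope["allow_tools"])
    tools = [tool for tool in response["result"]["tools"] if tool["name"] in allowed]
    return {**response, "result": {**response["result"], "tools": tools}}


# Hypothetical aggregated response from the upstream MCP servers.
upstream = {"jsonrpc": "2.0", "id": 1, "result": {"tools": [
    {"name": "github.list_issues"},
    {"name": "github.delete_repo"},
    {"name": "crm.update_ticket"},
    {"name": "postgres.run_query"},
]}}

scoped = slice_tools(upstream, VIRTUAL_SERVER)
print([tool["name"] for tool in scoped["result"]["tools"]])
# prints ['github.list_issues', 'crm.update_ticket']
```

An allow list fails closed: a tool added upstream later stays hidden until someone adds it to the scope.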

Enterprise capability is not about adding more tools. It is about reducing uncontrolled surface area.

How TrueFoundry’s MCP Gateway Helps Enterprises

TrueFoundry's MCP Gateway is designed to operate where enterprise workloads actually live: inside the customer's cloud environment. It can be deployed within a dedicated VPC using Docker containers, which means tool traffic, credentials, and model interactions do not have to traverse external shared infrastructure. For regulated industries, that deployment model alone reduces friction.

Access control is handled with fine-grained RBAC across agents, MCP tools, and teams. Instead of hardcoding credentials into agent runtimes, the gateway integrates with centralized identity providers and maps roles to scoped tool visibility. Security policies are declarative. Authorization happens before execution.

Credential vaulting is another practical concern. API keys and service tokens are stored and managed centrally rather than embedded in code or environment files. Rotation policies can be applied once at the MCP Gateway level, rather than across dozens of agents.

Safe testing environments are equally important. Teams can define isolated tool scopes for staging agents, preventing accidental direct access to production systems. That separation is enforced structurally, not just by convention.

Observability ties the system together. Tool invocations, identity propagation, telemetry, and policy decisions are fully traceable. Metrics on usage patterns, latency, and MCP traffic are surfaced in a single dashboard. When something misbehaves, you can inspect the execution chain without reconstructing events across multiple systems.

The goal is not abstraction for its own sake. It is containment, clarity, and controlled execution across MCP automation platforms inside infrastructure you already govern.

MCP Gateway vs Traditional Approaches

When teams evaluate MCP gateways, the real comparison is not only against other gateways. It is against the alternatives they are already using.

The table below compares the three approaches.

| Aspect | Direct Tool Access | API Gateway Only | TrueFoundry MCP Gateway |
|---|---|---|---|
| Tool Governance | None | Limited | Built-in |
| Access Control | Manual | App-centric | Agent-aware RBAC |
| Observability | Minimal | API-level | Tool and intent-level |
| Deployment Model | Ad-hoc | SaaS or self-hosted | Runs in your cloud |

Direct tool access maximizes speed but leaves control distributed and fragile. An API gateway improves perimeter security, yet it does not understand agent intent or tool scoping. An MCP Gateway introduces a management layer specifically aware of model-driven execution and MCP traffic.

The distinction is subtle, but it determines whether AI systems remain experiments or become infrastructure.

Book a demo to learn more about how the TrueFoundry MCP Gateway works in enterprise environments.

Final Thoughts on MCP Gateways

MCP provides a shared language between models and tools. That alone is a meaningful step forward. It reduces integration sprawl and standardizes how agents discover and invoke external systems. But standardization is not governance.

As AI agents move closer to operational systems, the architecture around them becomes decisive. Who can see which tools? Under whose identity do actions execute? Where do audit trails live? How is risk contained? Those questions sit above the protocol layer.

An MCP gateway answers them by introducing control without breaking interoperability.

For enterprise teams, the real shift is mental. Agents are no longer experiments attached to APIs. They are actors inside production infrastructure. Once you accept that, the need for a control plane becomes less optional and more structural.

Frequently Asked Questions

What does an MCP gateway do?

An MCP gateway sits between AI agents and MCP servers. It controls which tools an agent can see, enforces authentication, routes requests, and logs activity. Instead of agents connecting directly to systems like GitHub or databases, they connect to the gateway, which acts as a control layer. TrueFoundry operationalizes this by providing a unified interface that manages these connections across your entire AI ecosystem.

What is the difference between an MCP gateway and a server?

An MCP server executes actions. It connects to a specific tool or data source and performs operations. An MCP gateway governs access to those servers. It handles identity, visibility, and policy enforcement before any tool is invoked. The TrueFoundry MCP Registry allows you to organize these servers into governed groups for better administrative control.

What is the difference between an API gateway and an MCP gateway?

An API gateway manages traditional application traffic. It understands HTTP routes, authentication, and rate limits. An MCP Gateway is designed for agent-driven workflows. It governs MCP tool discovery and execution, adding agent-aware authorization and observability that API gateways do not provide. TrueFoundry bridges this gap by offering a specialized AI control plane that understands the unique context of LLM-to-tool interactions.

Is MCP better than an API?

No, MCP and APIs serve different purposes: APIs define system interfaces and REST endpoints, while MCP makes those interfaces usable and discoverable by AI models. In most enterprise systems, both layers are required to work together to ensure seamless integration. The TrueFoundry platform supports this coexistence by providing standard connector interfaces that require no SDK changes to existing tools.

How does TrueFoundry MCP gateway help enterprises?

TrueFoundry provides a managed MCP gateway that runs in your cloud. It centralizes credential management, enforces fine-grained access control, and provides observability across agent workflows.
