Cursor AI MCP Server Configuration: A Complete Setup Guide


Introduction

The Cursor AI code editor is designed to make developers more productive by integrating AI deeply into the coding workflow. It can understand your codebase, suggest changes, and help you iterate faster.

But modern software development doesn’t happen in isolation.

Developers constantly interact with systems beyond the editor—databases, APIs, repositories, and internal tools. Without access to these systems, even the most capable AI assistant is limited to code-level tasks.

This is where MCP (Model Context Protocol) servers come in.

MCP provides a standardized way to connect Cursor to external tools and services, allowing it to move beyond code suggestions and participate in real development workflows.

With MCP servers configured, Cursor can:

- Interact with external systems

- Execute multi-step tasks

- Coordinate workflows across tools

In other words, it evolves from a coding assistant into a workflow-aware AI agent.

However, setting up MCP servers in Cursor is not always straightforward, especially for developers encountering MCP for the first time.

This guide provides a step-by-step approach to configuring MCP servers in Cursor AI, helping you go from initial setup to a working, production-ready integration.

What You Need Before Getting Started

Before configuring MCP servers in the Cursor AI code editor, make sure a few prerequisites are in place.

Having these ready will make the setup process smoother and help avoid common issues later.

1. Cursor Installed and Set Up

Make sure Cursor is installed and functioning correctly on your system.

At a minimum, you should be able to:

- Open and navigate a codebase

- Run prompts within the editor

- Access settings and configuration options

If Cursor isn’t fully set up, complete that first before moving to MCP configuration.

2. An MCP Server to Connect To

Cursor does not provide MCP servers by default—you need to configure and connect one.

Depending on your use case, this can be:

- A local MCP server running on your machine

- A hosted MCP server (internal or third-party)

Examples include:

- GitHub MCP server for repository management

- Filesystem MCP server for file operations

- Database MCP server for querying data

- API-based MCP servers for interacting with services

Start with one server that aligns with your immediate workflow, and expand later.

3. Credentials and Access Configuration

Most MCP servers require authentication before they can be used.

This may include:

- API keys

- OAuth tokens

- Database credentials

- Service-specific access tokens

Make sure:

- Credentials are valid

- Permissions are scoped appropriately

- Sensitive data is handled securely

Misconfigured or missing credentials are one of the most common causes of setup failures.

4. A Clear Use Case

Before configuring MCP servers, it’s important to understand what you want Cursor to do.

For example:

- If you want to manage code → GitHub MCP

- If you need to query data → Database MCP

- If you’re working with services → API MCP

Avoid adding multiple servers without a clear purpose. Start with a focused use case and expand as needed.

5. A Suitable Environment for Testing

It’s best to configure and test MCP servers in a development or staging environment before using them in production.

This helps you:

- Validate configurations safely

- Debug issues without risk

- Fine-tune permissions and workflows

Once everything is working as expected, you can extend the setup to production environments.

How MCP Works in Cursor

Before jumping into configuration, it’s useful to understand how MCP fits into the Cursor AI code editor at a high level.

MCP acts as a bridge between Cursor and external systems.

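- Based on your prompt, the model in Cursor decides to call a tool that an MCP server exposes, and Cursor sends that request to the server

- The MCP server executes the requested operation

- The result is returned to Cursor, which uses it to continue the task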

For example:

- You ask Cursor to fetch data → MCP server queries a database

- You ask Cursor to create a PR → MCP server interacts with GitHub

- You ask Cursor to run tests → MCP server executes shell commands

This architecture allows Cursor to:

- Stay lightweight

- Remain flexible

- Integrate with any tool that exposes an MCP interface

Cursor handles the reasoning; MCP servers handle the execution.
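Under the hood, MCP messages are JSON-RPC 2.0. When the model decides to use a tool, the client sends a `tools/call` request naming the tool and its arguments, and the server replies with a result containing a list of content items. The standard-library sketch below only builds and parses those messages to show their shape; it skips the transport and the initialization handshake that a real client such as Cursor performs first, and the tool name `list_repositories` is hypothetical.

```python
import json
from itertools import count

# Monotonic request IDs: JSON-RPC uses the id to match each response to its request.
_next_id = count(1)


def make_tool_call(tool_name: str, arguments: dict) -> str:
    """Serialize an MCP `tools/call` request as a JSON-RPC 2.0 message."""
    request = {
        "jsonrpc": "2.0",
        "id": next(_next_id),
        "method": "tools/call",
        "params": {"name": tool_name, "arguments": arguments},
    }
    return json.dumps(request)


def extract_text(response_json: str) -> str:
    """Return the text content of a `tools/call` response, raising on any error."""
    response = json.loads(response_json)
    if "error" in response:  # protocol-level failure, e.g. an unknown tool
        raise RuntimeError(response["error"].get("message", "unknown error"))
    result = response["result"]
    texts = [item["text"] for item in result.get("content", []) if item.get("type") == "text"]
    if result.get("isError"):  # the tool ran but reported a failure
        raise RuntimeError("; ".join(texts) or "tool reported an error")
    return "\n".join(texts)


# Hypothetical tool name and arguments, for illustration only.
print(make_tool_call("list_repositories", {"owner": "octocat"}))

sample_response = json.dumps({
    "jsonrpc": "2.0",
    "id": 1,
    "result": {"content": [{"type": "text", "text": "hello-world"}], "isError": False},
})
print(extract_text(sample_response))  # hello-world
```

Note the two failure paths: a JSON-RPC `error` means the request itself was rejected, while `isError` means the tool ran and failed, which is often where permission problems surface.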

Step-by-Step: Configure MCP Server in Cursor

Let’s walk through the process of setting up an MCP server in Cursor.

Step 1: Set Up Your MCP Server

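Before configuring Cursor, make sure your MCP server is ready. A remote (HTTP) server must be running and reachable at its URL; a local (stdio) server is launched by Cursor from the command in your configuration, so it only needs to be installed and runnable.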

Depending on the server, this may involve:

- Installing dependencies

- Running a local service

- Configuring environment variables

For example, a local MCP server might be started using commands like:

```shell
npm install
npm start
```

Or via Docker:

```shell
docker run <mcp-server-image>
```

Once it is running, make sure:

- The server is accessible (URL/port)

- The server starts without errors (check its logs)

- Required credentials are configured
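For a remote server, a quick way to confirm it is accessible before touching Cursor is to attempt a TCP connection to its host and port. The sketch below assumes the server listens on `localhost:3000`, as in the example configuration later in this guide. It only proves that something is listening, not that it speaks MCP, and it does not apply to local stdio servers, which have no port.

```python
import socket


def is_reachable(host: str, port: int, timeout: float = 2.0) -> bool:
    """Return True if a TCP connection to host:port succeeds within `timeout` seconds."""
    try:
        # create_connection resolves the host name and tries IPv4/IPv6 addresses.
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:  # connection refused, timed out, or host not resolvable
        return False


if __name__ == "__main__":
    # Assumed address, matching the example configuration's URL.
    print("MCP server reachable:", is_reachable("localhost", 3000))
```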

Step 2: Open MCP Settings in Cursor

In Cursor:

- Open Settings

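- Navigate to the MCP / Integrations section

- Locate the option to add or configure MCP servers

Cursor stores this configuration in an `mcp.json` file, which you can also edit directly: `.cursor/mcp.json` inside a project defines servers for that project, while `~/.cursor/mcp.json` defines servers available in every workspace.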

Step 3: Add MCP Server Configuration

You’ll need to provide a configuration that tells Cursor how to connect to the MCP server.

This typically includes:

- Server name

- Endpoint (a URL for a remote server, or the command and arguments Cursor uses to launch a local server)

- Authentication details (if required)

Example Configuration

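Here’s a simple example of a configuration for a remote (HTTP) MCP server. In Cursor’s format, `mcpServers` is an object keyed by server name, not an array:

```json
{
  "mcpServers": {
    "github": {
      "url": "http://localhost:3000",
      "headers": {
        "Authorization": "Bearer YOUR_API_TOKEN"
      }
    }
  }
}
```

Key fields explained:

- `github` → Server name (the key under `mcpServers`)

- `url` → Endpoint where the server is running

- `headers` → HTTP headers sent with each request, used here for bearer-token authentication

For a local server that Cursor launches itself, replace `url` and `headers` with `command`, `args`, and (optionally) `env`, which sets environment variables for the server process.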
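Cursor’s `mcp.json` keeps servers in an `mcpServers` object keyed by server name, so adding a server means adding a key rather than appending to a list. If you script this (for example, to set up several servers consistently), a minimal Python sketch might look like the following. The two entry shapes assume Cursor’s `command`/`args` form for local servers and `url`/`headers` form for remote ones; the directory path and token are placeholders.

```python
import json
from pathlib import Path

# A local (stdio) server: Cursor launches this command itself.
# The directory is a placeholder for a path the server is allowed to access.
FILESYSTEM_SERVER = {
    "command": "npx",
    "args": ["-y", "@modelcontextprotocol/server-filesystem", "/path/to/allowed/dir"],
}

# A remote (HTTP) server: Cursor connects to a URL where the server already runs.
GITHUB_SERVER = {
    "url": "http://localhost:3000",
    "headers": {"Authorization": "Bearer YOUR_API_TOKEN"},
}


def add_server(config_path: Path, name: str, entry: dict) -> dict:
    """Add or replace one entry under `mcpServers`, preserving all other servers."""
    config = json.loads(config_path.read_text()) if config_path.exists() else {}
    config.setdefault("mcpServers", {})[name] = entry
    config_path.parent.mkdir(parents=True, exist_ok=True)
    config_path.write_text(json.dumps(config, indent=2) + "\n")
    return config
```

For example, `add_server(Path(".cursor/mcp.json"), "filesystem", FILESYSTEM_SERVER)` updates the project-level file, and `Path.home() / ".cursor" / "mcp.json"` targets the global one. Because the function reads the existing file first, servers added earlier are kept.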

Step 4: Configure Authentication

If your MCP server requires authentication, ensure:

- Tokens or credentials are valid

- Permissions are correctly scoped

- Secrets are not hardcoded in files that are committed or shared, such as a project-level `.cursor/mcp.json`

Depending on the setup, authentication can be:

- Bearer tokens

- API keys

- OAuth flows
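When you write your own scripts or services that call an MCP server, a simple way to avoid hardcoded secrets is to read the token from an environment variable at startup and fail fast if it is missing. The variable name `GITHUB_MCP_TOKEN` below is a hypothetical example; for local servers launched by Cursor, the `env` field of the server entry plays a similar role by passing variables to the server process.

```python
import os


def bearer_headers(env_var: str = "GITHUB_MCP_TOKEN") -> dict[str, str]:
    """Build an Authorization header from an environment variable, never from source code."""
    token = os.environ.get(env_var, "").strip()
    if not token:
        # Fail fast: an empty token would otherwise surface later as a confusing 401.
        raise RuntimeError(f"{env_var} is not set; export it before starting the client")
    return {"Authorization": f"Bearer {token}"}
```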

Step 5: Test the Connection

Once configured, test the setup directly in Cursor.

Try a simple prompt like:

- “List repositories from GitHub”

- “Fetch data from the database”

If everything is working:

- Cursor should call the MCP server

- You should receive a valid response

If not, check:

- Server logs

- Credentials

- Endpoint configuration

Common Issues and Fixes

Even with the correct setup, MCP configuration can fail due to small issues.

Here are the most common ones:

1. Connection Errors

Problem: Cursor cannot reach the MCP server

Fix:

- Verify the server is running

- Check the URL and port

- Rule out firewall or network issues

2. Authentication Failures

Problem: Invalid or missing credentials

Fix:

- Double-check tokens

- Verify permission scopes

- Regenerate credentials if needed

3. Permission Issues

Problem: MCP server responds, but actions fail

Fix:

- Ensure correct access levels

- Check service-specific permissions

- Limit or expand scope as needed
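Authentication failures and permission issues often look alike at first. For HTTP-based MCP servers, the status code usually separates them: 401 means the credentials were missing or rejected, while 403 means they were accepted but lack the required access. The helper below is an illustrative mapping from status codes to the fixes listed above; tool-level permission errors may instead arrive as a successful response whose result is flagged as an error, so check the server logs too.

```python
def diagnose_status(status: int) -> str:
    """Map an HTTP status code from an MCP server to the most likely class of problem."""
    if 200 <= status < 300:
        return "ok"
    if status == 401:
        return "authentication: credentials missing, invalid, or expired; re-check or regenerate them"
    if status == 403:
        return "permissions: credentials accepted but lacking the required scope or access level"
    if status == 404:
        return "configuration: the URL path does not match the endpoint the server exposes"
    if 500 <= status < 600:
        return "server: the MCP server failed internally; check its logs"
    return f"unexpected status {status}: check the server logs and your configuration"


print(diagnose_status(403))
```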

4. Incorrect Configuration Format

Problem: Cursor does not recognize the MCP server

Fix:

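- Validate the JSON syntax (trailing commas and missing quotes are common culprits)

- Confirm `mcpServers` is an object keyed by server name

- Check that each entry has the required fields (`url` for remote servers, `command` for local ones)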
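Most format problems can be caught before Cursor ever reads the file. The sketch below parses `mcp.json` with Python’s `json` module, which reports the line and column of syntax errors, and then applies a few structural checks. Those checks are a simplified assumption based on the local (`command`) and remote (`url`) entry shapes, not Cursor’s full schema.

```python
import json


def validate_mcp_config(text: str) -> list[str]:
    """Return the problems found in an mcp.json document; an empty list means no issues."""
    try:
        config = json.loads(text)
    except json.JSONDecodeError as exc:
        return [f"invalid JSON at line {exc.lineno}, column {exc.colno}: {exc.msg}"]
    servers = config.get("mcpServers") if isinstance(config, dict) else None
    if not isinstance(servers, dict):
        return ["'mcpServers' must be an object keyed by server name"]
    problems = []
    for name, entry in servers.items():
        if not isinstance(entry, dict):
            problems.append(f"{name}: entry must be an object")
        elif ("command" in entry) == ("url" in entry):  # neither, or both
            problems.append(f"{name}: specify exactly one of 'command' (local) or 'url' (remote)")
        elif "args" in entry and not (
            isinstance(entry["args"], list) and all(isinstance(a, str) for a in entry["args"])
        ):
            problems.append(f"{name}: 'args' must be a list of strings")
    return problems
```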

Best Practices for MCP Server Configuration

Once you’ve set up MCP servers in the Cursor AI code editor, the next step is ensuring your setup is secure, reliable, and scalable.

1. Start with Minimal Access

Avoid giving MCP servers broad permissions by default.

Instead:

- Grant only the access required for your use case

- Use scoped tokens and roles

- Expand permissions gradually as needed

This reduces risk, especially when working with:

- Production databases

- Deployment systems

- Sensitive APIs
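Least privilege is easier to maintain when you check it explicitly. The sketch below compares the scopes a token has been granted with the scopes a workflow actually needs. The scope names are hypothetical, and how you obtain a token’s granted scopes depends on the service that issued it.

```python
def audit_scopes(granted: set[str], required: set[str]) -> dict[str, set[str]]:
    """Compare a token's granted scopes with what the workflow actually needs."""
    return {
        "missing": required - granted,  # the workflow will fail without these
        "excess": granted - required,   # unneeded access: remove it if you can
    }


# Hypothetical scopes for a read-only repository workflow.
report = audit_scopes(granted={"repo:read", "repo:write", "admin:org"}, required={"repo:read"})
if report["excess"]:
    print("Token is broader than needed:", sorted(report["excess"]))
    # Token is broader than needed: ['admin:org', 'repo:write']
```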

2. Use Separate Environments

Always configure and test MCP servers in development or staging environments before production.

This helps you:

- Validate configurations safely

- Debug issues without impacting real systems

- Fine-tune workflows

Once stable, replicate the setup in production with stricter controls.

3. Validate Actions Before Execution

Even with MCP configured, it’s important to maintain control over execution.

Best practices include:

- Reviewing generated changes

- Adding validation steps for critical workflows

- Avoiding fully autonomous execution in high-risk systems

This is especially important for workflows involving:

- Code deployments

- Database updates

- Infrastructure changes
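One way to keep a human in the loop is to route tool calls through a gate that asks for approval before anything high-risk runs. The sketch below is illustrative: `HIGH_RISK_TOOLS` lists hypothetical tool names, and `execute` and `approve` are callables you would supply (for example, a real MCP client call and a confirmation prompt).

```python
from typing import Callable

# Hypothetical tool names treated as high-risk; adjust to the tools your servers expose.
HIGH_RISK_TOOLS = {"deploy_service", "execute_sql", "apply_terraform"}


def run_with_approval(tool: str, args: dict,
                      execute: Callable[[str, dict], str],
                      approve: Callable[[str, dict], bool]) -> str:
    """Execute a tool call, requiring explicit approval first for high-risk tools."""
    if tool in HIGH_RISK_TOOLS and not approve(tool, args):
        return f"blocked: {tool} was not approved"
    return execute(tool, args)
```

For a terminal workflow, `approve` could be as simple as `lambda tool, args: input(f"Run {tool} with {args}? [y/N] ").strip().lower() == "y"`. Low-risk tools pass straight through, so the gate adds friction only where it matters.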

4. Monitor MCP Interactions

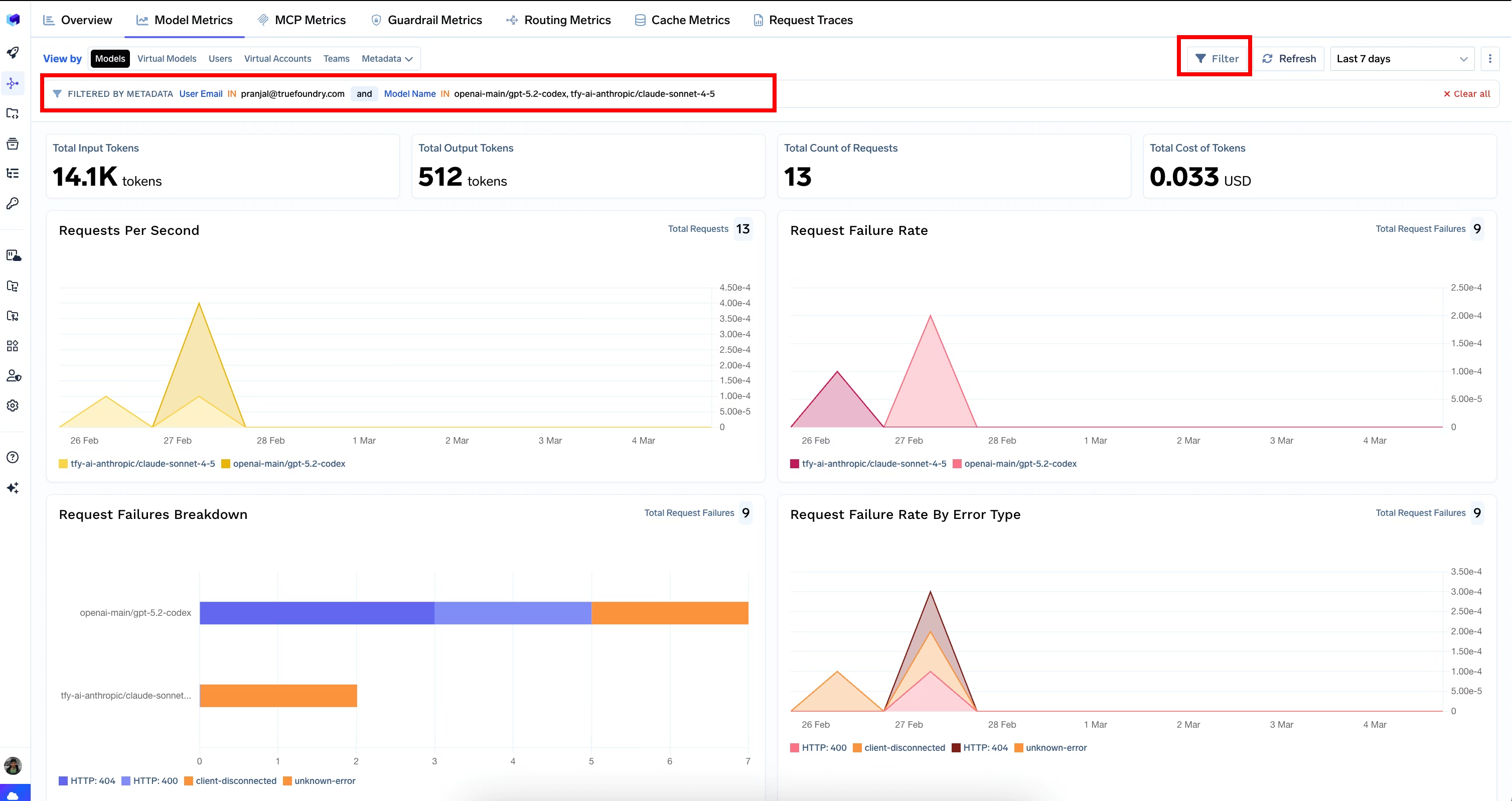

Visibility into MCP usage is critical.

Track:

- What requests are being made

- Which systems are being accessed

- What actions are being executed

This helps with:

- Debugging issues

- Auditing behavior

- Improving workflows over time
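A thin wrapper around each tool call is enough to record what was requested, which system was touched, and what happened. The sketch below emits one JSON record per call through Python’s `logging` module. The record fields are an assumption, and in practice you would redact secrets from `args` before logging them.

```python
import json
import logging
import time
import uuid
from typing import Callable, Optional

logger = logging.getLogger("mcp.audit")


def audited_call(server: str, tool: str, args: dict,
                 execute: Callable[[str, dict], str],
                 trace_id: Optional[str] = None) -> str:
    """Run one tool call and log a structured record of it, whether it succeeds or fails."""
    record = {"trace_id": trace_id or uuid.uuid4().hex,
              "server": server, "tool": tool, "args": args}
    start = time.perf_counter()
    try:
        result = execute(tool, args)
        record["status"] = "ok"
        return result
    except Exception as exc:
        record["status"] = "error"
        record["error"] = repr(exc)
        raise  # re-raise so callers still see the failure
    finally:
        record["duration_ms"] = round((time.perf_counter() - start) * 1000, 1)
        logger.info(json.dumps(record, default=str))
```

Call `logging.basicConfig(level=logging.INFO)` (or attach your own handler) to see the records. Passing the same `trace_id` to every call in a multi-step task lets you reconstruct the whole sequence later.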

5. Keep Configurations Maintainable

As you add more MCP servers, complexity increases.

To manage this:

- Keep configurations clean and well-structured

- Use consistent naming conventions

- Document your MCP setup

This becomes especially important for teams working across multiple services.

Production Considerations: Scaling MCP Workflows

While MCP servers are easy to set up for individual use, running them in production introduces additional challenges.

1. Managing Multiple MCP Servers

As workflows grow, teams often integrate multiple MCP servers:

- GitHub

- Databases

- APIs

- Internal tools

Managing these individually can quickly become complex.

You need a way to:

- Standardize configurations

- Coordinate interactions

- Maintain consistency across environments
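A common way to keep configurations consistent across environments is to define shared servers once and layer small per-environment overrides on top. The sketch below recursively merges plain dictionaries; the server names, the `example.com` URL, and the package placeholder are hypothetical.

```python
import copy


def merge_config(base: dict, override: dict) -> dict:
    """Recursively merge `override` into a copy of `base`; override values win."""
    merged = copy.deepcopy(base)
    for key, value in override.items():
        if isinstance(value, dict) and isinstance(merged.get(key), dict):
            merged[key] = merge_config(merged[key], value)
        else:
            merged[key] = copy.deepcopy(value)  # scalars and lists replace wholesale
    return merged


# Shared definitions every environment starts from (hypothetical servers).
BASE = {"mcpServers": {
    "github": {"url": "http://localhost:3000"},
    "postgres": {"command": "npx", "args": ["-y", "<postgres-mcp-package>"]},
}}

# Only the differences per environment.
OVERRIDES = {
    "dev": {},
    "prod": {"mcpServers": {"github": {"url": "https://mcp.internal.example.com/github"}}},
}
```

Nested objects merge key by key, while lists such as `args` are replaced rather than concatenated, which keeps overrides predictable.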

2. Enforcing Security and Guardrails

In production, MCP servers interact with critical systems.

Without proper controls, this can lead to:

- Unauthorized access

- Risky actions

- System instability

Teams need:

- Role-based access control

- Action-level restrictions

- Clear boundaries on what agents can do
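Role-based access control and action-level restrictions can be expressed as a deny-by-default policy: each role lists the tools it may call on each server, and anything not listed is rejected. The roles and tool names below are hypothetical, and where the check runs (a gateway, a proxy in front of the server, or the server itself) depends on your setup.

```python
# role -> MCP server -> tools that role may call (all names hypothetical)
POLICIES: dict[str, dict[str, frozenset[str]]] = {
    "developer": {
        "github": frozenset({"list_repositories", "create_pull_request"}),
        "postgres": frozenset({"run_select_query"}),
    },
    "reviewer": {
        "github": frozenset({"list_repositories"}),
    },
}


def authorize(role: str, server: str, tool: str) -> bool:
    """Deny by default: unknown roles, servers, and tools are all rejected."""
    return tool in POLICIES.get(role, {}).get(server, frozenset())
```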

3. Observability and Debugging

When Cursor interacts with multiple systems via MCP, debugging becomes harder.

You need to understand:

- What the agent attempted

- Which MCP server handled the request

- Where failures occurred

Without observability, troubleshooting becomes time-consuming and unreliable.

4. Managing Models, Costs, and Performance

MCP workflows depend on underlying models.

At scale, teams must handle:

- Model selection (latency vs quality)

- Cost optimization across repeated tasks

- Performance bottlenecks

This requires centralized control over how models are used.
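Balancing latency, quality, and cost can start as a simple rule: among the models that meet a quality floor and a latency budget, pick the cheapest. The catalog below uses made-up model names and numbers purely for illustration; in practice, quality would come from your own evaluations and latency from your own measurements.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ModelOption:
    name: str
    p50_latency_ms: float      # typical response latency you measured
    quality: float             # e.g. pass rate on your own eval set, 0 to 1
    cost_per_1k_tokens: float  # in whatever currency unit you track


def pick_model(options: list[ModelOption], min_quality: float, max_latency_ms: float) -> ModelOption:
    """Return the cheapest option meeting both the quality floor and the latency budget."""
    eligible = [m for m in options
                if m.quality >= min_quality and m.p50_latency_ms <= max_latency_ms]
    if not eligible:
        raise ValueError("no model satisfies the quality and latency constraints")
    return min(eligible, key=lambda m: m.cost_per_1k_tokens)


# Hypothetical catalog; replace with your own measurements.
CATALOG = [
    ModelOption("small-fast", 300, 0.72, 0.10),
    ModelOption("medium", 800, 0.85, 0.50),
    ModelOption("large", 2000, 0.93, 2.00),
]

print(pick_model(CATALOG, min_quality=0.8, max_latency_ms=1000).name)  # medium
```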

The Infrastructure Layer: Making MCP Production-Ready

While the Cursor AI code editor enables powerful MCP-based workflows, it doesn’t solve the challenges of running those workflows reliably in production.

Setting up a single MCP server locally is straightforward. But as teams begin to scale usage, the complexity grows quickly:

- Multiple MCP servers across different tools

- Sensitive systems like databases and internal APIs

- Autonomous or semi-autonomous execution

- Increasing usage across teams and environments

At this point, MCP is no longer just a configuration problem; it becomes an infrastructure problem.

This is where platforms like TrueFoundry play a critical role.

From Integrations to Infrastructure

In early setups, teams often rely on:

- Hardcoded configurations

- Local tokens and credentials

- Ad-hoc scripts to manage workflows

While this works for experimentation, it doesn’t scale. As adoption increases, teams need a standardized way to:

- Manage access across multiple MCP servers

- Control how agents interact with systems

- Ensure consistency across environments

TrueFoundry provides this missing layer by turning MCP integrations into production-grade infrastructure.

What This Infrastructure Layer Enables

Secure Connectivity Across Systems

MCP servers connect AI agents to critical systems, but those connections need to be tightly controlled.

With TrueFoundry, teams can:

- Manage credentials centrally

- Enforce secure access patterns

- Avoid exposing sensitive tokens in configs

This ensures that agents interact with systems safely and predictably.

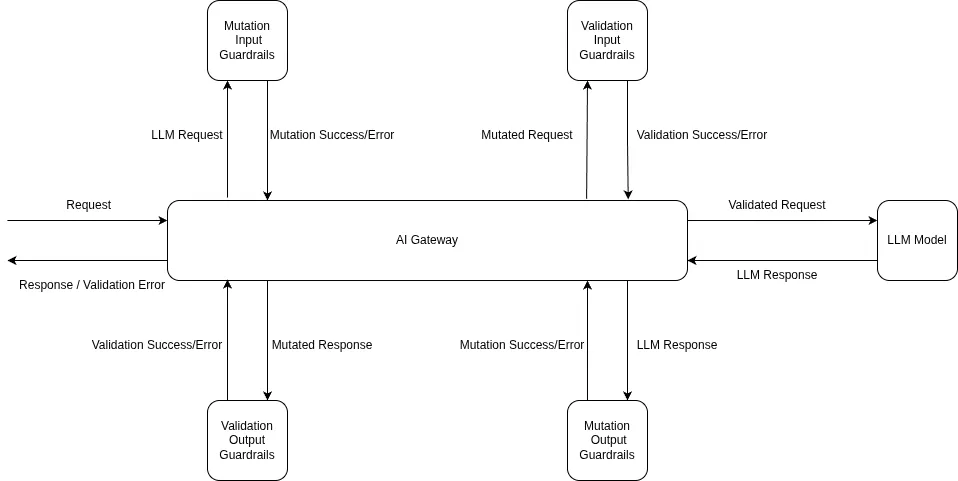

Guardrails for Agent Behavior

As AI agents gain the ability to execute actions, defining boundaries becomes essential.

TrueFoundry allows teams to:

- Restrict which tools an agent can access

- Limit the types of actions it can perform

- Introduce approval or validation steps for sensitive operations

This prevents scenarios where agents:

- Modify critical systems unintentionally

- Execute unsafe or unintended workflows

Observability and Debugging

When something goes wrong in an MCP workflow, debugging can be difficult without visibility.

Teams need to answer:

- What did the agent attempt?

- Which MCP server handled the request?

- Where did the failure occur?

TrueFoundry provides:

- End-to-end tracing of agent actions

- Logs across MCP interactions

- Insights into execution flow

This makes AI-driven workflows auditable and debuggable, just like traditional systems.

Centralized Model and Cost Management

MCP workflows depend on underlying models, and as usage grows, so do costs and performance considerations.

TrueFoundry enables:

- Model routing across providers

- Control over latency vs quality trade-offs

- Monitoring and optimization of usage

This ensures teams can scale without losing control over cost and performance.

Standardizing AI Workflows at Scale

Without an infrastructure layer, teams often end up with:

- Fragmented configurations

- Inconsistent access controls

- Limited visibility into agent behavior

By introducing a platform like TrueFoundry, organizations can:

- Standardize how MCP servers are configured and used

- Apply consistent security and governance policies

- Scale AI workflows across teams with confidence

Conclusion

Configuring MCP servers in the Cursor AI code editor is what transforms it from a powerful coding assistant into a system that can participate in real-world development workflows. By connecting Cursor to repositories, databases, APIs, and internal tools, you enable it to move beyond code suggestions and start executing meaningful tasks across your stack.

However, as these integrations grow, the challenge shifts from setup to management. What starts as a simple configuration quickly becomes a question of security, reliability, and scalability, especially when multiple systems, environments, and teams are involved.

This is where infrastructure becomes critical. Platforms like TrueFoundry help teams move beyond ad-hoc setups by providing a standardized way to manage MCP integrations, enforce guardrails, monitor agent behavior, and control model usage at scale.

As AI-driven development continues to evolve, success won’t depend only on how well you configure MCP servers, but also on how effectively you can operate, govern, and scale these workflows in production.


Frequently asked questions

What is MCP server integration in Cursor AI?

MCP server integration in Cursor AI is the capability that allows Cursor's AI to connect with external tools and data sources through the Model Context Protocol. When an MCP server is configured in Cursor, the editor's AI can invoke tools from that server — such as running database queries, fetching file contents, or calling external APIs — as part of its responses and autonomous actions.

Why do you need MCP servers in Cursor AI?

MCP servers extend Cursor AI's capabilities beyond static code analysis and text completion. With MCP servers, Cursor can interact with live systems — querying databases for schema information, reading from the file system for up-to-date context, or calling external APIs to retrieve real-world data. This makes Cursor's AI significantly more useful for full-stack development tasks that require awareness of external systems.

How do I add multiple MCP servers to my Cursor?

Multiple MCP servers can be added to Cursor by editing the `mcp.json` configuration file: `~/.cursor/mcp.json` for servers available in every workspace, or `.cursor/mcp.json` inside a project for project-specific servers. Each server is defined as a separate entry in the `mcpServers` object, with its own command, arguments, and environment variable settings (or a URL for a remote server). Cursor loads all configured servers on startup and makes their tools available to the AI simultaneously.

How do you configure an MCP server in Cursor AI?

Configuring an MCP server in Cursor AI involves opening the Cursor settings, navigating to the MCP configuration section, and adding the server's startup command and arguments in the required JSON format. For example, a Filesystem MCP server is configured by specifying the `npx @modelcontextprotocol/server-filesystem` command along with the allowed directory paths as arguments. After saving, Cursor connects to the server automatically.
