From Browser to Prompt: Building Infra for the Agentic Internet



AI agents are changing how humans interact with the web. Two years ago, getting work done online meant opening a browser, navigating to websites, and manually completing tasks. Today, AI agents handle that autonomously based on natural language instructions.

Instead of opening Chrome to book a flight, you tell an agent "Find me the cheapest flight to Tokyo next Friday," and it handles everything. We call this shift from human-driven browsing to prompt-driven execution the agentic internet.

Most organizations are not ready for it. Deloitte's 2025 study found that only 14% of organizations have production-ready agentic AI solutions. The gap is not a model capability problem; it is an infrastructure problem.

What Does Browser to Prompt Mean?

Traditional web interaction follows a predictable pattern. A human opens a browser, navigates to a URL, visually interprets the page, and performs actions.

Consider a typical enterprise workflow. You open Salesforce and search for a customer record. You review their order history, then open Zendesk in another tab to create a support ticket. You manually copy information between systems and open Slack to notify a colleague. Ten minutes pass. Fifteen clicks happen. Three browser tabs stay open.

Prompt-Driven Execution

With prompt-driven execution, you type: "Create a support ticket for Acme Corp about their delayed shipment and include their last three orders."

The agent connects to Salesforce via MCP, retrieves customer data, opens Zendesk via another MCP server, creates the ticket, and populates all fields automatically. One prompt replaces dozens of clicks. But making this work in production requires infrastructure that traditional systems do not provide.

Why Traditional Infrastructure Falls Short

Running AI agents at scale introduces requirements that existing systems were never designed to handle.

Session and State Management

Traditional browser automation operates statelessly: each script execution starts fresh. Agents require the opposite. An AI agent completing a multi-step workflow needs to maintain context across multiple system interactions, authenticate once, and carry that session through dozens of tool calls.
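To make the contrast concrete, here is a minimal sketch of a session object that authenticates once and carries context through several tool calls. The `AgentSession` class and the two stand-in tools are hypothetical names chosen for illustration; they are not part of any specific product or library.

```python
from dataclasses import dataclass, field
from typing import Any, Callable


@dataclass
class AgentSession:
    """Hypothetical container for state that must survive across tool calls."""
    auth_token: str
    context: dict[str, Any] = field(default_factory=dict)
    history: list[str] = field(default_factory=list)

    def call_tool(self, name: str, tool: Callable[..., Any], **kwargs: Any) -> Any:
        # Every call reuses the same token and can read earlier results.
        result = tool(token=self.auth_token, context=self.context, **kwargs)
        self.context[name] = result   # make the result visible to later steps
        self.history.append(name)     # keep an ordered record of what ran
        return result


# Stand-in tools; real ones would call a CRM or ticketing system.
def lookup_customer(token: str, context: dict, customer: str) -> dict:
    return {"customer": customer, "orders": ["A-1", "A-2", "A-3"]}


def create_ticket(token: str, context: dict, subject: str) -> dict:
    # Reads the orders fetched by the earlier step from the shared context.
    orders = context["lookup_customer"]["orders"]
    return {"subject": subject, "orders": orders}


session = AgentSession(auth_token="example-token")
session.call_tool("lookup_customer", lookup_customer, customer="Acme Corp")
ticket = session.call_tool("create_ticket", create_ticket, subject="Delayed shipment")
print(ticket)
```

The second tool never asks for the customer's orders again; it reads them from the session, which is the property a stateless script lacks.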

Anti-Bot Detection

Most major websites deploy measures to block automated access, including CAPTCHA challenges, browser fingerprinting, and behavioral analysis. Standard automation tools like Puppeteer and Playwright are often detected quickly in their default configurations because they expose recognizable automation signatures, such as the `navigator.webdriver` flag.

Tool Orchestration Complexity

An AI agent calls APIs, queries databases, sends Slack messages, creates Jira tickets, and updates CRM records. Each integration traditionally requires custom authentication flows, request/response formatting, error handling, and retry logic. Without standardization, teams can end up spending most of their engineering time on integration plumbing rather than on agent behavior.

Observability Challenges

When an AI agent fails, the cause could be anywhere. The model may have misinterpreted the task. The webpage layout may have changed. An API may have returned an unexpected response. An authentication token may have expired. Without end-to-end tracing, debugging becomes nearly impossible.
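As a rough illustration of end-to-end tracing, the sketch below tags every step of a workflow with a shared trace ID and records its duration and outcome. It uses only the Python standard library; the `span` helper, the step names, and the in-memory `spans` list are assumptions made for this example, and a production system would export spans to a tracing backend instead.

```python
import time
import uuid
from contextlib import contextmanager

spans: list[dict] = []  # in-memory sink; a real system would export these


@contextmanager
def span(trace_id: str, name: str):
    """Record the duration and outcome of one step under a shared trace ID."""
    start = time.perf_counter()
    record = {"trace_id": trace_id, "name": name, "status": "ok"}
    try:
        yield record
    except Exception as exc:
        record["status"] = "error"
        record["error"] = f"{type(exc).__name__}: {exc}"
        raise
    finally:
        record["duration_ms"] = (time.perf_counter() - start) * 1000
        spans.append(record)


def run_workflow() -> str:
    trace_id = uuid.uuid4().hex
    try:
        with span(trace_id, "model.plan"):
            pass  # the model decides which tool to call
        with span(trace_id, "tool.create_ticket"):
            raise PermissionError("token expired")  # simulated failure
    except PermissionError:
        pass  # an agent would refresh the token and retry here
    return trace_id


trace_id = run_workflow()
failed = [s["name"] for s in spans if s["status"] == "error"]
print(failed)
```

Filtering the spans by status points directly at the failing step, which is the question that is hard to answer without a trace.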

MCP: The Standard Protocol for Agent-to-Tool Communication

Anthropic introduced the Model Context Protocol (MCP) to solve the tool integration problem. Instead of writing custom code for every system, developers wrap tools in MCP servers that expose capabilities through a standardized JSON-RPC interface.

How MCP Works

When an agent connects to an MCP server, it performs a discovery handshake. The agent sends an `initialize` request, and the server responds with its capabilities and protocol version. The agent acknowledges with an `initialized` notification, then calls `tools/list` to discover available tools, and the server returns tool schemas with parameters and descriptions.

The agent learns which tools are available at runtime, so nothing needs to be hardcoded. Major AI providers including Anthropic, OpenAI, and Microsoft have adopted MCP.
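The handshake described above can be sketched as plain JSON-RPC 2.0 messages. The example below runs against an in-process stand-in rather than a real server: `fake_server`, the `create_ticket` tool, the client and server names, and the protocol version string are illustrative assumptions, while the method names and message shapes follow the MCP specification as we understand it.

```python
import json
from itertools import count
from typing import Optional

_ids = count(1)


def request(method: str, params: Optional[dict] = None) -> dict:
    """Build a JSON-RPC 2.0 request with a fresh ID."""
    msg = {"jsonrpc": "2.0", "id": next(_ids), "method": method}
    if params is not None:
        msg["params"] = params
    return msg


def fake_server(msg: dict) -> dict:
    """Stand-in for an MCP server; a real one sits behind stdio or HTTP."""
    if msg["method"] == "initialize":
        result = {
            "protocolVersion": msg["params"]["protocolVersion"],
            "capabilities": {"tools": {}},
            "serverInfo": {"name": "example-server", "version": "0.1.0"},
        }
    elif msg["method"] == "tools/list":
        result = {"tools": [{
            "name": "create_ticket",
            "description": "Create a support ticket",
            "inputSchema": {
                "type": "object",
                "properties": {"subject": {"type": "string"}},
                "required": ["subject"],
            },
        }]}
    else:
        return {"jsonrpc": "2.0", "id": msg["id"],
                "error": {"code": -32601, "message": "Method not found"}}
    return {"jsonrpc": "2.0", "id": msg["id"], "result": result}


# Step 1: negotiate protocol version and capabilities.
init = fake_server(request("initialize", {
    "protocolVersion": "2025-06-18",  # example revision string
    "capabilities": {},
    "clientInfo": {"name": "example-agent", "version": "0.1.0"},
}))
# Step 2: the client confirms with a notification, which carries no "id".
initialized = {"jsonrpc": "2.0", "method": "notifications/initialized"}
# Step 3: discover tools and their input schemas at runtime.
listing = fake_server(request("tools/list"))
tool_names = [t["name"] for t in listing["result"]["tools"]]
print(json.dumps(listing, indent=2))
```

Because each tool arrives with a JSON Schema for its inputs, the agent can validate arguments before invoking a tool it has never seen before.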

However, MCP alone handles only the protocol layer. Production systems need additional infrastructure for authentication, access controls, rate limiting, and audit logging.

The Gateway Pattern for Production Agentic AI

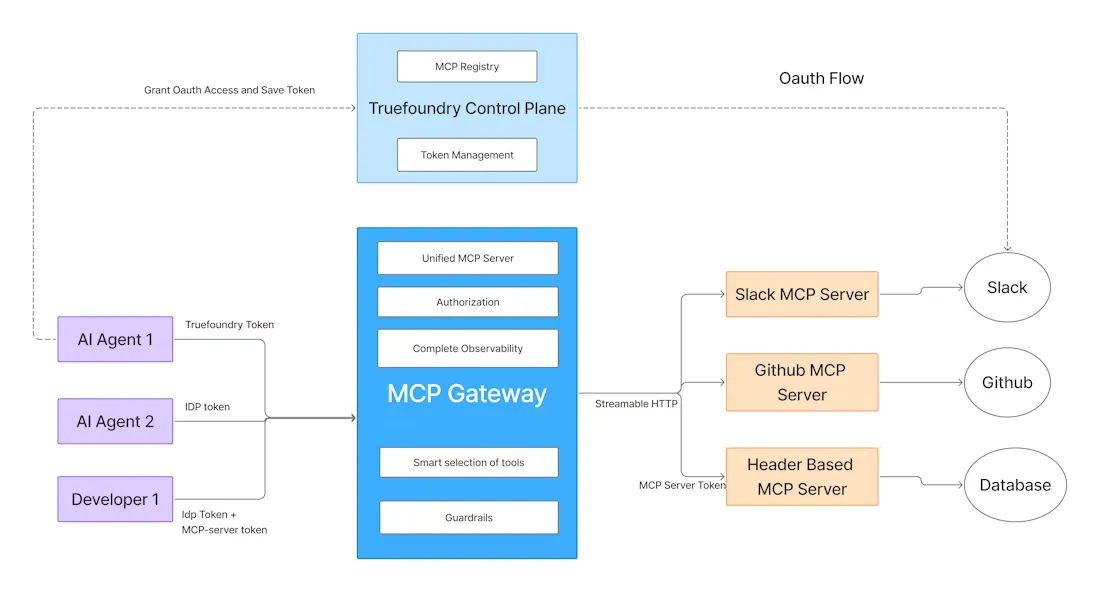

Production deployments require a gateway between AI agents and backend tools. The gateway provides a centralized control plane for managing all agent-to-tool interactions.

Core Gateway Capabilities

A properly designed gateway handles unified authentication, where agents authenticate once and the gateway manages all backend credentials. It provides centralized policy enforcement through rate limits, RBAC, and guardrails. The gateway ensures reliability through automatic failover, retries with exponential backoff, and load balancing. It also delivers observability via full traces through OpenTelemetry and cost attribution by team.
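The reliability behavior can be illustrated with a small sketch that retries a backend with exponential backoff and then fails over to the next one. This is one possible shape under simple assumptions, not a description of any particular gateway's implementation; `call_with_failover`, its parameters, and the two stand-in backends are hypothetical.

```python
import time
from typing import Callable, Optional, Sequence


def call_with_failover(
    backends: Sequence[Callable[[], str]],
    max_attempts: int = 3,
    base_delay: float = 0.1,
    sleep: Callable[[float], None] = time.sleep,
) -> str:
    """Try each backend in order, retrying each with exponential backoff."""
    last_error: Optional[Exception] = None
    for backend in backends:
        for attempt in range(max_attempts):
            try:
                return backend()
            except ConnectionError as exc:
                last_error = exc
                if attempt < max_attempts - 1:
                    # Delay doubles on each retry: base, 2x base, 4x base, ...
                    sleep(base_delay * 2 ** attempt)
    raise RuntimeError("all backends failed") from last_error


calls = {"primary": 0}
delays: list[float] = []


def primary() -> str:
    calls["primary"] += 1
    raise ConnectionError("primary down")  # simulated outage


def secondary() -> str:
    return "ok from secondary"


# Passing delays.append as the sleep function records delays without waiting.
result = call_with_failover([primary, secondary], sleep=delays.append)
print(result, calls, delays)
```

Injecting the `sleep` function keeps the example fast to run and makes the backoff schedule easy to inspect.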

How TrueFoundry Enables Prompt-Driven Execution

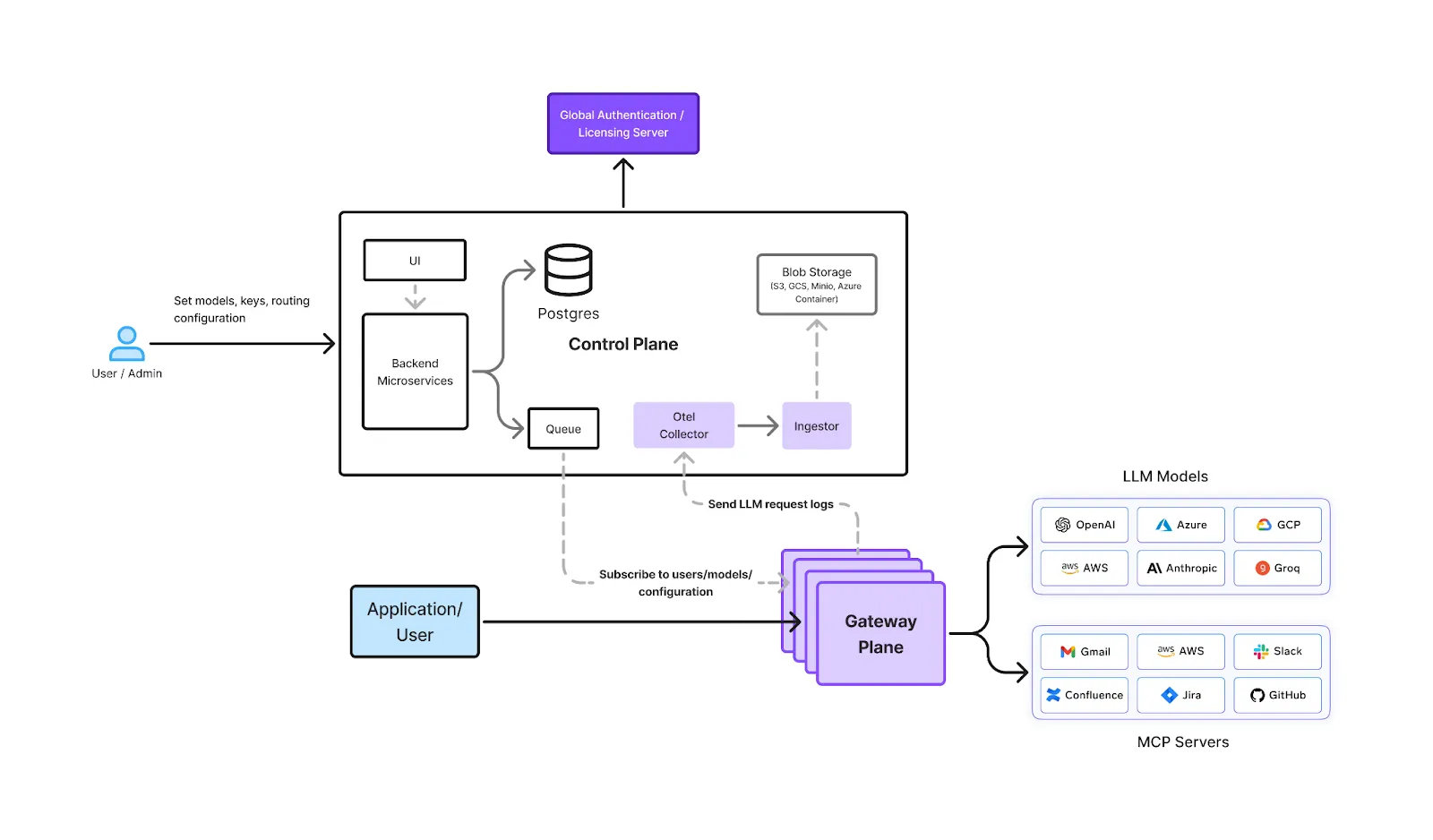

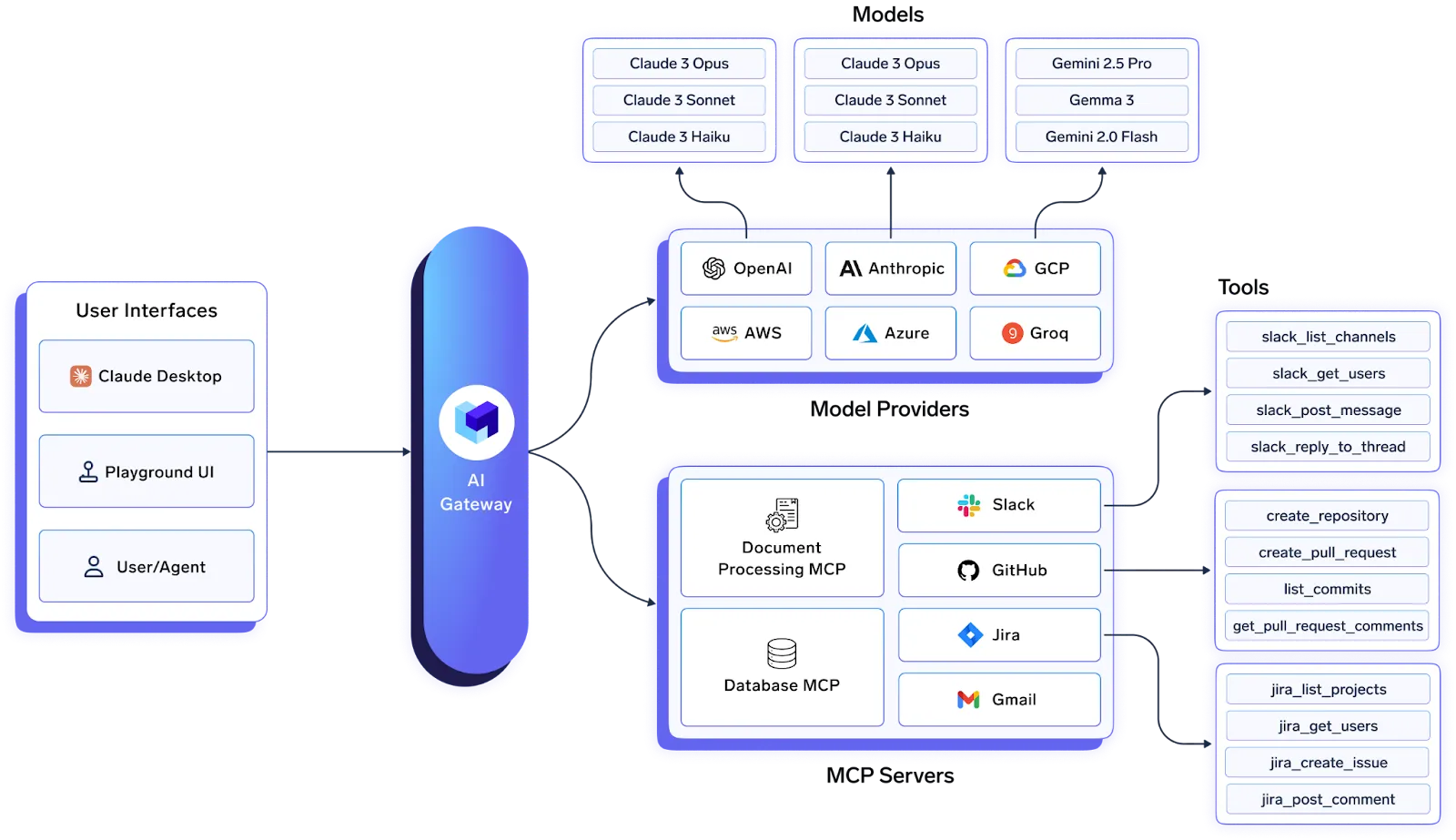

TrueFoundry's AI Gateway provides the infrastructure layer for production agentic AI. It combines LLM routing, MCP server management, and agent governance into a unified control plane.

Centralized MCP Registry

The MCP Gateway offers a centralized registry for all MCP servers: internal tools, third-party services, cloud-hosted or on-prem applications. You can organize servers into groups like `dev-mcps`, `staging-mcps`, and `prod-mcps` with separate RBAC rules for each environment. Agents authenticate once and discover all available tools automatically. The gateway stores credentials in its secret store and handles token refresh.
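As a way to picture how grouped servers and per-environment RBAC fit together, here is a minimal in-memory sketch. `McpRegistry`, its methods, and the role names are hypothetical and do not reflect TrueFoundry's actual API; only the group names come from the text above.

```python
from dataclasses import dataclass, field


@dataclass
class McpRegistry:
    """Hypothetical registry mapping groups to servers and to allowed roles."""
    servers: dict[str, set[str]] = field(default_factory=dict)
    allowed_roles: dict[str, set[str]] = field(default_factory=dict)

    def register(self, group: str, server: str) -> None:
        self.servers.setdefault(group, set()).add(server)

    def grant(self, group: str, role: str) -> None:
        self.allowed_roles.setdefault(group, set()).add(role)

    def discover(self, role: str) -> dict[str, list[str]]:
        """Return only the groups, and their servers, that this role may use."""
        return {
            group: sorted(names)
            for group, names in self.servers.items()
            if role in self.allowed_roles.get(group, set())
        }


registry = McpRegistry()
registry.register("dev-mcps", "github")
registry.register("dev-mcps", "sentry")
registry.register("prod-mcps", "slack")
registry.grant("dev-mcps", "developer")
registry.grant("dev-mcps", "sre")
registry.grant("prod-mcps", "sre")

visible = registry.discover("developer")
print(visible)
```

Discovery is filtered by role, so an agent running with developer permissions never sees the production servers at all.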

Pre-Built Integrations

TrueFoundry ships with MCP server integrations for Slack, GitHub, Confluence, Sentry, and Datadog. You can also register custom internal APIs in minutes. See the MCP Servers documentation for implementation details.

AI Gateway Playground

The AI Gateway Playground lets developers prototype workflows before deployment. You can add MCP servers, select specific tools, run natural language prompts, and observe every tool call and response in real time. The playground also generates production-ready code snippets in Node.js, cURL, and more.

Enterprise Observability

TrueFoundry embeds OpenTelemetry-compliant tracing throughout the gateway. Each request is recorded with span metadata, step-by-step agent execution traces, latency metrics at P50, P95, and P99, and cost breakdowns attributed by team or application. The gateway handles 350+ RPS on a single vCPU with sub-3ms latency by processing authentication and rate limiting in-memory.
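To show what the latency percentiles and cost attribution mean in practice, the following sketch computes both from a small request log using only the standard library. The sample data and the team names are made up for illustration, and the calculation is a generic one, not the gateway's own.

```python
from collections import defaultdict
from statistics import quantiles

# Synthetic latencies in milliseconds: 1.0, 2.0, ..., 100.0.
latencies_ms = [float(ms) for ms in range(1, 101)]

# quantiles(..., n=100) returns 99 cut points; index k-1 is the k-th percentile.
cuts = quantiles(latencies_ms, n=100)
p50, p95, p99 = cuts[49], cuts[94], cuts[98]

# Hypothetical per-request costs in USD, tagged with the calling team.
request_costs = [
    ("search", 0.25),
    ("support", 0.5),
    ("search", 0.25),
]

cost_by_team: dict[str, float] = defaultdict(float)
for team, cost in request_costs:
    cost_by_team[team] += cost  # attribute each request's cost to its team

print(f"P50={p50:.1f}ms P95={p95:.1f}ms P99={p99:.1f}ms")
print(dict(cost_by_team))
```

Tail percentiles such as P99 matter for agents because a single workflow chains many calls, so slow outliers compound across steps.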

Security and Compliance

The platform provides tool-level RBAC via Access Control settings, input/output validation guardrails for prompt injection, PII, and toxicity, and OAuth 2.0 integration with Okta, Azure AD, or custom IdPs. TrueFoundry supports deployment across VPC, on-prem, air-gapped, and multi-cloud environments while maintaining SOC 2, HIPAA, and EU AI Act compliance.
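A simplified sketch of two of these controls, a tool-level permission check and an input guardrail that redacts email addresses, might look like the following. The policy table, role and tool names, and the single regex are assumptions for illustration; real guardrails cover far more PII types and are configured in the platform rather than hand-written.

```python
import re

# Deliberately simple email pattern; real PII detection is much broader.
EMAIL = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")

# Hypothetical policy: role -> tools that role may invoke.
TOOL_POLICY = {
    "support-agent": {"zendesk.create_ticket", "salesforce.get_customer"},
}


def redact_pii(text: str) -> str:
    """Replace email addresses before the text reaches a model or a tool."""
    return EMAIL.sub("[REDACTED_EMAIL]", text)


def authorize(role: str, tool: str) -> None:
    """Raise if the role is not allowed to call this specific tool."""
    if tool not in TOOL_POLICY.get(role, set()):
        raise PermissionError(f"{role} may not call {tool}")


def guarded_call(role: str, tool: str, prompt: str) -> dict:
    authorize(role, tool)  # check access first
    return {"tool": tool, "prompt": redact_pii(prompt)}  # then sanitize input


allowed = guarded_call(
    "support-agent", "zendesk.create_ticket", "Contact jane@example.com"
)
print(allowed)

try:
    guarded_call("support-agent", "github.delete_repo", "clean up")
except PermissionError as exc:
    denied = str(exc)
    print(denied)
```

Checking permissions per tool rather than per server means an agent can be allowed to read customer records without also being able to modify them.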

The Infrastructure Gap Is Real

Gartner predicts that over 40% of agentic AI projects will be canceled by the end of 2027, citing escalating costs, unclear business value, and inadequate risk controls. IBM reports that 99% of enterprise developers are exploring AI agents, but most continue bolting AI onto systems never designed for autonomous execution. The teams succeeding treat infrastructure as a platform investment, not a project-specific concern. They build the control plane first, then scale.

Conclusion

The browser-to-prompt shift changes how enterprises execute work across systems. Instead of people clicking through interfaces, AI agents carry out complex workflows based on natural language instructions.

Building infrastructure for this transition requires solving problems traditional systems were never designed to handle. You need session management for stateful workflows, tool orchestration without custom code, observability for non-deterministic behavior, and security controls for autonomous systems.

TrueFoundry's AI Gateway addresses these challenges through a unified control plane combining LLM routing, MCP server management, and agent governance. Engineering teams can move from browser-driven workflows to prompt-driven automation without rebuilding their entire infrastructure stack.

FAQs

1. What is the difference between browser automation and agentic browsing?

Tools like Puppeteer require explicit instructions for every action. Agentic browsing uses AI to interpret natural language goals and determine actions autonomously based on visual understanding.

2. Why do AI agents need a gateway?

Direct connections create credential sprawl, duplicated retry logic, and no visibility into agent behavior. A gateway centralizes authentication, enforces policies, and traces every interaction.

3. What is MCP?

The Model Context Protocol defines how AI agents discover and invoke tools through a consistent JSON-RPC interface. Agents learn available tools at runtime without hardcoded integrations.

4. How does TrueFoundry's MCP Gateway differ from custom integrations?

TrueFoundry provides pre-built connectors, a unified registry, centralized RBAC, OpenTelemetry observability, and an interactive playground. Building equivalent infrastructure in-house can take months.

5. What security controls are needed for production agentic AI?

Production deployments require tool-level RBAC, input guardrails for prompt injection and PII, output validation, enterprise IdP integration, and comprehensive audit logging.

