TOKENMAXXING TRILOGY · PART 1 OF 3: Tokenmaxxing is the New Lines-of-Code Metric

Built for Speed: ~10ms Latency, Even Under Load

Blazingly fast way to build, track and deploy your models!

- Handles 350+ RPS on just 1 vCPU — no tuning needed

- Production-ready with full enterprise support

What is Tokenmaxxing?

Tokenmaxxing /ˈtoʊkənˌmæksɪŋ/ — the practice of optimising AI workflows for token consumption rather than business outcomes.

It's what happens when teams treat token counts as a productivity metric: agents recursively call themselves, prompts balloon to ship "context" that's never read, and routing logic favours expensive models because nobody loses their job for picking Opus over Haiku. Smart engineers do what smart engineers always do — they optimise the visible number.

In 2026, every internal AI dashboard, every vendor ROI deck, every quarterly review surfaces the same headline: tokens consumed. This post walks through the four enterprise failure modes that hide inside that single number — premium-model overuse, context stuffing, agent loops, and tokenizer drift — and the specific gateway controls that prevent each one from compounding into a six-figure invoice line.

TL;DR Tokens are an input cost, not an output value. Build the controls that let you measure and govern both.

How AI Usage Metrics Get Gamed

In 1976 British economist Charles Goodhart observed: 'When a measure becomes a target, it ceases to be a good measure.' Software engineering rediscovered this in the 1980s with lines-of-code productivity metrics, which produced longer programs, not better ones. The industry moved on. Yet in 2026, every internal AI dashboard, every vendor ROI deck, every quarterly review surfaces the same metric in slightly newer clothing: tokens consumed.

Tokens are not bad. Token volume is not bad. What is bad is treating the token counter as the leaderboard. The moment 'most tokens this week' is socially visible, smart engineers do what smart engineers always do: optimize the visible number. They paste larger contexts. They route to premium models when a smaller one would do. They build agents that recursively call themselves. They game the metric. We have a name for this now: tokenmaxxing.

Tokenmaxxing is what happens when 'AI usage' becomes a proxy for 'AI value' — and the proxy gets gamed. The only durable fix is to never let the proxy become the target in the first place.

How LLM Token Pricing Actually Works

Before discussing failure modes, the underlying cost structure deserves a clear-eyed look. As of April 2026, frontier-tier API pricing from Anthropic looks like this:

Two structural facts jump out. First, output tokens cost five times as much as input tokens across the entire frontier lineup. A workflow that returns long-form generation is fundamentally more expensive than one that returns a JSON classification, even with identical input. Second, the Opus-to-Haiku ratio is 5x on input and 5x on output. Routing a classify-intent task to Opus instead of Haiku is not a marginal optimization — it is paying 5x the rate for capability you do not use.

There is a third fact, easier to miss: the same prompt does not produce the same number of tokens across model versions. Anthropic's Opus 4.7 ships with a new tokenizer that can produce up to 35% more tokens than Opus 4.6 for the same input text — most pronounced on code, structured data, and non-English. Per-token rates are unchanged, but the effective cost per request can rise by up to 35% on a silent migration. Pricing stable, bills not.

If your only cost-tracking surface is the provider invoice that arrives at month-end, a tokenizer change can quietly add double-digit percent to your bill before you have any opportunity to react. This is exactly what governance at the gateway exists to prevent.

The Four Failure Modes of Tokenmaxxing

Across enterprise AI deployments we see the same four token-burn patterns recur. Each is a workflow design choice that compounds at scale, and each looks economical in isolation — which is exactly why they survive.

1. Premium-Model Overuse

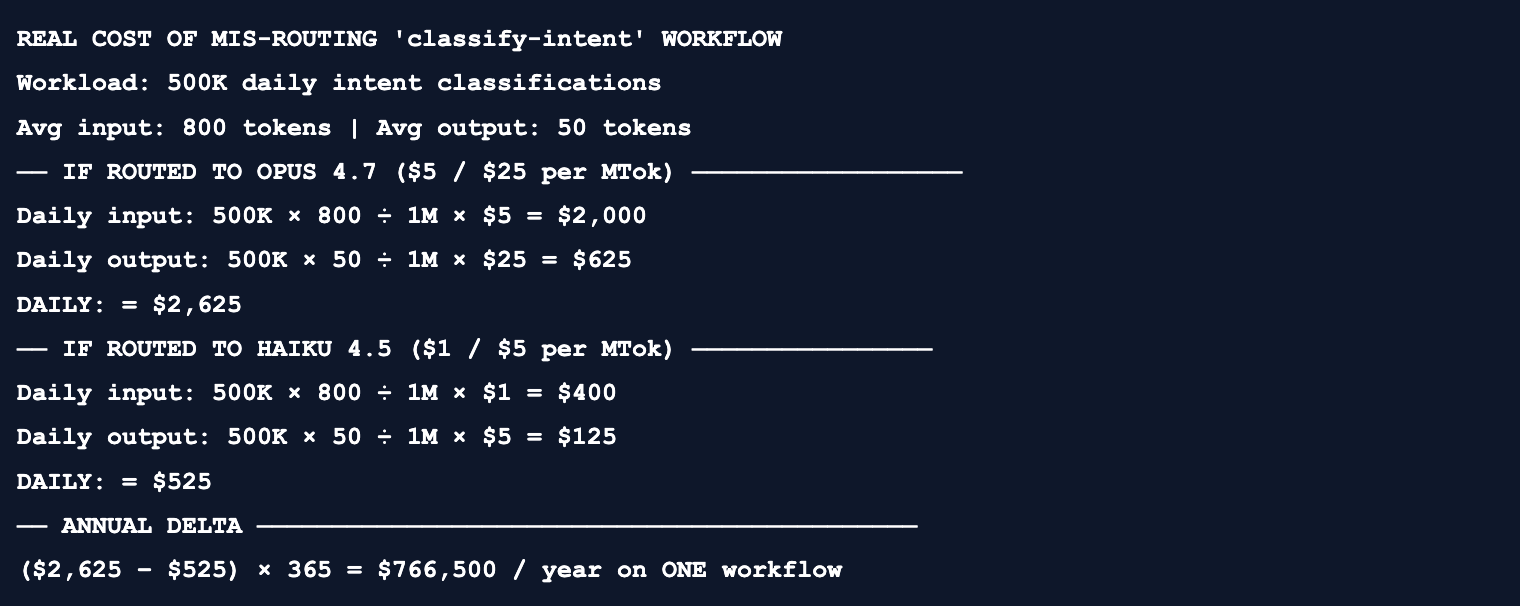

The single highest-leverage cost lever in any AI system is also the most invisible: which model handles which task. Most organizations route by default to the most capable model their procurement allows, because nobody loses their job for picking Opus over Haiku, but plenty of people lose theirs for shipping a regression. The math:

$766,500 per year of pure routing waste on a single workflow. The classification accuracy difference between Haiku and Opus on a domain-specific intent task is typically under 2 percentage points after a small fine-tune or few-shot prompt. The capability premium is real for hard tasks; for routine ones it is decorative.

2. Context Stuffing

The second failure mode is using the context window as a search index. An engineer building a code-review agent dumps the entire repository (500K tokens) into the prompt 'just to be safe.' A support-bot sends 50K tokens of historical tickets on every turn 'for context.' This works — the model returns a plausible answer — and the bill scales with the dump size, not with what was actually relevant.

The architectural alternative is active tool use via the Model Context Protocol (MCP). Instead of prepending all possible context, the model calls retrieval tools that return only the relevant snippets. TrueFoundry reports this delivers up to 99% inference token savings versus context stuffing, with tool-call overhead measured at roughly 10 ms.

3. Agent Loops (the Silent Budget-Killer)

The most expensive new failure mode in agentic systems is also the most invisible: the loop. An agent's exit condition is never satisfied, or its tool keeps returning errors, and it retries indefinitely. A single looping agent can consume the daily budget of an entire team in under an hour:

4. Tokenizer Drift

This is the failure mode no engineer ever caused. Anthropic's Opus 4.7 ships with a new tokenizer that maps the same input to between 1.0x and 1.35x more tokens than 4.6, with the upper end on code and structured data. Same prompt. Same task. Same headline rate card. Up to 35% higher invoice.

Without per-request token-count telemetry split by model version, this delta is invisible until it appears as a line item on the next invoice. Without an enforcement layer that can rate-limit or fall back when token-per-request anomalies cross a threshold, there is no automatic response. The fix is not 'more careful migrations.' The fix is making the gateway the source of truth for what every request actually consumed, in real time.

Failure Modes → The Control That Fixes Them

Each failure mode above maps to a specific gateway primitive. The point of the table below is not to claim the gateway is magic; it is to make the controls concrete enough that you can name the missing one when you see the symptom.

The Governed Request Lifecycle

If the controls above are to actually fire, they must run on the request path, not in a downstream analytics warehouse. The end-to-end shape of a governed request through the TrueFoundry AI Gateway:

Three properties of this lifecycle matter for the failure modes above. The gateway is in the request path, so its policies actually fire — a circuit breaker that can only see logs an hour later cannot stop a runaway loop. The overhead is sub-5-millisecond, which means the gateway can handle production traffic without the 'I'd rather not add a hop' objection. And every checkpoint emits OpenTelemetry, so the same trace that proves a request was governed also feeds the analytics that make governance tunable.

→ TrueFoundry AI Gateway Overview

Primitive 1 — The Identity Envelope

Every request that reaches a model must carry enough metadata to attribute, govern, and audit. Without this, every other primitive is guessing. TrueFoundry enforces this via X-TFY-* headers; an unmetadata'd request is a configuration bug, not a request.

# Every gateway request carries the identity envelope

POST /api/llm/api/inference/openai/chat/completions HTTP/1.1

Host: gateway.your-domain.tfy.app

Authorization: Bearer tfy-XXXXXXXX

Content-Type: application/json

X-TFY-Project: platform-search

X-TFY-Team: data-platform

X-TFY-User-ID: u_8f1c2d

X-TFY-Session-ID: sess_a3f9c2-b71d-4e

X-TFY-Workflow-Tag: classify-intent

X-TFY-Environment: production

X-TFY-Cost-Center: eng-platform-002

{

"model": "intent-fast", // VIRTUAL model, resolved by gateway

"messages": [...]

}Notice the model name: intent-fast. That is a virtual model defined in the gateway, not a physical endpoint. The gateway resolves it to a concrete provider call (haiku-4-5, sonnet-4-6, a self-hosted Llama, or whatever the routing policy says). Application code never names a provider. Re-routing from one provider to another is a YAML diff, not a code change.

Primitive 2 — Circuit Breakers (Rate Limits + Budgets)

Rate limits cap the rate of consumption. Budgets cap the total. Both are necessary; neither alone is sufficient. A single rate-limited agent can still burn $40K over a long weekend if its rate is $12/hr and nothing else watches the running total. A single budget without rate limits hits the ceiling once and then nothing fires until next month.

# rate-limit-config.yaml — enforce per-session, per-user, per-tag

name: production-rate-limits

type: gateway-rate-limit-config

rules:

- id: per-session-loop-guard

when:

metadata: {X-TFY-Environment: production}

limit:

tokens: 200000

window: 1h

scope: session # ← keyed on X-TFY-Session-ID

on_breach: hard_block

- id: per-user-burst

when:

subjects: {type: user}

limit:

tokens: 5000000

window: 1d

scope: user

on_breach: queue_then_429

- id: classify-intent-soft-cap

when:

metadata: {X-TFY-Workflow-Tag: classify-intent}

limit:

requests: 100000

window: 1h

on_breach: fallback_to_haiku # ← graceful degradation

The on_breach behavior is the unsung hero. A 429 is fine for batch workloads; for a customer-facing application, fallback_to_haiku is what keeps the lights on while still containing the spend. The same primitive expresses both.

# budget-config.yaml — hard ceilings per project per month

name: 2026-q2-project-budgets

type: gateway-budget-config

budgets:

- id: platform-search-monthly

scope:

metadata: {X-TFY-Project: platform-search}

ceiling_usd: 4000

window: monthly

alerts:

- {at_pct: 80, notify: ["slack:#ai-budget-alerts", "pagerduty:platform"]}

- {at_pct: 100, notify: ["slack:#ai-budget-alerts", "pagerduty:platform"]}

on_exceed: fallback_to_cheaper # uses fallback model from routing config

- id: intern-sandbox-cap

scope:

metadata: {X-TFY-Project: intern-sandbox}

ceiling_usd: 500

window: monthly

on_exceed: hard_block # interns get a hard stop→ Rate Limiting — windows, scopes, breach behaviors

→ Budget Limiting — ceilings, alerts, and graceful degradation

Primitive 3 — Virtual Model Routing

The single highest-leverage cost optimization in any AI system is choosing the right model for the right task. The right place for that decision is configuration, not application code. Virtual models give you a logical name (intent-fast, code-review-strong, support-cheap) that resolves at gateway time to a concrete provider call based on weight, priority, latency, or fallback rules.

# routing-config.yaml — a virtual model with multi-provider fallback

name: intent-fast

type: gateway-load-balancing-config

rule_type: weight-based

rules:

- id: primary-haiku

weight: 90

target:

provider: anthropic

model: claude-haiku-4-5

timeout_ms: 8000

- id: secondary-bedrock-haiku

weight: 10 # 10% A/B for resiliency

target:

provider: bedrock

model: anthropic.claude-haiku-4-5

timeout_ms: 8000

fallbacks: # tried in order on primary failure

- {provider: openai, model: gpt-4o-mini}

- {provider: vertex, model: gemini-2.0-flash}

circuit_breaker:

failure_threshold: 5 # 5 errors in window

window_seconds: 60

cooldown_seconds: 30Three things this YAML buys you. Cost optimization: 90% of intent-fast traffic flows to Haiku at $1/$5 per MTok instead of whatever default a developer hardcoded. Resiliency: when Anthropic has an outage, traffic flips to OpenAI or Vertex automatically; users see a 200ms degradation, not an outage. Provider portability: when a new model comes out, you change one line and it ships to production. Application code is unchanged.

→ Routing / Load Balancing Overview

→ Provider list (1000+ models supported)

Enterprise Criteria — What 'Production Gateway' Actually Means

A toy gateway you write in an afternoon can rate-limit and budget-cap. A production gateway has to do those things while satisfying a longer list of constraints that matter when AI is on the critical path of revenue.

The Weak Pitch and the Strong Pitch

Most internal proposals to adopt a gateway pitch the wrong thing. The weak pitch sells a dashboard. The strong pitch sells the architecture that makes a useful dashboard possible at all.

Where This Goes Next

Part 1 has done the diagnostic work: tokenmaxxing is the new lines-of-code metric, it has four characteristic failure modes, and each maps to a specific gateway primitive. We have introduced the three load-bearing pieces: the identity envelope, circuit breakers, and virtual model routing.

Part 2 takes those primitives and zooms out to architecture: the four envelopes (identity, policy, safety, observability) that wrap every governed request, and how they compose into a system that is simultaneously a security boundary, a cost-control surface, and an operational telemetry source. Part 3 then turns the architecture into the operating cadence — dashboards, scorecards, alerts, and the rituals that keep governed AI usage from drifting back into tokenmaxxing the moment your attention does.

The right metric is not tokens consumed. It is outcomes per dollar, with provable bounds on how that dollar was spent. Everything that follows builds toward making that metric measurable, defensible, and runnable.

TrueFoundry AI Gateway delivers ~3–4 ms latency, handles 350+ RPS on 1 vCPU, scales horizontally with ease, and is production-ready, while LiteLLM suffers from high latency, struggles beyond moderate RPS, lacks built-in scaling, and is best for light or prototype workloads.

The fastest way to build, govern and scale your AI

.png)

.webp)

.webp)

.webp)

.webp)

.webp)