AI Gateways: From Outage Panic to Enterprise Backbone

Enterprises today are rapidly building and experimenting with multiple models and LLMs as part of their agentic AI journey. Different teams across functions are adopting AI in parallel — but without a common control layer, this often leads to fragmentation, lack of governance, and rising costs.

The cracks are already visible. On August 20, 2025, OpenAI went down. For hours, copilots froze mid-task, chatbots went silent, and enterprises lost productivity and revenue. A single outage disrupted thousands of businesses at once — showing that while AI is powerful, it’s also fragile. And this wasn’t the first outage — nor will it be the last.

At the same time, cloud bills for large models are spiraling. Without routing, every query, no matter how simple, hits an expensive LLM. For enterprises, the real question is no longer “Can we use AI?” but “Can we trust AI to run our business?”

Gartner’s Wake-Up Call

In August 2025, Gartner published “Optimize AI Cost and Reliability Using AI Gateways and Model Routers.” Its conclusion was clear: as AI becomes mission-critical, enterprises need a control layer to make it both reliable and cost-efficient.

Gartner’s prediction: by 2028, 70% of enterprises will use AI Gateways, up from 10% today.

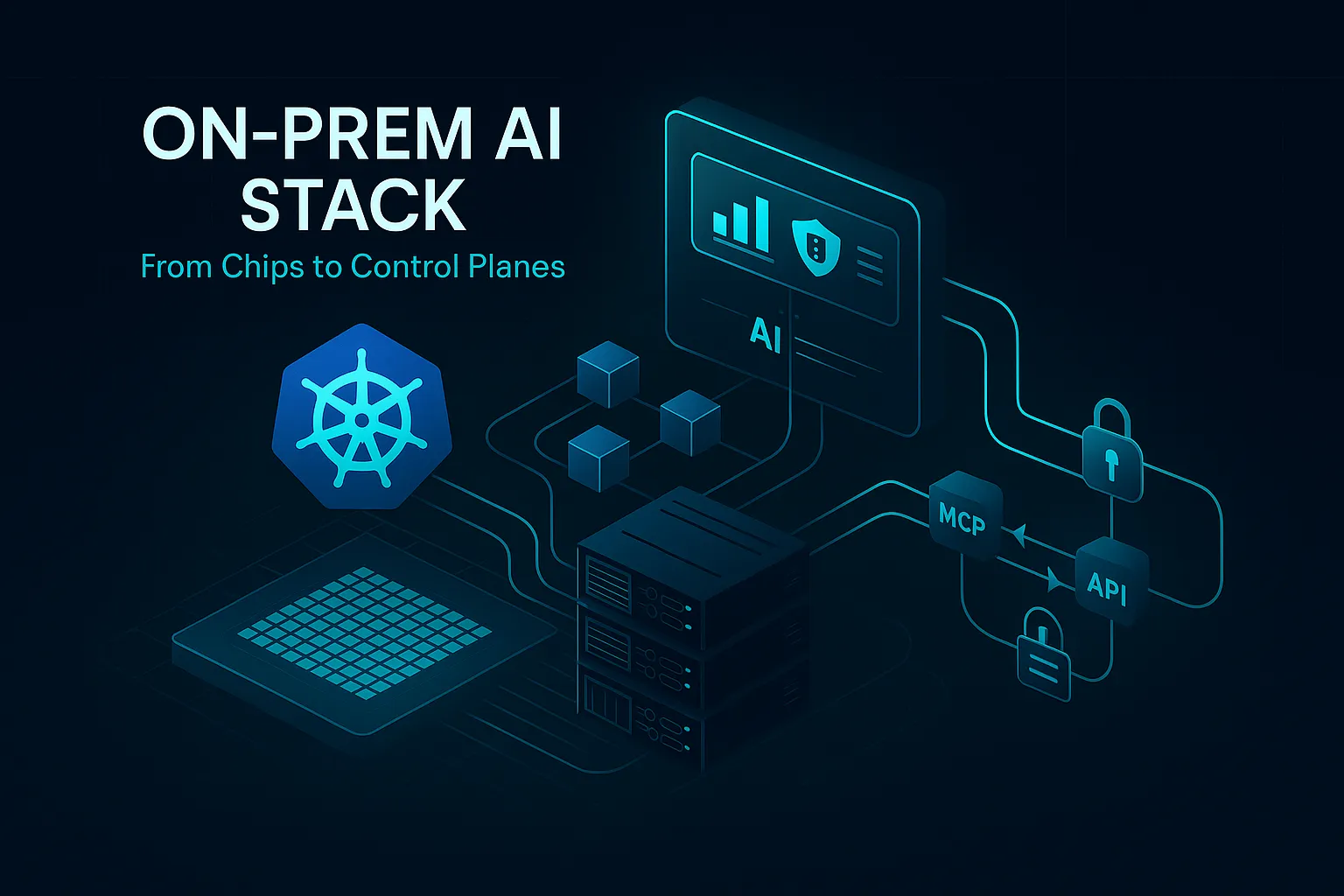

That control layer comes in two forms:

- AI Gateways → act like a control tower, enforcing budgets, rate limits, and uptime across multiple providers.

- Model Routers → work like a smart switchboard, directing each query to the most cost-effective model without sacrificing performance.
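The switchboard idea can be illustrated with a minimal sketch. Everything here is an assumption made for illustration: the model names, the prices, the keyword list, and the word-count threshold are hypothetical, and production routers typically rely on richer signals than a keyword heuristic.

```python
import re
from dataclasses import dataclass


@dataclass(frozen=True)
class Model:
    name: str                  # hypothetical model identifier
    cost_per_1k_tokens: float  # illustrative price, not a real quote


# Hypothetical tiers; a real deployment would load these from configuration.
CHEAP = Model("small-model", 0.0005)
PREMIUM = Model("large-model", 0.0150)

# Assumed signal words that suggest multi-step reasoning.
REASONING_HINTS = {"prove", "analyze", "compare", "plan", "derive"}


def route(prompt: str, max_simple_words: int = 40) -> Model:
    """Send short, simple prompts to the cheap tier and the rest to the premium tier."""
    words = re.findall(r"[a-z]+", prompt.lower())
    looks_complex = len(words) > max_simple_words or bool(REASONING_HINTS & set(words))
    return PREMIUM if looks_complex else CHEAP


if __name__ == "__main__":
    for prompt in (
        "What is the capital of France?",
        "Analyze this contract and plan a negotiation strategy.",
    ):
        print(f"{route(prompt).name}: {prompt}")
```

The point of the sketch is the shape of the decision, not the heuristic itself: the router sits in front of the models and decides per query, so the calling application never hard-codes a provider.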

And adoption is accelerating fast. According to Gartner:

- Teams using Model Routers will cut costs by up to 60%. Some other studies report savings as high as 85%.

- Reliability will matter as much as accuracy when choosing providers.

And here’s what we’re proud of: TrueFoundry was recognized in the Gartner report as an AI Gateway vendor — a milestone that validates our vision of being the control plane for enterprise AI.

For a fast-scaling startup, appearing alongside global infrastructure leaders is more than recognition. It signals that enterprises can rely on TrueFoundry for their AI journey.

Why It Matters

AI Gateways act as the control tower, enforcing budgets, rate limits, and uptime through caching, load balancing, and multi-provider failover. Model Routers serve as the smart switchboard, sending simple queries to cheaper models and complex reasoning to advanced LLMs. That reduces latency and cuts costs by up to 60% according to Gartner, and by as much as 85% in some studies.
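Two of the gateway mechanisms named above, caching and multi-provider failover, can be sketched in a few lines. This is a simplified illustration under stated assumptions, not TrueFoundry's implementation: `Gateway`, `ProviderError`, and the stub providers are hypothetical names, providers are modeled as plain callables, the cache is exact-match only, and load balancing is left out.

```python
from typing import Callable, Dict, List, Tuple


class ProviderError(Exception):
    """Raised by a provider callable when the upstream service fails."""


# A provider is modeled as a function from prompt to completion text.
Provider = Callable[[str], str]


class Gateway:
    def __init__(self, providers: List[Tuple[str, Provider]]) -> None:
        self.providers = providers        # tried in priority order
        self.cache: Dict[str, str] = {}   # exact-match response cache

    def complete(self, prompt: str) -> str:
        # Serve repeated prompts from the cache without calling any provider.
        if prompt in self.cache:
            return self.cache[prompt]
        errors = []
        for name, call in self.providers:
            try:
                answer = call(prompt)
            except ProviderError as exc:
                # Record the failure and fall through to the next provider.
                errors.append(f"{name}: {exc}")
                continue
            self.cache[prompt] = answer
            return answer
        raise ProviderError("all providers failed: " + "; ".join(errors))


# Stub providers standing in for real API clients.
def failing_primary(prompt: str) -> str:
    raise ProviderError("503 from upstream")


def healthy_backup(prompt: str) -> str:
    return f"backup answer to: {prompt}"


if __name__ == "__main__":
    gateway = Gateway([("primary", failing_primary), ("backup", healthy_backup)])
    print(gateway.complete("Summarize this ticket"))
```

In this sketch the caller sees a normal response even though the primary provider is down, and a repeated prompt is answered from the cache without touching any provider.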

Together, they solve two pressing challenges:

- Reliability: Today’s AI services promise only 99.9% uptime vs. 99.99%+ for databases. Over a year, 99.9% allows about 8.8 hours of downtime, while 99.99% allows under an hour. For mission-critical systems, that gap is unacceptable.

- Cost: Without routing, AI bills grow uncontrollably. Gateways and Routers restore governance and visibility while keeping performance high.
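The uptime gap in the reliability point can be checked with a quick calculation. The helper name below is hypothetical, and the arithmetic assumes a 365-day year.

```python
HOURS_PER_YEAR = 365 * 24  # 8760 hours, ignoring leap years


def downtime_hours_per_year(uptime_percent: float) -> float:
    """Maximum downtime per year that still meets the given uptime percentage."""
    return HOURS_PER_YEAR * (1 - uptime_percent / 100)


if __name__ == "__main__":
    for sla in (99.9, 99.99):
        hours = downtime_hours_per_year(sla)
        print(f"{sla}% uptime -> {hours:.2f} hours/year ({hours * 60:.0f} minutes)")
```

At 99.9% the allowance works out to 8.76 hours per year. At 99.99% it is about 0.88 hours, or roughly 53 minutes.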

The TrueFoundry Edge

Unlike API vendors extending into AI, TrueFoundry was built from the ground up as the central control plane for enterprise AI — with reliability, routing, and governance at its core.

Recognition in Gartner’s report validates that vision and places us in the same conversation as the world’s largest infrastructure providers — at exactly the moment enterprises are moving from experimentation to scale.

With TrueFoundry, businesses can stay online during provider outages, optimize spend through intelligent routing and caching, and take control of AI with observability and governance built in.

The Road Ahead

The OpenAI outage showed how fragile AI can be. Gartner’s research shows how urgent it is to fix. And TrueFoundry’s recognition shows we’re helping lead the way.

The future of AI isn’t just about what models can do — it’s about building AI you can trust to run your business.

Read Gartner’s full report: Optimize AI Cost and Reliability Using AI Gateways and Model Routers

