Accelerator Series: Building a Resilient Web Scraper with LangGraph and TrueFoundry

The sales team is panicking – there is a major healthcare conference next week. The event website lists 200 speakers -- physicians, executives, and researchers -- spread across a dozen paginated sub-pages. To build a lead list, someone needs to open the site, click a name, copy the details into a spreadsheet, open a new tab, search for that person on LinkedIn, copy the profile URL, and paste it back.

They have to do this 200 times.

For engineers, this request usually results in a quick Python script using Selenium or BeautifulSoup. You inspect the page source, find the div with the class speaker-name, and extract the text. It works perfectly for about a week. Then the website updates its frontend framework, the CSS classes change, and the script crashes.

We built the Profile Crawler accelerator to stop this cycle. It is an autonomous agent that navigates websites and extracts data based on what the page says, not how the HTML is structured.

Here is how we architected the solution using LangGraph for orchestration, Playwright for interaction, and TrueFoundry to manage the infrastructure.

The Shift: From DOM Selectors to Semantic Extraction

The main reason scraping scripts fail is their reliance on the Document Object Model (DOM). If you tell a script to look for div.content-wrapper > h2.title, it will break the moment a developer changes a class name.

We moved to an agentic approach. We don't tell the bot where the data sits in the markup. Instead, we render the page, convert the HTML to Markdown, and feed that to an LLM. The model reads the text much as a human would: it understands that a section labeled "Keynote Speakers" contains the data we want, regardless of the underlying tags.

- Old Way (Fragile): Hard-coded CSS selectors that break on UI updates.

- New Way (Resilient): Semantic understanding that adapts to layout changes.
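
To make the contrast concrete, here is a minimal sketch using only the Python standard library. The two HTML snippets, the speaker name, and the helper names (`_Collector`, `scrape`) are made up for illustration; only the `speaker-name` class comes from the scenario above. It shows a class-based lookup going empty after a frontend change, while the visible text an LLM would read stays the same.

```python
from html.parser import HTMLParser


class _Collector(HTMLParser):
    """Collects text inside elements with a given class, plus all visible text.

    Illustrative only: assumes well-nested markup without void tags like <br>.
    """

    def __init__(self, wanted_class: str):
        super().__init__()
        self.wanted_class = wanted_class
        self.depth = 0                      # > 0 while inside a matching element
        self.matches: list[str] = []
        self.all_text: list[str] = []

    def handle_starttag(self, tag, attrs):
        classes = (dict(attrs).get("class") or "").split()
        if self.depth or self.wanted_class in classes:
            self.depth += 1

    def handle_endtag(self, tag):
        if self.depth:
            self.depth -= 1

    def handle_data(self, data):
        text = data.strip()
        if not text:
            return
        self.all_text.append(text)
        if self.depth:
            self.matches.append(text)


def scrape(html: str, css_class: str) -> tuple[list[str], str]:
    """Return (text found via the class selector, all visible text)."""
    parser = _Collector(css_class)
    parser.feed(html)
    return parser.matches, "\n".join(parser.all_text)


# Hypothetical markup before and after a frontend rewrite.
BEFORE = '<section><h2>Keynote Speakers</h2><div class="speaker-name">Dr. Jane Doe</div></section>'
AFTER = '<section><h2>Keynote Speakers</h2><p class="sc-a1b2c3">Dr. Jane Doe</p></section>'

by_selector_before, text_before = scrape(BEFORE, "speaker-name")
by_selector_after, text_after = scrape(AFTER, "speaker-name")

print(by_selector_before)  # selector works on the old markup
print(by_selector_after)   # selector finds nothing after the class rename
print(text_after)          # the text an LLM would read is unchanged
```

The selector result depends on the class name; the text handed to the model does not, which is the property the agentic approach relies on.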

Architecture Deep Dive

We needed a system that could handle decision-making, not just a linear script. The application needs to decide: Is this input a URL or just a company name? Did we hit a captcha? Is this page a list of people or a single bio?

We chose LangGraph to model this workflow as a state machine, because each of these decisions is a branch or a retry that depends on state accumulated earlier in the crawl.

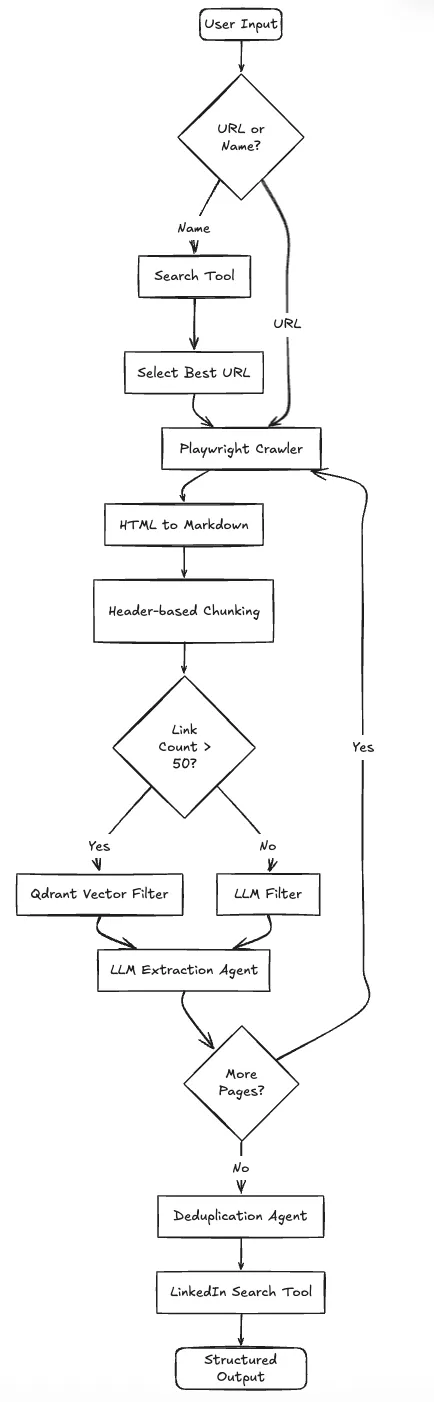
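
The decisions listed above map naturally onto conditional edges. The following is a rough sketch, not the accelerator's actual graph: the state fields, node names, and the three-attempt captcha limit are assumptions made for illustration. The routing functions are plain Python; `build_graph` wires them up with LangGraph's `StateGraph` and needs the `langgraph` package installed.

```python
from typing import TypedDict
from urllib.parse import urlparse


class CrawlState(TypedDict, total=False):
    # Hypothetical state shape; the real accelerator may track different fields.
    user_input: str      # raw text from the user
    url: str             # resolved target URL
    page_markdown: str   # rendered page converted to Markdown
    hit_captcha: bool    # set by the navigation node
    attempts: int        # navigation attempts so far


def route_input(state: CrawlState) -> str:
    """Decide whether the input is already a URL or needs a domain lookup."""
    parsed = urlparse(state["user_input"].strip())
    is_url = parsed.scheme in ("http", "https") and bool(parsed.netloc)
    return "navigate" if is_url else "resolve_domain"


def route_after_navigation(state: CrawlState) -> str:
    """Retry on a captcha (up to 3 attempts), otherwise move on to extraction."""
    if state.get("hit_captcha"):
        return "navigate" if state.get("attempts", 0) < 3 else "give_up"
    return "extract"


def build_graph(resolve_domain, navigate, extract):
    """Wire caller-supplied node functions into a LangGraph state machine."""
    from langgraph.graph import StateGraph, START, END  # pip install langgraph

    graph = StateGraph(CrawlState)
    graph.add_node("resolve_domain", resolve_domain)
    graph.add_node("navigate", navigate)
    graph.add_node("extract", extract)

    # Branch at the entry point: URL goes straight to navigation.
    graph.add_conditional_edges(
        START, route_input,
        {"navigate": "navigate", "resolve_domain": "resolve_domain"},
    )
    graph.add_edge("resolve_domain", "navigate")
    # Loop back to navigation on a captcha, or stop after too many attempts.
    graph.add_conditional_edges(
        "navigate", route_after_navigation,
        {"navigate": "navigate", "extract": "extract", "give_up": END},
    )
    graph.add_edge("extract", END)
    return graph.compile()
```

Keeping the routers as pure functions of the state makes them easy to unit test without a browser or an LLM; a bare domain such as `example.com` (no scheme) is treated as a name here and sent to the lookup step.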

The Logic Flow

The system operates in a loop rather than a straight line:

- Input Router: The system checks if the user provided a direct URL or just a company name. If it's a name, it uses a search tool to find the correct domain first.

- Stealth Navigation: We use a modified Playwright instance to load the page. It handles cookie consent banners and lazy-loading images automatically.

- Vector Filtering (The Optimization): A single conference page might have 200 navigation links. Feeding all of them to an LLM context window is slow and expensive. We use FastEmbed to embed the link text and query a local Qdrant instance. This filters the list down to the top 10 links relevant to "Team" or "Speakers."

- Extraction: The LLM parses the filtered content and extracts structured entities (Name, Role, Company).

- Enrichment: Finally, we loop through the extracted names and use a search tool (Tavily) to find their specific LinkedIn profiles.
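
The vector filtering step is the least obvious of the five, so here is a simplified sketch of the idea. It deliberately swaps out the real components: instead of FastEmbed vectors stored in Qdrant, it uses a toy bag-of-words embedding and an in-memory sort, so it runs with the standard library alone. The link data and function names are hypothetical.

```python
import math
import re
from collections import Counter


def toy_embed(text: str) -> Counter:
    """Stand-in for a real embedding model: lowercase bag-of-words counts."""
    return Counter(re.findall(r"[a-z]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def top_k_links(links: list[dict], query: str, k: int = 10, embed=toy_embed) -> list[dict]:
    """Rank links by similarity of their anchor text to the query; keep the top k."""
    q = embed(query)
    ranked = sorted(links, key=lambda link: cosine(embed(link["text"]), q), reverse=True)
    return ranked[:k]


# Hypothetical navigation links scraped from a conference page.
LINKS = [
    {"text": "Privacy Policy", "href": "/privacy"},
    {"text": "Our Team", "href": "/team"},
    {"text": "Careers", "href": "/careers"},
    {"text": "Keynote Speakers", "href": "/speakers"},
    {"text": "Contact", "href": "/contact"},
]

shortlist = top_k_links(LINKS, "team speakers", k=2)
print([link["href"] for link in shortlist])
```

A real embedding model would also match anchor text like "Leadership" or "Faculty" that shares no words with the query, which is why the production version uses learned embeddings rather than word overlap.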

Here is the system architecture:

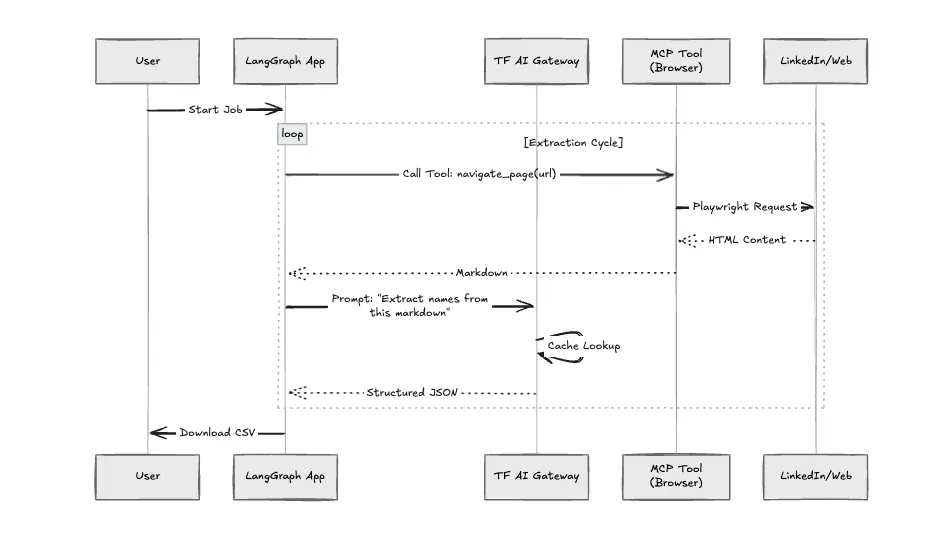

Infrastructure and TrueFoundry Integration

Running headless browsers and LLM agents in production creates operational headaches: memory leaks from Chromium, rate limits on LLM APIs, and the need for process isolation.

We deployed this on TrueFoundry to handle these specific constraints.

1. The AI Gateway (Observability & Caching)

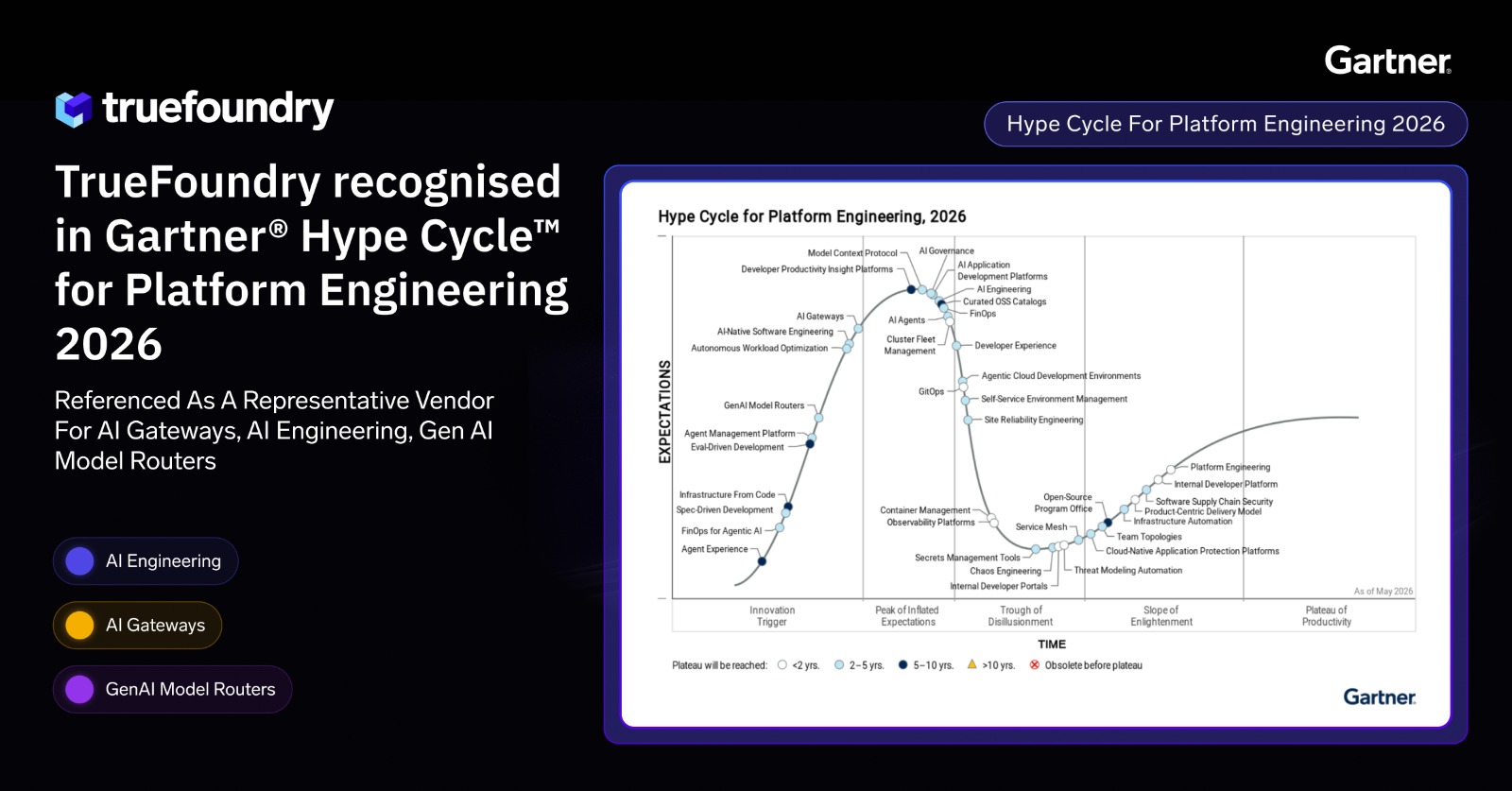

This application makes heavy use of LLMs for navigation decisions. Without governance, costs spiral quickly. We route all model calls through the TrueFoundry AI Gateway.

- Caching: If the agent scrapes the same site twice, the Gateway serves cached LLM responses for the extraction steps. This significantly reduces latency and cost.

- Rate Limiting: We set strict limits per user to prevent API quota exhaustion during large batch jobs.

- Failover: If OpenAI experiences downtime, the Gateway automatically reroutes requests to Anthropic or Azure OpenAI without failing the crawl.
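
To illustrate what caching and failover mean at this layer, here is a toy model of the behavior. It is not TrueFoundry's implementation or API: `MiniGateway`, the provider functions, and the cache key scheme are all invented for this sketch, and rate limiting is left out for brevity.

```python
import hashlib
from typing import Callable

Provider = Callable[[str], str]  # takes a prompt, returns a completion


class MiniGateway:
    """Toy stand-in for an LLM gateway: response cache plus ordered failover."""

    def __init__(self, providers: list[tuple[str, Provider]]):
        self.providers = providers           # tried in order
        self.cache: dict[str, str] = {}
        self.calls: list[str] = []           # provider that served each uncached request

    def complete(self, prompt: str) -> str:
        key = hashlib.sha256(prompt.encode("utf-8")).hexdigest()
        if key in self.cache:                # identical prompt seen before
            return self.cache[key]
        errors = []
        for name, provider in self.providers:
            try:
                answer = provider(prompt)
            except Exception as exc:         # provider down or rate limited
                errors.append(f"{name}: {exc}")
                continue
            self.cache[key] = answer
            self.calls.append(name)
            return answer
        raise RuntimeError("all providers failed: " + "; ".join(errors))


def flaky_primary(prompt: str) -> str:
    """Simulates a primary provider that is currently unavailable."""
    raise ConnectionError("simulated outage")


def healthy_fallback(prompt: str) -> str:
    """Simulates a working secondary provider."""
    return f"extracted: {prompt.upper()}"


gateway = MiniGateway([("primary", flaky_primary), ("fallback", healthy_fallback)])
first = gateway.complete("jane doe, cmo")
second = gateway.complete("jane doe, cmo")   # served from cache, no provider call
print(first, gateway.calls)
```

The crawl code only ever calls `complete`; which provider answered, and whether the answer came from cache, stays invisible to the agent.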

2. Model Context Protocol (MCP)

We structured the application using the Model Context Protocol (MCP). The "Crawler" is not just a Python function; it is an MCP Server. This allows us to sandbox the browser environment. If the browser crashes (which happens often with heavy JavaScript sites), it doesn't take down the main application logic.
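
The benefit described here is process isolation. The sketch below shows that property with a plain subprocess rather than an actual MCP server, on the assumption that the crawler server runs in its own process; the worker script, the URLs, and `crawl_isolated` are hypothetical.

```python
import subprocess
import sys

# Child script standing in for the browser worker; it "crashes" on a heavy page.
WORKER = """
import sys
url = sys.argv[1]
if "heavy" in url:
    raise SystemExit(3)   # simulate a crash, e.g. the browser running out of memory
print(f"rendered {url}")
"""


def crawl_isolated(url: str, timeout: float = 30.0) -> dict:
    """Run one crawl in a separate process so a crash cannot take down the caller."""
    try:
        proc = subprocess.run(
            [sys.executable, "-c", WORKER, url],
            capture_output=True, text=True, timeout=timeout,
        )
    except subprocess.TimeoutExpired:
        return {"ok": False, "error": "timeout"}
    if proc.returncode != 0:
        return {"ok": False, "error": f"worker exited with code {proc.returncode}"}
    return {"ok": True, "output": proc.stdout.strip()}


ok = crawl_isolated("https://example.com/team")
crashed = crawl_isolated("https://example.com/heavy-js")
print(ok)
print(crashed)   # the parent keeps running and can retry or skip the page
```

The caller sees a failed crawl as an ordinary return value, so the orchestration graph can decide to retry or move on.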

Infrastructure Diagram

Comparison: Script vs. Agent

We benchmarked the standard Python script approach against this architecture.

Handling Edge Cases

Building the happy path is easy. Making it reliable required solving three specific engineering problems:

- Duplicate Data: Profiles often appear on multiple pages (e.g., both "Leadership" and "About Us"). We added a Deduplication Node at the end of the graph. It passes the full list to a smaller, cheaper LLM to merge records based on name similarity before enrichment.

- Anti-Bot Detection: Standard Playwright is easily detected by modern WAFs. We implemented undetected-playwright within the Docker container, which patches the browser fingerprint (Navigator object, WebGL vendor) to appear as a standard user device.

- Token Limits: Large pages with privacy policies and footers waste tokens. We use header-based chunking to split the markdown. The LLM only processes chunks relevant to "Team" or "Speakers," discarding the rest.
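
Header-based chunking is straightforward to sketch. The version below splits Markdown at heading lines and keeps only chunks whose heading mentions a keyword. The keyword match is an assumption made to keep the example self-contained; the relevance check in the accelerator may work differently. The sample page is invented.

```python
import re

HEADING = re.compile(r"^(#{1,6})\s+(.*)$")


def chunk_by_headers(markdown: str) -> list[dict]:
    """Split Markdown into chunks, each starting at a heading line."""
    chunks, current = [], {"heading": "", "lines": []}
    for line in markdown.splitlines():
        match = HEADING.match(line)
        if match:
            if current["heading"] or current["lines"]:
                chunks.append(current)       # close the previous chunk
            current = {"heading": match.group(2).strip(), "lines": []}
        else:
            current["lines"].append(line)
    if current["heading"] or current["lines"]:
        chunks.append(current)
    return [
        {"heading": c["heading"], "body": "\n".join(c["lines"]).strip()}
        for c in chunks
    ]


def relevant_chunks(markdown: str, keywords=("team", "speakers")) -> list[dict]:
    """Keep chunks whose heading contains any keyword (case-insensitive)."""
    return [
        c for c in chunk_by_headers(markdown)
        if any(k in c["heading"].lower() for k in keywords)
    ]


# Hypothetical page content after HTML-to-Markdown conversion.
PAGE = """# HealthConf
Welcome text.

## Keynote Speakers
- Dr. Jane Doe, Chief Medical Officer, Example Health

## Privacy Policy
Long legal text.
"""

kept = relevant_chunks(PAGE)
print(kept)
```

Only the "Keynote Speakers" chunk reaches the LLM; the welcome text and the privacy policy never consume tokens.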

Conclusion

This architecture solves the "Last Mile" of data acquisition by replacing brittle scripts with adaptive agents. By running it on TrueFoundry, we ensure the system is observable, cost-controlled, and scalable.

You can deploy this exact architecture -- including the Gateway configuration and Dockerized agents -- from the TrueFoundry application library today.

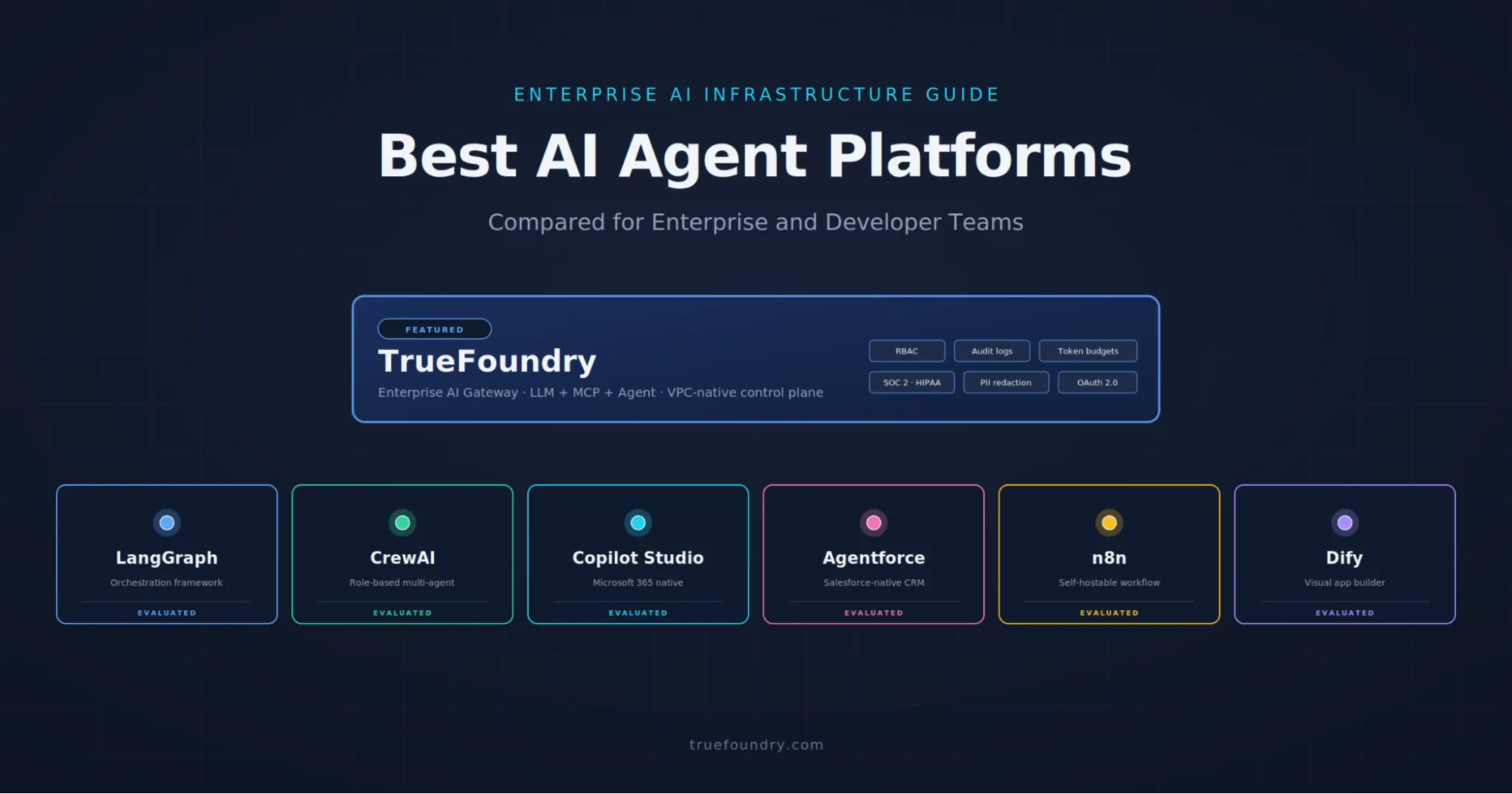
