Obot AI Alternatives: Top 6 Tools You Can Consider in 2026


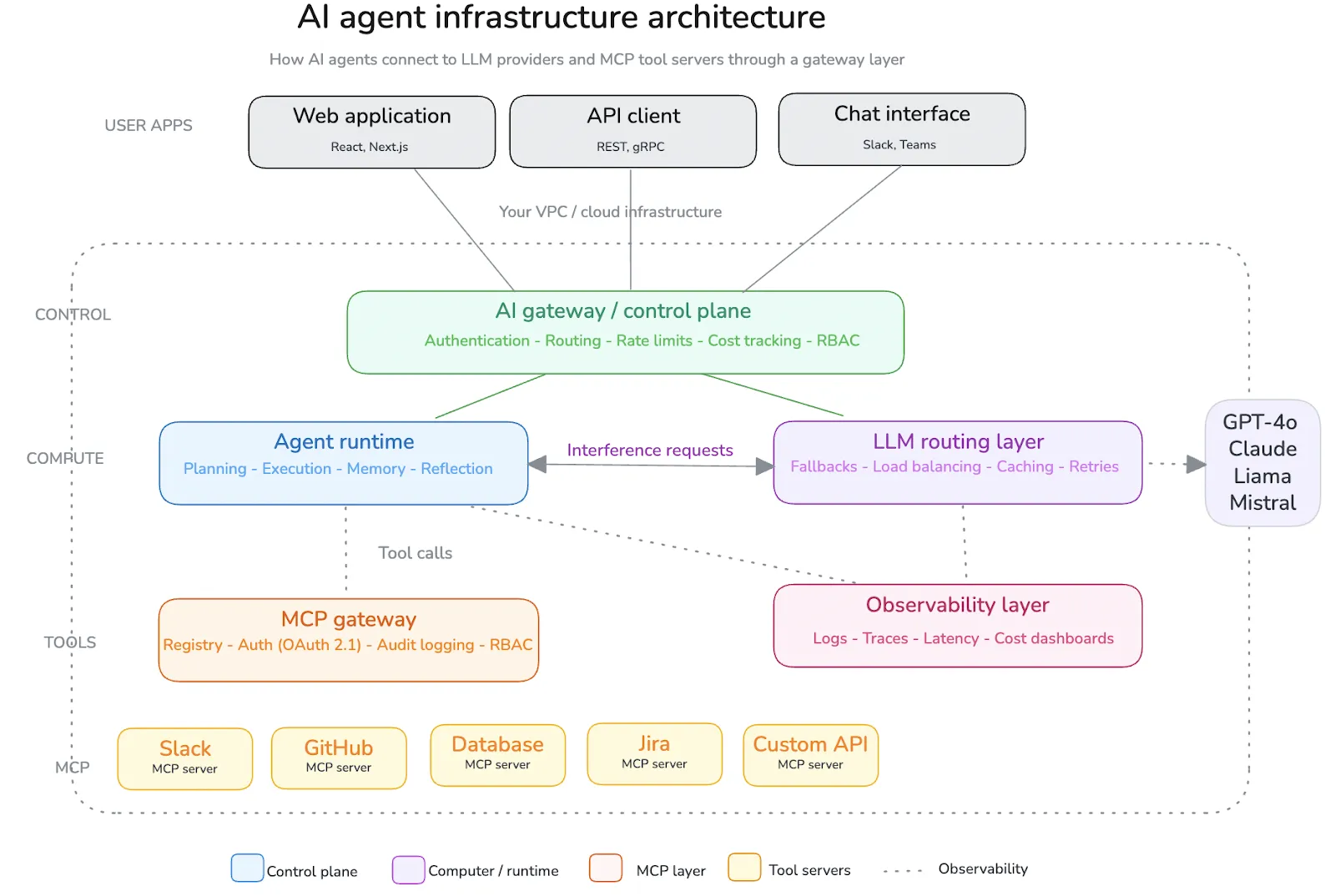

Building AI systems today involves more than choosing which model to use.

Building AI agents has several pieces: connecting to tools, managing access, tracking usage, and keeping things from breaking once in production. This is where teams turn to the Model Context Protocol (MCP) and, more recently, to platforms such as Obot AI.

Obot AI provides a structured, open-source framework that helps teams manage their MCP infrastructure, and it is effective for tool governance.

However, as teams move into production, their requirements often grow to include deeper observability, support for multiple models, or more control over model deployment and security.

That's usually when teams start exploring Obot AI alternatives. In this guide, we'll walk through the top options in 2026 so you can find the one that actually fits your technology stack.

What Is Obot AI?

Obot AI, previously known as Acorn Labs, provides an open-source platform for companies to manage their Model Context Protocol (MCP) based systems.

The core functionalities of Obot AI include:

- MCP hosting

- MCP registry

- MCP gateway

- MCP-compliant chat client

The MCP gateway acts as the centralized control point where IT teams can onboard, manage, and monitor MCP servers through either an administrative user interface (UI) or a GitOps-based workflow, with a full audit trail of every action taken on an MCP server.

Obot AI can be self-hosted on either Docker or Kubernetes, which lets users keep full control and sovereignty over their data and infrastructure.

Why Do Teams Look for Obot AI Alternatives?

While Obot AI is a strong foundation for MCP server management, it has limitations for teams that want to do more with their AI infrastructure.

- Narrow focus on MCP: Obot is designed strictly for MCP governance. An organization that wants model serving, tuning, prompt management, or full LLMOps must look to a separate provider.

- No built-in model serving or inference layer: Obot manages tool connections, but the models themselves need a separate stack, which the team still has to build, to handle where LLM instances are hosted, how inference is routed, and how GPU compute is managed.

- Kubernetes deployment: This is an ideal option for teams with cloud-native infrastructure, but it can be a significant hurdle for organizations that lack the required resources or platform engineering expertise.

- Limited visibility and cost management: While audit logging exists, there is no token-level cost attribution, latency dashboard, or production-grade monitoring of the kind found in dedicated AI gateways.
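As a rough illustration of what token-level cost attribution involves, the sketch below totals request costs per team from gateway-style logs. The prices, model names, and the `RequestLog` shape are hypothetical and are not taken from Obot AI or any specific gateway.

```python
from collections import defaultdict
from dataclasses import dataclass

# Hypothetical USD prices per 1,000 tokens; real prices vary by provider and model.
PRICE_PER_1K = {
    "model-a": {"input": 0.0005, "output": 0.0015},
    "model-b": {"input": 0.0030, "output": 0.0150},
}


@dataclass
class RequestLog:
    """One logged LLM request (illustrative shape, not a real gateway schema)."""
    team: str
    model: str
    input_tokens: int
    output_tokens: int


def request_cost(log: RequestLog) -> float:
    """Cost of a single request: tokens / 1000 * price, summed over input and output."""
    price = PRICE_PER_1K[log.model]
    return (
        log.input_tokens / 1000 * price["input"]
        + log.output_tokens / 1000 * price["output"]
    )


def cost_by_team(logs: list[RequestLog]) -> dict[str, float]:
    """Attribute total spend to each team."""
    totals: dict[str, float] = defaultdict(float)
    for log in logs:
        totals[log.team] += request_cost(log)
    return dict(totals)


logs = [
    RequestLog("search", "model-a", input_tokens=2000, output_tokens=1000),
    RequestLog("search", "model-b", input_tokens=1000, output_tokens=1000),
    RequestLog("support", "model-a", input_tokens=4000, output_tokens=0),
]
totals = cost_by_team(logs)
for team, usd in sorted(totals.items()):
    print(f"{team}: ${usd:.4f}")
```

The same aggregation can be keyed by user, application, or model instead of team; the important part is that every request is logged with its token counts.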

Evaluation Criteria

How Did We Evaluate These Obot AI Alternatives?

Not all alternatives to Obot AI are trying to solve the same problem; some focus only on MCP, while others address AI infrastructure more broadly.

We evaluated each of the alternative solutions based on several practical aspects:

- MCP and AI Agents: Does the solution provide native support for MCP, or does it only integrate with MCP servers?

- Infrastructure Ownership: Do you have the ability to run the solution in your own virtual private cloud (VPC), or is the solution only offered as a software-as-a-service (SaaS)?

- Model Flexibility: Does the solution offer support for both self-hosted and provider-based models?

- Observability & Governance: Does the solution provide robust role-based access control (RBAC) policies, auditing, and cost tracking to ensure that you can reliably use the solution in production?

- Developer Experience: How quickly can a development team go from an idea to a working solution?

No single alternative is a clear winner across all of these areas, and showing that is the point of the evaluation.

Obot AI Alternatives at a Glance:

Top Obot AI Alternatives in 2026

1. TrueFoundry: Best for Enterprise AI Teams Needing Full-Stack Control

TrueFoundry is an AI platform that runs as Kubernetes-managed workloads, either on-premises or inside your VPC on any of the three major clouds (AWS, Azure, GCP).

TrueFoundry covers the full AI lifecycle, including deploying models, routing inference requests, orchestrating agents, and governing multi-cloud deployments, through a single control plane.

Recognized by Gartner in its 2025 Market Guide for AI Gateways, TrueFoundry provides governance over models, agents, tools, and compute resources, whereas Obot governs only hosted agents and pass-through requests.

The platform has been adopted by enterprises including Siemens Healthineers, Resmed, Automation Anywhere, and NVIDIA.

Key Features:

- Unified AI gateway: An OpenAI-compatible API for 250+ LLMs, open source or proprietary, with intelligent routing, failover, load balancing, and token budgeting through a single API.

- Virtual MCP Servers: Combine tools from multiple MCP servers into a single curated endpoint with tool-level filtering. Centralized authentication (OAuth2, PAT, VAT), RBAC, and audit logging are handled by the AI gateway.

- MCP Gateway with centralized registry: Public and self-hosted MCP servers are registered through the AI Gateway control plane, with per-user OAuth token provisioning, automatic token refresh, and support for federated identity providers (IdPs) such as Okta and Azure AD.

- Agent-friendly orchestration: Framework-agnostic and compatible with custom agent frameworks, LangGraph, CrewAI, and AutoGen, with a built-in playground for testing prompts against MCP tools with real-time streaming of agentic loop data.

- Built-in observability: Latency, token usage, cost attribution, team-specific dashboards, and full logging of client requests and responses, with no sidecars needed.

- Prompt lifecycle management: Prompt versioning, support for multiple prompt versions, and CI/CD integration through the CLI or API.
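To make the "virtual MCP server" idea concrete, here is a minimal sketch of the concept: tools from several servers are merged behind one endpoint, filtered by an allowlist, and every call is audited. The class and method names are hypothetical and do not reflect TrueFoundry's actual API; the tools are plain Python callables standing in for real MCP tools.

```python
from dataclasses import dataclass, field


@dataclass
class McpServer:
    """Stand-in for an upstream MCP server: a name plus its callable tools."""
    name: str
    tools: dict  # tool name -> callable


@dataclass
class VirtualMcpServer:
    """Hypothetical curated endpoint over several upstream servers."""
    allowed: set  # "server.tool" identifiers exposed by this endpoint
    servers: dict = field(default_factory=dict)
    audit_log: list = field(default_factory=list)

    def register(self, server: McpServer) -> None:
        self.servers[server.name] = server

    def list_tools(self) -> list:
        """Tool-level filtering: expose only allowlisted tools."""
        return sorted(
            f"{server.name}.{tool}"
            for server in self.servers.values()
            for tool in server.tools
            if f"{server.name}.{tool}" in self.allowed
        )

    def call(self, user: str, tool_id: str, **kwargs):
        """Record every attempt, then dispatch only if the tool is exposed."""
        permitted = tool_id in self.list_tools()
        self.audit_log.append((user, tool_id, permitted))
        if not permitted:
            raise PermissionError(f"{tool_id} is not exposed by this endpoint")
        server_name, tool_name = tool_id.split(".", 1)
        return self.servers[server_name].tools[tool_name](**kwargs)


gateway = VirtualMcpServer(allowed={"github.list_issues", "slack.post_message"})
gateway.register(McpServer("github", {
    "list_issues": lambda repo: [f"{repo}#1"],
    "delete_repo": lambda name: f"deleted {name}",  # registered but never exposed
}))
gateway.register(McpServer("slack", {
    "post_message": lambda channel, text: f"{channel}: {text}",
}))

issues = gateway.call("alice", "github.list_issues", repo="acme/app")
```

A real gateway would add per-user authentication and role checks before dispatch; the allowlist here only demonstrates the filtering and audit steps.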

Best For:

Platform engineering organizations and enterprises seeking a cloud-neutral, governed AI platform (not simply an MCP gateway) with control over models, agents, tools, and infrastructure. It is best suited for teams expanding beyond one or two LLM use cases.

Organizations moving from limited LLM use cases to broad LLM adoption typically struggle to understand the size of their current environment, what the future will look like, and how to plan for long-term scalability.

2. LangGraph (by LangChain)

LangGraph is an open-source framework for building stateful, multi-step agent workflows as directed graphs. LangGraph builds on LangChain by adding features including explicit state management, cycles, and human-in-the-loop patterns.

Key Features:

- A graph-based method of building complex services with support for branches, cycles, conditional routing, and parallel processing

- A platform for managed deployment (self-hosted or managed by LangGraph)

- Compatible with any model provider (including Claude, OpenAI, Gemini, Bedrock, Open Source)
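The graph-based approach described above can be sketched in plain Python. This is a conceptual illustration of explicit state, conditional routing, and a cycle; it deliberately does not use the LangGraph library, and the `Graph` class, node names, and `END` marker are assumptions made for the example.

```python
from typing import Callable, Dict

State = dict
END = "__end__"  # hypothetical terminal marker for this sketch


class Graph:
    """Tiny stateful graph runner: each node updates state, each router picks the next node."""

    def __init__(self) -> None:
        self.nodes: Dict[str, Callable[[State], State]] = {}
        self.routers: Dict[str, Callable[[State], str]] = {}

    def add_node(self, name: str, fn: Callable[[State], State],
                 router: Callable[[State], str]) -> None:
        self.nodes[name] = fn
        self.routers[name] = router

    def run(self, start: str, state: State, max_steps: int = 20) -> State:
        current, steps = start, 0
        while current != END:
            if steps >= max_steps:  # guard against unbounded cycles
                raise RuntimeError("step limit reached")
            state = self.nodes[current](state)
            current = self.routers[current](state)
            steps += 1
        return state


def draft(state: State) -> State:
    # Stand-in for an LLM call that produces or revises a draft.
    return {**state, "attempts": state["attempts"] + 1}


def review(state: State) -> State:
    # Stand-in for a reviewer step; approves on the second attempt.
    return {**state, "approved": state["attempts"] >= 2}


graph = Graph()
graph.add_node("draft", draft, router=lambda s: "review")
# Conditional routing: loop back to "draft" until the review approves.
graph.add_node("review", review, router=lambda s: END if s["approved"] else "draft")

final_state = graph.run("draft", {"attempts": 0, "approved": False})
```

The upfront cost of this style is the state schema: every node has to agree on which keys exist and what they mean, which is the design work the Cons section below refers to.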

Pros:

- Flexible, MIT-licensed framework for building complex multi-step agents

- Robust ecosystem with other tools (LangChain, LangSmith, LangServe)

Cons:

- A framework rather than a platform: it requires upfront design of the state schema and does not provide model serving, an MCP gateway, or compute orchestration by default

Best for:

Engineering teams creating complex stateful agent workflows that are ready to manage their own infrastructure.

3. CrewAI

CrewAI is a Python framework for orchestrating teams of AI agents that complete tasks according to their assigned roles, goals, and delegation rules.

Key features:

- Role definitions for agents, each with goals, a backstory, and assigned tools, so tasks can be modeled as teams with specialized skill sets.

- Unified control over agents through the CrewAI Enterprise AMP platform, which includes real-time tracing, role-based access control (RBAC), and cloud or on-premises deployment management.

- CrewAI Studio, a no-code graphical editor for creating agents without programming.

Pros:

- Easy to model collaborative agents: The agent-team API is a user-friendly way to build a working prototype of a multi-agent team in less than a day.

- Open-source core: The CrewAI core is open source and can be used independently of other frameworks such as LangChain.

Cons:

- Limited observability: CrewAI's abstraction layer can feel less intuitive than LangGraph's, which makes diagnosing agent failures time-consuming. Observability and cost tracking in the CrewAI ecosystem are also less mature than in LangSmith.

- CrewAI Enterprise cost: CrewAI Enterprise (AMP) costs approximately $99/month; contact CrewAI for precise pricing.

Best for:

Teams that want to develop multi-agent collaboration workflows rapidly, including through no-code methods.

4. Composio

Composio is a managed MCP gateway and tool-integration platform with over 500 pre-built, enterprise-approved tools that connect to your AI agents. The company launched its Universal MCP Gateway in August 2025; it supports over 100,000 developers and replaces numerous separate MCP servers with a single installation.

Key Features:

- 500+ managed MCP integrations (including Slack, GitHub, Salesforce, Google Workspace, Notion, Jira, etc.) featuring unified OAuth and automatic token refreshes

- Framework-agnostic: Supports LangChain, CrewAI, OpenAI Agents SDK, Claude Code, Cursor, etc.

- AI-powered tool routing: Understands intent, selects tools, and sends parameters, with no need for manual API doc lookups.

- Cloud hosting or private/self-host installation options

Pros:

- Largest pre-built tool catalog, reducing integration time from weeks to less than 5 minutes

- Developer-first experience: One-line API connection per tool and full recipe templates.

Cons:

- Managed SaaS by default, which means less infrastructure control than self-hosted options like Obot

- Connector quality can vary: rapid expansion means some integrations are less battle-tested.

- Pricing is not very clear (it is based on the number of tool calls), and enterprise pricing requires contacting Composio sales directly.

Best For:

Development teams building agents that need quick access to a large number of MCP tools without the delay of developing custom integrations, when speed of integration is key.

5. Portkey

Portkey is a reliability and observability platform that helps manage the cost of running large language models (LLMs) at scale. Its primary focus is a gateway to 1,600+ LLMs across 40+ providers, adding less than one millisecond of latency for large-scale enterprise workloads.

Key Features:

- Intelligent routing: automatic retries, semantic caching, load balancing, circuit breakers, and multi-model fallbacks.

- Security and compliance: SOC 2 Type 2, ISO 27001, GDPR, and HIPAA, with access control through role-based access control, Single Sign-On/SCIM, and audit logs.

- The Portkey gateway serves as a central store for data on every application routed through it.
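The routing behaviors listed above (retries, circuit breakers, and fallbacks) can be illustrated with a small, library-free sketch. It shows the general technique only; it is not Portkey's implementation or configuration format, and the provider names, thresholds, and function names are hypothetical.

```python
import time


class CircuitBreaker:
    """Opens after consecutive failures and stays open until reset_after seconds pass."""

    def __init__(self, failure_threshold: int = 2, reset_after: float = 30.0) -> None:
        self.failure_threshold = failure_threshold
        self.reset_after = reset_after
        self.failures = 0
        self.opened_at = None

    def allow(self, now: float) -> bool:
        if self.opened_at is None:
            return True
        if now - self.opened_at >= self.reset_after:
            self.opened_at, self.failures = None, 0  # cool-down over, try again
            return True
        return False

    def record(self, ok: bool, now: float) -> None:
        if ok:
            self.failures = 0
            return
        self.failures += 1
        if self.failures >= self.failure_threshold:
            self.opened_at = now


def call_with_fallback(providers, breakers, prompt, retries=1, clock=time.monotonic):
    """Try providers in order; retry each, skip circuit-open ones, fall back to the next."""
    for name, fn in providers:
        breaker = breakers[name]
        for _ in range(retries + 1):
            if not breaker.allow(clock()):
                break  # circuit open: do not send traffic to this provider
            try:
                result = fn(prompt)
            except Exception:
                breaker.record(False, clock())
                continue
            breaker.record(True, clock())
            return name, result
    raise RuntimeError("all providers failed or are circuit-open")


calls = {"primary": 0, "backup": 0}


def primary(prompt):  # hypothetical provider that is currently down
    calls["primary"] += 1
    raise TimeoutError("primary unavailable")


def backup(prompt):  # hypothetical healthy provider
    calls["backup"] += 1
    return f"echo: {prompt}"


providers = [("primary", primary), ("backup", backup)]
breakers = {name: CircuitBreaker() for name, _ in providers}

first = call_with_fallback(providers, breakers, "hi")
second = call_with_fallback(providers, breakers, "hi again")
```

On the second call the primary's circuit is already open, so the request goes straight to the backup without waiting on another timeout.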

Pros:

- High-performance and lightweight compared with legacy systems, with a track record at large enterprises.

- Open-source gateway, with a managed cloud version also available.

Cons:

- Portkey is strictly a gateway product with no support for self-hosted models or compute infrastructure; its MCP capabilities are limited compared with dedicated MCP gateways (e.g., Composio, TrueFoundry).

Best for:

Engineering teams that already have their infrastructure and processes in place and need a gateway to route requests, monitor usage, and track costs.

6. n8n

n8n is a workflow automation tool, released under a fair-code license, with native AI agent capabilities and bidirectional MCP support. Its visual builder connects traditional automation (webhooks, APIs, and databases) to LLM-powered agent workflows.

Key Features:

- A visual workflow designer that connects 500+ integration nodes, covering both deterministic automation and agent steps, for a seamless transition between the two.

- An AI Agent node with native tool calling, memory backed by either Redis or a simple database, and support for different models, including OpenAI and Anthropic.

- Human-in-the-loop gating that requires explicit human approval before agents execute high-impact tools (added January 2026).
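Human-in-the-loop gating is easy to picture as code. The sketch below shows the general pattern, assuming a hypothetical set of high-impact tool names and an `approve` callback that stands in for a human reviewer; it is not how n8n implements the feature.

```python
# Hypothetical list of tools that must never run without explicit approval.
HIGH_IMPACT = {"delete_records", "send_payment"}


def run_tool(name, args, tools, approve):
    """Run a tool, pausing for approval first when the tool is high-impact."""
    if name in HIGH_IMPACT and not approve(name, args):
        return {"status": "rejected", "tool": name}
    return {"status": "ok", "tool": name, "result": tools[name](**args)}


tools = {
    "lookup": lambda key: key.upper(),
    "delete_records": lambda table: f"deleted {table}",
}

# A reviewer callback that rejects everything, to show the gate in action.
deny_all = lambda name, args: False
approve_all = lambda name, args: True

low_risk = run_tool("lookup", {"key": "abc"}, tools, approve=deny_all)
blocked = run_tool("delete_records", {"table": "users"}, tools, approve=deny_all)
approved = run_tool("delete_records", {"table": "users"}, tools, approve=approve_all)
```

Low-impact tools bypass the reviewer entirely, so the gate adds friction only where a mistake would be costly.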

Pros:

- Lowest barrier for people who are not ML engineers to build AI-powered automations.

- Strong self-hosting options, a thriving community with plenty of documentation and resources, and a fair-code license.

Cons:

- Not designed specifically for AI, so agents have no persistent memory: state lasts only for a single workflow run unless it is stored externally.

- Lacks the governance, role-based access control (RBAC), cost controls, and enterprise compliance features built into dedicated AI platforms.

- Performance ceilings appear under high-volume, time-sensitive agent workloads.

Best for:

Operations or automation teams that want to augment existing automations with AI agent capabilities without adopting a full ML platform.

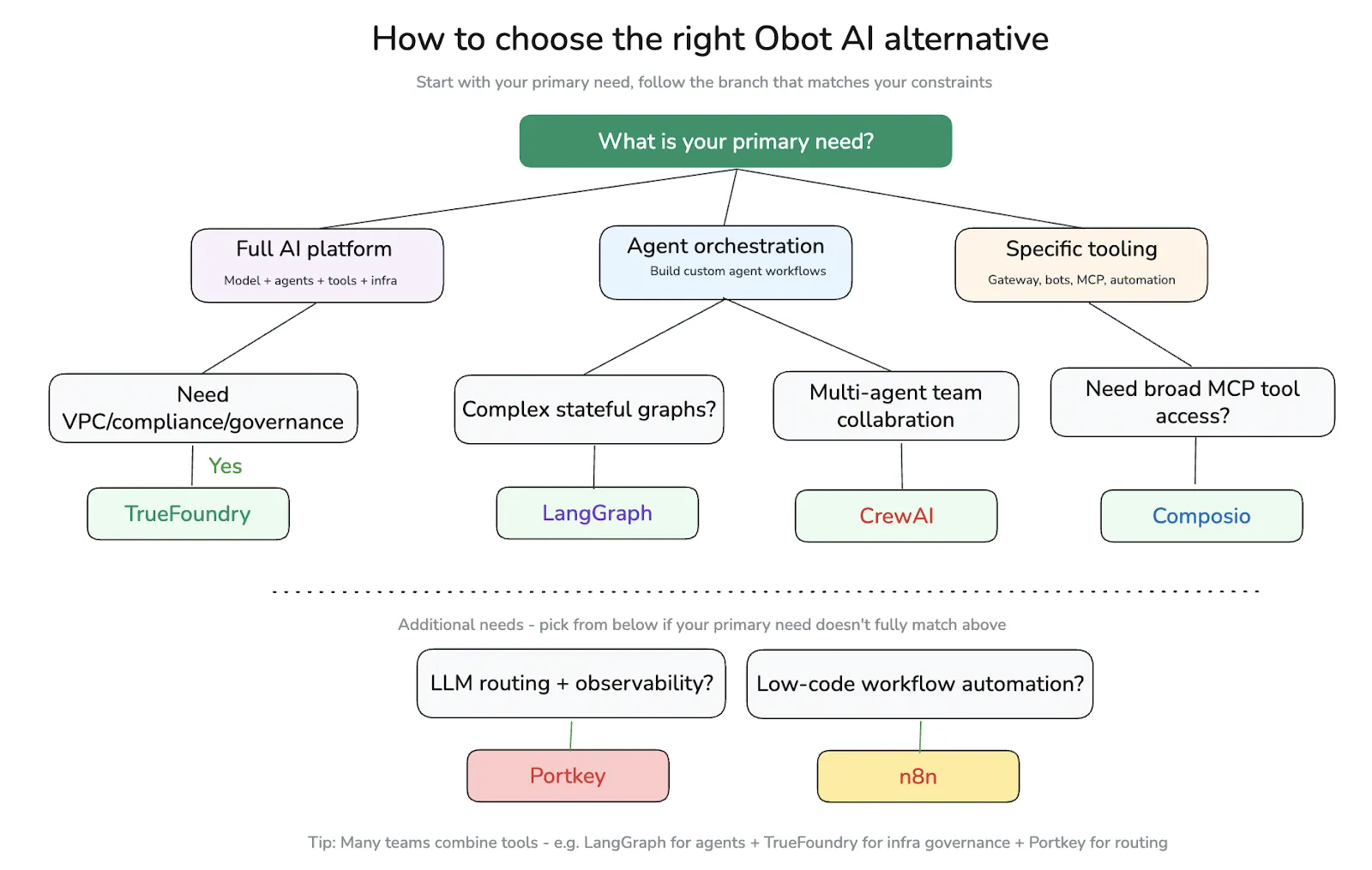

How to Choose the Right Obot AI Alternative

Choosing the best Obot AI alternative means matching it to your team's needs.

No single Obot AI replacement suits every team; the right choice depends on the size of your team, how much infrastructure you want to manage, and which task you want to tackle first.

Scenario-Based Recommendations:

- Building multi-step research agents that need to call tools? Use LangGraph with Portkey, or choose TrueFoundry as an all-in-one solution.

- Need governed, VPC-hosted access to MCP servers for many team members? Use TrueFoundry to place Virtual MCP Servers inside your VPC with central RBAC.

- Adding AI to existing Zapier-style automations? Look to n8n to extend your current workflows.

- Need VPC deployment and audit trails for your compliance team across every part of the system? Look to TrueFoundry as your deployment option.

Frequently Asked Questions

Question: What Is Obot AI Used For?

- Primarily designed to manage infrastructure built on the Model Context Protocol (MCP) — specifically how AI agents interface with and connect to external systems and tools.

- Provides user authentication management for AI agents, request routing through a centralized registry, and audit logging for all agent-to-system interactions.

- Functions as a governance (control) layer for AI agents interacting with external systems and tools — not a full AI OS or end-to-end AI platform.

Question: Why Do Teams Look for Obot AI Alternatives?

- Their needs exceed the scope of an MCP governance layer — requiring model serving, inference routing, cost tracking, and enterprise-grade production deployment.

- They need access to mature, frequently updated enterprise features without waiting on an early-stage release cycle.

- They prefer a unified platform that consolidates models, agents, tools, and operational automation under a single system, rather than managing multiple independent layers.

Question: What are the best AI agent platforms in 2026?

The best AI agent platform for your needs in 2026 depends on which parts of the stack you need to own:

TrueFoundry - This is the preferred platform for large organizations that want end-to-end model governance. It offers every component of that stack: model serving, agent orchestration, MCP tool management, and control of the serving infrastructure.

If you need production-level compliance, cost attribution by department or team, and governance that scales across many teams, TrueFoundry is the best fit.

LangGraph - This is the framework of choice for engineering teams that are building complex, stateful agent-based applications with custom branching, cycles, and/or human-in-the-loop workflows.

CrewAI - This is the best platform for designing systems for collaboration between multiple agents with role-based delegation and task coordination.

Composio - This is the best platform when fast access to 500+ managed MCP tool integrations is more important than owning the infrastructure.

If you want the broadest coverage of governance, cost control, and compliance across multiple departments or teams, TrueFoundry is the strongest option.

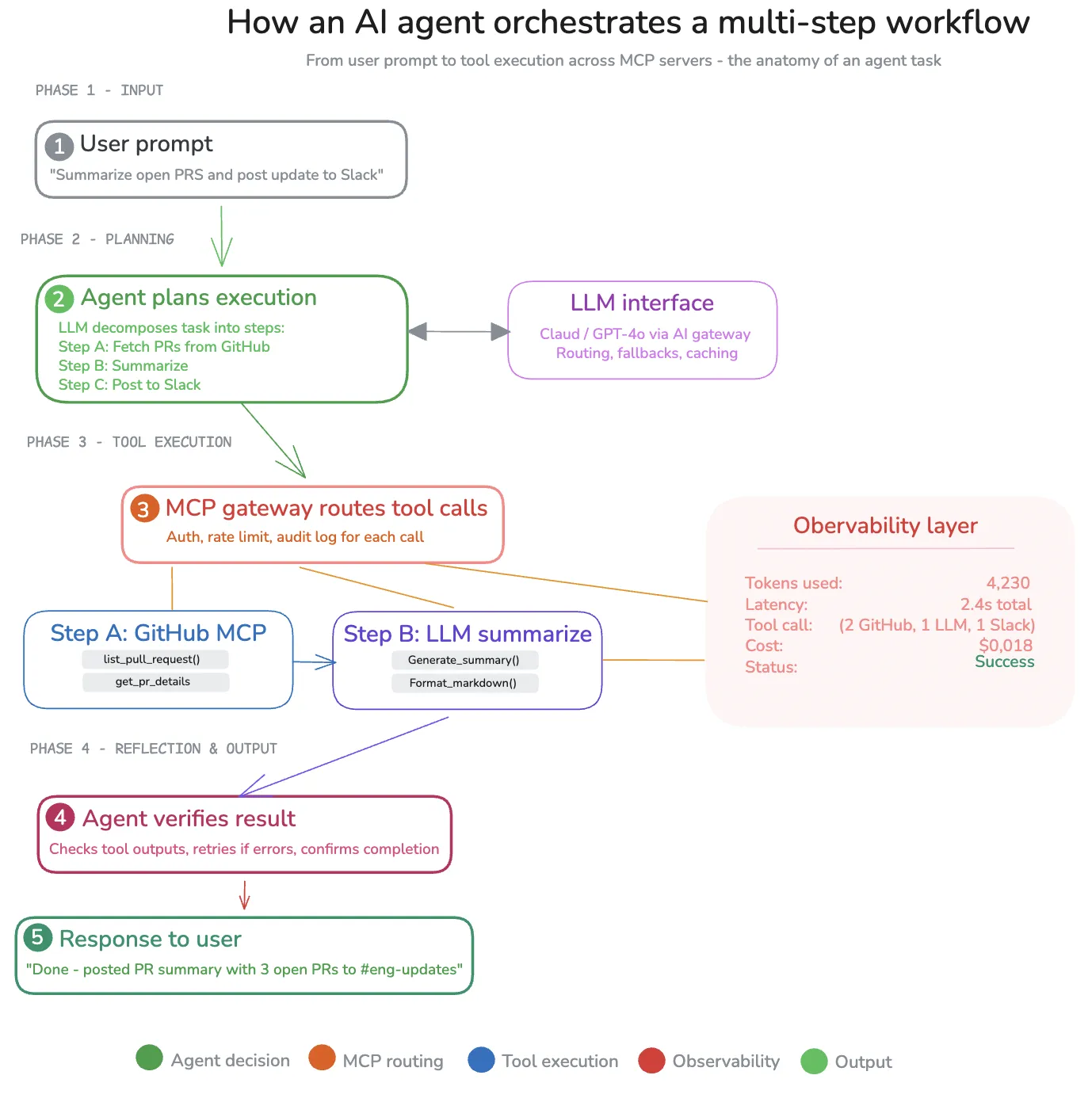

Question: How do AI agent tools compare to traditional automation tools?

AI agents differ greatly from traditional automation tools (e.g., n8n or Zapier), which run deterministically: the same workflow produces the same outcome every time.

Traditional tools are usually appropriate for well-defined tasks, such as synchronizing data, sending notifications, and executing scheduled work.

AI agents, on the other hand, use LLM-driven decision making: they select tools dynamically based on the situation and perform multi-step reasoning, which gives the agent some discretion in choosing its next step.

Question: What should you look for in an AI orchestration platform?

Model Management: The ability to use a variety of large language models (LLMs) from third-party providers (OpenAI, Anthropic) as well as open-source and self-hosted models.

Deployment Options: Options for executing AI workloads in a virtual private cloud (VPC), on-premises, or in an air-gapped environment to ensure data privacy.

Observability: The ability to monitor latency, attribute token costs to each LLM, and provide per-team usage analytics.

Management of Tools and Agents: The ability to centrally manage the registration and auditing of connections between models, tools, and agents.

RBAC and Compliance: The ability to define role-based access and enforce SOC 2 compliance standards and enterprise audit trails for everyone with access to the toolset.

Developer Experience: How quickly developers can write, test, and deploy code to production, plus the quality of the SDK and the frameworks it supports.

The main difference is whether you are developing an application or supporting an infrastructure layer. Platforms such as TrueFoundry are mainly for use by platform teams to manage models, agents and tools across multiple business units when transitioning AI from experimentation to production.

Conclusion

If you are looking for an open-source solution for hosting and governing MCP servers, Obot should still be one of the top contenders. But as an enterprise moves from AI trials to building and deploying AI-powered solutions at scale, most teams need functionality beyond MCP hosting and governance.

This is where TrueFoundry is uniquely positioned: it is the only vendor on this list that combines MCP governance with management of the entire AI lifecycle, including model deployment, an AI Gateway with intelligent routing, agent registries, observability dashboards, and VPC-native execution, all without tying you to a single cloud provider.

If your team is considering using Obot AI alternatives and needs a platform to grow its use of AI across multiple teams and user communities, we recommend requesting a demo of TrueFoundry’s products and an overview of how they support your technology stack.

FAQs

1. What is the difference between an MCP gateway and an AI gateway?

An MCP gateway manages how AI agents interact with external tools through the Model Context Protocol. An AI gateway sits at a higher level: it manages requests across multiple models, enforces policies, and monitors latency, usage, and costs. In a production environment, both gateways need to work together. This is where TrueFoundry is useful, as it brings both MCP and AI gateways together in a single platform.

2. Do I need both MCP infrastructure and LLM infrastructure to run AI agents in production?

Yes, a production environment for AI agents requires both MCP infrastructure and LLM infrastructure. MCP infrastructure manages how AI agents interact with external tools. LLM infrastructure manages how the underlying models are hosted and served. Obot AI is designed for the MCP side, but production needs both. TrueFoundry brings MCP and LLM infrastructure together in a single platform, making production environments for AI agents much simpler to manage.

3. When should a team move beyond Obot AI?

A team should move beyond Obot AI when its needs go beyond MCP, typically on the way to production: it needs to work with multiple models, track costs, and get a better view of its AI agents. These needs come up when many teams use AI, and managing different tools for different layers becomes complicated. At that point, a team needs a platform like TrueFoundry, where both MCP and AI infrastructure can be managed in one place.

4. What are the key features that you would want in an Obot AI alternative?

When you want to choose Obot AI alternatives, you want to make sure you choose those that have the features required for production readiness. This means you want them to support multiple models, both hosted and self-hosted. Also, you want them to have good observability with features such as latency and token usage. Moreover, you want them to have good security features such as RBAC and audit trails. Finally, you want them to support deployment in VPC or on-premise environments. Another important feature is the developer experience, especially with regards to how quickly you want to go from prototype to production. TrueFoundry is often a consideration in this regard as it supports these features at both the MCP and model levels.

5. Can MCP tools such as Obot AI support production-level monitoring and cost control?

MCP tools such as Obot AI were designed primarily with the purpose of managing the tools themselves. They were not designed with the purpose of supporting production-level monitoring. Although they support features such as audit trails, they lack features such as token-level cost control or the ability to monitor the performance of the models and agents. This is the reason why you would want to use TrueFoundry as it supports production-level monitoring.

6. What is the best way to start with AI agent infrastructure in 2026?

The simplest way to get started varies based on the level of development. For instance, teams who are still experimenting with agents might want to use a framework or a workflow tool to build initial use cases. However, as the complexity level increases, teams might want to add MCP systems to help manage access to the tools, followed by infrastructure to handle routing and monitoring. However, it might eventually become hard to manage these layers separately, and this is where the need to use a more unified system arises. TrueFoundry makes it easy to achieve this by supporting both experimentation and infrastructure in the same environment.

