Enhancing Customer Support with Real-Time AI Assistance Using Cognita

About Cognita

Cognita is a versatile open-source RAG framework designed to enable Data Science, Machine Learning, and Platform Engineering leaders to build and deploy scalable RAG applications. It features a fully modular, user-friendly, and adaptable architecture, ensuring complete security and compliance. It also ships with a UI that makes it easier to try out different RAG configurations and see the results in real time.

Introduction to the Use Case

In an era where customer experience defines business success, the ability to provide immediate and precise support is crucial. TrueFoundry's Cognita framework enables the development of sophisticated real-time AI applications tailored for customer support. By leveraging the modular and open-source nature of Cognita, businesses can enhance their support systems to deliver superior customer service.

What is the problem we are trying to solve?

Current customer support systems struggle to meet customers' high expectations for prompt and accurate responses. Conventional support approaches fail to handle large volumes of requests, keep responses consistent, or provide 24/7 availability. These difficulties lead to higher operating expenses, lower customer satisfaction, and inefficiencies that can hinder business growth.

Manual vs Automated Customer Support

In a traditional manual customer support system, human agents are responsible for addressing each customer inquiry individually. This labor-intensive process involves agents navigating through extensive knowledge bases, documentation, and past query records to find accurate and relevant information. The variability in human performance can lead to inconsistencies in responses, with the quality of support depending heavily on the agent's expertise and experience. Furthermore, maintaining a 24/7 support system requires a significant workforce, necessitating shift rotations and leading to increased operational costs. During peak query times, the manual approach often results in backlogs, prolonged response times, and customer dissatisfaction.

An automated pipeline, by contrast, not only significantly reduces response times but also ensures that each customer interaction is handled with consistent accuracy and reliability. Cognita's scalability enables the system to handle many requests at once, making it a practical choice for enterprises facing growth or shifting support demands. Automation also relieves human agents of routine questions, allowing them to concentrate on more complicated issues and increasing the overall efficiency and effectiveness of the support operation.

Solution

Transitioning to an automated system powered by TrueFoundry's Cognita framework enables the integration of advanced AI components to automate customer query handling. Specifically, data loaders and parsers ensure that a comprehensive, structured dataset is readily available for the system to draw on. Embedders convert textual data into high-dimensional vectors, enabling efficient and accurate similarity searches, and vector databases support rapid retrieval of this embedded information, ensuring real-time performance. When a query is received, the query controller orchestrates the process, using rerankers to evaluate the retrieved passages and prioritize the most relevant ones.
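The retrieve-then-rerank flow described above can be sketched in a few lines of Python. This is a minimal, self-contained illustration under stated assumptions, not Cognita's API: `embed` is a toy bag-of-words stand-in for a pre-trained embedding model, `InMemoryVectorStore` stands in for a vector database such as Qdrant, and `rerank` is a simple term-coverage heuristic in place of a trained reranker. All of these names are hypothetical.

```python
import math
import re
from collections import Counter


def embed(text: str) -> Counter:
    """Toy embedding (hypothetical): term-frequency vector over lowercase word tokens.
    A real deployment would call a pre-trained embedding model instead."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse term-frequency vectors."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


class InMemoryVectorStore:
    """Hypothetical stand-in for a vector database: stores (text, vector) pairs."""

    def __init__(self) -> None:
        self._items: list[tuple[str, Counter]] = []

    def add(self, text: str) -> None:
        self._items.append((text, embed(text)))

    def search(self, query: str, k: int) -> list[tuple[str, float]]:
        """Return the k stored texts most similar to the query, best first."""
        q = embed(query)
        scored = [(text, cosine(q, vec)) for text, vec in self._items]
        scored.sort(key=lambda pair: pair[1], reverse=True)
        return scored[:k]


def rerank(query: str, candidates: list[str], top_n: int) -> list[str]:
    """Toy reranker (hypothetical): order candidates by the fraction of query terms they contain."""
    query_terms = set(embed(query))

    def coverage(text: str) -> float:
        if not query_terms:
            return 0.0
        return len(query_terms & set(embed(text))) / len(query_terms)

    return sorted(candidates, key=coverage, reverse=True)[:top_n]


def retrieve_context(store: InMemoryVectorStore, query: str,
                     k: int = 5, top_n: int = 2) -> list[str]:
    """Query-controller sketch: retrieve k candidates, rerank them, and keep
    the top_n passages that would be placed in the LLM prompt."""
    candidates = [text for text, _ in store.search(query, k)]
    return rerank(query, candidates, top_n)
```

The candidate pool `k` is deliberately larger than `top_n`: the cheap vector search casts a wide net, and the (usually more expensive) reranker only has to reorder that short list.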

Implementing Cognita for customer support can address these challenges by:

- Automated Query Handling: Using Cognita's embedders and vector databases to quickly retrieve relevant information and provide accurate responses to customer queries.

- Real-Time Assistance: Leveraging the reranking and query controller modules to ensure that the most relevant and concise information is provided, enhancing the customer's experience.

- Scalability: Cognita's modular design allows for easy scaling of the system to handle increasing volumes of queries without compromising performance.

Deploying Cognita using TrueFoundry

You can run Cognita locally, with or without any TrueFoundry components. However, using TrueFoundry components makes it easier to test different models and deploy the system in a scalable way. Cognita also lets you host multiple RAG systems from one app. Here, we use TrueFoundry components to create a small-scale support bot for just the MacBook Pro initially, and then scale it by adding a few more products and support for different languages.

Once you've set up a cluster, added a Storage Integration, and created an ML Repo and Workspace, you are all set to begin deploying a Cognita-based RAG application using TrueFoundry. More information on this one-time setup can be found here. Once done:

- Navigate to the Deployments tab.

- Click the + New Deployment button at the top right and select Application Catalogue. Select your workspace and the RAG Application.

- Fill in the deployment template:

- Give your deployment a Name.

- Add the ML Repo.

- Either add an existing Qdrant DB or create a new one.

By default, the release branch is used for deployment (you will find this option under Show Advance fields). You can change the branch name and git repository if required.

Make sure to re-select the main branch, as the SHA commit does not get updated automatically.

- Click Submit, and your application will be deployed.

Implementation Steps

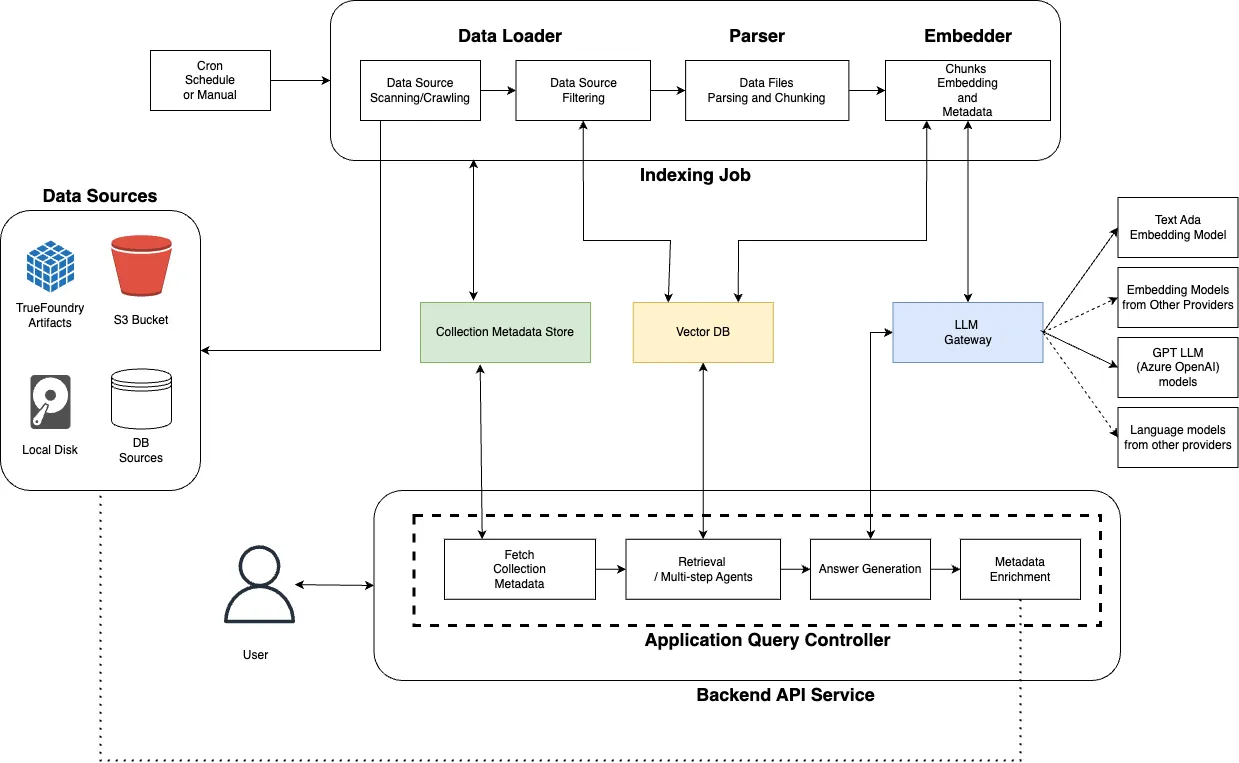

Overall, the architecture of Cognita is composed of several entities. We will be delving into each of them through the implementation steps below.

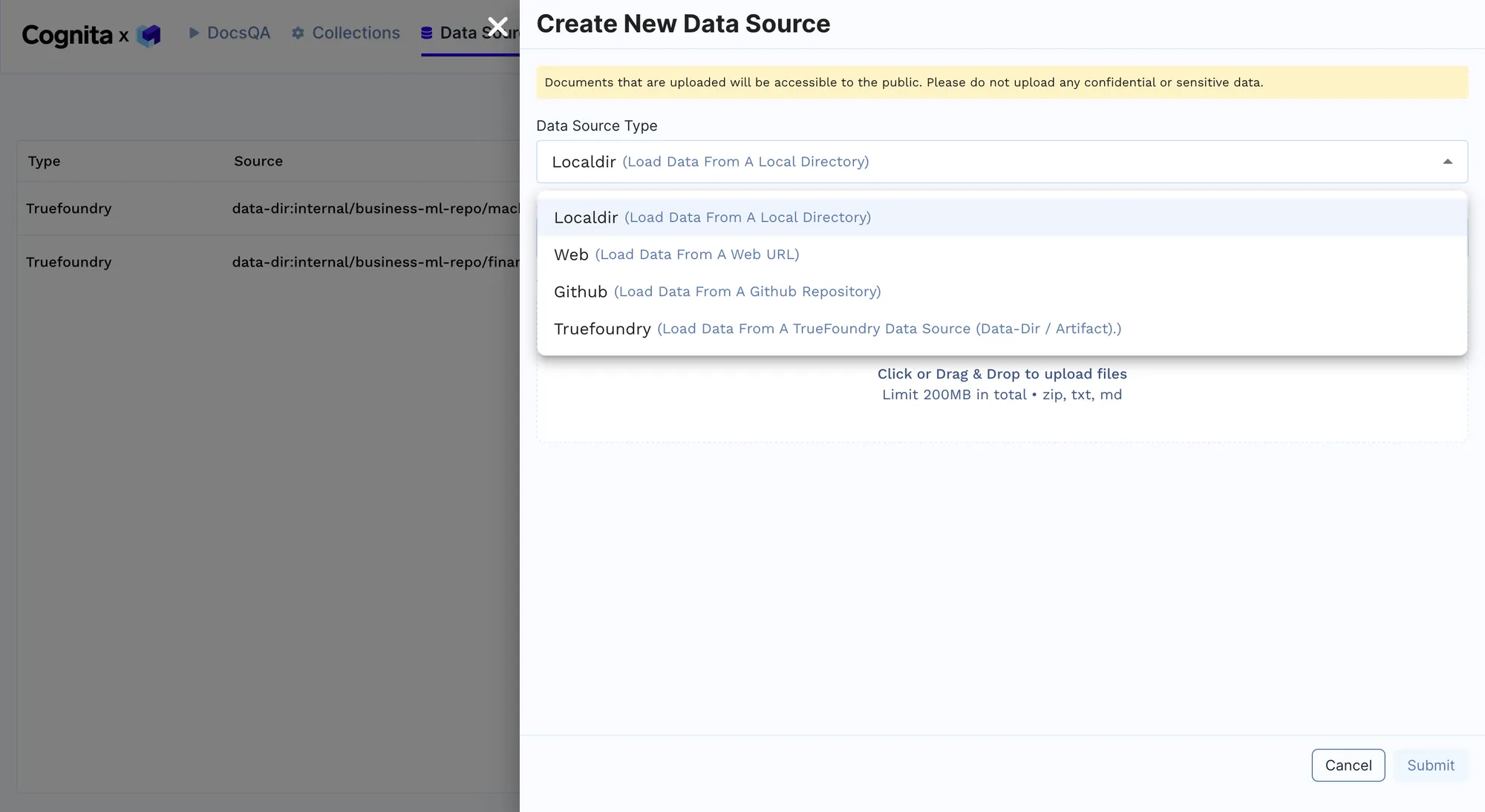

- Data Loading: Cognita's data loaders import customer support documents and historical query data from various sources, such as local directories or cloud storage. This is done by adding a new data source from the RAG Endpoint provided after deployment, as shown below. Multiple data sources can be added as required to broaden coverage and improve the quality of responses. We begin by adding just one MacBook guide and add other data later. The link to all the uploaded documents can be found here.

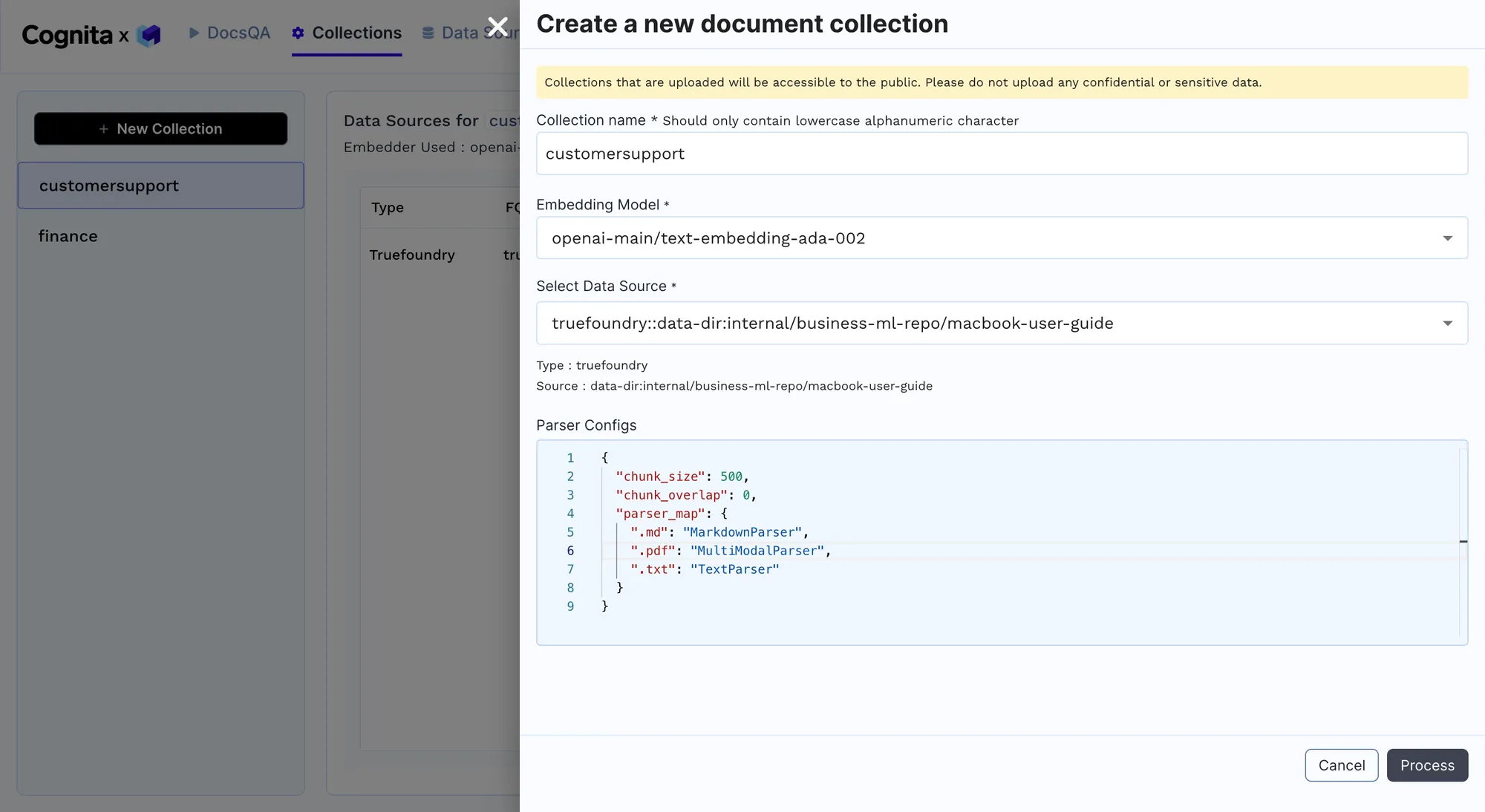
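Data sources are configured through the Cognita UI, but conceptually a local-directory loader walks a folder and turns each supported file into a document record tagged with its source. The sketch below is a hypothetical illustration of that idea using only the Python standard library; `Document`, `load_local_directory`, and the suffix filter are assumptions, not Cognita's loader interface.

```python
from dataclasses import dataclass
from pathlib import Path


@dataclass
class Document:
    source: str  # name of the (hypothetical) data source the file came from
    path: str    # file path relative to the data source root
    text: str    # raw file contents, to be parsed and embedded later


def load_local_directory(root: str, source_name: str,
                         suffixes: tuple[str, ...] = (".txt", ".md")) -> list[Document]:
    """Recursively load every file under `root` whose extension is in `suffixes`,
    in a deterministic (sorted) order."""
    root_path = Path(root)
    documents = []
    for file in sorted(root_path.rglob("*")):
        if file.is_file() and file.suffix.lower() in suffixes:
            documents.append(Document(
                source=source_name,
                path=file.relative_to(root_path).as_posix(),
                text=file.read_text(encoding="utf-8"),
            ))
    return documents
```

Several sources can be combined by concatenating the lists returned for each directory; keeping the `source` field on every record makes it possible to trace an answer back to the data source it came from.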

- Parsing and Embedding: Parse the documents into a uniform format and create embeddings using pre-trained models to enable quick retrieval of relevant information. A new collection can be created from the data source added in the previous step, and its documents are then parsed and embedded. We are solving a multimodal use case here: each PDF is broken down into pages, and each page is converted into an image. Each page image is then analyzed through prompts, and the resulting insights are stored in the vector DB. When a question is asked, it is searched across all the stored insights; the matching page is retrieved and sent to the vision model for question answering. Once the Process button is clicked, the collection is created, a new pod is spun up, the indexing job begins, and the data is ingested into Qdrant. Note: This may take a few minutes.

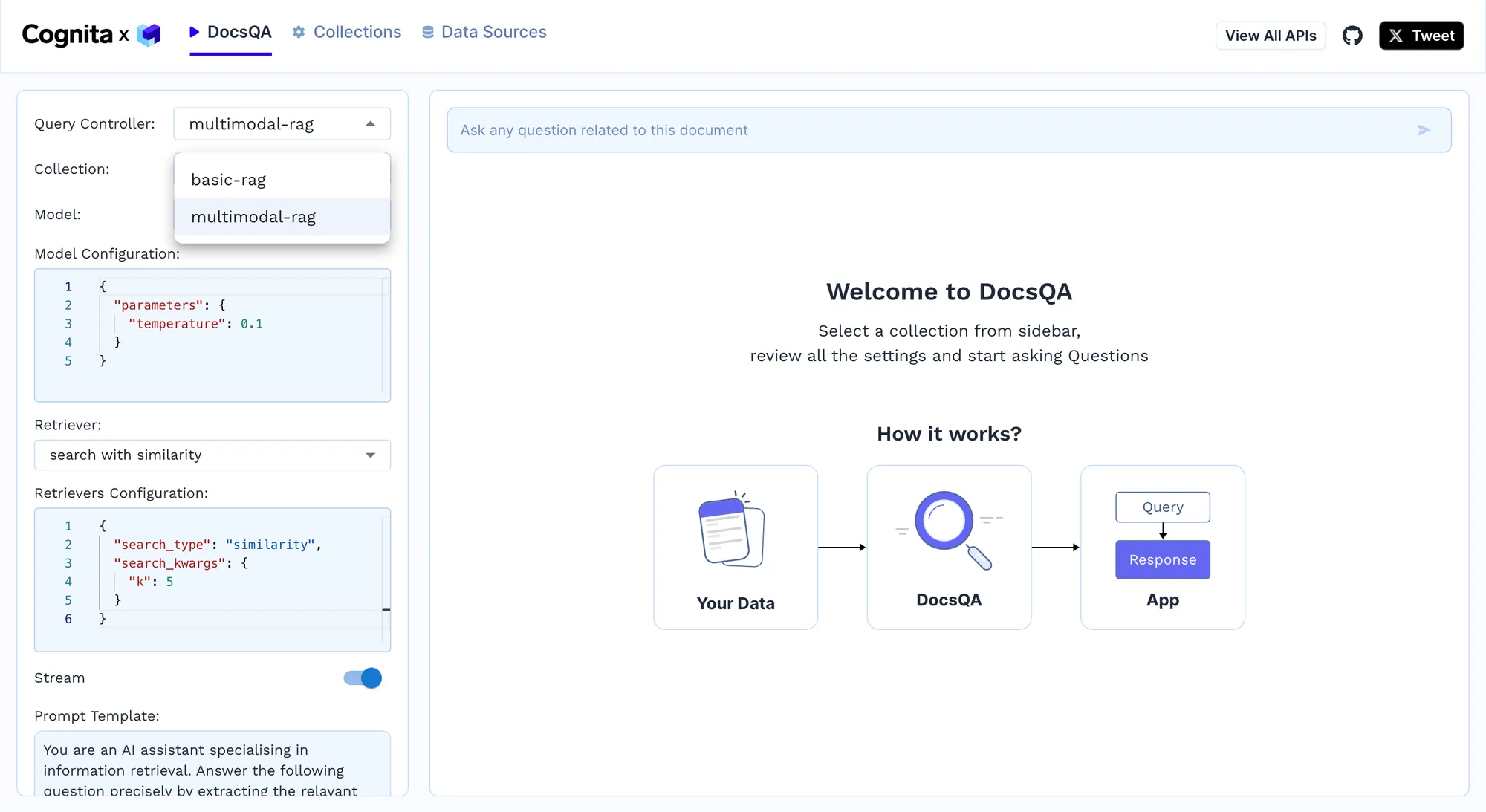

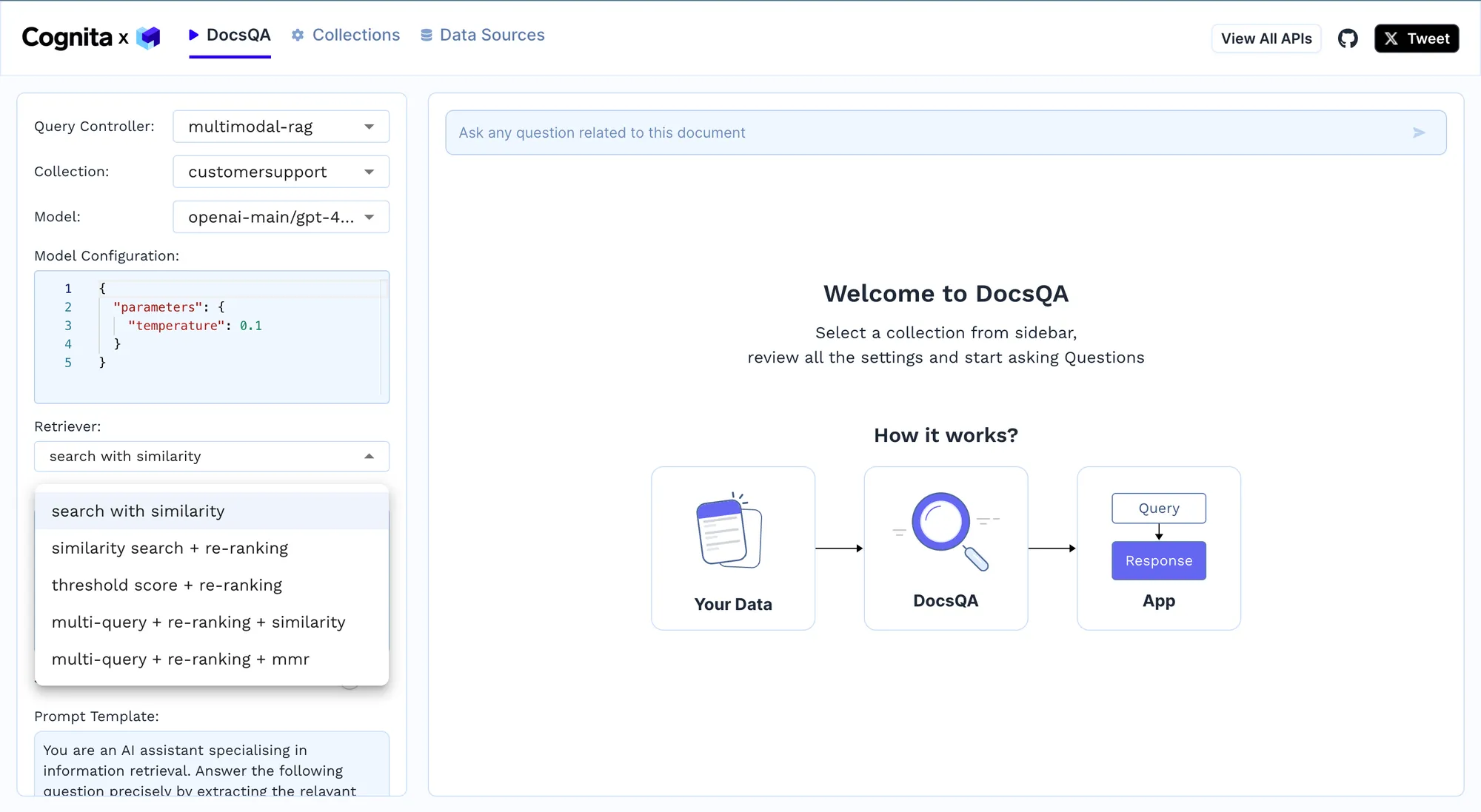
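The page-level multimodal flow above can be summarized in a short sketch. It is a hypothetical illustration, not Cognita's multimodal parser or query controller: `describe_page` stands in for the prompt-driven analysis of each page image, `ask_vision_model` stands in for the vision-model call, and retrieval uses simple word overlap instead of embeddings so the example runs without external services. The idea it shows is that the index stores text insights, while each insight keeps a pointer back to its page image so the original page can be handed to the vision model at answer time.

```python
import re
from dataclasses import dataclass
from typing import Callable


@dataclass
class PageInsight:
    page_number: int  # 1-based page index in the source PDF
    insight: str      # text produced by analysing the page image with a prompt


def _tokens(text: str) -> set[str]:
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def index_pages(page_images: list[bytes],
                describe_page: Callable[[bytes], str]) -> list[PageInsight]:
    """Indexing step: turn each page image into a searchable text insight.
    `describe_page` is a hypothetical stand-in for the prompt-based analysis."""
    return [PageInsight(i + 1, describe_page(image)) for i, image in enumerate(page_images)]


def retrieve_page(index: list[PageInsight], question: str) -> int:
    """Return the page whose insight shares the most words with the question
    (word overlap is a stand-in for vector similarity search)."""
    if not index:
        raise ValueError("index is empty")
    question_terms = _tokens(question)
    best = max(index, key=lambda item: len(question_terms & _tokens(item.insight)))
    return best.page_number


def answer(question: str, page_images: list[bytes], index: list[PageInsight],
           ask_vision_model: Callable[[bytes, str], str]) -> str:
    """Query step: find the most relevant page, then send that page image
    (not the insight text) to the vision model together with the question."""
    page_number = retrieve_page(index, question)
    return ask_vision_model(page_images[page_number - 1], question)
```

Passing the page image rather than the insight to the final model lets it read tables, diagrams, and layout that a short text summary may have dropped.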

- Query Handling: Implement the query controller to process incoming queries, rerank potential answers, and provide the most accurate responses in real time. For example, basic-rag works for simple text parsing. However, when dealing with PDF documents, multimodal-rag is a better option, since it uses a vision model (presently GPT-4) to answer questions over PDFs parsed with the multimodal parser. Because we are using the multimodal parser here, multimodal-rag gives better results.

- Continuous Improvement: Continuously update the embeddings and reranking models based on new data and customer interactions to improve the system's accuracy and efficiency. Different retrievers can be selected from the dropdown, as shown below. New documents can also be added to the data source and the indexing job rerun to improve the system. For example, for more complex user queries, a multi-query + re-ranking + similarity retriever can be used. It requires `k` in `search_kwargs` for the similarity search, and `search_type` can be `similarity`, MMR, or `similarity_score_threshold`. This retriever breaks a complex query down into simpler queries, finds relevant documents for each of them, reranks those documents, and sends them to the LLM; the results are then combined and returned. The prompt template below the Retriever option can also be adjusted to get richer responses.
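To make the retriever settings above concrete, the sketch below implements the three search types over plain vectors, plus a simple multi-query merge. It is a hedged illustration rather than Cognita's retriever code: `k` and the `search_type` values come from the text, while `score_threshold`, `fetch_k`, and `lambda_mult` are assumed parameter names borrowed from LangChain-style conventions, and the merge step orders results by best similarity where a production setup would use a dedicated reranking model.

```python
import math
from typing import Optional

Vector = list[float]


def cosine(a: Vector, b: Vector) -> float:
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def search(query: Vector, docs: list[Vector], search_type: str = "similarity",
           search_kwargs: Optional[dict] = None) -> list[int]:
    """Return indices of retrieved documents, best first."""
    kwargs = search_kwargs or {}
    k = kwargs.get("k", 4)
    sims = [cosine(query, d) for d in docs]
    ranked = sorted(range(len(docs)), key=lambda i: sims[i], reverse=True)

    if search_type == "similarity":
        # Plain top-k nearest neighbours.
        return ranked[:k]
    if search_type == "similarity_score_threshold":
        # Top-k, but drop anything below the (assumed) score_threshold.
        threshold = kwargs["score_threshold"]
        return [i for i in ranked if sims[i] >= threshold][:k]
    if search_type == "mmr":
        # Maximal marginal relevance: trade relevance to the query against
        # redundancy with documents already selected.
        lambda_mult = kwargs.get("lambda_mult", 0.5)
        candidates = ranked[:kwargs.get("fetch_k", 20)]
        selected: list[int] = []
        while candidates and len(selected) < k:
            def mmr_score(i: int) -> float:
                redundancy = max((cosine(docs[i], docs[j]) for j in selected), default=0.0)
                return lambda_mult * sims[i] - (1 - lambda_mult) * redundancy
            best = max(candidates, key=mmr_score)
            selected.append(best)
            candidates.remove(best)
        return selected
    raise ValueError(f"unknown search_type: {search_type}")


def multi_query_search(sub_queries: list[Vector], docs: list[Vector], k: int = 2) -> list[int]:
    """Retrieve for each simpler sub-query, de-duplicate, and order by best score."""
    best_score: dict[int, float] = {}
    for q in sub_queries:
        for i in search(q, docs, "similarity", {"k": k}):
            best_score[i] = max(best_score.get(i, float("-inf")), cosine(q, docs[i]))
    return sorted(best_score, key=lambda i: best_score[i], reverse=True)
```

With `lambda_mult` close to 1, MMR behaves like plain similarity search; lowering it favours diversity, which helps when several near-duplicate chunks would otherwise crowd out other relevant passages.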

To scale the RAG application so that it can cater to a wider range of customer queries and become an all-inclusive solution, we can add more data sources. We add other documents, including support documents for different MacBooks, iPads, iPhones, AirPods, and watchOS, by adding a new data source and linking it to the collection. The RAG application now acts as a comprehensive AI customer support agent for a broad suite of Apple products. Some documents are also in other languages, which extends the system with multilingual support.

Implementation Example

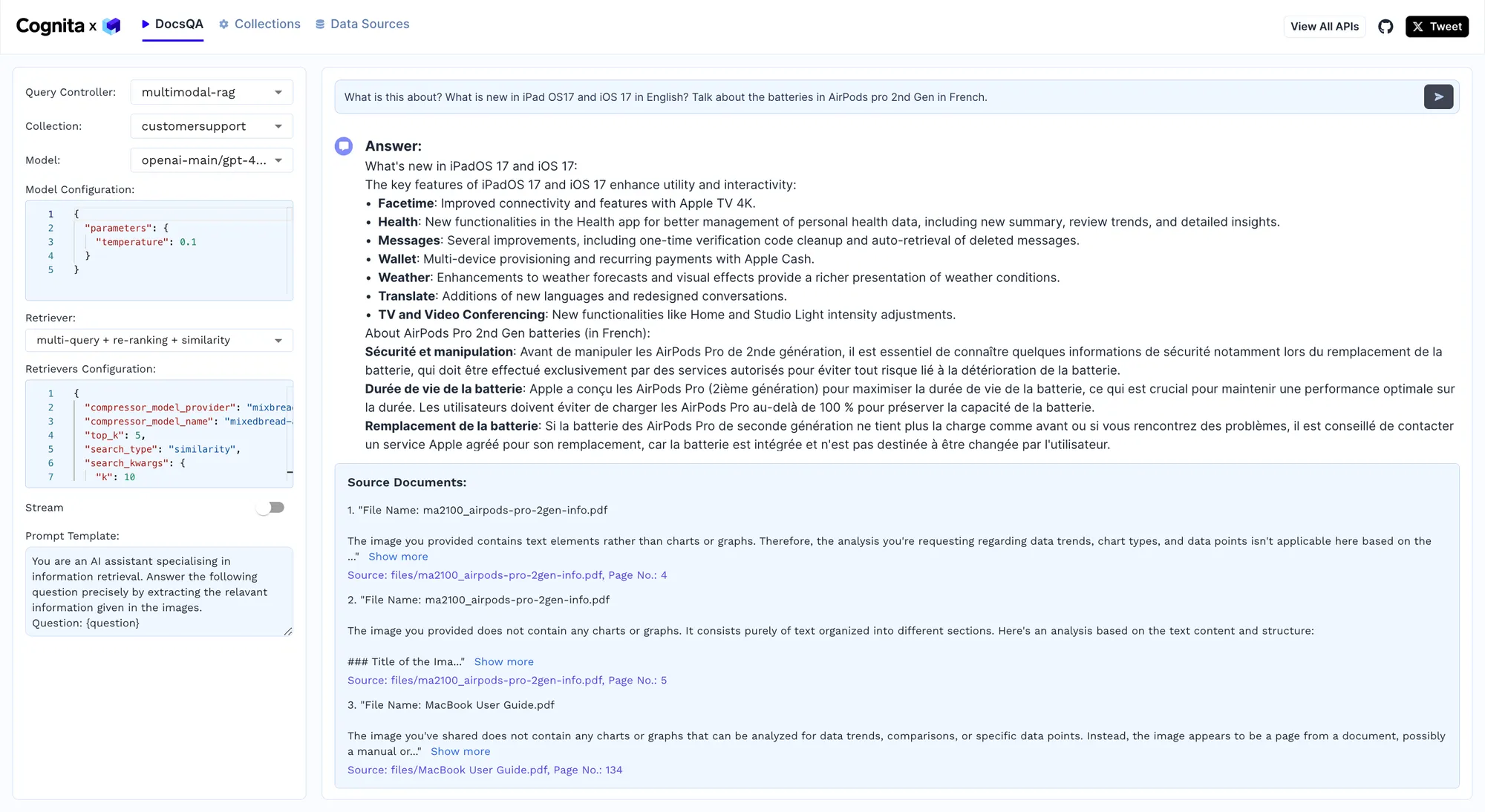

We will now test the model by giving it a complex query, and the results are shown below.

In a test of the Cognita framework, the model successfully answered the query, "What is new in iPadOS 17 and iOS 17 in English? Talk about the batteries in AirPods Pro 2nd Gen in French," demonstrating its ability to handle complex, multilingual questions. The model utilized the multimodal-rag configuration to process and synthesize information from various documents, providing a detailed list of new features in iPadOS 17 and iOS 17, such as improved FaceTime capabilities and enhancements in the Health app. Additionally, it delivered precise information about AirPods Pro 2nd Gen batteries in French, addressing safety, battery lifespan, and replacement procedures. This test underscores Cognita's capability to integrate advanced NLP and vision models, ensuring accurate, contextually relevant responses in multiple languages, thereby enhancing customer support operations with real-time, high-quality information retrieval.

Benefits

- Reduced Latency and Enhanced Throughput: By leveraging advanced embedding techniques and efficient vector databases, Cognita ensures rapid query processing, reducing retrieval times to milliseconds and keeping end-to-end responses close to real time. This is critical for maintaining customer satisfaction in high-pressure environments.

- Adaptive Learning and Continuous Improvement: Integrating feedback loops and continuously updating the model embeddings based on real-time interactions allows the system to learn and improve, reducing error rates and enhancing the accuracy of responses over time.

- Resource Optimization and Cost Efficiency: Automating query handling significantly lowers the need for extensive human support staff, resulting in substantial cost savings. Moreover, it allows human agents to focus on more complex, high-value tasks, improving overall support quality.

- Scalability and Flexibility: The modular architecture of Cognita allows the system to scale horizontally to accommodate growing query volumes without compromising performance. This flexibility is critical for firms experiencing rapid growth or seasonal surges in support demand.

- Enhanced Customer Retention and Loyalty: By providing consistent, accurate, and timely responses, Cognita enhances the customer experience, leading to higher satisfaction rates, increased loyalty, and reduced churn. This translates directly into improved lifetime customer value and business revenue.

Additional Improvements by Companies

- Advanced Personalization and User Profiling: By integrating user profiling and advanced personalization algorithms, companies can tailor responses based on individual user preferences and past interactions. This can be achieved by analyzing historical data and embedding user-specific context into queries, enhancing the relevance and personalization of responses.

- Multilingual Support: Incorporating multilingual capabilities allows companies to provide support in multiple languages. This can be implemented by integrating language detection and translation modules within Cognita, enabling seamless support for a global customer base without the need for additional human resources.

- Sentiment Analysis and Emotional Intelligence: Companies that integrate sentiment analysis and emotional intelligence modules can gauge customer sentiment and tailor answers accordingly. This entails real-time analysis of the customer's tone and attitude, which enables the AI to give empathetic and suitable responses, increasing overall customer satisfaction.

- Proactive Support and Predictive Analytics: Predictive analytics enables businesses to anticipate customer needs and problems before they arise. By evaluating usage patterns and historical data, Cognita can initiate proactive support interventions, such as providing solutions to commonly encountered problems or informing customers of potential issues, thereby improving the customer experience and reducing incoming requests.

- Integration with CRM Systems: Seamless integration with CRM systems can provide a holistic view of customer interactions. By pulling in data from CRM platforms, Cognita can offer more informed and contextually aware responses, ensuring that customer interactions are consistent and personalized across all touchpoints.

- Enhanced Security and Privacy: Implementing advanced security measures ensures that customer data is handled securely. Companies can integrate Cognita with secure data storage solutions and use encryption protocols to protect sensitive information, ensuring compliance with data protection regulations and maintaining customer trust.

- Dynamic Content and Knowledge Base Updates: Automating the updating of knowledge bases ensures that the system always has access to the most current information. By setting up automated pipelines to ingest and process new content, Cognita can continuously draw on fresh data, keeping the support system up to date with the latest information and trends.
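One common way to implement automated knowledge base updates is to re-index only what changed since the last run. The sketch below is an assumption-laden illustration, not a Cognita feature: it compares SHA-256 hashes of the current documents against hashes recorded at the previous indexing run (the `indexed_hashes` mapping is hypothetical bookkeeping) and returns which documents to add, re-embed, or delete.

```python
import hashlib


def content_hash(text: str) -> str:
    """Stable fingerprint of a document's content."""
    return hashlib.sha256(text.encode("utf-8")).hexdigest()


def plan_reindex(current_docs: dict[str, str],
                 indexed_hashes: dict[str, str]) -> dict[str, list[str]]:
    """Compare current documents (id -> text) with hashes stored at the last
    indexing run (id -> hash) and decide what the indexing job must do."""
    to_add = [doc_id for doc_id in current_docs if doc_id not in indexed_hashes]
    to_update = [
        doc_id for doc_id, text in current_docs.items()
        if doc_id in indexed_hashes and indexed_hashes[doc_id] != content_hash(text)
    ]
    to_delete = [doc_id for doc_id in indexed_hashes if doc_id not in current_docs]
    return {"add": sorted(to_add), "update": sorted(to_update), "delete": sorted(to_delete)}
```

Unchanged documents are skipped entirely, so a scheduled job can run this check frequently without re-embedding the whole corpus each time.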

Conclusion

Cognita's modular architecture and advanced AI capabilities provide a robust solution for enhancing customer support. It efficiently handles complex queries, processes diverse data types, and delivers accurate, real-time responses. By integrating features like multilingual support and predictive analytics, Cognita significantly improves customer satisfaction and operational efficiency, making it an invaluable tool for modern support systems.
