8 Best Mint MCP Alternatives for AI Agent Infrastructure in 2026


As AI agents move from testing into production, one thing is becoming clear: giving agents access to tools was once treated as an integration problem, but it is now an infrastructure problem.

This shift is what the Model Context Protocol (MCP) addresses. MCP standardizes how agents connect to external tools, and several companies, Mint MCP among them, are building gateways on top of the protocol that let teams control, secure, and manage agents' use of those tools across cloud, on-premise, and hybrid deployments.

However, Mint MCP is not the only choice.

Depending on your requirements, such as performance, throughput, deployment architecture, or scalability, another MCP gateway may fit your needs better than Mint MCP.

In this guide, we will cover:

- What Mint MCP is

- Why teams look for MCP alternatives

- 8 best MCP gateway tools in 2026

- How to choose the right MCP gateway for your use case

Introduction

What is Mint MCP?

Mint MCP is an enterprise governance platform for AI agents and MCP servers. It gives teams the tools they need to deploy, manage, and secure AI agent infrastructure at scale while maintaining thorough audit logs and supporting access policies.

What is MCP (Model Context Protocol)?

MCP (Model Context Protocol) is an open protocol introduced by Anthropic that lets AI applications such as Claude and Claude Code communicate with external systems in a structured manner.

MCP provides a standardized middleware layer between the model and the tool logic. The model calls a tool in a standard format, and the MCP server translates that request and executes it against the real system behind it (for example, a database, internal API, cloud service, or ticket management system).
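To make the "standard format" concrete, here is a minimal sketch of an MCP-style tool call. MCP messages are JSON-RPC 2.0, and tool invocations use the `tools/call` method. The `lookup_ticket` tool, its in-memory data, and the `handle_request` dispatcher are hypothetical stand-ins for a real ticketing backend, not part of any vendor's product.

```python
from typing import Any, Callable


def build_tool_call(request_id: int, tool_name: str, arguments: dict[str, Any]) -> dict[str, Any]:
    """Build a JSON-RPC 2.0 request that uses MCP's `tools/call` method."""
    return {
        "jsonrpc": "2.0",
        "id": request_id,
        "method": "tools/call",
        "params": {"name": tool_name, "arguments": arguments},
    }


def lookup_ticket(ticket_id: str) -> str:
    """Hypothetical backend: stands in for a real ticket management system."""
    tickets = {"T-100": "open"}
    return f"{ticket_id} is {tickets.get(ticket_id, 'unknown')}"


# Registry of tools this toy server exposes (assumed for illustration).
TOOLS: dict[str, Callable[..., str]] = {"lookup_ticket": lookup_ticket}


def handle_request(request: dict[str, Any]) -> dict[str, Any]:
    """Toy server side: translate the standard call into a real function call."""
    base = {"jsonrpc": "2.0", "id": request.get("id")}
    if request.get("method") != "tools/call":
        return {**base, "error": {"code": -32601, "message": "Method not found"}}
    params = request["params"]
    tool = TOOLS.get(params["name"])
    if tool is None:
        return {**base, "error": {"code": -32602, "message": f"Unknown tool: {params['name']}"}}
    text = tool(**params.get("arguments", {}))
    # Tool results are returned as a list of content items.
    return {**base, "result": {"content": [{"type": "text", "text": text}], "isError": False}}


request = build_tool_call(1, "lookup_ticket", {"ticket_id": "T-100"})
response = handle_request(request)
print(response["result"]["content"][0]["text"])
```

A gateway sits between the two halves of this exchange: it sees every `tools/call` request before the server does, which is where policy, logging, and rate limits can be applied.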

Why do teams explore Mint MCP alternatives?

While Mint MCP establishes a framework for governing AI tool access, it has drawbacks for enterprises with strict security requirements.

Trust assumptions

Mint MCP enforces access policies at the gateway level, but each back-end MCP server still has to be secured on its own. An improperly configured or improperly authorized tool server is a potential weak link that a gateway alone cannot address.

Limited customization

Mint MCP is a closed-source commercial product. Teams may be concerned about vendor lock-in and lack of transparency. If they require broad customization, the ability to audit the code themselves, or access control options beyond what the vendor supports, Mint MCP does not provide them, even in the self-hosted version.

Governance Gaps

Mint MCP includes Single Sign-On (SSO), Role-Based Access Control (RBAC), and some level of audit logging. Still, for most heavily regulated organizations, this baseline governance is not sufficient. They require custom approval workflows, real-time content auditing, or integration with their internal secrets management, which a standard turnkey product does not typically provide by default.

Operational Overhead

Adding Mint MCP to your environment introduces another critical piece of infrastructure that you must manage, maintain, and trust. Many high-security organizations (banks, government) would prefer to route tool traffic through their own systems rather than through a vendor. As a result, organizations with strict security and trust requirements tend to seek vendor-neutral, open-source alternatives.

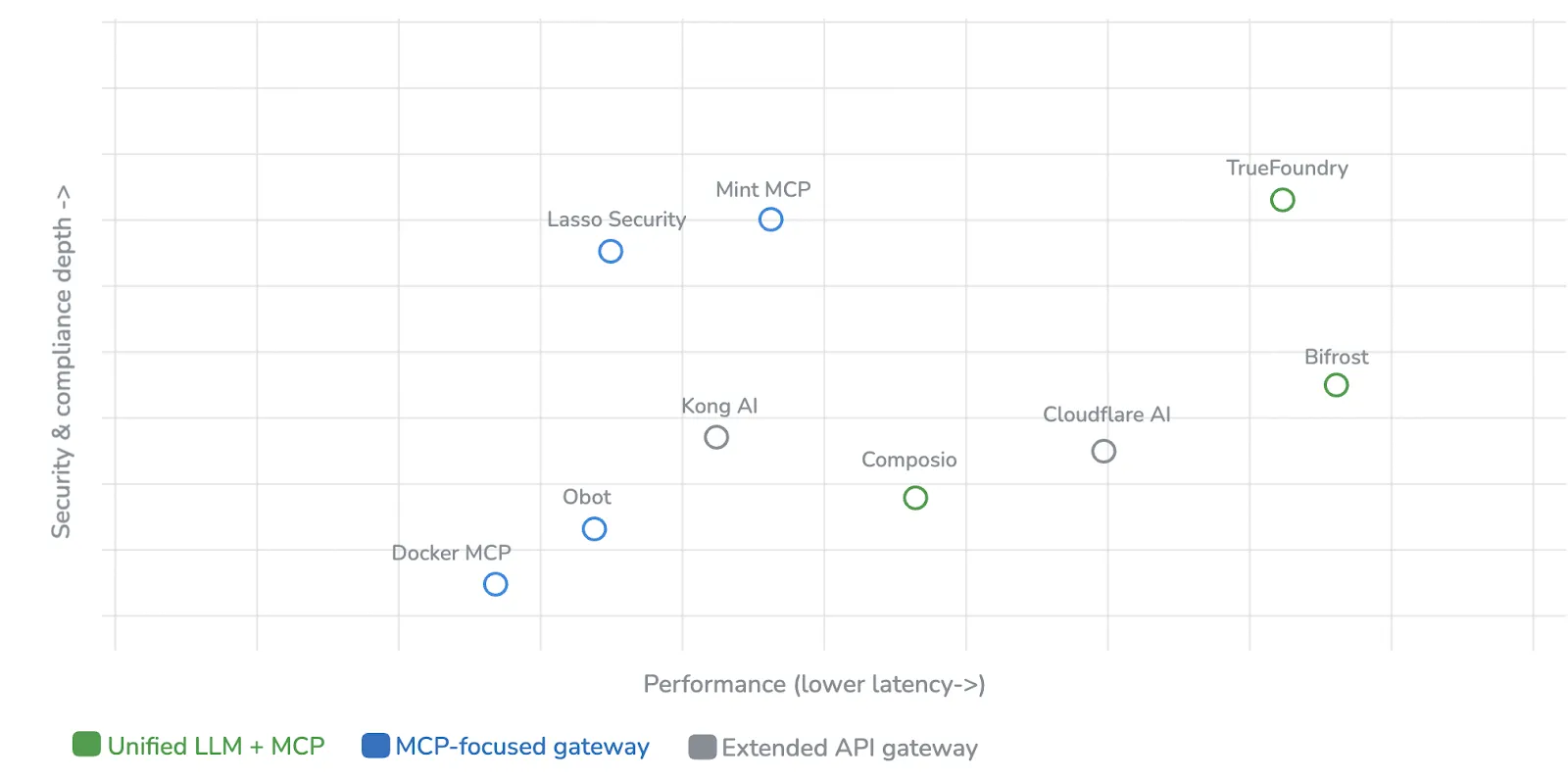

Evaluation Criteria

How Did We Evaluate Mint MCP Alternatives?

To keep things practical, we evaluated tools across six dimensions:

MCP Protocol Support

- STDIO

- Streamable HTTP

- SSE

- MCP client/server compatibility

Security & Compliance

- SOC 2, HIPAA, GDPR

- OAuth 2.1

- RBAC, SSO

Performance

- Gateway latency (p50 / p95)
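As a point of reference for the p50 / p95 figures quoted throughout this guide, the sketch below computes both from a list of measured latencies using the nearest-rank method. The sample values are made up for illustration and are not benchmark results for any gateway.

```python
import math


def percentile(samples: list[float], pct: float) -> float:
    """Nearest-rank percentile: the smallest sample with at least pct% of values at or below it."""
    if not samples:
        raise ValueError("samples must not be empty")
    if not 0 < pct <= 100:
        raise ValueError("pct must be in (0, 100]")
    ordered = sorted(samples)
    rank = math.ceil(pct / 100 * len(ordered))  # 1-based rank
    return ordered[rank - 1]


# Illustrative (invented) gateway overhead measurements in milliseconds.
latencies_ms = [2.1, 2.4, 2.2, 3.0, 2.3, 9.8, 2.5, 2.2, 2.6, 2.4]

p50 = percentile(latencies_ms, 50)
p95 = percentile(latencies_ms, 95)
print(f"p50={p50} ms, p95={p95} ms")
```

With these ten samples, the single 9.8 ms outlier leaves p50 at 2.4 ms but sets p95 to 9.8 ms, which is why tail percentiles matter more than averages when comparing gateways.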

Deployment Flexibility

- SaaS vs self-hosted

- VPC, air-gapped, Kubernetes

Unified LLM + MCP Management

- Does it handle both models and tools?

Ecosystem & Integrations

- LangChain, CrewAI, AutoGen

- Number of supported tools

Comparison Table

Mint MCP Alternatives at a Glance

The 8 Best Mint MCP Alternatives in 2026

1. TrueFoundry

Enterprise AI infrastructure platform combining LLM routing, MCP tool governance, and model serving in a single control plane — deployable in your VPCs, on-prem, or air-gapped environments. Named a Representative Vendor in the 2025 Gartner Market Guide for AI Gateways.

Key Features:

- Unified LLM + MCP + A2A gateway: one endpoint to 1,000+ models across OpenAI, Anthropic, Gemini, Groq, Mistral, and many more, with native MCP and A2A protocol support.

- 3-4 ms p95 latency; 350+ RPS on 1 vCPU - powered by a stateful Rust data plane, scales with no tuning.

- Kubernetes-native deployment — AWS, Azure, GCP, on-prem, or air-gapped environments; your data never leaves your domain

- Virtual MCP Servers: combine tools from several back-end servers into a single logical server with fine-grained RBAC and OAuth 2.0 identity injection.

- MCP Registry for dynamic tool discovery and schema validation

- Built-in observability — token usage, cost attribution per team/user/app, latency tracking, full request/response audit logs

- Interactive Playground — test LLMs, prompts, MCP tools, and guardrails in a UI before wiring them into applications

- Model serving included — deploy self-hosted models via vLLM, SGLang, or TRT-LLM with GPU optimization

Best For:

Enterprise platform engineering teams that need a governed, performant AI infrastructure layer for LLM inference, MCP tooling, and agent orchestration in regulated or multi-cloud environments.

2. Composio

Developer-first MCP platform for rapid integration and iteration.

Key Features:

- 500+ prebuilt integrations

- Unified authentication layer

- MCP-compatible tool access

- Quick onboarding workflows

Pros:

- Fastest path to production for integrations

- Large ecosystem of connectors

Cons:

- Limited low-level control

- Less focus on performance tuning

Best for:

Teams that prioritize speed and ease of use over low-level infrastructure control.

3. Bifrost

Open-source gateway that handles both MCP and LLM traffic.

Key features:

- Ultra-low latency (below 3 ms)

- Written in Go

- Routing for both MCP and LLM traffic

- Tokenization for optimization

Pros:

- High Performance

- Open source flexibility

Cons:

- Self-hosting needed

- Higher operating cost

Best for:

Teams that require high performance and full control.

4. Docker MCP Gateway

Docker's open-source gateway orchestrates MCP servers. Each server runs inside an isolated container with restricted privileges, network access, and resource limits. The gateway is driven through a command-line interface (docker mcp) and integrates with Docker Desktop and Compose, using profiles to define which tools are available to each client. It is designed for MCP only and does not route LLM traffic.

Pros:

- Container-native isolation with supply chain verification (provenance verification, software bill of materials (SBOM) generation, and Docker Scout)

- The profile system lets you scope tool access by client or team.

- The user experience follows the familiar Docker workflow, so teams that already use Docker can keep the same tooling alongside Kubernetes, Swarm, and existing CI/CD systems.

Cons:

- Handles MCP connections only (no LLM routing, failover, or model load balancing).

- Limited observability and policy management compared to existing enterprise gateways.

- Running each server in a container adds latency compared to native process execution.

- Requires the use of Docker Desktop or a manual install of the Docker CE binary.

5. Kong AI Gateway

A robust, proven API gateway (open-source core under the Apache 2.0 license) extended with over 60 AI-specific plugins. It can route LLM traffic (OpenAI, Anthropic, Bedrock, Gemini, etc.) and govern MCP traffic that passes through the gateway.

Pros:

- Existing Kong users can add LLM and MCP capabilities without new infrastructure, using one common control plane for all LLM traffic and plugins.

- Automatically converts REST APIs into MCP tools from their OpenAPI definitions.

- Over 60 AI-specific plugins (semantic caching, semantic routing, guardrails, LLM analytics).

- Kubernetes-native with its Ingress Controller.
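To illustrate what converting a REST API into an MCP tool involves, here is a minimal sketch that maps one OpenAPI operation to an MCP tool definition (`name`, `description`, `inputSchema`). It is not Kong's implementation; the `openapi_operation_to_tool` function and the `getOrder` operation are hypothetical, and request bodies, auth, and `$ref` resolution are deliberately ignored.

```python
from typing import Any


def openapi_operation_to_tool(path: str, method: str, operation: dict[str, Any]) -> dict[str, Any]:
    """Map a single OpenAPI operation object to an MCP-style tool definition."""
    properties: dict[str, Any] = {}
    required: list[str] = []
    for param in operation.get("parameters", []):
        # Copy the parameter's JSON Schema; default to string if none is given.
        schema = dict(param.get("schema", {"type": "string"}))
        if "description" in param:
            schema["description"] = param["description"]
        properties[param["name"]] = schema
        if param.get("required"):
            required.append(param["name"])
    # Fall back to a name derived from method and path when operationId is missing.
    fallback = f"{method}_{path.strip('/').replace('/', '_')}"
    return {
        "name": operation.get("operationId") or fallback,
        "description": operation.get("summary", ""),
        "inputSchema": {"type": "object", "properties": properties, "required": required},
    }


# Hypothetical operation, as it might appear under paths["/orders/{order_id}"]["get"].
get_order = {
    "operationId": "getOrder",
    "summary": "Fetch an order by ID",
    "parameters": [
        {"name": "order_id", "in": "path", "required": True, "schema": {"type": "string"}},
        {"name": "verbose", "in": "query", "schema": {"type": "boolean"}},
    ],
}

tool = openapi_operation_to_tool("/orders/{order_id}", "get", get_order)
print(tool["name"], tool["inputSchema"]["required"])
```

The resulting dictionary has the shape an MCP server advertises when a client lists its tools, so an agent can call the REST endpoint without knowing it is REST underneath.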

Cons:

- AI and MCP functionality is delivered through plugins.


6. Lasso Security

Lasso Security is a security-first gateway for GenAI built around an open-source, plugin-based proxy, also known as NAC. It provides real-time threat detection for prompt injection, data exfiltration, memory poisoning, and tool misuse, along with governance and compliance controls.

Pros:

- Purpose-built for AI security (prompt injection, data exfiltration, memory poisoning, and tool misuse)

- Natural-language security policies that can be customized for a business context

- Plugin architecture lets you add capabilities incrementally rather than all at once

- Latency under 50 milliseconds; compliance dashboards aligned with NIST and the EU AI Act

Cons:

- A security layer only: no LLM orchestration features such as routing, failover, or semantic caching

- Enterprise-level plugins (additional guardrail APIs, advanced threat models) require a commercial license

- Smaller community/ecosystem compared to Docker or Kong

7. Cloudflare AI Gateway

An edge-native AI proxy built on Cloudflare's network with built-in analytics, caching, automatic request retries, and rate limiting. It lets you monitor traffic to LLM providers like OpenAI, Anthropic, and Bedrock.

Pros:

- Zero infrastructure to manage (fully managed, globally distributed)

- Unified billing across multiple LLM providers from a single dashboard

- Paperless gateways with Zero Trust principles, MFA, and device posture checks.

Cons:

- Lock-in to the Cloudflare ecosystem (most benefits come from using Cloudflare services exclusively)

- MCP governance is less granular than in other gateways

8. Obot (by Acorn Labs)

Obot is an open-source, self-hosted MCP platform built by Acorn Labs. It covers the infrastructure required for building, deploying, and managing an MCP environment: hosting, registry, gateway, and a built-in MCP-compliant chat client. The platform can be run on either Docker or Kubernetes.

Pros:

- Everything needed to operate a complete MCP environment ships in one package: hosting, registry, gateway, and a chat interface (UI).

- Data never leaves the company's infrastructure, because the platform can be deployed on the customer's own Kubernetes (K8s) cluster.

Cons:

- Obot is at an earlier stage of development, so its community and ecosystem are smaller than those around Docker, Kong, or Cloudflare.

- Operation is entirely DIY: customers handle deployment, scaling, and operations, including managing their own K8s environments.

- Documentation and polish lag behind the platform's feature set and will take time to catch up.

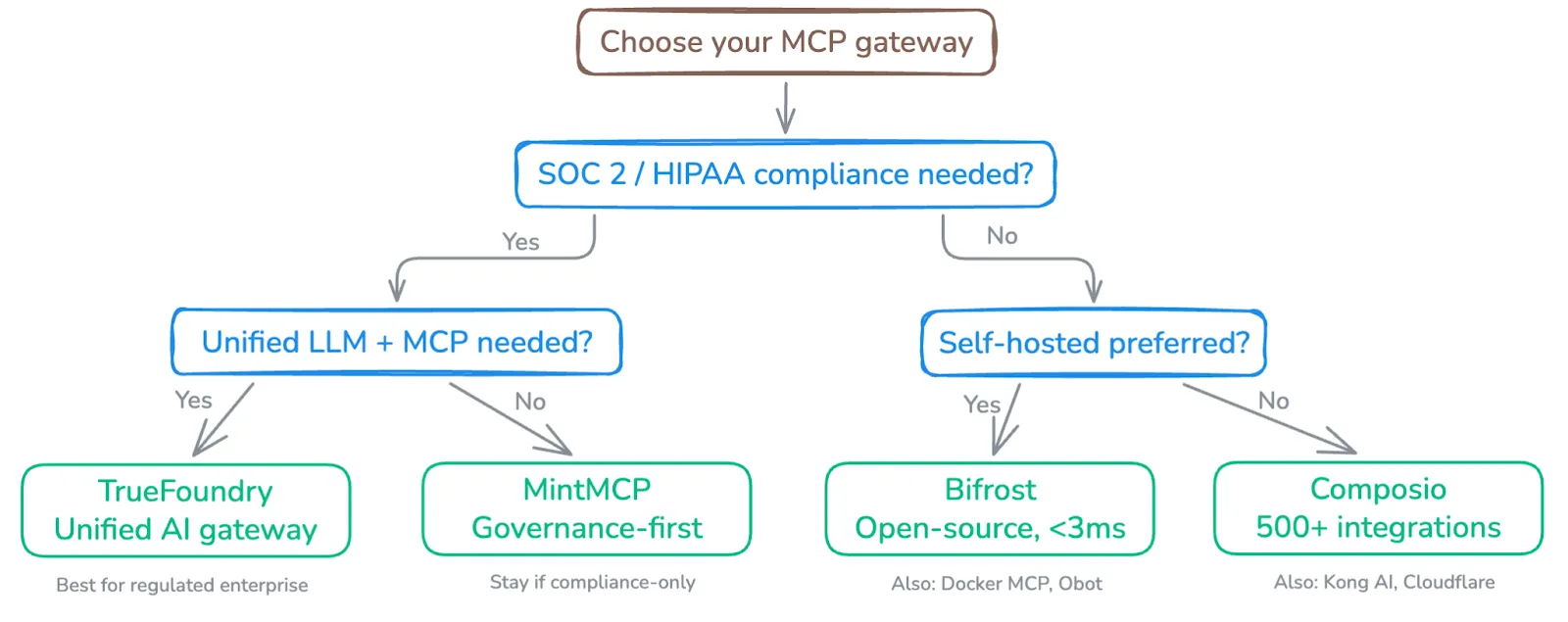

How to Choose the Right Mint MCP Alternative


FAQs

What is Mint MCP used for?

Mint MCP is an MCP gateway focused on governance, compliance, and security for AI agents' access to services and tools. It provides OAuth wrapping and audit logging, and holds SOC 2 Type II certification.

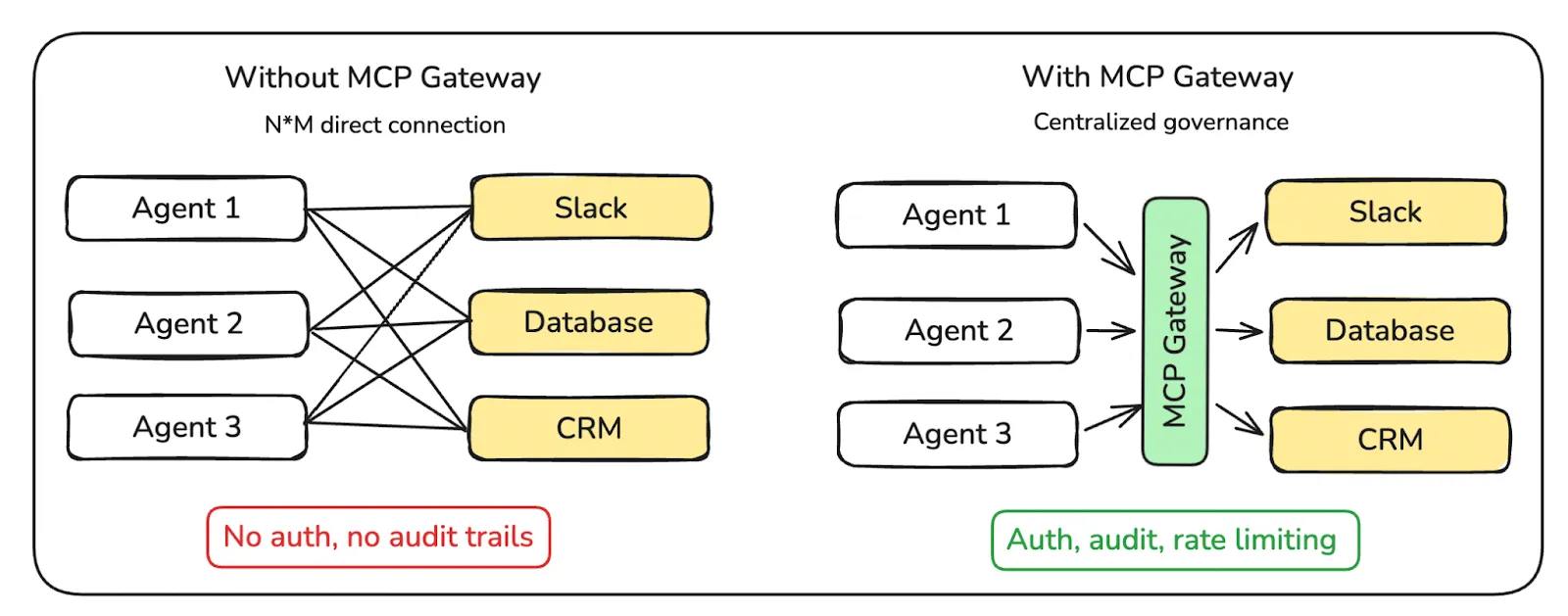

Are MCP gateways a requirement for production AI agents?

Yes, MCP gateways are a requirement for deploying agents into production. Without a gateway, teams can expect:

- Credentials scattered across multiple sources, which makes rotation impractical

- No visibility into how agents interact with their tools

- Risk of data leakage from agents with unmanaged access

- No audit trail to meet regulatory requirements such as SOC 2, HIPAA, or the EU AI Act

- No rate limiting, which can lead to runaway API costs
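The rate limiting point is the easiest of these to picture in code. Below is a minimal token-bucket sketch of the kind a gateway might apply per agent or per tool. It is a generic illustration, not the implementation used by any product in this guide; the `TokenBucket` class and its parameters are assumptions chosen for the example.

```python
import time
from typing import Callable


class TokenBucket:
    """Allow bursts up to `capacity`, refilling at `rate_per_sec` tokens per second."""

    def __init__(self, rate_per_sec: float, capacity: int,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self.rate = rate_per_sec
        self.capacity = capacity
        self.clock = clock  # injectable so the limiter can be tested without sleeping
        self.tokens = float(capacity)
        self.updated = clock()

    def allow(self) -> bool:
        """Return True and consume one token if the call may proceed."""
        now = self.clock()
        # Refill in proportion to elapsed time, never exceeding capacity.
        self.tokens = min(self.capacity, self.tokens + (now - self.updated) * self.rate)
        self.updated = now
        if self.tokens >= 1:
            self.tokens -= 1
            return True
        return False


# One bucket per agent: at most 5 tool calls per second, with bursts of up to 10.
buckets: dict[str, TokenBucket] = {}


def check_rate_limit(agent_id: str) -> bool:
    bucket = buckets.setdefault(agent_id, TokenBucket(rate_per_sec=5, capacity=10))
    return bucket.allow()
```

A gateway would call something like `check_rate_limit` before forwarding each tool call and reject the request when it returns `False`, which caps what a looping agent can spend on a paid API.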

Why do teams seek alternatives to Mint MCP?

Teams typically look for alternatives to Mint MCP for several reasons: they need unified LLM and MCP management across the organization, lower gateway latency, an open-source or self-hosted solution, a more comprehensive integration ecosystem, or better compatibility with existing infrastructure such as Kong, Docker, or Kubernetes.

How do MCP gateways compare to traditional API gateways?

In short, traditional API gateways like Kong and Envoy operate at the HTTP level.

MCP gateways, by contrast, work at the protocol layer between agents and tools. They provide tool discovery, credential management, per-tool RBAC, and audit logs that can be tied to a specific agent. Some products, such as Kong and TrueFoundry, bridge both worlds by combining traditional API gateway and MCP gateway capabilities.
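As a rough illustration of what per-tool RBAC and agent-attributed audit logs mean in practice, the sketch below checks each tool call against a role policy and records the decision. The roles, tool names, and `Gateway` class are hypothetical and do not reflect any specific product's API.

```python
import time
from dataclasses import dataclass, field

# Hypothetical policy: which tools each agent role may call.
POLICY: dict[str, set[str]] = {
    "support-agent": {"lookup_ticket"},
    "ops-agent": {"lookup_ticket", "restart_service"},
}


@dataclass
class Gateway:
    policy: dict[str, set[str]]
    audit_log: list[dict] = field(default_factory=list)

    def authorize(self, agent_id: str, role: str, tool_name: str) -> bool:
        """Decide whether this agent may call the tool, and record the decision."""
        allowed = tool_name in self.policy.get(role, set())
        # Every decision is logged with the agent identity, allowed or not.
        self.audit_log.append({
            "ts": time.time(),
            "agent": agent_id,
            "role": role,
            "tool": tool_name,
            "decision": "allow" if allowed else "deny",
        })
        return allowed


gateway = Gateway(POLICY)
gateway.authorize("agent-42", "support-agent", "lookup_ticket")
gateway.authorize("agent-42", "support-agent", "restart_service")
for entry in gateway.audit_log:
    print(entry["agent"], entry["tool"], entry["decision"])
```

An HTTP-level gateway can restrict a route, but it has no notion of which tool inside an MCP request is being called or which agent asked for it; that is the gap this layer fills.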

What makes TrueFoundry the best Mint MCP alternative for enterprise teams?

- Unified control plane: TrueFoundry combines LLM routing and MCP tool governance in one dashboard. Mint MCP offers only MCP governance.

- Sub-5 ms latency: TrueFoundry's gateway is among the fastest available, processing over 350 requests per second (RPS) on a single vCPU.

- VPC-native deployment: TrueFoundry runs within your own cloud, so your data never leaves your environment.

- SOC 2 and HIPAA: TrueFoundry is SOC 2 and HIPAA compliant. In addition, it provides role-based access control and Virtual MCP Servers.

- Framework integrations: TrueFoundry connects natively with more than 25 agent frameworks, including LangChain, CrewAI, and AutoGen.

TrueFoundry is the path for teams that want more than black-box audit trails and OAuth security to meet their compliance requirements.

Conclusions:

- Mint MCP is a good fit for organizations that require compliance-driven governance; however, as of 2026, many organizations need capabilities beyond those Mint MCP offers.

- No single tool will serve all teams equally. Choose based on compliance needs, deployment/architecture location and performance targets.

- TrueFoundry is the best-in-class option for enterprise teams: it offers LLM and MCP governance, delivers sub-5 ms latency, deploys natively in your VPC, and integrates with 25+ agent frameworks, all from a single unified control plane.


Frequently asked questions

What are the best Mint MCP alternatives?

The best alternatives to Mint MCP for AI agent infrastructure include TrueFoundry's MCP Gateway, Portkey AI Gateway, and LiteLLM. TrueFoundry is particularly strong for enterprises needing centralized MCP server management, access controls, and multi-model routing alongside their agentic tool deployments.

Who are the competitors to Mint MCP?

Mint MCP competes with platforms that offer MCP server hosting, management, and routing capabilities. Key competitors include TrueFoundry's AI Gateway with MCP support, Smithery MCP Registry, and custom MCP deployments built on LiteLLM or Kong. The competitive landscape is rapidly evolving as MCP adoption grows across the industry.

How is TrueFoundry different from Mint MCP?

TrueFoundry is not just an MCP connectivity layer. It is an enterprise-grade AI Gateway platform designed to govern the full lifecycle of agentic AI workloads. While Mint MCP primarily focuses on MCP server hosting, protocol connectivity, and tool access for agents, TrueFoundry combines LLM Gateway, MCP Gateway, and Agent Gateway within a single control plane. This means enterprises can manage not only MCP tool access, but also model routing, security policies, observability, guardrails, and agent execution from one platform.
