Akto Partners with TrueFoundry to Bring Security Guardrails to AI Agents


We’re excited to announce our partnership with Akto that brings runtime security directly into the path of AI agent traffic.

Teams routing agent traffic through TrueFoundry’s AI Gateway can now connect Akto Argus as a first-class guardrails solution to gain real-time visibility, policy enforcement, and runtime protection across prompts, responses, tool calls, and agent workflows in production.

As more teams move from single LLM calls to AI agents that invoke tools, connect to MCP servers, and act on real systems, production readiness requires two things working together:

- a reliable way to deploy, route, and govern agent traffic

- a reliable way to observe, control, and secure what those agents do at runtime

That is exactly what the TrueFoundry and Akto partnership delivers.

Why enterprise agentic AI needs two layers: Gateway and runtime security

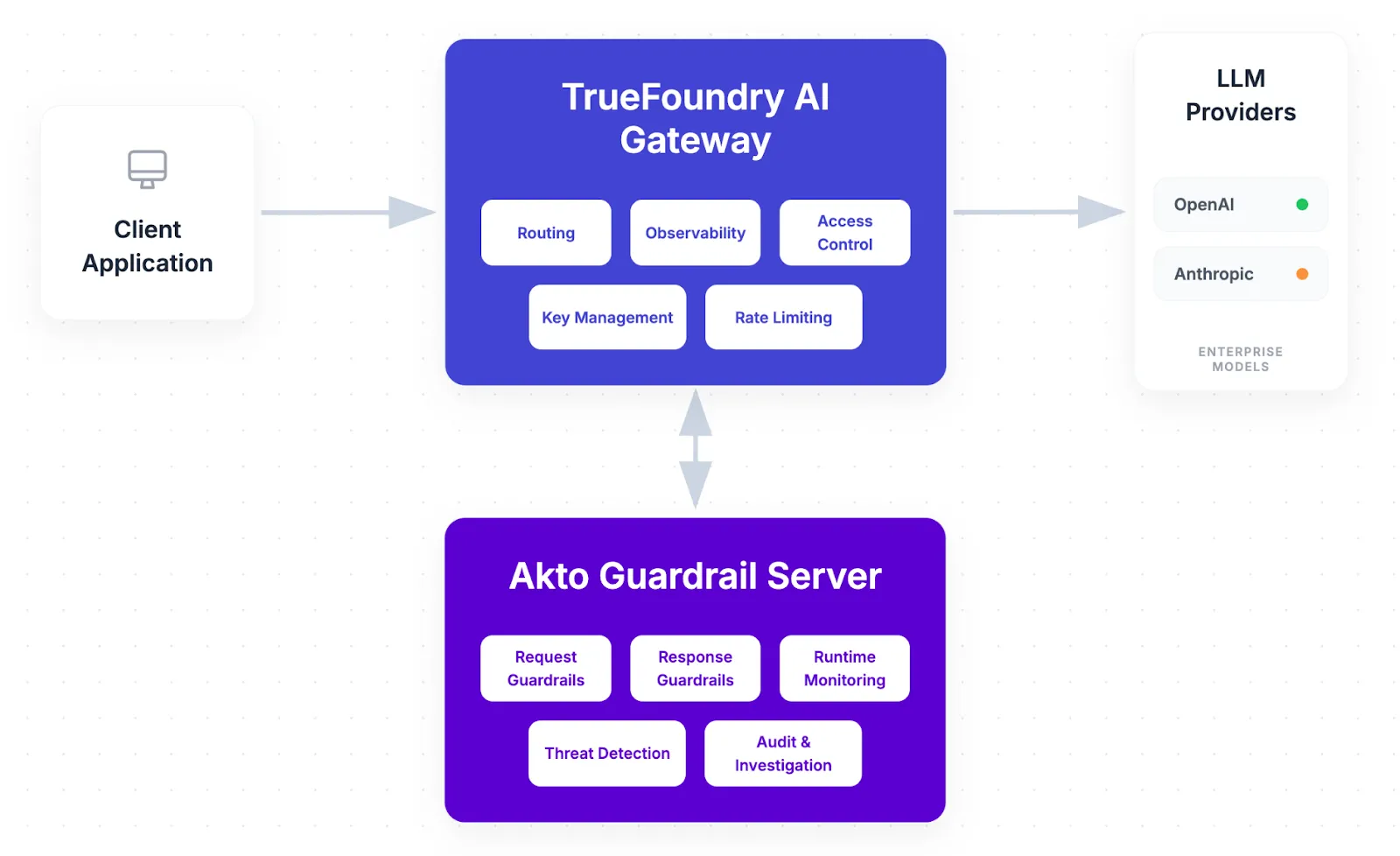

TrueFoundry provides the control layer for production AI systems. With AI Gateway, teams can route their LLM traffic through a proxy layer and centralize model routing, key management, access control, observability, and governance across LLMs, tools, and MCP-connected workflows.

Akto provides the runtime security layer for AI agents, MCP interactions, and LLM-powered applications. With Akto Argus, teams can apply guardrails to agent prompts, responses, tool calls, and agent actions, detecting and responding to issues like prompt injection, data leakage, unsafe outputs, and risky agent behavior as they happen.

Together, the two solutions create a clean production architecture for enterprise AI:

- TrueFoundry handles deployment, routing, and operational control

- Akto handles runtime inspection, AI risk assessment, and guardrail policy enforcement

That combination makes it easier to run enterprise-ready agentic AI systems without pushing security logic into every individual agent or service. TrueFoundry AI Gateway supports Akto guardrails natively through four hooks: beforeRequestHook, afterRequestHook, mcpPreTool, and mcpPostTool.

The gap in production agent deployments

Most teams building AI agents spend the majority of their effort on deployment and reliability: getting agents to call the right tools, manage context correctly, handle retries, and scale across users and environments. That approach is necessary but not sufficient.

Security in many agentic AI deployments still stops at the perimeter: platform access controls, MCP server allowlists, tool-level permissions, scoped credentials for downstream systems, and model routing policies.

Those controls matter, but they do not answer the most important runtime questions:

- What is the agent actually doing once it starts executing?

- Which tools is it calling, in what sequence, and with what data?

- Is it accessing resources outside its intended scope?

- If a prompt injection slips in through retrieved context, an MCP server, or an external API response, is anything stopping the agent before it acts?

Runtime guardrails for AI agents

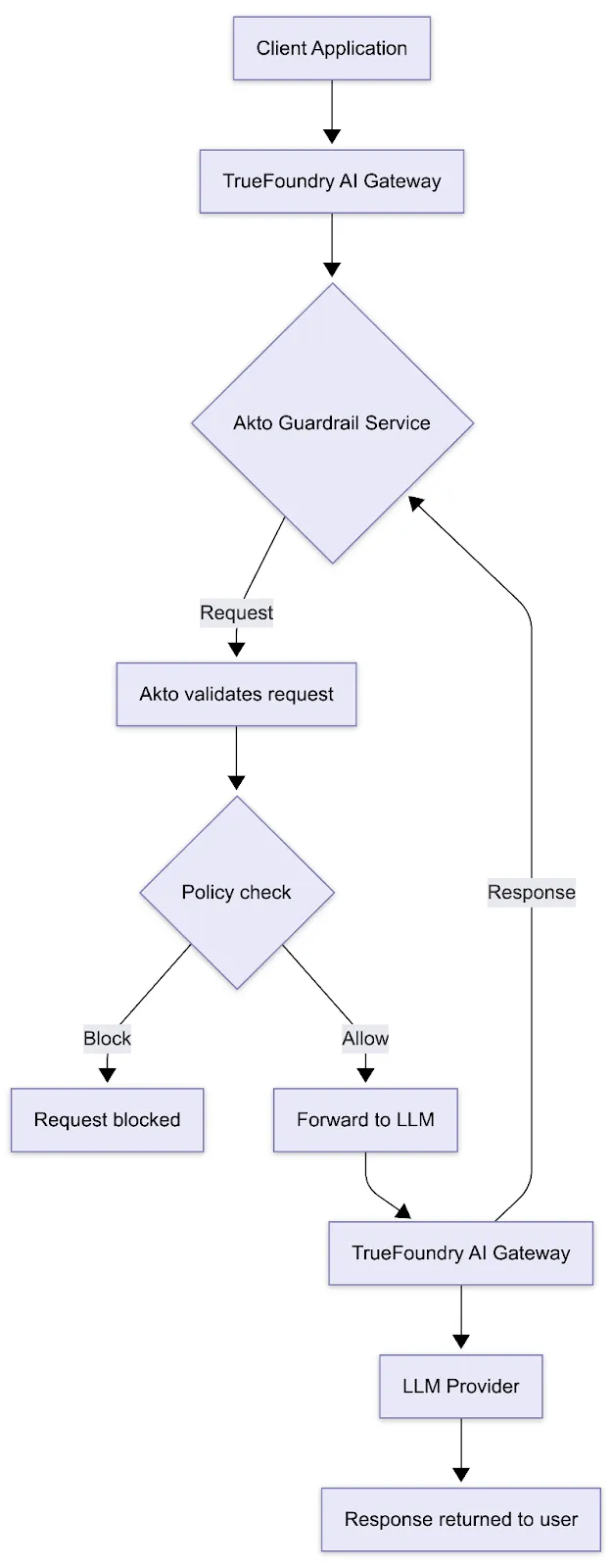

The key architectural idea behind this technical partnership is simple: If all model, tool, and MCP traffic already flows through the gateway, that is the right place to apply runtime security.

With Akto connected to TrueFoundry AI Gateway, teams can enforce runtime guardrails in the same path where agent traffic is already being routed and governed. That means teams can evaluate and control live AI traffic, not just review traces after execution.

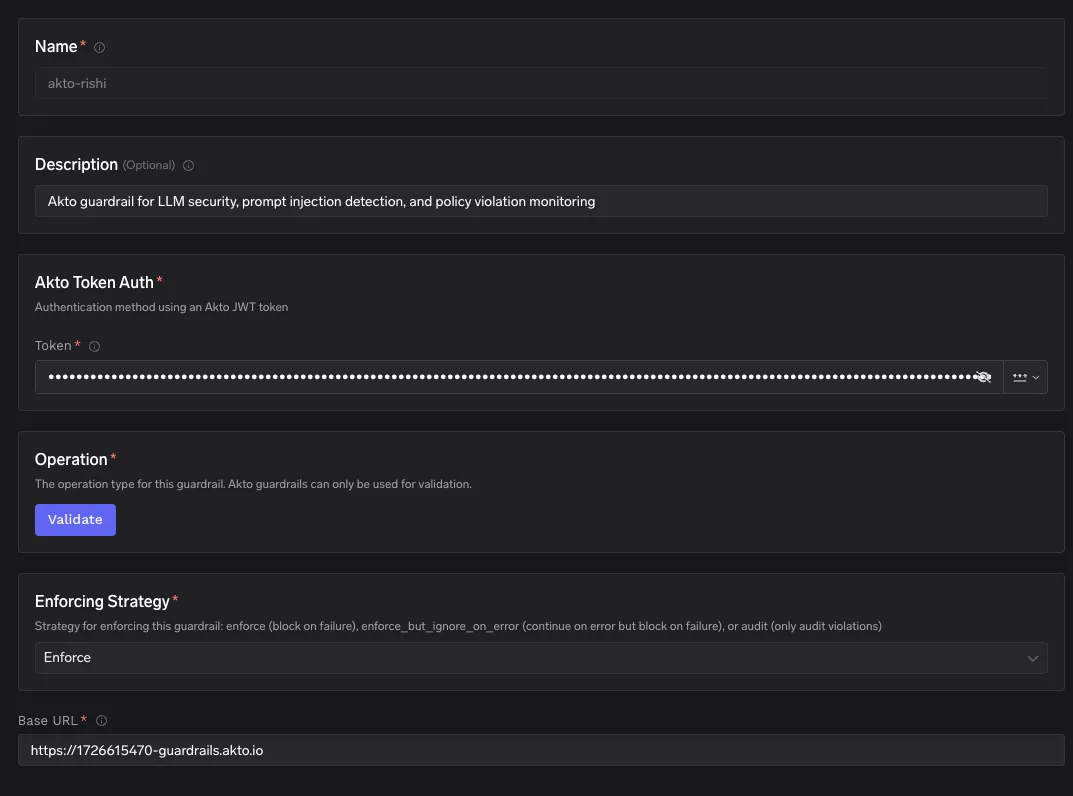

How does Akto add a security layer to your AI Gateway?

Akto Argus is the runtime security layer for AI agents, MCP-connected workflows, and LLM-powered applications.

Akto applies runtime guardrails to the interactions happening in production, so teams can monitor, evaluate, and enforce policy on agent behavior while it is happening.

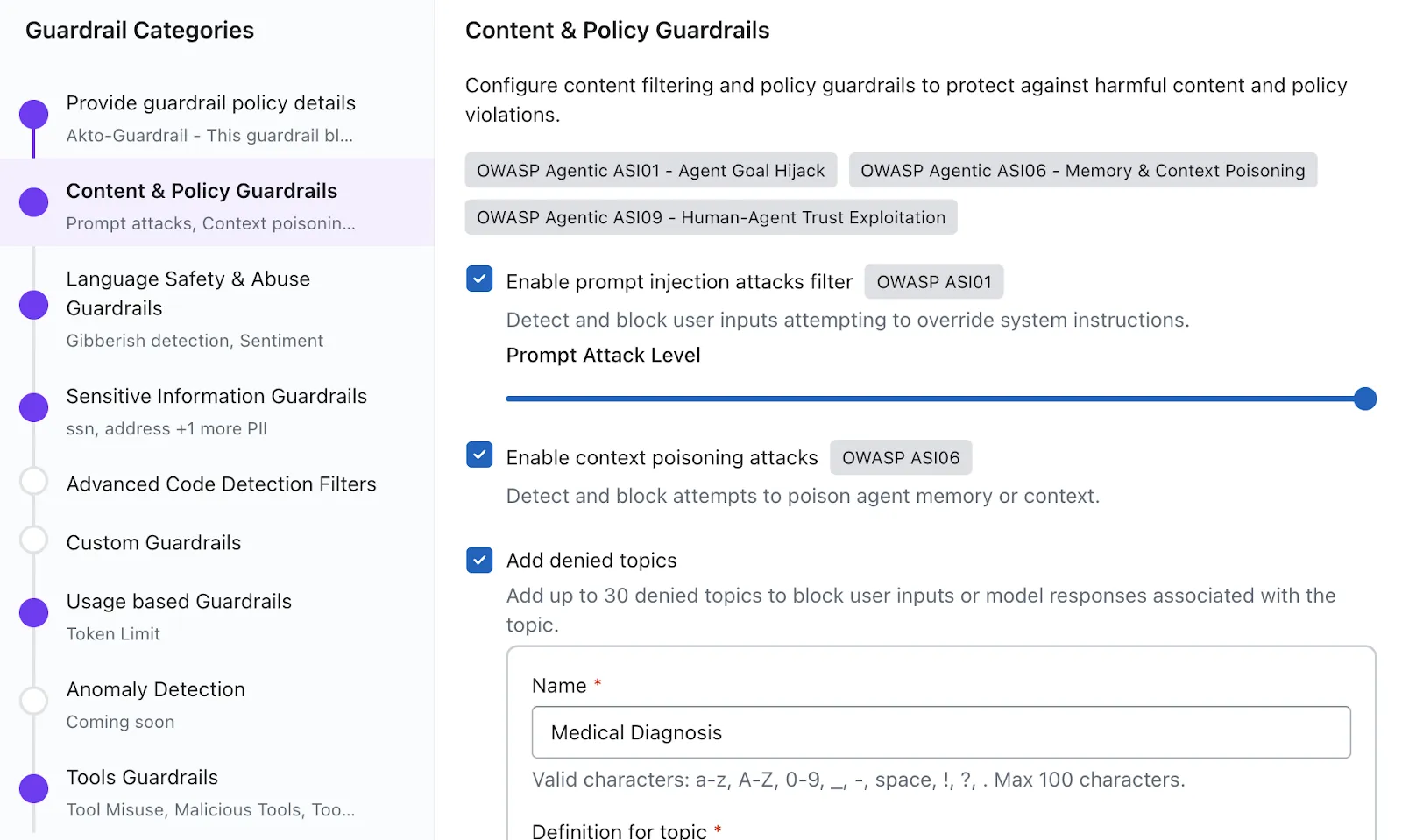

With Akto AI Guardrails enabled, teams can apply controls such as:

- Prompt attack protection to detect and block prompt injection and jailbreak attempts

- Sensitive data protection to identify and redact PII, secrets, credentials, tokens, and internal context

- Output filtering to catch unsafe or policy-violating responses before they reach downstream systems or end users

- Behavioral anomaly detection to surface unusual tool usage, off-pattern workflows, or actions outside expected scope

- Policy enforcement so security and platform teams can allow, redact, block, or flag risky interactions without reworking agent code
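To make the allow/redact/block/flag decisions concrete, here is a toy evaluator in Python. It is a hypothetical sketch, not Akto's detection logic or API: the `Action` and `Verdict` types, the regex detectors, and the 10,000-character flag threshold are all illustrative assumptions. What it does show is the shape of a policy decision: one verdict per interaction, with the most severe applicable action winning.

```python
import re
from dataclasses import dataclass
from enum import Enum


class Action(Enum):
    ALLOW = "allow"
    REDACT = "redact"
    BLOCK = "block"
    FLAG = "flag"


@dataclass
class Verdict:
    action: Action
    text: str           # the (possibly redacted) text to pass on
    reasons: list[str]  # which detectors fired


# Toy detectors (hypothetical); real guardrails use far richer detection.
INJECTION_PATTERNS = [re.compile(r"ignore (all )?previous instructions", re.IGNORECASE)]
EMAIL = re.compile(r"[\w.+-]+@[\w-]+\.[\w.]+")
AWS_KEY = re.compile(r"AKIA[0-9A-Z]{16}")


def evaluate(text: str) -> Verdict:
    """Return one verdict, checking the most severe actions first."""
    if any(p.search(text) for p in INJECTION_PATTERNS):
        return Verdict(Action.BLOCK, text, ["prompt_injection"])
    reasons = []
    redacted = text
    if EMAIL.search(redacted):
        redacted = EMAIL.sub("[REDACTED_EMAIL]", redacted)
        reasons.append("pii_email")
    if AWS_KEY.search(redacted):
        redacted = AWS_KEY.sub("[REDACTED_KEY]", redacted)
        reasons.append("secret_aws_key")
    if reasons:
        return Verdict(Action.REDACT, redacted, reasons)
    if len(text) > 10_000:
        # Not clearly harmful, but worth a human look.
        return Verdict(Action.FLAG, text, ["unusually_long_input"])
    return Verdict(Action.ALLOW, text, [])
```

Ordering the checks from most to least severe keeps the decision deterministic when several detectors fire on the same input.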

For agentic systems, Akto goes beyond a single prompt/response pair. It is built to evaluate tool invocations, MCP requests, and multi-step execution context, because many real failures in agentic AI do not happen in one model response. They emerge across the full chain of actions.
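The point about failures emerging across a chain of actions can be illustrated with a small sequence check. In this hypothetical sketch, the tool names and the two classifications (`SENSITIVE_SOURCES`, `EGRESS_TOOLS`) are invented for the example; the idea is that a read of sensitive data followed later by an outbound call is suspicious even though neither call is risky in isolation.

```python
from dataclasses import dataclass


@dataclass
class ToolCall:
    name: str
    args: dict


# Hypothetical tool classifications; a real deployment would derive these from policy.
SENSITIVE_SOURCES = {"read_secrets", "query_customer_db"}
EGRESS_TOOLS = {"http_post", "send_email"}


def find_exfiltration_chains(calls: list[ToolCall]) -> list[tuple[int, int]]:
    """Return (source_index, egress_index) pairs where a sensitive read precedes egress.

    Each call may look harmless on its own; the risk only appears in the sequence.
    """
    chains = []
    last_sensitive = None
    for i, call in enumerate(calls):
        if call.name in SENSITIVE_SOURCES:
            last_sensitive = i
        elif call.name in EGRESS_TOOLS and last_sensitive is not None:
            chains.append((last_sensitive, i))
    return chains
```

This sketch deliberately checks ordering only; a real detector would also consider whether the egress arguments actually carry the sensitive data.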

How does the technical partnership work?

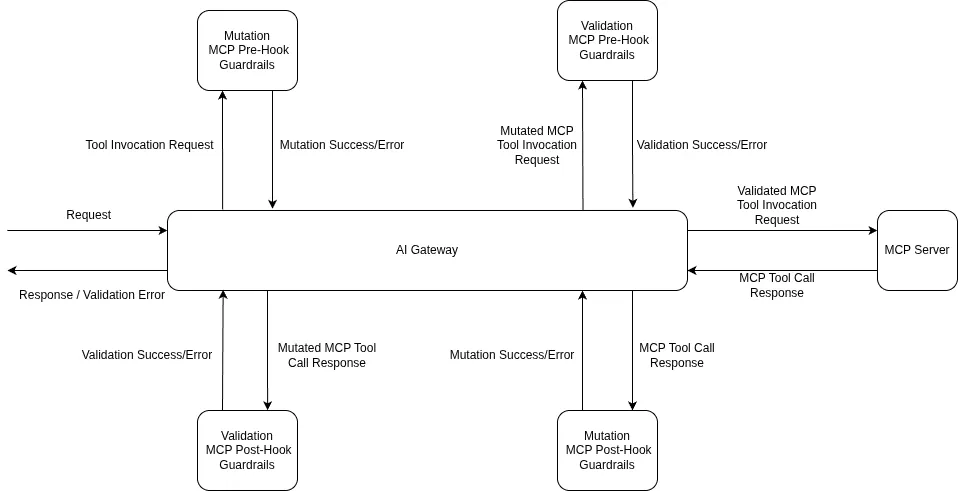

TrueFoundry AI Gateway enforces Akto guardrails through four discrete hooks declared as YAML fields in the guardrail rule configuration. Each hook maps to a specific enforcement point in the request lifecycle, and no changes to agent code are required.

llm_input_guardrails intercepts a prompt before it reaches the model. The gateway sends the request to Akto Argus first; if a violation is detected the request is blocked and the LLM is never called. This is a hard enforcement point: the model call does not proceed until Akto clears the input.
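A minimal sketch of this blocking pattern, assuming hypothetical `check_input` and `call_model` callables that stand in for the Akto Argus call and the upstream LLM respectively (neither is a TrueFoundry or Akto API):

```python
from dataclasses import dataclass
from typing import Callable


class GuardrailViolation(Exception):
    """Raised when the input guardrail rejects a request."""


@dataclass
class InputCheck:
    allowed: bool
    reason: str = ""


def handle_chat(prompt: str,
                check_input: Callable[[str], InputCheck],
                call_model: Callable[[str], str]) -> str:
    # Hard enforcement point: the guardrail runs first and must clear the input.
    result = check_input(prompt)
    if not result.allowed:
        # The model is never invoked for a rejected prompt.
        raise GuardrailViolation(result.reason)
    return call_model(prompt)
```

Because the guardrail call sits on the critical path, its latency adds directly to every request; that is the trade-off for guaranteeing the model never sees a rejected prompt.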

llm_output_guardrails fires after the LLM has responded but before the response is delivered downstream. This hook is non-blocking: the user receives the response immediately while Akto evaluates it asynchronously for unsafe outputs, data leakage, or policy violations. Results surface in the Akto dashboard for compliance review.
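The non-blocking behavior can be sketched with `asyncio`: the response is returned as soon as the model produces it, and evaluation runs as a background task. The `evaluate_output` coroutine, its keyword check, and the in-memory `findings` list (standing in for the Akto dashboard) are hypothetical.

```python
import asyncio
from typing import Awaitable, Callable

# In-memory stand-in for the Akto dashboard (hypothetical).
findings: list[tuple[str, str]] = []


async def evaluate_output(response: str) -> None:
    """Hypothetical output check; the sleep simulates a remote guardrail call."""
    await asyncio.sleep(0.01)
    if "password" in response.lower():
        findings.append(("possible_secret", response))


async def respond(prompt: str,
                  call_model: Callable[[str], Awaitable[str]],
                  background: set[asyncio.Task]) -> str:
    response = await call_model(prompt)
    # Schedule the evaluation but do not await it: the caller gets the response now.
    task = asyncio.create_task(evaluate_output(response))
    # The event loop only keeps weak references to tasks, so hold a strong one.
    background.add(task)
    task.add_done_callback(background.discard)
    return response
```

Holding a reference to each task in `background` follows the asyncio documentation's advice for fire-and-forget tasks, since an unreferenced task can be garbage-collected before it finishes.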

mcp_tool_pre_invoke_guardrails fires before a tool is executed by the agent. Akto evaluates the tool name, its arguments, and calling context at this point. If the arguments contain sensitive data or indicate off-scope resource access, the tool invocation can be blocked before any real-world action occurs.
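As an illustration of argument-level checks, the sketch below blocks calls to tools outside an allowlist and file paths that escape a permitted root. The tool names, `ALLOWED_ROOT`, and the `path` argument convention are hypothetical; this is not Akto's policy format.

```python
import posixpath

# Hypothetical policy: which tools the agent may call, and where file tools may read.
ALLOWED_TOOLS = {"read_file", "search_docs"}
ALLOWED_ROOT = "/srv/agent-workspace"


def pre_invoke_check(tool: str, args: dict) -> tuple[bool, str]:
    """Decide whether a tool call may proceed, before any side effect happens."""
    if tool not in ALLOWED_TOOLS:
        return False, f"tool {tool!r} is not permitted"
    if "path" in args:
        # Resolve relative paths against the root and collapse ".." segments.
        target = posixpath.normpath(posixpath.join(ALLOWED_ROOT, args["path"]))
        if target != ALLOWED_ROOT and not target.startswith(ALLOWED_ROOT + "/"):
            return False, f"path {target!r} is outside {ALLOWED_ROOT}"
    return True, "ok"
```

The path check is purely lexical (`posixpath.normpath`), so it does not follow symlinks; a real enforcement point would need to account for that.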

mcp_tool_post_invoke_guardrails fires after the tool returns its result, before that result is passed back to the agent. This is the enforcement point for detecting data leakage in tool outputs (e.g., credentials, PII, or internal context returned by an MCP server) before it enters the agent's reasoning loop.
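A post-invoke check can be sketched as a wrapper around the tool: the raw result is scanned before it is handed back to the agent, and withheld if it appears to contain a secret. The two regex detectors and the withheld-message format are illustrative assumptions, not Akto's detection rules.

```python
import functools
import re
from typing import Callable

# Illustrative detectors only; real secret scanners use many more rules.
SECRET_PATTERNS = {
    "aws_access_key": re.compile(r"\bAKIA[0-9A-Z]{16}\b"),
    "private_key": re.compile(r"-----BEGIN [A-Z ]*PRIVATE KEY-----"),
}


def guard_tool_output(tool: Callable[..., str]) -> Callable[..., str]:
    """Wrap a tool so its result is scanned before the agent ever sees it."""
    @functools.wraps(tool)
    def wrapper(*args, **kwargs) -> str:
        result = tool(*args, **kwargs)
        hits = [name for name, pat in SECRET_PATTERNS.items() if pat.search(result)]
        if hits:
            # Withhold the raw output; the agent only learns that it was blocked.
            return f"[tool output withheld: {', '.join(hits)}]"
        return result
    return wrapper
```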

Rules are configured in the gateway via a YAML rules block. Each rule uses a when block with two conditions: target (matching on model, mcpServers, mcpTools, or request metadata) and subjects (matching on user or team identity with in and not_in operators). All rules matching a request are evaluated together, and their guardrail sets are unioned per hook: if two rules both target llm_input_guardrails, both guardrails run.

Teams can also override guardrails per request, without modifying the global config, by passing the X-TFY-GUARDRAILS header: a JSON value specifying guardrail selectors for any combination of the four hooks.
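The matching and union semantics can be modeled in a few lines. This is a simplified, hypothetical model of the rule shape: plain dicts rather than TrueFoundry's actual YAML schema, identities written as `user:<name>` or `team:<name>` strings, and invented guardrail selector names. How the X-TFY-GUARDRAILS header combines with config-derived guardrails is also an assumption here: the sketch treats a hook named in the header as replacing that hook's set.

```python
import json

HOOKS = (
    "llm_input_guardrails",
    "llm_output_guardrails",
    "mcp_tool_pre_invoke_guardrails",
    "mcp_tool_post_invoke_guardrails",
)


def target_matches(target: dict, request: dict) -> bool:
    # Every listed field (e.g. "model") must include the request's value.
    return all(request.get(field) in allowed for field, allowed in target.items())


def subjects_match(subjects: dict, identities: set[str]) -> bool:
    # An absent or empty "in" list matches everyone; "not_in" always excludes.
    included = not subjects.get("in") or bool(identities & set(subjects["in"]))
    excluded = bool(identities & set(subjects.get("not_in", [])))
    return included and not excluded


def resolve_guardrails(rules: list[dict], request: dict, identities: set[str],
                       headers: dict[str, str]) -> dict[str, set[str]]:
    resolved = {hook: set() for hook in HOOKS}
    for rule in rules:
        when = rule.get("when", {})
        if (target_matches(when.get("target", {}), request)
                and subjects_match(when.get("subjects", {}), identities)):
            for hook in HOOKS:
                # Union: every matching rule contributes its guardrails to each hook.
                resolved[hook] |= set(rule.get(hook, []))
    override = headers.get("X-TFY-GUARDRAILS")
    if override:
        # Assumption: a hook named in the header replaces the config-derived set.
        for hook, selectors in json.loads(override).items():
            if hook in resolved:
                resolved[hook] = set(selectors)
    return resolved
```

Using sets makes the per-hook union idempotent: a guardrail listed by two matching rules still runs once.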

The Akto Argus connector sits within TrueFoundry's AI Gateway layer. Once configured, every agent interaction and LLM call that flows through the AI Gateway is also observed by Argus, with no instrumentation required in agent code. This gives teams a practical rollout path: block where confidence is high, monitor where visibility comes first.

Built for production agentic AI systems

This partnership is built around a simple idea: security should live in the same path where agent traffic is already being routed and governed.

With TrueFoundry AI Gateway as the control plane for model, tool, and MCP traffic, and Akto Argus as the runtime security layer attached to that path, teams get a practical production architecture for enterprise AI without adding per-agent security logic or changing how applications are built.

For customers, that means:

- centralized control over AI traffic and runtime policy enforcement

- consistent security coverage across prompts, responses, tools, and MCP interactions

- faster rollout of guardrails with a clear path from monitoring to blocking

- shared visibility for platform, security, and engineering teams

As more teams move from simple LLM integrations to real agentic systems, this kind of architecture becomes a baseline requirement, not an optional add-on.

Ready to get started? Explore the Akto connector docs for TrueFoundry and TrueFoundry’s AI Gateway documentation to set up Akto Guardrails.

