MCP vs A2A: Key Differences, Use Cases, and Enterprise Integration

Updated: September 16, 2025

As more enterprises roll out multiple AI agents, a new challenge is surfacing: getting them to actually work together. Too often, one agent’s output doesn’t quite fit as another’s input, leading to broken workflows and inconsistent results. So the question is no longer just ‘can you build an agent?’ but ‘can your agents collaborate?’

That’s where two emerging standards come in: the Agent-to-Agent Protocol (A2A) and the Model Context Protocol (MCP). They may sound similar, but MCP and A2A play different roles. A2A gives agents a common language to communicate, while MCP gives each agent standardized access to the tools and context it needs. The real choice for enterprises is deciding which one to adopt first.

What is Agent-to-Agent (A2A)?

The Agent-to-Agent (A2A) Protocol, announced by Google Cloud in April 2025 with support from over 50 leading technology and consulting partners, is an open standard for agent interoperability. Its goal is straightforward yet transformative: to enable AI agents, regardless of vendor, framework, or modality, to communicate, collaborate, and coordinate tasks seamlessly across enterprise systems.

Key Features of A2A

- Capability Discovery: Agents publish their functions using a JSON-based Agent Card, allowing other agents to identify the right partner for a given task.

- Task Lifecycle Management: Every task has a defined lifecycle, supporting both instant responses and long-running processes with real-time updates and artifact outputs.

- Enterprise-Grade Security: A2A enforces strong authentication and authorization, aligning with OpenAPI security schemes to ensure safe agent collaboration across platforms.

- Modality Agnostic Communication: Beyond text, the protocol supports audio, video, and structured data streams, enabling agents to collaborate in richer, more flexible formats.
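The task lifecycle described above can be pictured as a small state machine. The following is a minimal sketch, not the protocol's reference implementation: the state names are modeled on those commonly listed for A2A tasks, while the `Task` class and the `ALLOWED` transition table are assumptions made purely for illustration.

```python
from enum import Enum


class TaskState(Enum):
    SUBMITTED = "submitted"
    WORKING = "working"
    INPUT_REQUIRED = "input-required"
    COMPLETED = "completed"
    FAILED = "failed"
    CANCELED = "canceled"


# Allowed transitions: an assumption for this sketch, not quoted from the spec.
ALLOWED = {
    TaskState.SUBMITTED: {TaskState.WORKING, TaskState.FAILED, TaskState.CANCELED},
    TaskState.WORKING: {
        TaskState.INPUT_REQUIRED,
        TaskState.COMPLETED,
        TaskState.FAILED,
        TaskState.CANCELED,
    },
    TaskState.INPUT_REQUIRED: {TaskState.WORKING, TaskState.CANCELED},
    TaskState.COMPLETED: set(),
    TaskState.FAILED: set(),
    TaskState.CANCELED: set(),
}


class Task:
    """Hypothetical task record that tracks state changes and artifacts."""

    def __init__(self, task_id):
        self.id = task_id
        self.state = TaskState.SUBMITTED
        self.history = [TaskState.SUBMITTED]
        self.artifacts = []

    def advance(self, new_state):
        # Reject any transition the table does not list.
        if new_state not in ALLOWED[self.state]:
            raise ValueError(
                f"illegal transition {self.state.value} -> {new_state.value}"
            )
        self.state = new_state
        self.history.append(new_state)

    def is_terminal(self):
        # A state with no outgoing transitions ends the lifecycle.
        return not ALLOWED[self.state]


task = Task("task-1")
task.advance(TaskState.WORKING)
task.artifacts.append({"name": "summary.txt", "text": "done"})
task.advance(TaskState.COMPLETED)
```

Keeping the transitions in one table makes a long-running task easy to audit: every update a remote agent streams back either matches an allowed edge or is rejected.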

Unlike brittle, one-off integrations, A2A provides a formalized protocol layer built on standards like HTTP, SSE, and JSON-RPC, making it highly compatible with existing IT stacks.
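To make the JSON-RPC layer concrete, here is a rough sketch of the JSON-RPC 2.0 envelope that such a protocol exchanges. The envelope fields (`jsonrpc`, `id`, `method`, `params`, `result`, `error`) and the `-32601` "method not found" code come from the JSON-RPC 2.0 specification. The helper names and the `message/send` method string are assumptions used only for illustration.

```python
import itertools
import json

# Monotonic request ids so each response can be matched to its request.
_ids = itertools.count(1)


def make_request(method, params):
    """Build a JSON-RPC 2.0 request object."""
    return {"jsonrpc": "2.0", "id": next(_ids), "method": method, "params": params}


def make_result(request, result):
    """Build a success response that echoes the request id."""
    return {"jsonrpc": "2.0", "id": request["id"], "result": result}


def make_error(request, code, message):
    """Build an error response that echoes the request id."""
    return {
        "jsonrpc": "2.0",
        "id": request["id"],
        "error": {"code": code, "message": message},
    }


# Hypothetical method name and payload, for illustration only.
request = make_request("message/send", {"text": "Check stock for order 42"})
response = make_result(request, {"state": "working"})
not_found = make_error(request, -32601, "Method not found")

# What would travel in the HTTP request body.
wire_body = json.dumps(request)
```

Because the response echoes the request `id`, a client can keep several requests in flight over the same connection and still pair each reply with its call.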

The result is a universal framework where agents become interoperable components of a scalable, multi-agent ecosystem, capable of automating complex enterprise workflows with reliability and reduced integration costs.

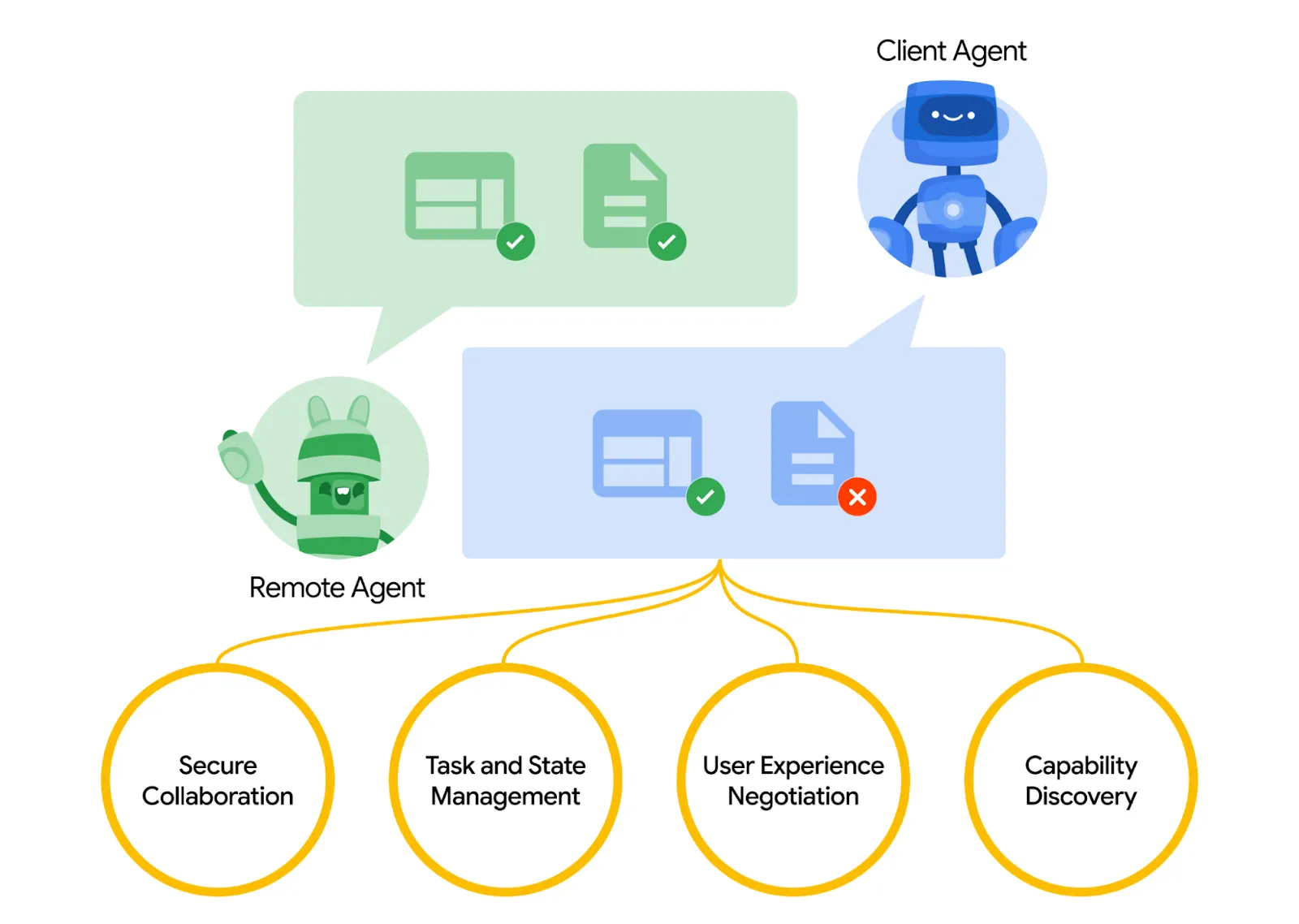

How Does Agent-to-Agent (A2A) Work?

Agent-to-Agent (A2A) enables seamless collaboration between a “client” agent and a “remote” agent. The client agent defines tasks and communicates what needs to be done, while the remote agent executes those tasks to deliver the desired outcomes.

Agents share their capabilities through “Agent Cards” in JSON format, allowing the client to identify the most suitable agent for each task. Once a task is assigned, both agents coordinate throughout its lifecycle, keeping each other updated on progress and exchanging results, or “artifacts.”
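As a rough illustration of capability discovery, the sketch below treats Agent Cards as plain dictionaries and picks the agents whose advertised skills match a task. The field names (`name`, `url`, `skills`, `tags`) loosely follow the shape of an Agent Card but should be read as assumptions, and the example URLs and `find_agents` helper are hypothetical.

```python
# Hypothetical Agent Cards, as a client might have fetched them from remote agents.
AGENT_CARDS = [
    {
        "name": "inventory-agent",
        "url": "https://agents.example.com/inventory",
        "skills": [{"id": "stock-lookup", "tags": ["inventory", "stock"]}],
    },
    {
        "name": "report-agent",
        "url": "https://agents.example.com/reports",
        "skills": [{"id": "summarize", "tags": ["report", "summary"]}],
    },
    {
        "name": "logistics-agent",
        "url": "https://agents.example.com/logistics",
        "skills": [{"id": "eta", "tags": ["shipping", "stock"]}],
    },
]


def find_agents(cards, required_tag):
    """Return the names of agents advertising at least one skill with the tag."""
    return [
        card["name"]
        for card in cards
        if any(required_tag in skill.get("tags", []) for skill in card.get("skills", []))
    ]


stock_agents = find_agents(AGENT_CARDS, "stock")
report_agents = find_agents(AGENT_CARDS, "report")
```

A real client would add ranking, authentication checks, and fallbacks. The point here is only that discovery reduces to matching a task against machine-readable metadata.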

Beyond task execution, agents communicate context, instructions, and responses to stay aligned. Each message consists of distinct “parts” with specified content types, enabling agents to negotiate the correct format for the user interface, whether images, video, interactive forms, or other elements, so the end user receives the information exactly as needed.
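The idea of typed message parts can be sketched as follows. This is an illustrative model only: the `kind` discriminator and the `text`, `data`, and `file` part shapes are assumptions that approximate how multi-part messages are commonly described, and `renderable_parts` is a hypothetical helper.

```python
# A hypothetical agent reply made of several typed parts.
message = {
    "role": "agent",
    "parts": [
        {"kind": "text", "text": "Stock levels are attached."},
        {"kind": "data", "data": {"Phone A": 12, "Phone B": 0}},
        {
            "kind": "file",
            "file": {"name": "chart.png", "mimeType": "image/png"},
        },
    ],
}


def renderable_parts(msg, supported_kinds):
    """Keep only the parts that the client's user interface can display."""
    return [part for part in msg["parts"] if part["kind"] in supported_kinds]


# A text-only client (for example, a chat widget) drops the data and file parts.
text_only = renderable_parts(message, {"text"})

# A richer client can also render structured data and files.
rich = renderable_parts(message, {"text", "data", "file"})
```

Filtering by part type is the simplest form of the negotiation the paragraph describes: the sender offers several representations, and the receiving side keeps what it can present to the user.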

What is Model Context Protocol (MCP)?

The Model Context Protocol (MCP), introduced by Anthropic in 2024, is an open standard for connecting AI applications with external tools, databases, and services. Acting as a universal integration layer, MCP eliminates the brittle, ad hoc connectors that often plague multi-agent systems.

Instead, it provides a standardized communication channel that makes agents context-aware, scalable, and more reliable in production environments.

Just as USB-C standardized hardware connectivity, MCP standardizes how agents interface with heterogeneous tools and data sources.

Key Features of Model Context Protocol (MCP)

The Model Context Protocol (MCP) lets a single AI agent use external tools and resources efficiently. Its key features are:

- Standardized Tool Integration: Provides a uniform protocol for LLMs and agents to connect with APIs, databases, and services without custom glue code.

- Context Management: Streamlines the flow of relevant information, including memory, prior outputs, and tool results, so agents operate with the right context at the right time.

- Client–Server Architecture: Uses a modular model with MCP hosts, clients, and servers, enabling flexible integration with IDEs, collaboration platforms, and cloud services.

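- Transport-Layer Interoperability: Employs JSON-RPC 2.0 over stdio for local servers or over HTTP for remote ones (originally HTTP with Server-Sent Events, with later spec revisions adding a streamable HTTP transport), supporting both lightweight synchronous tasks and asynchronous, event-driven workflows.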
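For the stdio transport, messages are typically exchanged as newline-delimited JSON, one JSON-RPC message per line. The sketch below shows that framing with an in-memory stream standing in for a server's stdin or stdout. The helper names are hypothetical and no MCP SDK is used.

```python
import io
import json


def write_message(stream, message):
    """Serialize one JSON-RPC message as a single line."""
    # json.dumps escapes newlines inside strings, so each message stays on one line.
    stream.write(json.dumps(message, separators=(",", ":")) + "\n")


def read_messages(stream):
    """Yield one decoded message per non-empty line."""
    for line in stream:
        line = line.strip()
        if line:
            yield json.loads(line)


# An in-memory buffer stands in for the pipe between client and server.
pipe = io.StringIO()
write_message(pipe, {"jsonrpc": "2.0", "id": 1, "method": "tools/list"})
write_message(
    pipe,
    {
        "jsonrpc": "2.0",
        "id": 2,
        "method": "tools/call",
        "params": {"name": "echo", "arguments": {"text": "line one\nline two"}},
    },
)

pipe.seek(0)
received = list(read_messages(pipe))
```

Line-based framing keeps a local server trivial to launch as a subprocess, which is part of why stdio suits tools running on the same machine as the host application.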

Unlike orchestration frameworks such as LangChain or CrewAI, MCP does not decide when a tool should be invoked.

Instead, it establishes the standard wiring layer that ensures tools, prompts, and resources are seamlessly available to agents. This transforms multi-agent systems from brittle prototypes into enterprise-grade, interoperable AI ecosystems.

How Does the Model Context Protocol (MCP) Work?

The Model Context Protocol lets an LLM complete tasks by using external tools that go beyond its native capabilities. For example, suppose you ask an AI assistant to “Check the inventory for the latest smartphone models and create a summary report.” MCP supplies the standard interface for each step.

The LLM recognizes it cannot directly access the inventory database or generate a report on its own, so the host application uses MCP to discover the tools its connected servers expose. It finds an inventory lookup tool to retrieve product data and a report generator tool to create the summary.

The LLM then sends structured requests to these tools: the inventory tool fetches the latest product information, and the report generator formats this data into a readable summary. Once both steps are completed, the LLM presents the final report to the user. By standardizing tool discovery, invocation, and response handling, MCP enables LLMs to safely and efficiently extend their capabilities for real-world tasks; the decision about which tool to call, and when, stays with the model and its host application.
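The inventory example can be sketched as a toy, in-memory tool server. The method names `tools/list` and `tools/call`, and the `name` and `arguments` parameters, mirror those used by MCP. Everything else is an assumption for illustration: the registry, the two tools, the hard-coded stock data, and the simplified return values (a real server wraps results in a structured content payload and speaks JSON-RPC over a transport).

```python
TOOLS = {}


def tool(name, description):
    """Decorator that registers a function as a discoverable tool."""
    def register(fn):
        TOOLS[name] = {"description": description, "fn": fn}
        return fn
    return register


@tool("inventory_lookup", "Return stock counts for a product category")
def inventory_lookup(category):
    # Hard-coded data standing in for a real inventory database.
    stock = {"smartphones": {"Phone A": 12, "Phone B": 0}}
    return stock.get(category, {})


@tool("report_generator", "Format inventory data as a text summary")
def report_generator(inventory):
    lines = [f"{name}: {count} in stock" for name, count in sorted(inventory.items())]
    return "\n".join(lines)


def handle(method, params=None):
    """Dispatch a request the way a minimal tool server might."""
    params = params or {}
    if method == "tools/list":
        return [{"name": n, "description": t["description"]} for n, t in TOOLS.items()]
    if method == "tools/call":
        entry = TOOLS[params["name"]]
        return entry["fn"](**params.get("arguments", {}))
    raise ValueError(f"unknown method: {method}")


# Step 1: discover tools. Step 2: fetch data. Step 3: format the report.
available = [t["name"] for t in handle("tools/list")]
data = handle("tools/call", {"name": "inventory_lookup", "arguments": {"category": "smartphones"}})
report = handle("tools/call", {"name": "report_generator", "arguments": {"inventory": data}})
```

Note that nothing in `handle` chooses which tool to run. The caller (in practice, the model working through its host application) makes that choice, and the protocol only standardizes how the request and result are expressed.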

MCP vs A2A: Core Differences

When scaling AI, the choice of protocol determines how agents share context, access tools, and collaborate. MCP and A2A are complementary but operate at different layers: MCP standardizes model-to-tool interactions, powering each agent internally with context and tools, while A2A connects agents externally so they can coordinate tasks and communicate across systems. Together, they form a robust framework for scalable, multi-agent AI systems.

Here’s a concise comparison of MCP vs A2A.

| Feature | MCP | A2A |
|---|---|---|
| Goal | Standardize tool & data integration for agents | Enable multi-agent collaboration and task sharing |
| Architecture | Client-server: hosts, clients, servers; JSON-RPC over stdio/SSE | Peer model: client & remote agents; tasks, artifacts, streams |
| Scope | Enhances single-agent capabilities | Coordinates multiple agents across workflows |
| Discovery | Exposes available tools/resources/prompts | Agent Cards advertise capabilities dynamically |
| Task handling | Tools executed as needed; context-driven | Tasks with lifecycles, long-running, with updates/artifacts |
| Modality | Structured data, APIs, prompts | Structured data + audio, video, UI negotiation |
| Messaging layer | JSON-RPC 2.0 over stdio, HTTP, SSE | HTTP, SSE, JSON-RPC with secure cross-agent messaging |
| Security | Tool/resource access control, permissions | Cross-agent auth, secure discovery, identity management |

Advantages of the Agent2Agent (A2A) Protocol

The Agent2Agent (A2A) Protocol is a transformative standard that enables AI agents to collaborate seamlessly across various platforms and frameworks. By facilitating secure, context-aware communication, A2A empowers enterprises to build scalable, interoperable multi-agent ecosystems.

- Seamless Interoperability: A2A allows agents from different vendors and frameworks to communicate effortlessly, eliminating integration barriers and promoting a unified AI ecosystem.

- Enhanced Task Orchestration: The protocol supports complex task management, enabling agents to delegate responsibilities, track progress, and manage long-running workflows efficiently.

- Modality-Agnostic Communication: A2A accommodates various communication modalities, including text, audio, and video, allowing agents to interact in diverse formats suited to specific tasks.

- Enterprise-Grade Security: Built with robust authentication and authorization mechanisms, A2A ensures secure agent interactions, safeguarding sensitive enterprise data.

- Scalability and Flexibility: The protocol's design supports the dynamic addition of new agents and capabilities, facilitating the growth of AI ecosystems without significant reconfiguration.

- Standardized Communication Protocol: A2A utilizes widely adopted standards such as HTTP, SSE, and JSON-RPC, simplifying integration with existing IT infrastructures.

- Context-Aware Collaboration: Agents can share and understand each other's context, leading to more informed decision-making and efficient task execution.

- Accelerated Development Cycles: By providing a common communication framework, A2A reduces development time for multi-agent systems, enabling faster deployment of AI solutions.

Several enterprises have already seen tangible benefits from implementing A2A. For example, Comparus, which uses IBM watsonx.ai solutions, reported that integrating the protocol significantly streamlined their AI operations.

Their agents are now able to collaborate more effectively across different workflows, resulting in faster task completion and improved service delivery for clients. This real-world adoption underscores the protocol’s potential to transform multi-agent AI ecosystems.

Advantages of the Model Context Protocol (MCP)

The Model Context Protocol (MCP) is revolutionizing the way AI agents interact with external tools and data sources. By providing a standardized framework, MCP enables seamless integration, enhancing the capabilities and efficiency of AI systems. Here are the key advantages:

- Standardized Integration: MCP offers a universal interface for connecting AI agents to various tools and data sources, reducing the complexity of custom integrations.

- Enhanced Interoperability: AI agents can access a diverse ecosystem of resources, including APIs, databases, and files, ensuring consistent performance across different platforms.

- Reduced Development Time: Developers can leverage MCP to quickly integrate new tools and data sources, accelerating the development cycle and time-to-market for AI applications.

- Improved Security: MCP incorporates robust security measures, such as controlled access to resources and secure communication protocols, safeguarding sensitive data during interactions.

- Dynamic Context Management: The protocol allows AI agents to maintain context across different tools and interactions, enabling more coherent and context-aware responses.

- Scalability: MCP's modular architecture supports the addition of new tools and data sources without significant reconfiguration, facilitating scalable AI solutions.

- Ecosystem Growth: By providing a common standard, MCP encourages the development of a wide range of compatible tools and services, fostering a vibrant AI ecosystem.

- Future-Proofing: As an open-source protocol, MCP is continuously evolving, ensuring that AI systems remain adaptable to emerging technologies and requirements.

Several enterprises have reported measurable improvements after adopting MCP. Tech Innovators Inc., for example, found that integrating the protocol streamlined their development process, allowing their AI agents to connect seamlessly with multiple tools and data sources.

As a result, the agents' capabilities expanded, workflows became more efficient, and overall system performance improved significantly.

MCP vs A2A: When to Use Each

Scaling AI in the enterprise isn’t just about building powerful agents; it’s about making them work together effectively. Two protocols are leading this effort: MCP and A2A.

While both enhance AI systems, they operate at different layers. MCP focuses on providing context and connecting tools, whereas A2A enables agents to communicate and collaborate seamlessly. The question isn’t which is better, but which fits your use case or how you can combine them to maximize results.

MCP: Context and Tool Integration

MCP shines when your AI agents need structured, reliable access to external resources. Its primary strength is standardization, ensuring agents can consistently interact with APIs, databases, and templates.

Enterprises should consider MCP when:

- Agents require access to internal or external data sources like databases or knowledge bases.

- You want standardized tool execution across multiple agents.

- Long-running or multi-step tasks demand a maintained context.

- You aim to reduce integration overhead, avoiding custom code for every new tool.

For instance, a customer service agent using MCP can seamlessly query multiple knowledge bases and use prebuilt templates to respond to users without custom scripting.

A2A: Multi-Agent Collaboration

A2A is essential when multiple agents need to coordinate and communicate in real time. It provides a secure, modality-agnostic framework for cross-agent task orchestration.

Consider A2A when:

- You have multiple autonomous agents handling shared workflows.

- Tasks involve handoffs, artifact sharing, or collaborative decision-making.

- Agents need to communicate over text, audio, or video channels.

- Security and identity management for agent interactions are critical.

For example, in a supply chain scenario, procurement, logistics, and customer notification agents can use A2A to synchronize tasks and share updates automatically.

Future of AI Agent Protocols

As AI continues to evolve, agent protocols like MCP and A2A are poised to become the backbone of intelligent, collaborative systems.

- Seamless multi-agent collaboration: Agents will coordinate more efficiently, dynamically allocating tasks and sharing context in real time.

- Advanced capability discovery: Agents will autonomously identify the best tools and collaborators for each task.

- Adaptive workflows: Systems will adjust automatically to changing requirements, contexts, and user needs.

- Enhanced security and governance: Cross-agent communication and tool usage will be safe, auditable, and compliant.

- Scalable, production-ready applications: Protocols will simplify building complex AI workflows, making enterprise-grade AI accessible and reliable.

- Human-like team efficiency: Agents will operate together more like a cohesive team, tackling increasingly sophisticated tasks.

Can We Use Both MCP and A2A?

Practically, many enterprises use both protocols together. MCP ensures each agent has the right context and tools, while A2A enables those agents to collaborate effectively across complex workflows. This combination maximizes efficiency, scalability, and security.
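A toy sketch of this layering might look as follows. It is deliberately protocol-free: `ToolBackedAgent`, `delegate`, and the task and result dictionaries are hypothetical stand-ins. The agent's private tool table plays the role MCP would play, and the task handoff plays the role A2A would play.

```python
class ToolBackedAgent:
    """Hypothetical remote agent: accepts tasks and fulfils them with its own tools."""

    def __init__(self, name, tools):
        self.name = name
        self.tools = tools  # tool access layer: skill name -> callable

    def handle_task(self, task):
        # Collaboration layer: a task arrives from another agent.
        tool = self.tools.get(task["skill"])
        if tool is None:
            return {"id": task["id"], "state": "failed", "artifacts": []}
        # Tool access layer: the agent calls its own tool to do the work.
        output = tool(**task["input"])
        return {"id": task["id"], "state": "completed", "artifacts": [output]}


def delegate(remote_agent, skill, payload, task_id="task-1"):
    """Client-side helper that hands a task to a remote agent."""
    return remote_agent.handle_task({"id": task_id, "skill": skill, "input": payload})


def estimate_eta(order_id):
    # Stand-in for a real logistics lookup.
    return {"order_id": order_id, "eta_days": 3}


logistics = ToolBackedAgent("logistics", {"eta": estimate_eta})

ok = delegate(logistics, "eta", {"order_id": "A-100"})
missing = delegate(logistics, "refund", {"order_id": "A-100"}, task_id="task-2")
```

The calling agent never sees how the logistics agent produced its answer. It only sees a task result with artifacts, which is the separation of concerns the two protocols aim for when used together.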

Choosing the right protocol or layering both is a strategic decision. By understanding the strengths of MCP and A2A, enterprises can design AI ecosystems that are not only powerful but cohesive, context-aware, and collaborative.

Misconceptions Related To MCP and A2A

Despite their power, the MCP and A2A protocols are often misunderstood. A few common misconceptions include:

- MCP is only for complex systems: Many assume MCP is useful only in large-scale setups, but it also simplifies single-agent workflows by standardizing tool access and task execution.

- A2A is just messaging between agents: While A2A enables agent communication, it is more than a messaging layer: it orchestrates task lifecycles, artifact exchange, and user interface negotiation.

- Agents must be human-like: Some think agents need human-like reasoning to use MCP or A2A effectively. In reality, these protocols focus on structured coordination, tool usage, and reliable task handling, independent of human-like intelligence.

- Security and governance are optional: Some assume that security can be bolted on later, or that cross-agent protocols inherently compromise safety. Both MCP and A2A are designed with access control, authentication, and observability in mind.

- MCP and A2A are mutually exclusive: Users may believe you have to choose one protocol over the other. In practice, they complement each other: MCP strengthens single-agent capabilities, while A2A enables multi-agent orchestration.

Final Thoughts

As enterprises scale AI, protocols like MCP and A2A are no longer optional; they are essential. MCP ensures agents have the right context and tools to operate efficiently, while A2A enables seamless collaboration between multiple agents across workflows and platforms.

Together, they create a powerful, interoperable AI ecosystem capable of handling complex tasks, automating processes, and driving productivity. Choosing the right protocol or combining both strategically can make the difference between fragmented, siloed AI and a cohesive, high-performing agent network. For enterprises aiming to lead in the AI era, understanding and adopting these protocols is the first step toward future-ready, scalable intelligence.

Deploy Your Multi-Agent AI Workflows today with TrueFoundry. Book a demo to see it in action.

Frequently Asked Questions

Can MCP replace A2A?

While both standards improve AI interoperability, MCP cannot fully replace A2A because they solve different architectural challenges. MCP is designed to connect a single agent to tools and data, whereas A2A focuses on coordinating communication and task handoffs between multiple independent agents. Using them together creates a more scalable AI ecosystem.

What is the difference between A2A and MCP?

The primary difference between MCP and A2A lies in their functional scope and architecture. MCP uses a client-server model to provide agents with external context and tool access, while A2A utilizes a peer-to-peer model for agent collaboration. While MCP anchors an agent in data, A2A enables agents to negotiate and share tasks across different systems.

Can MCP and A2A be used together?

Yes, combining MCP and A2A is often the ideal strategy for enterprise agentic workflows. In this hybrid setup, MCP ensures each individual agent has standardized access to the tools it needs, while A2A manages the high-level orchestration and communication between those agents. This layering maximizes both internal agent intelligence and external collaborative efficiency.

What are MCP and A2A in agentic AI?

MCP and A2A serve as the critical communication backbone. MCP acts as the universal connector for models to interact with databases and APIs, while A2A provides the common language for agents to work together on complex goals. TrueFoundry unifies these protocols into a single gateway, providing the governance and security required for production-grade agent deployment.

Is A2A part of MCP?

No, A2A is not part of MCP. While MCP standardizes tool access and task execution for a single agent, A2A focuses on enabling communication and coordination between multiple agents. They serve complementary purposes: MCP enhances individual agent capabilities, and A2A orchestrates multi-agent workflows for collaboration and shared task completion.

What is one limitation of MCP that A2A addresses?

MCP is designed for single-agent workflows, limiting its ability to coordinate tasks across multiple agents. A2A addresses this by enabling agents to communicate, share context, exchange artifacts, and negotiate outputs. This multi-agent orchestration allows complex, adaptive workflows that MCP alone cannot manage, providing greater flexibility and collaboration in AI systems.
