
Adding TrueFoundry Hosted Models to the Gateway

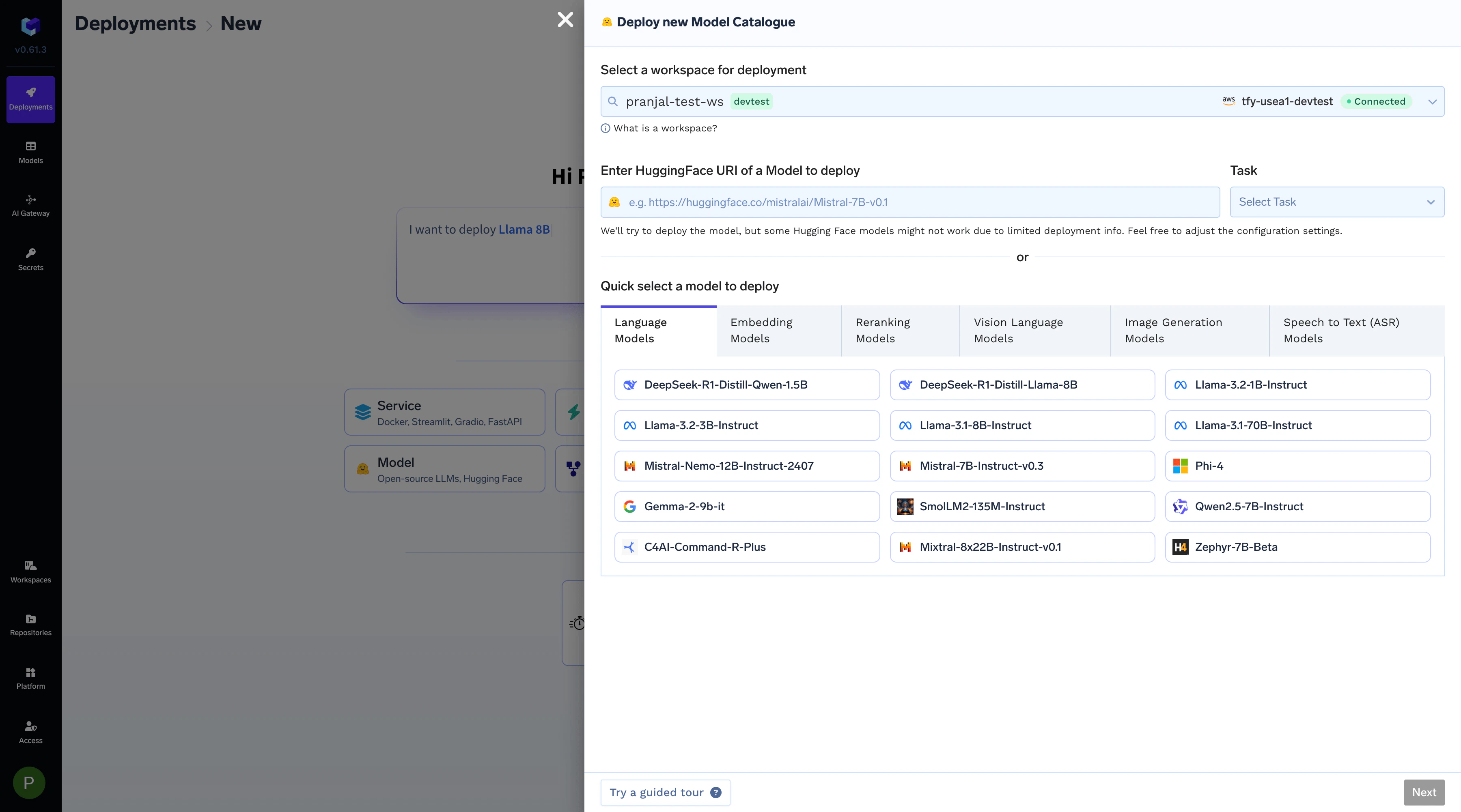

Follow these steps to add a model you have deployed on TrueFoundry to the AI Gateway.

Deploy a Model

First, deploy your open-source or private model as a service. For detailed instructions, see the Deploying LLMs From Model Catalogue documentation.

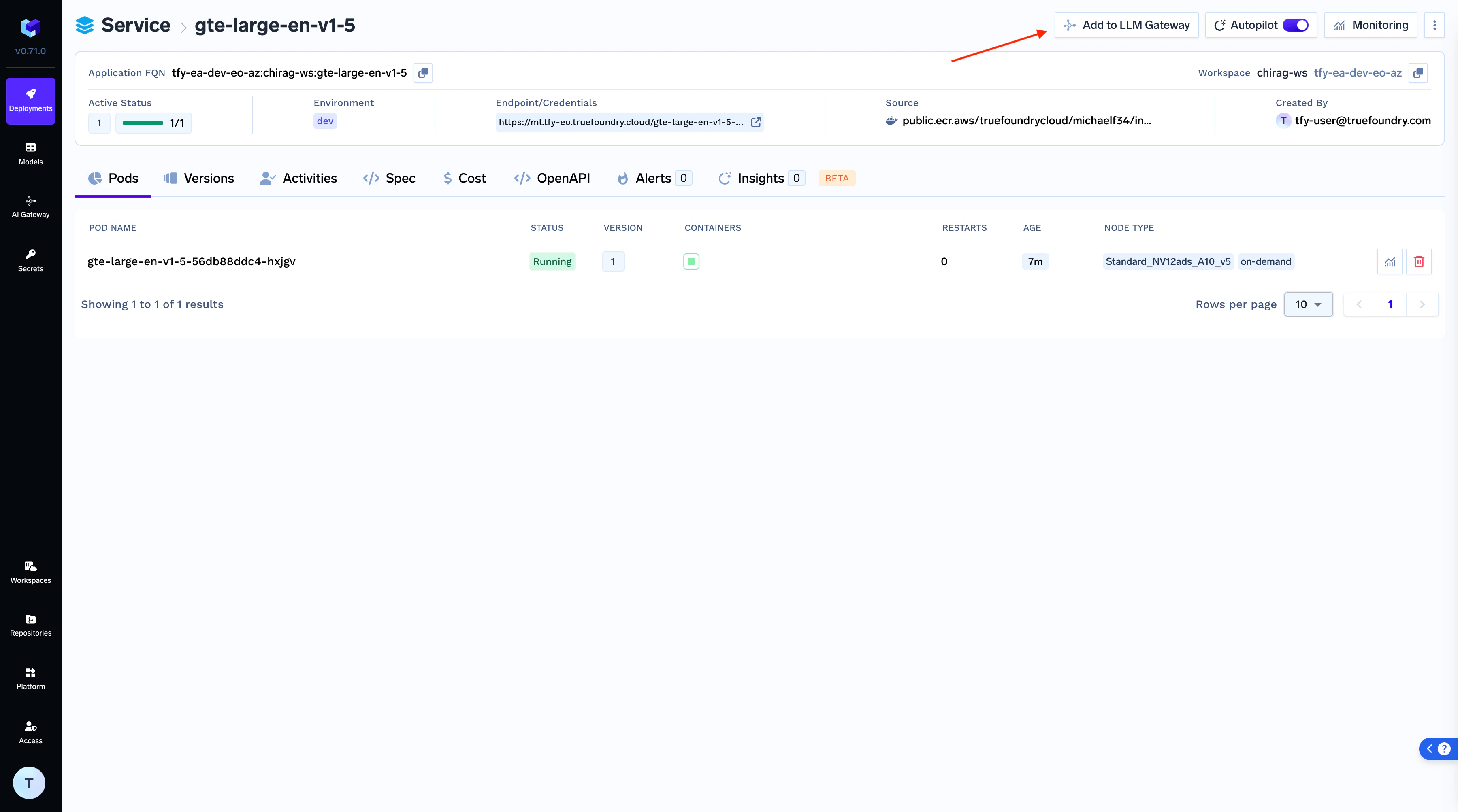

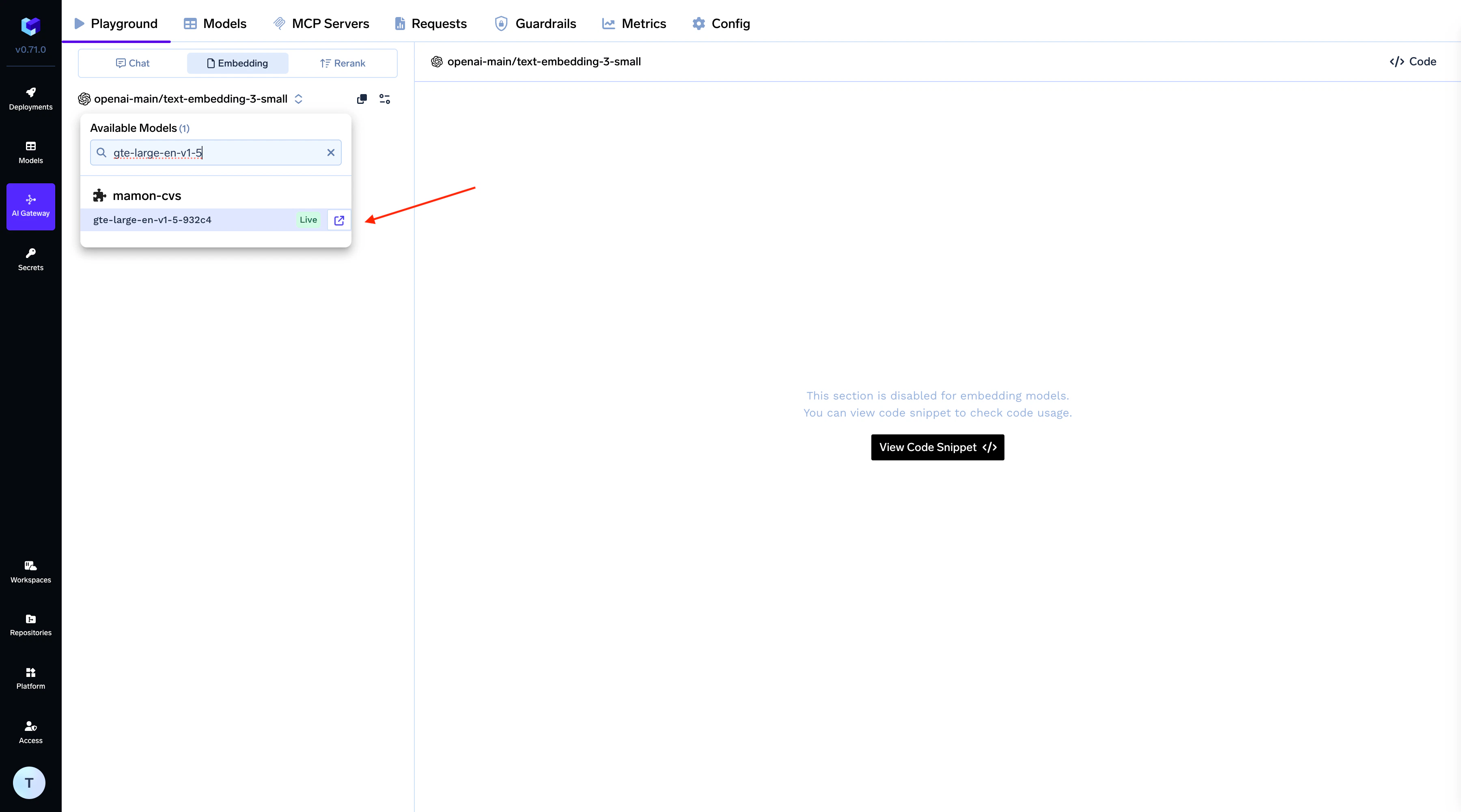

Add Model to AI Gateway

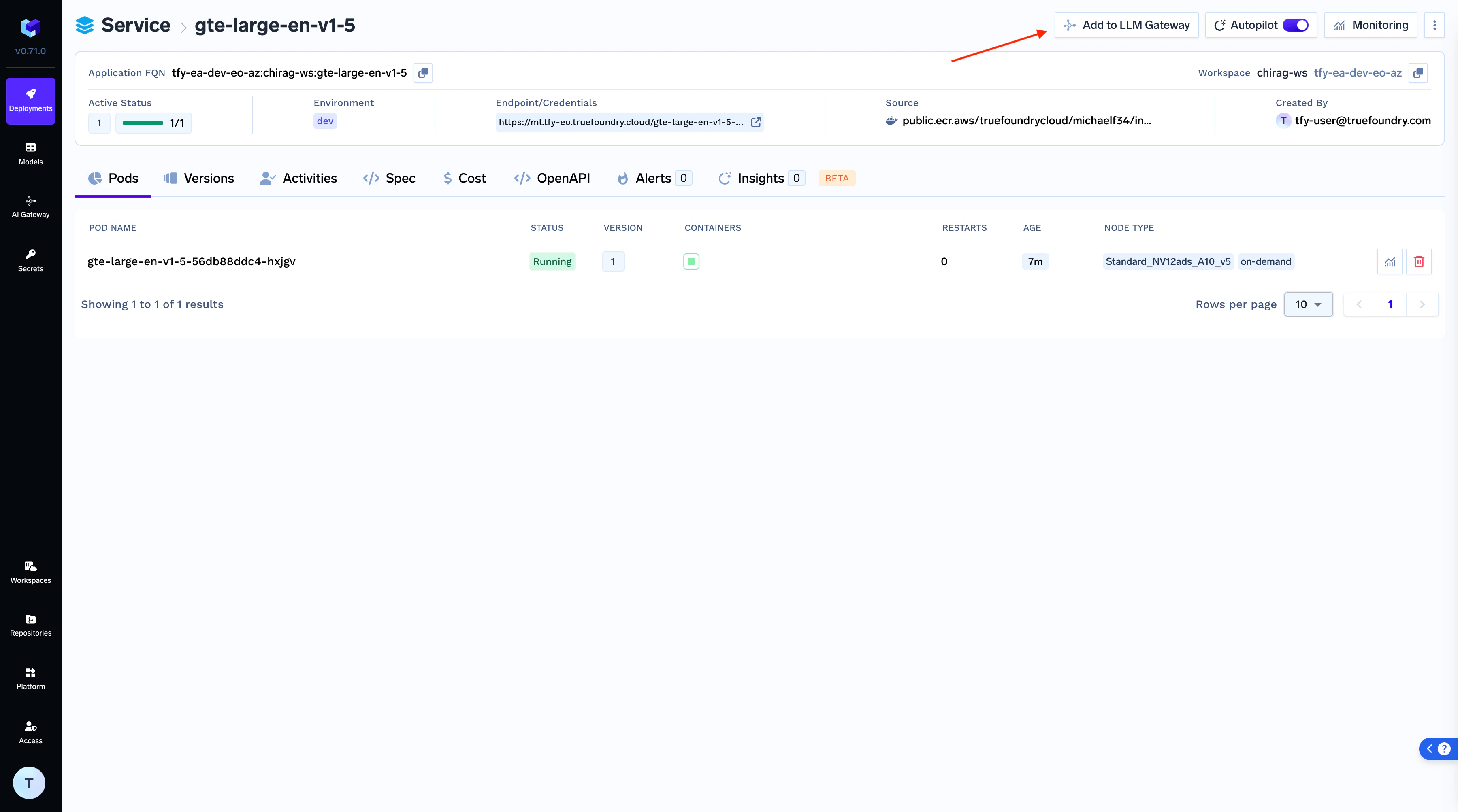

Once your model is successfully deployed, click the “Add to Gateway” button on the deployment page to begin the integration process.

Configure Model Details

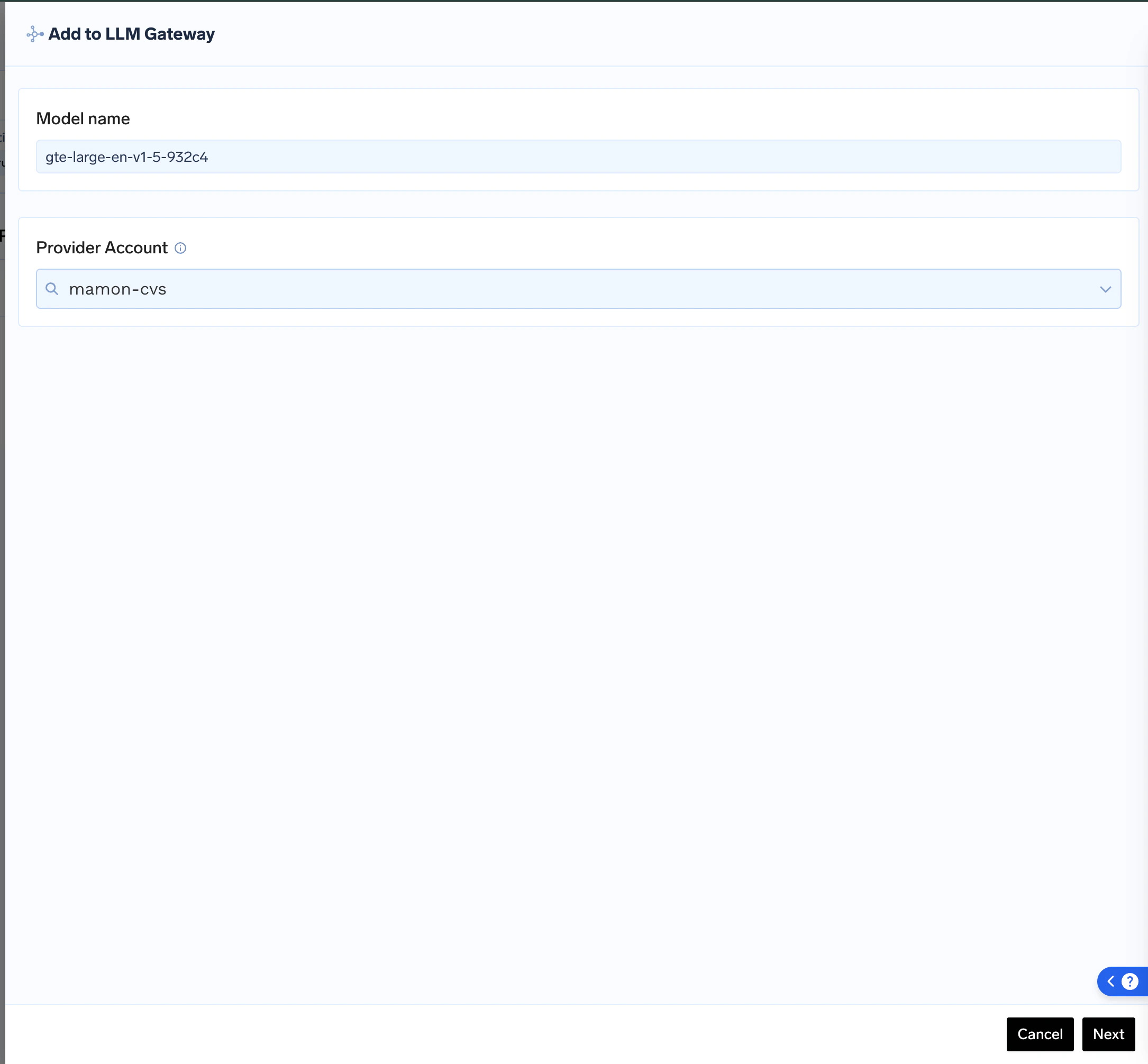

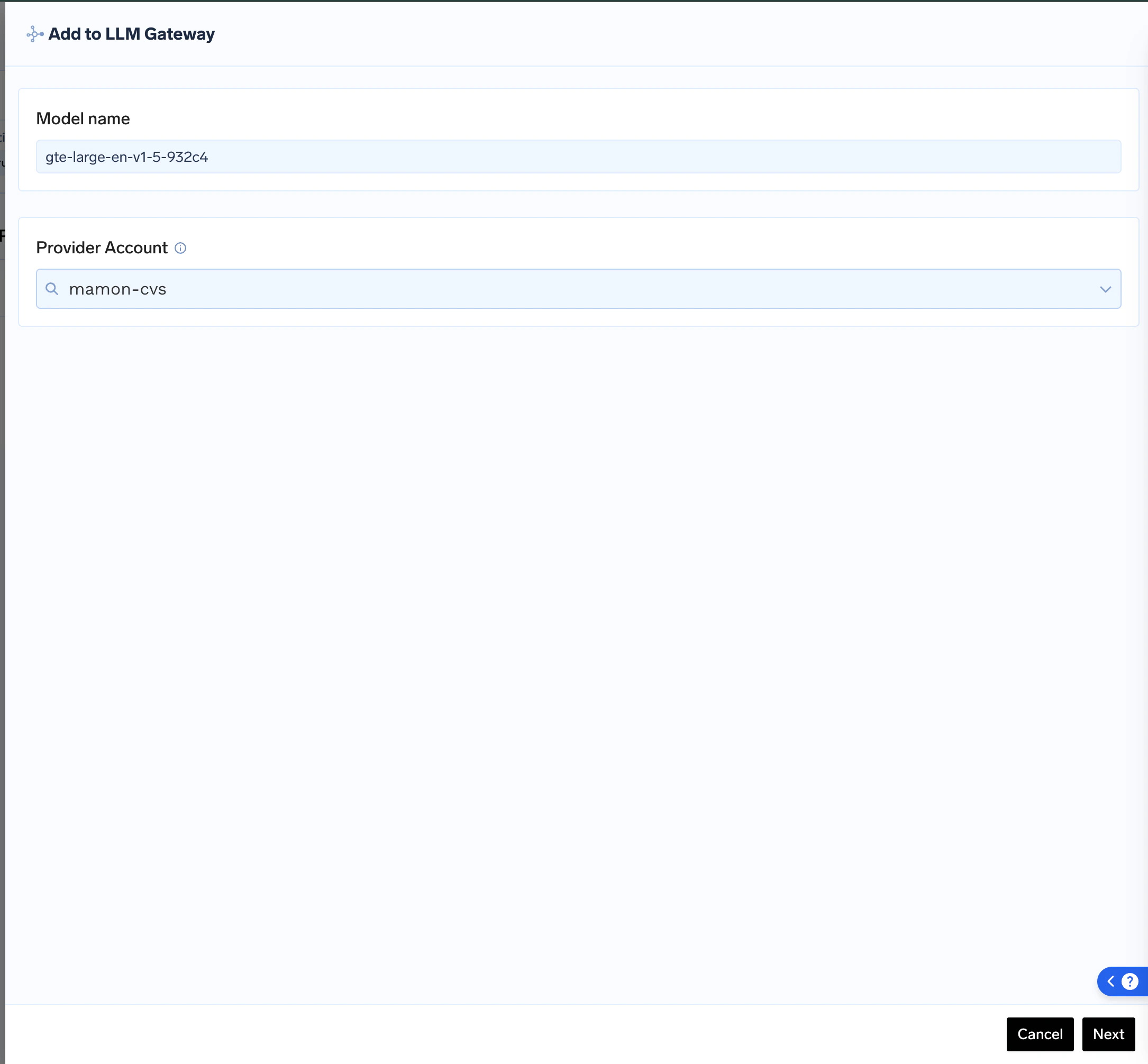

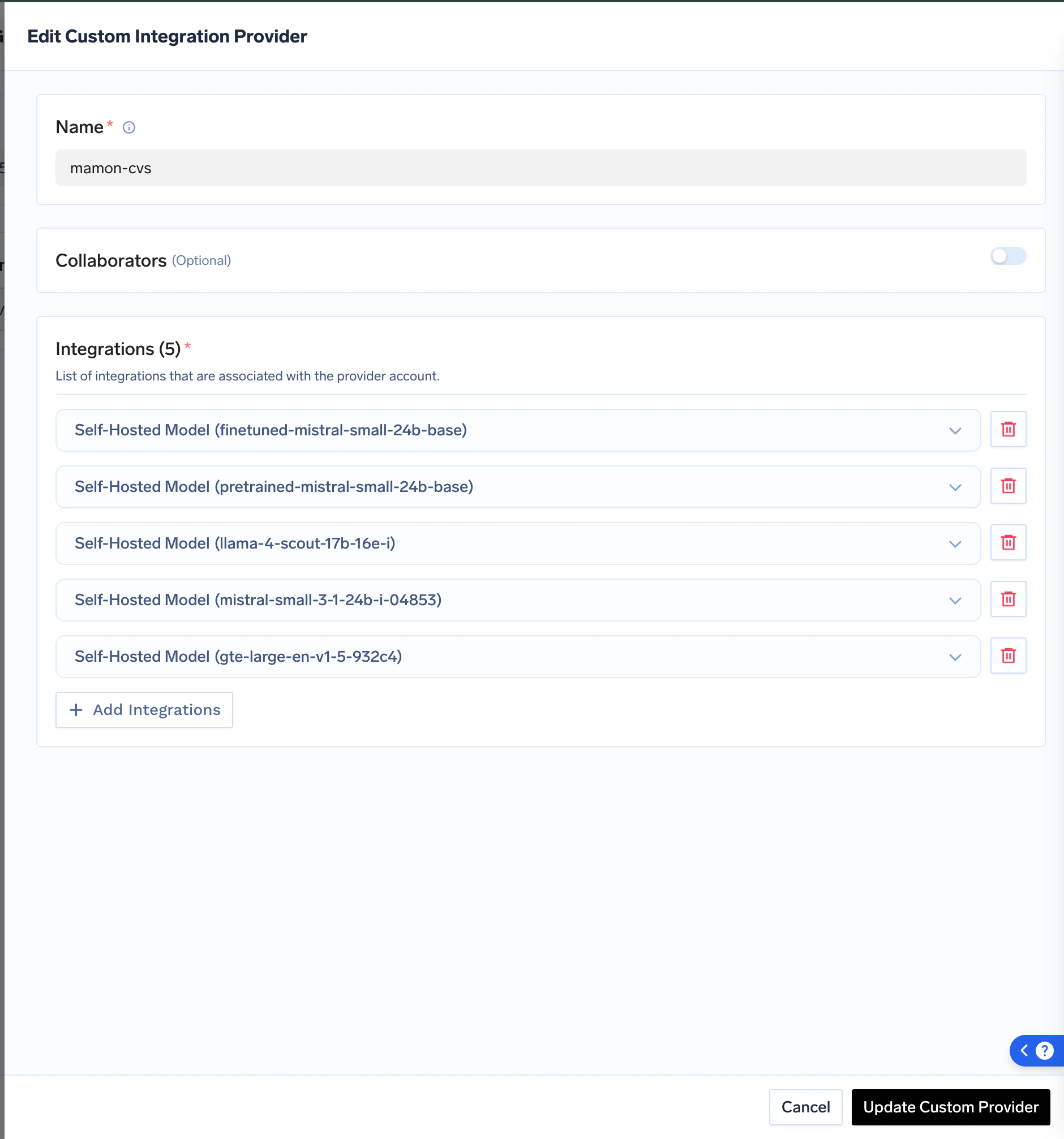

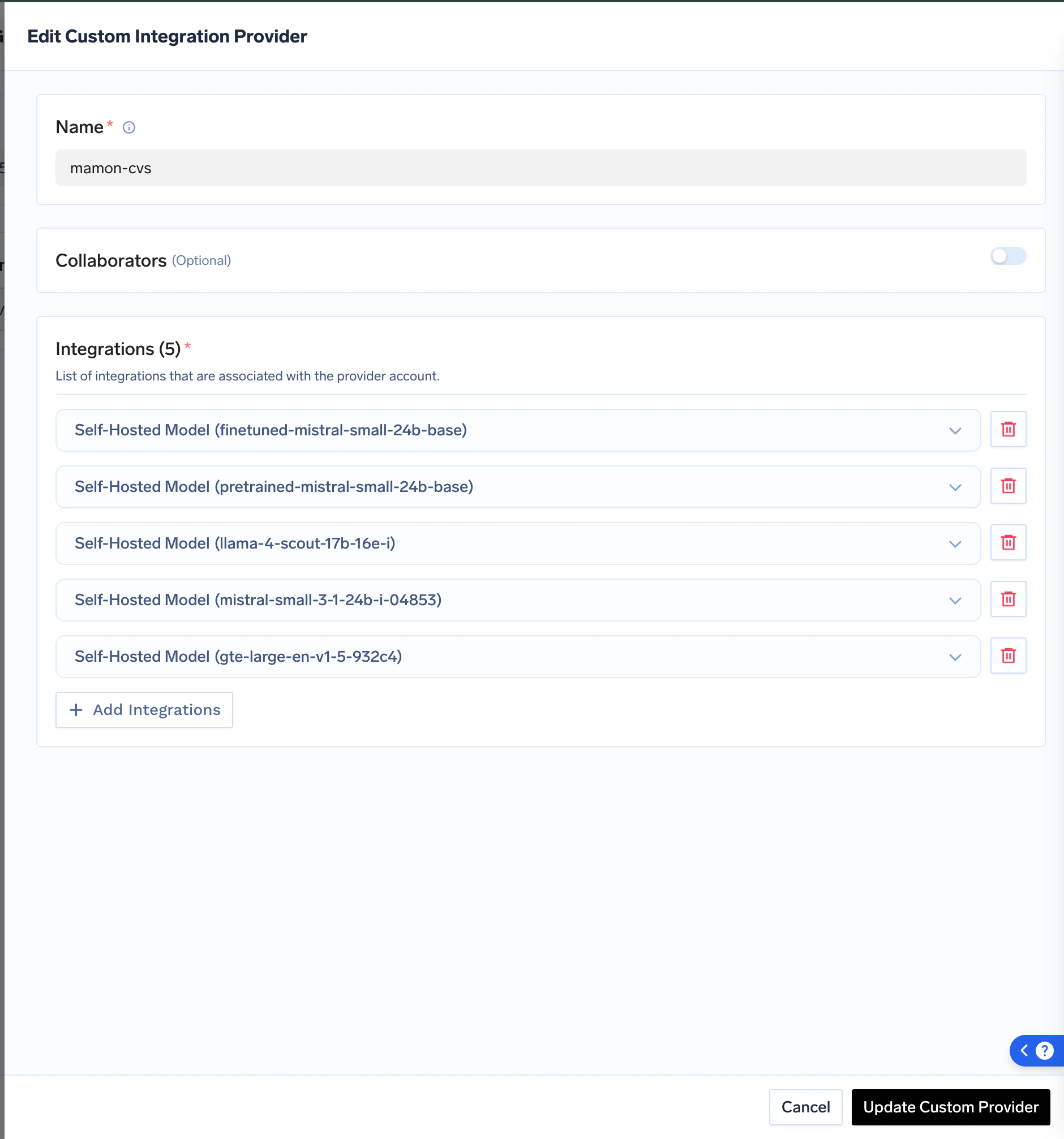

Give your model a unique Model Name, then add it to an existing Provider Account or create a new one. Provider accounts let you group and manage your self-hosted models.
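To make the grouping idea concrete, here is a hypothetical Python sketch of a provider account as a container of uniquely named models. The `ProviderAccount` class, the example endpoint URLs, and the `account/model` identifier it returns are illustrative assumptions, not TrueFoundry's data model or API. The sketch also assumes a model name only needs to be unique within its own account.

```python
from dataclasses import dataclass, field


@dataclass
class ProviderAccount:
    """Hypothetical provider account that groups self-hosted models.

    Illustrative only; this is not TrueFoundry's data model.
    """

    name: str
    # Maps model name -> endpoint URL of the deployed service (assumed shape).
    models: dict[str, str] = field(default_factory=dict)

    def add_model(self, model_name: str, endpoint_url: str) -> str:
        # Reject duplicates so every model name in this account stays unique.
        if model_name in self.models:
            raise ValueError(
                f"model name {model_name!r} already exists in account {self.name!r}"
            )
        self.models[model_name] = endpoint_url
        # Hypothetical identifier combining account and model name.
        return f"{self.name}/{model_name}"
```

Grouping models under one account object keeps related deployments together and makes a name clash detectable at the moment the model is added.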

Add Collaborators

Add collaborators to the provider account to give other users or teams access to it. See the access control documentation to learn more.

Cost Management for Self-Hosted Models

GPUs are costly, and GPU-powered deployments can quickly drive up expenses. TrueFoundry offers two built-in options to reduce deployment costs:

- Auto-shutdown based on inactivity: automatically shut down deployment pods if no new requests arrive within a specified time frame.

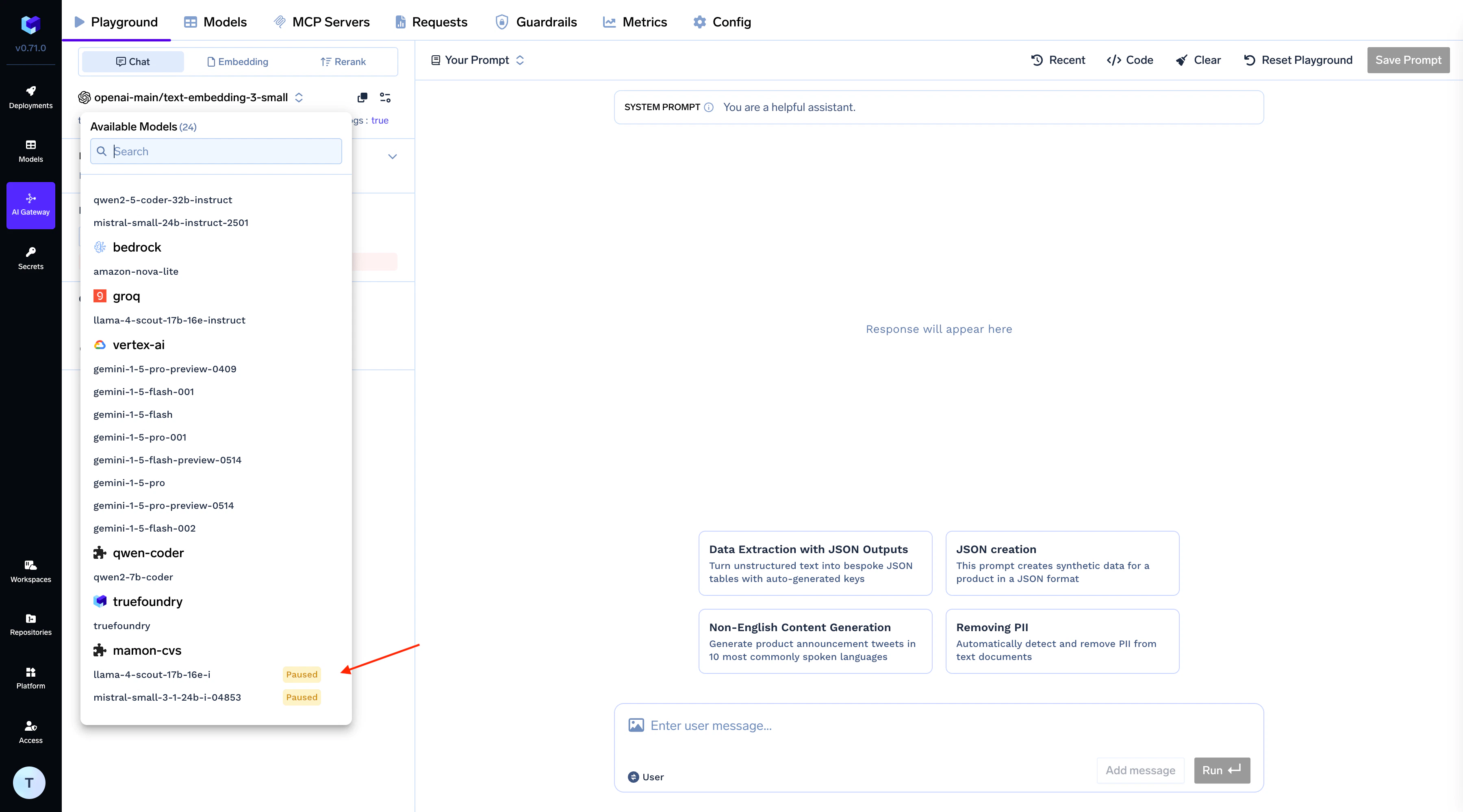

- Manual pause/resume: You can manually pause deployments that you anticipate won’t be used for a certain period.
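The inactivity-based auto-shutdown option reduces to a simple rule: if the time since the last request reaches a configured timeout, stop the deployment. The Python sketch below is a hypothetical illustration of that rule together with manual pause/resume. The `IdleShutdownPolicy` class, the `idle_timeout_s` parameter, and the injectable clock are assumptions for illustration, not how TrueFoundry implements or exposes the feature.

```python
import time
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class IdleShutdownPolicy:
    """Hypothetical sketch of inactivity-based auto-shutdown plus manual pause/resume.

    Not TrueFoundry's implementation: it only models the rule "stop the
    deployment if no request arrived within idle_timeout_s seconds".
    """

    idle_timeout_s: float
    # Injectable clock so the policy can be tested without real waiting.
    clock: Callable[[], float] = time.monotonic
    running: bool = field(default=True, init=False)
    last_request_at: float = field(default=0.0, init=False)

    def __post_init__(self) -> None:
        # Count start-up as activity so a fresh deployment is not stopped at once.
        self.last_request_at = self.clock()

    def record_request(self) -> None:
        # Every incoming request resets the inactivity timer.
        self.last_request_at = self.clock()

    def tick(self) -> bool:
        """Call periodically; returns True if this check shut the deployment down."""
        idle_for = self.clock() - self.last_request_at
        if self.running and idle_for >= self.idle_timeout_s:
            self.running = False
            return True
        return False

    def pause(self) -> None:
        # Manual pause: stop regardless of recent traffic.
        self.running = False

    def resume(self) -> None:
        # Manual resume: restart and treat resumption as fresh activity.
        self.running = True
        self.last_request_at = self.clock()
```

For example, with `idle_timeout_s=900` (15 minutes), a deployment whose last request arrived at t = 600 s stays up at t = 1400 s and is stopped by the first check at or after t = 1500 s. Injecting the clock keeps the rule deterministic and easy to test.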