MCP vs API: What Is The Difference?

AI systems are evolving fast, but getting them to work seamlessly with real-world tools and data is still a major hurdle. Model Context Protocol (MCP) is a new standard that promises to make AI integration smoother and more secure by giving models structured access to external data and services.

Sounds familiar?

That’s because APIs have been doing something similar for decades, acting as the backbone of how software systems talk to each other. At first glance, MCP and APIs might seem like two versions of the same idea. But in reality, they operate at different layers and solve different problems.

In this MCP vs API article, we’ll break down what MCP actually is, how it compares to APIs, where each shines, and what it all means for developers, enterprises, and the future of AI integration.

What is the Model Context Protocol (MCP)?

A standard interface that lets AI models dynamically access and orchestrate tools and data for secure, context-aware workflows.

The Model Context Protocol (MCP) is an open standard that allows AI models to connect with external tools, data sources, and services in a safe and structured way. Instead of hardcoding integrations or relying on custom connectors, MCP defines a consistent protocol for exchanging context between a model and its environment.

This makes it easier for developers to extend model capabilities, ensure secure access to sensitive data, and standardize how AI interacts with external systems.

Key Features of MCP

Standardized Communication: MCP establishes a standardized, open protocol for communicating context between AI models and other external tools. This eliminates the need to deal with the messy landscape of proprietary connectors and hardcoded integrations. All tools, data sources, or services communicate with each other with a common language. This greatly reduces the engineering effort required to integrate AI with other enterprise tools. TrueFoundry's MCP Gateway serves as a single endpoint for agents to call any registered MCP Server, which could be an internal API, a cloud service, or a pre-built integration such as Slack, Confluence, or Datadog.

Security and Governance: MCP enables controlled and permissioned access to all tools and services. With TrueFoundry's implementation, this includes federated identity with enterprise IdPs such as Okta or Azure AD, OAuth 2.0 with dynamic token discovery, and Role-Based Access Control (RBAC) on a per MCP Server or per Tool basis. An agent can only call tools for which it has been explicitly granted access. Therefore, an over-privileged agent cannot act as a "superuser" across your entire enterprise tools landscape. Additionally, all activity is audit logged, which makes MCP-based workflows inherently friendly for heavily regulated industries such as healthcare or finance.

Extensibility: MCP has been architected to allow new tools or new data sources to be easily plugged in without having to change the underlying model or application code. In TrueFoundry, this means that you can use pre-built MCP Servers for popular enterprise platforms out of the box, or you can easily onboard any internal service – a proprietary REST API, legacy application, or custom database – as an MCP Server in minutes. Once registered, it’s immediately discoverable and callable by any agent.

Unified Discovery: A unified MCP Server Registry allows agents (and developers) to discover all the tools and services that are available. Instead of having to hardcode which tools an agent can use, we can now leverage the unified registry to expose to the agent at runtime the complete catalog of authorized, online MCP Servers.

Observability: All MCP Server calls, tool invocations, and agent decisions are traceable end-to-end. TrueFoundry captures rich telemetry (latency, errors, usage, cost) that can be filtered by user, tool, team, or environment. This turns what has traditionally been a black box (what did the agent do?) into a fully auditable, debuggable activity.

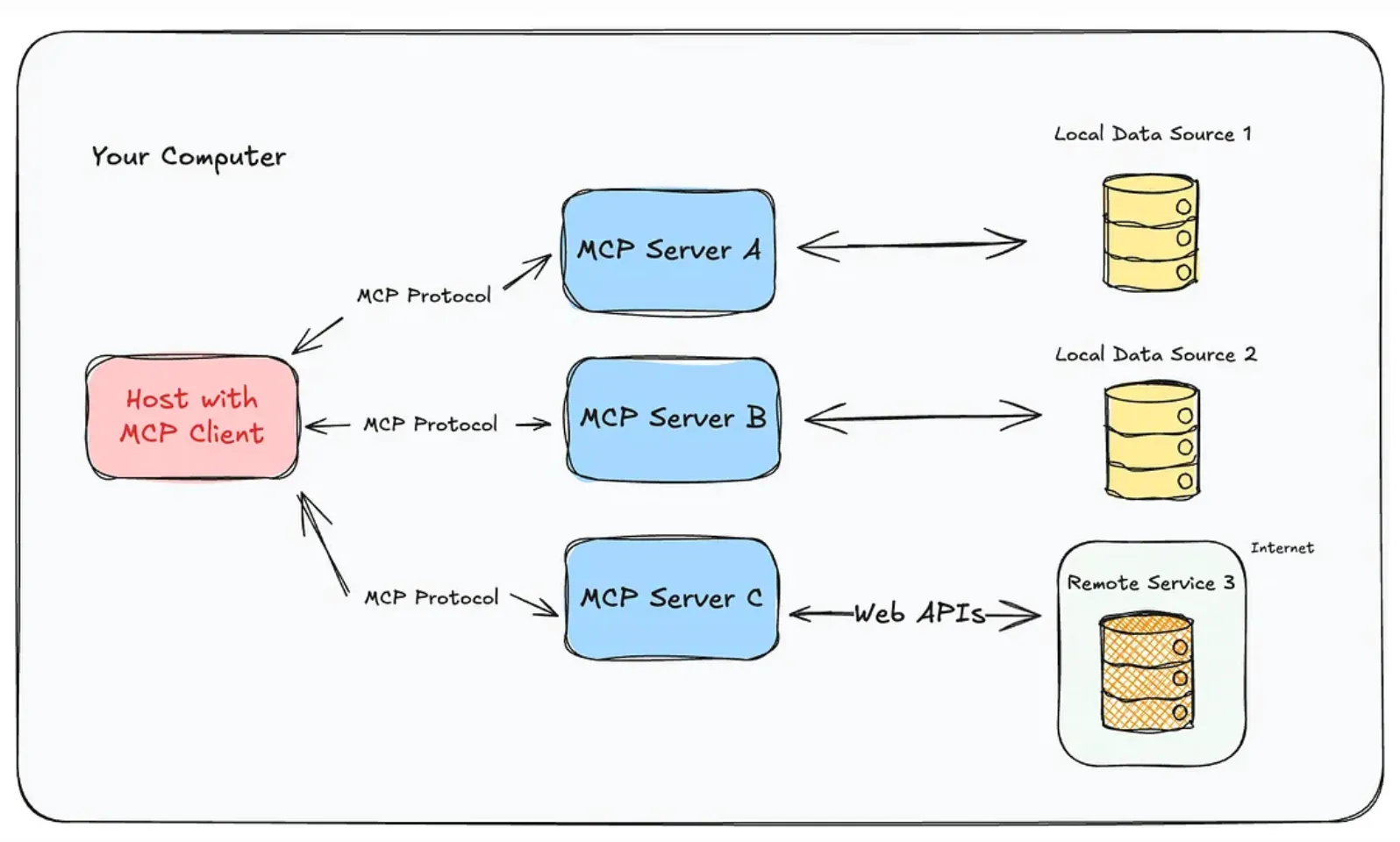

How Does MCP Work?

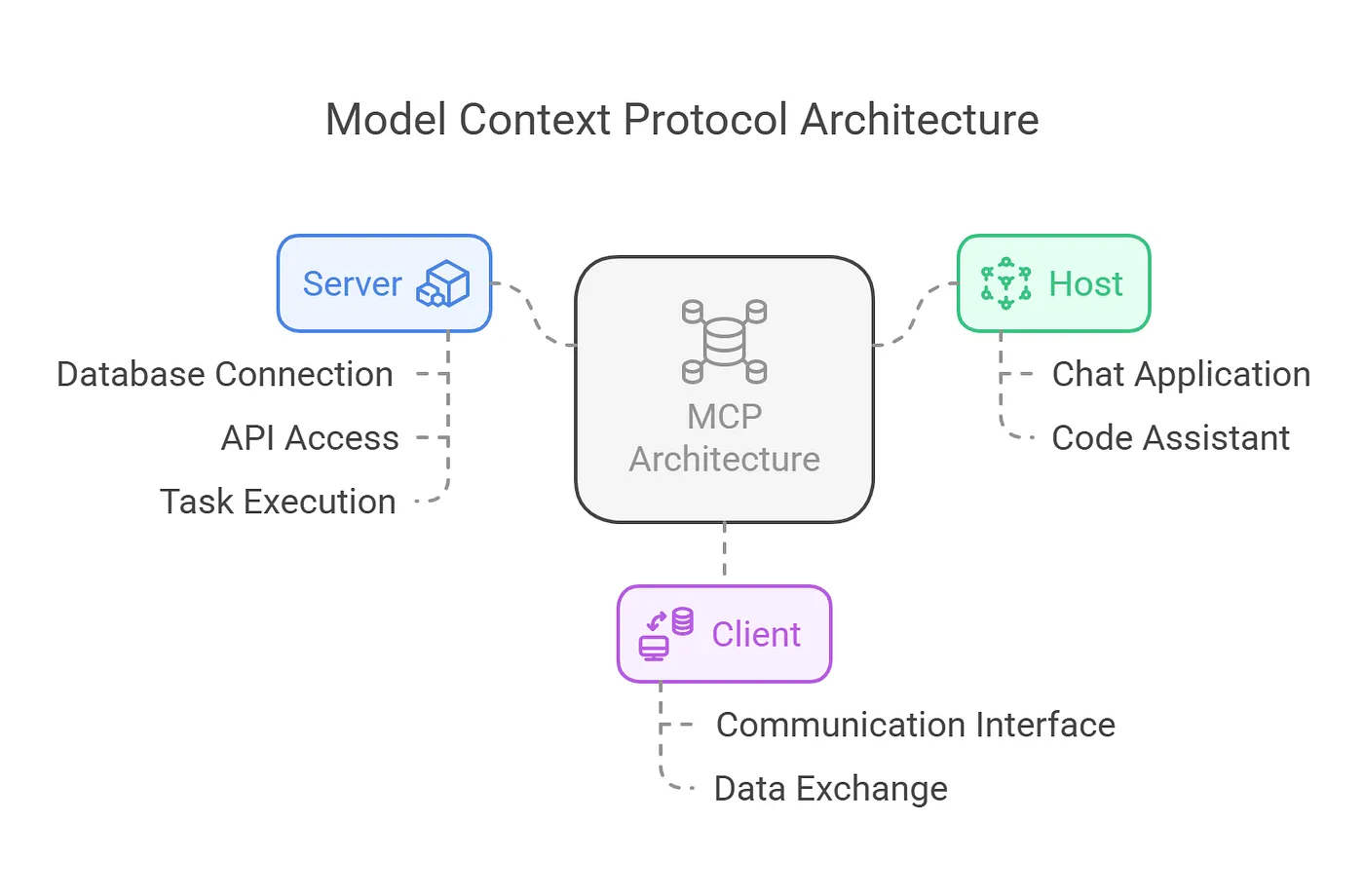

The architecture of MCP is built to balance flexibility with strict security controls. It follows a layered design that separates the model, external services, and communication channel. This separation ensures clear responsibilities, reduces complexity, and makes it easier to scale or extend the system without breaking existing workflows.

- Client Layer: The AI model or agent that initiates requests.

- Server Layer: External tools, APIs, or databases that provide data or functionality.

- Transport Layer: The communication channel, which carries JSON-RPC 2.0 messages over stdio or HTTP to ensure structured, reliable message exchange.

- Permission Controls: Rules that govern what the model can access, protecting sensitive or private resources.

This layered approach ensures that models can interact with external environments while remaining secure, scalable, and easy to extend.
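To make the transport layer concrete, here is a minimal sketch of the JSON-RPC 2.0 messages a client and server might exchange when a tool is invoked. The `tools/call` method and the `content` result shape follow the public MCP specification as we understand it; the `get_weather` tool, its arguments, and its output are hypothetical.

```python
import json


def make_request(request_id, method, params):
    """Build a JSON-RPC 2.0 request object."""
    return {"jsonrpc": "2.0", "id": request_id, "method": method, "params": params}


def make_result(request_id, result):
    """Build the matching JSON-RPC 2.0 success response."""
    return {"jsonrpc": "2.0", "id": request_id, "result": result}


# Client -> server: ask the server to run a (hypothetical) tool.
call = make_request(
    1, "tools/call", {"name": "get_weather", "arguments": {"city": "Berlin"}}
)

# Server -> client: the tool output, returned under the same id.
reply = make_result(
    1, {"content": [{"type": "text", "text": "12 C, cloudy"}], "isError": False}
)

# The serialized string is what travels over stdio or HTTP.
wire = json.dumps(call)
assert json.loads(wire) == call  # the message survives a round trip
print(wire)
```

The shared `id` is what lets the client match a reply to the request that produced it, regardless of which transport carried the message.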

What is an Application Programming Interface (API)?

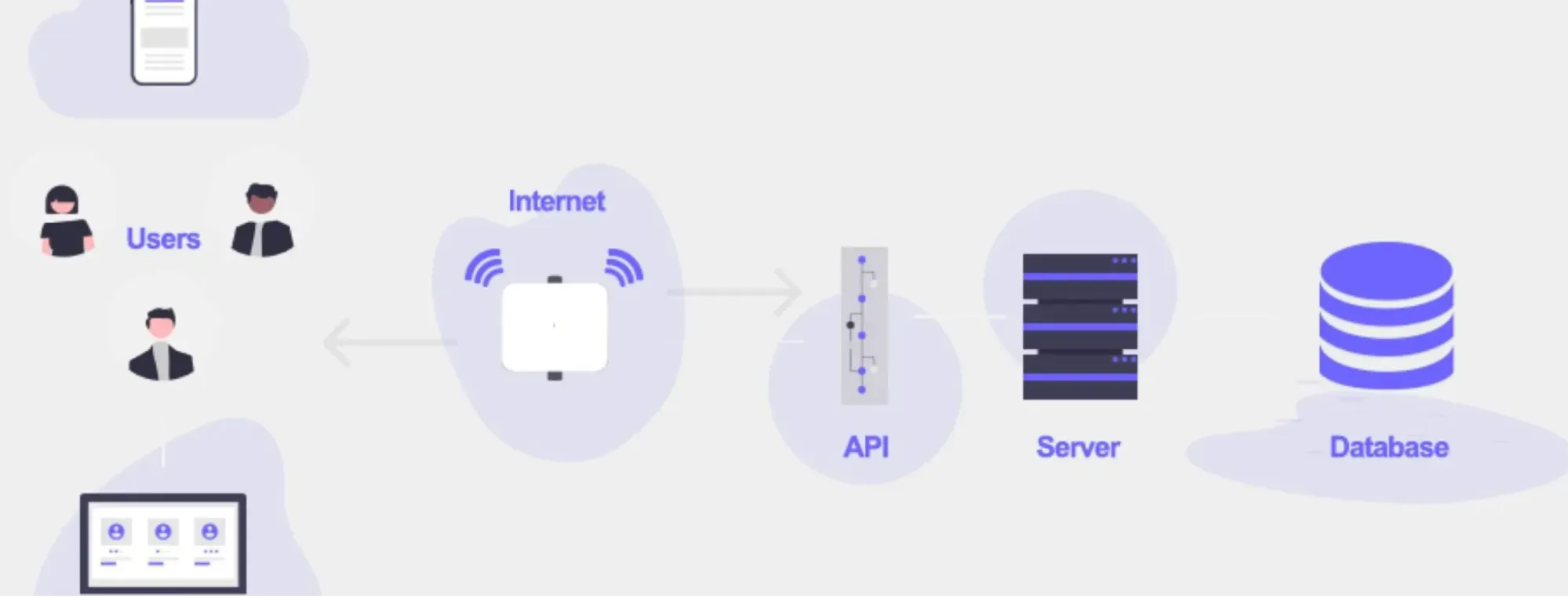

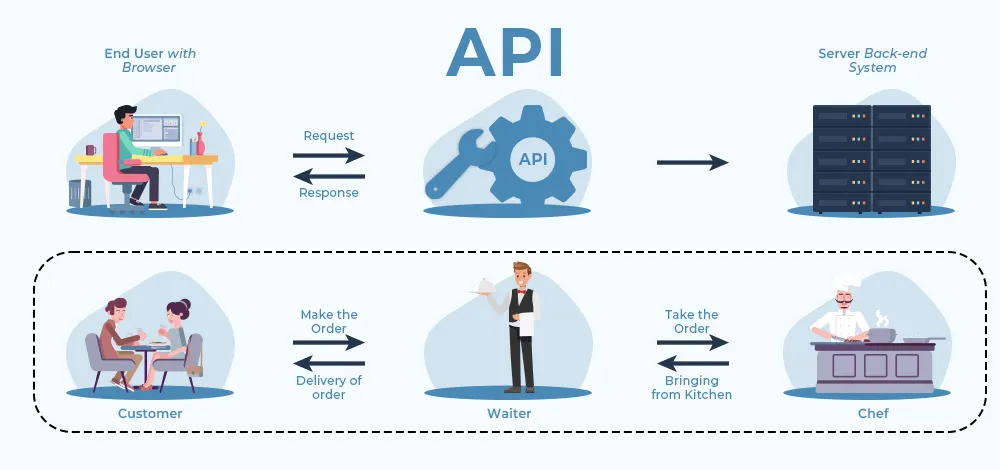

An Application Programming Interface (API) is a defined set of rules that allows two software applications to communicate with each other. Instead of directly accessing a system’s code or database, developers use APIs to request specific services or data in a structured and reliable way.

APIs are the backbone of modern software development, powering everything from mobile apps and web platforms to enterprise integrations.

Key Features of API

- Standardized Access: Provides a consistent way to interact with software components without exposing internal logic.

- Reusability: Enables developers to build once and reuse the same API across multiple applications or services.

- Interoperability: Makes it possible for diverse systems, often built on different technologies, to work together seamlessly.

How Does API Work?

API architecture is designed to let two or more systems communicate reliably and securely. Most modern APIs follow the REST architectural style or use GraphQL, but the underlying principles are the same. The key concepts are:

- Client: The application that sends a request to the server. The client could be a mobile application requesting a user's account balance or, increasingly, an AI agent asking a tool to perform an action. In either case, the client initiates the exchange and receives only what it asks for.

- Server: The system that receives the client's request, processes it, and sends back a response. In enterprise AI, this role is often played by an MCP server, a standardized wrapper around an internal API or tool.

- Endpoints: The defined URLs through which the client reaches the server. Together, the endpoints define the operations the server can handle.

- Protocols: Communication protocols such as HTTP and WebSockets define how data is transferred. HTTP is the foundation of most REST APIs: a request consists of a method (GET, POST, PUT, DELETE), headers, and an optional body, and a response consists of a status code, headers, and a body. WebSockets provide a persistent, bidirectional connection for real-time communication, such as streaming an agent's output or voice AI transcription. Server-Sent Events (SSE), which stream over an ordinary HTTP connection, are also gaining traction, particularly in MCP architectures where the server needs to push updates to the client without the overhead of a WebSocket connection.

- Authentication & Authorization: Security mechanisms control which users can access the API and what they can do with it. The most common approaches use API keys, OAuth 2.0, or JWTs.

- Request-Response Cycle: The client sends a request over the protocol to an endpoint. The server verifies the request, executes its logic, and returns a response in the agreed format, such as JSON or XML. In REST, this cycle is stateless: each request is self-contained, and the server keeps no client state between requests.

- Rate Limiting & Throttling: APIs cap the number of requests a client can send in a given period, which protects against abuse and keeps resource costs under control. In an AI gateway, rate limiting also includes token-based limits. The TrueFoundry AI Gateway, for example, can limit the number of tokens a user or application consumes per day.

This structure makes APIs flexible, scalable, and essential for building connected ecosystems.
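The concepts above can be sketched as a small in-process simulation of one request-response cycle: authenticate, route to an endpoint, run the logic, and format the response. Everything here is illustrative. The `X-API-Key` header name, the `/balance` endpoint, and the sample data are assumptions, and a real service would sit behind an HTTP server or framework rather than a plain function.

```python
import json

API_KEYS = {"demo-key"}        # hypothetical credential store
BALANCES = {"alice": 120.50}   # hypothetical account data


def get_balance(user):
    """Endpoint logic: look up a balance and choose a status code."""
    if user not in BALANCES:
        return 404, {"error": "unknown user"}
    return 200, {"user": user, "balance": BALANCES[user]}


# Endpoint table: (method, path) -> handler function.
ROUTES = {("GET", "/balance"): get_balance}


def handle_request(method, path, headers, query):
    """Process one self-contained (stateless) request; return (status, body)."""
    # 1. Authentication: reject callers without a known API key.
    if headers.get("X-API-Key") not in API_KEYS:
        return 401, json.dumps({"error": "invalid API key"})
    # 2. Routing: find the handler registered for this endpoint.
    handler = ROUTES.get((method, path))
    if handler is None:
        return 404, json.dumps({"error": "no such endpoint"})
    # 3. Execute the logic, then 4. serialize the response as JSON.
    status, body = handler(query.get("user"))
    return status, json.dumps(body)


status, body = handle_request(
    "GET", "/balance", {"X-API-Key": "demo-key"}, {"user": "alice"}
)
print(status, body)
```

Because the handler receives everything it needs in the request itself, any server instance could answer it, which is the property that makes stateless APIs easy to scale out.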

What Is the Difference Between MCP and API?

Both MCP and APIs are designed to connect systems, but they approach integration from very different angles. MCP focuses on giving AI models a secure, standardized way to access external tools and data, while APIs are built to let applications talk to each other using defined requests and responses.

When deciding between the two, it’s important to see how they compare across aspects like architecture, scalability, security, and developer experience.

The table below highlights ten key differences:

MCP enables AI models to access tools and data securely, while APIs connect software applications reliably. Understanding their differences helps you choose the right integration for each scenario. Together, they can complement each other to build scalable, secure, and efficient systems.

Core Architecture Comparison

Understanding the core architectural differences between APIs and MCP is crucial for building scalable, secure, and efficient systems. While both aim to facilitate communication between components, their design philosophies and technical implementations differ substantially.

MCP (Model Context Protocol)

MCP is designed for AI/LLM-driven workflows, enabling models to securely and efficiently interact with external tools, data sources, and services in a structured, standardized manner. Its layered, modular, and context-aware architecture makes it ideal for today’s autonomous AI agents, real-time enterprise applications, and multi-step reasoning tasks.

Key Components:

- Client Layer: AI models or agents initiate requests, interpret tool capabilities, and determine execution flows. Clients manage local context and generate structured queries compatible with server schemas. This supports adaptive, real-time decision-making, crucial for autonomous AI assistants and enterprise AI agents.

- Server Layer: Exposes tools, datasets, or services with machine-readable schemas. Servers enable dynamic discovery of capabilities, allowing models to adapt without code changes. This is essential for hybrid-cloud AI deployments and on-demand orchestration of multiple APIs or services.

- Transport & Access Control Layer: Messages are encoded as JSON-RPC 2.0 and carried over stdio or HTTP transports. Hosts enforce strict authorization, session isolation, and permission controls. This ensures secure handling of sensitive or regulated data, reducing risks in enterprise-grade AI workflows.

- Schema & Capability Registry: MCP uses structured schemas to define tool inputs, outputs, and constraints. This allows LLMs to reason about tool usage safely, supporting compliance, safe autonomous actions, and efficient multi-step workflows in modern AI applications.
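As an illustration of the schema idea, the sketch below describes a tool with a JSON Schema style `inputSchema` and checks a model's proposed arguments against it before anything is executed. The `name`, `description`, and `inputSchema` fields mirror how MCP tool definitions are commonly structured; the `create_ticket` tool is hypothetical, and the hand-rolled validator is a deliberate simplification of what a full JSON Schema library would do.

```python
# Hypothetical tool definition in the style of an MCP tool listing.
TOOL = {
    "name": "create_ticket",
    "description": "Open a support ticket.",
    "inputSchema": {
        "type": "object",
        "properties": {
            "title": {"type": "string"},
            "priority": {"type": "integer"},
        },
        "required": ["title"],
    },
}

# Simplified mapping from JSON Schema type names to Python types.
PY_TYPES = {"string": str, "integer": int, "boolean": bool, "number": (int, float)}


def validate_arguments(tool, arguments):
    """Return a list of problems; an empty list means the call is acceptable."""
    schema = tool["inputSchema"]
    problems = []
    for name in schema.get("required", []):
        if name not in arguments:
            problems.append(f"missing required argument: {name}")
    for name, value in arguments.items():
        spec = schema["properties"].get(name)
        if spec is None:
            problems.append(f"unknown argument: {name}")
        elif not isinstance(value, PY_TYPES[spec["type"]]):
            problems.append(f"{name} should be {spec['type']}")
    return problems


print(validate_arguments(TOOL, {"title": "Login fails", "priority": 2}))
print(validate_arguments(TOOL, {"priority": "high"}))
```

Returning a list of problems rather than raising on the first one gives the model structured feedback it can use to correct the call in a single retry.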

API (Application Programming Interface)

APIs are designed for general-purpose application integration, emphasizing predictable request-response patterns, scalability, and interoperability across diverse systems. They remain a cornerstone of enterprise software, cloud services, and web-scale applications, providing reliable connectivity for both traditional and modern AI-driven workflows.

Key Components:

- Client Layer: Applications or services initiate requests to server endpoints, managing authentication tokens, rate limits, retries, and local caching. This ensures robust performance and reliability in high-volume enterprise or cloud environments.

- Server Layer: Hosts endpoints and defines resource or action contracts. Servers handle request parsing, data validation, processing, and response formatting, typically returning JSON, XML, or protocol-specific payloads. This layer supports predictable, standardized workflows critical for mission-critical systems.

- Endpoints & Protocols: REST, GraphQL, SOAP, or WebSockets govern communication, supporting synchronous or asynchronous operations. Structured endpoints provide consistent access patterns, enabling smooth integration with legacy systems, SaaS platforms, and cloud-native applications.

- Security & Governance Layer: Implements API keys, OAuth, JWT authentication, throttling, and CORS policies. Logging and rate limiting ensure compliance, auditability, and protection against misuse, which is increasingly important in regulated industries and multi-cloud deployments.

Use Cases Analysis

In real-world applications, API and MCP serve different but complementary roles. MCP empowers AI models to interact with multiple tools and datasets dynamically, while APIs provide standardized, reliable connectivity between software systems. Understanding their practical applications helps organizations choose the right integration strategy.

MCP is ideal for dynamic, context-aware AI workflows, while APIs excel in structured, predictable software integration. By leveraging both together, organizations can build systems where AI models intelligently utilize API-driven data and services to deliver smarter, real-time solutions.

Security and Governance

Security and governance are critical considerations for both MCP and APIs, but they address different challenges based on their design and use cases.

MCP (Model Context Protocol) focuses on secure AI model interactions. Its architecture includes host-managed access controls, session isolation, and transport-level security. MCP allows hosts to define which tools, datasets, or services a model can access, reducing the risk of unauthorized actions or data leaks. Schema-based tool descriptions help models understand input/output constraints, preventing accidental misuse or injection attacks. Logging and auditing of model-tool interactions enable governance and compliance tracking in enterprise environments. MCP’s security model is particularly suited for agentic AI applications where LLMs execute multi-step workflows across sensitive data sources.
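A minimal sketch of host-managed access control might look like the following: each agent has an explicit allow-list of tools, and every decision is written to an audit log. The agent names, tool names, and in-memory data structures are assumptions for illustration only; a production host would typically back this with an identity provider and durable log storage.

```python
from datetime import datetime, timezone

# Hypothetical grants: which tools each agent may call.
GRANTS = {
    "support-agent": {"create_ticket", "search_docs"},
    "billing-agent": {"get_invoice"},
}

AUDIT_LOG = []  # in-memory stand-in for a durable audit trail


def authorize(agent, tool):
    """Allow the call only if the tool was explicitly granted; log either way."""
    allowed = tool in GRANTS.get(agent, set())
    AUDIT_LOG.append(
        {
            "time": datetime.now(timezone.utc).isoformat(),
            "agent": agent,
            "tool": tool,
            "allowed": allowed,
        }
    )
    return allowed
```

For example, `authorize("support-agent", "get_invoice")` returns `False` and still leaves a record in `AUDIT_LOG`, so denied attempts are as visible as successful calls. Defaulting to an empty grant set means an unknown agent is denied everything.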

API Security and Governance emphasizes application-level protection and standardization. APIs implement authentication and authorization mechanisms such as API keys, OAuth, and JWT tokens, alongside rate limiting and throttling to prevent misuse or overloading. Logging, monitoring, and versioning policies ensure compliance, traceability, and backward compatibility across systems. Enterprises can enforce governance policies at the API gateway level, controlling who can access which endpoints and under what conditions.
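Rate limiting at a gateway is often implemented with a token bucket, sketched below. This is one common technique and not necessarily what any particular gateway uses. The class name and parameters are illustrative, and the current time is passed in explicitly so the behavior is easy to reason about and test.

```python
class TokenBucket:
    """Allow bursts up to `capacity`, refilled at `refill_per_second`."""

    def __init__(self, capacity, refill_per_second):
        self.capacity = capacity
        self.refill_per_second = refill_per_second
        self.tokens = float(capacity)  # start with a full bucket
        self.last = 0.0                # timestamp of the previous check

    def allow(self, now, cost=1.0):
        """Return True and spend `cost` tokens if the request fits the budget."""
        # Refill in proportion to elapsed time, never beyond capacity.
        elapsed = now - self.last
        self.tokens = min(self.capacity, self.tokens + elapsed * self.refill_per_second)
        self.last = now
        if self.tokens >= cost:
            self.tokens -= cost
            return True
        return False


bucket = TokenBucket(capacity=2, refill_per_second=1.0)
decisions = [bucket.allow(now=t) for t in (0.0, 0.0, 0.0, 1.0)]
print(decisions)
```

The `cost` parameter lets the same structure express request-count limits (cost of 1 per call) or usage-based limits where heavier requests consume more of the budget.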

MCP prioritizes context-aware, AI-specific security, while APIs provide broad, standardized application security and governance. Using both together allows organizations to maintain robust security across AI-driven workflows and traditional software integrations.

Developer Experience

Developer experience plays a crucial role in adoption and productivity for both MCP and APIs. MCP (Model Context Protocol) is designed to streamline AI model integration with external tools, offering SDKs, reference servers, and client libraries in multiple programming languages.

Developers can quickly set up hosts and servers, define tool schemas, and connect LLMs to services without extensive boilerplate code. Its structured JSON-RPC transport, schema validation, and dynamic discovery capabilities reduce errors and simplify debugging in complex AI workflows.

MCP:

- SDKs and reference implementations in multiple languages

- Dynamic tool discovery and schema validation for LLMs

- Built-in error handling and debugging support for AI workflows

APIs, by contrast, offer a mature ecosystem for general software integration. Developers benefit from standardized specifications like OpenAPI/Swagger, client libraries, and API gateways that simplify authentication, versioning, and monitoring. Clear endpoint contracts and extensive documentation make onboarding and maintenance predictable. Tools for testing, mocking, and monitoring APIs enhance developer productivity while ensuring that integrations remain stable and secure.

APIs:

- Standardized specifications (OpenAPI/Swagger) and endpoint contracts

- Client libraries, gateways, and monitoring tools for smooth integration

- Extensive documentation and testing frameworks for reliability

Overall, MCP optimizes AI-focused development, making multi-tool orchestration easier, while APIs provide robust, standardized developer support across general software applications.

Performance and Scalability

Performance and scalability are essential for both MCP and APIs, but their design focuses differ due to their target use cases.

Low-Latency Communication

MCP is optimized for AI-driven workflows, enabling low-latency interactions between LLM clients and multiple tool servers using JSON-RPC over HTTP or stdio. Structured schemas reduce processing overhead, ensuring rapid responses for multi-step AI tasks. APIs, on the other hand, rely on REST, GraphQL, or WebSockets, providing predictable latency for general application requests. While APIs are highly reliable, they may not dynamically adapt to complex, multi-tool AI workflows in real-time.

Horizontal Scaling and Concurrency

MCP supports horizontal scaling through multiple server instances handling concurrent model requests. Session isolation prevents workflow conflicts and ensures consistent performance. APIs also scale horizontally across distributed servers and cloud infrastructure, handling large volumes of client requests with load balancing, caching, and throttling. While API scaling is mature and well-understood, MCP scaling focuses specifically on parallel AI operations with dynamic tool access.

Workflow Efficiency and Optimization

MCP’s schema-based design allows AI models to reason about tool capabilities and execute tasks efficiently, minimizing redundant computations and data fetching. APIs achieve efficiency through optimized endpoints, caching strategies, and monitoring tools that maintain throughput and reliability. Unlike MCP, API efficiency is centered on predictable, general-purpose request-response patterns rather than dynamic AI reasoning.

MCP ensures low-latency, AI-optimized operations, while APIs provide scalable, robust performance for traditional software communication, excelling in their respective domains.

MCP vs API: When to Use Each

Choosing between MCP and APIs depends on the workflow, application needs, and the level of AI integration required:

Use MCP When:

- You need dynamic, context-aware AI workflows that can access multiple tools or data sources in real time.

- LLMs or AI agents must reason, orchestrate, and adapt to changing inputs without pre-defined scripts.

- Security, session isolation, and governance for autonomous AI operations are critical.

- You are building agentic AI systems, intelligent assistants, or multi-step decision-making pipelines.

Use APIs When:

- You require predictable, reliable integration between traditional applications or services.

- Workflows involve structured, pre-defined request-response patterns rather than dynamic AI reasoning.

- Scalability, monitoring, and compliance for enterprise-grade software systems are priorities.

- You are connecting legacy systems, SaaS platforms, or cloud services that expose endpoints for data or actions.

How Do MCP and APIs Relate?

MCP and APIs are complementary technologies that often work together in modern AI and software ecosystems. While APIs provide stable, standardized endpoints for accessing services, databases, and applications, MCP enables AI models and agents to dynamically discover, reason about, and orchestrate these tools in real time.

Essentially, MCP leverages APIs as the underlying interface, adding context-awareness, adaptive decision-making, and multi-step workflow orchestration on top of the predictable functionality that APIs provide.

This combination allows organizations to build systems where AI models can intelligently interact with API-driven services, ensuring both flexibility and reliability.

In 2026, such hybrid MCP-API architectures are increasingly standard in enterprise AI, supporting autonomous agents, secure workflows, and real-time decision-making without compromising interoperability or performance.

The Future of MCP and API

As AI continues to evolve, MCP and APIs are shaping the way intelligent systems interact with tools and data. Understanding their future roles helps organizations build adaptive, secure, and efficient AI-driven workflows.

MCP as a Standardized AI Interface: MCP is evolving as a universal, model-agnostic interface, sometimes referred to as the “USB-C for AI.” It allows AI agents to seamlessly connect with a wide range of applications and services without the need for custom, hardcoded integrations for each tool.

Dynamic Tool Discovery and Usage: Unlike traditional APIs, which require pre-defined endpoints and manual coding for every interaction, MCP enables AI models to discover and interact with new tools dynamically. This makes AI workflows more adaptive and capable of responding to changing business needs in real time.

From Data Access to Controlled Actions: While APIs primarily provide raw, structured data for trusted systems, MCP empowers AI agents to securely execute tasks, interpret complex responses, and handle rich, structured error feedback. This enables safer, more autonomous AI operations in enterprise environments.

Hybrid Architecture – Wrapping APIs: MCP does not replace APIs; instead, it complements them by acting as a layer that makes API-driven data and services usable for AI. APIs continue to manage reliable, standardized endpoints, while MCP adds intelligence, context-awareness, and orchestration capabilities.

Rapid Ecosystem Expansion: The MCP ecosystem is growing quickly, with hundreds of community-built servers and services that give AI models the ability to interact with real-world applications and tools. This accelerates the adoption of agentic AI, enabling smarter, autonomous workflows that combine real-time reasoning with secure, reliable data access.
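To make the dynamic discovery point above concrete, here is a small client-side sketch. Instead of hardcoding which tool to call, the client reads a catalog at runtime and selects from it. The `list_tools` function stands in for an MCP `tools/list` response, the tools are hypothetical, and the keyword match is only a placeholder for the model's own reasoning over tool descriptions.

```python
def list_tools():
    """Stand-in for a `tools/list` response from a server (hypothetical tools)."""
    return [
        {"name": "search_docs", "description": "Search internal documentation."},
        {"name": "create_ticket", "description": "Open a support ticket."},
    ]


def find_tools(catalog, keyword):
    """Return names of tools whose description mentions the keyword."""
    keyword = keyword.lower()
    return [tool["name"] for tool in catalog if keyword in tool["description"].lower()]


catalog = list_tools()  # fetched at runtime, not baked into the client
matches = find_tools(catalog, "ticket")
print(matches)
```

If a server later adds a new tool to its catalog, this client picks it up on the next `list_tools` call without any code change, which is the contrast with a traditional integration where each endpoint is wired in by hand.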

Conclusion

MCP and APIs serve distinct but complementary roles in modern software and AI ecosystems. MCP excels in AI-driven, context-aware workflows, enabling LLMs to dynamically access multiple tools and datasets while maintaining secure, structured interactions.

APIs provide robust, standardized communication across applications, microservices, and external platforms, ensuring predictable performance and scalability. Understanding the differences in architecture, use cases, security, developer experience, and performance allows organizations to choose the right integration strategy.

By combining MCP’s AI-focused capabilities with APIs’ general-purpose connectivity, teams can build intelligent, efficient, and secure systems that meet both AI and traditional software needs.

Frequently Asked Questions

When to use MCP vs API?

Use traditional APIs for fixed, developer-coded connections between software systems. Choose MCP when enabling AI agents to interact with multiple tools dynamically. MCP is ideal for production environments where models need to discover resources and fetch context without manual endpoint configuration for every new integration.

Will MCP replace API?

No, MCP functions as a standardized wrapper rather than a replacement for traditional APIs. It abstracts existing API endpoints into a machine-readable schema that LLMs can understand. By using MCP and API together, enterprises leverage their existing infrastructure while making it accessible to autonomous AI agents through a universal interface.

When is MCP better than API?

In the context of MCP vs API, MCP is better for agentic workflows requiring multi-tool orchestration. It excels when AI models must choose between various data sources dynamically. Using a centralized gateway like TrueFoundry to manage these MCP connections provides the governance, security, and auditing that basic API integrations lack.

Is MCP the same as an API?

No, MCP and APIs are not the same. APIs provide standardized, fixed endpoints for software integration, while MCP is designed for AI/LLM workflows, enabling dynamic tool discovery, context-aware orchestration, and structured reasoning. MCP often leverages APIs as underlying interfaces but adds intelligence, adaptability, and secure multi-step execution.

Is MCP faster than API?

MCP is not inherently faster than APIs in raw data transfer. Instead, it is optimized for AI workflows, reducing overhead for multi-step reasoning and tool orchestration. APIs provide predictable latency for standard requests, while MCP prioritizes dynamic interactions and context-aware decision-making, improving efficiency in complex AI-driven tasks.

How to convert API to MCP server?

To convert an API into an MCP server, define machine-readable schemas for each endpoint's inputs and outputs. Implement a server layer that exposes these endpoints as tools using MCP's JSON-RPC messages over a stdio or HTTP transport. Add access controls, session isolation, and descriptive metadata so that LLMs can discover and use the API safely.
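As a rough sketch of that conversion, the dispatcher below wraps one existing function as a tool and answers `tools/list` and `tools/call` messages. It is a simplified illustration, not a complete MCP server: it skips the initialization handshake, transports, and access control, and in practice you would likely use an official MCP SDK. The `get_order_status` function and its data are hypothetical stand-ins for a real API call.

```python
import json


def get_order_status(order_id):
    """Stand-in for an existing internal API call (hypothetical)."""
    return {"order_id": order_id, "status": "shipped"}


# Registry: tool name -> handler plus the metadata a model needs to use it.
TOOLS = {
    "get_order_status": {
        "handler": get_order_status,
        "description": "Look up the shipping status of an order.",
        "inputSchema": {
            "type": "object",
            "properties": {"order_id": {"type": "string"}},
            "required": ["order_id"],
        },
    }
}


def error(msg_id, code, message):
    """Build a JSON-RPC 2.0 error response."""
    return json.dumps({"jsonrpc": "2.0", "id": msg_id, "error": {"code": code, "message": message}})


def handle_message(raw):
    """Dispatch one JSON-RPC request string and return the response string."""
    msg = json.loads(raw)
    msg_id, method, params = msg.get("id"), msg.get("method"), msg.get("params", {})
    if method == "tools/list":
        result = {
            "tools": [
                {"name": name, "description": t["description"], "inputSchema": t["inputSchema"]}
                for name, t in TOOLS.items()
            ]
        }
    elif method == "tools/call":
        tool = TOOLS.get(params.get("name"))
        if tool is None:
            return error(msg_id, -32602, "Unknown tool")
        output = tool["handler"](**params.get("arguments", {}))
        result = {"content": [{"type": "text", "text": json.dumps(output)}], "isError": False}
    else:
        return error(msg_id, -32601, "Method not found")
    return json.dumps({"jsonrpc": "2.0", "id": msg_id, "result": result})
```

The existing API function is untouched. The wrapper only adds a description and schema so a model can discover the tool, plus a uniform message format for calling it.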
