A Guide to Cloud Node Auto-Provisioning

Different workloads require varying hardware specifications such as machine type, size, and geolocation. With the rise of ML/LLMs, selecting the right hardware has become crucial. We need to make choices based on hardware specifications like OS type, architecture, processor, GPU type, and storage. Kubernetes aids in orchestrating and distributing resources among similar workloads, but dynamically provisioning these resources on demand remains a challenge.

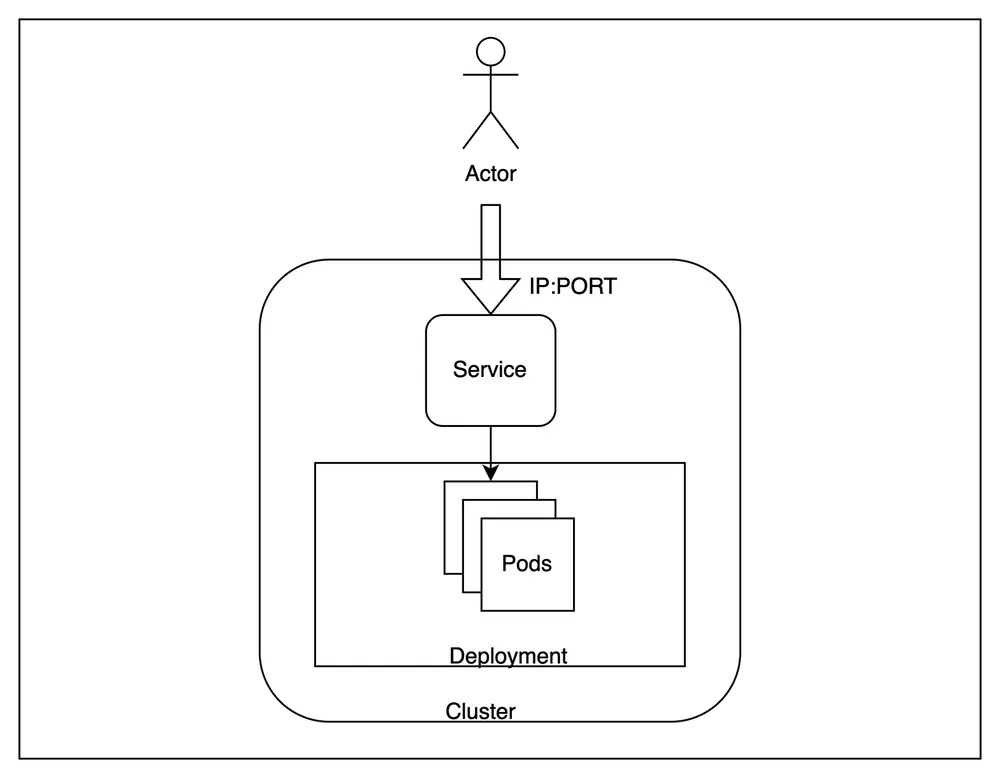

A Kubernetes (K8s) cluster is a grouping of nodes that run containerized apps in an efficient, automated, distributed, and scalable manner. Each node within a Kubernetes cluster has specific attributes like machine type, size, and location.

This blog explores the necessity of cloud node auto-provisioners to manage diverse workload requirements within Kubernetes clusters automatically. We also provide insights into solutions offered by major cloud providers such as AWS, GCP, and Azure. Lastly, we delve into how TrueFoundry addresses these challenges as a platform.

Introduction

Node auto-provisioning automates the provisioning of the appropriate node group based on unscheduled pod constraints to optimize infrastructure costs. Node auto-provisioners in a Kubernetes cluster are responsible for the following actions:

- Scheduling: launching nodes in response to unschedulable pods, after resolving their scheduling constraints and choosing the best possible machine type and size.

- Disruption: deleting nodes that are no longer needed because of expiration, consolidation, drift, or interruption.

Why Node auto-provisioning?

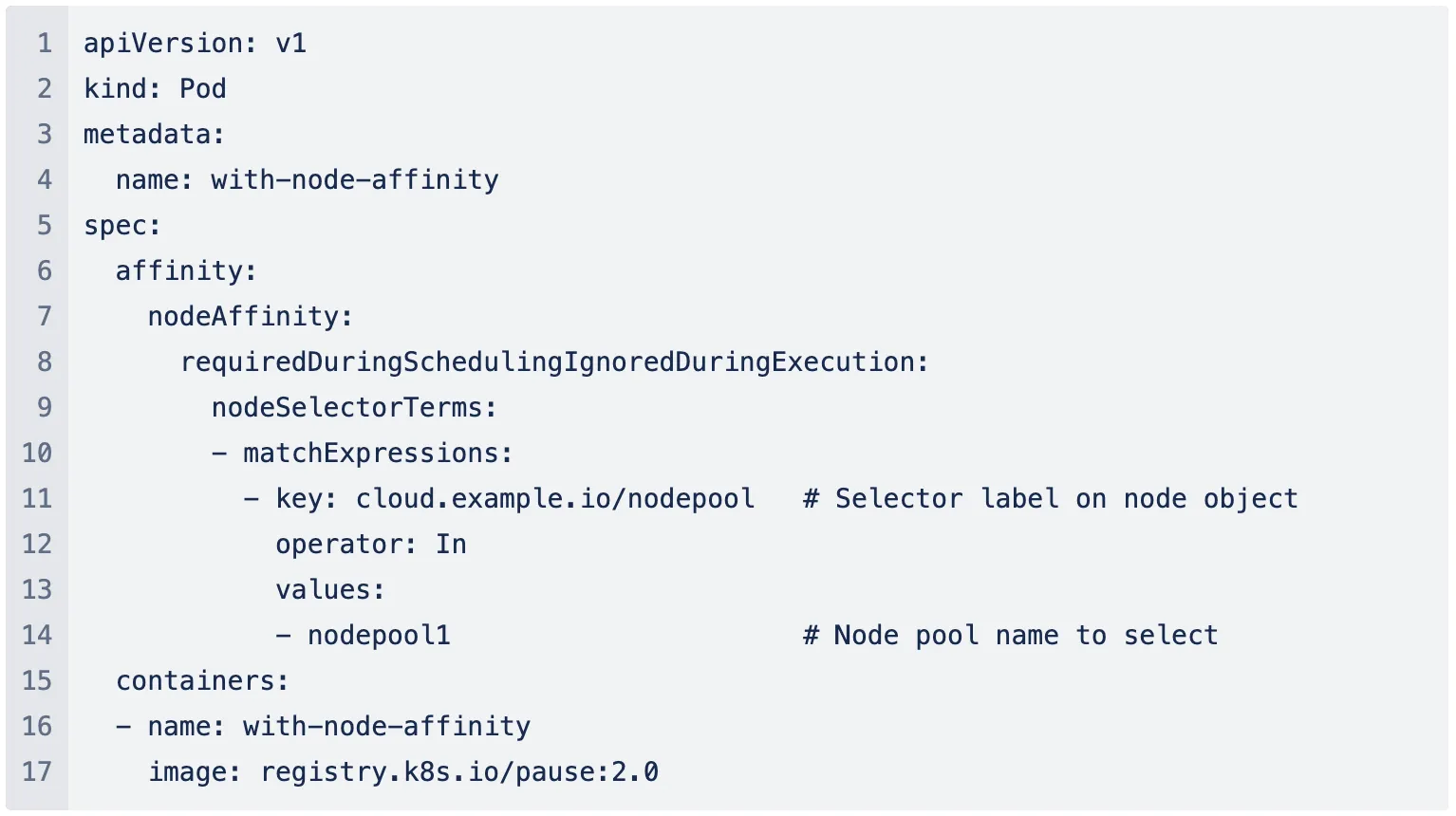

Kubernetes nodes are worker machines with a specific hardware configuration, such as machine type, size, and capacity type. A node pool is a group of worker machines that share the same configuration. Without a node auto-provisioner, the only way to give a workload a specific hardware configuration is node pool selection: the user creates a node pool with the required configuration in their cloud and then adds node affinity for that node pool to the Pod spec.

Overall, this mechanism involves the following steps:

- Pre-defining your hardware requirements.

- Searching and selecting the best possible machine type and size for given requirements.

- Provisioning of selected node pool(s).

The combinations of node pool requirements could be numerous, especially during the experimentation phase for ML/LLM workloads. Coordination between separate DevOps and Platform teams could consume significant time during development. Therefore, a controller that dynamically assesses requirements and automatically provisions infrastructure becomes crucial.
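To make the manual approach concrete, here is a minimal Python sketch that builds a Pod manifest pinned to one pre-created node pool through required node affinity. The helper name, the image, and the pool name `gpu-t4-pool` are hypothetical. The label key `cloud.google.com/gke-nodepool` is the one GKE sets on its nodes; other clouds use different keys, so treat it as an assumption for your cluster.

```python
import json


def pod_pinned_to_node_pool(name, image, pool_label_key, pool_name):
    """Build a Pod manifest that can only schedule onto one node pool.

    Hypothetical helper for illustration; it only assembles a dict.
    """
    return {
        "apiVersion": "v1",
        "kind": "Pod",
        "metadata": {"name": name},
        "spec": {
            "affinity": {
                "nodeAffinity": {
                    # Hard requirement: the pod stays Pending if no node matches.
                    "requiredDuringSchedulingIgnoredDuringExecution": {
                        "nodeSelectorTerms": [
                            {
                                "matchExpressions": [
                                    {
                                        "key": pool_label_key,
                                        "operator": "In",
                                        "values": [pool_name],
                                    }
                                ]
                            }
                        ]
                    }
                }
            },
            "containers": [{"name": name, "image": image}],
        },
    }


manifest = pod_pinned_to_node_pool(
    name="trainer",
    image="example.com/trainer:latest",  # hypothetical image
    pool_label_key="cloud.google.com/gke-nodepool",  # GKE node pool label
    pool_name="gpu-t4-pool",  # hypothetical, must already exist in the cloud
)
print(json.dumps(manifest, indent=2))
```

Every new hardware combination needs both a new node pool in the cloud and a matching edit to the manifest, which is the coordination cost described above.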

How does node auto-provisioning work?

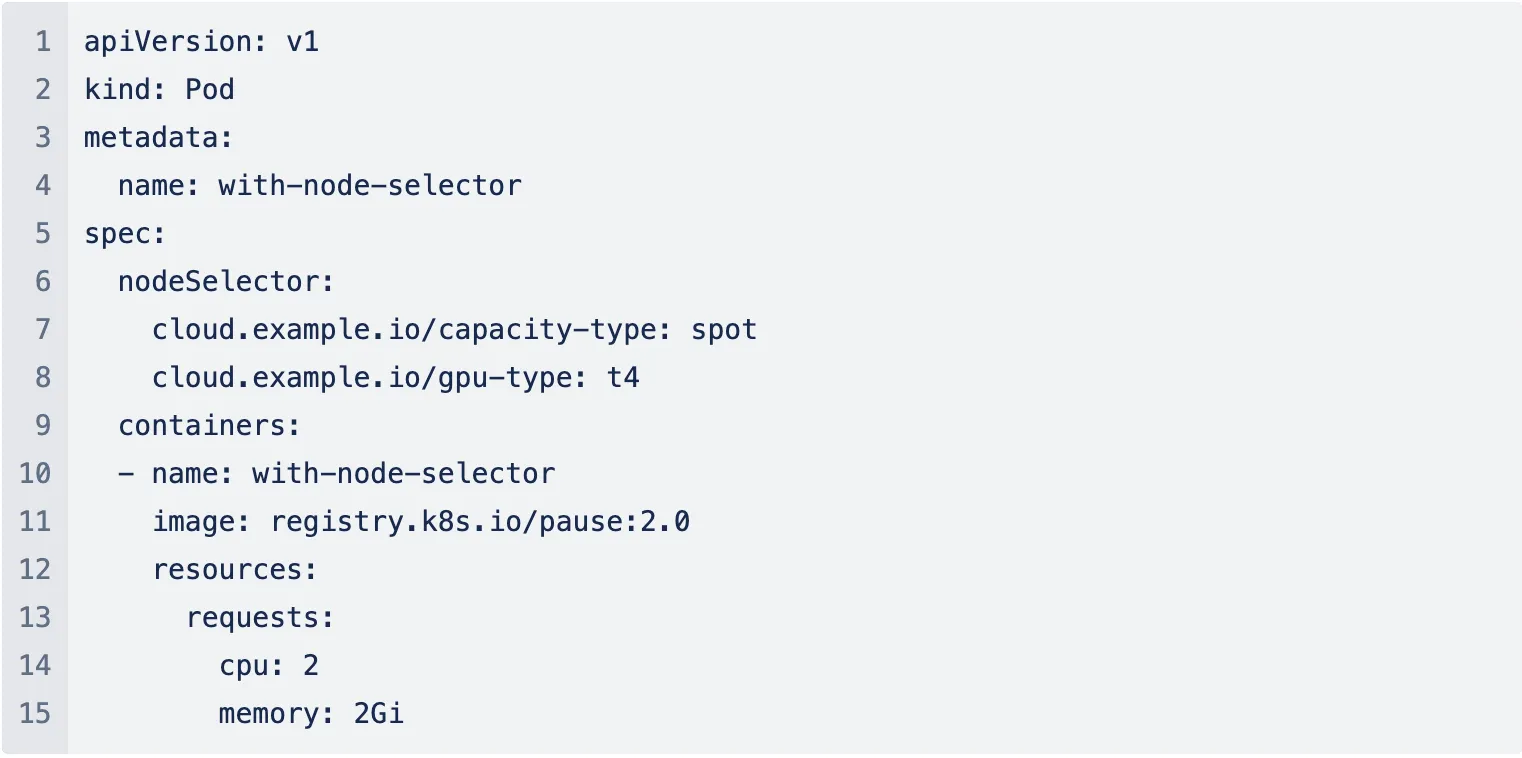

Node auto-provisioning eliminates the manual step of pre-creating node pools by allowing users to add high-level requirements as constraints. It automatically determines the best machine type or available node for the workload.

Some of the commonly used constraints:

- cpu: required CPU, e.g. 2

- memory: required memory for the workload, e.g. 1000

- gpu-type: required GPU type, e.g. t4, a100

- capacity type: purchasing option, e.g. on-demand or spot

- zones: geographical locations, e.g. us-east-1a

- operating system: OS of the machine, e.g. linux or windows

- architecture: the architecture of the machine, e.g. arm64 or amd64
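The constraints listed above can be read as a filter over a machine catalog followed by a cost comparison. The sketch below shows that idea in Python. It is not how any particular provisioner is implemented: the catalog entries, their names, and the `relative_cost` values are made up for illustration, and memory is assumed to be in MiB.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass(frozen=True)
class MachineType:
    name: str
    cpu: int
    memory_mib: int
    gpu_type: Optional[str]
    arch: str
    relative_cost: float  # illustrative unit, not a real price


@dataclass(frozen=True)
class Constraints:
    cpu: int
    memory_mib: int
    gpu_type: Optional[str] = None
    arch: str = "amd64"


# Hypothetical catalog; real catalogs come from the cloud provider.
CATALOG = [
    MachineType("cpu-small", 2, 4096, None, "amd64", 1.0),
    MachineType("cpu-large", 8, 32768, None, "amd64", 4.0),
    MachineType("arm-small", 2, 4096, None, "arm64", 0.8),
    MachineType("gpu-t4", 4, 16384, "t4", "amd64", 6.0),
]


def pick_machine(c: Constraints, catalog: List[MachineType]) -> Optional[MachineType]:
    """Return the cheapest machine that satisfies every constraint, or None."""
    candidates = [
        m
        for m in catalog
        if m.cpu >= c.cpu
        and m.memory_mib >= c.memory_mib
        and m.arch == c.arch
        and (c.gpu_type is None or m.gpu_type == c.gpu_type)
    ]
    return min(candidates, key=lambda m: m.relative_cost, default=None)


cpu_choice = pick_machine(Constraints(cpu=2, memory_mib=1000), CATALOG)
gpu_choice = pick_machine(Constraints(cpu=2, memory_mib=1000, gpu_type="t4"), CATALOG)
too_big = pick_machine(Constraints(cpu=64, memory_mib=1000), CATALOG)
print(cpu_choice, gpu_choice, too_big)
```

With this catalog, the CPU-only request resolves to `cpu-small`, the T4 request to `gpu-t4`, and the 64-CPU request to `None` because nothing in the catalog is large enough.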

Cloud Providers offering node auto-provisioning

Each cloud provider offers its own auto-provisioning mechanism. On AWS, you install a tool such as Karpenter, while GCP provides a built-in solution. Azure recently introduced its own auto-provisioner project, which is currently in preview.

AWS Karpenter

Karpenter, an open-source node lifecycle management project designed for Kubernetes, significantly enhances the efficiency and cost-effectiveness of running workloads on clusters. By considering scheduling constraints such as resource requests, node selectors, affinities, tolerations, and topology spread constraints, Karpenter intelligently provisions and deallocates nodes as needed.
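As a rough illustration of how such constraints are expressed, the Python sketch below turns a few high-level constraints into node selector requirement entries (`key`, `operator`, `values`), the shape that Kubernetes node affinity uses and that Karpenter accepts in its requirements. The label keys are the well-known Kubernetes labels plus Karpenter's `karpenter.sh/capacity-type`; the `to_requirements` helper and its input field names are hypothetical, and the output is only a fragment, not a complete Karpenter resource.

```python
# Mapping from hypothetical constraint names to well-known node labels.
WELL_KNOWN_LABELS = {
    "arch": "kubernetes.io/arch",
    "os": "kubernetes.io/os",
    "zones": "topology.kubernetes.io/zone",
    "capacity_type": "karpenter.sh/capacity-type",
}


def to_requirements(constraints):
    """Convert {constraint: [allowed values]} into requirement entries.

    Constraints that are missing or empty are skipped, which leaves that
    dimension unconstrained.
    """
    requirements = []
    for field, label in WELL_KNOWN_LABELS.items():
        values = constraints.get(field)
        if values:
            requirements.append(
                {"key": label, "operator": "In", "values": sorted(values)}
            )
    return requirements


reqs = to_requirements(
    {
        "arch": ["arm64", "amd64"],
        "capacity_type": ["spot"],
        "zones": ["us-east-1a"],
    }
)
for r in reqs:
    print(r)
```

Leaving a dimension out keeps the provisioner free to choose, which is usually what allows it to find a cheaper or more available machine.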

GCP Node auto-provisioning

Node auto-provisioning, integrated into the cluster autoscaler, creates and deletes node pools on the user's behalf, in addition to scaling existing ones, based on the specifications of unschedulable Pods. GCP's auto-provisioning feature ensures optimal resource utilisation by considering CPU, memory, ephemeral storage, GPU requests, node affinities, and label selectors.

Azure Node auto-provisioning

Azure's Node auto-provisioning (NAP) project, currently in preview mode, leverages the open-source Karpenter project to determine the optimal VM configuration for running workloads efficiently and cost-effectively. NAP automatically deploys and manages Karpenter on AKS clusters, providing users with a seamless experience.

Node auto-provisioning (NAP) for AKS is currently in preview. We are excited about this project and look forward to using it for our customers.

TrueFoundry - Get node auto-provisioning experience

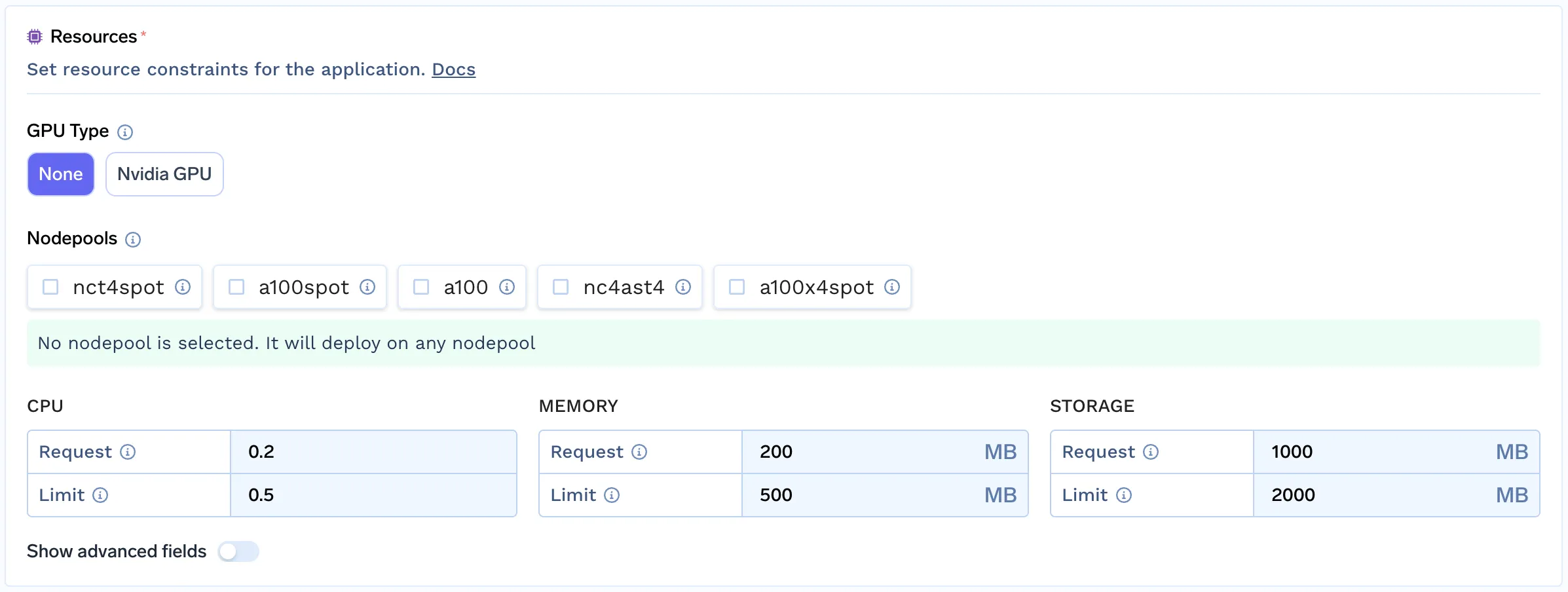

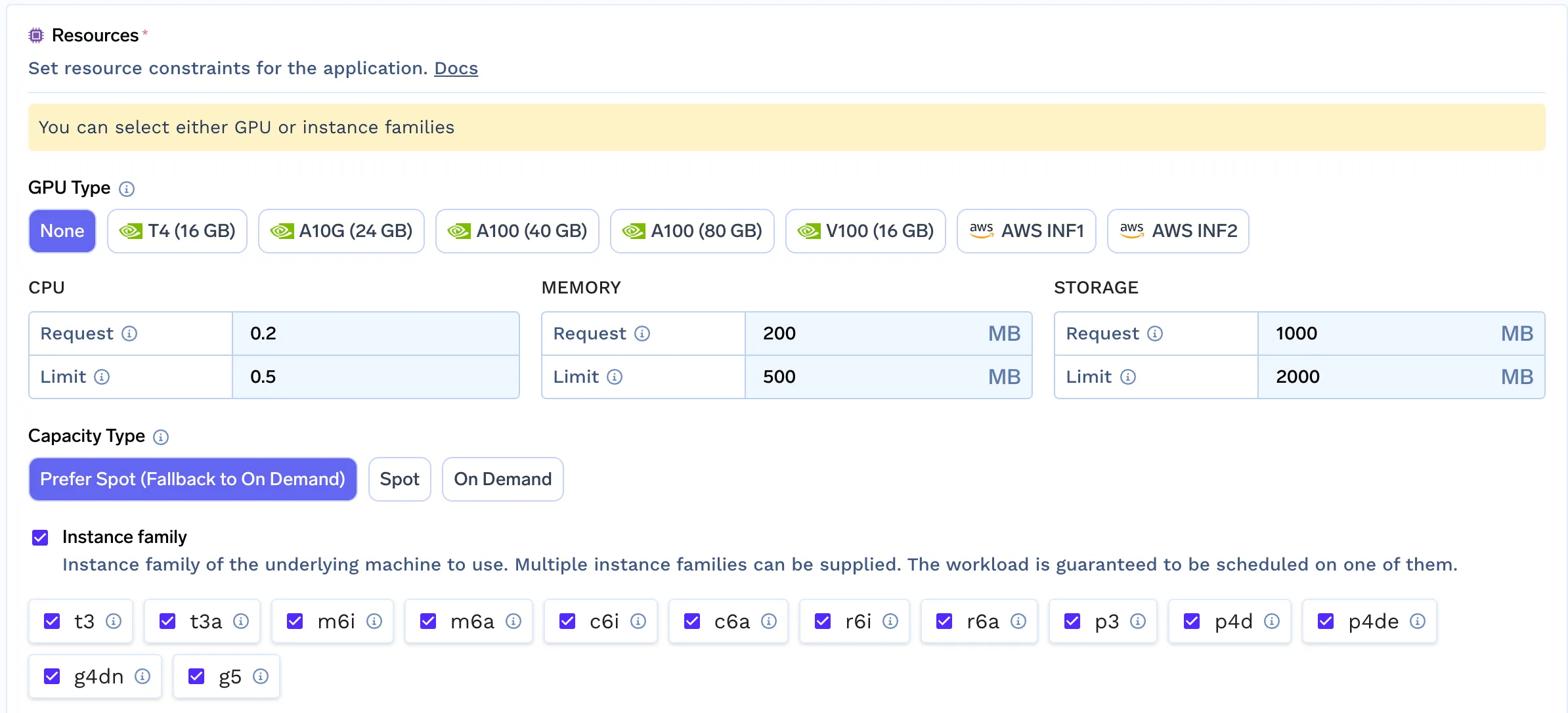

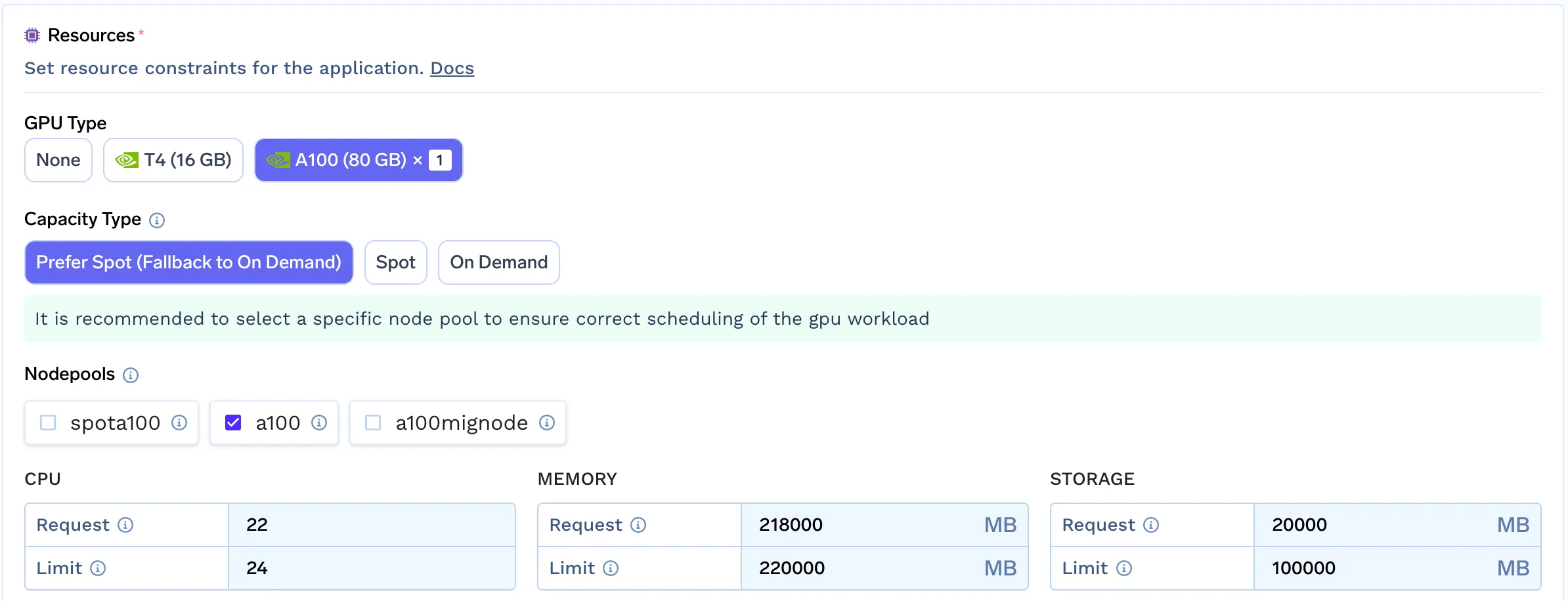

TrueFoundry offers advanced filtering capabilities for node pools, simulating the node auto-provisioning experience.

To accomplish this, we followed a few simple steps:

- Programmatically create node pools for all combinations of machine types and capacity types, with autoscaling enabled and a minimum size of zero.

- Link each node pool to its machine type, CPU, memory, GPU type, and GPU count.

- Filter these node pools based on the workload's requirements, making it easy for the end user to choose the right infrastructure.

This approach enables Developers/Data scientists to select the best node pool for their workload by analysing their requirements. This simple mechanism allows us to provide the same experience for any cloud that does not have built-in support for auto-provisioners yet.
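The three steps above can be sketched in a few lines of Python. This is an illustration of the idea, not TrueFoundry's implementation: the machine types, their specs, and all function names are hypothetical, memory is assumed to be in MiB, and no cloud API is called.

```python
from dataclasses import dataclass
from itertools import product
from typing import List, Optional


@dataclass(frozen=True)
class NodePool:
    name: str
    machine_type: str
    capacity_type: str
    cpu: int
    memory_mib: int
    gpu_type: Optional[str]
    gpu_count: int
    min_nodes: int = 0  # scale to zero when idle
    autoscaling: bool = True


# Hypothetical machine types: (cpu, memory_mib, gpu_type, gpu_count).
MACHINES = {
    "cpu-small": (2, 4096, None, 0),
    "cpu-large": (8, 32768, None, 0),
    "gpu-t4": (4, 16384, "t4", 1),
}
CAPACITY_TYPES = ("on-demand", "spot")


def build_node_pools() -> List[NodePool]:
    """Steps 1 and 2: one pool per (machine type, capacity type), with specs attached."""
    pools = []
    for (machine, spec), capacity in product(MACHINES.items(), CAPACITY_TYPES):
        cpu, memory_mib, gpu_type, gpu_count = spec
        pools.append(
            NodePool(f"{machine}-{capacity}", machine, capacity,
                     cpu, memory_mib, gpu_type, gpu_count)
        )
    return pools


def filter_pools(pools, cpu=0, memory_mib=0, gpu_type=None, capacity_type=None):
    """Step 3: keep only the pools that satisfy the workload's requirements."""
    return [
        p
        for p in pools
        if p.cpu >= cpu
        and p.memory_mib >= memory_mib
        and (gpu_type is None or p.gpu_type == gpu_type)
        and (capacity_type is None or p.capacity_type == capacity_type)
    ]


pools = build_node_pools()
matches = filter_pools(pools, cpu=2, gpu_type="t4", capacity_type="spot")
print([p.name for p in matches])
```

Because every pool has a minimum size of zero, the pools that nobody selects hold no running nodes, so pre-creating all combinations does not by itself add compute cost.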

Conclusion

As infrastructure requirements continue to evolve, cloud providers are dedicated to streamlining the process of selecting the optimal infrastructure for diverse workloads. At TrueFoundry, we share this commitment by striving to empower Developers with the tools and knowledge they need to deploy their workloads seamlessly.
