TrueML Talks #29 - GenAI and LLMs for Location Intelligence @ Beans.AI

We are back with another episode of True ML Talks. In this episode, we again dive deep into MLOps pipelines and LLM applications in enterprises as we speak with Sandeep Singh.

Sandeep is the Head of Machine Learning and Applied AI at Beans.AI.

Our conversation with Sandeep covers the following aspects:

- Revolutionizing Location Intelligence with AI

- The Machine Learning Engine Behind Beans.AI

- Beyond the Cloud: A Hybrid Machine Learning Workflow

- A Deep Dive into the Model Zoo

- Managing Large Language Models

- Experimentation vs. Cost in Model Hosting

Revolutionizing Location Intelligence with AI

Beans.AI offers a suite of software-as-a-service (SaaS) solutions that utilize AI to understand and navigate physical spaces. Their solutions go beyond traditional mapping, offering:

- Indoor Navigation: Beans.AI can pinpoint your exact location within large buildings, guiding you to your destination with ease. Imagine navigating a sprawling hospital complex or a massive stadium with confidence, knowing exactly where you are and where you need to go.

- Delivery Optimization: Delivery companies like FedEx rely on Beans.AI's technology to optimize routes and ensure timely deliveries. The platform accurately identifies even complex secondary addresses, like specific apartments within large buildings, eliminating delivery delays and frustrations.

- Real-time Data Insights: Beans.AI provides valuable data insights for various industries, including insurance, retail, and public safety. Their intelligent clustering methods help identify related buildings, entrances, and walkways, enabling smarter decision-making.
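To make the clustering idea concrete, here is a minimal sketch of distance-threshold (single-linkage) clustering over latitude/longitude points such as building entrances. It is an illustration only, not Beans.AI's actual method: the function names, the 30 m threshold, and the sample coordinates are all hypothetical.

```python
import math
from typing import List, Tuple

EARTH_RADIUS_M = 6_371_000.0  # mean Earth radius in meters

def haversine_m(a: Tuple[float, float], b: Tuple[float, float]) -> float:
    """Great-circle distance in meters between two (lat, lon) points given in degrees."""
    lat1, lon1 = map(math.radians, a)
    lat2, lon2 = map(math.radians, b)
    dlat, dlon = lat2 - lat1, lon2 - lon1
    h = math.sin(dlat / 2) ** 2 + math.cos(lat1) * math.cos(lat2) * math.sin(dlon / 2) ** 2
    return 2 * EARTH_RADIUS_M * math.asin(math.sqrt(h))

def cluster_points(points: List[Tuple[float, float]], threshold_m: float) -> List[int]:
    """Hypothetical helper: points within threshold_m of each other (directly or via a chain)
    share a cluster. Returns one label per point, numbered in order of first appearance."""
    parent = list(range(len(points)))  # union-find forest, one node per point

    def find(i: int) -> int:
        while parent[i] != i:
            parent[i] = parent[parent[i]]  # path halving keeps trees shallow
            i = parent[i]
        return i

    # O(n^2) pairwise comparison; fine for a sketch, too slow for large datasets.
    for i in range(len(points)):
        for j in range(i + 1, len(points)):
            if haversine_m(points[i], points[j]) <= threshold_m:
                parent[find(i)] = find(j)  # merge the two clusters

    labels, remap = [], {}
    for i in range(len(points)):
        root = find(i)
        remap.setdefault(root, len(remap))  # relabel roots as 0, 1, 2, ...
        labels.append(remap[root])
    return labels

# Usage (hypothetical coordinates): two entrances about 7 m apart, one about 1 km away.
entrances = [(37.7749, -122.4194), (37.77495, -122.41945), (37.7800, -122.4100)]
print(cluster_points(entrances, threshold_m=30.0))  # [0, 0, 1]
```

A production system would likely use a spatial index and a more robust algorithm (for example DBSCAN), but the core idea of grouping nearby features is the same.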

Beans.AI utilizes a combination of data sources, including:

- Satellite Imagery: High-resolution satellite images provide a base layer for understanding the physical environment.

- Public and Proprietary Data: Beans.AI leverages various public and partner-acquired data sets, including location data, text data, image data, and more.

- Human-in-the-Loop Approach: The platform combines AI with human expertise, ensuring accuracy and adaptability. Users can review and refine data points, further enhancing the platform's effectiveness.
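A common way to combine AI with human review is confidence-based routing: high-confidence predictions are accepted automatically and the rest are queued for a person to check. The sketch below assumes a hypothetical `Prediction` record and a 0.9 threshold; the source does not describe how Beans.AI's review loop is actually implemented.

```python
from dataclasses import dataclass
from typing import List, Tuple

@dataclass
class Prediction:
    item_id: str
    label: str
    confidence: float  # model score in [0, 1]

def route_predictions(
    preds: List[Prediction], threshold: float = 0.9
) -> Tuple[List[Prediction], List[Prediction]]:
    """Split predictions into auto-accepted ones and ones sent to human review."""
    accepted: List[Prediction] = []
    review: List[Prediction] = []
    for p in preds:
        # Anything below the (hypothetical) threshold goes to the human review queue.
        (accepted if p.confidence >= threshold else review).append(p)
    return accepted, review

# Usage with hypothetical predictions:
preds = [Prediction("bldg-1", "entrance", 0.97), Prediction("bldg-2", "walkway", 0.62)]
accepted, review = route_predictions(preds)
print([p.item_id for p in accepted], [p.item_id for p in review])  # ['bldg-1'] ['bldg-2']
```

Corrections made by reviewers can then be fed back as labeled training data, which is what lets such a loop improve the model over time.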

The Machine Learning Engine Behind Beans.AI

From GIS Basics to Cutting-Edge Tech:

Understanding geographic information systems (GIS) is essential before diving into AI. This foundation, combined with expertise from Esri, a leader in mapping solutions, forms the bedrock of Beans.AI's approach.

Beans.AI doesn't rely on a single setup. Instead, they use a flexible mix of tools and platforms:

- Esri: For core GIS functionalities and computer vision solutions.

- PyTorch: A popular deep learning framework for model development.

- VS Code: A versatile code editor for developers.

- Google Cloud Platform (GCP): Their primary platform for model training and deployment.

- Vertex AI: Google's managed machine learning platform for versioning and serving models.

- Labelbox, V7 Labs, Landing.AI: Diverse platforms for data labeling and annotation, catering to specific needs.

Beans.AI prioritizes speed and adaptability. They experiment with different tools and stay nimble, keeping an eye on evolving technologies. Their approach isn't about rigid processes, but about choosing the right tool for the job, allowing them to move fast and innovate.

Building these models requires close collaboration. Their Chief GIS Officer bridges the gap between geographical expertise and AI development, facilitating seamless communication and knowledge sharing.

While AI plays a crucial role, Beans.AI recognizes the value of human expertise. Their GIS knowledge and understanding of specific use cases guide the development process, ensuring models are well-aligned with real-world needs.

Beyond the Cloud: A Hybrid Machine Learning Workflow

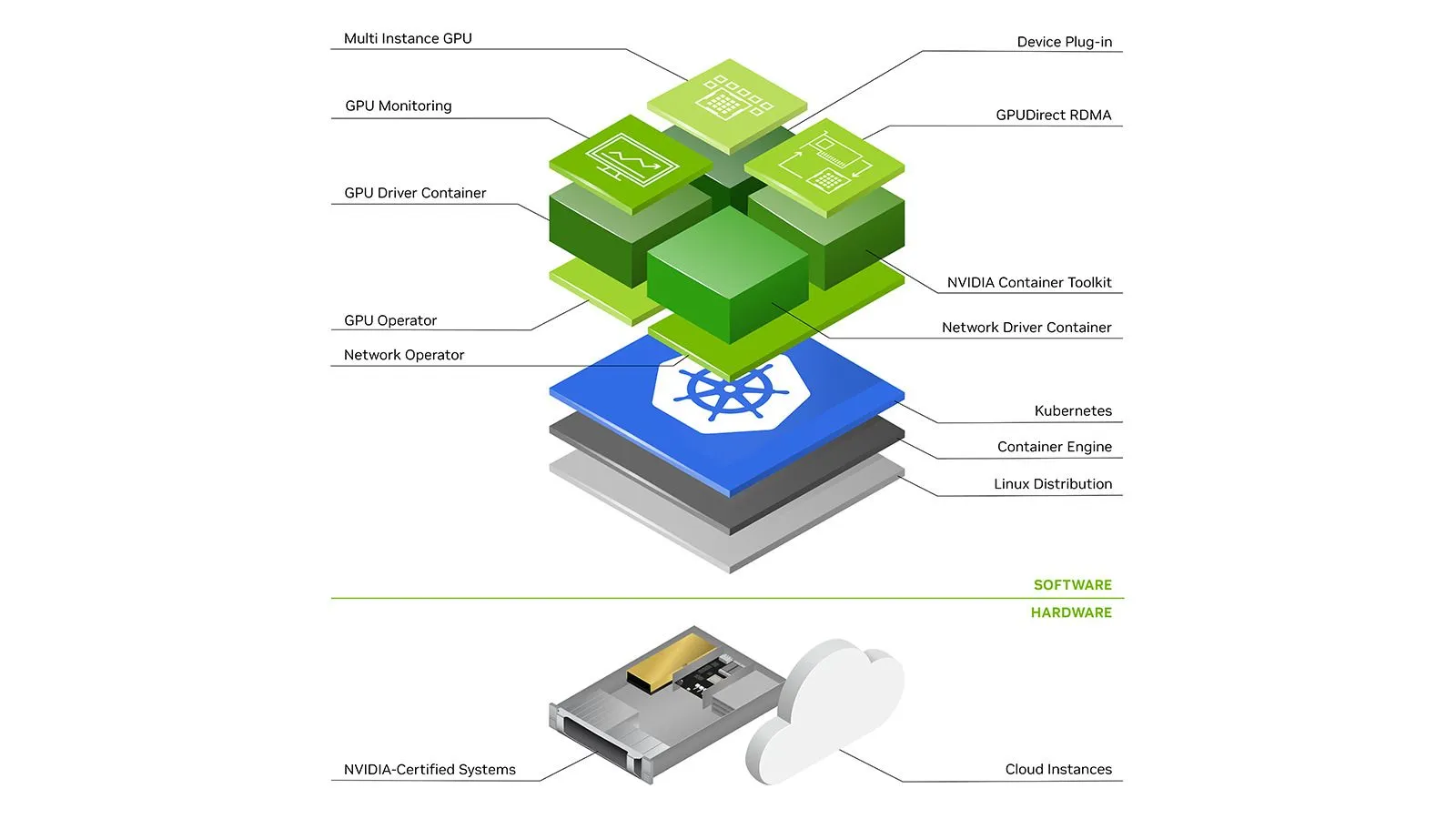

When experiments graduate to production-ready models, Beans.AI turns to GCP. From training complex algorithms to serving predictions at scale, GCP provides a robust and scalable infrastructure. They leverage Kubernetes clusters for seamless horizontal scaling, ensuring responsiveness during peak seasons when package deliveries soar.
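Kubernetes' Horizontal Pod Autoscaler documents its core scaling rule as desiredReplicas = ceil(currentReplicas × currentMetricValue / desiredMetricValue), leaving the replica count unchanged when that ratio is within a tolerance of 1.0 (0.1 by default). The source does not say how Beans.AI configures autoscaling, so the sketch below simply reproduces that rule in Python, with hypothetical replica bounds and CPU figures, to show how a load spike translates into more pods.

```python
import math

def desired_replicas(
    current_replicas: int,
    current_metric: float,   # e.g. average CPU utilization across pods, in percent
    target_metric: float,    # utilization the autoscaler tries to maintain
    min_replicas: int = 1,   # hypothetical lower bound
    max_replicas: int = 10,  # hypothetical upper bound
    tolerance: float = 0.1,  # HPA's default tolerance
) -> int:
    """Mirror the HPA rule: scale proportionally to metric/target, clamped to [min, max]."""
    ratio = current_metric / target_metric
    if abs(ratio - 1.0) <= tolerance:
        return current_replicas  # close enough to target: no scaling action
    desired = math.ceil(current_replicas * ratio)
    return max(min_replicas, min(max_replicas, desired))

# Usage with hypothetical numbers (target 60% CPU):
print(desired_replicas(4, 90.0, 60.0))   # peak load: 6
print(desired_replicas(4, 62.0, 60.0))   # within tolerance: 4
print(desired_replicas(8, 120.0, 60.0))  # 16 requested, capped at 10
```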

Beans.AI recognizes that a single platform can't solve everything. They actively experiment with other solutions like Vertex AI for specific tasks. However, they advocate for flexibility and data ownership. Solutions like Landing.AI, which allow model portability beyond their platform, resonate with their philosophy of operational ease and cost optimization.

Beans.AI navigates the fast-evolving AI landscape carefully. They actively explore new offerings such as Google's PaLM APIs, OpenAI's models, and the open-source Falcon models from the Technology Innovation Institute (TII), prioritizing quality and agility. Balancing cost and functionality, they favor platforms that leave trained models open and portable, so those models can be deployed more widely.

A Deep Dive into the Model Zoo: From Computer Vision to Text Processing

Beans.AI's approach is anything but monolithic. They constantly explore and experiment with various open-source models, tailoring them to specific needs:

- Computer Vision: For tasks like building and parking segmentation, they've moved from ResNet-based models to the latest vision transformers, always seeking the best fit.

- Text Processing: From chatbots answering user queries to email parsing for automated order creation, they utilize models like Falcon 40B and leverage H2O.AI's LLM Studio for faster experimentation.

Beans.AI champions open-source models, enabling transfer learning and customization:

- Apache-licensed models: Allow commercial use and fine-tuning for specific tasks.

- Experimentation Platforms: H2O.AI's LLM Studio streamlines testing different models and fine-tuning techniques.

They emphasize the importance of testing models on your own data and tasks, as benchmarks don't always translate to real-world performance.
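A minimal sketch of what "test on your own data" can look like: score each candidate model on a small labeled set drawn from your own task and rank them. The two models here are hypothetical stand-in functions; in practice each would wrap a call to a real model, and exact-match accuracy would be swapped for whatever metric fits the task.

```python
from typing import Callable, Dict, List, Tuple

Example = Tuple[str, str]  # (input text, expected label)

def accuracy(model: Callable[[str], str], examples: List[Example]) -> float:
    """Fraction of examples where the model's output exactly matches the expected label."""
    if not examples:
        raise ValueError("need at least one labeled example")
    correct = sum(1 for text, expected in examples
                  if model(text).strip().lower() == expected.lower())
    return correct / len(examples)

def compare_models(models: Dict[str, Callable[[str], str]],
                   examples: List[Example]) -> List[Tuple[str, float]]:
    """Score every candidate on the same in-house examples, best first."""
    scores = [(name, accuracy(fn, examples)) for name, fn in models.items()]
    return sorted(scores, key=lambda s: s[1], reverse=True)

# Usage with hypothetical in-house examples and stand-in models:
examples = [
    ("Leave package at back door", "delivery_instruction"),
    ("Where is my order?", "status_query"),
    ("Gate code is 1234", "delivery_instruction"),
]
models = {
    "model_a": lambda t: "status_query" if "?" in t else "delivery_instruction",
    "model_b": lambda t: "status_query",
}
print(compare_models(models, examples))
```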

Beans.AI is also exploring generative AI applications built around package photos:

- Generating variations of uploaded package photos: Using NeRF-like solutions to enhance user experience by displaying multiple views.

- Creating descriptions from photos: Automatically describing package placement or damage for improved analytics.

They're exploring Stable Diffusion to create multiple variations of package photos, adding a touch of surprise and delight to the user experience.

Managing Large Language Models

Training and inference have clearly different requirements when it comes to LLMs:

- Training: Requires immense computational power, often five GPUs per job. Beans.AI uses a range of platforms, such as RunPod, Paperspace, and NVIDIA NGC, for flexibility and cost optimization.

- Inference: A smaller "animal" to handle, often deployed on Google Cloud VMs. This gives complete control over scaling and performance, which is crucial in their fast-paced environment.

While Google remains their primary ecosystem, Beans.AI doesn't shy away from exploring other options:

- Lambda Labs: Initially considered but deemed cost-prohibitive.

- Azure: A promising alternative with improved model hosting, provisioning, and GPU availability. They're actively evaluating its potential for data science tasks.

Beans.AI emphasizes a flexible approach, adapting its strategy based on specific needs:

- Control is paramount: Vanilla VMs offer full control over scaling and performance, outweighing the convenience of managed solutions like Vertex AI.

- Maturation is happening: As managed platforms like Vertex AI evolve and offer more control, they might become viable options in the future.

- Azure's potential: Its competitive offerings in model hosting, provisioning, and GPU availability make it a promising candidate for future exploration.

Experimentation vs. Cost in Model Hosting

While latency and platform specifics aren't immediate concerns, Beans.AI emphasizes upfront cost estimation:

- Macro calculations: Estimating overall costs before experimentation helps set expectations and plan effectively.

- Industry-wide need: Intelligent cost forecasting tools for experimentation remain a gap in the field.
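A macro cost estimate can be as simple as GPUs × hours × hourly rate, multiplied by the expected number of runs plus a safety buffer. The sketch below is a back-of-the-envelope calculator under assumed numbers: the $2.00 per GPU-hour rate, the 12-hour run, the four runs, and the 20% overhead are hypothetical, not figures from the interview.

```python
from dataclasses import dataclass

@dataclass
class TrainingRun:
    gpus: int
    hours: float
    usd_per_gpu_hour: float  # hypothetical rate; check your provider's current pricing

    def cost(self) -> float:
        """Cost of a single run: GPUs x hours x hourly rate."""
        return self.gpus * self.hours * self.usd_per_gpu_hour

def experiment_budget(run: TrainingRun, num_runs: int, overhead: float = 0.2) -> float:
    """Estimated total for num_runs runs, plus a fractional buffer
    (e.g. for failed runs, storage, and data transfer)."""
    return run.cost() * num_runs * (1 + overhead)

# Usage with hypothetical numbers:
run = TrainingRun(gpus=5, hours=12.0, usd_per_gpu_hour=2.00)
print(run.cost())                                    # 120.0
print(round(experiment_budget(run, num_runs=4), 2))  # 576.0
```

Even a rough number like this sets expectations before any GPU time is spent, which is the point of estimating at the macro level.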

Beans.AI navigates the trade-off between prompt engineering and fine-tuning:

- Prompt engineering: Near-zero cost and well suited to simple interactions, though results may be less polished.

- Fine-tuning: More powerful, but incurs significant training costs.

They strategically combine both techniques for optimal results:

- Cost-conscious exploration: Prompt engineering for initial experimentation.

- Fine-tuning for key applications: When performance outweighs cost concerns.
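One way to frame this trade-off on cost alone is a break-even point: prompt engineering has almost no upfront cost, while fine-tuning pays off only once its training cost is recovered through cheaper requests (for example, because a fine-tuned model no longer needs long few-shot prompts). This is a simplified model under stated assumptions, not Beans.AI's method; the prices are hypothetical, and it ignores output quality, which can justify fine-tuning even when the numbers don't.

```python
import math
from typing import Optional

def break_even_requests(
    training_cost: float,          # one-off fine-tuning cost, in dollars
    prompt_cost_per_1k: float,     # cost per 1,000 requests with prompt engineering only
    finetuned_cost_per_1k: float,  # cost per 1,000 requests with the fine-tuned model
) -> Optional[int]:
    """Requests needed before fine-tuning's cumulative cost drops to prompting's,
    or None if the fine-tuned model is not cheaper per request."""
    savings_per_1k = prompt_cost_per_1k - finetuned_cost_per_1k
    if savings_per_1k <= 0:
        return None  # training cost is never recovered on price alone
    return math.ceil(training_cost * 1000 / savings_per_1k)

# Usage with hypothetical prices:
print(break_even_requests(500.0, 4.00, 1.50))  # 200000
print(break_even_requests(500.0, 1.00, 1.50))  # None
```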

Cost awareness is essential:

- Training from scratch is rarely feasible: High costs and complexity make it a risky option.

- Intelligent cost management is key: Optimizing experimentation saves resources and accelerates innovation.

Keep watching the TrueML YouTube series and reading the TrueML blog series.

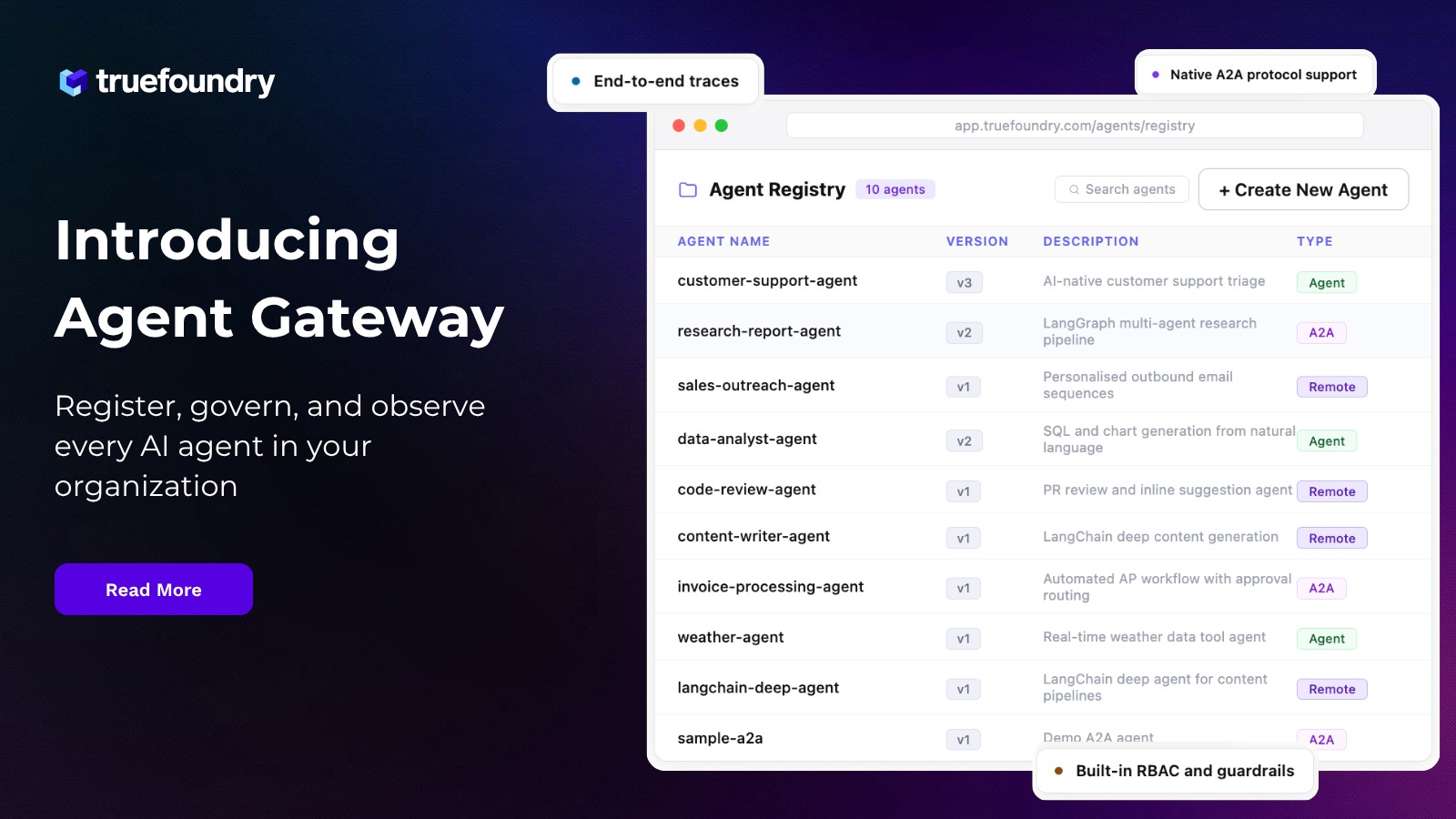

TrueFoundry is an ML deployment PaaS over Kubernetes that speeds up developer workflows, giving developers full flexibility in testing and deploying models while ensuring full security and control for the infra team. Through our platform, we enable machine learning teams to deploy and monitor models in 15 minutes with 100% reliability, scalability, and the ability to roll back in seconds, allowing them to save cost, release models to production faster, and realize real business value.

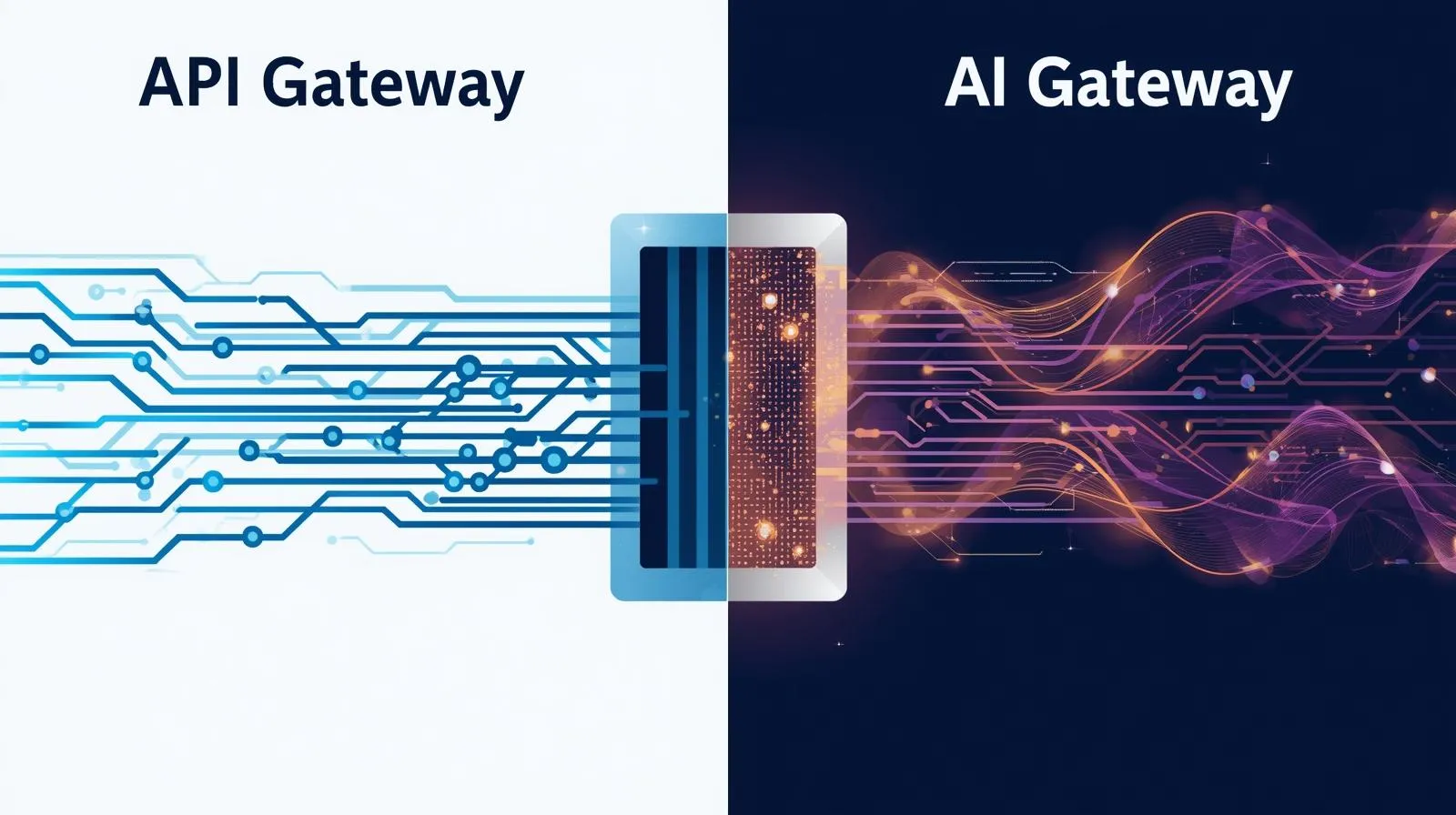
