Best Prompt Engineering Tools in 2026: All You Need to Know

Prompt Engineering refers to improving inputs to get better outputs from LLMs.

Prompt engineering is like learning how to talk effectively to AI. It's about choosing the right words when asking AI to do something, whether it's writing text, coding, or creating images.

There are special tools that help us get better at this, making sure the AI understands us correctly and does what we want more accurately.

It's all about making communication between humans and AI smoother and more effective. In this blog, let us talk about the best prompt engineering tools available in 2026.

What is a Prompt Engineering Tool?

A prompt engineering tool is a software platform, application, or framework that helps users craft, test, refine, and organize the instructions (prompts) they provide to large language models (LLMs) or generative AI systems.

These tools are crucial for improving AI accuracy, maintaining consistent output, minimizing errors or hallucinations, and streamlining the way humans interact with AI. They go beyond simple chat interfaces, allowing for structured, repeatable workflows.

The best prompt engineering tools act as a bridge between human ideas and machine responses, helping users transform vague or simple requests into precise, actionable prompts that generate more reliable results.

Best Prompt Engineering Tools

Here is a quick overview of the best prompt engineering tools in 2026:

LLM Gateway (TrueFoundry)

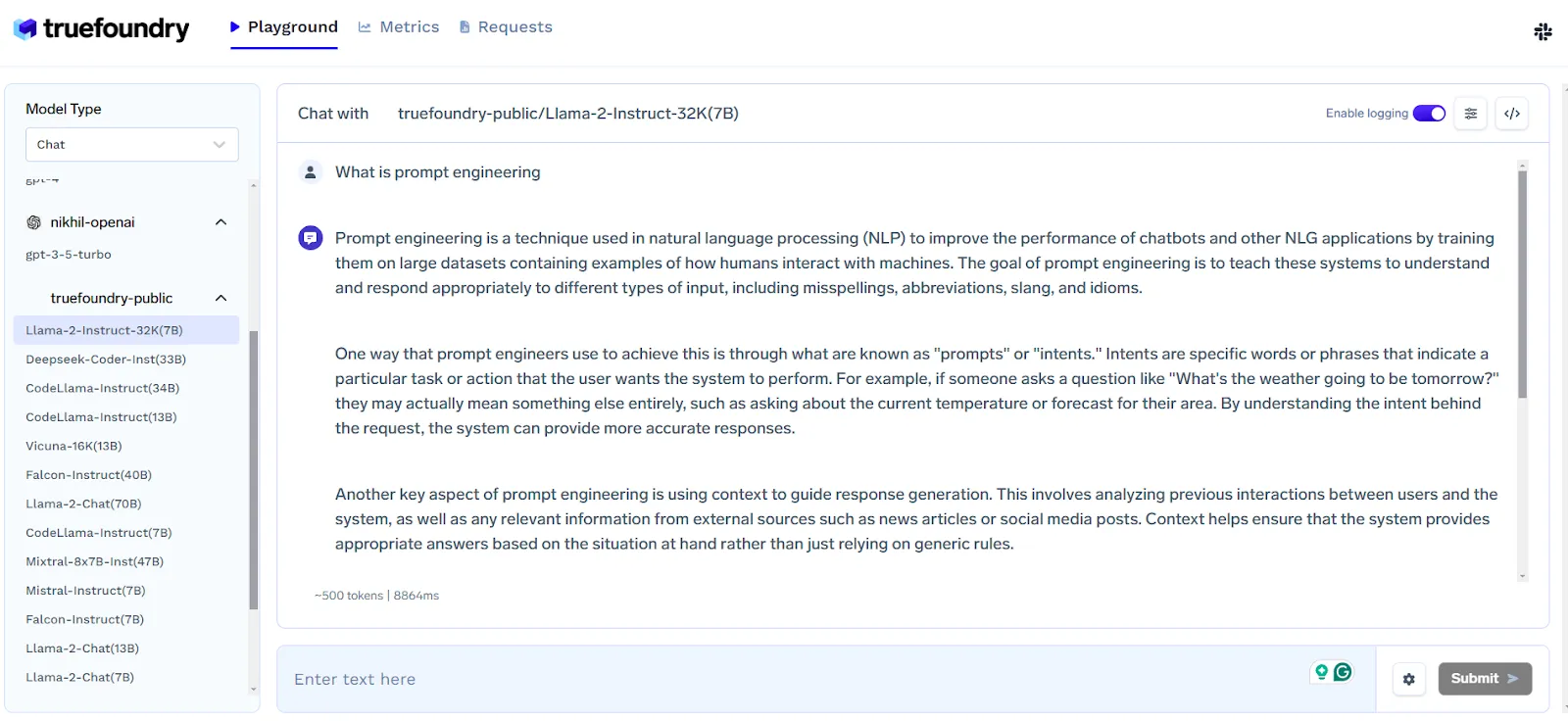

The TrueFoundry LLM playground is a platform that simplifies experimenting with open-source large language models (LLMs). It offers an easy way to test different LLMs through an API, without the need for complex setups involving GPUs or model loading.

This playground allows you to compare models to find the best fit before deciding on a hosting solution.

Interacting with the LLM Gateway

You can choose among different LLMs, including OpenAI models, for inference.

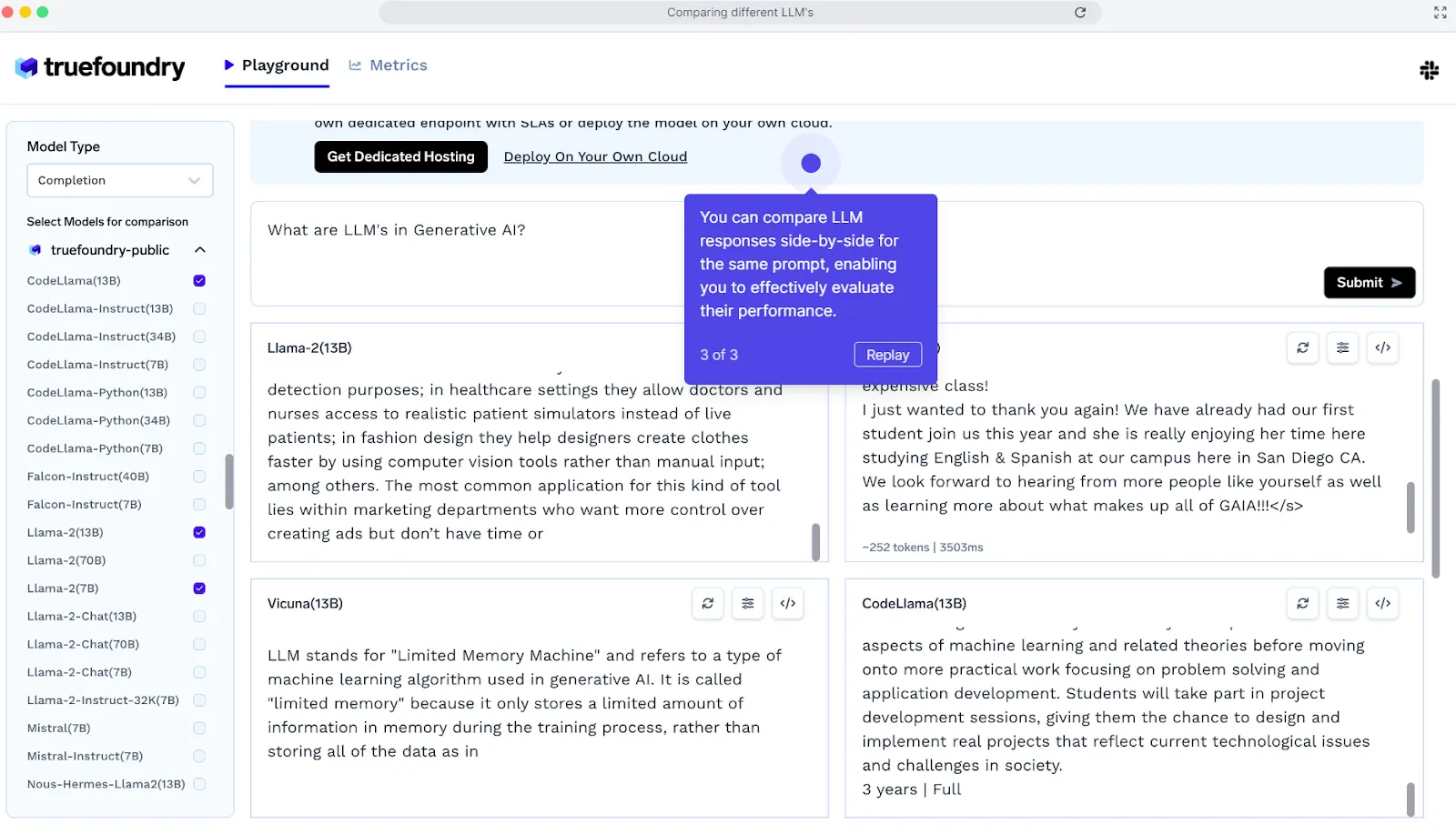

Compare different models with LLM Gateway:

You can compare up to four models on the same prompt and decide which one works best for it.

Key features:

LLM Gateway provides a single API through which you can call any LLM provider, including OpenAI, Anthropic, Bedrock, your self-hosted models, and open-source LLMs. It provides the following features:

- Unified API to access all LLMs from multiple providers, including your own self-hosted models.

- Centralized Key Management

- Authentication and attribution per user, per product.

- Cost Attribution and control

- Fallback, retries and rate-limiting support

- Guardrails Integration

- Caching and Semantic Caching

- Support for Vision and Multimodal models

- Run Evaluations on your data
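To make the fallback and retry feature concrete, here is a minimal sketch of the general pattern in plain Python. It does not use TrueFoundry's API; the function `call_with_fallback`, the `ProviderError` class, and the two stand-in providers are all hypothetical names made up for illustration.

```python
import time
from typing import Callable, Sequence


class ProviderError(Exception):
    """Raised by a provider callable when a request fails."""


def call_with_fallback(
    prompt: str,
    providers: Sequence[tuple[str, Callable[[str], str]]],
    retries: int = 2,
    backoff_seconds: float = 0.0,
) -> tuple[str, str]:
    """Try each provider in order, retrying before falling back to the next.

    Returns (provider_name, response). Raises ProviderError if all fail.
    """
    errors = []
    for name, send in providers:
        for attempt in range(1, retries + 1):
            try:
                return name, send(prompt)
            except ProviderError as exc:
                errors.append(f"{name} attempt {attempt}: {exc}")
                time.sleep(backoff_seconds * attempt)  # simple linear backoff
    raise ProviderError("all providers failed: " + "; ".join(errors))


# Stand-in providers (hypothetical); a real setup would wrap HTTP clients.
def flaky_primary(prompt: str) -> str:
    raise ProviderError("rate limited")


def stable_secondary(prompt: str) -> str:
    return f"echo: {prompt}"


used, reply = call_with_fallback(
    "Summarize this ticket.",
    [("primary", flaky_primary), ("secondary", stable_secondary)],
)
print(used, "->", reply)
```

A gateway applies the same idea centrally, so individual applications do not each need to carry this logic.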

While TrueFoundry offers great tools for prompt engineering, its capabilities extend well beyond that, including model training, deployment, cost optimization, and a unified management interface for cloud resources.

Pricing:

TrueFoundry offers a free trial option for developers and early builders experimenting with AI workflows. TrueFoundry’s paid plan starts at $499/month.

Hugging Face Transformers

Hugging Face Transformers offers easy-to-use APIs and utilities to access and train state-of-the-art pre-trained NLP models. It supports tasks like translation, entity recognition, and text classification, while encouraging open-source collaboration.

Pros:

- User-friendly with accessible APIs for NLP tasks.

- Supports multiple frameworks: PyTorch, TensorFlow, and JAX.

- Flexible model training and inference across frameworks.

- Open-source, fostering community collaboration and innovation.

Cons:

- No standalone dashboard or GUI.

- Can be overwhelming for beginners due to extensive configuration options.

Best For

- NLP practitioners and researchers focusing on prompt engineering.

- Developers needing flexible, pre-trained models for text classification, translation, or entity recognition.

- Teams integrating models across different ML frameworks seamlessly.

Pricing

- Hugging Face Transformers is open-source and free. Some premium features and hosted inference APIs may have paid tiers via Hugging Face Hub.

AllenNLP

AllenNLP is an open-source NLP library designed to simplify a wide range of natural language processing tasks. While slightly more complex than AdaptNLP, it provides a rich collection of tools and pre-built components, making it ideal for researchers and developers working with advanced NLP models.

Pros:

- High-level configuration for easy setup of complex NLP tasks.

- Modular abstractions for building and experimenting with state-of-the-art models.

- Open-source and community-driven with active support and contributions.

Cons:

- Steeper learning curve compared to simpler NLP libraries.

- Requires familiarity with Python and NLP concepts for full utilization.

Best For

- Researchers and developers working on advanced NLP tasks.

- Multi-task learning, transformer-based models, and text classification projects.

- Experimenting with modular NLP architectures and custom pipelines.

Pricing

- AllenNLP is completely free and open-source, supported by the community.

AdaptNLP

AdaptNLP is an easy-to-use NLP library that simplifies working with advanced language models for both beginners and experts. Built on top of fastai and Hugging Face Transformers, it provides fast, flexible, and efficient solutions for training and fine-tuning models.

Pros:

- Combines Transformers and Flair for versatile NLP capabilities.

- Simplifies complex tasks like text classification, entity extraction, and question answering.

- User-friendly API enables quick experimentation and training.

- Supports modern training techniques with fast, efficient inference.

Cons:

- Primarily Python-focused; limited support for non-Python environments.

- May require familiarity with Hugging Face or fastai for advanced customizations.

Best For

- Beginners looking for easy-to-use NLP pipelines.

- ML engineers needing efficient fine-tuning of transformer models.

- Quick prototyping of NLP tasks like classification, entity recognition, and POS tagging.

Pricing

- AdaptNLP is open-source and free, leveraging community-driven development.

LMScorer

LMScorer is an open-source tool that provides a simple programming and command-line interface to score sentences using various ML language models. It helps evaluate and refine prompts to improve AI interactions and ensure outputs are more effective.
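To show what "scoring a sentence with a language model" means, here is a toy sketch that uses a tiny add-one smoothed bigram model built from two sentences. This is not LMScorer's API or its models; the function names and the corpus are assumptions chosen so the example runs offline.

```python
import math
from collections import Counter


def train_bigram_model(corpus: list[str]):
    """Count bigrams and their left-hand contexts over whitespace tokens."""
    context_counts, bigram_counts, vocab = Counter(), Counter(), set()
    for sentence in corpus:
        tokens = ["<s>"] + sentence.lower().split()
        vocab.update(tokens[1:])
        context_counts.update(tokens[:-1])
        bigram_counts.update(zip(tokens, tokens[1:]))
    return context_counts, bigram_counts, len(vocab)


def sentence_log_prob(sentence: str, model) -> float:
    """Log-probability of a sentence under add-one smoothed bigram estimates."""
    context_counts, bigram_counts, vocab_size = model
    tokens = ["<s>"] + sentence.lower().split()
    total = 0.0
    for prev, word in zip(tokens, tokens[1:]):
        numerator = bigram_counts[(prev, word)] + 1
        denominator = context_counts[prev] + vocab_size
        total += math.log(numerator / denominator)
    return total


model = train_bigram_model(["the cat sat on the mat", "the dog sat on the rug"])
fluent = sentence_log_prob("the cat sat on the mat", model)
scrambled = sentence_log_prob("mat the on sat cat the", model)
print(fluent > scrambled)  # the fluent word order scores higher
```

A higher (less negative) score means the model finds the sentence more likely, which is the signal you would use to rank alternative phrasings of a prompt.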

Pros:

- Open-source and free to use with accessible code for modification.

- Simple programming interface and CLI for easy integration.

- Scores sentences using ML language models to evaluate quality.

- Helps improve prompts for better AI performance.

Cons:

- Limited to scoring; not a full NLP or model-training framework.

- Requires basic programming knowledge for effective use.

Best For

- AI developers and researchers refining prompt quality.

- Experimenting with language models for natural, understandable outputs.

- Quickly evaluating multiple variations of prompts for optimization.

Pricing

- LMScorer is completely free and open-source.

Promptfoo

Promptfoo is an open-source command-line tool and library designed to streamline testing and development of large language models (LLMs). It enables developers to systematically test prompts, compare outputs, and automatically score results, replacing trial-and-error with a test-driven approach.
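The test-driven idea can be sketched in a few lines of Python. This is not Promptfoo's configuration format or API; `PromptCase`, `pass_rate`, and the offline `fake_model` are hypothetical stand-ins that only illustrate scoring prompt variants against fixed test cases.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class PromptCase:
    variables: dict[str, str]  # values substituted into the prompt template
    must_contain: str          # substring the output is expected to include


def fake_model(prompt: str) -> str:
    """Stand-in for an LLM call so the sketch runs offline."""
    if "French" in prompt:
        return "Bonjour le monde"
    return "Hello world"


def pass_rate(template: str, cases: list[PromptCase], model: Callable[[str], str]) -> float:
    """Fraction of cases whose output contains the expected substring."""
    passed = 0
    for case in cases:
        output = model(template.format(**case.variables))
        if case.must_contain.lower() in output.lower():
            passed += 1
    return passed / len(cases)


cases = [
    PromptCase({"language": "French", "text": "Hello world"}, "bonjour"),
    PromptCase({"language": "English", "text": "Hello world"}, "hello"),
]
print(pass_rate("Translate to {language}: {text}", cases, fake_model))  # 1.0
print(pass_rate("Translate: {text}", cases, fake_model))                # 0.5
```

The second template drops the target language, so one case fails. Surfacing that kind of regression automatically is the point of a test-driven workflow.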

Pros:

- Open-source and free, with both CLI and library integration.

- Supports concurrent testing for faster evaluation of LLMs.

- Works with multiple LLM APIs like OpenAI and Google.

- Enables systematic, test-driven development for high-quality model outputs.

Cons:

- Primarily aimed at developers; less beginner-friendly.

- Requires familiarity with command-line operations and LLM APIs.

Best For

- LLM developers testing and refining prompts efficiently.

- QA engineers and researchers wanting systematic evaluation of model responses.

- Teams seeking to implement test-driven workflows for AI output quality.

Pricing

- Promptfoo is completely free and open-source.

PromptHub

PromptHub is a closed-source platform built for testing, evaluating, and optimizing prompts across multiple language models. It allows users to assess prompt effectiveness, explore model responses, and analyze the impact of different hyperparameter settings.

Pros:

- User-friendly interface with API access and optional Docker deployment.

- Provides a rich library of ready-to-use prompts for NLP and chatbot development.

- Supports prompt customization for specific models and tasks.

- Facilitates team collaboration, version control, and continuous improvement.

Cons:

- Lacks full transparency.

- May require subscription or licensing for advanced features.

- Limited flexibility compared to open-source alternatives for experimentation.

Best For

- Teams and developers testing prompts across multiple LLMs.

- NLP practitioners creating chatbots or AI content generation pipelines.

- Organizations needing collaboration and version-controlled prompt management.

Pricing

- PromptHub is a paid platform with subscription plans starting at $9/month.

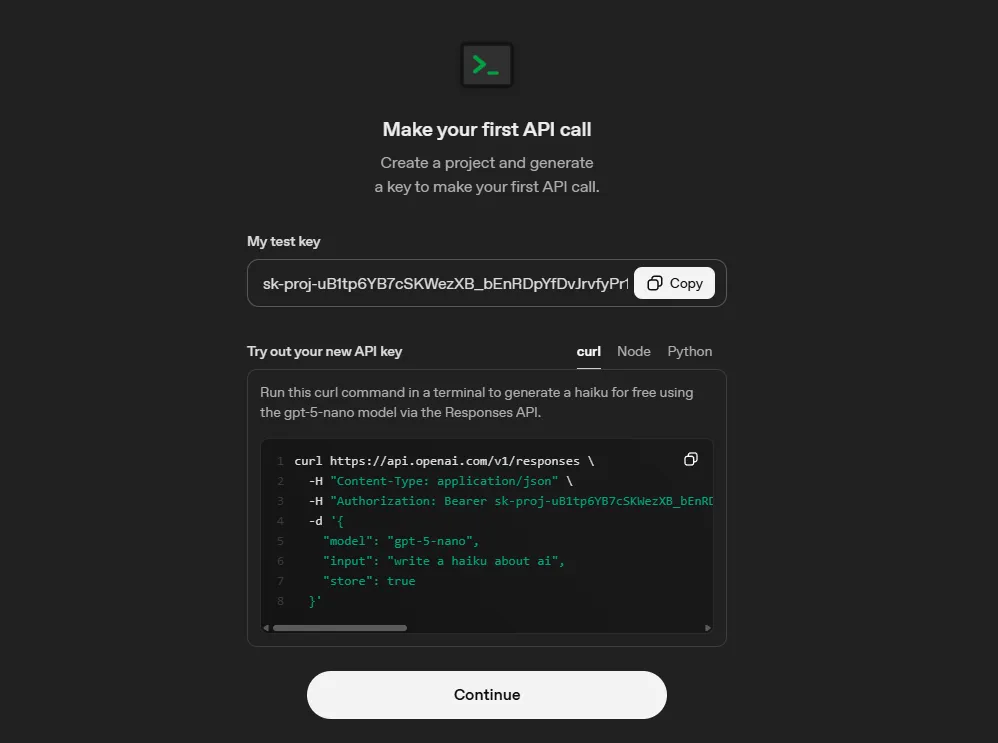

OpenAI Playground

OpenAI Playground is a closed-source web tool designed for experimenting with OpenAI’s advanced AI models, including GPT-4. It allows users to test prompts, compare strategies, and fine-tune language models in an interactive, user-friendly environment.

Pros:

- Intuitive, browser-based interface ideal for prompt testing and prompt engineering.

- Supports multiple OpenAI models and adjustable parameters for experimentation.

- Provides immediate feedback for rapid iteration.

- Rich educational resources, tutorials, and API documentation available.

Cons:

- Limited to OpenAI models.

- May require an OpenAI subscription or API credits for extended usage.

- Less suitable for non-OpenAI models or custom LLM deployment.

Best For

- Developers and researchers experimenting with GPT-4 or other OpenAI models.

- Prompt engineers exploring zero-shot, few-shot, or fine-tuning strategies.

- Educators and students learning to work with advanced AI language models.

Pricing

- OpenAI Playground usage typically requires API credits or subscription, depending on usage limits and model choice.

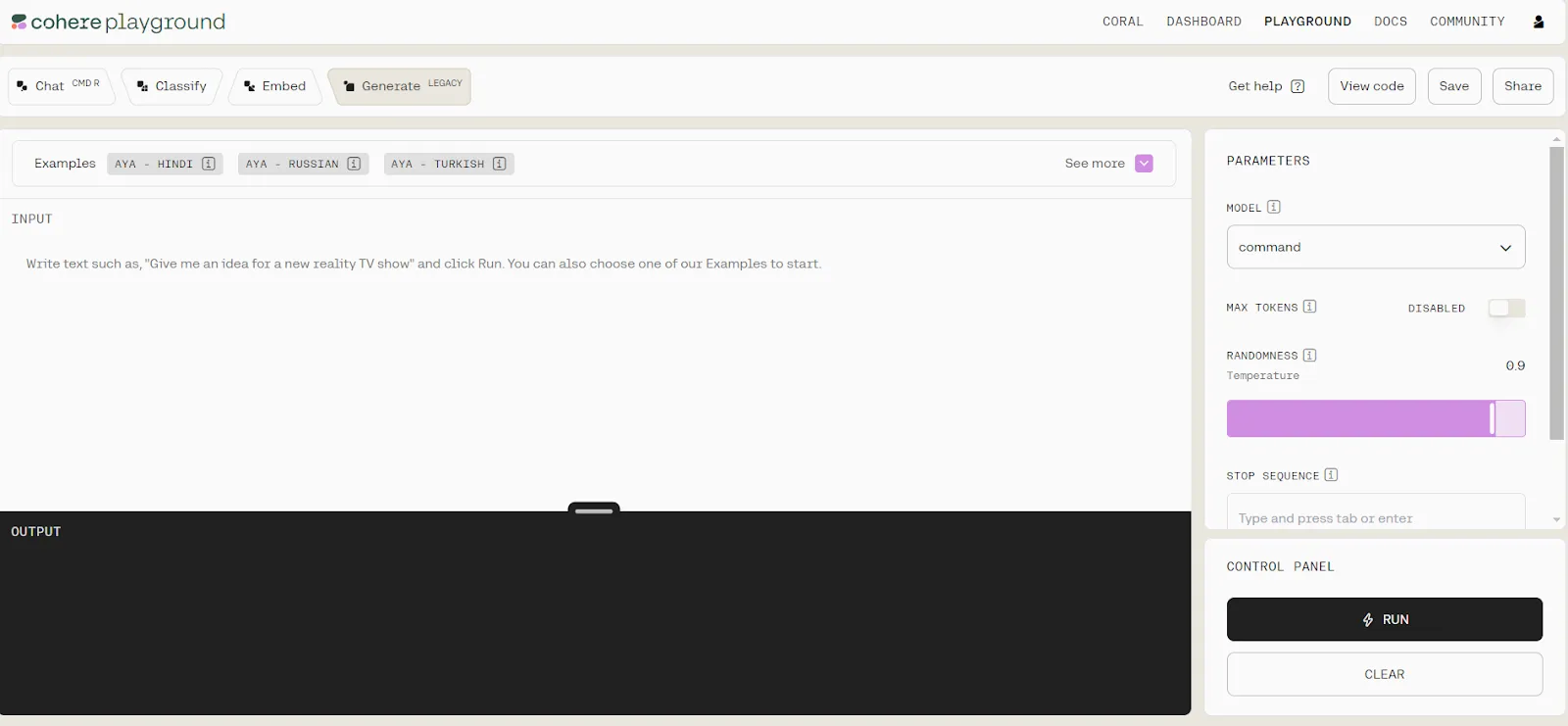

Cohere Playground

Cohere Playground is an intuitive online platform that allows users to work with large AI language models without coding. It’s suitable for both beginners and experienced users, enabling text generation, embedding analysis, classifier creation, and simple chat-based interactions.

Pros:

- No coding required; beginner-friendly interface.

- Generate natural language text and create text classifiers easily.

- Visualize embeddings for semantic analysis in a 2D space.

- Select model size based on requirements.

Cons:

- Limited model selection and features compared to other platforms.

- Cannot compare different prompts or models side by side.

- Requires special permissions to train your own models.

Best For

- Beginners exploring large language models.

- Users needing quick experimentation with text generation or classification.

- Semantic analysis using embeddings without technical setup.

Pricing

- Cohere Playground may require an account or subscription for extended usage and model access.

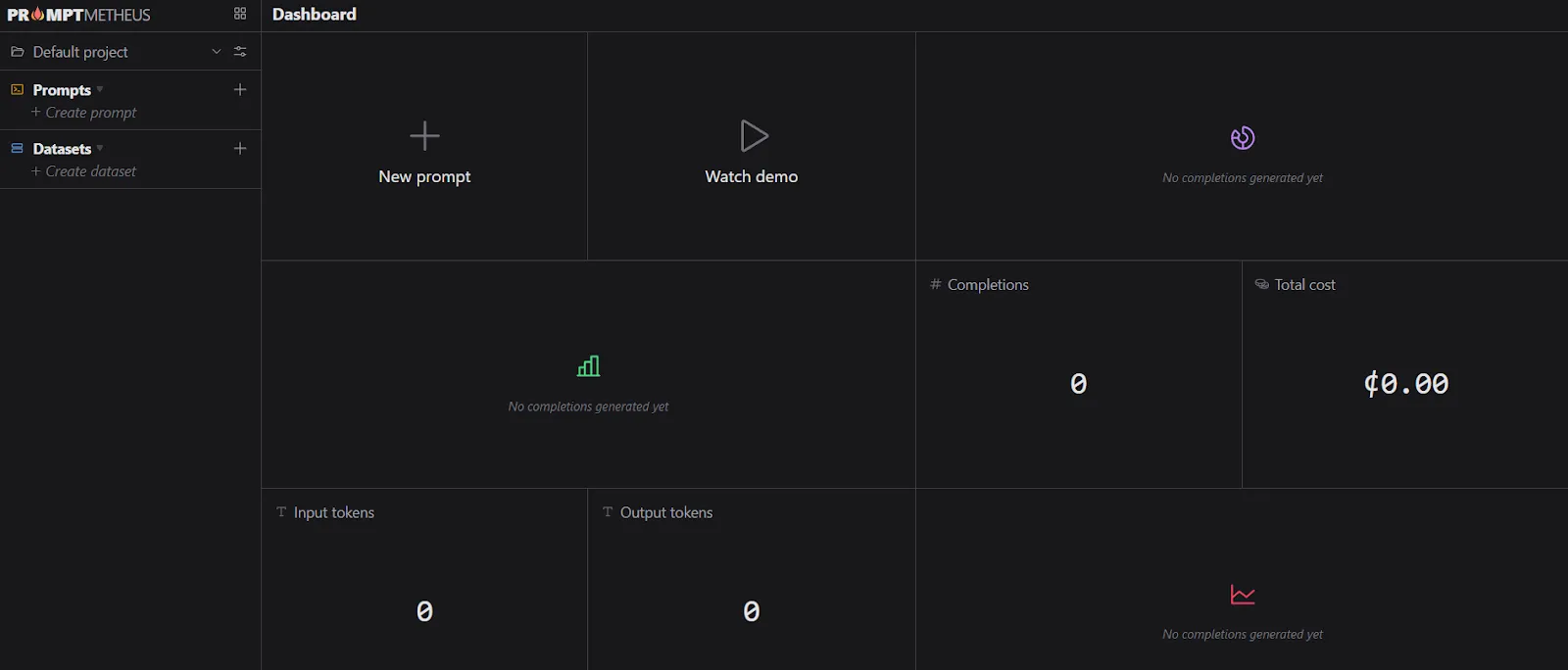

PromptMetheus

PromptMetheus is a specialized Prompt Engineering IDE designed for creating, testing, and deploying prompts for large language models (LLMs). It offers a structured environment with analytics, collaboration tools, and real-time deployment capabilities, making prompt engineering more efficient and manageable.

Pros:

- Composable prompts using text and data blocks.

- Full history tracking and traceability for prompt design.

- Cost estimation for LLM API usage before execution.

- Access to performance statistics and analytics.

- Real-time collaboration with team members.
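Pre-execution cost estimation is simple arithmetic once you have a token count and a price list. The sketch below is not how PromptMetheus computes it; the model names and prices are made up, and the four-characters-per-token figure is only a rough rule of thumb for English text, not a real tokenizer.

```python
import math

# Hypothetical prices in USD per 1,000 tokens; real prices vary by provider.
PRICES_PER_1K = {
    "small-model": {"input": 0.0005, "output": 0.0015},
    "large-model": {"input": 0.0100, "output": 0.0300},
}


def estimate_tokens(text: str, chars_per_token: float = 4.0) -> int:
    """Rough token estimate from character count (a heuristic, not a tokenizer)."""
    return math.ceil(len(text) / chars_per_token)


def estimate_cost(prompt: str, max_output_tokens: int, model: str) -> float:
    """Upper-bound cost for one call, assuming the full output budget is used."""
    price = PRICES_PER_1K[model]
    input_cost = estimate_tokens(prompt) / 1000 * price["input"]
    output_cost = max_output_tokens / 1000 * price["output"]
    return input_cost + output_cost


prompt = "Summarize the following support ticket in two sentences: ..."
for model_name in PRICES_PER_1K:
    print(model_name, round(estimate_cost(prompt, 200, model_name), 6))
```

Running the same prompt through both price entries shows how much the model choice alone changes the bill before any request is sent.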

Cons:

- May require a subscription.

- More complex than general-purpose prompt tools; learning curve for beginners.

- Limited to LLMs and integrations supported by the IDE.

Best For

- AI developers focusing on structured prompt engineering.

- Teams collaborating on large-scale prompt development.

- Users needing analytics and cost estimates before deploying prompts.

Pricing

- Pricing depends on the subscription plan or enterprise license, with plans starting at $29/month.

How to Choose the Right Prompt Engineering Tool?

Choosing the right prompt engineering tool depends on your goals, technical expertise, and the AI models you plan to use. Here’s a step-by-step guide to help you decide.

1. Define Your Use Case

Start by clearly identifying what you want to achieve with prompt engineering. If your focus is content creation, such as writing articles, marketing copy, or blog posts, you’ll want a tool that allows style customization and produces high-quality text.

For developers, tools that integrate directly with IDEs and offer code generation or debugging assistance are ideal. If your goal is research or data analysis, look for tools that can summarize documents, handle structured data, or generate detailed reports.

2. Evaluate Model Compatibility

Not all tools support every AI model. Check which models the tool is compatible with, like GPT-4, Claude, or LLaMA, and whether it allows you to switch models depending on the complexity of your task.

Using a tool that supports multiple models gives you flexibility and can improve output quality for specialized use cases.

3. Assess Prompt Management Features

A good prompt engineering tool should make managing and refining prompts easy. Features like a template library provide pre-built prompts that save time and maintain consistency. Version control helps you track edits and optimize prompts over time.
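To illustrate what a template library with version control does under the hood, here is a minimal in-memory sketch. `PromptRegistry` and its methods are hypothetical names, not any tool's API; a real product would add persistence, access control, and diffs.

```python
import hashlib
from string import Template
from typing import Optional


class PromptRegistry:
    """Keeps every saved version of each named prompt template."""

    def __init__(self) -> None:
        self._versions: dict[str, list[str]] = {}

    def save(self, name: str, template: str) -> int:
        """Store a template and return its 1-based version number."""
        history = self._versions.setdefault(name, [])
        if history and history[-1] == template:
            return len(history)  # unchanged, so no new version is created
        history.append(template)
        return len(history)

    def render(self, name: str, version: Optional[int] = None, **variables: str) -> str:
        """Fill in a stored template; defaults to the latest version."""
        history = self._versions[name]
        template = history[-1] if version is None else history[version - 1]
        return Template(template).substitute(**variables)

    def fingerprint(self, name: str, version: int) -> str:
        """Short content hash, handy for referencing a version in logs."""
        text = self._versions[name][version - 1]
        return hashlib.sha256(text.encode("utf-8")).hexdigest()[:12]


registry = PromptRegistry()
registry.save("summarize", "Summarize: $text")
registry.save("summarize", "Summarize in a $tone tone: $text")
print(registry.render("summarize", text="Q3 report", tone="formal"))
print(registry.render("summarize", version=1, text="Q3 report"))
```

Being able to render an older version on demand is what makes it possible to compare edits and roll back a prompt that regressed.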

If you are working in a team, collaborative features like shared repositories allow multiple users to contribute and maintain a single prompt workflow.

4. Check User Interface & Usability

The interface can make or break your experience. Some tools provide visual drag-and-drop interfaces, while others are purely text-based. Choose one that matches your comfort level and workflow.

An intuitive interface reduces the learning curve, allowing you to focus on prompt creation rather than navigating complex menus.

5. Consider Integrations

Consider how well the tool fits into your existing workflow. Check if it supports APIs or plugins that connect with platforms like Slack, Notion, or Google Workspace. Export options are also valuable, enabling you to download prompts, outputs, and logs for further analysis or reporting.

6. Evaluate Pricing & Scalability

Pricing structures vary widely. Free tiers are great for experimentation but often limit API calls, templates, or advanced features.

Paid plans usually provide higher usage limits, better support, and collaborative capabilities. Make sure the tool scales with your needs to avoid switching platforms later.

7. Test for Flexibility & Output Quality

Before committing, run sample prompts to evaluate how well the tool interprets instructions. Assess whether it allows customization of output length, tone, or persona. Tools that provide fine-tuned control over output can significantly enhance productivity and result quality.

8. Review Community & Support

Finally, consider the support ecosystem. Comprehensive documentation and tutorials help you get started quickly. Active user communities provide shared prompt libraries and tips. Reliable customer support is essential for troubleshooting and scaling your usage efficiently.

Why Do Prompt Engineering Tools Matter in 2026?

Prompt engineering tools have become essential in 2026 because AI has become deeply integrated into almost every aspect of work and daily life. With increasingly powerful models like GPT-4.5, Claude 3, and LLaMA 3, generating high-quality, reliable outputs depends heavily on how prompts are crafted.

A well-designed prompt can drastically improve accuracy, relevance, and creativity, while a poorly structured one can produce confusing or low-value results.

These tools also streamline workflows by offering features like prompt templates, version control, and team collaboration. They reduce the trial-and-error process, allowing users, from content creators to developers and data analysts, to achieve their goals faster and more efficiently.

Additionally, as AI adoption grows in business and research, organizations rely on prompt engineering tools to maintain consistency, compliance, and reproducibility in AI outputs.

In short, prompt engineering tools bridge the gap between raw AI capabilities and practical, actionable results, making them indispensable for anyone leveraging AI in 2026.

Evaluating a Prompt Engineering Tool

When evaluating a prompt engineering tool, you can use a set of simple guiding questions to assess its usefulness. Keep in mind that these questions are broad, and not every question will apply to every tool.

Usability

- Is the interface simple, clear, and easy to navigate?

- Can I learn how to use this tool quickly?

- Does it provide helpful guidance or documentation?

- When something goes wrong, does it give clear error messages and fixes?

Effectiveness

- Is the tool fast and responsive during use?

- Does it produce accurate and correct results?

- Does it work reliably over time and across different use cases?

Integration

- Does it work well with the tools and systems I already use?

- Are there strong and flexible APIs available?

- Is it easy to import/export or move data in and out?

Scalability

- Does the tool maintain performance with large or complex workloads?

- What kind of computational resources does it require?

- Can it handle increased demand without breaking down?

Customization Options

- Can I configure the tool to match my workflow?

- Does it allow personalization for me or my team?

- Can I tailor outputs or behavior to specific needs?

Future of Prompt Engineering Tools

The future of prompt engineering tools in 2026 and beyond is shaping up to be highly advanced and user-centric. Key trends and developments include:

- Intelligent Prompt Optimization: Tools will suggest, refine, and even auto-generate prompts based on the task, user style, and past performance.

- Real-Time Feedback: Users will receive instant guidance on prompt effectiveness, helping improve output quality without trial and error.

- Automated Error Correction: AI-driven tools will detect and correct poorly structured prompts or ambiguous instructions.

- Enhanced Collaboration: Team-wide prompt repositories, version tracking, and seamless multi-user access will improve workflow consistency.

- Multi-Model Orchestration: Users will be able to leverage different AI models within a single workflow, selecting the best model for each task.

- Integration with Productivity Platforms: Direct integration with tools like project management apps, communication platforms, and data systems will streamline workflows.

- Bias Detection and Safety Checks: Built-in monitoring will ensure AI outputs are ethical, safe, and aligned with regulatory or organizational standards.

- Centralized AI Workflow Management: Prompt engineering tools will evolve into hubs for managing, optimizing, and governing AI-driven tasks across teams and projects.

Conclusion

Prompt engineering tools are essential in 2026, helping turn human intent into accurate, creative, and reliable AI outputs. They streamline workflows through templates, collaboration, multi-model support, and real-time feedback. Choosing the right tool carefully, considering usability, compatibility, and scalability, ensures better results and efficiency.

Platforms like TrueFoundry take this a step further by offering a unified playground for multiple LLMs, centralized version control, cost tracking, and team collaboration, making it easier to test, optimize, and deploy prompts at scale.

Start optimizing your AI workflows today: explore TrueFoundry and see how streamlined prompt engineering can boost your productivity and output quality.

Frequently Asked Questions

What are some commonly used prompt engineering tools?

Commonly used prompt engineering tools include open-source libraries like Hugging Face Transformers and Promptfoo for automated testing. Closed-source platforms such as the OpenAI Playground and PromptHub are popular for iterative experimentation. These tools standardize how developers refine model inputs to achieve higher output accuracy.

What is the best prompt engineering tool?

For production-grade management, TrueFoundry is the best prompt engineering tool because it combines a multi-model playground with centralized versioning and cost tracking. It unifies prompts across providers, allowing teams to evaluate and deploy optimized inputs with built-in governance.

What are the three types of prompt engineering?

The three primary types are zero-shot, few-shot, and chain-of-thought prompting. Zero-shot provides a task without examples, while few-shot includes specific demonstrations to guide the model. Chain-of-thought prompting encourages the LLM to work through intermediate reasoning steps, which is essential for solving intricate logic or coding problems.
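The difference between the three styles is easiest to see in the prompt text itself. The helper functions below are illustrative only, and the exact wording of each prompt is an assumption; any phrasing that follows the same structure would do.

```python
def zero_shot(task: str, text: str) -> str:
    """Task description only, with no examples."""
    return f"{task}\n\nInput: {text}\nOutput:"


def few_shot(task: str, examples: list[tuple[str, str]], text: str) -> str:
    """Task description followed by worked input/output demonstrations."""
    demos = "\n\n".join(f"Input: {x}\nOutput: {y}" for x, y in examples)
    return f"{task}\n\n{demos}\n\nInput: {text}\nOutput:"


def chain_of_thought(task: str, text: str) -> str:
    """Asks the model to reason through the problem before answering."""
    return (
        f"{task}\n\nInput: {text}\n"
        "Let's think step by step, then give the final answer."
    )


task = "Classify the sentiment of the review as positive or negative."
examples = [("Loved it, would buy again.", "positive"),
            ("Broke after two days.", "negative")]

print(zero_shot(task, "Arrived late but works fine."))
print("---")
print(few_shot(task, examples, "Arrived late but works fine."))
print("---")
print(chain_of_thought(task, "Arrived late but works fine."))
```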

What are the top 5 prompt engineering techniques?

Top prompt engineering techniques include Chain-of-Thought, Few-Shot prompting, Delimiters for structure, Role Prompting, and Iterative Refinement. A prompt engineering tool like Promptfoo helps automate the evaluation of these techniques. These methods help the model stay focused, reduce hallucinations, and follow the specific formatting constraints required for production.

What are some best practices while using prompt engineering tools?

Best practices include versioning every prompt, utilizing side-by-side model comparisons, and implementing automated scoring. You should track token costs and latency across different providers to optimize performance. Using a centralized management platform ensures your entire team utilizes the most effective prompts while maintaining strict security and auditability.
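As a rough illustration of side-by-side comparison with latency tracking, the sketch below sends one prompt to several model callables and records how long each takes. The `compare_models` helper and the two stub models are hypothetical; in practice each callable would wrap a real provider client.

```python
import time
from typing import Callable


def compare_models(prompt: str, models: dict[str, Callable[[str], str]]) -> list[dict]:
    """Run one prompt against every model and record output and latency."""
    rows = []
    for name, call in models.items():
        start = time.perf_counter()
        output = call(prompt)
        elapsed_ms = (time.perf_counter() - start) * 1000
        rows.append({"model": name, "latency_ms": elapsed_ms, "output": output})
    # Fastest first, so slow providers stand out at the bottom.
    return sorted(rows, key=lambda row: row["latency_ms"])


def fast_stub(prompt: str) -> str:
    return prompt.upper()


def slow_stub(prompt: str) -> str:
    time.sleep(0.01)  # simulate network and inference delay
    return prompt.title()


for row in compare_models("compare these models", {"fast": fast_stub, "slow": slow_stub}):
    print(f'{row["model"]:>5}  {row["latency_ms"]:8.2f} ms  {row["output"]}')
```

Logging rows like these for every prompt version gives you the data to weigh output quality against speed across providers.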
