What is Cursor?

Cursor is an AI-powered code editor that enhances developer productivity with intelligent code completion, generation, and debugging capabilities. Built on Visual Studio Code, it integrates artificial intelligence features directly into the coding environment to streamline the development process.

Key Features of Cursor

- AI-Powered Code Completion: Provides real-time, context-aware code suggestions, including multi-line completions, to speed up everyday coding

- Intelligent Code Understanding: Explains code, generates documentation, and offers smart navigation to help developers understand and manage complex codebases

- Integrated AI Chat Assistant: Includes a built-in AI chat for instant programming help across many languages and frameworks, and integrates with version control systems for a seamless development workflow

Prerequisites

Before integrating Cursor with TrueFoundry, ensure you have:

- TrueFoundry Account: Create a TrueFoundry account and follow the instructions in our Gateway Quick Start Guide

- Cursor Installation: Download and install Cursor from the official website

- Virtual Model: Create a Virtual Model for each model you want to use in Cursor (see Step 1: Create a Virtual Model below)

Important Network Requirements

Gateway URL Public Exposure Required: Your TrueFoundry Gateway URL must be exposed to the public internet for the Cursor integration to work. Cursor does not send model requests straight from your machine to your gateway. The request flow is Cursor → Cursor Server → Service Provider Server (here, your TrueFoundry Gateway). Cursor’s servers must therefore be able to reach your TrueFoundry Gateway endpoint, so a gateway that is only reachable behind a VPN will not work. For more information about Cursor’s security practices, visit Cursor’s security page.
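As a quick pre-flight check, the Python sketch below resolves the gateway hostname and reports whether every address it returns is globally routable. A host that resolves only to private or VPN-internal addresses cannot be reached by Cursor's servers. This is an illustrative helper, not a TrueFoundry or Cursor tool, and the function names are hypothetical. It uses only the standard library. A `True` result does not rule out firewalls or other blocks, and DNS answers on your machine may differ from what Cursor's servers see. Treat it as a necessary check rather than a sufficient one.

```python
import ipaddress
import socket
from urllib.parse import urlparse


def is_public_ip(ip: str) -> bool:
    """Return True if the address is globally routable.

    Private (10.x, 192.168.x, ...), loopback, link-local and other
    reserved ranges all return False.
    """
    return ipaddress.ip_address(ip).is_global


def gateway_resolves_publicly(gateway_url: str) -> bool:
    """Resolve the gateway host and check that every address is public.

    Hypothetical helper: it only inspects DNS results from this machine
    and cannot detect firewalls or other network blocks.
    """
    host = urlparse(gateway_url).hostname
    if host is None:
        raise ValueError(f"no hostname in {gateway_url!r}")
    # Each getaddrinfo entry is (family, type, proto, canonname, sockaddr);
    # sockaddr[0] is the IP address string for both IPv4 and IPv6.
    infos = socket.getaddrinfo(host, 443, proto=socket.IPPROTO_TCP)
    addresses = {info[4][0] for info in infos}
    return bool(addresses) and all(is_public_ip(a) for a in addresses)
```

To use it, call `gateway_resolves_publicly("https://your-gateway.example.com")` with your own gateway URL in place of the placeholder. A `False` result means at least one resolved address is private or reserved.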

Why You Need a Virtual Model

Cursor has internal logic that works best with standard model names (like gpt-4o or claude-3-sonnet), but it may run into compatibility issues with TrueFoundry’s fully qualified model names (like openai-main/gpt-4o or azure-openai/gpt-4o). When Cursor receives these fully qualified names directly, it may not behave as expected because of differences in how it processes model names internally.

Virtual Models fix this by letting you:

- Use a standard model name that Cursor recognizes as the Virtual Model name in Cursor (e.g., gpt-4o).

- Have the TrueFoundry Gateway map that name to the fully qualified target model (e.g., openai-main/gpt-4o) and route requests there.

Integration Guide

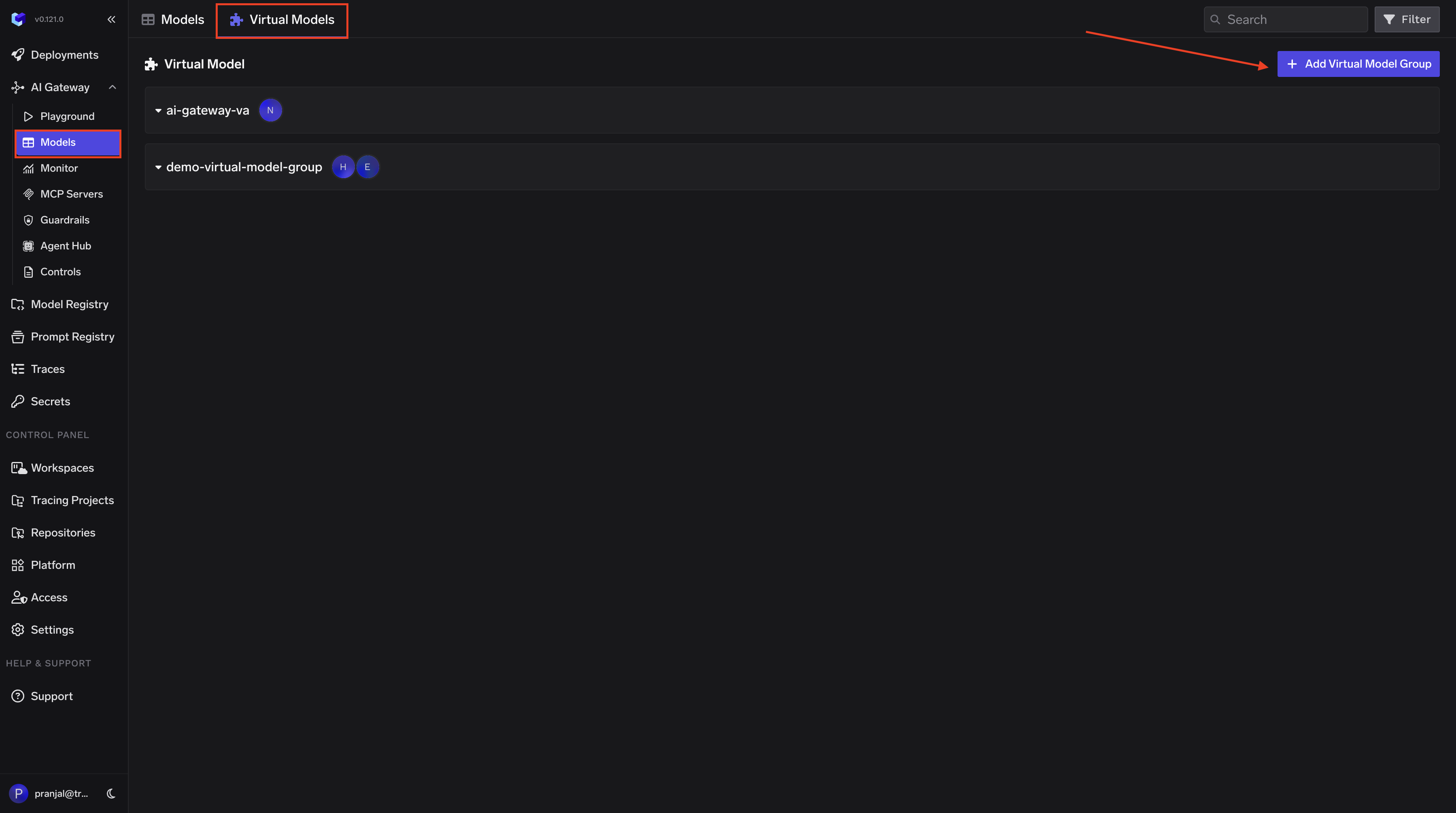

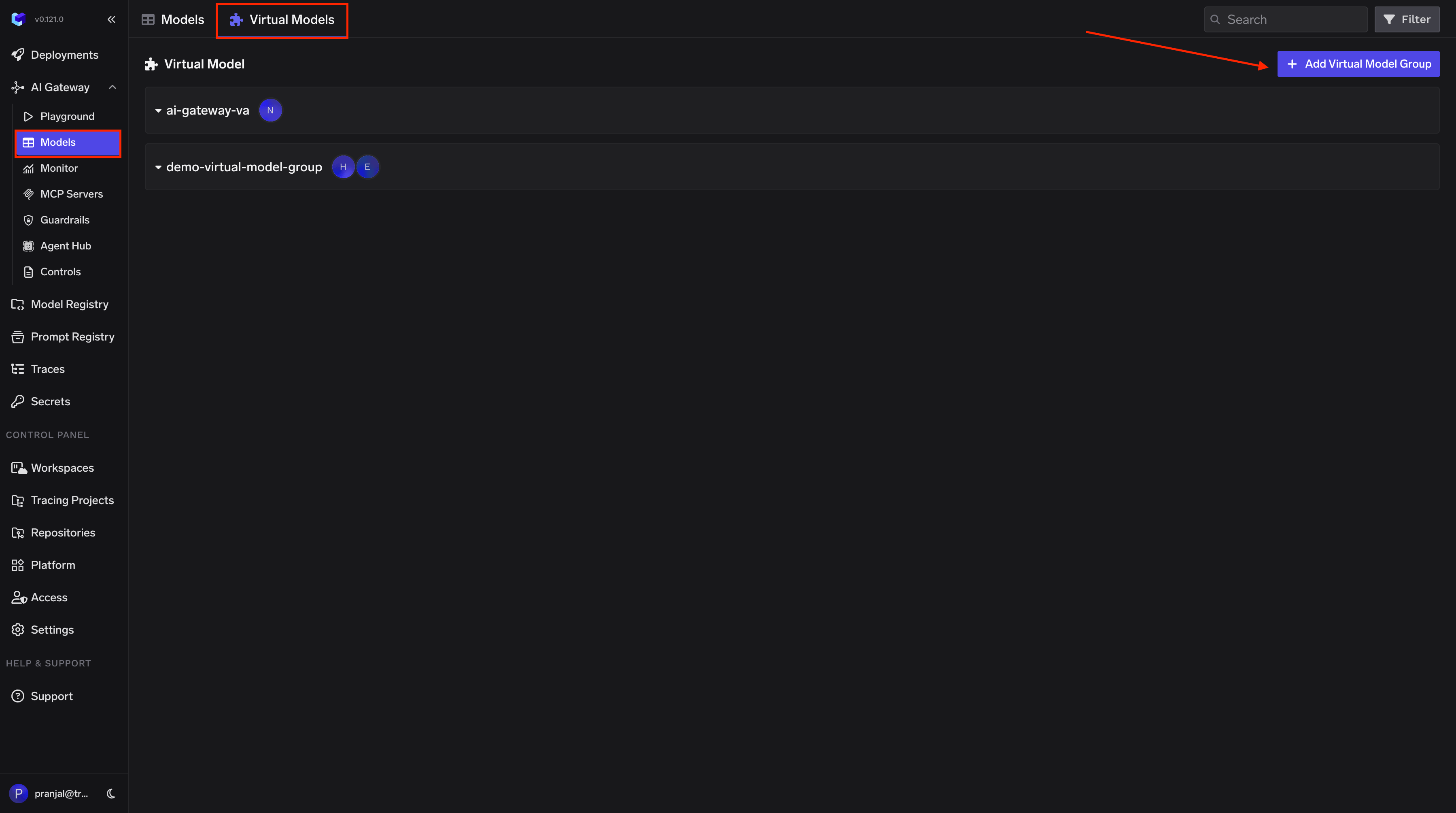

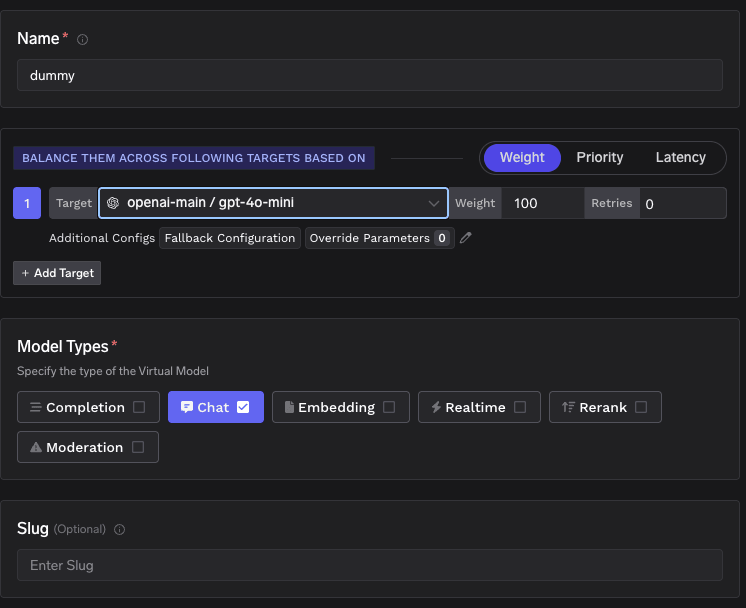

Step 1: Create a Virtual Model

Create a Virtual Model so Cursor can use a simple model name that the Gateway maps to your provider. Follow these steps:

- Open the Virtual Model / Routing page in the TrueFoundry AI Gateway dashboard

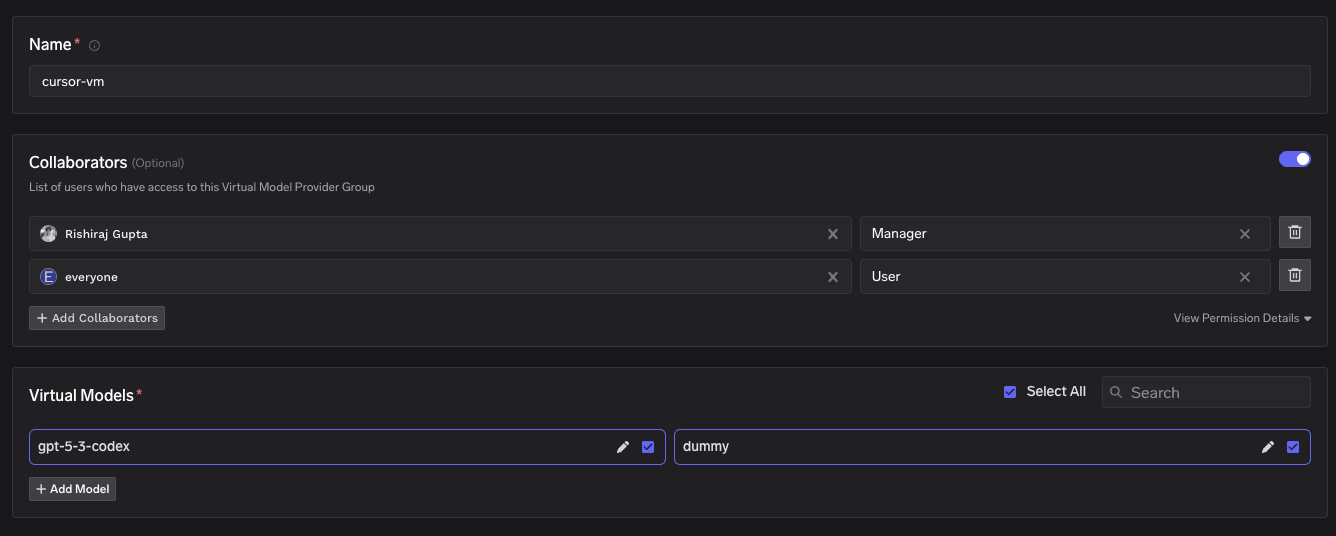

- Create a new Virtual Model and give it a name. This name (in the format {group-name}/{model-name}) is what you will use in Cursor (e.g., cursor-vm/gpt-5-3-codex).

- Set the target to the fully qualified model name (e.g., openai-main/gpt-4o-mini). You can use one target at 100% weight or add multiple targets with weights to route traffic across providers.

For example, if the Virtual Model name is cursor-vm/dummy and the target is openai-main/gpt-4o-mini at 100% weight, then when you use cursor-vm/dummy in Cursor, the Gateway routes that request to openai-main/gpt-4o-mini.
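To make the weight semantics concrete, the Python sketch below models a Virtual Model as a list of (target, weight) pairs. It picks one target per request in proportion to its weight. This is a hypothetical illustration, not TrueFoundry's implementation. The `VIRTUAL_MODELS` table, the `resolve` function and the `cursor-vm/split` entry are made up for this example. The real mapping lives in the Gateway configuration you create in the dashboard.

```python
import random
from dataclasses import dataclass
from typing import Dict, List, Optional


@dataclass(frozen=True)
class Target:
    model: str   # fully qualified model name, e.g. "openai-main/gpt-4o-mini"
    weight: int  # relative share of traffic (a percentage in the dashboard)


# Hypothetical stand-in for Virtual Model configs created in the dashboard.
VIRTUAL_MODELS: Dict[str, List[Target]] = {
    "cursor-vm/dummy": [Target("openai-main/gpt-4o-mini", 100)],
    # Hypothetical 70/30 split across two providers.
    "cursor-vm/split": [
        Target("openai-main/gpt-4o", 70),
        Target("azure-openai/gpt-4o", 30),
    ],
}


def resolve(virtual_name: str, rng: Optional[random.Random] = None) -> str:
    """Map a Virtual Model name to one fully qualified target, chosen by weight."""
    targets = VIRTUAL_MODELS.get(virtual_name)
    if not targets:
        raise KeyError(f"unknown Virtual Model: {virtual_name}")
    if rng is None:
        rng = random.Random()
    # random.choices draws with probability proportional to each weight.
    chosen = rng.choices(targets, weights=[t.weight for t in targets], k=1)[0]
    return chosen.model
```

With a single target at 100% weight, `resolve("cursor-vm/dummy")` always returns `"openai-main/gpt-4o-mini"`. For `cursor-vm/split`, roughly 70% of calls return the OpenAI target. In both cases Cursor only ever sees the Virtual Model name.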

Use the Virtual Model name (e.g., cursor-vm/dummy) in Cursor. Do not use the fully qualified name (e.g., openai-main/gpt-4o-mini) directly in Cursor, as that can cause compatibility issues.

Step 2: Get TrueFoundry Gateway Configuration

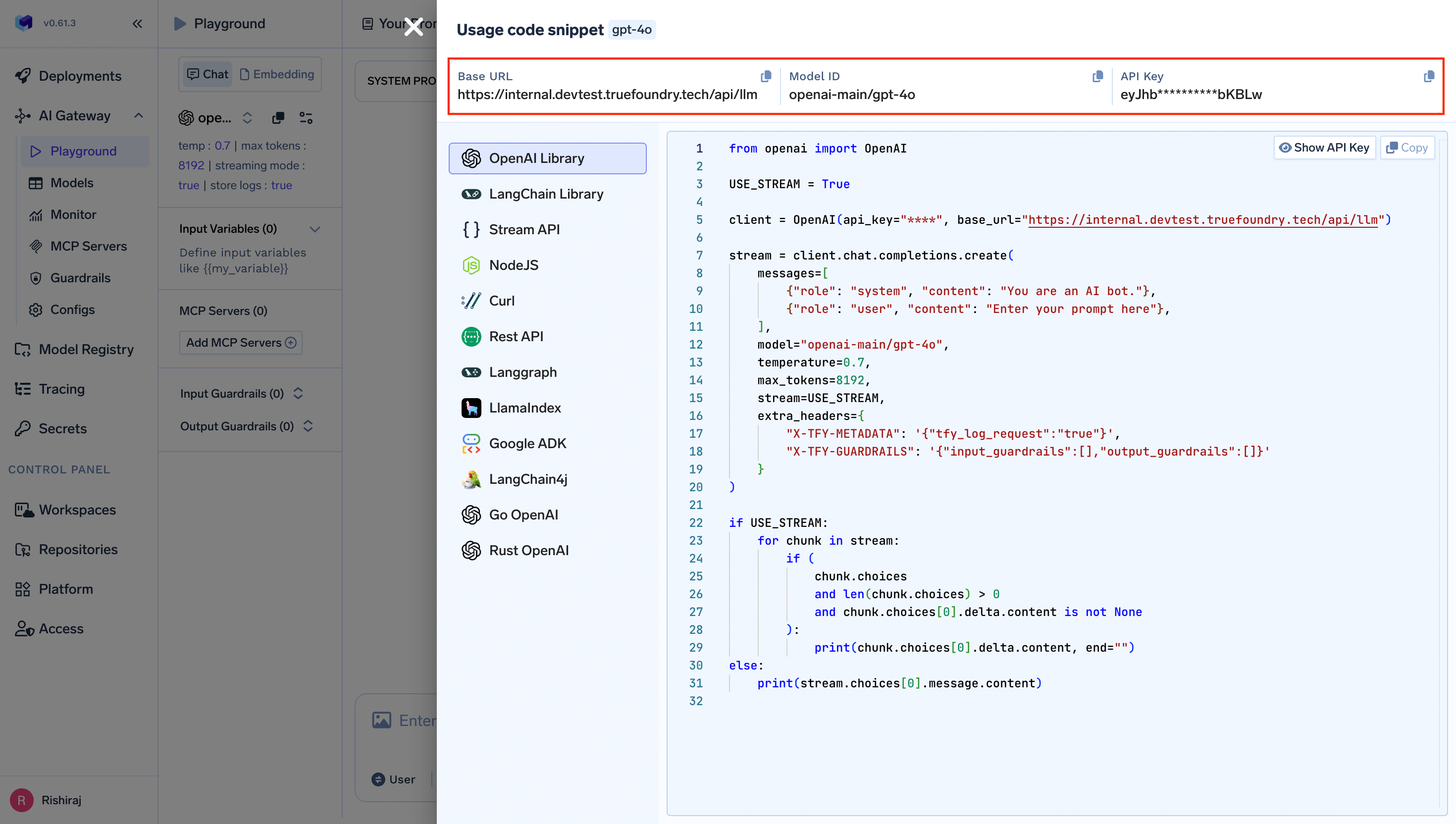

Gather your TrueFoundry Gateway details:

- Navigate to AI Gateway Playground: Go to your TrueFoundry AI Gateway playground

- Access Unified Code Snippet: Open the unified code snippet

- Copy Base URL: Copy the base URL shown in the unified code snippet

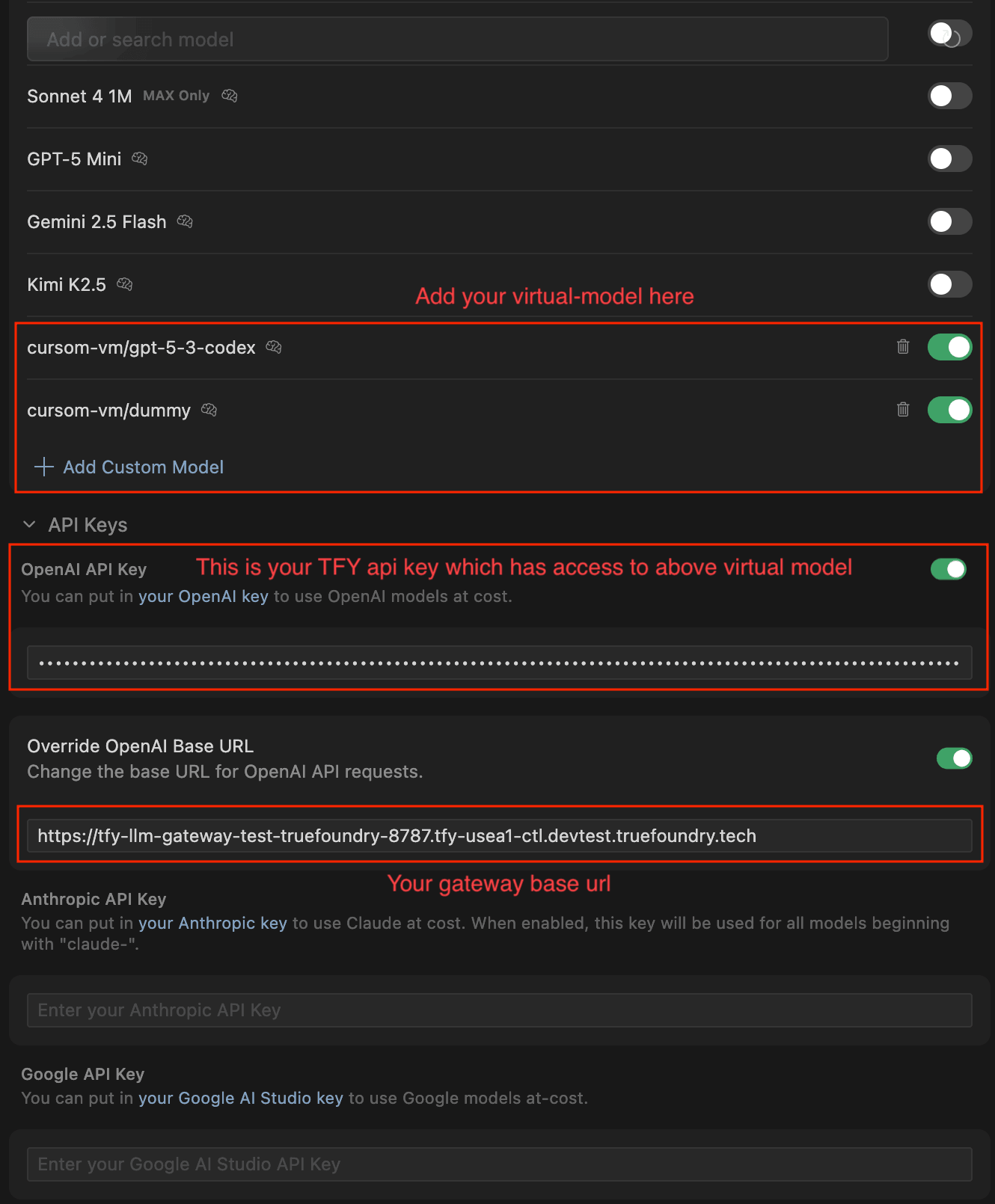

Step 3: Configure Cursor with TrueFoundry Gateway

- Open Cursor Settings

- In the Models section, add your Virtual Model names as custom models (e.g., cursor-vm/gpt-5-3-codex, cursor-vm/dummy)

- Under API Keys, enter your TrueFoundry API key in the OpenAI API Key field

- Enable Override OpenAI Base URL and enter your TrueFoundry Gateway base URL
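Before relying on Cursor, it can help to confirm the base URL and API key with a direct request. The Python sketch below builds the kind of OpenAI-style chat completion request an OpenAI-compatible client makes, but does not send it. The request is `POST {base_url}/chat/completions` with a Bearer token and the Virtual Model name in the `model` field. The sketch assumes the Gateway accepts this OpenAI-compatible shape, which is what the Override OpenAI Base URL setting relies on. Check the exact path against the unified code snippet. `BASE_URL`, `API_KEY` and `build_chat_request` are placeholders made up for this example.

```python
import json
import urllib.request

# Placeholders: replace with the base URL from the unified code snippet
# and your TrueFoundry API key.
BASE_URL = "https://YOUR-GATEWAY-BASE-URL"
API_KEY = "YOUR-TRUEFOUNDRY-API-KEY"


def build_chat_request(
    base_url: str, api_key: str, model: str, prompt: str
) -> urllib.request.Request:
    """Build (but do not send) an OpenAI-style chat completion request."""
    url = base_url.rstrip("/") + "/chat/completions"
    body = {
        # The Virtual Model name, not the fully qualified target.
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        url,
        data=json.dumps(body).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


req = build_chat_request(BASE_URL, API_KEY, "cursor-vm/dummy", "Say hello")
```

Sending it with `urllib.request.urlopen(req)` requires a real base URL and key. If that call succeeds, the same values should work in Cursor's settings.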

Step 4: Start Using Cursor

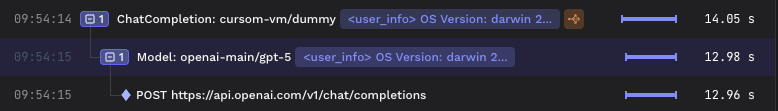

Select your Virtual Model from the model dropdown in Cursor and start sending requests. All requests from Cursor now go through the TrueFoundry Gateway, which routes them to the target models configured in your Virtual Model. You can add multiple targets with different weights to a Virtual Model for routing and fallback across providers. To learn more about routing strategies, see the Virtual Models documentation.

Request Traces

You can monitor and inspect your Cursor requests in the TrueFoundry AI Gateway dashboard. Navigate to the request traces view to see request details, latency, token usage, and routing information for each call.