Helping Enterprises accelerate the time to value for GenAI

In this article, we cover the constraints and considerations enterprises face when building a GenAI framework or platform internally, from both a technical perspective and a governance and execution perspective. We also briefly discuss how enterprises could structure themselves to accelerate the time to value of GenAI applications at scale, with the right governance in place.

2023 was the year of Experimentation, 2024 is about Productionization

In 2023, following the launch of ChatGPT, the landscape of artificial intelligence (AI) in enterprises underwent a significant shift, marked by a surge in experimentation with GenAI technologies. Organizations across industries embarked on a multitude of Proofs of Concept (POCs) aimed at testing the feasibility, applicability, and potential of integrating GenAI into their operations. These POCs served as laboratories for innovation, allowing businesses to explore various AI technologies and use cases and determine their viability within their specific contexts.

As the dust settled on 2023, some early adopters experienced remarkable upswings and substantial returns on investment (ROI) from their GenAI initiatives. These success stories acted as beacons of progress, demonstrating the tangible benefits and transformative potential of GenAI technologies. The stage is now set for a broader wave of adoption, as enterprises seek to capitalize on the momentum and scale their GenAI initiatives to achieve broader impact and integration within their operations. 2024 stands poised as the year of GenAI productionization.

What does it mean for enterprises?

Moving from 2023 to 2024 requires enterprises to recalibrate their strategies, processes, and governance frameworks to align with the demands of enterprise-wide GenAI deployment. This shift entails a deeper understanding of the technical, operational, and cultural implications of scaling GenAI initiatives, as well as a heightened focus on the key constraints and challenges that may impede progress. From ensuring scalability and data governance to tackling talent shortages and skill gaps, organizations must adopt a proactive and adaptive mindset to overcome the hurdles on the path to GenAI productionization.

As enterprises navigate the GenAI landscape, crafting a robust charter becomes essential to optimize the key considerations that drive success in this domain: speed, scalability, cost, and governance.

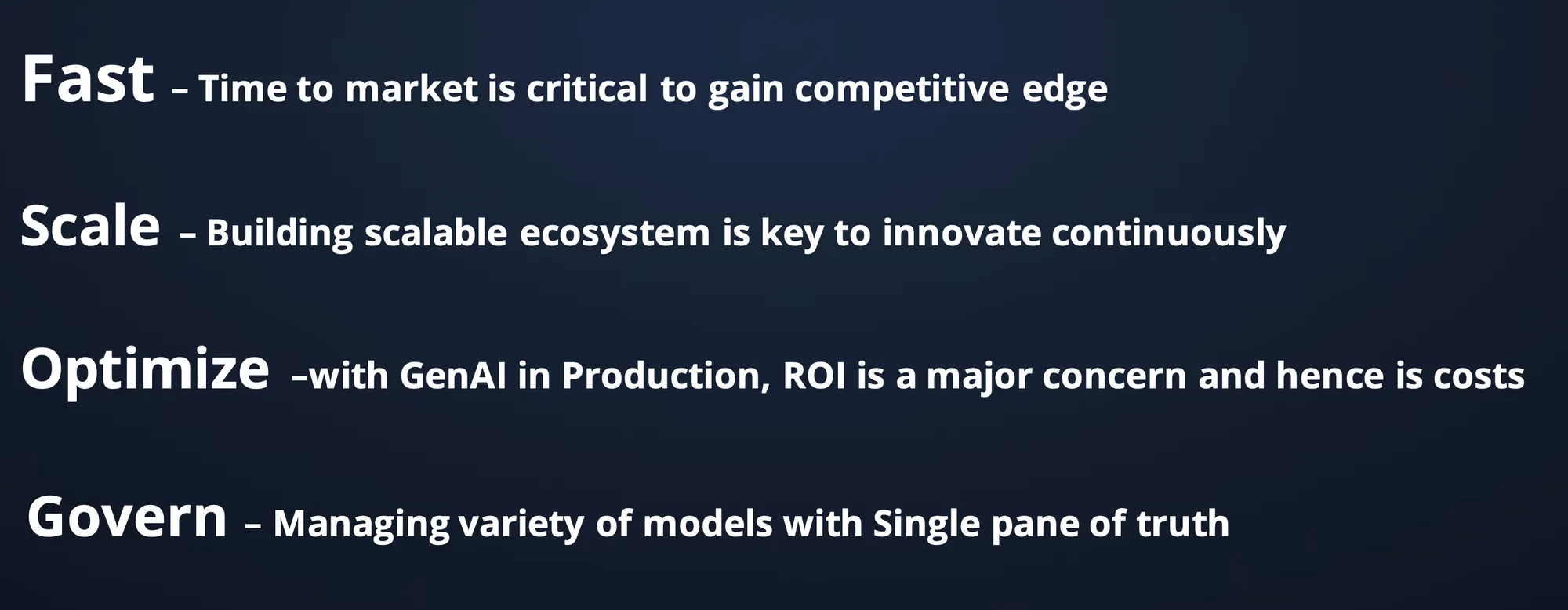

- Speed: Time to market is paramount, as gaining a competitive edge hinges on the ability to swiftly develop and deploy GenAI applications. The framework should therefore empower developers and teams to build and ship solutions rapidly, without undue constraints that hinder agility. By fostering a culture of speed and innovation, enterprises can capitalize on emerging opportunities and stay ahead in dynamic markets.

- Scalability: Scalability lies at the core of continuous innovation in GenAI ecosystems. Enterprises must focus on building a scalable framework that enables the seamless integration of GenAI applications and facilitates the reuse of components across diverse use cases. By embracing scalable architectures and flexible design principles, organizations can ensure their GenAI ecosystem remains agile and responsive to evolving business needs and technological advancements.

- Cost optimal: Amid the pursuit of innovation and agility, enterprises must remain vigilant about maximizing return on investment (ROI) while managing the substantial costs associated with GenAI initiatives. To achieve this balance, organizations should implement systems and processes that optimize infrastructure utilization without imposing prohibitive constraints on developers. Cost visibility and tracking mechanisms should be integrated into the framework to provide insight into resource consumption and support informed decision-making, ensuring that investments in GenAI yield tangible value and sustainable growth over time.

- Govern: Governance is a critical enabler of success in the GenAI landscape, serving as the foundation for compliance, security, and alignment with organizational objectives. A comprehensive governance framework should provide access controls at various levels (teams, resources, and models), budgeting flexibility, and evaluation frameworks, along with guardrails that mitigate risks and promote responsible AI development. It should also give enterprise architecture teams a single pane of glass for monitoring GenAI initiatives. However, governance should not impede speed; rather, it should streamline processes and provide guardrails that accelerate innovation while ensuring adherence to regulatory requirements and best practices.
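The cost visibility and budgeting points above can be made concrete with a small sketch. The Python example below is illustrative only: `TeamBudget`, `CostLedger`, the team name, and the per-token price are hypothetical and are not taken from any specific platform. It shows per-team spend being recorded and a request being rejected once it would push the team over its budget.

```python
from dataclasses import dataclass, field


@dataclass
class TeamBudget:
    """Hypothetical per-team budget record (an assumption for illustration)."""
    monthly_limit_usd: float
    spent_usd: float = 0.0


@dataclass
class CostLedger:
    """Tracks spend per team and rejects requests that would exceed the budget."""
    budgets: dict[str, TeamBudget] = field(default_factory=dict)

    def record(self, team: str, prompt_tokens: int, completion_tokens: int,
               usd_per_1k_tokens: float) -> float:
        """Record one request's cost; raise if the team is unknown or over budget."""
        if team not in self.budgets:
            raise PermissionError(f"team {team!r} has no budget allocated")
        budget = self.budgets[team]
        cost = (prompt_tokens + completion_tokens) / 1000 * usd_per_1k_tokens
        if budget.spent_usd + cost > budget.monthly_limit_usd:
            raise RuntimeError(f"team {team!r} would exceed its monthly budget")
        budget.spent_usd += cost
        return cost

    def remaining(self, team: str) -> float:
        """Return the unspent part of the team's monthly budget."""
        budget = self.budgets[team]
        return budget.monthly_limit_usd - budget.spent_usd


# Example usage with made-up numbers.
ledger = CostLedger({"search-team": TeamBudget(monthly_limit_usd=50.0)})
cost = ledger.record("search-team", prompt_tokens=1500,
                     completion_tokens=500, usd_per_1k_tokens=0.01)
print(f"request cost: ${cost:.4f}, remaining: ${ledger.remaining('search-team'):.2f}")
```

Raising an error on an over-budget request is only one possible design; a real platform might instead alert, throttle, or fall back to a cheaper model so that governance does not block developers outright.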

The diagram below shows the key technical design principles that need to be built into the GenAI platform and framework that enterprises are developing in order to achieve speed, scale, cost optimality, and the right governance. We will cover these technical considerations for building a scalable LLMOps platform in depth in a separate blog post.

Centralized Governance and Federated Execution

As enterprises embark on the journey of integrating Generative AI into their operations, governance emerges as a critical factor for ensuring success without compromising agility. While governance frameworks are essential for maintaining compliance, security, and alignment with organizational objectives, they must not impede the speed of innovation and execution inherent in AI projects.

Two prevalent structural approaches have emerged in enterprises regarding governance in AI rollout.

- In the first approach, the Central Platform team or architecture team assumes responsibility for defining the core GenAI platform's features and governance frameworks. This team ensures alignment across various departments and projects, while also empowering individual teams to execute their AI initiatives autonomously within the established governance guidelines. The emphasis here lies on fostering collaboration and ensuring consistency without stifling innovation at the grassroots level.

- Alternatively, some organizations opt for a more centralized governance model, where both the governance framework and final delivery of AI projects are controlled by the Central Platform team. While this approach may streamline decision-making and enforce standardization, it can potentially limit agility and responsiveness to specific business needs and use cases.

Given this trade-off, we advocate a balanced approach that combines centralized governance with federated execution. In this model, the Central Platform team establishes the overarching governance principles and frameworks, while individual teams retain the autonomy to execute their AI projects according to their own requirements and objectives. Achieving this balance requires a robust platform that offers flexible control over the various aspects of governance, including access controls, cost tracking mechanisms, scalable guardrails, and evaluation frameworks.

In essence, the optimal structure for GenAI rollout in enterprises entails centralized governance to ensure consistency, compliance, and alignment with organizational goals, coupled with federated execution to foster innovation, agility, and responsiveness at the grassroots level. This approach requires investing in a versatile platform capable of facilitating seamless collaboration, enforcing governance standards, and accommodating diverse GenAI initiatives across the organization.
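As a rough illustration of this split, the sketch below has a central team define one policy object while an individual team supplies its own application logic. Everything here is an assumption made for the example: `CentralPolicy`, `governed`, the model names, and the blocked term are hypothetical, and the handler is a stub rather than a real model call.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class CentralPolicy:
    """Rules owned by the central platform team (hypothetical structure)."""
    approved_models: frozenset[str]
    team_access: dict[str, frozenset[str]]  # team name -> models it may use
    blocked_terms: tuple[str, ...] = ()     # a very simple prompt guardrail

    def check(self, team: str, model: str, prompt: str) -> None:
        """Raise if the request violates access rules or a guardrail."""
        if model not in self.approved_models:
            raise PermissionError(f"model {model!r} is not approved")
        if model not in self.team_access.get(team, frozenset()):
            raise PermissionError(f"team {team!r} may not use {model!r}")
        lowered = prompt.lower()
        for term in self.blocked_terms:
            if term in lowered:
                raise ValueError("prompt violates a guardrail")


def governed(policy: CentralPolicy, team: str, model: str,
             handler: Callable[[str], str]) -> Callable[[str], str]:
    """Wrap a team-owned handler so every call passes the central checks first."""
    def run(prompt: str) -> str:
        policy.check(team, model, prompt)
        return handler(prompt)
    return run


def support_summarizer(prompt: str) -> str:
    """Team-owned logic; a stand-in for a real model call."""
    return f"[summary of {len(prompt)} characters]"


# Central team: defines the policy once.
policy = CentralPolicy(
    approved_models=frozenset({"model-a", "model-b"}),
    team_access={"support": frozenset({"model-a"})},
    blocked_terms=("ssn",),
)

# Support team: ships its own application within that policy.
summarize = governed(policy, "support", "model-a", support_summarizer)
print(summarize("Customer asked about a refund."))
```

The point of the wrapper is that teams never edit the policy and the central team never edits team handlers, which is one simple way to keep governance consistent without placing the central team on every delivery path.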

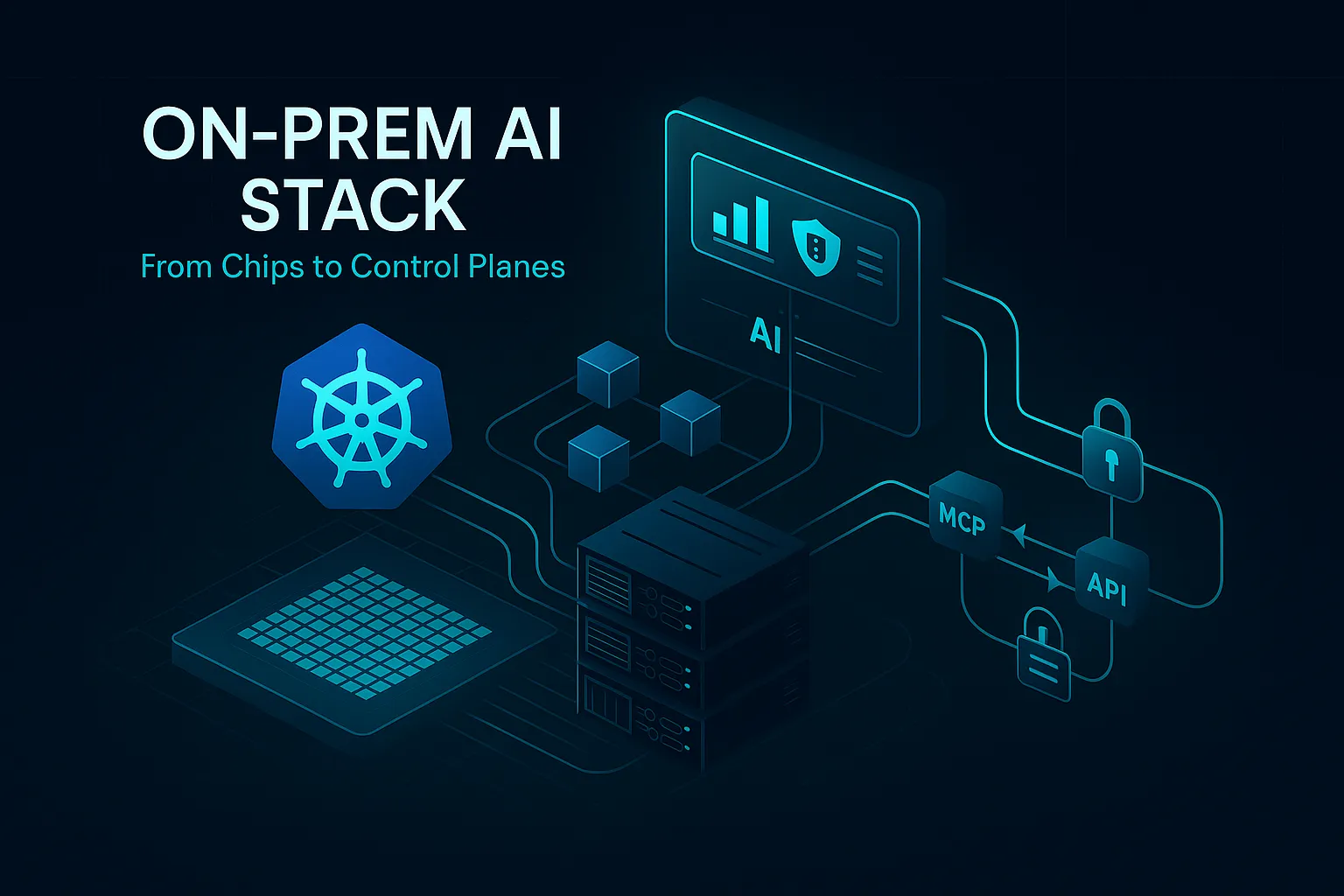

TrueFoundry could be a partner to accelerate your GenAI journey

TrueFoundry is a self-hosted PaaS that lets enterprises build, deploy, and ship secure LLM applications in a faster, more scalable, and more cost-efficient way, with the right governance controls. We abstract away the engineering required and offer GenAI accelerators (LLM PlayGround, LLM Gateway, LLM Deploy, LLM Finetune, RAG Playground, and Application Templates) that help an organisation speed up the setup of its overall GenAI/LLMOps framework. Enterprises can plug these accelerators into their internal systems, or build on top of them, to provide their GenAI developers with an LLMOps platform of their choice.

You can get a sneak peek into our Product Tour here.

